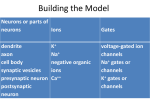

Learning, Memory and Perception

(Erin Schuman and Gilles Laurent)

Most animals with a brain (including humans) use it ultimately to facilitate the transfer of their genes (or those of their kin) to the next generation. Brains “produce” innate behaviors (eating, fighting, fleeing, mating, etc.), though much of what brains do is interpret the environment, that is, extract features of potential value for immediate and future use. Indeed, we can safely assume that brains evolved to detect meaningful patterns (e.g., correlated rather than uncorrelated motion), to learn, memorize and recall them, and to act adaptively. In a subset of species, many of them social ones, brains can also produce and/or decode communication signals. This deceptively simple constellation of features is the emergent property of neuronal networks optimized by hundreds of millions of years of evolution.

Because animals, and thus brains, evolved on this planet, they also express the selective biases imposed by the physics of our world and environment: light-dark cycles, natural images and sounds, to take only a few examples, are not randomly distributed; they have quite specific statistics, far from random, to which our nervous systems are adapted. This adaptation to the statistics of our physical world is another form of learning (albeit on an evolutionary time scale) expressed by today’s brains. Finally, and perhaps most miraculously of all, brains self-assemble within every developing individual, starting with just one cell and sometimes ending, as in humans, with tens of billions. Within each developing brain one finds both the hidden biases that result from natural selection (evolutionary “learning”) and the means to sculpt each individual brain with its own unique life history.

Brains contain two main cell types: neurons, and support cells or glia. We will focus here mainly on neurons, even though it is clear that glia play many fundamental roles in the development, support, and plasticity of neural circuits. Neurons comprise complex and extremely diverse cell types, all involved in information transfer and processing. At the periphery are sensory and motor neurons. Sensory neurons transform some kind of energy (photons, pressure, chemical binding, etc.) into an electrical signal. Motor neurons cause, via identical electrical signals, muscles to contract (in a few cases, also to relax). The majority of neurons, however, are neither sensory nor motor; they exist in between the input and the output. They are thus usually polarized cells, with inputs at one end (called dendrites) and outputs at the other (called axons). Neurons communicate with one another via specialized junctions called synapses. These can be electrical (allowing the direct flux of ions from one neuron to the next) or chemical (requiring the secretion and recognition of chemicals at the pre- and post-synaptic sites of a junction, respectively).

When neuronal networks learn something about their environment, there is an adaptive regulation of the electrical properties of neurons and of their synapses. Because chemical synapses are composed of many complex and interacting molecular components, they are, with good reason, the focus of most of the research on the mechanisms of learning and memory. Understanding learning and memory thus implies not only understanding the basic properties of neurons and synapses, but also discovering the learning rules underlying circuit modifications and the mechanisms by which these learning rules can themselves be selected, modified or adapted by ill-understood processes such as sleep and attention.
The complexity of this research thus lies in part in the multiplicity of scales (in space and in time) over which interesting phenomena occur, and in the existence of global properties that emerge only from cooperativity; reductionist approaches alone are thus not sufficient. It is at an intermediate scale, that of networks of interacting elements, that most discoveries remain to be made.

While these issues represent fascinating and challenging problems for science, note also that malfunctions of synapses and neural circuits are the causes or consequences of most developmental neuropsychiatric and neurodegenerative diseases. Neurological diseases represent an enormous economic burden on society, estimated at about 140 billion Euros for Europe alone in the year 2004. It is through basic research on neuronal and synaptic function that we can hope to shed light on the etiology of neurological diseases and, ultimately, develop modern and appropriate therapeutics.

Some Important Questions

1. How is the stability of memories achieved in a distributed system whose elements (proteins, synapses, neurons) are constantly being remodeled? Some of these elements are simply lost with age: after the age of 30, it is estimated that humans lose some x neurons every day. Others, such as constitutive proteins, simply turn over, being actively degraded in a matter of hours to weeks and replaced, in a presumably appropriate fashion, by others that will assume the same role for, again, only a limited time. On a larger scale, we know that the storage of memories shifts from one location to another at different stages of their formation and consolidation. In mammals, some of this transfer occurs over several weeks and appears to depend on brain activity during certain phases of sleep. How do these molecular, synaptic, cellular, network and dynamical phenomena all interlock to generate memories and ensure their long-term stability?

2. What features of spatio-temporal patterns in distributed networks give rise to perception, storage and recall? Experimental results make it quite clear that the perception of even the simplest objects must be the result of the activation of millions of neurons in the human brain. These neurons are distributed over and across areas, often on both sides of the brain, and yet their activation leads to unified percepts. How does the brain, with its interconnected network of billions of neurons, generate such unified perception? Dynamics and temporal correlations are good candidate mechanisms, although conclusive results have been obtained only in very rare cases. The recent development, by MPG scientists, of molecular tools to manipulate the state of neurons using light may allow some of these hypotheses to be better tested.

While neural representations are our way to describe the neuronal substrates of percepts (for example, a rabbit, a child’s voice, the smell of burning toast), they would be meaningless if it were not for our ability to link them to corresponding memories: my seeing a rabbit now is useful because I already know what a rabbit looks like and because I am able to compare my present sensory experience to a memory trace for rabbits that is already present in my brain. In other words, perception, memory formation and recall must all rely on interlinked mechanisms and substrates. This is part of what makes brains so different from modern computers: in brains, memory and processing units are one and the same.

Some Research Opportunities and Challenges

1. Reconnecting levels of inquiry. One of the most obvious characteristics of the brain is that it derives its magic both from large-scale interactions (properties of networks) and from very high degrees of specialization expressed by its local constitutive elements. Neurons are indeed the most diverse cell types in the body.
Some specializations are visible to the (nearly) naked eye: a hair cell in the cochlea differs dramatically from a Purkinje cell. Others are subtler, but no less important: the number of known principal neuron and interneuron subtypes in mammalian neocortex, for instance, now runs to many tens. These observations (element diversity plus emergent properties of assemblies) pose a major practical problem: if what makes the brain so special indeed results from these singularities on multiple scales, we should study networks, neurons and molecular constituents in combination rather than in isolation (our traditional approach). This will require a major evolution of our experimental techniques: scaling up (e.g., the number of simultaneous samples), making compatible the tools and techniques that have traditionally been used in isolation, and making sense of very high-dimensional datasets. These challenges will require the combined and coordinated efforts of many specialties, such as molecular biology, genetics, electrophysiology, optics and imaging, electronics, nanotechnology, mathematics, computer science and nonlinear dynamics. This is terrifically exciting, but it will require a new type of cooperativity between areas of science that have often worked separately.

2. Exhaustive Connection and Molecular Mapping of Brain Circuits. The remarkable development of new analytic tools for imaging and protein chemistry, to take but two examples, now enables us to catalog and describe the constituents of brains and networks to a remarkable degree. While the task is clearly enormous, it presents no major obstacle other than time and the optimization of data sampling, analysis and usability of results.
On a different level, the recent emergence of machine-vision-enhanced serial electron microscopy, the development of multi-chromatic genetic tools for neuronal labeling and the increased affordability of very large computing power make it possible to imagine a day when the connection matrix of a small to medium-sized brain (a fly’s to a mouse’s) will be known to a very reasonable degree of accuracy. While this knowledge will not, in and of itself, constitute understanding of the brain, it will most likely be an essential stepping stone on our path towards understanding. Once again, multi-disciplinarity and very-large-scale datasets characterize this research opportunity.

3. Behavior and Brain Activity. A major challenge for modern neuroscience is to explain perception and behavior in terms of neural activity. Given the size of the brain and the distributed nature of neural activity, it is becoming increasingly clear that sparse sampling of activity (i.e., our traditional method) is wholly inadequate. Techniques such as functional MRI give us a coarse-grained view of brain activity on a large scale, while patch-clamp recordings allow us to sample one or a handful of neurons at very high spatiotemporal resolution. But we really need to sample at the “mesoscale”, at the population level, with very high sampling density and in freely moving animals. This is a major technical challenge that will rely on major developments in the fields of optics, micro- and nano-electronics and computer science.