Simulating Populations of Neurons

George Parish

MSc Artificial Intelligence

2012/2013

"THE CANDIDATE CONFIRMS THAT THE WORK SUBMITTED IS THEIR OWN AND THE

APPROPRIATE CREDIT HAS BEEN GIVEN WHERE REFERENCE HAS BEEN MADE TO THE

WORK OF OTHERS.

I UNDERSTAND THAT FAILURE TO ATTRIBUTE MATERIAL WHICH IS OBTAINED FROM

ANOTHER SOURCE MAY BE CONSIDERED AS PLAGIARISM."

(Signature of student)

Simulating Populations of Neurons

George Parish

2013

SUMMARY

The goal of this project was to understand cognitive neuroscience to be able to simulate

networks of neurons, as well as to use analysis techniques to evaluate the interactions between

populations of neurons. To accomplish this, a lengthy literature review was undertaken to gain an

understanding of neuroscience. This would form the basis for simulations of networks of neurons to

determine: how neurons react to the background noise of surrounding spiking neurons, whether a

layered network can transmit information through precise spike-timing, as well as what initial

conditions are required for this synchronous spiking activity.

The project aimed to understand and implement known networks and techniques, as well as

to evaluate them by creating scripts to analyse the dynamics of populations of neurons.

ACKNOWLEDGEMENTS

Most of all I would like to thank my supervisor Dr. Marc de Kamps for the frequent meetings

which were key to the completion of this project. I would also like to thank him for his patience in

explaining topics and motivating me to push myself harder.

Thanks also to my assessor Professor Tony Cohn for giving valuable time to give feedback

which helped guide the project.

Finally I would like to thank my parents, who made it possible for me to follow my ambitions

and study for a Masters course in the first place. Thanks also to my new German family for being a

huge positive influence and supporting me through the year.

CONTENTS

Summary
Acknowledgements
List of Figures
1. Introduction
  1.1 Overview
  1.2 Aims and Objectives
  1.3 Minimum Requirements and Deliverables
  1.4 Relevance to Degree
  1.5 Project Management
  1.6 Research Methodology
  1.7 Research Questions
  1.8 Project Outline
2. Literature Review
  2.1 Machine Learning
    2.1.1 Connectionist Models
    2.1.2 Non-Connectionist Models
  2.2 Computational Modelling
    2.2.1 Population Firing Rate
    2.2.2 Raster Plot
    2.2.3 Voltage Trace
    2.2.4 Population Density
  2.3 Bio-Inspired Computing
    2.3.1 The Brain as a Whole
    2.3.2 Structure of a Neuron
    2.3.3 The Integrate-and-Fire Model
    2.3.4 Modelling Populations of Neurons
3. Experiments & Evaluation
  3.1 Implement a Nest Simulation
  3.2 Simulation of 10,000 Integrate-and-Fire Neurons
    3.2.1 Implementation
    3.2.2 Evaluation
  3.3 The Balanced Excitation/Inhibition Model
    3.3.1 Implementation
    3.3.2 Evaluation
  3.4 The Stable Propagation of Synchronous Spiking Model
    3.4.1 Previous Research
    3.4.2 Implementation of Background Noise
    3.4.3 Implementation of Network
    3.4.4 Evaluation
    3.4.5 Case Study: Dispersion of the Propagating Volley
    3.4.6 Case Study: Synchronised Initial Volley Causing Synchronisation
    3.4.7 Case Study: De-Synchronised Initial Volley Causing Synchronisation
    3.4.8 Case Study: Synchronised Initial Volley Causing Late Synchronisation
4. Conclusion
  4.1 Project Evaluation
    4.1.1 Aim and Minimum Requirements
    4.1.2 Exceeding Requirements
    4.1.3 Research Questions
  4.2 Challenges
  4.3 Future Work
Bibliography
References
Appendix A
  Project Reflection
Appendices B:1
  Initial GANTT chart
Appendices B:2
  Final GANTT chart
Appendices C:1
  MAIN FUNCTION FOR ANALYSING NEURONAL DATA
Appendices C:2
  FUNCTION TO PLOT EVOLUTION OF SYNCHRONOUS SPIKING
Appendix C:3
  NEST CODE FOR SYNCHRONOUS SPIKING MODEL

LIST OF FIGURES

Figure 1 Waterfall Methodology
Figure 2 Brodmann areas of the brain (Gazzaniga, 1998)
Figure 3 Types of biological neurons in the nervous system (Gazzaniga, 1998)
Figure 4 Anatomy and Functional areas of the brain (http://catalog.nucleusinc.com)
Figure 5 Structure of a neuron (left) and ions traversing the cell membrane (right)
Figure 6 The Resistance Capacitance (RC) Circuit
Figure 7 Excitatory and Inhibitory connections for a delta model neuron
Figure 8 DC generator and single delta neuron network
Figure 9 A single integrate-and-fire neuron with a single direct current input of 200pA (500-1000ms), 380pA (1000-1500ms) and 450pA (1500-2000ms)
Figure 10 Spike frequency of model neuron vs value of the direct current input
Figure 11 Alpha model analysis
Figure 12 Serial and Parallel chains
Figure 13 Converging and Diverging chains
Figure 14 Poisson distribution of spike events
Figure 15 Network for 1 DC and 2 Poisson generators connected to a single delta neuron
Figure 16 Simulation of delta neuron with Poisson and DC generators
Figure 17 Network of 10,000 delta neurons connected to 800 1Hz Poisson generators
Figure 18 Analysis of 10,000 neurons with noise
Figure 19 Time between spikes of 10,000 neurons with noise
Figure 20 Network of balanced excitation/inhibition model
Figure 21 Analysis of balanced excitatory/inhibitory network
Figure 22 Example of a synchronised and de-synchronised volley
Figure 23 Network showing how noise was connected to neurons in each layer
Figure 24 Experimenting with the value to multiply the threshold rate by (p)
Figure 25 Long simulation for p=235 & p=240
Figure 26 Network for the stable propagation of synchronous spiking model
Figure 27 Evolution of initial spike volleys, varying in number of neurons (a) with different synchronisations (σ)
Figure 28 Evolution of initial spike volleys (Diesmann, Gewaltig, & Aertsen, 1999)
Figure 29 Case study: Dispersion of fully synchronised initial volley (a=43, σ=0)
Figure 30 Case study: Synchronised initial volley (a=44, σ=0) causing synchronisation
Figure 31 Case study: De-synchronised initial volley (a=36, σ=4) causing synchronisation
Figure 32 Case study: Synchronised initial volley (a=43.5, σ=0) causing late synchronisation

1. INTRODUCTION

1.1 OVERVIEW

Understanding the brain has become a major interest in modern computing. The brain is the most advanced computational system that we know of, and the ability to replicate neuronal processes could vastly improve current computational techniques. However, the more we understand, the more we realise the magnitude of this undertaking. As there are billions of neurons, divided into different classes and joined by an even greater number of connections of differing types, a top-down approach has to be taken. We can only begin to replicate processes once the architecture of the brain has been mapped and different areas have been assigned different roles. The advances made over the last 100 years now allow us to consider processes at an individual level and to simulate them computationally.

This project considers the paper Stable propagation of synchronous spiking in cortical neural networks (Diesmann, Gewaltig, & Aertsen, 1999). The paper proposes that information can be carried by precise spike timing, and challenges the view that groups of neurons are incapable of transmitting signals with millisecond accuracy because of noise from surrounding neurons. The authors support this with a computational model of biological neurons from the cerebral cortex, in which a signal is propagated through consecutively connected groups of neurons. Successive groups of neurons fire more and more synchronously as the signal propagates, which supports the proposed theory.

This project will re-create this network of neurons as a computational model in the software NEST. By analysing the data output by this model, it should be possible to examine further the case in which neurons are kept ‘trigger-happy’ by background activity in the brain, and the effect of incoming signals of varying strength and synchronisation on the network of neurons.

1.2 AIMS AND OBJECTIVES

The overall aim of this project is to clarify the results of the proposed network (Diesmann, Gewaltig,

& Aertsen, 1999). In order to do so, an understanding of the biology of neurons and how they

interact with one another must be reached. This will be achieved by completing the following

objectives:

Practice computational methods to simulate individual neurons and networks of neurons.

Simulate networks of neurons to understand fundamentals of computational neuroscience.

Extract data from simulations and pre-process for analysis.

Use computational methods to analyse data from simulations.

Recreate proposed network for simulation.

Analyse data for evaluation and compare results.

1.3 MINIMUM REQUIREMENTS AND DELIVERABLES

The following are the minimum requirements set for the project.

1. NEST simulations of individual alpha and delta integrate-and-fire neurons.

2. NEST simulations of 10,000 delta integrate-and-fire neurons.

3. NEST simulation of synchronous spiking model.

4. MATLAB script for static and dynamic analysis of populations of neurons.

1.4 RELEVANCE TO DEGREE

This project builds on techniques and applies knowledge learnt from modules on the MSc Artificial

Intelligence in the School of Computing at the University of Leeds. The module Bio-Inspired

Computing (COMP5400M) gave the basis for the understanding and knowledge in the subject area

of computational neuroscience. This knowledge was required to implement the simulations

performed by the software NEST. The module Machine Learning (COMP5425M) was useful to give

an understanding as to how the software NEST used learning rules to simulate different models

connected in various networks. Skills and techniques learnt from the Computational Modelling

module (COMP5320M) were used to analyse and pre-process data obtained from simulations. This

project provided the challenge of further exploring an area of great interest in modern computing

and applying very useful techniques from computational analysis.

1.5 PROJECT MANAGEMENT

The initial project schedule is included in Appendix B:1. This schedule was revised as the minimum requirements changed during the project, most notably when the use of MIIND, another neural simulation tool, was dropped and more MATLAB coding for analysis was added. The revised schedule can be seen in Appendix B:2.

1.6 RESEARCH METHODOLOGY

The project aims to understand currently well-defined methods and models to simulate the real

biology of networks of neurons. Because of this, a well laid plan can be followed to reach the final

goal, with little foreseen deviation. Therefore, the waterfall method will be used. Under this method,

background research will be done on each type of simulation defined in the requirements. This will

effectively be the design stage shown in Figure 1. This will be followed by simulating the network

using the software NEST, undertaken during the implementation stage in Figure 1. Following this an

analysis can be undertaken to verify the results. Maintenance will take the form of

creating and updating MATLAB scripts for use in the analysis section. The waterfall approach, with its

linear set of objectives defined at the planning stage, is well suited to this type of project.

Figure 1 Waterfall Methodology

1.7 RESEARCH QUESTIONS

This project aims to answer the following questions:

How do neurons react to the background noise of other spiking neurons in the brain?

Under what conditions can a network of consecutively connected groups of neurons

transmit information?

What input is required for information to be transmitted by consecutively connected

groups of neurons?

Can the implementation of such a network explain real neuronal processes?

Can this implementation be applied in other areas of computer science?

1.8 PROJECT OUTLINE

The purpose of this thesis is to understand and implement evaluation techniques used in

computational neuroscience. This understanding starts in Chapter 2 by describing the principles of

computational modelling and machine learning and how they can be used to solve and evaluate real

biological problems. The notion that everything is made up of a set of processes and can therefore

be modelled is considered.

From there, Chapter 2 continues with an overview of the history of neuroscience. This includes the

examination of the methods used to discover the inner workings of the brain, from both an

anatomical and functional point of view. The structure of individual neurons will be examined. This

will lead to the examination of the electrical properties of neurons which enables them to act as

nodes in an interconnected network. This analysis will culminate with the explanation of the

integrate-and-fire model, a commonly used computational model that aims to replicate the inner

workings of a neuron. Simulations using the software NEST will aid these descriptions.

Once the underlying operations of a single neuron have been modelled, it is necessary to examine

the interaction between many neurons. Chapter 2 goes on to discuss different methods proposed by

relevant literature in which neurons connect and transmit information to one another. Individual

neurons are subject to noise from surrounding neurons that would require the simulation of a large

portion of the brain to emulate. Methods to by-pass this are discussed and implemented via

simulations in NEST.

Chapter 3 goes on to explain how each of the 2 simulations from the requirements list, as well as

one other, are implemented. Each experiment is analysed using MATLAB script to evaluate how the

population of neurons interacts within each network. The importance of each experiment is

explained and there is a logical progression through to the final Case Study evaluations.

Chapter 4 discusses the results obtained in Chapter 3 and attempts to answer the research questions proposed. It also gives an overview of the limitations of the project and the requirements fulfilled, and proposes future research.

2. LITERATURE REVIEW

The following section describes techniques used in this project by analysing the relevant literature.

Firstly, an overview of machine learning is given, followed by how it can be applied to the field of

computational neuroscience. Different types of machine learning algorithms are defined and their

usefulness predicted. This is followed by a section detailing the importance of computational

analysis of data received from machine learning algorithms. Different types of models that are used

in the field of computational neuroscience are defined with reasons for their use. Finally, there is a

detailed description of the real biology behind these models. This is important as an understanding

of the models can only be reached once the underpinning functionality is explained.

2.1 MACHINE LEARNING

Machine learning is a term to describe how learning rules can be applied for computer simulations

to ‘learn’ how to accomplish a task. Machine learning simulations effectively act as an extension of

the human brain, where a complex hierarchy of deterministic rules can sometimes outperform

human intuition, especially when vast amounts of data need to be produced or analysed.

As our methods of understanding the human brain improve, machine learning techniques have been

applied to re-create brain functions and have seen use in many disciplines. Those that study the

brain have used models to replicate brain functions and enhance our understanding of how the

brain enables the mind. Models have been inspired from these studies; artificial neural networks

(ANNs) have seen widespread use across many disciplines, often creating much more efficient

systems.

The argument of pan-computation states that everything can be defined as a set of processes. If a

process can be defined, according to machine learning it should be possible to quantify it and recreate it computationally. Therefore, according to the theory, everything can be learnt by a

computer simulation. The counter argument to this states that functions such as the weather,

digestive system and solar system, cannot be computationally explained by way of a deterministic

set of inputs and outputs, but they can be represented via a stochastic computational model to try

to predict future outcomes (Piccinini, 2007). However, this could simply be because not all

information is known. When we apply this argument to human endeavours to simulate cognition

there are two camps to choose between. Either cognition is the emergent behaviour of sets of

processes that are learnable to a machine, or it is another undefinable process that is separate from

the holistic set of processes it uses. This project assumes that pan-computation is a possibility when

it comes to simulating neuronal processes; else ultimately the models proposed throughout the

project would have no justification.

An accurate computational simulation can only be made if all information is known.

Often, this requires too much computational power or there is some uncertainty in experimental

literature. This means that certain assumptions are often made in order to maintain functionality.

These assumptions can be problematic and are usually refined or often redefined when more

knowledge of the process becomes available. This leads to different types of machine learning

algorithms. Some alter their assumptions to create a more functional model that may differ from the

original process. These models can claim to be inspired by the original but honed for a specific

purpose in industry. Other models attempt to replicate natural processes, thus inheriting any

computational problems from the original process. These models are especially hard to create as

they require sufficient testing under certain conditions, yet often still cannot guarantee to replicate

the original process.

When used to study the brain, these models fall into two classes: connectionist and

non-connectionist. Connectionist models maintain a brain-like network architecture where

connections are neuron-like. Models are usually developed via a connectionist learning algorithm,

assuming representations are distributed and processing is parallel. Non-connectionist models

maintain a functional architecture where the connections are not neuron-like. Models are developed

using suggested functionality from relevant literature of cognitive processes, where no assumptions

are made on representations or processing (Max, 2004).

2.1.1 CONNECTIONIST MODELS

Connectionist models are concerned with trying to replicate biological functions. These

models are made for the sole purpose of understanding the original function by simulating each of

its individual parts in as much detail as possible; because of this they are not useful for industry

purposes. The goal is to study the emergent behaviour from the sum of parts. For this reason, they

can often be computationally demanding, often requiring either a supercomputer or some form of

simplification for large simulations.

The software NEST, used in this project, uses Alpha model neurons to simulate all the known,

intricate processes of individual neurons and the way they interact as a way of simulating whole

populations of neurons. For this reason, section 2.3.2 will explain in detail these intricate processes.

2.1.2 NON-CONNECTIONIST MODELS

Artificial Neural Networks (ANNs) are an attempt to create a function that is computationally

similar to a brain function. They are made up of a set of neurons which are manipulated by a

learning algorithm. The neurons are discrete, dynamic instances whose states are dependent on

their input. Dynamic connections between neurons are weighted, allowing a continuous learning

algorithm to manipulate weights, and therefore the neurons’ states. The goal of the model is to

reach an optimised solution that can take into account independent processes with multiple

constraints.

When ANNs are used as a connectionist model to represent brain functions, neurons’ states bear

similarities to biological neurons, where non-linear activation rules produce spike-like behaviour.

However, the models cannot represent the full complexity of biological networks and require a

teacher to correct internal elements. It is not always clear whether ANNs represent single or sets of

biological neurons. Old information is easily lost when new information is presented. All of this means
that these computational models are useful in testing known hypotheses, but less so when
generating new predictions.

Whilst the software NEST is concerned with making connectionist models to simulate real neuronal

processes, the complexity of this undertaking is such that there are still some simplified models

available. The Delta model neuron models most of the same processes as the Alpha model neuron but

with some assumptions that limit its functionality as a connectionist model. In the context of the

software, this can be seen as a non-connectionist model. Both models are described in detail later.

2.2 COMPUTATIONAL MODELLING

Computational modelling is a method of representing data in order to show past and predict future

trends or patterns. It is a very useful technique as it can apply formula and rules to infer from large,

complex data sets. The previously described machine learning algorithms that replicate neuronal

processes output a stream of data that can be interpreted by simpler models. Computational models

offer accurate analysis that can guide evaluations of the learning algorithm. There are many

different types of model dependent on the nature of the simulation you are dealing with.

In computational modelling of the brain, there are often huge amounts of data to be processed. The

software NEST allows for the analysis of voltage data and spike data. The former gives a voltage

value (mV) for each neuron ID at every time step (ms) of the simulation. The latter gives a neuron ID and


a time for every spike that occurred in the simulation. These streams of data can be interpreted in

the following ways to give an analysis of single neurons or populations of neurons.

2.2.1 POPULATION FIRING RATE

The population firing rate gives the average frequency, in Hz, at which a single neuron or a group
of neurons fires. For example, a group of neurons firing at 12 Hz will each fire 12 times a second on
average. This can be calculated by counting the number of spikes fired by the neuron or group of
neurons in the data from the simulation, dividing by the number of neurons, and dividing again by
the length of the simulation in seconds (the length in milliseconds divided by 1000).
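As a minimal sketch of this calculation, assuming the spike data is available as a list of (neuron ID, time in ms) pairs, the rate could be computed as follows. The function name and the sample data are hypothetical and are not taken from NEST.

```python
def population_firing_rate(spikes, n_neurons, duration_ms):
    """Mean firing rate per neuron in Hz.

    spikes: list of (neuron_id, time_ms) pairs, one per recorded spike.
    """
    duration_s = duration_ms / 1000.0   # convert milliseconds to seconds
    return len(spikes) / n_neurons / duration_s


# Hypothetical data: 2 neurons, each spiking 12 times within 1000 ms.
spikes = [(nid, t) for nid in (1, 2) for t in range(0, 1000, 84)]
rate = population_firing_rate(spikes, n_neurons=2, duration_ms=1000)
print(rate)
```

With 24 spikes from 2 neurons over 1 second, the result is 12 Hz per neuron.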

2.2.2 RASTER PLOT

Raster plots give a basic representation of spike times for individual neurons. It is essentially

a 2-dimensional plot of neuron ID against time in milliseconds. A human analysis of a raster plot is

useful in finding patterns in spike times. A raster plot can also be analysed by an algorithm in more

detail. This is a technique that will be described in more detail in Chapter 3.

2.2.3 VOLTAGE TRACE

The voltage trace is useful for analysing individual neurons. It is a simple 2-dimensional plot

of voltage value (mV) against time (ms). It shows the trace of the neuron's voltage as it progresses
through time and can be useful in showing how the neuron's membrane potential is affected by
external stimuli. These diagrams will be shown in more detail when analysing neurons later in this

project.

2.2.4 POPULATION DENSITY

The previous three approaches offer static representations of a neuron or population of

neurons as a whole. The population density of neurons is a useful, dynamic analysis technique to see

how populations react to external events in real time. It plots a frequency histogram of neurons

according to their voltage values. Voltage values are normalised between 0 (at reversal potential)

and 1 (at threshold). This method and the terms used are all explained in full in the following
chapters.
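The normalisation and binning step can be sketched as below for a single time step. This is only an illustration: the function name, the number of bins and the sample voltages are assumptions, with -70 mV and -55 mV used as hypothetical reversal and threshold values.

```python
def population_density(voltages_mv, v_rev_mv, v_thresh_mv, n_bins=10):
    """Histogram of normalised potentials: 0 at reversal, 1 at threshold."""
    counts = [0] * n_bins
    for v in voltages_mv:
        x = (v - v_rev_mv) / (v_thresh_mv - v_rev_mv)
        x = min(max(x, 0.0), 1.0)                     # clip values outside [0, 1]
        counts[min(int(x * n_bins), n_bins - 1)] += 1  # x == 1 goes in the last bin
    return counts


# Hypothetical snapshot of five membrane potentials at one time step.
snapshot = [-70.0, -69.0, -62.5, -56.0, -55.0]
density = population_density(snapshot, -70.0, -55.0, n_bins=3)
print(density)
```

Repeating this for every time step of the voltage data gives the dynamic view of the population described above.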


2.3 BIO-INSPIRED COMPUTING

An accurate machine learning algorithm for use in simulation is reliant on the accuracy and extent of

the real-world information it is based upon. The information must be identified and quantified, and
parameters must be identified to enable a functional model. Parameters for a model of the brain

include inputs, outputs, the type and multitude of connections, as well as the architecture of the

space that the function operates in. All of these are defined in the relevant literature from

neuroscience, a brief account of which is given in the following section.

2.3.1 THE BRAIN AS A WHOLE

This section deals with understanding the methods used to extract the information that

machine learning algorithms in computational neuroscience are based upon. It gives a brief history

of the achievements in medical neuroscience.

ARCHITECTURE

Cytoarchitectonics is a method used to determine the different cell types in different brain

regions. This is achieved by taking tissue stains and analysing the cell structure. By this method, 52

distinct regions of the human brain were originally identified (Brodmann, 1909); this has since been

redefined as 38 by using modern techniques such as Magnetic Resonance Imaging (MRI) and

electron microscopes – which offer a resolution of about 1 cubic millimetre. This has allowed for a

visual model of the brain as a whole physical object (Figure 2), and the evaluation of the space that

brain functions operate in. The connective fibres of the brain are too dense to allow us to view

individual neurons and connections in this way, as connective highways become merged. The

BigBrain project is the latest project which analyses the state space of the brain (Amunts, et al.,

2013). This method sliced the brain into 7400 sections, stained them and scanned them into a
supercomputer to create a 3D map of the brain, offering an unparalleled resolution of 20
micrometres.

Figure 2 Brodmann areas of the brain (Gazzaniga, 1998)


NEURONS

The method of impregnating neurons in a tissue stain with silver (Golgi, 1898) made it

possible to fully visualise them. This method was used to prove that neurons are independent,

unitary entities (Figure 3), disproving the theory that the brain was made up of a continuous mass of

tissue that shared a common cytoplasm (Cajal, 1906). After identifying the structure of a neuron, it

was discovered that they transmitted electrical information in only one direction – a landmark

discovery that forms the basis of artificial neural-network models used today. Neuroanatomists

today use different chemicals in place of silver (Hokfelt, 1984) to create accurate, visual models of

the connections between neurons and their locations in the brain. Neurons from different locations

differ in appearance to suit their functional role. This paper considers pyramidal cells from the

cerebral cortex.

PROCESS

The most definitive method for understanding brain functions is called single-cell recording.
This method is performed on animals (Van Der Velde & De Kamps, 2001) or human volunteers,
where an electrode is inserted into the brain and records the electrical activity of a single
neuron. The neuron fires an electrical impulse when active. The goal of this method is to
experimentally manipulate conditions that cause a consistent firing of isolated cells, thus finding the
functional purpose of those cells. A functional model can then describe the interaction of neurons in
a specified region of the brain. However, brain functions involve independent processes in various
brain regions, so a function cannot be fully described by the response properties of individual
neurons. Nevertheless, the understanding gained from this method forms the basis of computational
models of neurons used today.

Figure 3 Types of biological neurons in the nervous system (Gazzaniga, 1998)


FUNCTIONAL ROLE

Research has proved that areas of the brain with differing cell structures also represent

different functional regions of the brain. While functions are made up of independent processes
where neurons communicate across varied regions of the brain, it is possible to localise specific
functions to specific regions. The method of electrical stimulation (Penfield & Jasper, 1954)
showed that motor control was performed by the motor and somatosensory cortices, uncovering a
visual model for the motor representation of the body. MRI scanning can reveal how blood flows to

active regions of the brain, enabling a map of functionality of the brain as seen in Figure 4. This

project is concerned with the outermost layer of the brain, the cerebral cortex. It is largely

responsible for memory, attention, language and consciousness (Abeles, 1991).

Figure 4 Anatomy and Functional areas of the brain (http://catalog.nucleusinc.com)


2.3.2 STRUCTURE OF A NEURON

The following section details the molecular structure of a neuron and the electrical charge that

molecules can contribute. Only by considering the smallest transitions in a single neuron can we

understand the implications of the interaction between many. This will then allow different models

to be considered in the succeeding section that attempt to replicate the processes described here.

CELLULAR STRUCTURE

The defining feature of a neuron is its ability to transmit information to surrounding cells.

This means they are particularly prevalent in the brain and nervous system, the purposes of which

are to receive and transmit signals across the body. Synapses between connected cell membranes

are the method employed by neurons to achieve their signalling purpose. A synapse is initiated by

the neuron's nucleus and travels down the axon where it uses the axon terminal to connect to the
dendrites of other neurons (Figure 5).

Like other living cells, a neuron consists of a cytoplasm surrounded by a cell membrane. The

cytoplasm is made up of different types of molecules, in particular, positive and negatively charged

ion molecules. A synapse is made possible by ion channels embedded in the cell membrane. These

act as tunnels through the impermeable membrane that allow charged ion molecules to flow in and

out, altering the overall charge of the neuron.

Figure 5 Structure of a neuron (left) and ions traversing the cell membrane (right)


As there are different types of ion molecules, different types of ion channels exist that will only allow

passage to a certain type of ion molecule. If the neuron is to operate properly, it is important to

maintain differences in the concentration of ions inside and outside of the cell. This is performed by

ion pumps that expend energy to maintain this equilibrium.

MEMBRANE POTENTIAL

By definition, a neuron must be able to receive and transmit signals. This is done by

undertaking an on/off approach, where the neuron is either active or inactive. By default, a neuron

is inactive, where its internal charge from the collection of ion molecules is negative. This overall
charge is called the membrane potential and can be changed by ion channels opening or closing. A
membrane potential can have a value in the range of about -90 to +50 mV. The scale for these
potentials is set by the thermal voltage (VT), which is about 24-27 mV in cold and warm blooded
animals. Thus, the membrane potentials causing a neuron to be inactive or active range from about
-3 to +2 times VT respectively. Such a state change caused by the membrane potential is termed an
action potential, and is the cause of synapses.

ELECTRICAL PROPERTIES

Thus far we have described how the membrane potential influences the flow of ion

molecules within a neuron to directly change its state. But how is the membrane potential altered in

order to bring about an action potential? In order to answer this question, we must consider the

electrical properties of the neuron. Figure 6 shows the RC circuit, which works in much the same way

as a model neuron, where a voltage source sends energy through the resistor to be stored in the

capacitor. Once the capacitor’s limit is reached, an excess charge is released.

Figure 6 The Resistance Capacitance (RC) Circuit


As previously mentioned, a neuron's typical internal charge from its collection of ion molecules is
negative. This means that there has to be a counterbalance of positively charged ion molecules just
on the other side of the neuron's cell membrane. Because of this, the membrane creates a
capacitance ($C_m$), where the voltage across the membrane ($V$) and the amount of excess charge ($Q$)
form the standard equation for a capacitor:

$$Q = C_m V$$

All neurons have roughly the same capacitance per unit area of membrane, about 10 nF/mm². The
membrane capacitance is proportional to the surface area of the neuron. Neuronal surface areas
tend to be in the range 0.01 to 0.1 mm², so the membrane capacitance for a whole neuron is
typically in the range 0.1 to 1 nF.

Determining the membrane capacitance is essential when it comes to calculating how much current
is required over time to change the membrane potential. This is given by the formula:

$$C_m \frac{dV}{dt} = \frac{dQ}{dt}$$

where $dQ/dt$ represents the cell's incoming current and $C_m\,dV/dt$ gives the amount of current
required to change the membrane potential.
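The two capacitance relations can be checked numerically with a short sketch. The function names are hypothetical; the only figure taken from the text is the specific capacitance of 10 nF/mm², and the rate of change of 1 mV/ms is an arbitrary illustration.

```python
SPECIFIC_CAPACITANCE_NF_PER_MM2 = 10.0   # value quoted in the text


def membrane_capacitance_nf(area_mm2):
    """Total capacitance is the specific capacitance times the surface area."""
    return SPECIFIC_CAPACITANCE_NF_PER_MM2 * area_mm2


def charging_current_na(c_m_nf, dv_dt_mv_per_ms):
    """Current needed to change V at a given rate: I = C_m * dV/dt.

    Units: nF * (mV/ms) = 1e-9 F * 1 V/s = 1e-9 A = nA.
    """
    return c_m_nf * dv_dt_mv_per_ms


c_small = membrane_capacitance_nf(0.01)        # smallest neuron in the quoted range
c_large = membrane_capacitance_nf(0.1)         # largest neuron in the quoted range
i_needed = charging_current_na(c_large, 1.0)   # current for a 1 mV/ms change
print(c_small, c_large, i_needed)
```

The two areas reproduce the 0.1 to 1 nF range, and a 1 nF neuron needs about 1 nA to change its potential by 1 mV every millisecond.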

REACTION TO EXTERNAL STIMULI

Now that we understand how the voltage of a membrane potential can be changed, the next
step in causing an action potential is to hold the membrane potential steady at a value that is not its
resting one. This requires a constant current ($I_e$) to enforce a change in voltage ($\Delta V$). According to
Ohm's law:

$$\Delta V = I_e R_m$$

there has to be a resistance to input. In this case, it is called the membrane resistance ($R_m$). This
resistance is inversely proportional to the surface area of the neuron. For neurons with an area in the
range 0.01 to 0.1 mm², the membrane resistance is usually between 10 and 100 MΩ. For example, to
cause an action potential of 50 mV where the resting value of the membrane potential is 25 mV and
the membrane resistance is 50 MΩ, we need a constant current of 0.5 nA to hold the membrane
potential the required 25 mV from its resting value.


Changes in the membrane potential occur on a characteristic time scale, called the membrane
time constant. This is the membrane resistance times the membrane capacitance, typically between
10 and 100 ms:

$$\tau_m = R_m C_m$$
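The worked example for the holding current and the definition of the time constant can be reproduced with a few lines. This is a sketch with hypothetical function names; the 0.5 nF capacitance used for the time constant is an assumed value inside the range quoted earlier.

```python
def holding_current_na(delta_v_mv, r_m_mohm):
    """Ohm's law, I = dV / R. Units: mV / MOhm = 1e-3 V / 1e6 Ohm = nA."""
    return delta_v_mv / r_m_mohm


def membrane_time_constant_ms(r_m_mohm, c_m_nf):
    """tau_m = R_m * C_m. Units: MOhm * nF = 1e6 * 1e-9 s = ms."""
    return r_m_mohm * c_m_nf


i_e = holding_current_na(25.0, 50.0)           # example from the text
tau_m = membrane_time_constant_ms(50.0, 0.5)   # assumed 0.5 nF neuron
print(i_e, tau_m)
```

A shift of 25 mV across 50 MΩ requires 0.5 nA, matching the example, and the assumed neuron has a time constant of 25 ms, inside the typical 10 to 100 ms range.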

INTRACELLULAR ACTIVITY

Measurements of the membrane potential in different parts of a neuron often give
different values. These different potentials across the neuron cause ions to flow within it in order to
equalize the differences. However, the intracellular medium produces a resistance to this flow of
ions. This resistance is particularly high in long narrow parts
of the neuron, for example dendritic or axonal cable. In such parts, the longitudinal resistance is
proportional to the length of the neuron segment and inversely proportional to its cross-sectional
area. The constant of proportionality is called the intracellular resistivity.

All of this means that it is possible to calculate how much voltage is required to force n amount of

current down a neuronal segment of any size. This is important when it comes to predicting the

ramifications of voltage from an action potential forcing current through the neuron and causing it

to interact with other neurons. The intracellular resistance to current flow means that different parts

of the neuron will measure different voltages at the time of an action potential. Because smaller

neurons with less axonal or dendritic cable have less intracellular resistance, they are termed

electrotonically compact and can be described by a single membrane potential as opposed to many.
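A small sketch makes the dependence on segment geometry concrete. It assumes a cylindrical segment, so that the longitudinal resistance is the resistivity times the length divided by the cross-sectional area; the resistivity of 1 kΩ·mm and the segment dimensions are illustrative assumptions, not values from this project.

```python
import math


def longitudinal_resistance_mohm(length_mm, radius_mm, r_l_kohm_mm=1.0):
    """R_L = r_L * L / (pi * a^2) for a cylindrical segment, in megaohms."""
    r_kohm = r_l_kohm_mm * length_mm / (math.pi * radius_mm ** 2)
    return r_kohm / 1000.0


def voltage_for_current_mv(current_na, r_l_mohm):
    """Voltage needed to drive a current down the segment: nA * MOhm = mV."""
    return current_na * r_l_mohm


# Hypothetical dendritic segment: 100 micrometres long, 2 micrometres in radius.
r_seg = longitudinal_resistance_mohm(length_mm=0.1, radius_mm=0.002)
v_needed = voltage_for_current_mv(1.0, r_seg)
print(r_seg, v_needed)
```

Under these assumptions the segment has a resistance of roughly 8 MΩ, so about 8 mV is needed to force 1 nA along it; halving the radius quadruples the resistance.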

THE ROLE OF DIFFUSION

The electrical properties of neurons are not the only force responsible for the flow of ions in

the ion channels. As mentioned previously, differences in the concentration of ions on the inside and
outside of the cell membrane are maintained by ion pumps. These concentration differences drive
ions through the channels by way of diffusion. Thus, there is a
balance between the probability that an ion has sufficient energy to overcome the membrane
potential and enter or exit a neuron, and the ion traversing an ion channel due to the
diffusion gradients. In other words, there must be a balance between electric and diffusive forces.

This occurs because if the former were solely responsible, there would be no control over the

proportion of ions inside and outside of the neuron – potentially leading to instability.


FINDING AN EQUILIBRIUM

The diffusive properties of the ion pumps compensate for this by controlling the rate at

which ions flow in and out of the neuron. This can be given by the Nernst equation:

$$E = \frac{V_T}{z} \ln\left(\frac{[\text{outside}]}{[\text{inside}]}\right)$$

where $E$ denotes the potential of an ion channel required to enforce an equilibrium between the
two forces, $V_T$ is the thermal voltage (about 24-27 mV), $z$ is the charge of the ion and [outside] and
[inside] denote the concentration of ions on either side of the neuron's cell membrane.
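The Nernst equation is simple to evaluate directly. In the sketch below the thermal voltage of 25 mV and the 20-fold concentration ratio for potassium are assumed, illustrative values, and the function name is hypothetical.

```python
import math


def nernst_potential_mv(z, conc_outside, conc_inside, v_t_mv=25.0):
    """Equilibrium potential E = (V_T / z) * ln([outside] / [inside])."""
    return (v_t_mv / z) * math.log(conc_outside / conc_inside)


# Hypothetical potassium-like ion: charge +1, 20 times more concentrated inside.
e_k = nernst_potential_mv(z=1, conc_outside=1.0, conc_inside=20.0)
print(e_k)
```

For this ratio the equilibrium potential is about -75 mV; equal concentrations on both sides give 0 mV.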

The value of E lies within different ranges dependent on the type of ion, for example potassium (K+)

ion channel potentials lie between -70 and -90 mV, whilst sodium (Na+) and calcium (Ca2+) ion

channel potentials are 50 and 150 mV or higher respectively. As mentioned previously, this shows

how ion channels can be highly selective. We can approximate for E over the different ion channels

using the Goldman equation. E can then be termed a reversal potential, as when the membrane

potential changes value to greater than or less than E, the flow of ions in that channel reverses.

A neuron's reversal potential determines what type of neuron it is. For example, a depolarized
neuron (more positive membrane potential) is when there are many sodium or calcium ion channels
active in a neuron. A hyperpolarized neuron (more negative membrane potential) is when there are
more active potassium ion channels. As there are two types of neuron because of the reversal
potential, there are also two different types of synapses. Inhibitory synapses occur when a nearby
hyperpolarized neuron spikes, lowering the neuron's membrane potential. Excitatory synapses occur
when a nearby depolarized neuron spikes, raising the neuron's membrane potential.

THE MEMBRANE CURRENT

Lastly, the membrane current is simply the total ion current flowing across a membrane.

When positive ions leave a neuron, the current is positive; when they enter, the current is negative. As
neurons are of different sizes, the membrane current per unit area is more useful, denoted by $i_m$.
The equation for the membrane current is as follows:

$$i_m = \sum_i g_i (V - E_i)$$


The reversal potentials of different types of ion current are denoted as $E_i$, where $i$ is an ion type. The
difference between the membrane potential ($V$) and the reversal potential ($E_i$) is called the driving
force, and the factor $g_i$ is the conductance per unit area.
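The sum over ion types can be written as a one-line function. The conductances and reversal potentials below are made-up values chosen only to show the sign convention; with conductance in µS/mm² and potentials in mV, the result is in nA/mm².

```python
def membrane_current(v_mv, channels):
    """Sum of g_i * (V - E_i) over a list of (g_i, E_i) pairs."""
    return sum(g * (v_mv - e_rev) for g, e_rev in channels)


# Hypothetical channel types: (conductance per unit area, reversal potential).
channels = [(0.3, -80.0),   # potassium-like, E below the membrane potential
            (0.1, 50.0)]    # sodium-like, E above the membrane potential
i_m = membrane_current(-70.0, channels)
print(i_m)
```

At -70 mV the first channel contributes a positive (outward) current because the driving force is +10 mV, while the second contributes a larger negative (inward) current because its driving force is -120 mV.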

At this point we can see that certain factors attributing to the membrane current do not fluctuate

much and remain somewhat constant. Therefore we can make a simplifying assumption that they

will always remain constant and lump them together to form a single term;

$$\bar{g}_L (V - E_L)$$

This is called the leakage or passive conductance. Because of the assumptions about these values'
seemingly constant nature, it is important not to use this formula as the basis for others, but to use

it to manually adjust the resting potential of the model neuron to that of the real neuron.

2.3.3 THE INTEGRATE-AND-FIRE MODEL

The integrate-and-fire model is the most commonly accepted model used when simulating

neurons. It is a simple model that allows any external inputs to alter the membrane potential (V) of

the neuron measured in millivolts. The model specifies a resting potential (E) which is the normal

resting value of the membrane potential. There is also a threshold (Vth) value and a reset (VR) value.

The threshold value specifies at what value an action potential occurs and the reset value gives the

value to which the neuron is reset to after an action potential. The integrate-and-fire model can

simulate many different types of neurons by allowing these attributes to change.

The software NEST allows for different types of integrate-and-fire neurons dependent on how much

simplicity is required. Two such models will be considered in this project, the alpha model and the

delta model. The alpha model works more like a real neuron, where the conductance of different ion

channels has more of a direct effect on the overall voltage of the membrane potential. The delta

model makes the assumption that this conductance based approach has minimal effect on the

neuron, and so uses a leakage term (as described previously) that assumes there is no variation in

the overall conductance of the membrane. This means that external events (i.e. the spiking of

neighbouring neurons) have a constant effect on a delta integrate-and-fire neuron, but a varying

effect on an alpha integrate-and-fire neuron – dependent on the conductance of ion channels at the

time of the event.


SOLUTION TO THE INTEGRATE-AND-FIRE MODEL

The standard equation for an integrate-and-fire model can be given as a homogeneous or
non-homogeneous equation, dependent on what is required. The homogeneous equation (below left)
is the formula for the membrane potential with no external input; the non-homogeneous equation
(below right) allows for external inputs such as constant currents or membrane conductance.

$$\tau \frac{dV}{dt} = -V \qquad\qquad \tau \frac{dV}{dt} = -V + I$$

The homogeneous equation (left) dictates that the change of voltage over time ($dV/dt$) multiplied by
the membrane time constant ($\tau$) is equal to the negative membrane potential ($-V$). It is worth noting that
the value $V$ here represents the driving force between the membrane potential and the resting
potential ($V - E$), and the varying conductance of the membrane due to the opening and
closing of different ion channels ($g_i$) is assumed constant and ignored. The non-homogeneous
equation in its simplest form (right) gives the same formula but with an added input of a constant
current ($I$). Solving these equations allows the calculation of the neuron’s membrane potential at any
time.

$$V(t) = V(0)\,e^{-t/\tau} \qquad\qquad V(t) = I + (V(0) - I)\,e^{-t/\tau}$$

The solution to the homogeneous equation for the integrate-and-fire model (left) shows how the
voltage at time $t$ ($V(t)$) is given by the voltage at time zero ($V(0)$) times the exponential ($e$) to the
power of minus time $t$ divided by the time constant tau ($-t/\tau$). We call this solution the ‘general
solution.’ We then find any solution to the non-homogeneous equation and call it the ‘particular
solution.’ In this case we assume $V$ is constant and then derive $V = I$ as a solution. The
‘most general’ solution can then be found by adding the ‘general’ and ‘particular’ solutions together.
This gives us

$$V(t) = I + k\,e^{-t/\tau}$$

where $k$ is solved from the initial condition, $k = V(0) - I$, to give the solution to the
non-homogeneous equation on the right.


CALCULATE TIME BETWEEN SPIKES

We can use the solution to the non-homogeneous equation (above right) to find the time
between spike events of the integrate-and-fire model, generated because of the constant current
input $I$. This is done by looking at when this solution is equal to the threshold value of the model ($V_{th}$)
and solving for $t$:

$$t = \tau \ln\left(\frac{V(0) - I}{V_{th} - I}\right)$$

where time ($t$) is equal to the time constant ($\tau$) times the natural log of the voltage at $t = 0$ minus the
input current value ($I$) divided by the threshold value ($V_{th}$) minus the input current value ($I$). This gives
the time between spike events assuming that the current stays constant and there are no
other external events.
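The closed-form solution and the time to threshold described above can be checked against each other with a short sketch. Following the convention of this section, the potential is measured relative to rest and the input I is expressed in the same units as the voltage; the numerical values (10 ms time constant, threshold 15 mV above rest, input of 20) are illustrative assumptions.

```python
import math


def v_at(t, v0, i_in, tau):
    """Solution of tau * dV/dt = -V + I with V(0) = v0."""
    return i_in + (v0 - i_in) * math.exp(-t / tau)


def time_to_threshold(v0, i_in, v_th, tau):
    """Time at which V(t) first equals v_th, assuming v0 < v_th."""
    if i_in <= v_th:
        return math.inf   # the potential saturates at I and never crosses threshold
    return tau * math.log((v0 - i_in) / (v_th - i_in))


tau, v0, v_th, i_in = 10.0, 0.0, 15.0, 20.0
t_spike = time_to_threshold(v0, i_in, v_th, tau)
print(t_spike, v_at(t_spike, v0, i_in, tau))
```

Evaluating the solution at the computed time returns the threshold value, and an input that is too weak gives an infinite time, which corresponds to a neuron that never fires.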

REFRACTORY PERIOD

The integrate-and-fire model makes some assumptions that deviate from the original

biological function. This includes using a simplified model to initiate an action potential as well as using

a linear approximation for the membrane current. These assumptions mean that the model will

likely not perform in the same way as its biological counterpart, meaning that the model has to be

manually tweaked if it is to replicate the original function with a certain degree of accuracy. In this

case, within the model, an action potential could theoretically be caused instantly after the previous

action potential. This we know could not be true, as a biological neuron requires more time for

particular ions to traverse its membrane in order to change the overall conductance of the

membrane potential and cause a second action potential.

This problem is solved by implementing a refractory period. After an action potential, the neuron is

clamped to its resting potential for a specified time, causing an absolute refractory period. This

means that the neuron will ignore any external inputs during this time. Immediately after the

specified clamp time, a relative refractory period is introduced, where all external inputs are

weighted so they have a smaller effect on the neuron. This means that a second action potential is

inhibited but not impossible within this time. After the refractory period has finished the model

neuron continues as before.
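A minimal sketch of the absolute refractory period is given below, using forward-Euler integration of the integrate-and-fire equation with the potential measured relative to rest. The relative refractory period is not modelled here, and all parameter values and names are assumptions made for illustration; this is not the NEST implementation.

```python
def simulate_lif(i_in, t_end_ms, dt=0.1, tau=10.0, v_th=15.0, v_reset=0.0, t_ref=2.0):
    """Integrate tau * dV/dt = -V + I and return the list of spike times (ms)."""
    n_ref = int(round(t_ref / dt))        # refractory period in whole time steps
    v, ref_left, spike_times = v_reset, 0, []
    for step in range(int(round(t_end_ms / dt))):
        if ref_left > 0:
            ref_left -= 1                 # clamped: external input is ignored
            continue
        v += dt * (-v + i_in) / tau       # one Euler step
        if v >= v_th:
            spike_times.append((step + 1) * dt)
            v = v_reset                   # clamp back to the resting value
            ref_left = n_ref
    return spike_times


spikes_with = simulate_lif(20.0, 1000.0)
spikes_without = simulate_lif(20.0, 1000.0, t_ref=0.0)
print(len(spikes_with), len(spikes_without))
```

With the same input, the neuron with a refractory period produces fewer spikes, and no two of its spikes are closer together than the clamp time.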


DELTA MODEL

The delta model is the implementation of the leaky-integrate-and-fire model described earlier,

where the membrane potential jumps on each spike arrival. The event where the threshold is

crossed is followed by an absolute refractory period where the membrane potential is clamped to

the resting potential. Any spikes that arrive during the refractory period are discarded. This can be

considered as a non-connectionist model in context of the software NEST, as it makes some

simplifying assumptions.

POST-SYNAPTIC SPIKE

Figure 7 is the NEST implementation of a single delta model neuron receiving a single spike

from a neighbouring neuron. The amount the potential goes up depends on the weight of the

connection between the two neurons. The higher the weight of the connection, the more a spike

from one neuron will influence the other. As the neuron is a delta model any effect from external

events is constant, and so the effect of the spike on its membrane potential is proportional to the

weight. This can be seen in the excitatory connection diagram from Figure 7 where weights of 5, 10

and 15 were used. If a single external spike had enough effect on the neuron to immediately raise
the potential to its threshold of -55 mV, then it would also immediately spike, thus resetting it to its
reversal potential of -70 mV and enabling the refractory period, somewhat unrealistically causing no
change in potential overall.

The inhibitory connection diagram of Figure 7 shows how the neuron's potential goes down when

there is a negative connection between the two neurons. This is to simulate how inhibitory synapses

from biological neurons with negatively charged ions affect their neighbours. Once the potential of

the delta model neuron has been changed by the excitatory or inhibitory spike, there is a gradual

change back to the reversal potential of -70mV. This simulates how the neuron’s membrane

gradually releases the charged ions that influenced the charge of its membrane potential.
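The jump-and-decay behaviour described for a single incoming spike can be sketched as follows. The sketch assumes that the connection weight is the size of the jump in mV and that the decay back to -70 mV is exponential with a 10 ms time constant; both are assumptions for illustration, and threshold crossing is deliberately left out.

```python
import math


def delta_psp_trace(weight_mv, t_spike_ms, times_ms, e_l=-70.0, tau_m=10.0):
    """Potential of a delta-model neuron receiving one spike at t_spike_ms."""
    trace = []
    for t in times_ms:
        if t < t_spike_ms:
            trace.append(e_l)   # at rest before the spike arrives
        else:
            # instantaneous jump by the weight, then exponential decay to rest
            trace.append(e_l + weight_mv * math.exp(-(t - t_spike_ms) / tau_m))
    return trace


times = [0.0, 10.0, 20.0, 30.0]
peaks = {w: max(delta_psp_trace(w, 10.0, times)) for w in (5.0, 10.0, 15.0)}
trough = min(delta_psp_trace(-5.0, 10.0, times))   # inhibitory (negative) weight
print(peaks, trough)
```

The peak displacement scales in proportion to the weight, and a negative weight produces a mirror-image dip below the resting value.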

Figure 7 Excitatory and Inhibitory connections for a delta model neuron


CONSTANT INPUT

Figure 8 shows how a DC generator can be connected to a single neuron. The current
generated by the DC generator is injected into the delta model neuron (d1) via a positive
connection, raising its membrane potential.

Figure 8 DC generator and single delta neuron network

Figure 9 shows a single integrate-and-fire neuron with a varying direct current input over time. It can

be seen how the current needs to be of a sufficient amount to cause the membrane potential to rise

to the threshold value (here -55 mV). Once it hits threshold, the potential is reset to -70 mV before

rising again due to the current. The strength of the input current determines how fast the

membrane potential rises, as can be seen in the period 1500-2000ms where the firing rate is much

faster due to a higher current.

The method of supplying the model neuron with a direct current input is used to test the

capabilities of the model and is not meant to replicate real processes. Realistically, a neuron’s inputs

will be from surrounding neurons that spike irregularly, causing an almost unpredictable and

irregular stream of inputs that can alter the membrane potential.

Figure 9 A single integrate-and-fire neuron with a single direct current input of 200pA (500-1000ms), 380pA (1000-1500ms) and 450pA

(1500-2000ms)


Figure 10 is a graph showing the spike frequency of the model neuron against the value of the direct

current input. This graph shows how there needs to be a sufficient current in order to cause spiking

in the neuron, shown by spike frequency only rising past the 380pA mark. There is initially a sharper

rise in spike frequency due to a stronger current, but as more current is applied, this rise is less

dramatic. This is due to the spike frequency rising and encroaching on the territory of both the

relative refractory period and the absolute refractory period causing the input to have less of an

effect to having no effect at all. If there were no refractory period modelled, the spike frequency

would rise in a linear fashion.
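The shape of such a curve can be approximated analytically from the time-between-spikes formula plus an absolute refractory period. In the sketch below all parameter values (250 pF capacitance, 10 ms time constant, 2 ms refractory period, -70 mV rest and reset, -55 mV threshold) are assumptions chosen so that spiking starts near the 380 pA mark; the relative refractory period is not modelled, and this is not the NEST implementation.

```python
import math


def firing_rate_hz(i_pa, c_m_pf=250.0, tau_m_ms=10.0, e_l=-70.0,
                   v_th=-55.0, v_reset=-70.0, t_ref_ms=2.0):
    """Steady firing rate of a leaky integrate-and-fire neuron for a constant current."""
    v_inf = e_l + i_pa * tau_m_ms / c_m_pf   # steady-state potential: pA * ms / pF = mV
    if v_inf <= v_th:
        return 0.0                           # current too weak to reach threshold
    isi_ms = t_ref_ms + tau_m_ms * math.log((v_inf - v_reset) / (v_inf - v_th))
    return 1000.0 / isi_ms


for current in (200.0, 380.0, 450.0):
    print(current, firing_rate_hz(current))
```

Under these assumptions 200 pA produces no spikes, 380 pA is just above the spiking threshold, and 450 pA gives a clearly higher rate; however large the current, the rate stays below 1000 / 2 = 500 Hz because of the refractory period.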

Figure 10 Spike frequency of model neuron vs value of the direct current input

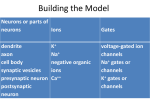

ALPHA MODEL

As mentioned previously, alpha models aim to be a more realistic representation of a

biological neuron. The effect of external stimuli on the alpha model neuron is not constant as they

depend on the conductance of the ion channels at that point in time. Ion channels operate by

opening and closing a swinging gate. As there are different types of ion channels, the neuron's
membrane potential will take on different characteristics depending on which ones are open. For
example, if potassium channels are open then the neuron's potential will be more hyperpolarised
(more negative) and if sodium or calcium channels are open then the potential will be more
depolarised (more positive). This is considered a connectionist model in the context of the software
NEST as it does not make any simplifying assumptions in its model.


SOLUTION

In order to calculate the effect of an input on the model neuron, we must calculate the
probability that a certain ion channel gate is open.

$$\frac{dn}{dt} = \alpha_n (1 - n) - \beta_n n$$

The above equation describes the gating equation, where the rate of change of the probability of the
gate being open ($dn/dt$) is equal to the opening rate of the gate ($\alpha_n$) multiplied by the probability that
the gate is closed ($1 - n$), minus the closing rate of the gate ($\beta_n$) times the probability of the gate being
open ($n$). The opening and closing rates can be described as follows:

(

)

(

)

The two formulas for the opening rate and closing rate are given by two exponential functions.

These functions depict the energy required for the gate to open and close, where

&

represents the amount of charge being moved as well as the distance it travels and

show

&

the energy required to close or open the gate.
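The behaviour of the gating equation can be sketched numerically. The example below is a minimal forward-Euler integration that assumes constant opening and closing rates (the values of `alpha` and `beta` are hypothetical, chosen only for illustration). In the model itself the rates are exponential functions rather than constants. For constant rates the open probability relaxes to alpha / (alpha + beta).

```python
def simulate_gate(alpha, beta, n0=0.0, dt=0.01, t_end=20.0):
    """Integrate dn/dt = alpha * (1 - n) - beta * n with forward Euler.

    alpha, beta : opening and closing rates (per ms), held constant here
    n0          : initial probability that the gate is open
    dt, t_end   : time step and duration (ms)
    Returns the list of n values, one per time step.
    """
    n = n0
    trace = [n]
    for _ in range(int(round(t_end / dt))):
        dn_dt = alpha * (1.0 - n) - beta * n
        n += dt * dn_dt
        trace.append(n)
    return trace

alpha, beta = 0.5, 0.1            # hypothetical rates (per ms)
trace = simulate_gate(alpha, beta)
n_inf = alpha / (alpha + beta)    # steady-state open probability
print(f"final n = {trace[-1]:.4f}, steady state = {n_inf:.4f}")
```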

POST-SYNAPTIC SPIKE

Both of these exponential functions can be seen in action in Figure 11, where a single alpha model neuron receives one spike from a neighbouring neuron. The alpha model neuron from NEST is connected in the same way as in the balanced excitation/inhibition model from the NEST documentation (Balanced random network model). The rise in membrane potential due to the spike is modelled by the exponential for the opening rate, and the fall in membrane potential after the spike is modelled by the exponential for the closing rate.

We can calculate the time that the voltage takes to reach the peak of an alpha synapse by differentiating the equation of the alpha model with respect to time and setting the derivative to zero. The maximum value of the alpha synapse is called the Peak Amplitude and can be found by obtaining the voltage value (mV) at this time-to-peak. The influence of an alpha synapse on another neuron can be found by computing the area under the curve.
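These three quantities can be checked numerically. The sketch below assumes a common normalised form of the alpha function, f(t) = w (t/τ) exp(1 - t/τ), which may differ from the exact expression used in the project. The names `alpha_function`, `weight` and `tau` are illustrative. For this form the derivative vanishes at t = τ, the peak amplitude equals w, and the area under the curve is w·e·τ.

```python
import numpy as np

def alpha_function(t, weight, tau):
    """Assumed alpha-shaped response: weight * (t / tau) * exp(1 - t / tau) for t >= 0."""
    t = np.asarray(t, dtype=float)
    return np.where(t >= 0.0, weight * (t / tau) * np.exp(1.0 - t / tau), 0.0)

weight, tau = 1.5, 2.0                     # hypothetical weight and rise-time (ms)
t = np.linspace(0.0, 50.0, 50001)          # time axis in ms, step 0.001 ms
y = alpha_function(t, weight, tau)

t_peak = t[np.argmax(y)]                   # time-to-peak, found numerically
peak = y.max()                             # peak amplitude
# Area under the curve by the trapezoidal rule.
area = float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(t)))

print(f"time-to-peak = {t_peak:.3f} ms (analytic: {tau})")
print(f"peak amplitude = {peak:.4f} (analytic: {weight})")
print(f"area = {area:.4f} (analytic: {weight * np.e * tau:.4f})")
```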

The weight of the connection between the alpha model neuron and its neighbouring neuron determines how much the membrane potential of the alpha model neuron will rise. The effect of changing this weight can be seen in the first graph of Figure 11, where the highest weight allows the neighbouring neuron’s spike to influence our alpha model neuron the most. By changing the rise-time of the alpha model neuron, we can influence the length of time for which the potential will rise. The longer the rise-time, the longer the incoming spike can influence the membrane potential, causing it to rise to a higher value. This is shown by the second graph of Figure 11. The final graph of Figure 11 shows the effect of changing both the weight and the rise-time. The dotted lines show that by increasing the time-to-peak we magnify the effect of a spike with both a high and a low weight. We can conclude that increasing either the weight or the time-to-peak allows alpha synapses to have a greater influence on other neurons.
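The trend in Figure 11 can be reproduced qualitatively without NEST. The sketch below drives a passive leaky membrane with an alpha-shaped synaptic current and records the peak depolarisation. It is an illustration under stated assumptions and not the project's simulation: the function `psp_peak`, the current shape and the membrane parameters (`tau_m`, `c_m`) are all hypothetical choices.

```python
import math

def psp_peak(weight, tau_syn, tau_m=10.0, c_m=250.0, dt=0.01, t_end=100.0):
    """Peak depolarisation (mV above rest) caused by one alpha-shaped input.

    weight  : peak synaptic current (pA)
    tau_syn : rise-time of the alpha current (ms)
    tau_m   : membrane time constant (ms)
    c_m     : membrane capacitance (pF)
    """
    v = 0.0
    peak = 0.0
    for k in range(int(round(t_end / dt))):
        t = k * dt
        # Alpha-shaped current that reaches `weight` at t = tau_syn.
        i_syn = weight * (t / tau_syn) * math.exp(1.0 - t / tau_syn)
        # Leaky membrane: decay towards rest plus synaptic drive (pA / pF = mV / ms).
        v += dt * (-v / tau_m + i_syn / c_m)
        peak = max(peak, v)
    return peak

for weight in (100.0, 200.0):
    for tau_syn in (0.5, 2.0):
        print(f"weight={weight:5.0f} pA  rise-time={tau_syn} ms  peak={psp_peak(weight, tau_syn):.3f} mV")
```

In this sketch the peak scales linearly with the weight and grows with the rise-time, matching the conclusion drawn from the figure.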

Figure 11 Alpha model analysis

2.3.4 MODELLING POPULATIONS OF NEURONS

When modelling populations of neurons there are a few important aspects to consider. The most important of these is how the neurons are connected. As neurons do little more than turn on and off, it is the connections between neurons that allow vast networks to communicate information. Connections determine how likely it is that a signal from one neuron will affect many other neurons. Another aspect to be considered is how a background population of neurons with many connections will affect the study of a single neuron or a group of neurons. This background noise plays a key role in the state of neurons, oftentimes keeping whole groups of neurons poised just below their threshold for an action potential, ready for a push from a more direct stimulus to activate them.

CHAINS OF NEURONS

Neurons transmit information by sending signals that must traverse networks containing large numbers of neurons. In order to do this, a signal must cross many neurons simultaneously. Several ways in which this might work have been hypothesised; a popular way of visualising such a transmission of information is through a serial or parallel chain. This method will be shown to be inefficient when compared with converging and diverging connections between neurons.

SERIAL/PARALLEL CHAINS

A serial chain is one in which neurons are connected in a sequential linear chain, so that a signal goes through each neuron in turn. The initial action potential that causes spiking through this type of network would need to be very strong if the signal is to be transmitted through to the end node. This is due to dispersion in the signal as it is transmitted from node to node. The single connection between each pair of nodes doesn’t give the signal much chance of successfully traversing the network.

Figure 12 Serial and Parallel chains

A series of serial chains could work in parallel, making it more probable that a signal would reach its destination. In this set-up there are w serial chains of length n, as seen in Figure 12. It has been found that this type of formation is very unlikely in the cortex (Abeles, 1991), because it does not offer a very flexible solution to the very complex problem of successfully transmitting a signal across such a vast network. Signals would have to waste time and energy criss-crossing through a series of road-like parallel chains, allowing congestion and dispersion to hamper them. It might be much faster for neurons to have direct links to many different areas, allowing them to act as airport-like hubs where signals converge from and diverge to many different locations.
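A back-of-the-envelope calculation shows why a single serial chain is fragile and why running chains in parallel helps. The sketch below makes a simplifying assumption that is not taken from the source: each link passes the signal on independently with the same probability p. The function names are hypothetical.

```python
def serial_success(p, n):
    """Probability that a signal crosses all n links of one serial chain,
    assuming each link transmits independently with probability p."""
    return p ** n

def parallel_success(p, n, w):
    """Probability that at least one of w independent serial chains of
    length n delivers the signal."""
    return 1.0 - (1.0 - serial_success(p, n)) ** w

p, n = 0.9, 10   # hypothetical link reliability and chain length
for w in (1, 5, 20):
    print(f"w={w:2d} chains of length {n}: success probability {parallel_success(p, n, w):.3f}")
```

Even with fairly reliable links, one long chain often fails under this assumption, and many parallel copies are needed to make delivery likely.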

DIVERGING/CONVERGING CHAINS

In a chain of diverging and converging connections, each neuron in a group sends excitation to various neurons in the next group, and each neuron in this next group is excited by several neurons of the previous group. Figure 13 shows how several neurons’ outputs can converge onto one neuron, as well as how one neuron’s output can diverge to many other neurons. By considering each neuron’s capability of having multiple incoming converging and outgoing diverging connections, we can form a chain of such neurons, as seen at the bottom of Figure 13. This limited view shows how neuron a(0,1) receives input from some other neurons before sending output to the other neurons in the chain, which in turn also receive input from some other neurons.

Figure 13 Converging and Diverging chains

A group can be made up of neurons that are physically in the same region of the brain; however, it can also be made up of neurons from various different areas. In this structure it is important to view such a chain as a time series rather than a physical chain, where one neuron is excited after another because of their connection and not because they are physically nearby. This structure makes it possible for a strong signal to activate many different groups of neurons in very different parts of the brain, perhaps explaining how a smell can evoke images or feelings, utilising different areas of the brain simultaneously.

COMPLETE & INCOMPLETE CHAINS

In order to better explain and simulate these chains we often limit our consideration to

chains where the width (w) of each chain is constant, so that for all neurons the average number of

converging connections is equal to the average number of diverging connections. We can consider

the number of neurons in each group as the width (w) of the chain, and the degree of

divergence/convergence as the multiplicity (m) of connections between groups. The case where

w=m is termed Griffith’s complete chain (Griffith, 1963), in which each neuron in one group excites every neuron in the next group. It has been argued that in the cerebral cortex we are most likely to find complete chains of up to width 5, but that these chains would not be functional, as the synaptic strength would need to be higher than is thought probable for cortical neurons (Abeles, 1991).

Alternatively, it is possible to find incomplete chains of neurons, where w ≠ m. This means that the number of connections from and to each neuron (m) is not equal to the total number of neurons in each group (w). This arrangement is much more likely to be found in the brain, as connections can be formed spontaneously and it would be inconvenient for a neuron’s number of outgoing connections to have to equal its number of incoming connections. The software NEST allows for a choice between diverging, converging and regular connections. Any neuron can connect to any other without the limitation of being part of a complete chain.
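The relationship between width (w) and multiplicity (m) can be made concrete with a small connectivity matrix between two consecutive groups. The sketch below uses a simple wrap-around wiring scheme chosen purely for illustration (it is not NEST's connection routine, and `chain_connectivity` is a hypothetical name). Every neuron sends m diverging connections and receives m converging ones, and the chain is complete when m = w.

```python
import numpy as np

def chain_connectivity(w, m):
    """Connectivity between two consecutive groups of width w.

    conn[i, j] == 1 means neuron i of one group excites neuron j of the
    next group. Neuron i is wired to neurons i, i+1, ..., i+m-1 (modulo w),
    so each neuron has exactly m outgoing and m incoming connections.
    """
    if not 1 <= m <= w:
        raise ValueError("multiplicity m must satisfy 1 <= m <= w")
    conn = np.zeros((w, w), dtype=int)
    for i in range(w):
        for k in range(m):
            conn[i, (i + k) % w] = 1
    return conn

incomplete = chain_connectivity(w=5, m=3)   # incomplete chain, m < w
complete = chain_connectivity(w=5, m=5)     # Griffith's complete chain, m = w
print(incomplete)
print("diverging per neuron:", incomplete.sum(axis=1))
print("converging per neuron:", incomplete.sum(axis=0))
```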

FEEDBACK NETWORK

What has been described so far is a feed-forward network, where a group of neurons will

excite the next group and so on. A feed-back network can be implemented when the output from a

group of neurons feeds back as its own input. This means the state of this group will be constantly

changing until it reaches equilibrium, making this type of network efficient at solving more complex

problems. If the output of the final group of neurons in a feed-forward network feeds back into the

first group of neurons then a feed-forward network can also become a large feed-back network of a sort. This project considers the use of feed-forward networks and whether they encourage synchronous spiking between groups of neurons, so networks with a feed-back architecture are not covered in extensive detail.

GENERATING EXTERNAL EVENTS

So far, we have limited our discussion to complete groups of interconnected neurons. Whilst this is true for the brain as a whole, it is currently impossible to have knowledge of, and simulate, every neuron and its connections. When considering a group of neurons in simulation, each neuron would in reality be connected to any number of surrounding neurons. Whilst it isn’t feasible to simulate them all, it isn’t sensible to ignore them either.

POISSON DISTRIBUTION

The best approach is to attempt to model the influence of the spiking of surrounding neurons. This is done by using a Poisson distribution. It is a discrete probability distribution that gives the probability of a given number of events occurring independently within a fixed time interval. Events can be distributed by the following equation:

$$P(X = k) = \frac{\lambda^{k} e^{-\lambda}}{k!}$$

where the probability of a discrete event X occurring at time interval k is equal to some constant λ to the power k, times the exponential to the power minus λ, all divided by k factorial. The index k in this case represents the time between events, where an event X has a probability of occurring k milliseconds after the previous event. The constant lambda (λ) is equal to the expected value of X, and will have a profound effect on the distribution. For a Poisson distribution for neural simulators, a lambda is chosen such that the data follow an exponential fit, as can be seen in Figure 14, where an event X is most likely to occur very shortly after the previous event.
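The formula can be evaluated directly. The sketch below computes the Poisson probabilities for a small, hypothetical value of λ. For λ below 1 the probabilities fall off monotonically from k = 0, which gives a decaying shape of the kind described for Figure 14. The value 0.5 is an illustrative assumption and is not taken from the project.

```python
import math

def poisson_pmf(k, lam):
    """P(X = k) = lam**k * exp(-lam) / k! for a Poisson distribution."""
    return lam ** k * math.exp(-lam) / math.factorial(k)

lam = 0.5   # hypothetical expected value
probs = [poisson_pmf(k, lam) for k in range(10)]
for k, p in enumerate(probs):
    print(f"k={k}: {p:.5f}")
print("sum over k = 0..9:", round(sum(probs), 6))
```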

The software NEST allows the user to create a ‘poisson generator’ that generates events at a specified rate. For example, the effect of the 20,000 surrounding neurons of the cerebral cortex can be modelled by creating Poisson generators, 88% of which fire at 2 Hz and are connected with excitatory connections, and 12% of which fire at 12 Hz and are connected with inhibitory connections.
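The size of this background input can be estimated with NumPy instead of NEST. The sketch below is a stand-in for illustration and does not use NEST's generator. It relies on the fact that the superposition of independent Poisson processes is itself a Poisson process whose rate is the sum of the individual rates, so each population can be replaced by one pooled source. The time step, duration and seed are hypothetical choices.

```python
import numpy as np

n_total = 20000                      # surrounding neurons (from the text)
n_exc = round(0.88 * n_total)        # 88% excitatory
n_inh = n_total - n_exc              # 12% inhibitory
rate_exc_hz = 2.0                    # firing rate of each excitatory source
rate_inh_hz = 12.0                   # firing rate of each inhibitory source

# Pooled rates of the two populations (events per second).
pooled_exc_hz = n_exc * rate_exc_hz
pooled_inh_hz = n_inh * rate_inh_hz

# Draw the number of incoming events in each 0.1 ms step over 1 s.
rng = np.random.default_rng(seed=0)  # fixed seed, illustrative only
dt_ms = 0.1
n_steps = 10000
exc_counts = rng.poisson(pooled_exc_hz * dt_ms / 1000.0, size=n_steps)
inh_counts = rng.poisson(pooled_inh_hz * dt_ms / 1000.0, size=n_steps)

print("pooled excitatory rate (Hz):", pooled_exc_hz)
print("pooled inhibitory rate (Hz):", pooled_inh_hz)
print("excitatory events in 1 s:", int(exc_counts.sum()))
print("inhibitory events in 1 s:", int(inh_counts.sum()))
```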

Figure 14 Poisson distribution of spike events

INDIVIDUAL NEURON WITH POISSON EVENTS