
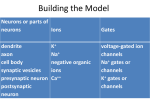

Models of Networks of Neurons

Networks of neurons

• Neurons are organized in large networks. A typical neuron in cortex receives thousands of inputs.

• Aim of modeling networks: explore the computational potential of such connectivity.

- What computations? (e.g. gain modulation, integration, selective amplification of some signal, memory, etc.)

- What dynamics? (e.g. spontaneous activity, variability, oscillations)

• Tools:

- models of neurons and synapses: spiking neurons (integrate-and-fire, IAF) or firing rate

- analytical solutions, numerical integration

What’s a network?

• In cortex, ~80% excitatory cells (pyramidal neurons), ~20% inhibitory neurons (smooth stellate + a large variety of other types), a.k.a. interneurons.

Figure 1.1: Diagrams of three neurons. A) A cortical pyramidal cell. These are the primary excitatory neurons of the cerebral cortex. Pyramidal cell axons branch locally, sending axon collaterals to synapse with nearby neurons, and also project more distally to conduct signals to other parts of the brain and nervous system. B) A Purkinje cell of the cerebellum. Purkinje cell axons transmit the output of the cerebellar cortex. C) A stellate cell of the cerebral cortex. Stellate cells are one of a large class of cells that provide inhibitory input to the neurons of the cerebral cortex. To give an idea of scale, these figures are magnified about 150 fold. (Drawings from Cajal, 1911; figure from Dowling, 1992.) Panel labels: apical dendrite, basal dendrite, dendrite, soma, axon, axon collaterals.
What’s a network?

• Laminar Organization. Cortex is divided into 6 layers. Models usually pool all layers together.

• Columnar Organization. Neurons in small (30-100 micrometers) columns perpendicular to the layers (across all layers) respond to similar stimulus features.

[Figure: panels A and B, titled "Tuning Curves" and "Population Response".]

Connectivity

• 3 types of connections: feed-forward, recurrent (lateral), feedback.

[Figure: visual areas labeled Retina, V1, V2, V4, IT.]

FEEDBACK (V2, MT → V1)

• Excitatory

• Modulatory influence

• Convey information from large area of visual field

• role unclear

LGN → V1 (feed-forward)

• Excitatory

• topographically precise

• debated how strong

Local Projections (V1 → V1)

• Excitatory + Inhibitory: lots!

• < 1 mm

• connectivity depends on distance, not preference.

Long-range Horizontal Projections (V1 → V1)

• Excitatory - Modulatory

• 6-8 mm (cat, monkey) - non-overlapping RF

• specific to preferences

Network modeling strategies (1)

• method 1: spiking neurons, e.g. integrate and fire neurons

• up to 10,000 neurons.

• advantage: comparison with electrophysiology, a system where all neurons can be "recorded" at all times.

• difficulties: lots of parameters/assumptions, long simulations, analysis difficult.

V1: Neurons. The V1 layer contains 1008 excitatory neurons and 252 inhibitory neurons. Excitatory neurons are modeled as regular spiking conductance-based integrate-and-fire neurons, while inhibitory neurons are modeled as conductance-based fast-spiking neurons. The neuron model and parameters were taken from Somers et al. (1995). Each cortical neuron is modeled as a single voltage compartment in which the membrane potential is given by

$$C_m \frac{dV_i(t)}{dt} = -\sum_j g_{ij}(t - \tau_{ij})\,\bigl(V_i(t) - E_{EXCIT}\bigr) - \sum_j g_{ij}(t - \tau_{ij})\,\bigl(V_i(t) - E_{INHIB}\bigr) - g_{LEAK}\,\bigl(V_i(t) - E_{LEAK}\bigr) - g_{AHP}(t)\,\bigl(V_i(t) - E_{AHP}\bigr). \qquad (12)$$

The sum over j does not include all presynaptic cells; instead, the presynaptic cells are drawn probabilistically according to a scheme described below. The parameter $\tau_{ij}$ is a delay, and $g_{ij}(t)$, the synaptic conductance generated at post-synaptic cell i by the spiking of pre-synaptic cell j, is given by an alpha-function,

$$g_{ij}(t) = \bar{g}_{ij} \sum_k \frac{t - t_j^k}{\tau_{peak}} \exp\!\left(-\frac{t - t_j^k}{\tau_{peak}}\right). \qquad (13)$$

Here $t_j^k$ is the time of the k-th spike from presynaptic cell j. When the membrane potential exceeds the spike threshold (-55 mV), a spike is emitted, the spike threshold is elevated mimicking a relative refractory period (see Somers et al. (1995) for details), and a K+-mediated after-hyperpolarization (AHP) conductance was activated. The AHP conductance, $g_{AHP}(t)$, obeys the same equation as (13) except that the prefactor is $\bar{g}_{AHP}$ and the sum is over the index i (the cell's own spikes) rather than over j (presynaptic spikes). The values of the peak synaptic conductances, $\bar{g}_{ij}$, are given below. Conductance changes reached their maximal values at $\tau_{peak}$, which was 1 ms for excitatory synapses, 2 ms for inhibitory synapses, and 2 ms for after-hyperpolarization. The small values of $\tau_{peak}$ mean that we are effectively modeling AMPA and GABAA synapses; NMDA and GABAB …

IEEE TRANSACTIONS ON NEURAL NETWORKS, VOL. 14, NO. 6, NOVEMBER 2003

Network modeling strategies (2)

we choose the ratio of excitatory to inhibitory ne

we make inhibitory synaptic connections stronge

input, each neuron receives a noisy thalamic inp

In principle, one can use RS cells to model

and FS cells to model all inhibitory neurons. Th

heterogeneity (so that different neurons have d

to assign each excitatory cell

, where is a random v

tributed on the interval [0,1], and is the neuron

corresponds to regular spiking (RS) cell, and

the chattering (CH) cell. We use

to bias the d

cells. Similarly, each inhibitory cell has

and

.

The model belongs to the class of pulse-cou

(PCNN): The synaptic connection weights bet

given by the matrix

, so that firing

stantaneously changes variable by .

Fig. 3. Simulation of a network of 1000 randomly coupled spiking neurons. Top: spike raster shows episodes of alpha and gamma band rhythms (vertical lines). Bottom: typical spiking activity of an excitatory neuron. All spikes were equalized at 30 mV by resetting v first to 30 mV and then to c. [Izhikevich, 2003]

% Created by Eugene M. Izhikevich, F…
% Excitatory neurons    Inhibitory neurons
Ne=800;                 Ni=200;
re=rand(Ne,1);          ri=rand(Ni,1);
a=[0.02*ones(Ne,1);     0.02+0.08*ri];
b=[0.2*ones(Ne,1);      0.25-0.05*ri];
c=[-65+15*re.^2;        -65*ones(Ni,1)];
d=[8-6*re.^2;           2*ones(Ni,1)];
S=[0.5*rand(Ne+Ni,Ne),  -rand(Ne+Ni,Ni)];
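Method 1 relies on spiking units such as integrate-and-fire neurons. The sketch below is a minimal, current-based leaky integrate-and-fire neuron integrated with forward Euler. It is an illustration only, not the conductance-based model or the Izhikevich model quoted above. The function name `simulate_lif` and all parameter values except the -55 mV threshold are assumptions chosen for readability.

```python
def simulate_lif(current, t_max=200.0, dt=0.1, tau_m=20.0, v_rest=-70.0,
                 v_thresh=-55.0, v_reset=-70.0, r_m=10.0):
    """Leaky integrate-and-fire neuron (illustrative parameters).

    Integrates tau_m * dV/dt = -(V - v_rest) + r_m * current with forward
    Euler steps of size dt (ms). Returns the list of spike times in ms.
    """
    v = v_rest
    spike_times = []
    n_steps = int(round(t_max / dt))
    for step in range(n_steps):
        # Euler update of the membrane potential
        v += dt / tau_m * (-(v - v_rest) + r_m * current)
        if v >= v_thresh:
            # Threshold crossing: record a spike and reset the potential
            spike_times.append((step + 1) * dt)
            v = v_reset
    return spike_times


if __name__ == "__main__":
    strong = simulate_lif(2.0)   # steady-state potential above threshold
    weak = simulate_lif(1.0)     # steady-state potential below threshold
    print(len(strong), "spikes with strong input;", len(weak), "with weak input")
```

With these assumed values, a constant input of 2.0 drives the membrane potential toward -50 mV, above threshold, so the neuron fires regularly. An input of 1.0 drives it only to -60 mV and produces no spikes.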

Network modeling strategies (3)

• method 2: reduce the description to describe only the rate of spiking r(t), instead of Vm(t).

$$\tau_r \frac{dr_i(t)}{dt} = -r_i(t) + \mathrm{input}(t)$$

Firing rate model (1)

• each neuron is described at time t by a firing rate v(t).

$$\tau_r \frac{dv_i(t)}{dt} = -v_i(t) + F\Bigl(\sum_{j=1}^{N} w_{ij} u_j\Bigr)$$

• Interpretation: average over time, average over equivalent neurons

• In the absence of input, the firing rate relaxes to 0 with a time constant τr, which also determines how quickly the neuron responds to input.

• The input from a presynaptic neuron j is proportional to its firing rate uj

• The weight wij determines the strength of connection of neuron j to neuron i

• The total input current is the sum of the input from all external sources.
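The role of the time constant τr can be checked numerically. The sketch below integrates the firing-rate equation for a single postsynaptic unit with constant presynaptic rates, using forward Euler. The function names, the threshold-linear choice of F and all numerical values are assumptions made for illustration.

```python
def threshold_linear(x):
    """Rectification: pass positive input, clip negative input to zero."""
    return max(x, 0.0)


def simulate_rate_unit(weights, inputs, tau_r=10.0, dt=0.1, t_max=100.0,
                       f=threshold_linear):
    """Integrate tau_r * dv/dt = -v + F(sum_j w_j * u_j) from v(0) = 0.

    weights and inputs are equal-length lists (w_j and constant rates u_j).
    Returns the firing rate v after each Euler step.
    """
    drive = sum(w * u for w, u in zip(weights, inputs))
    target = f(drive)          # steady-state rate for constant input
    v = 0.0
    trace = []
    for _ in range(int(round(t_max / dt))):
        v += dt / tau_r * (-v + target)
        trace.append(v)
    return trace


if __name__ == "__main__":
    trace = simulate_rate_unit([0.5, -0.2, 1.0], [10.0, 20.0, 5.0])
    print("rate after one time constant:", round(trace[99], 2))
    print("rate at the end:", round(trace[-1], 2))
```

In this example the summed input is 0.5·10 − 0.2·20 + 1.0·5 = 6, so the rate approaches 6 and covers roughly 63% of that distance after one time constant.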

Firing rate model (2)

• each neuron is described at time t by a firing rate v(t).

$$\tau_r \frac{dv_i(t)}{dt} = -v_i(t) + F\Bigl(\sum_{j=1}^{N} w_{ij} u_j\Bigr) = -v_i(t) + F(\mathbf{w}\cdot\mathbf{u}) \qquad \text{(dot product)}$$

• F determines the steady state r as a function of input

• F is called the activation function

• F can be taken as a saturating function, e.g. sigmoid

• F is often chosen to be threshold linear

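Network Architectures

• A: Feedforward

$$\tau_r \frac{dv_i(t)}{dt} = -v_i(t) + F\Bigl(\sum_{j=1}^{N} W_{ij} u_j(t)\Bigr)$$

• B: Recurrent

$$\tau_r \frac{dv_i(t)}{dt} = -v_i(t) + F\Bigl(\sum_{j=1}^{N} W_{ij} u_j(t) + \sum_{k=1}^{N} M_{ik} v_k(t)\Bigr)$$

Activation functions: sigmoid and threshold linear

$$F(I) = \frac{r_{max}}{1 + \exp\bigl(g\,(I_{1/2} - I)\bigr)}$$

$$F(I) = G\,[I - I_0]_+$$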
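The two activation functions and the recurrent architecture B can be written out directly. The sketch below is a plain-Python illustration. The function names and the parameter values (`r_max`, `g`, `i_half`, the toy weight matrices) are assumptions, not values taken from the slides.

```python
import math


def sigmoid(i, r_max=100.0, g=0.5, i_half=10.0):
    """Saturating activation: F(I) = r_max / (1 + exp(g * (I_half - I)))."""
    return r_max / (1.0 + math.exp(g * (i_half - i)))


def threshold_linear(i, gain=1.0, i0=0.0):
    """Threshold-linear activation: F(I) = G * [I - I0]_+."""
    return gain * max(i - i0, 0.0)


def recurrent_step(v, u, W, M, f, tau_r=10.0, dt=0.1):
    """One Euler step of tau_r dv_i/dt = -v_i + F(sum_j W_ij u_j + sum_k M_ik v_k)."""
    new_v = []
    for i in range(len(v)):
        feedforward = sum(W[i][j] * u[j] for j in range(len(u)))
        recurrent = sum(M[i][k] * v[k] for k in range(len(v)))
        new_v.append(v[i] + dt / tau_r * (-v[i] + f(feedforward + recurrent)))
    return new_v


if __name__ == "__main__":
    # Two mutually exciting units driven by the same feedforward input
    W = [[1.0, 0.0], [0.0, 1.0]]
    M = [[0.0, 0.5], [0.5, 0.0]]
    v = [0.0, 0.0]
    for _ in range(2000):
        v = recurrent_step(v, [10.0, 10.0], W, M, threshold_linear)
    print("steady-state rates:", [round(x, 2) for x in v])
```

In the toy network each unit receives a feedforward input of 10 and half of the other unit's rate, so the steady state satisfies v = 10 + 0.5 v, i.e. v = 20. The recurrent connection doubles the feedforward response.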

Excitatory - Inhibitory Network

• Some models have a single population of neurons and the weights are allowed to be positive and negative.

• Other models represent the excitatory and inhibitory population separately (more "biological" + richer dynamics).

• 4 weight matrices: MEE, MIE, MII, MEI

… determined by the equation

$$\tau_r \frac{d\mathbf{v}}{dt} = -\mathbf{v} + \mathbf{F}(\mathbf{h} + \mathbf{M}\cdot\mathbf{v}). \qquad (7.11)$$

Neurons are typically classified as either excitatory or inhibitory, meaning that they have either excitatory or inhibitory effects on all of their postsynaptic targets. This property is formalized in Dale’s law, which states that a neuron cannot excite some of its postsynaptic targets and inhibit others. In terms of the elements of M, this means that for each presynaptic neuron a', $M_{aa'}$ must have the same sign for all postsynaptic neurons a. To impose this restriction, it is convenient to describe excitatory and inhibitory neurons separately. The firing-rate vectors vE and vI for the excitatory and inhibitory neurons are then described by a coupled set of equations identical in form to equation 7.11,

$$\tau_E \frac{d\mathbf{v}_E}{dt} = -\mathbf{v}_E + \mathbf{F}_E(\mathbf{h}_E + \mathbf{M}_{EE}\cdot\mathbf{v}_E + \mathbf{M}_{EI}\cdot\mathbf{v}_I) \qquad (7.12)$$

and

$$\tau_I \frac{d\mathbf{v}_I}{dt} = -\mathbf{v}_I + \mathbf{F}_I(\mathbf{h}_I + \mathbf{M}_{IE}\cdot\mathbf{v}_E + \mathbf{M}_{II}\cdot\mathbf{v}_I). \qquad (7.13)$$

There are now four synaptic weight matrices describing the four possible types of neuronal interactions. The elements of MEE and MIE are greater than or equal to zero, and those of MEI and MII are less than or equal to zero. These equations allow the excitatory and inhibitory neurons to have different time constants, activation functions, and feedforward inputs.

In this chapter, we consider several recurrent network models described by equation 7.11 with a symmetric weight matrix, $M_{aa'} = M_{a'a}$ for all a and a'. Requiring M to be symmetric simplifies the mathematical analysis, but it violates Dale’s law. Suppose, for example, that neuron a, which is excitatory, and neuron a', which is inhibitory, are mutually connected. Then, …

Draft: December 19, 2000

Theoretical Neuroscience

Example:

Orientation selectivity as a model problem: spiking networks and ring model

Origin of Orientation selectivity?

LGN neurons are not selective to orientation, V1’s are:

[Figure: V1 data, Sclar and Freeman, 1982.]

• Example of a computation, emergence of a new property.

Model of Hubel and Wiesel (1962)

• Hubel and Wiesel (1962) proposed that the oriented fields of V1 neurons could be generated by summing the input from appropriately selected LGN neurons.

• The model accounts for selectivity in V1 on the basis of a purely feedforward architecture.

Figure 1 (diagram labels: LGN neurons, V1 simple cell): A. A map of the receptive field of a simple cell in the cat visual cortex. A light flashed in the ON subregion (x) or turned off in an OFF region (triangles) excites the cell, while a light flashed in an OFF region or turned off in the ON region inhibits the cell. Other arrangements of the subregions are possible, such as a central OFF region and flanking ON regions, or one ON and one OFF region. B. Hubel and Wiesel’s model for how the receptive field of the simple cell can be built from excitatory input from geniculate relay cells. The simple cell (below right) receives input from … (Ferster and Miller, July 30, 2000)

Feedforward (Hubel and Wiesel, 1962; Troyer, Krukowski, Priebe and Miller, 1998)

• Due to the precise organization of the thalamic afferents, input to V1 is sharply tuned.

• Connectivity achieves contrast invariance through feedforward inhibition.

Recurrent (Somers, Nelson and Sur, 1995; Sompolinsky and Shapley, 1997)

• Due to the imprecise organization of the thalamic afferents, input to V1 is broadly tuned.

• Connectivity sharpens the tuning curve.

`Feedforward’ model vs `Recurrent’ (Seriès, Latham and Pouget, Nature Neuroscience 2004)

• ~1250 conductance based IAF neurons, ~100,000 synapses

[Diagram labels: V1 with weights Wee, Wie, Wei; Poisson spike trains; Retina/LGN; ON, OFF.]

• Explore physiological and anatomical plausibility:

- cortical connectivity scheme,

- thalamocortical connectivity,

- properties of inhibition in Cx (inactivation)

…

(Sompolinsky and Shapley, 1997; Ferster and Miller, 2000).

• Coding efficiency (are these models making different predictions in terms of information transmission?)

The Ring Model (1)

7.4 Recurrent Networks

… 1.9. This value, being larger than one, would lead to an unstable network in the linear case. While nonlinear networks can also be unstable, the restriction to eigenvalues less than one is no longer the relevant condition. In a nonlinear network, the Fourier analysis of the input and output responses is no longer as informative as it is for a linear network. Due to the rectification, the ν = 0, 1, and 2 Fourier components are all amplified (figure 7.9D) compared to their input values (figure 7.9C). Nevertheless, except for rectification, the nonlinear recurrent network amplifies the input signal selectively in a similar manner as the linear network.

A Recurrent Model of Simple Cells in Primary Visual Cortex

In chapter 2, we discussed a feedforward model in which the elongated receptive fields of simple cells in primary visual cortex were formed by summing the inputs from lateral geniculate (LGN) neurons with their receptive fields arranged in alternating rows of ON and OFF cells. While this model quite successfully accounts for a number of features of simple cells, such as orientation tuning, it is difficult to reconcile with the anatomy and circuitry of the cerebral cortex. By far the majority of the synapses onto any cortical neuron arise from other cortical neurons, not from thalamic afferents. Therefore, feedforward models account for the response properties of cortical neurons while ignoring the inputs that are numerically most prominent. The large number of intracortical connections suggests, instead, that recurrent circuitry might play an important role in shaping the responses of neurons in primary visual cortex.

The Ring Model (2)

• N neurons, with preferred angle, θi, evenly distributed between −π/2 and π/2

• Neurons receive thalamic inputs h + recurrent connections, with excitatory weights between nearby cells and inhibitory weights between cells that are further apart (mexican-hat profile)

Ben-Yishai, Bar-Or, and Sompolinsky (1995) developed a model at the other extreme, for which recurrent connections are the primary determiners of orientation tuning. The model is similar in structure to the model of equations 7.35 and 7.33, except that it includes a global inhibitory interaction. In addition, because orientation angles are defined over the range from −π/2 to π/2, rather than over the full 2π range, the cosine functions in the model have extra factors of 2 in them. The basic equation of the model, as we implement it, is

$$\tau_r \frac{dv(\theta)}{dt} = -v(\theta) + \left[ h(\theta) + \int_{-\pi/2}^{\pi/2} \frac{d\theta'}{\pi} \bigl( -\lambda_0 + \lambda_1 \cos(2(\theta - \theta')) \bigr)\, v(\theta') \right]_+ \qquad (7.36)$$

where v(θ) is the firing rate of a neuron with preferred orientation θ. The input to the model represents the orientation-tuned feedforward input arising from ON-center and OFF-center LGN cells responding to an oriented image. As a function of preferred orientation, the input for an image with orientation angle Θ = 0 is

$$h(\theta) = A c \,\bigl(1 - \epsilon + \epsilon \cos(2\theta)\bigr) \qquad (7.37)$$

The Ring Model (3)

• h is input, can be tuned (Hubel Wiesel scenario) or very broadly tuned: h(θ) = c[1 − ε + ε cos(2θ)]

• The steady-state can be solved analytically. Model analyzed like a physical system.

• Model achieves i) orientation selectivity; ii) contrast invariance of tuning, even if input is very broad.

• The width of orientation selectivity depends on the shape of the mexican-hat, but is independent of the width of the input.

• Symmetry breaking / Attractor dynamics.
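The contrast invariance of the ring model can be explored numerically. The sketch below integrates equation 7.36 with forward Euler on a discrete ring of N neurons. It uses the values quoted in the caption of Figure 7.10 (λ0 = 7.3, λ1 = 11, A = 40 Hz, ε = 0.1). The function name `simulate_ring`, the grid size, the step size and the simulated duration are assumptions. Because the kernel is −λ0 + λ1 cos(2(θ − θ')), the integral reduces to three population averages (of v, v·cos 2θ' and v·sin 2θ'), which keeps each step cheap.

```python
import math


def simulate_ring(contrast, n=90, lam0=7.3, lam1=11.0, amp=40.0, eps=0.1,
                  tau_r=1.0, dt=0.02, t_max=40.0):
    """Euler integration of the ring model (eq. 7.36) for a stimulus at 0 deg.

    Returns (thetas, h, v): preferred orientations in radians, feedforward
    input h(theta) = A*c*(1 - eps + eps*cos(2*theta)), and final rates v.
    """
    thetas = [-math.pi / 2 + math.pi * i / n for i in range(n)]
    cos2 = [math.cos(2 * th) for th in thetas]
    sin2 = [math.sin(2 * th) for th in thetas]
    h = [amp * contrast * (1 - eps + eps * c) for c in cos2]
    v = [0.0] * n
    for _ in range(int(round(t_max / dt))):
        # (1/pi) * integral over theta' becomes a mean over the n neurons
        m0 = sum(v) / n
        mc = sum(vi * c for vi, c in zip(v, cos2)) / n
        ms = sum(vi * s for vi, s in zip(v, sin2)) / n
        # cos(2(th - th')) = cos(2th)cos(2th') + sin(2th)sin(2th')
        v = [vi + dt / tau_r * (-vi + max(hi - lam0 * m0
                                          + lam1 * (c * mc + s * ms), 0.0))
             for vi, hi, c, s in zip(v, h, cos2, sin2)]
    return thetas, h, v


if __name__ == "__main__":
    for contrast in (0.8, 0.4, 0.2, 0.1):
        thetas, h, v = simulate_ring(contrast)
        peak = max(v)
        active = sum(1 for x in v if x > 0.01 * peak)
        print(f"contrast {contrast:.1f}: peak {peak:.1f} Hz, "
              f"{active} of {len(v)} neurons active")
```

Starting from zero activity, the threshold-linear dynamics scale exactly with the contrast c, so the normalized tuning curve is the same at every contrast even though the input is only weakly tuned (ε = 0.1).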

The Ring Model (4)

7.4 Recurrent Networks

Figure 7.10: The effect of contrast on orientation tuning. A) The feedforward input as a function of preferred orientation. The four curves, from top to bottom, correspond to contrasts of 80%, 40%, 20%, and 10%. B) The output firing rates in response to different levels of contrast as a function of orientation preference. These are also the response tuning curves of a single neuron with preferred orientation zero. As in A, the four curves, from top to bottom, correspond to contrasts of 80%, 40%, 20%, and 10%. The recurrent model had λ0 = 7.3, λ1 = 11, A = 40 Hz, and ε = 0.1. C) Tuning curves measured experimentally at four contrast levels as indicated in the legend. (C adapted from Sompolinsky and Shapley, 1997; based on data from Sclar and Freeman, 1982.) Axes: A) h (Hz) vs. θ (deg); B) v (Hz) vs. θ (deg); C) firing rate (Hz) vs. Θ (deg), legend 80%, 40%, 20%, 10%.

Attractor Networks

• Attractor network: a network of neurons, usually recurrently connected, whose time dynamics settle to a stable pattern.

• That pattern may be stationary (fixed points), time-varying (e.g. cyclic), or even stochastic-looking (e.g., chaotic).

• The particular pattern a network settles to is called its "attractor".

• The ring model is called a line (or ring) attractor network. Its stable states are also sometimes referred to as "bump attractors".

[Figure: Point Attractor vs. Line Attractor.]
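A point attractor can be illustrated with the simplest possible recurrent circuit: one rate unit that excites itself through a sigmoid activation function. This is a toy example, not the ring model. The function names and all parameter values are assumptions, chosen so that the unit has two stable fixed points, one near 0 and one near the maximal rate. A transient input pulse switches the unit into the high state, where it stays after the pulse ends.

```python
import math


def sigmoid(i, r_max=1.0, g=10.0, i_half=0.5):
    """Saturating activation function F(I) = r_max / (1 + exp(g*(I_half - I)))."""
    return r_max / (1.0 + math.exp(g * (i_half - i)))


def run_unit(pulse, w=1.0, tau_r=10.0, dt=0.1, t_max=300.0,
             pulse_start=50.0, pulse_end=100.0):
    """Integrate tau_r dv/dt = -v + F(w*v + h(t)) for one self-exciting unit.

    h(t) equals `pulse` between pulse_start and pulse_end (ms), else 0.
    Returns the rate after each Euler step.
    """
    v = 0.0
    trace = []
    for step in range(int(round(t_max / dt))):
        t = step * dt
        h = pulse if pulse_start <= t < pulse_end else 0.0
        v += dt / tau_r * (-v + sigmoid(w * v + h))
        trace.append(v)
    return trace


if __name__ == "__main__":
    with_pulse = run_unit(1.0)
    without_pulse = run_unit(0.0)
    print("final rate after a transient pulse:", round(with_pulse[-1], 3))
    print("final rate without any pulse:", round(without_pulse[-1], 3))
```

The same input-free network ends in different states depending on its history, which is the sense in which strongly recurrent networks can hold a memory of a past input.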

A Recurrent Model of Complex Cells in Primary Visual Cortex

In the model of orientation tuning discussed in the previous section, recurrent amplification enhances selectivity. If the pattern of network connectivity amplifies nonselective rather than selective responses, recurrent interactions can also decrease selectivity. Recall from chapter 2 that neurons in the primary visual cortex are classified as simple or complex depending on their sensitivity to the spatial phase of a grating stimulus. Simple cells respond maximally when the spatial positioning of the light and dark regions of a grating matches the locations of the ON and OFF regions of their receptive fields. Complex cells do not have distinct ON and OFF regions in their receptive fields and respond to gratings of the appropriate orientation and spatial frequency relatively independently of where their light and dark stripes fall. In other words, complex cells are insensitive to spatial phase.

Chance, Nelson, and Abbott (1999) showed that complex cell responses could be generated from simple cell responses by a recurrent network. As in chapter 2, we label spatial phase preferences by the angle φ. The feedforward input h(φ) in the model is set equal to the rectified response of a simple cell with preferred spatial phase φ (figure 7.11A). Each neuron in the network is labeled by the spatial phase preference of its feedforward input. The network neurons also receive recurrent input given by the weight function $M(\phi - \phi') = \lambda_1/(2\pi\rho_\phi)$ that is the same for all con…

• Model was tested with stimuli containing more than 1 orientation (crosses)

• Model fails to distinguish angles separated by 30 deg, overestimates larger angles

• spurious attractors with noise

The Ring Model (5): Sustained Activity

• If recurrent connections are strong enough, the pattern of population activity, once established, can become independent of the structure of the input. It can persist when the input is removed.

• A model of working memory?

Network models - summary

• Network models: to understand the implications of connectivity in terms of computation and dynamics.

• 2 main strategies: spiking vs firing rate models.

• The issue of the emergence of orientation selectivity as a model problem, extensively studied theoretically and experimentally.

- Two main models: feed-forward and recurrent.

- Detailed spiking models have been constructed which can be directly compared to electrophysiology

- The same problem is also investigated with a firing rate model, a.k.a. the "ring model".