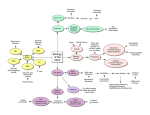

J Comp Physiol A (2005) 191: 201–211
DOI 10.1007/s00359-004-0565-9

REVIEW

Allison N. McCoy · Michael L. Platt

Expectations and outcomes: decision-making in the primate brain

Received: 2 March 2004 / Revised: 28 July 2004 / Accepted: 12 August 2004 / Published online: 12 October 2004
© Springer-Verlag 2004

Abstract

Success in a constantly changing environment requires that decision-making strategies be updated as reward contingencies change. How the nervous system accomplishes this has, until recently, remained a profound mystery. New studies coupling economic theory with neurophysiological techniques have revealed an explicit neural representation of behavioral value. Specifically, when fluid reinforcement is paired with visually guided eye movements, neurons in parietal cortex, prefrontal cortex, the basal ganglia, and the superior colliculus (all nodes in a network linking visual stimulation with the generation of oculomotor behavior) encode the expected value of targets lying within their response fields. Other brain areas have been implicated in processing reward-related information in the abstract: midbrain dopaminergic neurons, for instance, signal an error in reward prediction. Still other brain areas link information about reward to the selection and performance of specific actions, allowing behavior to adapt to changing environmental exigencies. Neurons in posterior cingulate cortex have been shown to carry signals related to both reward outcomes and oculomotor behavior, suggesting that they participate in updating estimates of orienting value.

A. N. McCoy · M. L. Platt (corresponding author)
Department of Neurobiology, Duke University Medical Center, 325 Bryan Research Building, Box 3209, Durham, NC 27710, USA

M. L. Platt
Center for Cognitive Neuroscience, Duke University, Durham, NC 27710, USA

M. L. Platt
Department of Biological Anthropology and Anatomy, Duke University, Durham, NC 27710, USA

Introduction

For even the simplest of organisms, adaptive behavior requires, to paraphrase Darwin, the preservation of favorable variants and the rejection of injurious ones. In other words, nervous systems must weigh the potential rewards and punishments associated with available options and select the behavior likely to yield the best possible outcome. This process of adaptive decision-making is well illustrated by a financial advisor choosing whether to buy, sell or hold portions of stock based on calculations of the most likely monetary gain. In the face of sudden change, such as a stock market crash, the values associated with the available options are rapidly updated. In this way, the decision process allows behavior to adjust to environmental flux, resulting in a net benefit to the organism.

While the analogy of the financial advisor captures the iterative, adaptive nature of the decision process, how does the brain actually select an action from the behavioral repertoire? This question has long puzzled philosophers, economists, behavioral ecologists, and neuroscientists. Significant advances in our understanding of the neural basis of decision-making have been made by combining economic theory with neurophysiological techniques (see Glimcher 2003, for exposition). This review describes current understanding of the neural mechanisms underlying decision-making, focusing on evidence for the neural representation, computation and revision of economic decision variables used to guide visual orienting in primates.

Behavioral economics: expected value and decision-making

In neurobiology, as in psychology, the sensory-motor reflex has been widely viewed as a useful model for directly linking sensation to action (Pavlov 1927; Sherrington 1906).
As instructive as it has been, however, the simple sensory-motor reflex can only begin to approximate the rich ethology of real-world behavior (Glimcher 2003; Herrnstein 1997; Platt 2002). Only recently have neurobiologists begun to investigate the idea that internal decision variables dynamically link purely sensory and purely motor processes and are explicitly represented in the nervous system (Basso and Wurtz 1997; Dorris and Munoz 1998; Kim and Shadlen 1999; Platt and Glimcher 1999; Shadlen and Newsome 1996, 2001). In contrast, over the past 400 years, economists have developed simple normative models to describe what rational agents should do when confronted with a choice between two options [Arnauld and Nicole 1662; Bernoulli (1758) in Speiser 1982]. The simplest economic model of decision-making, known as Expected Value Theory, posits that rational agents compute the likelihood that a particular action will yield a gain or loss, as well as the amount of gain or loss that can be expected from that choice (Arnauld and Nicole 1662). These two quantities are then multiplied to arrive at an estimate of expected value for each possible course of action, and the option with the highest expected value is chosen (Arnauld and Nicole 1662). Maximizing expected value is the optimal algorithm for a rational chooser with complete information about the costs and benefits of different options, as well as their likelihood of occurrence. Expected value models and variations on them, such as Expected Utility Theory [Bernoulli (1758) in Speiser 1982], have been shown to describe well the choices both people and animals make in a variety of simple situations (Stephens and Krebs 1986; Herrnstein 1997; Camerer 2003; Glimcher 2003). Expected value models of decision-making assume that rational choosers have access to information about the probability and amount of gain that can be expected from each action.
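The Expected Value rule just described (multiply each outcome's probability by its amount, sum, and pick the option with the largest total) fits in a few lines. This is a minimal sketch: the option names and numbers are hypothetical, and the `log1p` utility is only an illustrative stand-in for the concave utility functions of Expected Utility Theory, not a function taken from this review.

```python
import math

# Hypothetical options: each outcome is (probability, amount of gain).
OPTIONS = {
    "safe": [(1.0, 40.0)],
    "risky": [(0.5, 100.0), (0.5, 0.0)],
}

def expected_value(outcomes):
    """Sum of probability x amount over an option's possible outcomes."""
    return sum(p * amount for p, amount in outcomes)

def expected_utility(outcomes, utility=math.log1p):
    """Like expected_value, but each amount first passes through a utility function.

    log1p (a concave function) is an illustrative assumption.
    """
    return sum(p * utility(amount) for p, amount in outcomes)

def choose(options, score):
    """Return the name of the option with the highest score."""
    return max(options, key=lambda name: score(options[name]))

print(choose(OPTIONS, expected_value))    # prints risky (EV 50 vs 40)
print(choose(OPTIONS, expected_utility))  # prints safe (log1p(40) > 0.5 * log1p(100))
```

With raw amounts the gamble wins; once amounts pass through a concave utility the sure option wins, which is the kind of risk aversion Expected Utility Theory was introduced to capture.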
Behavioral observations have repeatedly demonstrated that humans and animals are exquisitely sensitive to the expected value of available options (Herrnstein 1961; Stephens and Krebs 1986; Glimcher 2003). This suggests that nervous systems somehow represent information about the estimated costs and benefits of potential behaviors and use that information to dynamically link sensation to action. New studies have begun to shed light on this process. They suggest that neurons representing different eye movements compete to reach a firing-rate threshold for movement initiation (Hanes and Schall 1996; Roitman and Shadlen 2002). The activity of these neurons is systematically enhanced both by sensory information favoring a particular eye movement (Shadlen and Newsome 1996, 2001; Roitman and Shadlen 2002) and by increases in the expected value of that eye movement (Kawagoe et al. 1998; Leon and Shadlen 1999; Platt and Glimcher 1999; Coe et al. 2002). These observations suggest that the eye movement decision process may be instantiated rather simply by scaling the activity of movement-related neurons by the expected value of the eye movements they encode. Some of the important evidence supporting this model is described below.

Expected value and primate parietal cortex

In an explicit application of economic theory to experimental neurophysiology, Platt and Glimcher (1999) manipulated the expected value of saccadic eye movements while monitoring the activity of neurons in the lateral intraparietal area (LIP), a subregion of primate posterior parietal cortex thought to intervene between visual sensation and eye movements (Fig. 1; Gnadt and Andersen 1988; Goldberg et al. 1990, 1998). Prior studies had suggested that the responses of LIP neurons were sensitive to the behavioral relevance of visual targets for guiding attention or gaze shifts (Gnadt and Andersen 1988; Colby et al. 1996; Platt and Glimcher 1997) as well as to the strength of perceptual evidence favoring the production of a particular eye movement (Shadlen and Newsome 1996, 2001). These observations suggested that LIP neurons play a role in the oculomotor decision-making process (Platt and Glimcher 1999; Shadlen and Newsome 1996).

Fig. 1 A network for oculomotor decision-making. Medial and lateral views of the macaque monkey brain illustrating major pathways carrying signals related to saccadic eye movements (green) and reward (blue). Note: many of these pathways are bidirectional but have been simplified for presentation. AMYG amygdala, CGp posterior cingulate cortex, CGa anterior cingulate cortex, LIP lateral intraparietal cortex, SEF supplementary eye fields, FEF frontal eye fields, PFC prefrontal cortex, OFC orbitofrontal cortex, SC superior colliculus, NAC nucleus accumbens, SNr substantia nigra pars reticulata, SNc substantia nigra pars compacta, VTA ventral tegmental area. After Platt et al. (2004)

In the first set of experiments designed to test this hypothesis (Platt and Glimcher 1999), monkeys performed cued saccade trials, in which a change in the color of a central fixation light instructed them to shift gaze to one of two possible target locations in order to receive a fruit juice reward. A change from yellow to red indicated that looking to the upper target would be rewarded, while a change from yellow to green indicated that the lower target would be rewarded. In successive blocks of trials, the investigators varied either the volume of juice delivered for each eye movement response or the probability that each possible movement would be cued, and therefore reinforced. They found that the activity of LIP neurons was systematically modulated both by the size of the reward a monkey could expect to receive and by the probability that a particular gaze shift would be reinforced: the two variables needed to compute the expected value of the movement.
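The threshold-competition idea raised earlier in this section (neurons for each eye movement race toward a firing threshold, with their activity scaled by the expected value of the movement they encode) can be sketched as a race between two noisy accumulators, using the two variables Platt and Glimcher manipulated: cue probability and juice volume. This is a minimal illustration, not the model of any cited study: the drift gain, noise level, threshold, and the names `saccade_value` and `race_trial` are all hypothetical. Because the cued task gave the monkey no choice, the sketch is closer to a free-choice situation and shows only the scaling idea.

```python
import random

def saccade_value(p_cued: float, juice: float) -> float:
    """Expected value of a saccade: probability it is rewarded times reward size."""
    return p_cued * juice

def race_trial(value_a: float, value_b: float, rng: random.Random,
               gain: float = 0.1, noise_sd: float = 0.1,
               threshold: float = 1.0, max_steps: int = 10_000) -> str:
    """One trial of a two-accumulator race to threshold (all parameters hypothetical).

    Each accumulator's mean increment per time step is its target's expected value
    times `gain`, plus Gaussian noise. The first accumulator to reach `threshold`
    selects its target; if both cross on the same step, the larger one wins.
    """
    a = b = 0.0
    for _ in range(max_steps):
        a += gain * value_a + rng.gauss(0.0, noise_sd)
        b += gain * value_b + rng.gauss(0.0, noise_sd)
        if a >= threshold or b >= threshold:
            return "A" if a >= b else "B"
    return "none"  # no target selected within max_steps

# Hypothetical probability block: equal juice, target A cued on 75% of trials.
rng = random.Random(0)
value_a = saccade_value(0.75, 1.0)
value_b = saccade_value(0.25, 1.0)
choices = [race_trial(value_a, value_b, rng) for _ in range(2000)]
print(f"P(A selected) = {choices.count('A') / len(choices):.2f}")
```

Scaling the drift by expected value is the only thing that distinguishes the two accumulators here, so any preference for target A comes from its higher value.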
Intriguingly, the effects of expected value on neuronal firing rates were maximal early on each trial but diminished after the rewarded target had been cued. The information encoded by LIP neurons thus appeared to track the monkey’s running estimate of the expected value of a particular movement, which was maximal once that movement was cued (Glimcher 2002). These experiments demonstrated that LIP neurons carry information about the economic decision variables thought to drive rational decision-making. In those studies, however, monkeys did not have a choice about which eye movement to make and thus the link between decision-making and LIP activity could not be directly observed. In a second experiment (Platt and Glimcher 1999), the investigators studied the activity of LIP neurons while monkeys were permitted to freely choose between two movements differing in expected value. This allowed the investigators to derive a behavioral estimate of the monkey’s subjective valuation of each response, which could be compared directly with LIP neuronal activity. Under these conditions, monkeys behaved like rational consumers: their pattern of target choices was sensitive to the amount of fruit juice associated with each target (Fig. 2b). LIP neuronal activity was also sensitive to expected value in the free choice task (Fig. 2a, c). Activity prior to movement increased systematically with its expected value in concert with the monkey’s valuation of the same movement. Based on these data, Platt and Glimcher (1999) concluded that LIP neurons encode the expected value of potential eye movements. More specifically, Gold and Shadlen (2002) have argued that these data are consistent with the hypothesis that LIP neurons signal the instantaneous likelihood that the monkey will choose and execute a particular eye movement, and that this likelihood is computed, at least in part, based on the expected value of that movement. 
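Figs. 2b and 3 summarize choice behavior as the proportion of trials on which a monkey chose target 1, plotted against that target's relative value, reward size for target 1 / (reward size for target 1 + reward size for target 2), and fit with a cumulative normal function; Fig. 3b then expresses reward sensitivity as a Weber fraction, the fitted standard deviation divided by the fitted mean. The sketch below implements those definitions. The grid-search maximum-likelihood fit, its grid, the function names and the example counts are assumptions chosen for illustration, not the fitting procedure or data of the cited studies.

```python
import math

def relative_value(reward_1: float, reward_2: float) -> float:
    """Relative value of target 1: reward_1 / (reward_1 + reward_2)."""
    return reward_1 / (reward_1 + reward_2)

def cumulative_normal(x: float, mu: float, sigma: float) -> float:
    """Psychometric function: probability of choosing target 1 at relative value x."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def weber_fraction(mu: float, sigma: float) -> float:
    """Reward sensitivity as defined for Fig. 3b: standard deviation / mean."""
    return sigma / mu

def fit_cumulative_normal(xs, n_chose_1, n_trials):
    """Maximum-likelihood fit of (mu, sigma) by grid search over binomial choice counts.

    Grid (an assumption): mu in 0.01..0.99 and sigma in 0.01..0.50, steps of 0.01.
    """
    best_ll, best = -math.inf, (None, None)
    for i in range(1, 100):
        mu = i / 100
        for j in range(1, 51):
            sigma = j / 100
            ll = 0.0
            for x, k, n in zip(xs, n_chose_1, n_trials):
                # Clamp p away from 0 and 1 so the log-likelihood stays finite.
                p = min(max(cumulative_normal(x, mu, sigma), 1e-12), 1 - 1e-12)
                ll += k * math.log(p) + (n - k) * math.log(1 - p)
            if ll > best_ll:
                best_ll, best = ll, (mu, sigma)
    return best

# Hypothetical choice counts generated from a known curve (mu = 0.5, sigma = 0.1).
xs = [0.1, 0.3, 0.5, 0.7, 0.9]
n_trials = [1000] * len(xs)
n_chose_1 = [round(n * cumulative_normal(x, 0.5, 0.1)) for x, n in zip(xs, n_trials)]
mu_hat, sigma_hat = fit_cumulative_normal(xs, n_chose_1, n_trials)
print(f"mu={mu_hat:.2f} sigma={sigma_hat:.2f} "
      f"Weber fraction={weber_fraction(mu_hat, sigma_hat):.2f}")
```

A smaller Weber fraction means a steeper choice function, that is, greater sensitivity to differences in reward; Fig. 3b tracks this quantity across successive five-trial blocks.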
Fig. 2a–c Monkeys and posterior parietal neurons are sensitive to eye movement value. a Firing rate as a function of time for a single LIP neuron when the monkey chose to shift gaze to the target in the neuronal response field. Black curve, high value trials; grey curve, low value trials. Tick marks indicate action potentials recorded on successive trials for the first ten trials of each block. b Proportion of trials on which a single monkey chose target 1 as a function of its expected value [reward size target 1/(reward size target 1 + reward size target 2)]. c Average normalized premovement firing rate (±SE) for a single posterior parietal neuron in the same monkey as a function of the expected value of the same target. After Platt and Glimcher (1999). Reprinted by permission from Nature

Neurons in several other brain areas have been shown to carry signals related to the expected value of eye movements. For example, neurons in the caudate nucleus were found to respond in anticipation of reward-predicting stimuli on a memory saccade task (Kawagoe et al. 1998), and the strength of this signal was correlated with the latency of the behavioral response (Takikawa et al. 2002). On a reaction time eye movement task, anticipatory activity in the caudate was maximal when the contralateral target was reliably rewarded, and it tracked changes in the monkeys' response times as the rewarded location was reversed (Lauwereyns et al. 2002). Anticipatory activity was absent, however, during a task in which the rewarded target changed unpredictably from trial to trial (Lauwereyns et al. 2002). Signals correlated with the reward value associated with eye movements have also been found in dorsolateral prefrontal cortex (Leon and Shadlen 1999), the supplementary eye fields (Amador et al. 2000; Stuphorn et al. 2000a), substantia nigra pars reticulata (Sato and Hikosaka 2002), and even the superior colliculus (Ikeda and Hikosaka 2003).
Expected value signals thus appear to penetrate the oculomotor system from the cortex through the final common pathway in the superior colliculus, presumably serving to bias eye movement selection towards maximization of reward. Behavioral measures indicate that expected value not only influences target selection by eye movements but also systematically modulates saccade metrics, including amplitude, velocity, and reaction time (Leon and Shadlen 1999; Takikawa et al. 2002; McCoy et al. 2003). These behavioral observations are consistent with a model for oculomotor decision-making in which expected value systematically biases neuronal activity throughout the cortical and subcortical oculomotor afferents to the superior colliculus. Such modulations in saccade-associated activity presumably result in the differential activation of pools of motor neurons in the oculomotor brainstem that ultimately generate the patterns of muscle contraction responsible for shifting gaze. This model, however, has yet to be tested functionally using either microstimulation or inactivation techniques.

Fig. 3a, b Monkeys rapidly learn to choose the high value target. a Proportion of trials on which a single monkey chose target 1 as a function of its expected value. Individual graphs plot choice functions for sequential five-trial blocks following a change in reward contingencies favoring target 1. Red line indicates a cumulative normal function fit to the choice data. b Reward sensitivity over time. Graph plots the steepness of the psychometric function (Weber fraction), computed by dividing the standard deviation by the mean of the cumulative normal function fit to the choice data in each five-trial block, for two monkey subjects. After McCoy et al. (2003). Reprinted with permission from Neuron

Learning from mistakes: dopamine and prediction error

Recent studies by Platt and colleagues (McCoy et al.
2003) have demonstrated that the expected value of eye movements is rapidly updated in the primate brain when reward contingencies change. Figure 3a shows the pattern of choice behavior in the target choice task for one well-trained monkey as a function of time following the introduction of a novel set of reward contingencies. Each graph plots the frequency of choosing target 1 as a function of the relative value of that target (reward for target 1 divided by the sum of the reward values offered for targets 1 and 2) for data gathered with multiple different target values over several weeks of experimental sessions. The choice functions were well fit by cumulative normal functions, which became steeper over time but nearly reached asymptote after only about ten trials. Figure 3b plots reward sensitivity (Weber fraction) against time for two monkeys. These data indicate that the monkeys rapidly learned to choose the target associated with the greatest amount of reward. These data suggest that the brain continuously evaluates the reward (or punishment) outcome associated with movements and uses that information to update the expected value of those movements. Learning theorists have long argued that a comparison of reward expectation and reward outcome directly drives learning (Pearce and Hall 1980; Rescorla and Wagner 1972) and should therefore be encoded in the nervous system. The comparison of expected and actual reward is known as a reward prediction error (Sutton and Barto 1981), and is defined formally as the instantaneous discrepancy between the maximal associative strength sustained by the reinforcer and the current strength of the predictive stimulus (Schultz 2004). Reward prediction error models of learning have been invoked to explain previously elusive behavioral phenomena such as blocking. 
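The reward prediction error defined above is usually written in the Rescorla-Wagner form below (standard textbook notation, not the review's own): V_j is the associative strength of cue j, C_t is the set of cues present on trial t, λ_t is the maximal associative strength the reinforcer on that trial can support (0 when no reward is delivered), and α_i and β are learning-rate parameters for the cue and the reinforcer.

```latex
\delta_t = \lambda_t - \sum_{j \in \mathcal{C}_t} V_j,
\qquad
\Delta V_i = \alpha_i \, \beta \, \delta_t \quad \text{for every cue } i \in \mathcal{C}_t
```

If the cues present already predict the reward fully, so that the sum equals λ_t, then δ_t = 0 and ΔV_i = 0 for every cue on that trial, including a newly added one; this is the mechanism behind blocking.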
In traditional associative models of learning (e.g., Skinner 1981; Thorndike 1898), any cue paired with reinforcement should be learned, but in blocking paradigms redundant cues paired with rewards fail to be learned (the cues are "blocked" from acquiring associative value). The typical blocking experiment uses four stimuli, A, B, X and Y, each of which is paired either with a reward or with nothing. In the first stage of the experiment, a subject learns that stimulus A is paired with a reward while stimulus B is not. In the second stage, these stimuli are presented together with two novel stimuli (X and Y), and the compound stimuli AX and BY are both paired with rewards. If learning were merely associative, the subject would respond to both novel stimuli X and Y as if they predicted the reward. In fact, under these conditions subjects learn to associate Y, but not X, with reward. Because stimulus A already fully predicted reward after stage 1, stimulus X was redundant and was therefore not highlighted for learning (Rescorla and Wagner 1972). Reward prediction error models, such as the Rescorla-Wagner model or temporal difference learning models (Sutton and Barto 1981), account nicely for these results: once the relationship between cue A and reward has been learned, the prediction error term goes to 0 and stays at 0 when the compound stimulus AX is followed by reward in stage 2, so no new learning occurs for the redundant cue.

Over the past decade, Wolfram Schultz and colleagues have demonstrated that the activity of dopamine neurons in the midbrain represents, at least in part, an instantiation of a reward prediction error in neural circuitry (Schultz and Dickinson 2000).
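The two-stage blocking design described above can be simulated directly with the Rescorla-Wagner update. In this minimal sketch, a single rate `alpha_beta` stands in for the product αβ, reward is coded as λ = 1 and its absence as λ = 0, and the number of repetitions per stage is arbitrary; the function name and all parameter values are assumptions for illustration.

```python
def rescorla_wagner(trials, V=None, alpha_beta=0.2, n_repeats=50):
    """Apply the Rescorla-Wagner rule to a repeated list of (cues, lambda) trials.

    All cues present on a trial share one prediction error,
        delta = lambda - sum of V over the cues present,
    and each of those cues changes by alpha_beta * delta.
    Returns a new dict of associative strengths (V is not modified in place).
    """
    V = dict(V or {})
    for _ in range(n_repeats):
        for cues, lam in trials:
            delta = lam - sum(V.get(c, 0.0) for c in cues)
            for c in cues:
                V[c] = V.get(c, 0.0) + alpha_beta * delta
    return V

# Stage 1: A is followed by reward (lambda = 1); B is followed by nothing.
V = rescorla_wagner([(("A",), 1.0), (("B",), 0.0)])
# Stage 2: the compounds AX and BY are both followed by reward.
V = rescorla_wagner([(("A", "X"), 1.0), (("B", "Y"), 1.0)], V)
for cue in "ABXY":
    print(cue, round(V.get(cue, 0.0), 3))
# X stays near 0 (blocked by A); Y ends near 0.5, sharing the error with B.
```

Because A alone drives the shared error to about 0 in stage 1, the AX trials produce almost no update for X, whereas the BY trials start with a full error that B and Y split between them.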
The conclusions of Schultz and colleagues rest on the observation that the activity of dopamine neurons in the substantia nigra and ventral tegmental area is elevated by the delivery of unpredicted rewards, unchanged following the delivery of predicted rewards, and depressed when expected rewards are withheld (Schultz 1998). Moreover, in blocking paradigms these dopamine neurons fail to respond to redundant cues (Waelti et al. 2001). The activity of these neurons has therefore been proposed to encode reward prediction error and thus to determine the direction and rate of learning: learning occurs when the error is positive and the reward is not predicted by the stimulus; no learning occurs when the error is 0 and the reward is fully predicted; forgetting or extinction occurs when the error is negative and the reward is less than predicted by the stimulus; and the rate of learning is determined by the absolute size of the error, whether positive or negative (Schultz and Dickinson 2000). Recent evidence has called into question whether the signals carried by dopaminergic midbrain neurons are specific to rewards or generalize to other salient stimuli (Horvitz 2002). Horvitz and others have shown that putative dopamine neurons respond to a variety of novel or arousing events, including aversive as well as rewarding stimuli (Salamone 1994; Horvitz 2000; but see Mirenowicz and Schultz 1996). These observations suggest that the responses of dopamine neurons might signal surprising events in the environment regardless of whether they are rewarding or aversive (Horvitz 2002). Rather than encoding a reward prediction error per se, dopamine neurons might instead gate cortical and limbic inputs to the striatum following unpredicted events and thereby facilitate learning.
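The response pattern and learning rules just described can be illustrated by applying the same prediction error to a single cue that is first paired with reward and then presented without it. In this minimal sketch δ is a model quantity, not a firing rate; mapping it onto dopamine-neuron activity is the hypothesis described in the text, and the learning rate, trial counts, the tolerance used to call an error "about 0", and the function names are assumptions.

```python
def run_cue(rewards, alpha=0.3, v0=0.0):
    """Track one cue's associative strength V and the prediction error on each trial.

    rewards: reward magnitude on each trial (1.0 = juice delivered, 0.0 = withheld).
    Returns a list of (delta, V after the update) pairs.
    """
    v = v0
    history = []
    for r in rewards:
        delta = r - v       # prediction error: outcome minus prediction
        v += alpha * delta  # step size proportional to |delta|, direction set by its sign
        history.append((delta, v))
    return history

def learning_outcome(delta, tol=0.05):
    """Map a prediction error onto the learning rule described in the text."""
    if delta > tol:
        return "learning"
    if delta < -tol:
        return "extinction"
    return "no learning"

# 30 rewarded trials, then one trial on which the expected reward is withheld.
history = run_cue([1.0] * 30 + [0.0])
for label, idx in [("unpredicted reward", 0), ("predicted reward", 29), ("omitted reward", 30)]:
    delta = history[idx][0]
    print(f"{label:18s} delta={delta:+.2f} -> {learning_outcome(delta)}")
# delta is +1.00, about 0, and about -1.00 on the three selected trials.
```

The three selected trials mirror the elevated, unchanged and depressed dopamine responses described above.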
The role of dopamine neurons in learning thus appears to be more complex than previously thought, and a deeper understanding will likely come from further investigation of dopamine neuron responses to all salient stimuli, good or bad.

Updating expectations: the role of posterior cingulate cortex

Up to this point, we have discussed evidence that neurons in parietal cortex, prefrontal cortex, the basal ganglia, and the superior colliculus carry information about the expected value of decisions about where to look. Midbrain dopamine neurons, on the other hand, appear to process reward-related information in the abstract, signaling when expected and actual reward outcomes are in conflict. Such a signal would be useful for initiating the assignment of new estimates of the expected value of potential behaviors, and evidence increasingly suggests this to be the case (Montague and Berns 2002). But how are abstract prediction error signals generated by midbrain dopamine neurons linked to the selection and performance of specific actions, such as eye movements, that maximize reward? With this question in mind, Platt and colleagues (McCoy et al. 2003) investigated the properties of neurons in posterior cingulate cortex (CGp), a poorly understood part of the limbic system thought to be involved in eye movements (Olson et al. 1996), spatial localization (Sutherland et al. 1988; Harker and Whishaw 2002), and learning (Bussey et al. 1997; Gabriel et al. 1980; Gabriel and Sparenborg 1987). Many CGp neurons are activated following saccades, in contrast to saccade-related neurons in parietal and prefrontal cortex, which are activated prior to movement (Olson et al. 1996). Such a pattern of activation suggests an evaluative, rather than generative, role in oculomotor behavior, an idea first proposed by Vogt and colleagues (Vogt et al. 1992). Anatomically, CGp is interconnected with reward-related areas of the brain (see Fig. 1), including the anterior cingulate cortex (Morecraft et al. 1993), orbitofrontal cortex (Cavada et al. 2000), and the caudate nucleus (Baleydier and Mauguiere 1980), as well as oculomotor areas such as parietal cortex (Cavada and Goldman-Rakic 1989), prefrontal cortex (Vogt and Pandya 1987) and the supplementary eye fields. These connections provide potential sources for a functional linkage of motivational and oculomotor information within CGp.

To test the idea that CGp links motivational outcomes with eye movements, Platt and colleagues recorded the activity of CGp neurons while monkeys shifted gaze to a single visual target for fruit juice rewards. In the first experiment, the size of the reward associated with visually guided saccades was held constant within blocks of 50 trials and varied between blocks, while the oculomotor behavior of the monkeys and the activity of CGp neurons were examined. Saccade metrics were sensitive to the size of the reward associated with gaze shifts. Specifically, monkeys made faster, higher velocity saccades when expecting smaller rewards, a strategy consistent with reducing the delay to reinforcement and thereby increasing the rate of fluid intake in low reward blocks. This observation is consistent with the idea that, even in the absence of an overt decision task, monkeys are sensitive to the expected value of gaze shifts. The activity of CGp neurons was also systematically modulated by reward value, as illustrated by data from two example neurons in Fig. 4. Single neurons were sensitive to reward size following movement (Fig. 4a) as well as following reward delivery (Fig. 4b). These were largely separate modulations, since the two events were separated in time by at least 500 ms. Across the population of studied neurons, approximately one-third were sensitive to reward size following movement and another third following the receipt of reward (Fig. 4c).
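The population result in Fig. 4c rests on a multiple linear regression of firing rate on reward size together with saccade amplitude, latency and peak velocity, run (per the Fig. 4 caption) in each of ten sequential 200 ms epochs. The sketch below shows only the core step, an ordinary least-squares fit solved through the normal equations in plain Python, on simulated trials. Everything in it is hypothetical: the variable ranges, the coefficients used to generate the data, and the function name `ols`. It also omits significance testing; reproducing the P < 0.05 criterion would additionally require the standard error of each coefficient.

```python
import random

def ols(X, y):
    """Ordinary least squares via the normal equations (X^T X) b = X^T y.

    X: list of rows, each a list of predictor values (intercept column included).
    Solved by Gauss-Jordan elimination with partial pivoting; fine for a few predictors.
    """
    k = len(X[0])
    # Augmented matrix [X^T X | X^T y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * c for a, c in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]

# Simulated trials (hypothetical): reward as solenoid open time (ms), saccade
# amplitude (deg), latency (ms) and peak velocity (deg/s).
rng = random.Random(1)
X, y = [], []
for _ in range(200):
    reward = rng.choice([80, 120, 160])
    amplitude = rng.uniform(8, 12)
    latency = rng.uniform(150, 250)
    velocity = rng.uniform(300, 500)
    # Hypothetical neuron whose firing depends on reward size and velocity only.
    rate = 5 + 0.1 * reward + 0.02 * velocity + rng.gauss(0, 1)
    X.append([1.0, reward, amplitude, latency, velocity])
    y.append(rate)

b = ols(X, y)
for name, coef in zip(["intercept", "reward", "amplitude", "latency", "velocity"], b):
    print(f"{name:9s} {coef:+.3f}")
```

Entering the saccade metrics alongside reward size is what lets a reward coefficient be read as an effect over and above any movement-related variation in firing.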
These modulations by reward size were independent of any effects of saccade metrics, as shown by including saccade amplitude, latency, and peak velocity as independent factors in a multiple linear regression analysis of firing rate as a function of reward size. These results thus demonstrate that the activity of CGp neurons carries information about both the predicted and the experienced reward value of a particular eye movement. Such modulation of neuronal activity by reward size cannot be accounted for solely by reafferent input from motor areas.

In this experiment, the net effect of changes in reward value was to scale the gain of spatially selective neuronal responses following movement. Sixty-two percent of studied neurons in CGp responded in a spatially selective manner following eye movement onset. Among these, neurons excited following a particular movement were further excited when the expected value of that movement was increased; similarly, neurons suppressed following a particular movement were further suppressed when the expected value of that movement was increased (Fig. 4d). Across the population, reward value thus tuned the spatial sensitivity of CGp neurons to saccade direction. Improved spatial sensitivity under high reward conditions may be associated with the slower, more deliberate saccades that monkeys made under high reward conditions in this experiment.

Fig. 4a–d Representation of saccade value in posterior cingulate cortex. a Left panel, average firing rate (±SE) for a single CGp neuron plotted as a function of time on high reward (black curve) and low reward (grey curve) blocks of trials. Rasters indicate the times of action potentials on individual trials. All trials are aligned on movement onset. Right panel, average firing rate (±SE) following movement onset (grey shaded region in left panel) plotted as a function of reward size for the same neuron. Reward size is measured as the open time (ms) of a computer-driven solenoid and is linearly related to juice volume. b Left panel, average firing rate (±SE) for a second CGp neuron plotted as a function of time on high reward (black curve) and low reward (grey curve) blocks of trials. Rasters indicate the times of action potentials on individual trials. All trials are aligned on reward offset. Right panel, average firing rate (±SE) following reward offset (grey shaded region in left panel) plotted as a function of reward size for the same neuron. c Population data. Proportion of CGp neurons with significant modulation by reward size, peak saccade velocity, saccade amplitude, and saccade latency plotted as a function of time. Modulations were considered significant at P < 0.05 for each individual factor in a multiple regression analysis of firing rate in each of ten sequential 200 ms epochs. The size of the liquid reward delivered on correct trials was controlled linearly by the open time of a computer-driven solenoid (volume = 0.0026 + 0.001 × open time in ms). d Reward value scales the gain of CGp responses. The correlation coefficient between firing rate and reward size for each neuron is plotted as a function of movement response index, a logarithmically scaled measure of the degree to which each neuron was excited or inhibited after movement relative to fixation-level activity on mapping trials. Each dot represents data for one cell (n = 67), and the best-fit line is shown in grey. Note: two outliers were removed from this analysis. After McCoy et al. (2003). Reprinted with permission from Neuron

The timing of modulations in CGp activity by reward value suggests that this area encodes both the predicted and the experienced value of a particular movement. If so, CGp might also be expected to carry signals related to an error in reward prediction, arising from a discrepancy between predicted and experienced value. This hypothesis was addressed in a second experiment in which reward size was held constant but reward was delivered probabilistically while monkeys shifted gaze to a target fixed in the area of maximal response for the neuron under study. Correct trials were reinforced on a variable reward schedule of 0.8, meaning that, on average, 80% of correct trials were followed by an auditory noise burst and reward ("rewarded trials"), while the remaining 20% were followed by the noise burst alone, without juice ("unrewarded trials"). The relatively low frequency of unrewarded trials allowed them to serve as catch trials on which a predicted reward was unexpectedly withheld. Once again, both the behavior of the monkeys and the activity of CGp neurons were sensitive to this manipulation of reward contingencies. After predicted rewards were withheld, monkeys made higher velocity saccades, similar to the effects of low reward on saccade metrics found in the previous experiment. The activity of single CGp neurons also differed significantly between rewarded and unrewarded trials (Fig. 5a, b). Across the population of cells studied in this experiment, firing rate following the usual time of reward delivery was significantly greater when expected rewards were omitted (Fig. 5c). These data demonstrate that CGp neurons faithfully report the omission of expected reward, suggestive of a prediction error-like signal for eye movements (Schultz and Dickinson 2000). Intriguingly, many CGp neurons responded equivalently to the delivery of larger than average rewards and to the omission of predicted rewards (McCoy et al. 2003), unlike dopamine neurons, which respond with a burst of action potentials following unpredicted rewards but are suppressed following the omission of predicted rewards (Schultz et al. 1997).
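Two details of these experiments can be tied together in a worked sketch: the solenoid calibration in the Fig. 4 caption (volume = 0.0026 + 0.001 × open time in ms; the caption does not state the volume units) and the 0.8 reward schedule. Assuming, for illustration only, that a well-trained monkey's expected outcome on a correct trial is 0.8 × the delivered volume, the signed prediction error is small and positive on rewarded trials and large and negative on omission trials, so an unsigned error is larger on omission trials. The last function is a smoothed, Pearce-Hall-style associability update, an unsigned, attention-like quantity of the kind emphasized by the attentional learning theories cited in the next paragraph. Its smoothing form, the value of `gamma`, the 150 ms open time and all function names are assumptions, not the analysis of McCoy et al. (2003).

```python
def juice_volume(open_time_ms: float) -> float:
    """Juice volume for a solenoid open time, using the calibration in the Fig. 4 caption.

    The caption does not state the volume units.
    """
    return 0.0026 + 0.001 * open_time_ms

def prediction_errors(volume: float, p_reward: float = 0.8) -> dict:
    """Signed and unsigned prediction errors for the two kinds of correct trial.

    Hypothetical assumption: the expected outcome of a correct trial is p_reward * volume.
    """
    expected = p_reward * volume
    signed = {"rewarded": volume - expected, "omitted": 0.0 - expected}
    return {trial: (err, abs(err)) for trial, err in signed.items()}

def update_associability(alpha: float, delta: float, gamma: float = 0.3) -> float:
    """Pearce-Hall-style associability: a running average of the unsigned error |delta|.

    gamma (hypothetical value) sets how quickly associability tracks recent surprise.
    """
    return gamma * abs(delta) + (1.0 - gamma) * alpha

vol = juice_volume(150)  # hypothetical 150 ms open time
errors = prediction_errors(vol)
for trial, (signed, unsigned) in errors.items():
    print(f"{trial:8s} signed={signed:+.4f} unsigned={unsigned:.4f}")

# Associability over four rewarded trials followed by one omission.
alpha = errors["rewarded"][1]
for trial in ["rewarded"] * 4 + ["omitted"]:
    alpha = update_associability(alpha, errors[trial][0])
    print(f"after {trial:8s} associability={alpha:.4f}")
```

A signed error separates the two trial types by direction, as dopamine responses do, whereas the unsigned error and the associability built from it rise after an omission, the same direction as the CGp population response in Fig. 5c.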
The reward modulation of neuronal activity in CGp is therefore consistent with attentional theories of learning, which posit that reward prediction errors highlight unpredicted stimuli as important for learning (Mackintosh 1975; Pearce and Hall 1980). According to this idea, the neuronal response scales with the absolute value of the prediction error, that is, with the extent to which the reward event differed from expectation, whether in a positive or a negative direction. Such a signal would not contain information about “what” needs to be learned about the relationship between a stimulus and reward, but it would be useful for instructing “when” and “how rapidly” learning should occur. Some of the saccade-related reward signals uncovered in CGp may best be understood in terms of such an attentional learning theory.

Taken together, the results of these experiments suggest that neurons in CGp link motivational outcomes with gaze shifts. Recent studies have reported that neurons in the supplementary eye fields, with which CGp is reciprocally connected, also respond to reward outcomes associated with eye movements (Amador et al. 2000; Stuphorn et al. 2000b). Neurons in other areas of cingulate cortex have also been implicated in guiding actions based on reward value.

Fig. 5a, b Representation of saccade reward prediction error in posterior cingulate cortex. a Firing rate as a function of time for a single CGp neuron on rewarded (black curve) and unrewarded (grey curve) delayed saccade trials. On average, eight out of ten randomly selected correct trials were rewarded. Conventions as in Fig. 4. b Population response to reward omission. c Average (+SE) normalized firing rate measured after the normal time of reward delivery for the population of CGp neurons on rewarded and unrewarded trials. After McCoy et al. (2003). Reprinted with permission from Neuron
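The attentional account of CGp reward signals can be sketched with a hybrid learning rule. This rule is an assumption made for illustration, not a model fitted by McCoy et al. (2003). Associability `alpha` tracks a running average of the unsigned prediction error |delta|, in the spirit of Pearce and Hall (1980), and scales a Rescorla–Wagner-style update of the value `V` by the signed error. The parameters `kappa` and `gamma`, the function name `pearce_hall`, and the reward sequence are all hypothetical.

```python
"""Hybrid Pearce-Hall / Rescorla-Wagner learner (illustrative sketch).

Associability (alpha) follows the unsigned prediction error, so surprising
outcomes in either direction speed up subsequent learning. Parameter values
are hypothetical and chosen only for demonstration.
"""


def pearce_hall(rewards, kappa=0.5, gamma=0.3, v0=0.0, alpha0=1.0):
    """Return per-trial lists of value V, associability alpha, and error delta."""
    v, alpha = v0, alpha0
    values, alphas, deltas = [], [], []
    for r in rewards:
        delta = r - v                                     # signed prediction error
        v += kappa * alpha * delta                        # update scaled by prior-trial associability
        alpha = gamma * abs(delta) + (1 - gamma) * alpha  # associability tracks |delta|
        values.append(v)
        alphas.append(alpha)
        deltas.append(delta)
    return values, alphas, deltas


if __name__ == "__main__":
    # A stable run of rewards, one omission (a "catch trial"), then rewards again.
    rewards = [1.0] * 20 + [0.0] + [1.0] * 5
    values, alphas, deltas = pearce_hall(rewards)
    for t in (18, 19, 20, 21):
        print(f"trial {t}: delta={deltas[t]:+.3f} alpha={alphas[t]:.3f} V={values[t]:.3f}")
```

In this sketch, the omission on trial 20 raises `alpha`, so the value update on the following trial is larger per unit of error. The unsigned error thus signals when and how rapidly to learn, while the signed error still sets the direction of learning.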
While studying timing behavior in primates, Niki and Watanabe (1979) first uncovered two classes of reward-error units in anterior cingulate cortex (CGa): one class responding after juice delivery, and the other responding following incorrect trials as well as following the omission of reward on correct trials. Single CGa neurons have also been found to carry signals related to reward expectation on a cued multi-trial color discrimination task (Shidara and Richmond 2002). In the rostral cingulate motor area (CMAr), Shima and Tanji (1998) found neurons that responded when a change in reward contingencies (but not a neutral cue) prompted monkeys to change their behavioral strategy in order to maximize their receipt of reward. Inactivation of the CMAr by muscimol injection made the monkeys insensitive to a reward decrement and apparently unable to modify their behavioral strategy in order to maximize reward. Taken together, these data are consistent with a direct role for cingulate cortex and supplementary eye fields in linking motivational outcomes to action. Neurons in the primate ventral striatum (Cromwell and Schultz 2003; Hassani et al. 2001; Schultz 2004) and orbitofrontal cortex (Rolls 2000; Tremblay and Schultz 1999) have also been shown to carry information about predicted and experienced rewards. These neurons do not appear to be directly related to the generation of action, but their responses otherwise bear a striking resemblance to those in cingulate cortex. In particular, neurons in ventral striatum and orbitofrontal cortex respond during delay periods preceding reinforcement as well as following reinforcement, and these responses reflect the subjects' preferences for particular rewards (Tremblay and Schultz 1999).
Thus, orbitofrontal cortex and ventral striatum appear to convert information about rewards, punishments, and their predictors into a common internal currency (Montague and Berns 2002). Neurons in the dorsal striatum and dorsolateral prefrontal cortex, on the other hand, show similar response properties but are activated in association with particular movements, much like neurons in CGp and the supplementary eye fields. In summary, the response properties of neurons in ventral striatum and orbitofrontal cortex suggest that they provide a valuation scale for a broad range of stimuli (Montague and Berns 2002), whereas those in the dorsal striatum, dorsolateral prefrontal cortex, supplementary eye fields, and cingulate cortex may serve to associate this information with specific actions (Schultz 2004).

Decision-making in the human brain

Recent neuroimaging studies suggest that some of the same neurophysiological processes guiding oculomotor decision-making also characterize more abstract representations of reward, punishment, and decision-making in humans. Specifically, the striatum, amygdala, orbitofrontal cortex, prefrontal cortex, anterior cingulate cortex, and parietal cortex are activated by rewards and reward-associated stimuli (Elliott et al. 2003). Moreover, hemodynamic responses are modulated by reward uncertainty in orbitofrontal cortex, ventral striatum (Berns et al. 2001; Critchley et al. 2001), and CGp (Smith et al. 2002), and they are linearly correlated with expected value in orbitofrontal cortex (O’Doherty et al. 2001), ventral and dorsal striatum (Delgado et al. 2000, 2003), amygdala (Breiter et al. 2001), and premotor cortex (Elliott et al. 2003). Further, errors in predicting rewards or punishments evoke hemodynamic responses in anterior cingulate cortex (Holroyd et al. 2004), ventral striatum (Pagnoni et al. 2002; Seymour et al. 2004), CGp (Smith et al. 2002), and insula (Seymour et al. 2004), and error-related electrophysiological responses have also been recorded from the medial frontal and anterior cingulate cortices with scalp electrodes in humans (Holroyd et al. 2003). These observations indicate that brain regions carrying value-related information are activated in a similar fashion in monkeys and humans performing disparate types of learning and decision-making tasks. Human neuroimaging studies, however, have not yet tested the hypothesis that pools of neurons coding different movements are activated in proportion to movement value.

Conclusions

In summary, brain regions have been identified that appear to participate in several stages of oculomotor decision-making, from sensation, to reward expectation, to action, to outcome evaluation. Neurons in parietal cortex, prefrontal cortex, the basal ganglia, and the superior colliculus have been shown to encode the expected value of eye movements in their firing rates. Midbrain dopamine neurons, on the other hand, appear to signal an abstract reward prediction error, encoding in their firing rates the discrepancy between predicted and actual rewards. Because dopamine neurons terminate on glutamatergic inputs to the striatum from orbitofrontal cortex and amygdala (among other cortical and limbic structures), they are well situated to gate the flow of motivational information through the striatum and other basal ganglia structures, whose purpose is ultimately to produce adaptive behavior. Neurons in the ventral striatum, orbitofrontal cortex, and cingulate cortices, in turn, appear to signal the relative value or salience of features in the environment that may be important for learning and controlling behavior. In particular, posterior cingulate and supplementary eye field neurons have recently been shown to carry signals related to both reward outcomes and oculomotor behavior and may contribute to updating orienting value signals in parietal and prefrontal cortex.
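The human imaging studies dissociate two decision variables, expected value and reward uncertainty. Both can be computed for a simple lottery that pays a reward of magnitude m with probability p. Using outcome variance as the index of uncertainty is an assumption of this sketch, since the cited studies may have operationalized uncertainty in other ways. The function names `expected_value` and `outcome_variance` are hypothetical.

```python
"""Expected value and outcome variance for a binary reward lottery (sketch).

Assumes a lottery that pays `magnitude` with probability p and nothing
otherwise. Variance is used here as a stand-in for "reward uncertainty".
"""


def expected_value(p: float, magnitude: float) -> float:
    """Probability-weighted reward: EV = p * m."""
    return p * magnitude


def outcome_variance(p: float, magnitude: float) -> float:
    """Variance of a Bernoulli(p) payoff scaled by m: p * (1 - p) * m**2."""
    return p * (1.0 - p) * magnitude ** 2


if __name__ == "__main__":
    for p in (0.0, 0.25, 0.5, 0.75, 1.0):
        ev = expected_value(p, 1.0)
        var = outcome_variance(p, 1.0)
        print(f"p={p:.2f}  EV={ev:.3f}  variance={var:.4f}")
```

Expected value rises linearly with p. Variance, by contrast, is zero for certain outcomes (p = 0 or 1) and peaks at p = 0.5, so the two quantities can be varied independently in an experimental design.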
Activation of this complex network thus appears to underlie visually guided behavior (in particular, how animals choose where to look) and serves as a model for understanding behavioral decision-making more generally.

References

Amador N, Schlag-Rey M, Schlag J (2000) Reward-predicting and reward-detecting neuronal activity in the primate supplementary eye field. J Neurophysiol 84:2166–2170
Arnauld A, Nicole P (1662) The art of thinking: Port-Royal logic. Translated by Dickoff J, James P. Bobbs-Merrill, Indianapolis
Baleydier C, Mauguiere F (1980) The duality of the cingulate gyrus in monkey. Neuroanatomical study and functional hypothesis. Brain 103(3):525–554
Basso MA, Wurtz RH (1997) Modulation of neuronal activity by target uncertainty. Nature 389:66–69
Berns GS, McClure SM, Pagnoni G, Montague PR (2001) Predictability modulates human brain response to reward. J Neurosci 21:2793–2798
Breiter HC, Aharon I, Kahneman D, Dale A, Shizgal P (2001) Functional imaging of neural responses to expectancy and experience of monetary gains and losses. Neuron 30:619–639
Bussey TJ, Everitt BJ, Robbins TW (1997) Dissociable effects of cingulate and medial frontal cortex lesions on stimulus-reward learning using a novel Pavlovian autoshaping procedure for the rat: implications for the neurobiology of emotion. Behav Neurosci 111:908–919
Camerer CF (2003) Behavioral game theory: experiments in strategic interaction (Roundtable Series in Behavioral Economics). Princeton University Press, Princeton
Cavada C, Goldman-Rakic PS (1989) Posterior parietal cortex in rhesus monkey: I. Parcellation of areas based on distinctive limbic and sensory corticocortical connections. J Comp Neurol 287:393–421
Cavada C, Company T, Tejedor J, Cruz-Rizzolo RJ, Reinoso-Suarez F (2000) The anatomical connections of the macaque monkey orbitofrontal cortex. A review. Cereb Cortex 10:220–242
Coe B, Tomihara K, Matsuzawa M, Hikosaka O (2002) Visual and anticipatory bias in three cortical eye fields of the monkey during an adaptive decision-making task. J Neurosci 22(12):5081–5090
Colby CL, Duhamel JR, Goldberg ME (1996) Visual, presaccadic, and cognitive activation of single neurons in monkey lateral intraparietal area. J Neurophysiol 76:2841–2852
Critchley HD, Mathias CJ, Dolan RJ (2001) Neural activity in the human brain relating to uncertainty and arousal during anticipation. Neuron 29:537–545
Cromwell HC, Schultz W (2003) Effects of expectations for different reward magnitudes on neuronal activity in primate striatum. J Neurophysiol 29:29
Delgado MR, Nystrom LE, Fissell C, Noll DC, Fiez JA (2000) Tracking the hemodynamic responses to reward and punishment in the striatum. J Neurophysiol 84:3072–3077
Delgado MR, Locke HM, Stenger VA, Fiez JA (2003) Dorsal striatum responses to reward and punishment: effects of valence and magnitude manipulations. Cogn Affect Behav Neurosci 3:27–38
Dorris MC, Munoz DP (1998) Saccadic probability influences motor preparation signals and time to saccadic initiation. J Neurosci 18:7015–7026
Elliott R, Newman JL, Longe OA, Deakin JF (2003) Differential response patterns in the striatum and orbitofrontal cortex to financial reward in humans: a parametric functional magnetic resonance imaging study. J Neurosci 23(1):303–307
Gabriel M, Sparenborg S (1987) Posterior cingulate cortical lesions eliminate learning-related unit activity in the anterior cingulate cortex. Brain Res 409:151–157
Gabriel M, Orona E, Foster K, Lambert RW (1980) Cingulate cortical and anterior thalamic neuronal correlates of reversal learning in rabbits. J Comp Physiol Psychol 94:1087–1100
Glimcher P (2002) Decisions, decisions, decisions: choosing a biological science of choice. Neuron 36:323–332
Glimcher P (2003) Decisions, uncertainty, and the brain: the science of neuroeconomics. MIT Press, Cambridge
Gnadt JW, Andersen RA (1988) Memory related motor planning activity in posterior parietal cortex of macaque. Exp Brain Res 70:216–220
Gold JI, Shadlen MN (2002) Banburismus and the brain: decoding the relationship between sensory stimuli, decisions, and reward. Neuron 36:299–308
Goldberg ME, Colby CL, Duhamel JR (1990) Representation of visuomotor space in the parietal lobe of the monkey. Cold Spring Harb Symp Quant Biol 55:729–739
Gottlieb JP, Kusunoki M, Goldberg ME (1998) The representation of visual salience in monkey parietal cortex. Nature 391:481–484
Hanes DP, Schall JD (1996) Neural control of voluntary movement initiation. Science 274:427–430
Harker KT, Whishaw IQ (2002) Impaired spatial performance in rats with retrosplenial lesions: importance of the spatial problem and the rat strain in identifying lesion effects in a swimming pool. J Neurosci 22:1155–1164
Hassani OK, Cromwell HC, Schultz W (2001) Influence of expectation of different rewards on behavior-related neuronal activity in the striatum. J Neurophysiol 85:2477–2489
Herrnstein RJ (1961) Relative and absolute strength of response as a function of frequency of reinforcement. J Exp Anal Behav 4:267–272
Herrnstein RJ (1997) The matching law: papers in psychology and economics. Harvard University Press, Cambridge
Holroyd CB, Nieuwenhuis S, Yeung N, Cohen JD (2003) Errors in reward prediction are reflected in the event-related brain potential. Neuroreport 14:2481–2484
Holroyd CB, Nieuwenhuis S, Yeung N, Nystrom L, Mars RB, Coles MG, Cohen JD (2004) Dorsal anterior cingulate cortex shows fMRI response to internal and external error signals. Nat Neurosci 7:497–498
Horvitz JC (2000) Mesolimbocortical and nigrostriatal dopamine responses to salient non-reward events. Neuroscience 96:651–656
Horvitz JC (2002) Dopamine gating of glutamatergic sensorimotor and incentive motivational input signals to the striatum. Behav Brain Res 137:65–74
Ikeda T, Hikosaka O (2003) Reward-dependent gain and bias of visual responses in primate superior colliculus. Neuron 39:693–700
Kawagoe R, Takikawa Y, Hikosaka O (1998) Expectation of reward modulates cognitive signals in the basal ganglia. Nat Neurosci 1:411–416
Kim JN, Shadlen MN (1999) Neural correlates of a decision in the dorsolateral prefrontal cortex of the macaque. Nat Neurosci 2:176–185
Lauwereyns J, Watanabe K, Coe B, Hikosaka O (2002) A neural correlate of response bias in monkey caudate nucleus. Nature 418:413–417
Leon MI, Shadlen MN (1999) Effect of expected reward magnitude on the response of neurons in the dorsolateral prefrontal cortex of the macaque. Neuron 24:415–425
Mackintosh NJ (1975) Blocking of conditioned suppression: role of the first compound trial. J Exp Psychol Anim Behav Process 1:335–345
McCoy AN, Crowley JC, Haghighian G, Dean HL, Platt ML (2003) Saccade reward signals in posterior cingulate cortex. Neuron 40:1031–1040
Mirenowicz J, Schultz W (1996) Preferential activation of midbrain dopamine neurons by appetitive rather than aversive stimuli. Nature 379:449–451
Montague PR, Berns GS (2002) Neural economics and the biological substrates of valuation. Neuron 36:265–284
Morecraft RJ, Geula C, Mesulam MM (1993) Architecture of connectivity within a cingulo-fronto-parietal neurocognitive network for directed attention. Arch Neurol 50:279–284
Niki H, Watanabe M (1979) Prefrontal and cingulate unit activity during timing behavior in the monkey. Brain Res 171:213–224
O’Doherty J, Kringelbach ML, Rolls ET, Hornak J, Andrews C (2001) Abstract reward and punishment representations in the human orbitofrontal cortex. Nat Neurosci 4:95–102
Olson CR, Musil SY, Goldberg ME (1996) Single neurons in posterior cingulate cortex of behaving macaque: eye movement signals. J Neurophysiol 76:3285–3300
Pagnoni G, Zink CF, Montague PR, Berns GS (2002) Activity in human ventral striatum locked to errors of reward prediction. Nat Neurosci 5:97–98
Pavlov IP (1927) Conditioned reflexes. Oxford University Press, London
Pearce JM, Hall G (1980) A model for Pavlovian learning: variations in the effectiveness of conditioned but not of unconditioned stimuli. Psychol Rev 87:532–552
Platt ML (2002) Neural correlates of decisions. Curr Opin Neurobiol 12(2):141–148
Platt ML, Glimcher PW (1997) Responses of intraparietal neurons to saccadic targets and visual distractors. J Neurophysiol 78:1574–1589
Platt ML, Glimcher PW (1999) Neural correlates of decision variables in parietal cortex. Nature 400:233–238
Platt ML, Lau B, Glimcher PW (2004) Situating the superior colliculus within the gaze control network. In: Hall WC, Moschovakis A (eds) The superior colliculus: new approaches for studying sensorimotor integration. CRC Press, New York
Rescorla RA, Wagner AR (1972) A theory of Pavlovian conditioning: variations in the effectiveness of reinforcement and nonreinforcement. In: Black AH, Prokasy WF (eds) Classical conditioning II: current research and theory. Appleton-Century-Crofts, New York
Roitman JD, Shadlen MN (2002) Response of neurons in the lateral intraparietal area during a combined visual discrimination reaction time task. J Neurosci 22:9475–9489
Rolls ET (2000) The orbitofrontal cortex and reward. Cereb Cortex 10:284–294
Salamone JD (1994) The involvement of nucleus accumbens dopamine in appetitive and aversive motivation. Behav Brain Res 61:117–133
Sato M, Hikosaka O (2002) Role of primate substantia nigra pars reticulata in reward-oriented saccadic eye movement. J Neurosci 22:2363–2373
Schultz W (1998) Predictive reward signal of dopamine neurons. J Neurophysiol 80:1–27
Schultz W (2004) Neural coding of basic reward terms of animal learning theory, game theory, microeconomics and behavioural ecology. Curr Opin Neurobiol 14:139–147
Schultz W, Dickinson A (2000) Neuronal coding of prediction errors. Annu Rev Neurosci 23:473–500
Schultz W, Dayan P, Montague PR (1997) A neural substrate of prediction and reward. Science 275:1593–1599
Seymour B, O’Doherty JP, Dayan P, Koltzenburg M, Jones AK, Dolan RJ, Friston KJ, Frackowiak RS (2004) Temporal difference models describe higher-order learning in humans. Nature 429:664–667
Shadlen MN, Newsome WT (1996) Motion perception: seeing and deciding. Proc Natl Acad Sci U S A 93:628–633
Shadlen MN, Newsome WT (2001) Neural basis of a perceptual decision in the parietal cortex (area LIP) of the rhesus monkey. J Neurophysiol 86:1916–1936
Sherrington CS (1906) The integrative action of the nervous system. Scribner, New York
Shidara M, Richmond BJ (2002) Anterior cingulate: single neuronal signals related to degree of reward expectancy. Science 296:1709–1711
Shima K, Tanji J (1998) Role for cingulate motor area cells in voluntary movement selection based on reward. Science 282:1335–1338
Skinner BF (1981) Selection by consequences. Science 213:501–504
Smith K, Dickhaut J, McCabe K, Pardo JV (2002) Neuronal substrates for choice under ambiguity, risk, gains, and losses. Management Science 48:711–718
Speiser D (ed) (1982) The works of Daniel Bernoulli. Birkhauser, Boston
Stephens DW, Krebs JR (1986) Foraging theory. Princeton University Press, Princeton
Stuphorn V, Taylor TL, Schall JD (2000a) Performance monitoring by the supplementary eye field. Nature 408:857–860
Stuphorn V, Taylor TL, Schall JD (2000b) Performance monitoring by the supplementary eye field. Nature 408:857–860
Sutherland RJ, Whishaw IQ, Kolb B (1988) Contributions of cingulate cortex to two forms of spatial learning and memory. J Neurosci 8:1863–1872
Sutton RS, Barto AG (1981) Toward a modern theory of adaptive networks: expectation and prediction. Psychol Rev 88:135–170
Takikawa Y, Kawagoe R, Itoh H, Nakahara H, Hikosaka O (2002) Modulation of saccadic eye movements by predicted reward outcome. Exp Brain Res 142:284–291
Thorndike EL (1898) Animal intelligence: an experimental study of the associative processes in animals. Psychol Rev Monogr [Suppl 11]
Tremblay L, Schultz W (1999) Relative reward preference in primate orbitofrontal cortex. Nature 398:704–708
Vogt BA, Pandya DN (1987) Cingulate cortex of the rhesus monkey. II. Cortical afferents. J Comp Neurol 262:271–289
Vogt BA, Finch DM, Olson CR (1992) Functional heterogeneity in cingulate cortex: the anterior executive and posterior evaluative regions. Cereb Cortex 2:435–443