The Neuroscientist

Neural oscillation

Sensory substitution

Neuroanatomy

Affective neuroscience

Clinical neurochemistry

Emotional lateralization

Neurocomputational speech processing

Human brain

Embodied cognitive science

Bird vocalization

Neuroesthetics

Aging brain

Optogenetics

Metastability in the brain

Premovement neuronal activity

Neural coding

Neuropsychopharmacology

Nervous system network models

Evoked potential

Development of the nervous system

Neuroplasticity

Neuroeconomics

Eyeblink conditioning

Music-related memory

Animal echolocation

Neural correlates of consciousness

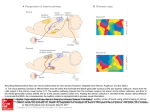

Sound localization

Cortical cooling

Perception of infrasound

Synaptic gating

Time perception

Cerebral cortex

Sensory cue