Auditory cortical processing: Binaural interaction in healthy

Cognitive neuroscience

Embodied language processing

Clinical neurochemistry

Nervous system network models

Bird vocalization

Functional magnetic resonance imaging

Neuropsychology

Neuroanatomy

Synaptic gating

Neuroesthetics

Human multitasking

Sensory substitution

Eyeblink conditioning

Human brain

Neuroeconomics

Neurogenomics

Emotional lateralization

Neurocomputational speech processing

Neuropsychopharmacology

Aging brain

History of neuroimaging

Neurolinguistics

Neuroplasticity

Cortical cooling

Animal echolocation

Embodied cognitive science

Perception of infrasound

Metastability in the brain

Feature detection (nervous system)

Evoked potential

Sensory cue

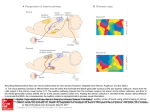

Sound localization

Time perception