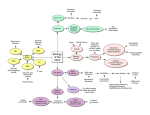

Learning motor skills by imitation: a biologically inspired robotic model

Neurocomputational speech processing

Neural coding

Neural engineering

Artificial neural network

Neural modeling fields

Time perception

Activity-dependent plasticity

Donald O. Hebb

Environmental enrichment

Neuroeconomics

Neuropsychopharmacology

Neuroesthetics

Neuroanatomy

Catastrophic interference

Biological neuron model

Optogenetics

Convolutional neural network

Machine learning

Mirror neuron

Synaptic gating

Concept learning

Central pattern generator

Feature detection (nervous system)

Development of the nervous system

Cognitive neuroscience of music

Eyeblink conditioning

Anatomy of the cerebellum

Metastability in the brain

Channelrhodopsin

Muscle memory

Types of artificial neural networks

Recurrent neural network

Nervous system network models

Embodied language processing