Learning - Science of Psychology Home

Behavioral modernity wikipedia , lookup

Social psychology wikipedia , lookup

Observational methods in psychology wikipedia , lookup

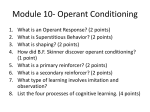

Learning theory (education) wikipedia , lookup

Thin-slicing wikipedia , lookup

Neuroeconomics wikipedia , lookup

Theory of planned behavior wikipedia , lookup

Abnormal psychology wikipedia , lookup

Theory of reasoned action wikipedia , lookup

Attribution (psychology) wikipedia , lookup

Parent management training wikipedia , lookup

Sociobiology wikipedia , lookup

Applied behavior analysis wikipedia , lookup

Verbal Behavior wikipedia , lookup

Psychophysics wikipedia , lookup

Adherence management coaching wikipedia , lookup

Descriptive psychology wikipedia , lookup

Behavior analysis of child development wikipedia , lookup

Social cognitive theory wikipedia , lookup

Insufficient justification wikipedia , lookup

Psychological behaviorism wikipedia , lookup

Behaviorism wikipedia , lookup