Preview Chapter 5 - Macmillan Learning

Behavioral modernity

Prosocial behavior

Observational methods in psychology

Abnormal psychology

Theory of planned behavior

Thin-slicing

Theory of reasoned action

Attribution (psychology)

Neuroeconomics

Counterproductive work behavior

Sociobiology

Learning theory (education)

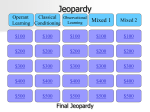

Applied behavior analysis

Parent management training

Psychophysics

Descriptive psychology

Verbal Behavior

Insufficient justification

Behavior analysis of child development

Social cognitive theory

Behaviorism

Classical conditioning