Download BF Skinner - Illinois State University Websites

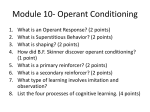

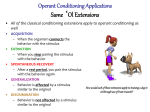

B.F. Skinner
Radical and then Modern Behaviorism

Burrhus Frederic Skinner
• Born in Pennsylvania
• BA degree in English from Hamilton College
• Masters/PhD from Harvard in 1930, 1931
• Taught at Univ of Minnesota 1936-1945
  – The Behavior of Organisms, 1938
• 1945 to Indiana University
• 1948 to Harvard; there until his death in 1990
• Several important “human rights” books
  – Beyond Freedom and Dignity
  – Walden Two
  – Enjoy Old Age
• 2 daughters: one is psychologist Julie Vargas (runs autism program at WVU), the other a pianist

Why Behaviorism
• Defines "behavior" as what the animal is (observed to be) doing.
  – Avoid anthropomorphizing or implying conceptual schemes
  – Simply describe what the animal is doing
• Avoids preconceived notions and concepts about the animal's behavior

Narration as a descriptor: defining what is behavior
• Narrate what the animal is doing: a running frame of reference
• “Stimulus” refers to environment: subscripts tell what part of the environment
  – SD: the discriminative stimulus
  – SR: the reinforcing stimulus, or the reinforcer
• Correlated or observed behavior is the response
• Reflex = observed relation between the stimulus and response
  – Implies lawfulness
  – Is a fact, not a theory
• Does not want to "botanize", but to come up with general laws of behavior

Several laws of classical conditioning
• Uses these to distinguish from operant behavior
• Static laws of the reflex: really discussing classical conditioning here
  – Law of threshold: the intensity of the stimulus must reach or exceed a certain critical value in order to elicit a response
  – Law of latency: an interval of time elapses between the beginning of the stimulus and the beginning of the response
  – Law of magnitude of the response: the magnitude of the response is a function of the intensity of the stimulus
  – Law of after discharge: the response may persist for some time after the cessation of the stimulus
  – Law of temporal summation: prolongation of a stimulus or repetitive presentation within certain limiting rates has the same effect as increasing the intensity

Several laws of classical conditioning
• Dynamic laws of reflex strength:
  – Law of refractory phase: immediately after elicitation the strength of some reflexes exists at a low, perhaps zero, value. It returns to its former state during subsequent inactivity
  – Law of reflex fatigue: the strength of a reflex declines during repeated elicitation and returns to its former value during subsequent inactivity
  – Law of facilitation: the strength of a reflex may be increased through presentation of a second stimulus which does not itself elicit the response
  – Law of inhibition: the strength of a reflex may be decreased through the presentation of a second stimulus which has no other relation to the effector involved
• Also discusses law of conditioning of Type S and law of extinction of Type S

Distinguishes between PAVLOVIAN and OPERANT conditioning
• Operant behavior is EMITTED, not elicited
• Static laws DO NOT apply to operant behavior
• Remember: still in the day when CC does NOT equal OC
  – They were believed to be different kinds of learning
  – CC: visceral muscles
  – OC: skeletal responses

Dynamic laws of Type R behavior:
• HIS version of the Law of Effect
• Law of conditioning of Type R behavior: If the occurrence of an operant is followed by a presentation of a reinforcing stimulus, the strength is increased
  – Notice that conditioning = strength of the operant
• Law of extinction of Type R behavior: If the occurrence of an operant already strengthened through conditioning is not followed by the reinforcing stimulus, the strength is decreased
• Can get stimuli that are correlated with R-S connections: these can thus set the occasion for the R-S contingency

The reflex reserve:
• Reflex reserve = total available activity for an animal
• There is a relation between
  – The number of responses appearing during the extinction of an operant and
  – The number of preceding reinforcements
• Changes in drive do not change the total number of available responses,
  – Although the rate of responding may vary greatly
• Changes in drive change the rate or pattern of responding
• Emotional, facilitative, and inhibitory changes are compensated for by later changes in strength

Interaction of reflexes:
• Important in that responses do not occur in isolation
• Law of compatibility: Two or more responses which do not overlap topographically may occur simultaneously without interference
• Law of prepotency: When two reflexes overlap topographically, and the responses are incompatible, one response may occur to the exclusion of another
• Law of algebraic summation: The simultaneous elicitation of two responses utilizing the same effectors but in opposite directions produces a response the extent of which is an algebraic resultant

Interaction of reflexes:
• Law of blending: Two responses showing some topographical overlap may be elicited together but in necessarily modified forms
• Law of spatial summation: When two reflexes have the same form of response, the response to both stimuli in combination has a greater magnitude and a shorter latency
• Law of chaining: The response of one reflex may constitute or produce the eliciting or discriminative stimulus of another
• Law of induction: A dynamic change in strength of a reflex may be accompanied by a similar but not so extensive change in a related reflex, where the relation is due to the possession of some common properties of stimulus or response

Defines properties of a class of a reflex
• Under what conditions does the R occur?
  – In operant conditioning: what are the defining characteristics for reinforcement?
  – Under what stimulus conditions does a response occur?
  – What are the results?
  – Really the ABCs of operant behavior!
  – Antecedents
  – Behavior
  – Consequences
• What does the animal DO to get reinforced?
  – Must show a correlation between R and S
  – We will argue later that this must be a contingency!
  – Must show that dynamic laws apply

Defining Skinner's methodology:
• Direction of inquiry:
  – Inductive rather than deductive
  – Hypotheses declared to direct the choice of facts
  – Not necessary, but guide what is a useful vs useless fact
• The organism:
  – Skinner wants to limit to one single representative sample
  – The white rat and/or pigeon: many advantages in terms of control
• The operant:
  – Use bar pressing
  – Skinner box
  – Again: assume that it is equivalent to any other response
  – Easy to measure: reliable, controllable, etc.

Skinner box: Pigeon pecks or rat bar presses to receive reinforcers

System of notation
• S = stimulus
• R = response
• S.R = respondent
• SR = reinforcer
• Properties of a term are indicated with lowercase letters:
  – Rabc: response with properties a, b and c
  – Superscripts comment upon the term: place, formula, etc.
  – e.g. S1 or SD
  – also composite stimuli: S1SD
• --> = is followed by
• Now can analyze a chain or sequence of behavior and string terms together to make "behavior sentences"

Important to control Extraneous Factors
• Use maximal isolation, e.g. sound attenuating chamber
• Control "hunger" with deprivation, etc.
  – Usually around 80% of free-feeding weight
  – This is higher today (85-90%)
  – Maintains a constant “hunger”
• Standardize feeders and reinforcers
• Control light/day cycles, etc.
• As much experimental control as possible to reduce variance in experiments

The Cumulative Recorder
• Measuring the Behavior:
• Important characteristics of measurement:
  – Definition of behavior as that part of activity of the organism which affects the external world
  – The practical isolation of the unit of behavior
  – Definition of a response as a class of events
  – Demonstration that the rate of responding is the principal measure of the strength of an operant

Cumulative record
• Responses accrue or are cumulative
• What happens if the line goes down?

Reinforcers vs. Punishers; Positive vs. Negative
• Reinforcer = rate of response INCREASES
• Punisher = rate of response DECREASES
• Positive: something is ADDED to environment
• Negative: something is TAKEN AWAY from environment
• Can make a 2x2 contingency table

| | Reinforcement | Punishment |
|---|---|---|
| Positive (add stimulus) | Positive reinforcement: make bed --> 10 cents | (Positive) punishment: hit sister --> spanked |
| Negative (remove stimulus) | Negative reinforcement: make bed --> Mom stops nagging | Negative punishment: hit sister --> lose TV |

Parameters or Characteristics of Operant Behavior
• Strength of the response:
  – With each pairing of the R and Sr/P, the response contingency is strengthened
  – The learning curve is
    • Monotonically ascending
    • Has an asymptote
    • There is a maximum amount of responding the organism can make

Parameters or Characteristics of Operant Behavior
• Extinction of the response:
  – Remove the R --> Sr or R --> P contingency
  – Now the R --> 0
• Different characteristics than with classical conditioning:
  – Animal increases behavior immediately after the extinction begins: TRANSIENT INCREASE
  – Animal shows extinction-induced aggression!
  – Why?
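
The reinforcer/punisher and positive/negative definitions given above combine into four cells. A minimal Python sketch of that classification follows; the function name `classify_contingency` and the string labels it accepts are illustrative assumptions, not notation from the slides. The example behaviors are the ones used in the contingency table.

```python
def classify_contingency(stimulus_change, rate_change):
    """Name the contingency from two observations.

    stimulus_change: "added" or "removed" (what happens to the environment)
    rate_change: "increases" or "decreases" (what happens to response rate)
    These labels are hypothetical; they only mirror the slide definitions.
    """
    sign = {"added": "positive", "removed": "negative"}[stimulus_change]
    kind = {"increases": "reinforcement", "decreases": "punishment"}[rate_change]
    return f"{sign} {kind}"


# The four examples from the contingency table.
examples = [
    ("make bed --> 10 cents", "added", "increases"),
    ("hit sister --> spanked", "added", "decreases"),
    ("make bed --> Mom stops nagging", "removed", "increases"),
    ("hit sister --> lose TV", "removed", "decreases"),
]

for description, stimulus_change, rate_change in examples:
    print(f"{description}: {classify_contingency(stimulus_change, rate_change)}")
```

Note that the classification depends only on what happens to the stimulus and to the response rate, not on whether the stimulus seems pleasant or unpleasant.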
More parameters:
• Generalization can occur:
  – Operant response may occur in situations similar to the one in which it was originally trained
  – Can learn to behave in many similar settings
• Discrimination can occur:
  – Operant response can be trained to very specific stimuli
  – Only exhibit response under specific situations
• Can use a cue to teach animal:
  – S+ or SD: contingency in place
  – S- or SΔ: contingency not in place
  – Thus: SD: R --> Sr

Schedules of Reinforcement:
• Continuous reinforcement:
  – Reinforce every single time the animal performs the response
  – Use for teaching the animal the contingency
  – Problem: Satiation
• Solution: only reinforce occasionally
  – Partial reinforcement
  – Can reinforce occasionally based on time
  – Can reinforce occasionally based on amount
  – Can make it predictable or unpredictable

Partial Reinforcement Schedules
• Fixed ratio: every nth response is reinforced
• Fixed interval: the first response after x amount of time is reinforced
• Variable ratio: on average, every nth response is reinforced
• Variable interval: the first response after an average of x amount of time is reinforced

More parameters
• Shaping
  – Final behavior must be within repertoire of organism
  – Break behaviors into smallest components
  – Chain up or down
• Secondary reinforcement
  – Stimuli can be paired with primary reinforcer
  – E.g. money
• Generalized reinforcers
  – Reinforcers reinforce many behaviors
  – E.g., money reinforces many, many behaviors
• Chaining:
  – Make a chain of behaviors
  – E.g., one behavior leads to another, and another, and another, making a chain of behavior.
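
The four partial reinforcement schedules described above can be sketched as small Python functions that decide, response by response, whether a reinforcer is delivered. This is only an illustration under stated assumptions: the function names are hypothetical, time is in arbitrary units, and the variable schedules draw their requirements from a uniform distribution whose mean is n (or x), which is one simple choice among many and not something the slides specify.

```python
import random


def fixed_ratio(n):
    """Reinforce every nth response (time is ignored)."""
    count = 0

    def respond(t):
        nonlocal count
        count += 1
        if count == n:
            count = 0
            return True
        return False

    return respond


def variable_ratio(n, rng):
    """Reinforce after a random number of responses averaging n.

    Assumption: the requirement is uniform on 1..2n-1, so its mean is n.
    """
    count = 0
    target = rng.randint(1, 2 * n - 1)

    def respond(t):
        nonlocal count, target
        count += 1
        if count >= target:
            count = 0
            target = rng.randint(1, 2 * n - 1)
            return True
        return False

    return respond


def fixed_interval(x):
    """Reinforce the first response made at least x time units after the last reinforcer."""
    last = 0.0

    def respond(t):
        nonlocal last
        if t - last >= x:
            last = t
            return True
        return False

    return respond


def variable_interval(x, rng):
    """Reinforce the first response after a random wait averaging x.

    Assumption: the wait is uniform on [0, 2x], so its mean is x.
    """
    last = 0.0
    wait = rng.uniform(0, 2 * x)

    def respond(t):
        nonlocal last, wait
        if t - last >= wait:
            last = t
            wait = rng.uniform(0, 2 * x)
            return True
        return False

    return respond


def run(schedule, response_times):
    """Return a list of booleans: was each response reinforced?"""
    return [schedule(t) for t in response_times]


# One response per time unit for 20 time units.
times = range(1, 21)
print("FR 5 reinforcers:", sum(run(fixed_ratio(5), times)))
print("FI 5 reinforcers:", sum(run(fixed_interval(5), times)))
```

Ratio schedules count responses and ignore the clock, while interval schedules watch the clock and ignore how many responses occur in between. That difference is the "based on amount" versus "based on time" distinction drawn in the slides.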