* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Hierarchical Neural Network for Text Based Learning

Indirect tests of memory wikipedia , lookup

Formulaic language wikipedia , lookup

Natural computing wikipedia , lookup

Network science wikipedia , lookup

Mirror neuron wikipedia , lookup

Holonomic brain theory wikipedia , lookup

Premovement neuronal activity wikipedia , lookup

Neuropsychopharmacology wikipedia , lookup

Misattribution of memory wikipedia , lookup

Stephen Grossberg wikipedia , lookup

Sparse distributed memory wikipedia , lookup

Neural modeling fields wikipedia , lookup

Social network (sociolinguistics) wikipedia , lookup

Optogenetics wikipedia , lookup

Central pattern generator wikipedia , lookup

Hierarchical temporal memory wikipedia , lookup

Catastrophic interference wikipedia , lookup

Convolutional neural network wikipedia , lookup

Nervous system network models wikipedia , lookup

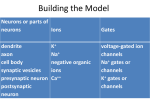

Hierarchical Neural Network for Text Based Learning Janusz A Starzyk, Basawaraj Ohio University, Athens, OH Rules Contd. Template design only ©copyright 2008 • Ohio University•Media Production • 740.597-2521 • Spring Quarter SLA IL X2 X1 … PN X3 where ILX is the input list of neuron X and OLA is the output list of neuron A X4 X1 X3 X2 X4 OL X i OL X i OLC C create a new node C. B ILB ILB ILA A Decrease in Activation v/s No. of Words Layer 3 Decrease in Processing Time v/s No. of Words 2 10 70 Tbatch / Treference Tdynamic / Treference Batch Dynamic Layer 4 Fig. 4 Neuron “A” with single output is merged with Neuron “B”, and “A” is removed. Layer 6 “A” “Y” “R” Fig. 1 LTM cell with minicolumns [4] In such networks, the interconnection scheme is naturally obtained through sequence learning and structural self-organization. No prior assumption about locality of connections or structure sparsity is made. Machine learns only inputs useful to its objectives, a process that is regulated by reinforcement signals and self organization. Rules for Self-Organization Few simple rules used for self organization X2 X3 X4 X1 X2 X3 X4 B B OL A OL A OLC C OLB OLB OLC C A B Fig. 2 If OL A OLB 3 create a new node C. ILX i ILX i ILC C 40 30 1 10 0 10 10 A A OLA OLA OLB 0 -1 0 1000 2000 3000 No. of Words 4000 5000 6000 10 0 1000 2000 3000 No. of Words 4000 5000 6000 Network Simplification Time v/s No. of Words 1400 Final Connection as percent of Original Connections v/s No. of Words 85 Fig. 5 Neuron “B” with single input is merged with Neuron “A”, and “B” is removed. Implementation Batch Mode: All words used for training are available at initiation. Network simplification & optimization is done by processing Total number of neurons is 23% higher than the reference (6000) Dynamic Mode: Words used for training are increased incrementally, - one word at a time Simplification & optimization is done by processing - one word at a time. Total number of neurons is 68% higher than the reference (6000) Batch Dynamic 80 75 70 65 60 55 50 Batch Dynamic 1200 1000 800 600 400 45 200 40 35 ILC A B C A OLC OL A OLB 50 20 - all the words in the training set. X1 Decrease in Processing Time 60 X X OLA ILB ILB ILC C Tests were run with dictionary up to 6000 words The percent reduction in number of interconnections increases (by up to 65 – 70%) as the number of words increase. The time required to process network activation for all the words used decreases as the number of words increases (reduction by a factor of 55, in batch mode; and 35, in dynamic mode; for 6000 words). Dynamic implementation takes longer compared to the batch implementation, mainly due to the additional overhead required for bookkeeping. The savings (connections and activations) obtained in case of dynamic implementation are less compared to the batch implementation Combination of both methods is advisable for continuous learning and self-organization. Network Simplification Time (sec) Proposed approach uses intermediate neurons to lower the computational cost Intermediate neurons decrease number of activations associated with higher level neurons This concept can be extended to associations of words Small number of rules for concurrent processing are used We can arrive at local optimum of network structure / performance The network topology is self-organizing through addition and removal of neurons and redirecting of neuron connections Neurons are described by their sets of input and output neurons Local optimization criteria are checked by searching the set SLA before the structure is updated when creating or merging the neurons. ILA ILA ILC C LTM cell (“ARRAY”) Hierarchical Network Network Simplification OLC A B B C B A hierarchical neural network structure for text learning is obtained through self-organization Similar representation for text based semantic network was used [2] An input layer takes in characters, then learns and activates words stored in memory Direct activation of words requires large computational cost for large dictionaries Extension to phrases, sentences or paragraphs would render such a network impractical due to associated computational cost Computer memory required would also be tremendously large A B A Fig. 3 If IL A ILB 3 Layer 2 Results and Conclusion ILC ILA ILB Decrease in Activation Traditional approach is to describe semantic network structure and/or probabilities of transition in associated Markov models Biological networks learn Different Neural Network structures, but common goal Simple and efficient to solve the given problem Sparsity is essential Size of the network and time to train important for large data sets Hierarchical structure of identical processing units was proposed [1] Layered organization and sparse structure is biologically inspired Neurons on different layers interact through trained links This leads to a sparse hierarchical structure. The higher layers represent more complex concepts. Basic nodes in this network are capable of differentiating input sequences. Sequence learning is prerequisite to building spatio-temporal memories. This is performed using laminar minicolumn [3] LTM cells (Fig.1) Final Connections as percent of Original Connections Introduction 0 0 1000 2000 3000 No. of Words 4000 5000 6000 0 1000 2000 3000 No. of Words 4000 5000 6000 References Mountcastle, V. B., et. al, Response Properties of Neurons of Cat’s Somatic Sensory Cortex to Peripheral Stimuli, J. Neurophysiology, vol. 20, 1957, pp. 374-407. Rogers, T. T., McClelland, J. L., Semantic Cognition text: A parallel Distributed Processing Approach, 2004, MIT Press . Grossberg, S., How does the cerebral cortex work? Learning, attention and grouping by the laminar circuits of visual cortex. Spatial Vision, 12, 163-186,1999. Starzyk J.A., Liu Y., Hierarchical spatio-temporal memory for machine learning based on laminar minicolumn structure, 11th ICCNS, Boston, 2007.