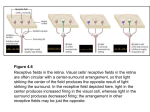

Design Description
Project: Blind Cortex

Course: CDT310, Software Engineering
Group no.: 1
Date: 27 April 2008
Proj. mgr.: Jenny Jutterström
E-mail: jjm05001

DISTRIBUTION
Steering group: Frank Lüders, Farhang Nemati, Yue Lu
Project group: Marcus Adolfsson, Sven Almgren, Mario Cardador Martin, Ulrika Hjälmgården, Johan Holmqvist, Jenny Jutterström, Tord Karlsson, Mikael Svensson, Jonatan Treijs
Others: Baran Cürüklü

CONTENTS
1 Introduction
1.1 Background
1.2 Definitions
1.3 Related Documents
2 Functional Description
2.1 Use Case Model
2.1.1 Actors
2.1.2 Use Cases
2.2 Run edge detection
2.2.1 Requirements Reference
2.2.2 Related Use Cases
3 External Interfaces
3.1 Graphical User Interface
4 Software Architecture
4.1 Overview and Rationale
4.2 System Decomposition
4.3 Hardware/Software Mapping
4.4 Persistent Data
4.5 Start-Up and Shut-Down
4.6 Error Handling
5 Detailed Software Design
5.1 GUI
5.1.1 Static Structure
5.1.2 Dynamic Behaviour
5.2 Input/Output Mapping
5.2.1 Input mapping
5.2.2 Output mapping
5.3 Main functionality
5.3.1 Static Structure
5.3.2 Dynamic Behaviour
5.4 Neural Network Topology
5.4.1 Retina
5.4.2 Primary Visual Cortex (V1)
5.5 Dynamic Behaviour

Course CD5360, Software Engineering, Group no. 1, Date: May 05, 2008

1 Introduction

1.1 Background
The Blind Cortex software development team has been contracted by Baran Cürüklü to develop a software tool for edge detection in images based on a plausible biological model.
The software should simulate the areas of the human visual system that are active in edge detection (the retina, LGN and primary visual cortex); see the Requirements Definition for additional details. The software will contribute to and be used for scientific research about the primary visual cortex, and it will also deepen the understanding of what can be accomplished with the NEST simulation tool.

This document gives an overview of the design of the software and specifies how it will be implemented. It should be distributed to the Blind Cortex steering group, which is in charge of the quality assurance of the project, and to the customer so that it can be used for future reference.

1.2 Definitions

| Term | Definition |
| --- | --- |
| Neural Net | A group of neurons connected to each other to form a net. |
| Retina | The first stage of visual processing. The retina contains the on/off and off/on cells. |
| V1 | The human primary visual cortex. |
| LGN | Lateral Geniculate Nucleus, a structure in the brain. |
| Neuron | A small processing unit found in the brain by the billions (about one hundred billion). Neurons are connected to each other through synapses to form a neural net. |
| Synapse | A connection between neurons. A synapse has a weight that decides the size of the current that is sent through it. In NEST a synapse can be either excitatory or inhibitory. |
| Spike | When a neuron sends a current, it is said to spike. |
| Gabor filter | “A Gabor filter is a linear filter whose impulse response is defined by a harmonic function multiplied by a Gaussian function.” [Wikipedia] |

1.3 Related Documents

| Document identity | Document title |
| --- | --- |
| RequirementsDefinition.doc | Requirements Definition |
| ClassDiagram.uml | Class Diagram and Sequence Diagram |
| UseCases.uml | The Use Case Diagrams |

2 Functional Description

2.1 Use Case Model
Figure 1: Use case for running edge detection.
2.1.1 Actors
User: Provides the system with an input image and parameters for the neural network.

2.1.2 Use Cases
Run edge detection, described in section 2.2.

2.2 Run edge detection
Initiator: User
Goal: System generates an image that represents the edges of the objects in the input image.
1. User browses and selects an image
2. System validates the image
3. User specifies neural network parameters
4. User instructs system to process image
5. System processes input image
6. System displays output image
Extensions:
2. Image file format or resolution is invalid
   a. Inform user
   b. Resume at 1
5. Processing fails or times out
   a. Inform user
   b. Scenario ends

2.2.1 Requirements Reference
R-UI-002: From the GUI, the user should be able to choose an image and load it into the simulation model for processing.
R-IO-005: JPEG image format.
R-IO-006: Bitmap image format.
R-IO-001: The software must be able to detect edges in still images.
R-IO-002: The software should output some representation of the dominant orientations in each spot of the visual field.
R-IO-003: The software should output an image showing the detected edges.
R-DC-001: The software must detect edges by simulating neurons in the human primary visual cortex.
R-UI-003: When the processing of an image is done, the user should be able to choose to view the result from the GUI.
R-UI-004: Width and height of simple cell receptive fields and number of spots should be adjustable.

2.2.2 Related Use Cases
This is the only use case for this system.

3 External Interfaces

3.1 Graphical User Interface
Figure 2: The GUI
This is a screenshot of the final graphical user interface.

Options:
The “Load Image” button allows the user to browse the file system and choose an image for processing.
The “Process Image” button starts the processing of the chosen image using the current parameter settings, and the progress bar shows how the processing is proceeding. The GUI also shows information about the current progress on the status bar.
The “Reset” button resets the preview and result image panels, and restores the parameters to their default values. A save prompt will appear if a processed image has not been saved.
The “Save” button allows the user to save the resulting image to a chosen location on the computer.
The “Cancel” button stops the program if it is processing. It is only available while an image is being processed.
A menu bar offers the same options as the buttons in the application.

Parameters:
You can set the number of spots in both X and Y with respect to the size of the image; the spots correspond to pixels.
The ganglion weight can be set as a floating-point value for finer accuracy.
You can also set the ganglion size of the cells; only odd numbers are accepted.
The number of orientations is defined as 180 degrees divided by the value you configure.
You can specify the weight, height and width of the simple cells.
The initial spike threshold can be specified before the simulation is run. Afterwards, the result can be recalculated by altering the value and pressing the “R” button, which updates the result image.
On the result image you can hover with the mouse over the lines to get the angle of the orientation.

The preview image shows the image the user has chosen for processing, without any modifications. The result image is shown once the processing is done. The process outputs three different images and a spike diagram, which can be toggled with the radio buttons labelled “Result”, “On/Off”, “Off/On” and “Diagram”.
The On/Off and Off/On images show the edges detected by the corresponding cells in the retina. The result image is the final result of the processing, showing the edges detected by the primary visual cortex.

4 Software Architecture

4.1 Overview and Rationale
The Main System: The main system is designed with a client-server architectural style, where the system uses the services of the neural net.
The Neural Net: The neural net is designed with a layered architecture, as is the human V1.

4.2 System Decomposition
The system is divided into four main parts: GUI, input mapping, output mapping and the neural net.
GUI: The GUI takes input from the user in the form of an image and a few parameters, and displays the final processed image.
Input Mapping: The input mapping subsystem maps the image provided by the GUI to a matrix of parameters that can be interpreted by the neural net.
Output Mapping: The output mapping subsystem takes the output from the neural net, interprets it and draws the resulting image.
Neural Net: The neural net subsystem is the main image processing unit. It takes a matrix provided by the input mapping subsystem and, through a series of biologically plausible sub-processes, determines the contrasts in the image and the dominating orientation at each point according to current beliefs about the biological processes in the human V1. The result of the process is passed on to the output mapping subsystem.
Figure 3: The system composition

4.3 Hardware/Software Mapping
The system uses two “off the shelf” systems:
NEST: NEST is a neural network simulation tool, used for the simulation of the neural nets in the brain.
PIL (Python Imaging Library): PIL is an imaging library for Python and is used to open images, convert them, grab pixel data and output our results.
4.4 Persistent Data
Our system has no persistent data.

4.5 Start-Up and Shut-Down
The GUI can start up and shut down the background processes if the user finds them taking too much time or needs to change something at run time.

4.6 Error Handling
Each part of the system has its own error handling. Checks are performed for image compatibility, output access and images that are too large. Errors during runtime will be reported to the user with IDs and some information.

5 Detailed Software Design

5.1 GUI

5.1.1 Static Structure
When the application starts, all buttons are disabled except the Load Image button and the Help information, because an image is required before anything can be processed.

5.1.2 Dynamic Behaviour
The buttons, parameter fields and menu bar dynamically change availability depending on the current state of the application.

5.2 Input/Output Mapping
IO mapping is not a separate system, but it is still a complex task of its own. As such, we have designed the system as if the mappings were external services. The neural net requires the input image to be mapped to specific entities, but the GUI is not responsible for the mapping, so the network does the mapping as an extra service. This could have been factored out into specific classes, but in this system it is inlined into the initialization and finalization of the processing methods.

5.2.1 Input mapping
For the network to understand the image it is to process, we have to translate it into an array of integers; each integer represents the brightness intensity of the pixel at that point (a value between 0 and 255). To feed the neural network with this information we use a device called a “dc generator”. A dc generator simulates a current in NEST, and the current can be set to any desired amplitude.
In our network we will create a dc generator for each pixel in the image and let each dc generator’s amplitude be influenced by the integer at that point in the intensity matrix. This approximates the retina and its input.

5.2.2 Output mapping
The output from the last part of the neural network (the V1) has to be interpreted to get the dominant orientation at each spot. The output will be in the form of 12 – 15 text files holding information about spike times, each text file representing a different orientation. The interpretation analyses the spike times and determines the dominant orientation in each spot: the orientation with the most spikes in a spot is taken as the dominant one. This information is then used to draw the final result image.

5.3 Main functionality
This section explains the general structure of the image processing parts of the system. V1 is discussed in greater depth in section 5.4.

5.3.1 Static Structure
In this design the Image object is a representation of the raw pixel data. ProcessRequest contains all the information that the Processor needs to complete the request. The Processor takes the incoming request, with its image, and builds the required neural network, including the input mapping. The simulation then commences and the result is passed back as a ProcessResponse, which contains all data the processor has produced that is relevant for the application.
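The mappings described in sections 5.2.1 and 5.2.2 can be illustrated with a small sketch in plain Python. It is not the project's implementation: the function names, the linear scaling of intensities to amplitudes, the `max_amplitude` parameter and the use of per-spot spike counts (rather than parsing spike-time files) are all assumptions made for illustration. The only facts taken from the design are that intensities lie between 0 and 255, that orientations are evenly spaced over 180 degrees, and that the orientation with the most spikes in a spot is the dominant one.

```python
def intensities_to_amplitudes(intensities, max_amplitude=1.0):
    """Scale 0-255 pixel intensities to dc generator amplitudes.

    Linear scaling and max_amplitude are assumptions of this sketch.
    """
    return [[max_amplitude * value / 255.0 for value in row]
            for row in intensities]


def orientation_angles(n_orientations):
    """Return evenly spaced orientation angles (degrees) covering 180 degrees."""
    step = 180.0 / n_orientations
    return [index * step for index in range(n_orientations)]


def dominant_orientation(spike_counts):
    """Return the angle whose orientation spiked most often in one spot.

    spike_counts holds one spike count per orientation. Returns None when
    nothing spiked; on a tie the first orientation with the highest count wins.
    """
    if not any(spike_counts):
        return None
    angles = orientation_angles(len(spike_counts))
    best = max(range(len(spike_counts)), key=lambda i: spike_counts[i])
    return angles[best]
```

For example, with four orientations (0, 45, 90 and 135 degrees) and spike counts `[0, 3, 1, 0]` for a spot, `dominant_orientation` returns `45.0`.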
Class diagram contents (text recovered from the figure; + marks public and - marks private members):

GUI
  +processor: BackgroundProcessor

ProcessRequest
  +image: Image
  +spikeFile: filepath
  +xSpots: int
  +ySpots: int
  +simpleCellWidth: int
  +simpleCellHeight: int
  +simpleCellWait: int
  +orientations: int
  +ganglionWeight: float
  +ganglionSize: int
  +spikeThreshold: int

<<interface>> ProgressListener
  +processProgress(progress: float, message: text)
  +processCompleted(pr: ProcessResponse)
  +processStarted()

ProcessResponse
  +outputMapping: OutputMapping
  +spikeDiagram: Image
  -onoff: Image
  -offon: Image
  -request: ProcessRequest
  -result: Image

BackgroundProcessor
  -processor: Processor
  -progressListener: ProgressListener
  +addListener(listener: ProgressListener)
  +process(pr: ProcessRequest)
  +getProcessor(): Processor
  +cancel(): void

Processor
  +cancel(): void
  +process(pr: ProcessRequest, pl: ProgressListener): ProcessResponse
  +getNewResult(): Image
  -getImageIntensities(): intArray

Retina
  +imgIntens: int2DArray
  +listener: ProgressListener
  -ganglionSize
  -ganglionWeight
  -currentProgress
  -progressLength
  -dc
  -on
  -off
  +retinaSetup()

V1
  +currentProgress: int
  +progressLength: int
  +spikeMatrices1
  +spikeMatrices2
  -simpleCellWidth
  -simpleCellHeight
  -xSpots
  -ySpots
  -onMatrix
  -offMatrix
  -orientations
  -subfieldWeight
  -listener: ProgressListener
  -orientationMatrices1
  -orientationMatrices2
  -orientationMatrix
  +getSpotCenters()
  +getRetinaLoops()
  -connectSpikeDetectors()
  -createSpikeMatrix()
  -connectToRetina()
  -getSubfieldWeights()
  -createNeuronMatrix()
  -rotatePoint()
  -pointIsInterior()
  -getPolygonInteriorPoints()
  -getPolygonExtremes()
  -loadOrientationMatrix()
  -getRetinaCells()

OutputMapping
  +retina: Retina
  -v1: V1
  -request: ProcessRequest
  -spotSize: int
  +getResponse(): ProcessResponse
  +getSpikeArrays()
  -calculateDominantOrientationImage()
  -calculateOnOffImage()
  -calculateOffOnImage()
  -calculateSpotSize(): int
  -calculateDominantOrientationForSpot(): float
  -drawDominantOrientation(): void

Diagram
  +drawDiagramMarkings()
  +plot()
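As a rough illustration of how the request, listener and background processing classes could fit together, the following sketch runs a processor on a worker thread and notifies a listener. The class, field and method names mirror the class diagram (hence the camelCase), but the bodies are assumptions: `FakeProcessor`, `RecordingListener` and the `wait()` helper are hypothetical stand-ins, only a subset of the `ProcessRequest` fields is shown, and cancellation is left out.

```python
import threading
from dataclasses import dataclass
from typing import Any


@dataclass
class ProcessRequest:
    """Subset of the request fields listed in the class diagram."""
    image: Any
    xSpots: int
    ySpots: int
    orientations: int
    ganglionWeight: float
    ganglionSize: int
    spikeThreshold: int


class BackgroundProcessor:
    """Runs a processor on a worker thread and notifies one listener."""

    def __init__(self, processor):
        self.processor = processor
        self.progressListener = None
        self._thread = None

    def addListener(self, listener):
        self.progressListener = listener

    def process(self, request):
        def run():
            self.progressListener.processStarted()
            response = self.processor.process(request, self.progressListener)
            self.progressListener.processCompleted(response)

        self._thread = threading.Thread(target=run)
        self._thread.start()

    def wait(self):
        """Hypothetical helper: block until the worker thread has finished."""
        if self._thread is not None:
            self._thread.join()


class FakeProcessor:
    """Stand-in for the real Processor: reports progress, builds no network."""

    def process(self, request, listener):
        listener.processProgress(0.5, "halfway")
        return "response for %dx%d spots" % (request.xSpots, request.ySpots)


class RecordingListener:
    """Stand-in for the GUI: records the callbacks it receives."""

    def __init__(self):
        self.events = []

    def processStarted(self):
        self.events.append("started")

    def processProgress(self, progress, message):
        self.events.append(("progress", progress, message))

    def processCompleted(self, response):
        self.events.append(("completed", response))


listener = RecordingListener()
background = BackgroundProcessor(FakeProcessor())
background.addListener(listener)
background.process(ProcessRequest(image=None, xSpots=4, ySpots=3,
                                  orientations=12, ganglionWeight=1.0,
                                  ganglionSize=3, spikeThreshold=1))
background.wait()
```

Keeping the listener as the only channel back to the caller means the GUI thread never has to poll the simulation; in a real GUI toolkit the callbacks would additionally need to be marshalled onto the GUI thread.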
BackgroundProcessor allows the application to run the simulation in the background, and is responsible for informing the application when the processing is completed.
Figure 4: The system composition

5.3.2 Dynamic Behaviour
Sequence diagram participants: GUI, ProcessRequest, Processor, BackgroundProcessor, ProgressListener.
Messages, in order:
1. loadImage()
2. set x()
3. process()
4. process()
5. processProgress()
6. processCompleted()
7. showImage()
Figure 5: The system composition

5.4 Neural Network Topology
The neural network can be divided into two phases. The first part, the retina, acts as a Gabor filter and finds contrast edges in the image. The second part is the primary visual cortex, also called V1; it uses the result from the retina and finds the dominating orientation at each spot.

5.4.1 Retina
For each pixel in the image a static current is simulated in the neural net by dc generators. The current at each point is influenced by the brightness intensity of the pixel at that point, as provided by the input mapping subsystem. This matrix of dc generators is then connected to the retina.

The retina consists of two matrices of neurons, one with on/off cells and one with off/on cells. An on/off (on centre / off surround) cell has one excitatory connection from the centre of its receptive field and eight inhibitory connections from the surrounding points. An off/on cell has the same connections with the signs reversed: its centre connection is inhibitory and its surround connections are excitatory. Setting up the connections in this way is an effective way to find edges in an image, and depending on how strong the connections are, different degrees of edge intensity can be detected.
Figure 6: The connections from 9 DC generators to an on-centre / off-surround retina cell.
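The centre/surround wiring can be approximated without a spiking simulator to see why it responds to edges. The sketch below is a simplification and not the NEST network itself: it sums weighted intensities over a 3x3 neighbourhood and compares the result with a threshold. The weights (8 for the centre, -1 for each of the eight surround points, so that a uniform region cancels to zero), the threshold and the function names are assumptions chosen for illustration.

```python
def center_surround_input(intensities, row, col,
                          center_weight=8.0, surround_weight=-1.0):
    """Net input to an on/off cell centred on (row, col).

    One excitatory centre connection plus eight inhibitory surround
    connections; the default weights are assumptions of this sketch.
    """
    total = center_weight * intensities[row][col]
    for d_row in (-1, 0, 1):
        for d_col in (-1, 0, 1):
            if d_row == 0 and d_col == 0:
                continue  # the centre was already counted
            total += surround_weight * intensities[row + d_row][col + d_col]
    return total


def retina_responses(intensities, threshold=0.0):
    """Return (on_off, off_on) matrices of booleans for interior pixels.

    An off/on cell receives the same connections with reversed signs, so
    its input is the negated on/off input. Border pixels stay False.
    """
    rows, cols = len(intensities), len(intensities[0])
    on_off = [[False] * cols for _ in range(rows)]
    off_on = [[False] * cols for _ in range(rows)]
    for row in range(1, rows - 1):
        for col in range(1, cols - 1):
            drive = center_surround_input(intensities, row, col)
            on_off[row][col] = drive > threshold
            off_on[row][col] = -drive > threshold
    return on_off, off_on
```

On an image with a vertical dark-to-bright edge, for example three rows of `[0, 0, 10, 10]`, the on/off cell on the bright side of the edge and the off/on cell on the dark side exceed the threshold, while cells in a uniform region receive zero net input.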
The retina on/off and off/on cells are then connected to the cells in the primary visual cortex.

5.4.2 Primary Visual Cortex (V1)
The objective of the V1 in our model is to find the dominating orientation at each point. To achieve this, our V1 simulates simple cells, which can detect the dominating orientation because of their connections. A simple cell receptive field is a rectangle divided into two parts: an on-part with connections from the on/off matrix and an off-part with connections from the off/on matrix.
Figure 7: The connections of a V1 simple cell with horizontal orientation (0 degrees)

Because we need to find the dominating orientation in each spot, we need one V1 matrix for each orientation we want to examine. The difference between one orientation and the next is 12 – 15 degrees, which means that we will have 12 – 15 matrices (180 / 15 = 12 and 180 / 12 = 15).

5.5 Dynamic Behaviour
The image values matrix is loaded into the dc generators, which output a current. The retina on/off and off/on cells spike if the excitatory and inhibitory signals make them pass their threshold. The V1 simple cells spike if the signals sent forward to them make them pass their threshold. If a neuron spikes in the V1, the dominating orientation in that spot is the orientation of the neuron's matrix. If more than one orientation spikes in the same spot, a calculation is made to find out which is the most dominant.

REVISION

| Rev. Ind. | Page (P) / Chapt. (C) | Description | Date | Initials |
| --- | --- | --- | --- | --- |
| 001 | P4,5 C2 | Added use case definitions | 20080413 | MA |
| 002 | P5, 6, 7. C3, 4, 5 | Described the decomposition into subsystems and formatted the document. | 20080413 | JT |
| 003 | P4,5,6 C2 | Updated the use case definitions: Added system parameters to action list, changed name from "Generate output" to "Run edge detection" and updated the use case diagram. | 20080417 | MA |
| 004 | P4, C1 | Added background section | 20080421 | UH, JJ |
| 005 | P4, C1 | Added related document: Requirements Definition | 20080421 | UH, JJ |
| 006 | P4, C1 | Added LGN definition | 20080421 | UH, JJ |
| 007 | P9, 10, 11, 12, C6 | Added the Network Topology Section and described the network layout and function. | 20080424 | JT |
| 008 | P7, C4 | Changed some small things in the overview architecture. | 20080424 | JT |
| 009 | P10, 11 C6 | Changed some minor formal things. | 20080425 | JH |
| 010 | P4 C1 | Added some related documents. | 20080425 | JH |
| 011 | P4 | Added word definitions | 20080425 | JT |
| 012 | P3 | Added first draft of the GUI | 20080425 | MS, MC |
| 013 | P9 | Removed unnecessary chapters (4.5 and 4.6) | 20080427 | SA |
| 014 | C5 | Added text bodies | 20080427 | SA, MS |
| 015 | C5 | Changed some architecture and subsystem descriptions, and some minor things. | 20080429 | JT, UH |
| 016 | P6,7 C2 | Updated the use cases | 20080430 | MA |
| 017 | P5,7,9,11,12,13,14 C2,3,4,5 | Added figure descriptions | 20080505 | JH |
| 018 | P5,6 C2 | Updated use cases | 20080516 | MA |
| 019 | C3, P1,3.1,3.2 C5 | Updated GUI and design regarding GUI | 20080527 | MS, MC |