* Your assessment is very important for improving the workof artificial intelligence, which forms the content of this project

Download My slides - people.vcu.edu

Survey

Document related concepts

Genome evolution wikipedia , lookup

Gene expression programming wikipedia , lookup

Quantitative trait locus wikipedia , lookup

Genomic imprinting wikipedia , lookup

Artificial gene synthesis wikipedia , lookup

Genome (book) wikipedia , lookup

Microevolution wikipedia , lookup

Ridge (biology) wikipedia , lookup

Epigenetics of human development wikipedia , lookup

Species distribution wikipedia , lookup

Minimal genome wikipedia , lookup

Biology and consumer behaviour wikipedia , lookup

Transcript

Significance Testing

of Microarray Data

BIOS 691 Fall 2008

Mark Reimers

Dept. Biostatistics

Outline

Multiple Testing

Family wide error rates

False discovery rates

Application to microarray data

Practical issues – correlated errors

Computing FDR by permutation procedures

Conditioning t-scores

Reality Check

Goals of Testing

To identify genes most likely to be changed or

affected

To prioritize candidates for focused follow-up

studies

To characterize functional changes

consequent on changes in gene expression

So in practice we don’t need to be exact…

but we do need to be principled!

Multiple comparisons

Suppose no genes really changed

10,000 genes on a chip

Each gene has a 5% chance of exceeding

the threshold at a p-value of .05

(as if random samples from same population)

Type I error

The test statistics for 500 genes should

exceed .05 threshold ‘by chance’

Distributions of p-values

Random Data

Real Microarray Data

When Might it not be Uniform?

When actual distribution of test statistic

departs from reference distribution

Outliers in data may give rise to more

extremes

More small p-values

Approximate tests – often conservative

P-values are larger than occurrence

probability

Distribution shifted right

Distribution of Numbers of p-values

Each bin of width w contains a random

number of p-values

The expected number is Nw

Each p-value has a probability w of lying in

the bin

The distribution follows the Poisson law

SD ~ (mean)1/2

Characterizing False Positives

Family-Wide Error Rate (FWE)

probability of at least one false positive

arising from the selection procedure

Strong control of FWE:

Bound on FWE independent of number changed

False Discovery Rate:

Proportion of false positives arising from

selection procedure

ESTIMATE ONLY!

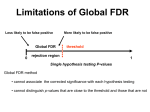

General Issues for Multiple Comparisons

FWER vs FDR

FDR: E(FDR) or P(FDR < Q)?

Actual (random) FDR has a long-tailed distribution

But E(FDR) methods are simpler and cleaner

Correlations

Are you willing to tolerate some false positives

Many procedures surprise you when tests are

correlated

Always check assumptions of procedure!

Models for Null distribution: a matter of art

Strong vs weak control

Will the procedure work for any combination of true and

false null hypotheses?

FWER - Setting a Higher Threshold

Suppose want to test N independent genes at

overall level a

What level a* should each gene be tested at?

Want to ensure

P( any false positive) < a

i.e. 1 – a = P( all true negatives )

= P( any null accepted )N

= ( 1 – a* ) N

Solve for a* = 1 – (1 – a )1/N

Expectation Argument

P( any false positive )

<= E( # false positives )

= N E( any false positive)

= N a*

So we set a* = a / N

NB. No assumptions about joint

distribution

‘Corrected’ p-Values for FWE

Sidak (exact correction for independent

tests)

pi* = 1 – (1 – pi)N if all pi are independent

pi* @ 1 – (1 – Npi + …) gives Bonferroni

Bonferroni correction

pi* = Npi, if Npi < 1, otherwise 1

Expectation argument

Still conservative if genes are co-regulated

(correlated)

Both are too conservative for array use!

Traditional Multiple Comparisons Methods

Key idea: sequential testing

Order p-values: p(1), p(2), …

If p(1) significant then test p(2) , etc …

Mostly improvements on this simple idea

Complicated proofs

Holm’s FWER Procedure

Order p-values: p(1), …, p(N)

If p(1) < a/N, reject H(1) , then…

If p(2) < a/(N-1), reject H(2) , then…

Let k be the largest n such that p(n) < a/n, for

all n <= k

Reject p(1) … p(k)

Then P( at least one false positive) < a

Proof doesn’t depend on distributions

Hochberg’s FWER Procedure

Find largest k: p(k) < a / (N – k + 1 )

Then select genes (1) to (k)

More powerful than Holm’s procedure

But … requires assumptions:

independence or ‘positive dependence’

When one type I error, could have many

Holm & Hochberg Adjusted P

Order p-values pr1 , pr2, …, prM

Holm (1979) step-down adjusted p-values

p(j)* = maxk = 1 to j {min ((M-k+1)p(k), 1)}

Adjust out-of-order p-values in relation to

those lower (‘step-down’)

Hochberg (1988) step-up adjusted p-values

p(j)* = mink = j to M {min ((M-k+1)p(k), 1) }

Adjust out-of-order p-values in relation to

those higher (‘step-up’)

Simes’ Lemma

Suppose we order the p-values from N

independent tests using random data:

p(1), p(2), …, p(N)

Pick a target threshold a

P( p(1) < a /N || p(2) < 2 a /N || p(3) < 3 a /N || … ) = a

a/2

P = P( min(p1,p2) < a/2) +

P(min(p1,p2) > a/2 & max(p1,p2) < a)

Area = (a/2 + a/2 – a2/4 )

+ a2/4

p2

a/2

p1

Simes’ Test

Pick a target threshold a

Order the p-values : p(1), p(2), …, p(N)

If for any k, p(k) < k a /N

Select the corresponding genes (1) to (k)

Test valid against complete Null

hypothesis, if tests are independent or

‘positively dependent’

Doesn’t give strong control

Somewhat non-conservative if negative

correlations among tests

Correlated Tests and FWER

Typically tests are correlated

Extreme case: all tests highly correlated

One test is proxy for all

‘Corrected’ p-values are the same as

‘uncorrected’

Intermediate case: some correlation

Usually probability of obtaining a p-value by

chance is in between Sidak and uncorrected

values

Symptoms of Correlated Tests

P-value Histograms

Distributions of numbers of p-values

below threshold

10,000 genes;

10,000 random drawings

L: Uncorrelated R: Highly correlated

Permutation Tests

We don’t know the true distribution of gene

expression measures within groups

We simulate the distribution of samples

drawn from the same group by pooling the

two groups, and selecting randomly two

groups of the same size we are testing.

Need at least 5 in each group to do this!

Permutation Tests – How To

Suppose samples 1,2,…,10 are in group 1

and samples 11 – 20 are from group 2

Permute 1,2,…,20: say

13,4,7,20,9,11,17,3,8,19,2,5,16,14,6,18,12,15,10

Construct t-scores for each gene based on

these groups

Repeat many times to obtain Null

distribution of t-scores

This will be a t-distribution original

distribution has no outliers

Critiques of Permutations

Variances of permuted values for really

separate groups are inflated

Permuted t -scores for many genes may

be lower than from random samples from

the same population

Therefore somewhat too conservative pvalues for some genes

Multivariate Permutation Tests

Want a null distribution with same

correlation structure as given data but no

real differences between groups

Permute group labels among samples

redo tests with pseudo-groups

repeat ad infinitum (10,000 times)

Westfall-Young Approach

Procedure analogous to Holm, except that

at each stage, they compare the smallest

p-value to the smallest p-value from an

empirical null distribution of the

hypotheses being tested.

How often is smallest p-value less than a

given threshold if tests are correlated to

the same extent and all Nulls are true?

Construct permuted samples: n = 1,…,N

Determine p-values pj[n] for each sample n

Westfall-Young Approach – 2

Construct permuted samples: n = 1,…,N

Determine p-values pj[n] for each sample n

p

I (min

=

j

p

p(1) )

N

To correct the i-th smallest p-value, drop those

hypotheses already rejected (at a smaller level)

p

corrected

(1)

[n]

j

corrected

(i )

=

I (min

j(1)...( i 1)

p[jn ] p(i ) )

N

The i-th smallest p-value cannot be smaller than

any previous p-values

Critiques of MV Permutation as Null

Correlation structure of 2nd order statistics

is not equivalent

E.g. we sometimes want to find significant

correlations among genes

The permutation distribution of correlations

is NOT an adequate Null distribution –

why?

Use a bootstrap algorithm on centered

variables

see papers by Dudoit and van der Laan

False Discovery Rate

In genomic problems a few false positives

are often acceptable.

Want to trade-off power .vs. false positives

Could control:

Expected number of false positives

Expected proportion of false positives

What to do with E(V/R) when R is 0?

Actual proportion of false positives

Truth vs. Decision

Decision # not rejected # rejected

Truth

# true null H U

V (F +)

totals

# non-true H

T (F -)

S

m1

totals

m-R

R

m

m0

Catalog of Type I Error Rates

Per-family Error Rate

PFER = E(V)

Per-comparison Error Rate

PCER = E(V)/m

Family-wise Error Rate

FWER = p(V ≥ 1)

False Discovery Rate

i) FDR = E(Q), where

Q = V/R if R > 0; Q = 0 if R = 0

(Benjamini-Hochberg)

ii) FDR = E( V/R | R > 0) (Storey)

Benjamini-Hochberg

Can’t know what FDR is for a particular sample

B-H suggest procedure controlling average FDR

Order the p-values : p(1), p(2), …, p(N)

If any p(k) < k a /N

Then select genes (1) to (k)

q-value: smallest FDR at which the gene

becomes ‘significant’

NB: acceptable FDR may be much larger than

acceptable p-value (e.g. 0.10 )

Argument for B-H Method

If no true changes (all null H’s hold)

Q=1

condition of Simes’ lemma holds

Therefore probability < a

Otherwise Q = 0

If all true changes (no null H’s hold)

Q=0<a

Build argument by induction from both

ends and up from N = 2

Practical Issues

Actual proportion of false positives varies

from data set to data set

Mean FDR could be low but could be high

in your data set

Distributions of numbers of p-values

below threshold

10,000 genes;

10,000 random drawings

L: Uncorrelated R: Highly correlated

Controlling the Number of FP’s

B-H procedure only guarantees long-term

average value of E(V/R|R>0)P(R>0)

Korn’s method gives confidence bound on

individual case

can be quite badly wrong in individual cases

also addresses issue of correlations

Builds on Westfall-Young approach to

control tail probability of proportion of false

positives (TPPFP)

Korn’s Procedure

To guarantee no more than k false positives

Construct null distribution as in WestfallYoung

Order p-values: p(1), …,p(M)

Reject H(1), …,H(k)

For next p-values

Compare p-value to full null

N.B. This gives strong control

Continue until one H not rejected

Issues with Korn’s Procedure

Valid if select k first then follow through

procedure, not if try a number of different k

and pick the one with most genes – as

people actually proceed

Only approximate FDR

Computationally intensive

Available in BRB

Storey’s pFDR

Storey argues that E(Q | V > 0 ) is what

most people think FDR means

Sometimes quite different from B-H FDR

Especially if number of rejected nulls needs to

be quite small in order to get acceptable FDR

E.G. if P(V=0) = 1/2 , then pFDR = 2*FDR

A Bayesian Interpretation

Suppose nature generates true nulls with

probability p0 and false nulls with P = p1

Then pFDR = P( H true | test statistic)

Question: We rarely have an accurate

prior idea about p0

Storey suggests estimating it

Storey’s Procedure

1.

Estimate proportion of true Nulls (p0)

2.

3.

4.

5.

6.

Count number of p-values greater than ½

Fix rejection region (or try several)

Estimate probability of p-value for true

Null in rejection region

Form ratio: 2*{# p > ½} p0 / {# p < p0}

Adjust for small numbers (# p < p0)

Bootstrap ratio to obtain confidence

interval for pFDR

Practical Issues

Storey’s procedure may give reasonable

estimates for p0 ~ O(1), but can’t

distinguish values of p1 that are very small

How much does the significance test

depend on the choice of p0?

Such differences may have a big impact

on posterior probabilities

Moderated Tests

Many false positives with t-test arise

because of under-estimate of variance

Most gene variances are comparable

(but not equal)

Can we use ‘pooled’ information about all

genes to help test each?

Stein’s Lemma

Whenever you have multiple variables with

comparable distributions, you can make a

more efficient joint estimator by ‘shrinking’

the individual estimates toward the

common mean

Can formalize this using Bayesian analysis

Suppose true values come from prior distrib.

Mean of all parameter estimates is a good

estimate of prior mean

SAM

Statistical Analysis of Microarrays

Uses a ‘fudge factor’ to shrink individual

SD estimates toward a common value

di = (x1,i – x2,i / ( si + s0)

Patented!

limma

Empirical Bayes formalism

Depends on prior estimate of number of

genes changed

Bioconductor’s approach – free!

limma Distribution Models

Sample statistics:

Priors

Coefficients:

Variances:

Moderated T Statistic

Moderated variance estimate:

Moderated t

Moderated t has t distribution on d0+dg df.