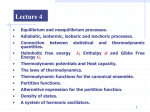

Theoretical Statistical Physics

Quantum entanglement

Renormalization

Franck–Condon principle

Wave–particle duality

Matter wave

Symmetry in quantum mechanics

Lattice Boltzmann methods

Ensemble interpretation

Path integral formulation

Quantum electrodynamics

Elementary particle

Ferromagnetism

Particle in a box

Quantum state

Density matrix

Canonical quantization

Identical particles

Relativistic quantum mechanics

Renormalization group

Ising model

Probability amplitude

Theoretical and experimental justification for the Schrödinger equation