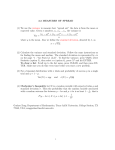

Ec 385
Suggested problems from Chapters 11 and 12

Chapter 11:

11.1 Don't estimate these models; just describe the proper transformation for generalized least squares (correcting for heteroskedasticity).

11.2 For part (e): I want you to correct for heteroskedasticity using two different forms for \(\operatorname{var}(e_t)\):
i) \(\operatorname{var}(e_t) = \sigma^2 x_t\)
ii) \(\operatorname{var}(e_t) = \sigma^2 x_t^2\)

11.10 (a and b only)

Answers

11.1 For each specification of \(\operatorname{var}(e_t)\), the transformation is as follows.

\(\operatorname{var}(e_t) = \sigma^2 \sqrt{x_t}\): Divide the model by \(x_t^{1/4}\). Independent variables: \(1/x_t^{1/4}\) and \(x_t/x_t^{1/4}\). Why? We divide by the standard deviation, which is the square root of the variance: \(\sigma x_t^{1/4}\). We can ignore the \(\sigma\) term in all of the models since it doesn't vary over observations.

\(\operatorname{var}(e_t) = \sigma^2 x_t\): Divide the model by \(x_t^{1/2}\). Independent variables: \(1/x_t^{1/2}\) and \(x_t/x_t^{1/2}\). This is just like the one we did in class. The standard deviation is \(\sigma \sqrt{x_t}\).

\(\operatorname{var}(e_t) = \sigma^2 x_t^2\): Divide the model by \(x_t\). Independent variables: \(1/x_t\) plus an intercept. Here, the standard deviation is \(\sigma x_t\), so we divide by \(x_t\).

\(\operatorname{var}(e_t) = \sigma^2 \ln(x_t)\): Divide the model by \((\ln x_t)^{1/2}\). Here the standard deviation is \(\sigma \sqrt{\ln(x_t)}\). Independent variables: \(1/(\ln x_t)^{1/2}\) and \(x_t/(\ln x_t)^{1/2}\).

11.2 Code and SAS output appear below.

(a) Countries with high per capita income can decide whether to spend larger amounts on education than their poorer neighbours, or to spend more of their larger income on other things. They are likely to have more discretion with respect to where public monies are spent. On the other hand, countries with low per capita income may regard a particular level of education spending as essential, meaning that they have less scope for deviating from a mean function. These differences can be captured by a model with heteroskedasticity. Remember that heteroskedasticity is more common in cross-section data.

(b) The least squares estimated function is

\[ \hat{y}_t = -0.1246 + 0.07317\, x_t, \qquad R^2 = 0.862 \]

with standard errors of 0.0485 for the intercept and 0.00518 for the slope. This function and the corresponding residuals appear in Figure 11.1. The absolute magnitude of the errors does tend to increase as x increases, suggesting the existence of heteroskedasticity.
[Figure 11.1: Estimated Function for Education Expenditure. Plot of \(y_t\) against \(x_t\) with the fitted line \(y = -0.1246 + 0.0732x\).]

(c) Since it is suspected that, if heteroskedasticity exists, the variance is related to \(x_t\), we begin by ordering the observations according to the magnitude of \(x_t\). Then, splitting the sample into two equal subsets of 17 observations each and applying least squares to each subset, we obtain \(\hat{\sigma}_1^2 = 0.0081608\) and \(\hat{\sigma}_2^2 = 0.029127\), leading to a Goldfeld-Quandt statistic of

\[ GQ = \frac{\hat{\sigma}_2^2}{\hat{\sigma}_1^2} = \frac{0.029127}{0.008161} = 3.569 \]

The critical value from an F-distribution with (15, 15) degrees of freedom and a 5% significance level is \(F_c = 2.40\). Since 3.569 > 2.40, we reject the null hypothesis of homoskedasticity and conclude that the error variance is directly related to per capita income \(x_t\).

(e) Generalized least squares estimation under the assumption \(\operatorname{var}(e_t) = \sigma^2 x_t\) yields

\[ \hat{y}_t = -0.0929 + 0.06932\, x_t \]

with standard errors of 0.0289 for the intercept and 0.00441 for the slope. (Note: I have expressed these results in the model's original form although it was estimated with no intercept and two independent variables: the reciprocal of the square root of x, and x over the square root of x.) The estimated response of per capita education expenditure to per capita income has declined slightly relative to the least squares estimate. The associated 95% confidence interval for the slope is (0.0603, 0.0783). This interval is narrower than both of the intervals computed from the least squares estimates. The comparison with the White-calculated interval suggests that generalized least squares is more efficient; a comparison with the conventional least squares interval is not really valid because the standard errors used to compute that interval are not valid. See below for the case where \(\operatorname{var}(e_t) = \sigma^2 x_t^2\).
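As a rough check on the interval comparison in part (e), the three 95% intervals for the slope can be recomputed from figures reported in the SAS output. This is only a sketch: it assumes every interval uses the same critical value \(t_c = 2.03693\) (from `tinv(.975,32)`), and that the White standard error is the square root of 0.0000363146, about 0.006026. Only the GLS interval is quoted in the answer; the other two are worked out here for illustration.

\[
\begin{aligned}
\text{GLS:}\quad & 0.06932 \pm 2.03693 \times 0.00441 = 0.06932 \pm 0.00898 \;\Rightarrow\; (0.0603,\ 0.0783) \\
\text{Least squares:}\quad & 0.07317 \pm 2.03693 \times 0.00518 = 0.07317 \pm 0.01055 \;\Rightarrow\; (0.0626,\ 0.0837) \\
\text{White:}\quad & 0.07317 \pm 2.03693 \times 0.006026 = 0.07317 \pm 0.01227 \;\Rightarrow\; (0.0609,\ 0.0854)
\end{aligned}
\]

Under these assumptions the GLS interval (width about 0.018) is the narrowest, followed by the conventional least squares interval (about 0.021) and the White interval (about 0.025).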
The differences in how the two heteroskedasticity corrections are carried out, and in how their results are interpreted, are important.

Least squares results

    The REG Procedure
    Model: MODEL1
    Dependent Variable: y

    Analysis of Variance
                                Sum of        Mean
    Source             DF      Squares      Square    F Value    Pr > F
    Model               1      3.68386     3.68386     199.59    <.00
    Error              32      0.59063     0.01846
    Corrected Total    33      4.27449

    Root MSE           0.13586    R-Square    0.8618
    Dependent Mean     0.47674    Adj R-Sq    0.8575
    Coeff Var         28.49753

    Parameter Estimates
                       Parameter    Standard
    Variable     DF     Estimate       Error    t Value    Pr > |t|
    Intercept     1     -0.12457     0.04852      -2.57      0.0151
    x             1      0.07317     0.00518      14.13      <.0001

****************************************************************************

This is part (d). The White standard error for b2 is the square root of 0.0000363146, which is about 0.006. This is larger than the 0.00518 value reported by least squares.

    The REG Procedure
    Model: MODEL1
    Dependent Variable: y

    Consistent Covariance of Estimates
    Variable         Intercept                x
    Intercept     0.0015372135     -0.000211654
    x             -0.000211654     0.0000363146

****************************************************************************

This regression gets you the numerator for the GQ statistic.

    The REG Procedure
    Model: MODEL1
    Dependent Variable: y

    Analysis of Variance
                                Sum of        Mean
    Source             DF      Squares      Square    F Value    Pr > F
    Model               1      0.42220     0.42220      14.50    0.00
    Error              15      0.43690     0.02913
    Corrected Total    16      0.85910

    Root MSE           0.17067    R-Square    0.4914
    Dependent Mean     0.78115    Adj R-Sq    0.4575
    Coeff Var         21.84803

    Parameter Estimates
                       Parameter    Standard
    Variable     DF     Estimate       Error    t Value    Pr > |t|
    Intercept     1     -0.14087     0.24569      -0.57      0.5749
    x             1      0.07516     0.01974       3.81      0.0017

This regression gets you the denominator for the GQ statistic.

    The REG Procedure
    Model: MODEL1
    Dependent Variable: y

    Analysis of Variance
                                Sum of        Mean
    Source             DF      Squares      Square    F Value    Pr > F
    Model               1      0.14225     0.14225      17.43    0.00
    Error              15      0.12241     0.00816
    Corrected Total    16      0.26466

    Root MSE           0.09034    R-Square    0.5375
    Dependent Mean     0.17232    Adj R-Sq    0.5066
    Coeff Var         52.42382

    Parameter Estimates
                       Parameter    Standard
    Variable     DF     Estimate       Error    t Value    Pr > |t|
    Intercept     1     -0.03807     0.05495      -0.69      0.4990
    x             1      0.05047     0.01209       4.17      0.0008

****************************************************************************

These are the critical values.

    The SAS System

    Obs         fc         tc
      1    2.40345    2.03693

This regression corrects for heteroskedasticity of the form \(\operatorname{var}(e_t) = \sigma^2 x_t\).

    The REG Procedure
    Model: MODEL1
    Dependent Variable: ystar

    NOTE: No intercept in model. R-Square is redefined.

    Analysis of Variance
                                  Sum of        Mean
    Source               DF      Squares      Square    F Value    Pr > F
    Model                 2      0.96083     0.48041     242.45    <.00
    Error                32      0.06341     0.00198
    Uncorrected Total    34      1.02423

    Root MSE           0.04451    R-Square    0.9381
    Dependent Mean     0.15116    Adj R-Sq    0.9342
    Coeff Var         29.44875

    Parameter Estimates
                       Parameter    Standard
    Variable     DF     Estimate       Error    t Value    Pr > |t|
    x1star        1     -0.09292     0.02890      -3.21      0.0030
    x2star        1      0.06932     0.00441      15.71      <.0001

We predict that if GDP per capita increases by $1.00, public expenditures on education per capita will increase by $0.069.

****************************************************************************

This regression corrects for heteroskedasticity of the form \(\operatorname{var}(e_t) = \sigma^2 x_t^2\).

    The REG Procedure
    Model: MODEL1
    Dependent Variable: ystar

    Analysis of Variance
                                Sum of          Mean
    Source             DF      Squares        Square    F Value    Pr > F
    Model               1      0.00349       0.00349      12.69    0.00
    Error              32      0.00880    0.00027504
    Corrected Total    33      0.01229

    Root MSE           0.01658    R-Square    0.2840
    Dependent Mean     0.05153    Adj R-Sq    0.2616
    Coeff Var         32.18259

    Parameter Estimates
                       Parameter    Standard
    Variable     DF     Estimate       Error    t Value    Pr > |t|
    Intercept     1      0.06443     0.00460      13.99      <.0001
    xstar         1     -0.06739     0.01892      -3.56      0.0012

We predict that if GDP per capita increases by $1.00, public expenditures on education per capita will increase by $0.064, because the intercept in this transformed model is actually the slope coefficient in the original model.
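The reason the intercept plays the role of the slope can be seen by writing out the transformation. The derivation below is a sketch that assumes the original model is \(y_t = \beta_1 + \beta_2 x_t + e_t\); the symbols \(\beta_1\) and \(\beta_2\) are notation introduced here, not taken from the SAS output.

\[
\begin{aligned}
y_t &= \beta_1 + \beta_2 x_t + e_t, \qquad \operatorname{var}(e_t) = \sigma^2 x_t^2 \\
\frac{y_t}{x_t} &= \beta_1 \frac{1}{x_t} + \beta_2 + \frac{e_t}{x_t}, \qquad \operatorname{var}\!\left(\frac{e_t}{x_t}\right) = \frac{\sigma^2 x_t^2}{x_t^2} = \sigma^2
\end{aligned}
\]

So in the regression of ystar on xstar, the intercept (0.06443) estimates \(\beta_2\) and the coefficient on xstar (-0.06739) estimates \(\beta_1\). By contrast, dividing by \(\sqrt{x_t}\) for the case \(\operatorname{var}(e_t) = \sigma^2 x_t\) gives

\[
\frac{y_t}{\sqrt{x_t}} = \beta_1 \frac{1}{\sqrt{x_t}} + \beta_2 \frac{x_t}{\sqrt{x_t}} + \frac{e_t}{\sqrt{x_t}},
\]

which has no constant term. That is why that regression is run with no intercept, and why the coefficient on x2star (0.06932) is read as the slope.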
Here is the code that generated the results:

    data pubexp;
      infile 'A:pubexp.dat' firstobs=2;
      input ee gdp pop;
      y = ee/pop;
      x = gdp/pop;
    * sort so the observations with the largest x come first;
    proc sort;
      by descending x;
    proc reg;
      model y = x / acov;
      output out=pubout p=yhat r=ehat;
    symbol1 i=join c=green v=circle;
    proc gplot;
      plot y*x = '*' yhat*x = 1 / overlay;
      plot ehat*x;
    * create data set;
    * first 17 observations (largest x), numerator of GQ;
    data one;
      set pubexp;
      if _n_ <= 17;
    proc reg;
      model y = x;
    * last 17 observations (smallest x), denominator of GQ;
    data two;
      set pubexp;
      if _n_ >= 18;
    proc reg;
      model y = x;
    * critical values for tests;
    data;
      fc = finv(.95,15,15);
      tc = tinv(.975,32);
    proc print;
    * PART E GLS via weighted least squares;
    data two;
      set pubexp;
      ystar = y/sqrt(x);
      x1star = 1/sqrt(x);
      x2star = x/sqrt(x);
    * this code corrects for hetero assuming var(et) = sigmasq*xt;
    proc reg;
      model ystar = x1star x2star / noint;
    run;
    data three;
      set pubexp;
      ystar = y/x;
      xstar = 1/x;
    * this code corrects for hetero assuming var(et) = sigmasq*xtsquare;
    proc reg;
      model ystar = xstar;
    run;

11.10

(a) The plots of the residuals against income and against age show that the absolute values of the residuals increase as income increases, but they appear to be constant as age increases. This indicates that the error variance depends on income.

(b) Since the residual plot shows that the error variance may increase when income increases, and this is a reasonable outcome since greater income implies greater flexibility in travel, we set the null and alternative hypotheses as \(H_0: \sigma_1^2 = \sigma_2^2\) against \(H_1: \sigma_1^2 > \sigma_2^2\). The test statistic is

\[ GQ = \frac{\hat{\sigma}_1^2}{\hat{\sigma}_2^2} = \frac{(2.9471 \times 10^7)/(100 - 4)}{(1.0479 \times 10^7)/(100 - 4)} = 2.8124 \]

The 5% critical value for (96, 96) degrees of freedom is \(F_c = 1.35\). Thus, we reject \(H_0\) and conclude that the error variance depends on income.
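The arithmetic in 11.10(b) can be rechecked with the same SAS functions used in the code for 11.2. This is only a sketch: the data set name `gq1110` and the variable names are made up for illustration, and the two sums of squared errors are simply typed in from the answer rather than estimated from data. Note that `finv` returns the exact critical value for (96, 96) degrees of freedom, which may differ slightly from a value read from a printed table.

```sas
* sketch only, recomputes the 11.10(b) Goldfeld-Quandt test from reported values;
data gq1110;
  sse1 = 2.9471e7;                 * sum of squared errors, first subsample;
  sse2 = 1.0479e7;                 * sum of squared errors, second subsample;
  df = 100 - 4;                    * degrees of freedom in each subsample;
  gq = (sse1/df) / (sse2/df);      * GQ statistic, ratio of variance estimates;
  fc = finv(.95, df, df);          * 5 percent critical value from F(96,96);
  pval = 1 - probf(gq, df, df);    * upper-tail p-value;
  reject = (gq > fc);              * equals 1 when H0 is rejected;
run;
proc print data=gq1110;
run;
```

Because both subsamples have the same degrees of freedom, the divisor cancels and GQ reduces to the ratio of the two sums of squared errors.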