
Download chapter 13_uncertainty


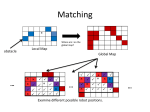

Chapter 13: Uncertainty

Outline
– Acting under Uncertainty
– Basic Probability Notation
– The Axioms of Probability
– Inference Using Joint Distributions
– Independence
– Bayes’s Rule and Its Use
– The Wumpus World Revisited

Remember the “Logics in General” Slide?
– Ontological commitment: what exists in the world (TRUTH)
– Epistemological commitment: what an agent believes about facts (BELIEF)

Uncertainty
When an agent knows enough facts about its environment, the logical approach enables it to derive plans that are guaranteed to work. Unfortunately, agents almost never have access to the whole truth about their environment. Agents must act under uncertainty.
For example, a wumpus agent often will find itself unable to discover which of two squares contains a pit. If those squares are en route to the gold, then the agent might have to take a chance and enter one of the two squares.

Uncertainty
Let action A_t = leave for airport t minutes before flight. Will A_t get me there on time?
Problems:
– partial observability (road state, other drivers’ plans, etc.)
– noisy sensors (traffic reports, etc.)
– uncertainty in outcomes (flat tire, etc.)
– immense complexity of modeling and predicting traffic

Can we take a purely logical approach?
– Risks falsehood: “A25 will get me there on time”
– Leads to conclusions that are too weak for decision making:
  – A25 will get me there on time if there is no accident on the bridge and it doesn’t rain and my tires remain intact, etc.
  – A1440 might reasonably be said to get me there on time, but I’d have to stay overnight at the airport!
  – A90 may be good
Logic represents uncertainty by disjunction but cannot tell us how likely the different conditions are.

Methods for Handling Uncertainty
Default or nonmonotonic logic:
– Assume my car does not have a flat tire
– Assume A25 works unless contradicted by evidence
– Issues: What assumptions are reasonable? How to handle contradictions?
Logic rules with fudge factors:
– A25 |→ 0.3 get there on time
– Sprinkler |→ 0.99 WetGrass
– WetGrass |→ 0.7 Rain
– Issues: Problems with combination, e.g., does Sprinkler cause Rain?

Uncertainty
The information the agent has cannot guarantee any of these outcomes for A90, but it can provide some degree of belief that they will be achieved. Other plans, such as A120, might increase the agent's belief that it will get to the airport on time, but also increase the likelihood of a long wait.
The right thing to do (the rational decision) therefore depends on both the relative importance of various goals and the likelihood that, and degree to which, they will be achieved.

Handling Uncertainty knowledge
[Slide content not recoverable from the extracted text.]

An Alternative: Use Probability
Given the available evidence, A25 will get me there on time with probability 0.04.
Probabilistic assertions summarize the effects of:
– Laziness: too much work to list the complete set of antecedents or consequents needed to ensure no exceptions
– Theoretical ignorance: medical science has no complete theory for the domain
– Practical ignorance: even if we know all the rules, we might be uncertain about a particular patient

Degree of Belief
The connection between toothaches and cavities is just not a logical consequence in either direction. This is typical of the medical domain, as well as most other judgmental domains: law, business, design, automobile repair, gardening, dating, and so on.
The agent's knowledge can at best provide only a degree of belief in the relevant sentences. Our main tool for dealing with degrees of belief will be probability theory, which assigns to each sentence a numerical degree of belief between 0 and 1.

Probability
Probability provides a way of summarizing the uncertainty that comes from our laziness and ignorance.
We might not know for sure what afflicts a particular patient, but we believe that there is, say, an 80% chance (that is, a probability of 0.8) that the patient has a cavity if he or she has a toothache. That is, we expect that out of all the situations that are indistinguishable from the current situation as far as the agent's knowledge goes, the patient will have a cavity in 80% of them.
This belief could be derived from:
– statistical data,
– general rules, or
– a combination of evidence sources.
The missing 20% summarizes all the other possible causes of toothache that we are too lazy or ignorant to confirm or deny.

Probability
Assigning a probability of 0 to a given sentence corresponds to an unequivocal belief that the sentence is false. Assigning a probability of 1 corresponds to an unequivocal belief that the sentence is true. Probabilities between 0 and 1 correspond to intermediate degrees of belief in the truth of the sentence. The sentence itself is in fact either true or false.
It is important to note that a degree of belief is different from a degree of truth.
– A probability of 0.8 does not mean "80% true" but rather an 80% degree of belief, that is, a fairly strong expectation.
– Thus, probability theory makes the same ontological commitment as logic, namely, that facts either do or do not hold in the world.
Degree of truth, as opposed to degree of belief, is the subject of fuzzy logic, which is covered in Section 14.7.

Probability
All probability statements must therefore indicate the evidence with respect to which the probability is being assessed. As the agent receives new percepts, its probability assessments are updated to reflect the new evidence.
Before the evidence is obtained, we talk about prior or unconditional probability; after the evidence is obtained, we talk about posterior or conditional probability.
In most cases, an agent will have some evidence from its percepts and will be interested in computing the posterior probabilities of the outcomes it cares about.

Uncertainty and rational decisions
The presence of uncertainty radically changes the way an agent makes decisions. A logical agent typically has a goal and executes any plan that is guaranteed to achieve it. An action can be selected or rejected on the basis of whether it achieves the goal, regardless of what other actions might achieve. When uncertainty enters the picture, this is no longer the case.
Consider again the A90 plan for getting to the airport. Suppose it has a 95% chance of succeeding. Does this mean it is a rational choice? Not necessarily: there might be other plans, such as A120, with higher probabilities of success. What about A1440? In most circumstances, this is not a good choice, because, although it almost guarantees getting there on time, it involves an intolerable wait.

Preferences
To make such choices, an agent must first have preferences between the different possible outcomes of the various plans. A particular outcome is a completely specified state, including such factors as whether the agent arrives on time and the length of the wait at the airport.
We will be using utility theory to represent and reason with preferences. Utility theory says that every state has a degree of usefulness, or utility, to an agent and that the agent will prefer states with higher utility.

Examples
Suppose I believe the following:
– P(A25 gets me there on time | …) = 0.04
– P(A90 gets me there on time | …) = 0.70
– P(A120 gets me there on time | …) = 0.95
– P(A1440 gets me there on time | …) = 0.9999
Which do I choose? It depends on my preferences for missing the flight vs. airport cuisine, etc.

Decision theory
Preferences, as expressed by utilities, are combined with probabilities in the general theory of rational decisions called decision theory:
Decision theory = probability theory + utility theory.
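As a rough illustration of how probabilities and utilities combine, the sketch below scores the four airport plans by expected utility. The probabilities come from the Examples slide; the utility of catching or missing the flight and the per-minute waiting cost are hypothetical numbers chosen only for illustration, and using the departure lead time as a stand-in for waiting time is likewise an assumption.

```python
# Probability of arriving on time for each plan (from the Examples slide).
p_on_time = {"A25": 0.04, "A90": 0.70, "A120": 0.95, "A1440": 0.9999}

# Hypothetical utilities: these values are assumptions, not from the slides.
U_CATCH_FLIGHT = 1000.0      # utility of arriving on time
U_MISS_FLIGHT = 0.0          # utility of missing the flight
WAIT_COST_PER_MINUTE = 1.0   # penalty per minute of lead time (proxy for waiting)


def expected_utility(plan):
    """Average the outcome utilities, weighted by probability, minus the wait penalty."""
    minutes = int(plan[1:])  # "A90" -> 90 minutes before the flight
    p = p_on_time[plan]
    outcome_utility = p * U_CATCH_FLIGHT + (1 - p) * U_MISS_FLIGHT
    return outcome_utility - WAIT_COST_PER_MINUTE * minutes


# Pick the plan with the highest expected utility under these assumed numbers.
best = max(p_on_time, key=expected_utility)
for plan in p_on_time:
    print(plan, round(expected_utility(plan), 2))
print("best plan:", best)
```

With these assumed utilities, A1440 scores worst despite its near-certain success, because the waiting penalty dominates. Different utilities would give a different ranking, which is the point of the slide: the choice depends on preferences as well as probabilities.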
An agent is rational if and only if it chooses the action that yields the highest expected utility, averaged over all the possible outcomes of the action. This is called the principle of Maximum Expected Utility (MEU).

Design for a decision-theoretic agent
[Slide content not recoverable from the extracted text.]

13.2 Basic Probability Notation
We will need a formal language for representing and reasoning with uncertain knowledge. Any notation for describing degrees of belief must be able to deal with two main issues:
– the nature of the sentences to which degrees of belief are assigned, and
– the dependence of the degree of belief on the agent's experience.
The version of probability theory we present uses an extension of propositional logic.

Random Variables
A random variable refers to some aspect of the world whose value is initially uncertain. Each random variable has a domain of possible values, and each value is assigned a probability between 0 and 1.
Example: Weather is a discrete (propositional) random variable that has domain <sunny, rain, cloudy, snow>.
– sunny is an abbreviation for Weather = sunny
– P(Weather = sunny) = 0.72, P(Weather = rain) = 0.1, etc.
– Can be written: P(sunny) = 0.72, P(rain) = 0.1, etc.
Other types of random variables:
– A Boolean random variable has the domain <true, false>, e.g., Cavity (a special case of a discrete random variable)
– A continuous random variable has the real numbers as its domain, e.g., Temp

Atomic Events
An atomic event is a complete specification of the state of the world about which the agent is uncertain. It can be thought of as an assignment of particular values to all the variables of which the world is composed.
For example, if my world consists of only the Boolean variables Cavity and Toothache, then there are just four distinct atomic events:
– Cavity = false ∧ Toothache = true
– Cavity = false ∧ Toothache = false
– Cavity = true ∧ Toothache = true
– Cavity = true ∧ Toothache = false

Properties of Atomic Events
They are mutually exclusive: at most one can actually be the case.
– For example, cavity ∧ toothache and cavity ∧ ¬toothache cannot both be the case.
The set of all possible atomic events is exhaustive: at least one must be the case. That is, the disjunction of all atomic events is logically equivalent to true.
Any particular atomic event entails the truth or falsehood of every proposition, whether simple or complex. This can be seen by using the standard semantics for logical connectives.
– For example, the atomic event cavity ∧ ¬toothache entails the truth of cavity and the falsehood of cavity ⇒ toothache.
Any proposition is logically equivalent to the disjunction of all atomic events that entail the truth of the proposition.
– For example, the proposition cavity is equivalent to the disjunction of the atomic events cavity ∧ toothache and cavity ∧ ¬toothache.

Prior Probability
Prior (unconditional) probability corresponds to belief prior to the arrival of any (new) evidence.
– P(sunny) = 0.72, P(rain) = 0.1, etc.
– Vector notation: P(Weather) = <0.7, 0.2, 0.08, 0.02>
  • P(Weather = sunny) = 0.7
  • P(Weather = rain) = 0.2
  • P(Weather = cloudy) = 0.08
  • P(Weather = snow) = 0.02
This statement defines a prior probability distribution for the random variable Weather (it sums to 1 over the domain).

Atomic Events and the Universe
An atomic event is a complete specification of the state of the world about which the agent is uncertain (like interpretations in logic).
If the world consists of the Boolean variables Cavity and Toothache, there are four distinct atomic events, one of which is: Cavity = false ∧ Toothache = true
– The universe consists of atomic events
– An event is a set of atomic events
– P: events →
[0,1]
– P(true) = 1 = P(U)
– P(false) = 0 = P(∅)
– P(A ∪ B) = P(A) + P(B) – P(A ∩ B)
[Venn diagram: events A and B overlapping in A ∩ B, inside the universe U]

Joint Distribution
Probability assignment to all combinations of values of random variables:

|         | Toothache | ¬Toothache |
|---------|-----------|------------|
| Cavity  | 0.04      | 0.06       |
| ¬Cavity | 0.01      | 0.89       |

The sum of the entries in this table has to be 1.
Given this table, we can answer all probability questions about this domain.
The probability of a proposition is the sum of the probabilities of the atomic events in which it holds:
– P(cavity) = 0.04 + 0.06 = 0.1 [add elements of the Cavity row]
– P(toothache) = 0.04 + 0.01 = 0.05 [add elements of the Toothache column]

Conditional Probability
[Same joint distribution table and Venn diagram as on the previous slides.]
P(cavity) = 0.1 and P(cavity ∩ toothache) = 0.04 are both prior (unconditional) probabilities.
Once the agent has new evidence concerning a previously unknown random variable, e.g., toothache, we can specify a posterior (conditional) probability, e.g., P(cavity | toothache).
P(A | B) = P(A ∩ B) / P(B) [probability of A with U limited to B]
P(cavity | toothache) = 0.04 / 0.05 = 0.8

Conditional Probability (continued)
Definition of conditional probability:
P(A | B) = P(A ∩ B) / P(B)
The product rule gives an alternative formulation:
P(A ∩ B) = P(A | B) P(B) = P(B | A) P(A)
A general version holds for whole distributions:
P(Weather, Cavity) = P(Weather | Cavity) P(Cavity)

13.3 The Axioms of Probability
1. All probabilities are between 0 and 1. For any proposition a, 0 ≤ P(a) ≤ 1.
2. Necessarily true (i.e., valid) propositions have probability 1, and necessarily false (i.e., unsatisfiable) propositions have probability 0.
– P(true) = 1, P(false) = 0
3. The probability of a disjunction is given by
– P(a ∨ b) = P(a) + P(b) – P(a ∧ b)

Using the axioms of probability
[Slide content not recoverable from the extracted text.]

Probability axioms
Recall that any proposition a is equivalent to the disjunction of all the atomic events in which a holds; call this set of events e(a). Recall also that atomic events are mutually exclusive, so the probability of any conjunction of atomic events is zero, by axiom 2.
Hence, from axiom 3, we can derive the following simple relationship: the probability of a proposition is equal to the sum of the probabilities of the atomic events in which it holds; that is,
P(a) = Σ_{e_i ∈ e(a)} P(e_i)
This equation provides a simple method for computing the probability of any proposition, given a full joint distribution that specifies the probabilities of all atomic events.

Why the axioms of probability are reasonable
The axioms of probability can be seen as restricting the set of probabilistic beliefs that an agent can hold. This is somewhat analogous to the logical case, where a logical agent cannot simultaneously believe A, B, and ¬(A ∧ B), for example.
In the logical case, the semantic definition of conjunction means that at least one of the three beliefs just mentioned must be false in the world, so it is unreasonable for an agent to believe all three. With probabilities, on the other hand, statements refer not to the world directly, but to the agent's own state of knowledge.
Why, then, can an agent not hold the following set of beliefs, which clearly violates axiom 3?
– P(a) = 0.4, P(a ∧ b) = 0.0
– P(b) = 0.3, P(a ∨ b) = 0.8

de Finetti’s argument
Axioms of probability:
– imply that certain logically related events must have related probabilities
– restrict the set of probabilistic beliefs that an agent can hold
– Example: P(A ∪ B) = P(A) + P(B) – P(A ∩ B)
de Finetti (1931) proved: an agent who bets according to probabilities that violate these axioms can be forced to bet so as to lose money regardless of the outcome.
Let’s look at an example …

Example
[Slide content not recoverable from the extracted text.]

13.4 Probabilistic Inference
Probabilistic inference is the computation from observed evidence of posterior probabilities for query propositions.
We use the full joint distribution as the “knowledge base” from which answers to questions may be derived.
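Using a full joint distribution as a knowledge base can be sketched in a few lines. The example below is a minimal illustration, not the book's algorithm: it stores the two-variable Cavity/Toothache table from the Joint Distribution slide and answers queries by summing atomic events. The helper names `prob` and `satisfies_axiom_3` are hypothetical, introduced only for this sketch.

```python
import math

# Full joint distribution over (cavity, toothache), from the Joint Distribution slide.
joint = {
    (True, True): 0.04,
    (True, False): 0.06,
    (False, True): 0.01,
    (False, False): 0.89,
}


def prob(event):
    """Sum the probabilities of the atomic events in which `event` holds."""
    return sum(p for (cavity, toothache), p in joint.items() if event(cavity, toothache))


p_cavity = prob(lambda cavity, toothache: cavity)                 # about 0.1
p_toothache = prob(lambda cavity, toothache: toothache)           # about 0.05
p_both = prob(lambda cavity, toothache: cavity and toothache)     # 0.04
p_either = prob(lambda cavity, toothache: cavity or toothache)    # about 0.11

# Conditional probability: P(cavity | toothache) = P(cavity and toothache) / P(toothache)
p_cavity_given_toothache = p_both / p_toothache                   # about 0.8


def satisfies_axiom_3(p_a, p_b, p_a_and_b, p_a_or_b):
    """Check P(a or b) = P(a) + P(b) - P(a and b), up to floating-point tolerance."""
    return math.isclose(p_a_or_b, p_a + p_b - p_a_and_b)


print(p_cavity, p_toothache, p_cavity_given_toothache)
print(satisfies_axiom_3(p_cavity, p_toothache, p_both, p_either))  # table is consistent
print(satisfies_axiom_3(0.4, 0.3, 0.0, 0.8))  # the belief set that violates axiom 3
```

Any table whose entries are nonnegative and sum to 1 will pass the axiom 3 check, since every probability is derived from the same atomic events. The inconsistent belief set fails because 0.4 + 0.3 - 0.0 is 0.7, not 0.8.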
Probabilities in the joint distribution sum to 1.

Probabilistic Inference (cont.)
The probability of any proposition is computed by finding the atomic events where the proposition is true and adding their probabilities:
– P(cavity ∨ toothache) = 0.108 + 0.012 + 0.072 + 0.008 + 0.016 + 0.064 = 0.28
– P(cavity) = 0.108 + 0.012 + 0.072 + 0.008 = 0.2
P(cavity) is called a marginal probability, and the process of computing it is called marginalization.

Probabilistic Inference (cont.)
We can also compute conditional probabilities:
P(¬cavity | toothache) = P(¬cavity ∧ toothache) / P(toothache)
= (0.016 + 0.064) / (0.108 + 0.012 + 0.016 + 0.064) = 0.4
The denominator is viewed as a normalization constant: it stays constant no matter what the value of Cavity is. (The book uses α to denote the normalization constant 1/P(X), for random variable X.)

Enumerate-Joint-Ask
[Slide content not recoverable from the extracted text.]

13.5 Independence
How are P(toothache, catch, cavity, Weather = cloudy) and P(toothache, catch, cavity) related?

Independence
A and B are independent iff
– P(A ∧ B) = P(A) P(B)
– P(A | B) = P(A)
– P(B | A) = P(B)
Independence is essential for efficient probabilistic reasoning.
[Diagram: {Cavity, Toothache, Weather, Xray} decomposes into {Cavity, Toothache, Xray} and {Weather}]
P(T, X, C, W) = P(T, X, C) P(W)

13.6 Bayes' Rule

Bayes’s Rule
Product rule: P(a ∧ b) = P(a | b) P(b) = P(b | a) P(a)
Bayes' rule: P(a | b) = P(b | a) P(a) / P(b)
or in distribution form:
P(Y | X) = P(X | Y) P(Y) / P(X) = α P(X | Y) P(Y)
Useful for assessing diagnostic probability from causal probability:
– P(Cause | Effect) = P(Effect | Cause) P(Cause) / P(Effect)

Example 1 (simple case)
A doctor knows that the disease meningitis causes the patient to have a stiff neck, say, 50% of the time. The prior probability that a patient has meningitis is 1/50,000. The prior probability that any patient has a stiff neck is 1/20.
Letting s be the proposition that the patient has a stiff neck and m be the proposition that the patient has meningitis, we have
P(s | m) = 0.5, P(m) = 1/50,000, P(s) = 1/20
P(m | s) = P(s | m) P(m) / P(s) = (0.5 × 1/50,000) / (1/20) = 0.0002

Example 2 (combining evidence)
[Slide content not recoverable from the extracted text.]

Conditional independence
[Slide content not recoverable from the extracted text.]

Separates
[Slide content not recoverable from the extracted text.]

Bayes' Rule and conditional independence
P(Cavity | toothache ∧ catch)
= α P(toothache ∧ catch | Cavity) P(Cavity)
= α P(toothache | Cavity) P(catch | Cavity) P(Cavity)
This is an example of a naïve Bayes model:
P(Cause, Effect_1, …, Effect_n) = P(Cause) Π_i P(Effect_i | Cause)

Conditioning
Idea: use conditional probabilities instead of joint probabilities.
P(A) = P(A ∧ B) + P(A ∧ ¬B) = P(A | B) P(B) + P(A | ¬B) P(¬B)
Example: P(symptom) = P(symptom | disease) P(disease) + P(symptom | ¬disease) P(¬disease)
More generally: P(Y) = Σ_z P(Y | z) P(z)
Marginalization and conditioning are useful rules for derivations involving probability expressions.

Conditional Independence
A and B are conditionally independent given C iff
– P(A | B, C) = P(A | C)
– P(B | A, C) = P(B | C)
– P(A ∧ B | C) = P(A | C) P(B | C)
Toothache (T), Spot in Xray (X), Cavity (C):
– None of these propositions are independent of one another
– But T and X are conditionally independent given C

Conditional Independence (cont.)
If I have a cavity, the probability that the Xray shows a spot doesn’t depend on whether I have a toothache: P(X | T, C) = P(X | C)
The same independence holds if I haven’t got a cavity: P(X | T, ¬C) = P(X | ¬C)
Equivalent statements: P(T | X, C) = P(T | C) and P(T, X | C) = P(T | C) P(X | C)
Write out the full joint distribution (chain rule):
P(T, X, C) = P(T | X, C) P(X, C)
= P(T | X, C) P(X | C) P(C)
= P(T | C) P(X | C) P(C)

Another Example
– Battery is dead (B)
– Radio plays (R)
– Starter turns over (S)
None of these propositions are independent of one another, but R and S are conditionally independent given B.

Combining Evidence
Bayesian updating given two pieces of information.
Assume that T and X are conditionally independent given C (naïve Bayes model).
[Diagram: cause C with effects T (Effect1) and X (Effect2)]
We can do the evidence combination sequentially.

How do we Compute the Normalizing Constant (α)?
[Slide content not recoverable from the extracted text.]

13.7 Wumpus World (Revisited)
• P_ij is true iff [i,j] contains a pit
• B_ij is true iff [i,j] is breezy
• Include only B1,1, B1,2, B2,1 in the probability model
• Known facts:
  b = ¬b1,1 ∧ b1,2 ∧ b2,1
  known = ¬p1,1 ∧ ¬p1,2 ∧ ¬p2,1
Query: P(P1,3 | known, b)

Wumpus World (cont.)
• Key insight: observed breezes are conditionally independent of the other variables given the known, fringe, and query variables
• Define Unknown = Fringe ∪ Other
  P(b | P1,3, Known, Unknown) = P(b | P1,3, Known, Fringe)
• The book has details.
Query: P(P1,3 | known, b)
• Bottom line:
  - P(P1,3 | known, b) ≈ <0.31, 0.69>
  - P(P2,2 | known, b) ≈ <0.86, 0.14>

Some Problems for Fun
Prove the following:
– P(¬A) = 1 – P(A)
– P(A ∨ B ∨ C) = P(A) + P(B) + P(C) – P(A ∧ B) – P(A ∧ C) – P(B ∧ C) + P(A ∧ B ∧ C)
Show that P(A) ≥ P(A, B).
Show that P(A | B) + P(¬A | B) = 1.
Show that the different formulations of conditional independence are equivalent:
– P(A | B, C) = P(A | C)
– P(B | A, C) = P(B | C)
– P(A ∧ B | C) = P(A | C) · P(B | C)
Conditional Bayes’ rule: write an expression for P(A | B, C) in terms of P(B | A, C).
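For readers who want to check the Section 13.6 numbers before attempting the problems, here is a small sketch of Bayes' rule and of normalization. It uses the meningitis figures from Example 1 and the toothache entries from the Probabilistic Inference slides. The function names `bayes` and `normalize` are hypothetical, chosen only for this illustration.

```python
import math


def bayes(p_effect_given_cause, p_cause, p_effect):
    """Bayes' rule: P(cause | effect) = P(effect | cause) P(cause) / P(effect)."""
    return p_effect_given_cause * p_cause / p_effect


# Meningitis example: P(s | m) = 0.5, P(m) = 1/50,000, P(s) = 1/20.
p_m_given_s = bayes(0.5, 1 / 50_000, 1 / 20)
print(p_m_given_s)  # about 0.0002


def normalize(weights):
    """Scale nonnegative weights by alpha = 1 / sum so that they sum to 1."""
    alpha = 1.0 / sum(weights)
    return [alpha * w for w in weights]


# Unnormalized entries for <cavity, not cavity> given toothache, read off the
# full joint distribution: P(cavity, toothache) and P(not cavity, toothache).
unnormalized = [0.108 + 0.012, 0.016 + 0.064]
posterior = normalize(unnormalized)
print(posterior)  # about [0.6, 0.4]

# The normalized entries sum to 1, as in the last "Show that" problem above.
print(math.isclose(sum(posterior), 1.0))
```

Normalizing avoids computing P(toothache) separately: the constant α is recovered from the requirement that the posterior entries sum to 1.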