Numerical Analysis

... The eigenvalues of A^T are the same as those of A. The eigenvectors of A and A^T are orthogonal (when associated with different eigenvalues) ...
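
The two claims in this excerpt can be checked on a small case. The sketch below is only an illustration under stated assumptions: the 2×2 matrix `A` and the helper names (`eigenvalues_2x2`, `eigenvector_2x2`) are hypothetical and not taken from the excerpted document, and the closed-form solver assumes real eigenvalues.

```python
import math

def eigenvalues_2x2(m):
    """Real eigenvalues of a 2x2 matrix, from lambda^2 - trace*lambda + det = 0."""
    (a, b), (c, d) = m
    trace, det = a + d, a * d - b * c
    root = math.sqrt(trace * trace - 4 * det)  # assumes a non-negative discriminant
    return sorted([(trace - root) / 2, (trace + root) / 2])

def eigenvector_2x2(m, lam):
    """One nonzero solution v of (M - lam*I) v = 0 for a 2x2 matrix M."""
    (a, b), (c, d) = m
    if (a - lam, b) != (0, 0):
        return (b, lam - a)      # orthogonal to the first row of M - lam*I
    if (c, d - lam) != (0, 0):
        return (d - lam, -c)     # orthogonal to the second row of M - lam*I
    return (1, 0)                # M - lam*I is the zero matrix: any vector works

def transpose(m):
    return [list(col) for col in zip(*m)]

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

A = [[2, 1], [0, 3]]             # hypothetical example matrix
At = transpose(A)

eig_A = eigenvalues_2x2(A)
eig_At = eigenvalues_2x2(At)

# Eigenvectors of A and of A^T that belong to *different* eigenvalues.
v_for_2 = eigenvector_2x2(A, eig_A[0])
v_for_3 = eigenvector_2x2(A, eig_A[1])
w_for_2 = eigenvector_2x2(At, eig_At[0])
w_for_3 = eigenvector_2x2(At, eig_At[1])

cross_products = (dot(v_for_2, w_for_3), dot(v_for_3, w_for_2))
```

Here `eig_A` and `eig_At` agree, and both entries of `cross_products` vanish, while the products for matching eigenvalues (for example `dot(v_for_2, w_for_2)`) need not.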

Eigenvalues, eigenvectors, and eigenspaces of linear operators

... Suppose that λ is an eigenvalue of A. That means there is a nontrivial vector x such that Ax = λx. Equivalently, Ax − λx = 0, and we can rewrite that as (A − λI)x = 0, where I is the identity matrix. But a homogeneous equation like (A − λI)x = 0 has ... When 1 is an eigenvalue. This is another important s ...

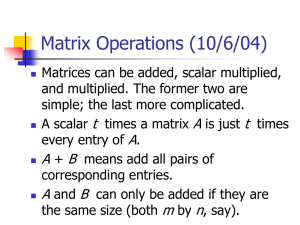

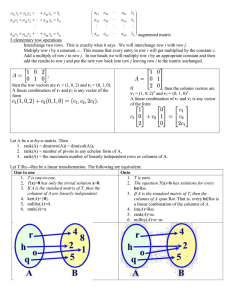

Matrices Linear equations Linear Equations

... If there are no zero eigenvalues, the matrix is invertible. If there are no repeated eigenvalues, the matrix is diagonalizable. If all the eigenvalues are different, then the eigenvectors are linearly independent ...
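
A small numerical check of these three statements, as a sketch only: the matrix `A` and the helper names are hypothetical, and the solver assumes a 2×2 matrix with real eigenvalues. Note that distinct eigenvalues are a sufficient condition for diagonalizability, not a necessary one (the identity matrix has a repeated eigenvalue and is already diagonal).

```python
import math

def eigenvalues_2x2(m):
    """Real eigenvalues of a 2x2 matrix, from lambda^2 - trace*lambda + det = 0."""
    (a, b), (c, d) = m
    trace, det = a + d, a * d - b * c
    root = math.sqrt(trace * trace - 4 * det)  # assumes a non-negative discriminant
    return sorted([(trace - root) / 2, (trace + root) / 2])

def eigenvector_2x2(m, lam):
    """One nonzero solution v of (M - lam*I) v = 0 for a 2x2 matrix M."""
    (a, b), (c, d) = m
    if (a - lam, b) != (0, 0):
        return (b, lam - a)
    if (c, d - lam) != (0, 0):
        return (d - lam, -c)
    return (1, 0)

A = [[4, 1], [2, 3]]                      # hypothetical example matrix
lam1, lam2 = eigenvalues_2x2(A)

det_A = A[0][0] * A[1][1] - A[0][1] * A[1][0]
no_zero_eigenvalue = lam1 != 0 and lam2 != 0
invertible = det_A != 0                   # det(A) equals the product lam1 * lam2

v1 = eigenvector_2x2(A, lam1)
v2 = eigenvector_2x2(A, lam2)
# Two vectors in the plane are linearly independent iff this determinant is nonzero.
independence_det = v1[0] * v2[1] - v2[0] * v1[1]
```

Because `lam1` and `lam2` differ, `independence_det` is nonzero, so the matrix with columns `v1` and `v2` is invertible and diagonalizes `A`.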

Mathematics Qualifying Exam University of British Columbia September 2, 2010

... 1. A matrix A ∈ R^{n×n} is called positive definite if x^T A x > 0 for all x ∈ R^n \ {0}. Show: a) If A ∈ R^{n×n} is symmetric and positive definite, then all its eigenvalues are positive. ...
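
One possible line of argument for part a), given here as a sketch rather than as the exam's intended solution: because A is real and symmetric, its eigenvalues are real and each has a real eigenvector x ≠ 0, so the definition of positive definiteness can be applied to x directly.

```latex
Ax = \lambda x,\quad x \in \mathbb{R}^n \setminus \{0\}
\;\Longrightarrow\;
0 < x^{T} A x = x^{T}(\lambda x) = \lambda\, x^{T} x = \lambda \lVert x \rVert^{2}
\;\Longrightarrow\;
\lambda > 0 \quad \text{since } \lVert x \rVert^{2} > 0 .
```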

(1.) TRUE or FALSE? - Dartmouth Math Home

... (p.) Let A ∈ M_{n×n}(F) and β = {v_1, v_2, ..., v_n} be an ordered basis for F^n consisting of eigenvectors of A. If Q is the n × n matrix whose jth column is v_j (1 ≤ j ≤ n), then Q^{-1}AQ is a diagonal matrix. TRUE. Q^{-1}AQ is the matrix of L_A in the basis β, which is a diagonal matrix. (q.) A line ...
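
Statement (p.) can be verified by hand on a 2×2 case. The sketch below uses exact rational arithmetic; the matrix `A`, its eigenvector matrix `Q`, and the helper names are hypothetical choices for illustration, not part of the excerpted document.

```python
from fractions import Fraction

def matmul(x, y):
    """Product of two matrices given as lists of rows."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))]
            for i in range(len(x))]

def inverse_2x2(m):
    """Exact inverse of an invertible 2x2 matrix via the adjugate formula."""
    (a, b), (c, d) = m
    det = Fraction(a * d - b * c)
    return [[d / det, -b / det], [-c / det, a / det]]

A = [[4, 1], [2, 3]]
# Columns of Q are eigenvectors of A: A(1,1) = 5(1,1) and A(1,-2) = 2(1,-2).
Q = [[1, 1], [1, -2]]

D = matmul(inverse_2x2(Q), matmul(A, Q))
```

The result `D` is diagonal, with the eigenvalues 5 and 2 appearing in the same order as their eigenvectors appear in the columns of `Q`.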

Eigenvalues and Eigenvectors

... the characteristic polynomial of matrix A. The eigenvalues are the solutions of (2) or equivalently the roots of the characteristic polynomial (3). Once we have the n eigenvalues of A, λ_1, λ_2, ..., λ_n, the corresponding eigenvectors are nontrivial solutions of the homogeneous linear systems (A - λ_i I ...
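
For a 2×2 matrix the procedure described in this excerpt reduces to solving a quadratic, since det(A − λI) = λ² − tr(A)·λ + det(A). The following is a minimal sketch under that assumption; the rotation matrix `R` and the function names are hypothetical, chosen so that the roots come out complex.

```python
import cmath

def char_poly_roots_2x2(m):
    """Roots of lambda^2 - trace*lambda + det = 0, allowing complex values."""
    (a, b), (c, d) = m
    trace, det = a + d, a * d - b * c
    root = cmath.sqrt(trace * trace - 4 * det)
    return ((trace + root) / 2, (trace - root) / 2)

def apply(m, v):
    """Matrix-vector product for a 2x2 matrix."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])

R = [[0, -1], [1, 0]]            # rotation by 90 degrees, hypothetical example
lam_plus, lam_minus = char_poly_roots_2x2(R)

# A nontrivial solution of (R - lam*I) v = 0 for lam = lam_plus.
v = (R[0][1], lam_plus - R[0][0])
Rv = apply(R, v)
lam_v = (lam_plus * v[0], lam_plus * v[1])
```

The roots are the conjugate pair ±i, and `Rv` equals `lam_v`, confirming that `v` is an eigenvector for `lam_plus`.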

2.2 The n × n Identity Matrix

... (i.e. multiplying any matrix A (of admissible size) on the left or right by I_n leaves A unchanged). Proof (of Statement 1 of the Theorem): Let A be an n × p matrix. Then certainly the product I_n A is defined and its size is n × p. We need to show that for 1 ≤ i ≤ n and 1 ≤ j ≤ p, the entry in the ith ...

Section 7-2

... behaviour. We illustrate with two simple examples some of the possible behaviour. Example. We illustrate the existence of complex eigenvalues. Let A= ...

finm314F06.pdf

... (d) Show from definition that if λ is an eigenvalue of invertible A, then 1/λ is an eigenvalue of A^{-1}. ...
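
A sketch of one way to argue (d) directly from the definition, not necessarily the solution the course intends. The first step uses that λ ≠ 0: otherwise Ax = 0 with x ≠ 0, which contradicts invertibility.

```latex
Ax = \lambda x,\; x \neq 0
\;\Longrightarrow\;
A^{-1}(Ax) = A^{-1}(\lambda x)
\;\Longrightarrow\;
x = \lambda\, A^{-1} x
\;\Longrightarrow\;
A^{-1} x = \tfrac{1}{\lambda}\, x .
```

The same vector x is therefore an eigenvector of A^{-1}, now for the eigenvalue 1/λ.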

Jordan normal form

In linear algebra, a Jordan normal form (often called Jordan canonical form) of a linear operator on a finite-dimensional vector space is an upper triangular matrix of a particular form called a Jordan matrix, representing the operator with respect to some basis. Such a matrix has each non-zero off-diagonal entry equal to 1, immediately above the main diagonal (on the superdiagonal), and with identical diagonal entries to the left and below them. If the vector space is over a field K, then a basis with respect to which the matrix has the required form exists if and only if all eigenvalues of the matrix lie in K, or equivalently if the characteristic polynomial of the operator splits into linear factors over K. This condition is always satisfied if K is the field of complex numbers. The diagonal entries of the normal form are the eigenvalues of the operator, with the number of times each one occurs being given by its algebraic multiplicity.

If the operator is originally given by a square matrix M, then its Jordan normal form is also called the Jordan normal form of M. Any square matrix has a Jordan normal form if the field of coefficients is extended to one containing all the eigenvalues of the matrix. In spite of its name, the normal form for a given M is not entirely unique, as it is a block diagonal matrix formed of Jordan blocks, the order of which is not fixed; it is conventional to group blocks for the same eigenvalue together, but no ordering is imposed among the eigenvalues, nor among the blocks for a given eigenvalue, although the latter could for instance be ordered by weakly decreasing size.

The Jordan–Chevalley decomposition is particularly simple with respect to a basis for which the operator takes its Jordan normal form. The diagonal form for diagonalizable matrices, for instance normal matrices, is a special case of the Jordan normal form.

The Jordan normal form is named after Camille Jordan.
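
To make the shape of a Jordan matrix concrete, the sketch below assembles one from a list of blocks. It only builds the block-diagonal form described above; it does not compute the Jordan normal form of a given matrix. The function name `jordan_matrix` and the `(eigenvalue, size)` input convention are assumptions made for this illustration.

```python
def jordan_matrix(blocks):
    """Build a Jordan matrix from a list of (eigenvalue, block_size) pairs."""
    n = sum(size for _, size in blocks)
    J = [[0] * n for _ in range(n)]
    start = 0
    for eigenvalue, size in blocks:
        for k in range(size):
            J[start + k][start + k] = eigenvalue      # diagonal entry
            if k + 1 < size:
                J[start + k][start + k + 1] = 1       # superdiagonal 1 inside the block
        start += size
    return J

# One 2x2 block for eigenvalue 2 followed by one 1x1 block for eigenvalue 3.
J = jordan_matrix([(2, 2), (3, 1)])
diagonal = [J[i][i] for i in range(len(J))]
```

In this example the eigenvalue 2 occupies two diagonal positions, matching an algebraic multiplicity of 2, and listing the blocks in the other order would give an equally valid normal form.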