
Chapter 2. Simple Linear Regression Model

Background

Suppose we wish to learn about the effect of education (x) on wage rate (y) in the U.S.

We believe that the average (expected) wage rate depends on the education level:

$$E(Y_i) = \beta_0 + \beta_1 X_i$$

An individual's deviation from the average wage rate is random:

$$Y_i = E(Y_i) + u_i = \beta_0 + \beta_1 X_i + u_i$$

Yi=dependent variable, or explained variable

Xi=independent variable, or explanatory variable

ui=error term, or disturbance term

𝛽0 =intercept parameter, or intercept coefficient, or constant term

𝛽1 =slope parameter, or slope coefficient

- The error term captures the effects of all other variables, some of which are observable and some of which are not.

- The properties of the error terms play an important role in determining the properties of the parameter estimates. We will discuss this later.

- The slope parameter represents the marginal effect of X on Y: if X increases by one unit, the expected value of Y changes by $\beta_1$.

What is estimation? See Excel file 2.1.

- Collect data on x and y from n individuals.

- Plot them on the x-y plane. This plot is called a scatterplot; each point represents one individual.

- Since the model is specified as linear, we wish to find a straight line that captures the relationship between the two variables.

- Many lines may appear to fit the data well.

- We need to pick one line. Which line is the best?

- We need to decide what we mean by the “best”.

Estimation Methods

We will start with a simple approach, called the Ordinary Least Squares (OLS) estimation method.

To learn about the OLS method, let

$\hat\beta_0, \hat\beta_1$ = estimated parameters

$\hat Y_i = \hat\beta_0 + \hat\beta_1 X_i$ = predicted (or estimated) dependent variable

$\hat u_i = Y_i - \hat Y_i$ = (regression) residuals (or prediction errors)

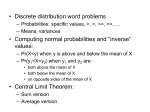

(Figure: scatterplot of sample points with a fitted line; the vertical gaps labeled u4 and u5 are residuals.)

See Excel 2.2

The line of predicted y for each x is the regression line we are looking for.

The objective of estimation is to find the line that is closest to the sample points.

- That is, we want parameter estimates that make the predicted values $\hat Y_i$ and the observed values $Y_i$ as close as possible.

- However, we cannot make every predicted value close to its actual value. Some will be close and some will not.

How do we measure the overall closeness?

Idea 0. Measure closeness by the sum of residuals. This does not work because positive and negative residuals cancel each other.

Idea 1. To avoid the cancellation problem, we can take the sum of absolute residuals (SAR):

$$SAR = \sum_{i=1}^n |\hat u_i|$$

We then choose the parameters that make the SAR as small as possible. This estimator is called the Minimum Absolute Deviation (MAD) estimator, or the Least Absolute Deviation (LAD) estimator.

Idea 2. Another way to avoid the cancellation problem is to take the sum of squared residuals (SSR):

$$SSR = \sum_{i=1}^n \hat u_i^2 = \sum_{i=1}^n (Y_i - \hat\beta_0 - \hat\beta_1 X_i)^2$$

The parameter values that make the SSR as small as possible are called the Ordinary Least Squares (OLS) estimator.
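To see the three ideas side by side, here is a minimal Python sketch that evaluates the sum of residuals, the SAR, and the SSR for two candidate lines. The five data points and the helper names `residuals` and `criteria` are hypothetical, chosen only for illustration; they are not taken from the Excel files.

```python
# Hypothetical sample (illustration only, not the Excel data)
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 3.0, 5.0, 4.0, 6.0]


def residuals(b0, b1, xs, ys):
    """Residuals u_hat_i = Y_i - (b0 + b1 * X_i) for a candidate line."""
    return [y - (b0 + b1 * x) for x, y in zip(xs, ys)]


def criteria(b0, b1, xs, ys):
    """Return (sum of residuals, SAR, SSR) for the candidate line."""
    u = residuals(b0, b1, xs, ys)
    return sum(u), sum(abs(e) for e in u), sum(e * e for e in u)


# Flat line at the mean of Y (= 4): residuals cancel, but the fit is poor.
print(criteria(4.0, 0.0, xs, ys))
# Line that follows the trend: residuals also cancel, with much smaller SAR and SSR.
print(criteria(1.3, 0.9, xs, ys))
```

Both lines have a zero residual sum, so Idea 0 cannot rank them, while SAR and SSR clearly prefer the second line (which happens to be the OLS fit for these points).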

Remarks:

- The LAD estimates represent the intercept and the marginal effect of $X_i$ on the median of $Y_i$. That is, the median of $Y_i$ is equal to $\beta_0 + \beta_1 X_i$.

- The OLS estimates represent the intercept and the marginal effect of $X_i$ on the mean of $Y_i$. That is, the mean of $Y_i$ is equal to $\beta_0 + \beta_1 X_i$.

- We may also be interested in the effect of $X_i$ on other quantiles of $Y_i$ (e.g., the 25% or 75% quantile). Since the 50% quantile is the median, this is a generalization of the idea of the LAD estimator. It is called Quantile Regression, as opposed to ordinary (mean) Linear Regression.

- Objective functions of the LAD and quantile estimators (for the $\tau$-th quantile):

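LAD estimator:

$$\min_{\hat\beta_0, \hat\beta_1} SAR = \sum_{i=1}^n |\hat u_i| = \sum_{i=1}^n |Y_i - \hat\beta_0 - \hat\beta_1 X_i|$$

Quantile estimator:

$$\min_{\hat\beta_0, \hat\beta_1} Q(\tau) = \sum_{\hat u_i > 0} \tau |\hat u_i| + \sum_{\hat u_i \le 0} (1 - \tau) |\hat u_i|$$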

- Note that the LAD estimator puts the same weight (equal to 1) on each absolute residual regardless of its sign (whether it is positive or negative).

- Note that the quantile estimator puts asymmetric weights on the absolute residuals: weight $\tau$ on positive residuals (under-prediction) and weight $(1 - \tau)$ on negative residuals (over-prediction).
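A small sketch of the quantile objective may help. Assuming the intercept-only model $Y_i = c + u_i$ (a simplification of the regression case), minimizing $Q(\tau)$ over c picks out the $\tau$-th sample quantile, and $\tau = 0.5$ gives the median, i.e., the LAD solution. The function names and data are hypothetical.

```python
def check_loss(residuals, tau):
    """Quantile objective Q(tau): weight tau on positive residuals
    (under-prediction) and weight 1 - tau on non-positive ones (over-prediction)."""
    total = 0.0
    for u in residuals:
        total += tau * abs(u) if u > 0 else (1 - tau) * abs(u)
    return total


def best_constant(ys, tau):
    """Minimize Q(tau) for the intercept-only model Y_i = c + u_i.
    Q(tau) is piecewise linear with kinks at the data points, so a minimizer
    can be found by searching over the observed values."""
    return min(ys, key=lambda c: check_loss([y - c for y in ys], tau))


ys = [1.0, 2.0, 3.0, 4.0, 10.0]  # hypothetical sample
print(best_constant(ys, 0.5))    # 3.0, the sample median
print(best_constant(ys, 0.75))   # 4.0, the 75% sample quantile
```

With $\tau = 0.5$ every absolute residual gets weight 0.5, so $Q(0.5)$ is half of the SAR and has the same minimizer.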

How do we compute the parameter estimates? See Excel file 2.3.

(1) Use Excel's INTERCEPT and SLOPE functions

(2) Use Excel's Regression tool

(3) Use Excel's Solver

(4) Use the algebraic solutions

$$\hat\beta_0 = \bar Y - \hat\beta_1 \bar X, \qquad \hat\beta_1 = \frac{\sum_{i=1}^n (X_i - \bar X)(Y_i - \bar Y)}{\sum_{i=1}^n (X_i - \bar X)^2}$$

where $\bar X$ and $\bar Y$ are the sample means of X and Y.
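The algebraic solutions in (4) translate directly into code. This is a minimal Python sketch (the function name `ols` and the data are hypothetical); applied to the same numbers, Excel's INTERCEPT and SLOPE functions should return the same two values.

```python
def ols(xs, ys):
    """Closed-form OLS estimates (beta0_hat, beta1_hat) for Y = b0 + b1 X + u."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))  # cross-deviations
    sxx = sum((x - x_bar) ** 2 for x in xs)                        # squared X deviations
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar      # the line passes through (x_bar, y_bar)
    return b0, b1


xs = [1.0, 2.0, 3.0, 4.0, 5.0]   # hypothetical data
ys = [2.0, 3.0, 5.0, 4.0, 6.0]
b0, b1 = ols(xs, ys)
print(round(b0, 6), round(b1, 6))   # 1.3 0.9
```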

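Derivation of OLS estimators

$$SSR = \sum_{i=1}^n \hat u_i^2 = \sum_{i=1}^n (Y_i - \hat\beta_0 - \hat\beta_1 X_i)^2$$

Setting the partial derivatives of the SSR to zero gives the first-order conditions:

$$\frac{\partial SSR}{\partial \hat\beta_0} = -2 \sum_{i=1}^n (Y_i - \hat\beta_0 - \hat\beta_1 X_i) = 0 \;\Rightarrow\; \hat\beta_0 = \bar Y - \hat\beta_1 \bar X$$

$$\frac{\partial SSR}{\partial \hat\beta_1} = -2 \sum_{i=1}^n X_i (Y_i - \hat\beta_0 - \hat\beta_1 X_i) = 0 \;\Rightarrow\; \sum_{i=1}^n X_i \hat u_i = 0$$

The first condition also implies $\sum_{i=1}^n \hat u_i = 0$.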
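The slope formula follows from the two first-order conditions. Substituting $\hat\beta_0 = \bar Y - \hat\beta_1 \bar X$ into the second condition, and then using $\sum_{i=1}^n (X_i - \bar X) = 0$ and $\sum_{i=1}^n (Y_i - \bar Y) = 0$ in the last step:

$$
\begin{aligned}
\sum_{i=1}^n X_i \left[ (Y_i - \bar Y) - \hat\beta_1 (X_i - \bar X) \right] &= 0 \\
\hat\beta_1 = \frac{\sum_{i=1}^n X_i (Y_i - \bar Y)}{\sum_{i=1}^n X_i (X_i - \bar X)} &= \frac{\sum_{i=1}^n (X_i - \bar X)(Y_i - \bar Y)}{\sum_{i=1}^n (X_i - \bar X)^2}
\end{aligned}
$$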

Interpretation of OLS estimator

For proper interpretation of the estimation results, you have to remember

(i) the measurement unit of each variable

(ii) the functional form of the regression


- Measurement unit

Example

$$\widehat{wage} = -0.90 + 0.54\, educ$$

wage is measured in dollars (per hour) and educ is measured in years.

Slope estimate 0.54: one more year of education is expected to raise the hourly wage by 0.54 dollars (54 cents) on average (1976 data).

Intercept estimate -0.90: a person with no education is predicted to earn negative 90 cents per hour, which is a nonsensical result. It arises from the lack of observations with zero education, so the fitted line is unreliable at this low end.

Prediction: the predicted wage of a person with 10 years of education is $4.50/hour, computed by substituting 10 for educ: 4.50 = -0.90 + 0.54 × 10.

- Functional form

$$\widehat{\ln(wage)} = 0.584 + 0.083\, educ$$

wage is measured in dollars and educ is measured in years.

Slope estimate 0.083: one more year of education is expected to increase the hourly wage by approximately 8.3% (100 × 0.083).

Intercept estimate 0.584: a person with no education is expected to receive a wage of about $1.79 = exp(0.584).

Prediction: the predicted wage of a person with 10 years of education is about $4.11/hour, computed by substituting 10 for educ and exponentiating: exp(0.584 + 0.083 × 10) = exp(1.414) ≈ 4.11.
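The numbers in both examples can be reproduced with a few lines of Python. This sketch simply plugs into the two fitted equations from the notes; the function names are hypothetical, and the log-model prediction uses the plain back-transformation exp(fitted log wage), without any adjustment for the error term.

```python
import math


def predict_level(educ):
    """Fitted hourly wage (dollars) from wage = -0.90 + 0.54 educ."""
    return -0.90 + 0.54 * educ


def predict_log(educ):
    """Fitted hourly wage (dollars) from ln(wage) = 0.584 + 0.083 educ,
    converted back to dollars with a plain exp()."""
    return math.exp(0.584 + 0.083 * educ)


print(round(predict_level(10), 2))   # 4.5
print(round(predict_log(10), 2))     # 4.11
print(round(math.exp(0.584), 2))     # 1.79, the intercept in dollars
# Exact proportional effect of one more year in the log model
print(round(math.exp(0.083) - 1, 4))
```

The last line shows that 8.3% is an approximation: the exact proportional change implied by a log coefficient of 0.083 is exp(0.083) - 1, or about 8.7%.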

Unexpected Results

We expect the education level to have a positive effect on the wage, i.e., $\beta_1$ is positive.

What if its estimate is negative, i.e., an unexpected result?

Such a result may be due to misspecification of the model, such as

- omitted explanatory variables

- wrong functional form

- the coefficient (the marginal effect of educ) is not the same for all levels of education.

- and others

What do we have to do?

Example of Phillips Curve

- simple regression model gives a positively sloped Phillips curve.

- this is caused by the shift of the curve over time.

- See Excel file 2.4

A Measure of Goodness of Fit: R-squared ($R^2$)

Example

- Differences in wage rates can be explained partly by the differences in education levels

- Remaining part of differences in wage is due to unobservable random factors (error terms)

Question

- Is this model any good?

- Does the education explain the wage well?

- How much of the differences in wage rates across individuals can be explained by the differences in years of education?

- How can we measure it?

To answer this question, we may compare two models

- One model that uses information on education... unrestricted model

𝑌𝑖 = 𝛽0 + 𝛽1 𝑋𝑖 + 𝑢𝑖

- One model that does not use information on education... restricted model

𝑌𝑖 = 𝛽0 + 𝑢𝑖

The restriction is 𝛽1 = 0

How do we measure the relative performances of these two models?

- The Least Squares estimators are the estimators that make the SSR as small as possible.

- Smaller SSR means a relatively better overall prediction.

- Therefore, we can compare the minimum SSR of the two models

Estimate both models using the OLS method, and let their SSR’s be denoted by

SSRu from the unrestricted model

SSRr from the restricted model

If education has strong explanatory power, we would expect SSRu to be much smaller than SSRr.

What is the percentage reduction in the SSR when the education level is used to predict wages? This reduction, expressed as a fraction rather than a percentage, is called $R^2$:

$$R^2 = 1 - \frac{SSR_u}{SSR_r}, \qquad 0 \le R^2 \le 1$$

A high $R^2$ means that the differences in education have strong explanatory power for the differences in wage rates, and hence that our model is good, and vice versa.

Note: The estimator of $\beta_0$ in the restricted model is $\tilde\beta_0 = \bar Y$. Therefore,

$$SSR_r = \sum_{i=1}^n (Y_i - \bar Y)^2$$

This is called the total sum of squares (TSS), and $SSR_u$ is the usual SSR that we discussed before. Therefore, $R^2$ is usually written as

$$R^2 = 1 - \frac{SSR}{TSS} = \frac{TSS - SSR}{TSS} = \frac{SSE}{TSS}$$

where SSE = explained sum of squares.
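As a minimal sketch, the restricted and unrestricted SSRs can be computed directly and combined into $R^2$. The data are hypothetical, and `r_squared` is a made-up helper name.

```python
def r_squared(xs, ys):
    """R^2 = 1 - SSR_u / SSR_r, where SSR_r = TSS (restricted model Y = b0 + u)."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    b1 = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
          / sum((x - x_bar) ** 2 for x in xs))
    b0 = y_bar - b1 * x_bar
    ssr_u = sum((y - b0 - b1 * x) ** 2 for x, y in zip(xs, ys))  # unrestricted SSR
    ssr_r = sum((y - y_bar) ** 2 for y in ys)                    # restricted: b0_tilde = Y_bar
    return 1 - ssr_u / ssr_r


xs = [1.0, 2.0, 3.0, 4.0, 5.0]   # hypothetical data
ys = [2.0, 3.0, 5.0, 4.0, 6.0]
print(round(r_squared(xs, ys), 4))   # 0.81
```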

Sampling Variations of OLS Estimator

A simple regression model: $Y_i = \beta_0 + \beta_1 X_i + u_i$

Collect a data set and estimate the parameters: $\hat\beta_0, \hat\beta_1$.

Collect another data set and estimate the parameters again. These estimates will differ from the previous ones.

Repeat the procedure to obtain more, different estimated values.

What can we say about these different estimated values of the same unknown parameters?

What is the average of these estimates?

What is the dispersion of these estimates?

Basic facts

$u_i$ is a random variable and $Y_i$ is a function of $u_i$. Therefore, $Y_i$ is also a random variable.

$X_i$ can be a random variable too.

The estimators

$$\hat\beta_0 = \bar Y - \hat\beta_1 \bar X, \qquad \hat\beta_1 = \frac{\sum_{i=1}^n (X_i - \bar X)(Y_i - \bar Y)}{\sum_{i=1}^n (X_i - \bar X)^2}$$

are functions of the $Y_i$ (and the $X_i$), so they are random variables too.

Therefore, the estimates vary from sample to sample (with the realized values of the error terms).
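Sampling variation is easy to see by simulation. The sketch below assumes a true model with $\beta_0 = 1$, $\beta_1 = 0.5$, X drawn uniformly on [0, 20], and normal errors with standard deviation 2; all of these settings, and the function names, are illustrative assumptions. Each replication draws a fresh sample and re-estimates the slope.

```python
import random
import statistics


def ols_slope(xs, ys):
    """OLS slope: sum of cross-deviations over sum of squared X deviations."""
    x_bar, y_bar = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    return sxy / sxx


def simulate(beta0=1.0, beta1=0.5, n=50, reps=2000, seed=0):
    """Draw `reps` independent samples of size n from Y = beta0 + beta1*X + u
    (assumed: X ~ Uniform(0, 20), u ~ Normal(0, 2^2)) and return the OLS
    slope estimate from each sample."""
    rng = random.Random(seed)
    slopes = []
    for _ in range(reps):
        xs = [rng.uniform(0, 20) for _ in range(n)]
        ys = [beta0 + beta1 * x + rng.gauss(0, 2) for x in xs]
        slopes.append(ols_slope(xs, ys))
    return slopes


slopes = simulate()
print(statistics.fmean(slopes))   # average of the estimates, close to 0.5
print(statistics.stdev(slopes))   # dispersion across samples
```

The average of the slope estimates should land close to 0.5, while their standard deviation measures the dispersion asked about above.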

Desired properties of estimators

Unbiasedness: the expected value (the average over repeated samples) of an estimator is equal to the true parameter value.

Precision of estimators: the smallest dispersion.

If an estimator is unbiased and has the smallest dispersion among all unbiased estimators, it is called the best unbiased estimator.

Can we claim that the OLS estimators are the best unbiased estimators?

The answer is yes under certain conditions.

Assumption 1. Conditional distribution of ui given Xi has a zero mean.

Assumption 2. (Xi,Yi) are independently and identically distributed.

Assumption 3. Large outliers are unlikely.

Under these assumptions, together with homoscedastic errors (discussed below), the OLS estimators are BLUE (Best Linear Unbiased Estimators).

To understand these concepts, we will do a very brief review of basic statistical theory.

Review of Statistical Theory

Random Experiment: an experiment whose outcome cannot be predicted with certainty.

Probability: a numerical measure of the relative likelihood (frequency) of the various outcomes of a random experiment. It takes a value in the closed interval [0,1].

Toss a coin with an outcome of either Head (H) or Tail (T). Let

θ = prob(H), and 1 − θ = prob(T).

If θ = 1/2, it is a fair coin.

Define a random variable Y

Y=1 if the coin lands on H

Y=0 if the coin lands on T

Probability density function (pdf) of Y: f(y)

f(y) = P(Y = y): f(1) = θ, f(0) = 1 − θ

Graph of the pdf

Cumulative distribution function (cdf): F(y)

F(y) = P(Y ≤ y)

F(y) = 0 if y < 0

= 1 − θ if 0 ≤ y < 1

= 1 if y ≥ 1

Graph of the cdf

Moments of random variable Y: Expected value and Variance

Summary value of the characteristics of the probability distribution

Mean or Expected value of Y

The expected value of Y is a measure of the location of the “center” of the probability distribution

It is a probability weighted average of the outcomes of Y

μ = E(Y) = 1 × θ + 0 × (1 − θ) = θ

Variance and standard deviation of Y

The variance is a measure of the degree of dispersion, or spread, of the random outcomes around the mean:

$$\sigma^2 = var(Y) = E[(Y - \mu)^2] = E[Y^2 - 2\mu Y + \mu^2] = E(Y^2) - \mu^2$$

$$\sigma = \sqrt{\sigma^2}$$

For the example above,

$$\sigma^2 = \theta(1 - \mu)^2 + (1 - \theta)(0 - \mu)^2 = \theta(1 - \theta)^2 + (1 - \theta)(0 - \theta)^2 = \theta(1 - \theta)$$

This shows that the variance is the probability-weighted average of the squared distances of the outcomes from their mean.

Example: more than two outcomes

Toss two balanced coins (or a balanced coin twice)

Potential outcomes: {HH}, {HT}, {TH}, {TT}

Each outcome is equally likely, and hence the probability of each outcome is 1/4

You win a prize in dollars that is equal to the number of heads

Let Y denote the amount of prize that you can win

Y can take $2, $1, $1, and $0

Expected prize:

μ = E(Y) = (1/4)(2) + (1/4)(1) + (1/4)(1) + (1/4)(0) = 1

Variance of prize:

σ² = var(Y) = (1/4)(2 − 1)² + (1/4)(1 − 1)² + (1/4)(1 − 1)² + (1/4)(0 − 1)² = 1/2
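The probability-weighted sums above can be checked with exact fractions. This sketch (the helper `moments` is a hypothetical name) enumerates the four equally likely outcomes, builds the distribution of the prize, and also reproduces the single-coin result σ² = θ(1 − θ) for an arbitrary illustrative value of θ.

```python
from fractions import Fraction
from itertools import product


def moments(pmf):
    """Mean and variance of a discrete random variable given as {value: probability}."""
    mu = sum(p * y for y, p in pmf.items())
    var = sum(p * (y - mu) ** 2 for y, p in pmf.items())
    return mu, var


# Two fair coins: HH, HT, TH, TT each have probability 1/4; prize = number of heads.
pmf = {}
for outcome in product("HT", repeat=2):
    prize = outcome.count("H")
    pmf[prize] = pmf.get(prize, 0) + Fraction(1, 4)
print(moments(pmf))   # (Fraction(1, 1), Fraction(1, 2))

# One coin with prob(H) = theta; theta = 3/10 is an arbitrary illustrative value.
theta = Fraction(3, 10)
print(moments({1: theta, 0: 1 - theta}))   # mean theta, variance theta * (1 - theta)
```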

Joint Distribution of two random variables

Toss a fair coin twice

Possible outcomes: {HH}, {HT}, {TH}, {TT}

equally likely: probability of each outcome is ¼

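Two prizes X and Y are specified below; the entries of the table are the joint probabilities f(x, y).

| | Y = -2 {TT} | Y = 0 {HT or TH} | Y = 2 {HH} | f(x) |
|---|---|---|---|---|
| X = -1 {HT or TH} | 0 | 1/2 | 0 | 1/2 |
| X = 2 {HH or TT} | 1/4 | 0 | 1/4 | 1/2 |
| f(y) | 1/4 | 1/2 | 1/4 | 1 |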

Jane is given ticket Y and John is given ticket X.

Probability of an individual random variable: marginal probability

What are the probabilities that Jane wins $-2 (i.e., loses $2)? $0? $2?

What are the probabilities that John wins $2? Loses $1?

Joint probability

(i) What is the probability that both win $2?

This happens only if {HH}, and its probability is 1/4.

(ii) What is the probability that John wins $2 and Jane loses $2?

This happens only if {TT}, and its probability is 1/4.

The last column shows the marginal probability density of X

The last row shows the marginal probability density of Y

$\mu_x = 0.5$, $\sigma_x^2 = E(X^2) - \mu_x^2 = 2.5 - 0.25 = 2.25$

$\mu_y = 0.0$, $\sigma_y^2 = E(Y^2) - \mu_y^2 = 2.0 - 0.0 = 2.0$

Covariance of two random variables X and Y

A measure of the degree of covariation of the two random variables

It is computed by

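$$\sigma_{xy} = cov(X,Y) = E[(X - \mu_x)(Y - \mu_y)] = E(XY) - E(X)\mu_y - \mu_x E(Y) + \mu_x \mu_y = E(XY) - \mu_x \mu_y$$

In the example above, $E(XY) = (2)(-2)(1/4) + (2)(2)(1/4) = -1 + 1 = 0$, so $\sigma_{xy} = E(XY) - \mu_x \mu_y = 0 - (0.5)(0) = 0$.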
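The marginal densities, means, variances, and covariance of the two tickets can be computed from the joint table in a few lines. This is a sketch using exact fractions; the dictionary `joint` lists only the nonzero cells of the table, and the helper names are hypothetical.

```python
from fractions import Fraction as F

# Nonzero cells f(x, y) of the joint table (X = John's prize, Y = Jane's prize)
joint = {(-1, 0): F(1, 2), (2, -2): F(1, 4), (2, 2): F(1, 4)}


def marginal(joint, axis):
    """Marginal density: sum joint probabilities over the other variable
    (axis 0 gives f(x), axis 1 gives f(y))."""
    out = {}
    for xy, p in joint.items():
        out[xy[axis]] = out.get(xy[axis], 0) + p
    return out


def expect(joint, g):
    """E[g(X, Y)] as a probability-weighted sum over the cells."""
    return sum(p * g(x, y) for (x, y), p in joint.items())


mu_x = expect(joint, lambda x, y: x)
mu_y = expect(joint, lambda x, y: y)
var_x = expect(joint, lambda x, y: x * x) - mu_x ** 2
var_y = expect(joint, lambda x, y: y * y) - mu_y ** 2
cov_xy = expect(joint, lambda x, y: x * y) - mu_x * mu_y
print(marginal(joint, 0), marginal(joint, 1))
print(mu_x, var_x, mu_y, var_y, cov_xy)   # 1/2 9/4 0 2 0
```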

Covariance can take a value of positive, negative or zero.

The value of the covariance depends on the measurement unit: a covariance of 2 becomes 20000 if the measurement unit changes from dollars to cents.

Let X and Y be measured in dollars, and let W and Z be the same amounts measured in cents. Then W = 100X and Z = 100Y. Therefore,

$$\mu_w = E(W) = 100E(X), \qquad \mu_z = E(Z) = 100E(Y)$$

$$cov(W,Z) = E[(100X - 100\mu_x)(100Y - 100\mu_y)] = 10000\, E[(X - \mu_x)(Y - \mu_y)] = 10000\, cov(X,Y)$$

Correlation Coefficient of X and Y

To avoid the dependence of the covariance on the measurement unit, we standardize it.

It is computed by

$$\rho_{xy} = corr(X,Y) = \frac{cov(X,Y)}{sd(X)\, sd(Y)} = \frac{\sigma_{xy}}{\sigma_x \sigma_y}$$

Its value lies between -1 and 1:

𝜌𝑥𝑦 =+1: X and Y are perfectly positively correlated

𝜌𝑥𝑦 =-1: X and Y are perfectly negatively correlated

𝜌𝑥𝑦 =0: X and Y are uncorrelated

Conditional Probability and Conditional Moments


Suppose that you are told that X = -1 (i.e., that the outcome is either TH or HT).

What is the probability of Y = -2? That is, when the outcome is known to be either TH or HT, what is the probability that the outcome is {TT}? It is zero, because {TT} cannot occur when the outcome is either TH or HT.

Similar reasoning gives P(Y=2|X=-1) = 0 and P(Y=0|X=-1) = 1, and

P(Y=-2|X=2) = 1/2, P(Y=0|X=2) = 0, P(Y=2|X=2) = 1/2

Conditional probability is denoted by f(y|x) = P(Y=y|X=x).

The conditional probability density is computed by

$$f(y|x) = \frac{f(x,y)}{f(x)}, \qquad f(x) \neq 0$$

To understand this formula, suppose Y takes the values $y_1$, $y_2$, and $y_3$. For a given X = x, we wish to find $f(y_1|x)$, $f(y_2|x)$, and $f(y_3|x)$. These conditional probabilities must satisfy two properties:

(1) They must sum to 1: $f(y_1|x) + f(y_2|x) + f(y_3|x) = 1$

(2) They must maintain the relative probabilities of the outcomes of Y:

$$\frac{f(y_i|x)}{f(y_j|x)} = \frac{f(x,y_i)}{f(x,y_j)}$$

The joint probabilities of course satisfy property 2, but their sum is not equal to 1: it is the marginal probability f(x). Therefore, we divide them by f(x), so that

$$f(y_1|x) + f(y_2|x) + f(y_3|x) = \frac{f(x,y_1)}{f(x)} + \frac{f(x,y_2)}{f(x)} + \frac{f(x,y_3)}{f(x)} = \frac{f(x,y_1) + f(x,y_2) + f(x,y_3)}{f(x)} = \frac{f(x)}{f(x)} = 1$$

The mean and the variance computed from the conditional probabilities are called the conditional mean (or conditional expected value) and the conditional variance.

E(Y|X=-1) = (-2)×0 + 0×1 + 2×0 = 0

E(Y|X=2) = (-2)×1/2 + 0×0 + 2×1/2 = 0
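Conditional probabilities are joint probabilities rescaled by f(x), as the sketch below shows for the same ticket example (only nonzero cells are stored; the helper names are hypothetical).

```python
from fractions import Fraction as F

# Nonzero cells f(x, y) of the joint table
joint = {(-1, 0): F(1, 2), (2, -2): F(1, 4), (2, 2): F(1, 4)}


def conditional_y(joint, x):
    """Conditional density f(y | x) = f(x, y) / f(x), listed for cells with f(x, y) > 0."""
    fx = sum(p for (xi, _), p in joint.items() if xi == x)
    if fx == 0:
        raise ValueError("f(x) must be nonzero")
    return {y: p / fx for (xi, y), p in joint.items() if xi == x}


def conditional_mean_y(joint, x):
    """E(Y | X = x) computed from the conditional density."""
    return sum(y * p for y, p in conditional_y(joint, x).items())


print(conditional_y(joint, -1), conditional_mean_y(joint, -1))  # f(0|-1) = 1, mean 0
print(conditional_y(joint, 2), conditional_mean_y(joint, 2))    # f(-2|2) = f(2|2) = 1/2, mean 0
```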

Statistical Independence of Random Variables

Random variables X and Y are statistically independent if information on X does not change the probability distribution of Y, i.e., P(Y=y|X=x) = P(Y=y) for all x and y.

Equivalently, X and Y are independent if f(x,y) = f(x)f(y) for all x and y, which implies f(y|x) = f(y).


We have shown that X and Y are uncorrelated. However, they are not statistically independent. This is easily verified by checking whether the joint probabilities are products of the marginal probabilities: for example, f(X=-1, Y=-2) = 0, whereas f(X=-1)f(Y=-2) = 1/2 × 1/4 = 1/8.
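The check described above, comparing every joint probability with the product of the marginals, can be automated. This sketch reuses the ticket example (with hypothetical helper names) and confirms that the covariance is zero while independence fails.

```python
from fractions import Fraction as F
from itertools import product

# Nonzero cells f(x, y) of the joint table; missing cells have probability 0
joint = {(-1, 0): F(1, 2), (2, -2): F(1, 4), (2, 2): F(1, 4)}
x_vals, y_vals = (-1, 2), (-2, 0, 2)
fx = {x: sum(joint.get((x, y), 0) for y in y_vals) for x in x_vals}
fy = {y: sum(joint.get((x, y), 0) for x in x_vals) for y in y_vals}


def independent(joint, fx, fy):
    """True only if f(x, y) = f(x) f(y) holds in every cell of the table."""
    return all(joint.get((x, y), 0) == fx[x] * fy[y] for x, y in product(fx, fy))


e_x = sum(p * x for (x, _), p in joint.items())
e_y = sum(p * y for (_, y), p in joint.items())
cov = sum(p * x * y for (x, y), p in joint.items()) - e_x * e_y
print(cov, independent(joint, fx, fy))   # 0 False
# One failing cell: f(-1, -2) = 0 but f(-1) f(-2) = 1/8
print(joint.get((-1, -2), 0), fx[-1] * fy[-2])
```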

Moments of functions of random variables

Consider two random variables X and Y, and let a, b, c and d be constants.

(i) Let Z = a + bX. Then $E(Z) = E(a + bX) = a + bE(X)$ and $\sigma_z^2 = var(Z) = b^2 \sigma_x^2$.

Prove these results.

(ii) Let Z = a + bX + cY. Then

$$E(Z) = E(a + bX + cY) = a + bE(X) + cE(Y)$$

$$\sigma_z^2 = b^2 \sigma_x^2 + c^2 \sigma_y^2 + 2bc\, \sigma_{xy}$$

If X and Y are uncorrelated (or independent), then

$$\sigma_z^2 = b^2 \sigma_x^2 + c^2 \sigma_y^2$$

Prove these results.

(iii) $cov(a + bX, c + dY) = bd\, cov(X,Y)$
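Results (ii) and (iii) can be spot-checked on any discrete joint distribution. The sketch below uses a small hypothetical distribution with nonzero covariance and arbitrary constants; it is a numerical check with exact fractions, not a proof.

```python
from fractions import Fraction as F

# Hypothetical joint distribution f(x, y) with nonzero covariance
joint = {(0, 0): F(1, 2), (1, 0): F(1, 4), (1, 1): F(1, 4)}


def E(g):
    """Expectation of g(X, Y) under `joint`."""
    return sum(p * g(x, y) for (x, y), p in joint.items())


def var(g):
    """Variance of g(X, Y)."""
    m = E(g)
    return E(lambda x, y: (g(x, y) - m) ** 2)


def cov(g, h):
    """Covariance of g(X, Y) and h(X, Y)."""
    mg, mh = E(g), E(h)
    return E(lambda x, y: (g(x, y) - mg) * (h(x, y) - mh))


def X(x, y):
    return x


def Y(x, y):
    return y


a, b, c, d = 3, 2, -1, 5   # arbitrary constants

# (ii) var(a + bX + cY) = b^2 var(X) + c^2 var(Y) + 2bc cov(X, Y)
lhs = var(lambda x, y: a + b * x + c * y)
rhs = b ** 2 * var(X) + c ** 2 * var(Y) + 2 * b * c * cov(X, Y)
print(lhs, rhs, lhs == rhs)
# (iii) cov(a + bX, c + dY) = bd cov(X, Y)
lhs3 = cov(lambda x, y: a + b * x, lambda x, y: c + d * y)
print(lhs3, b * d * cov(X, Y), lhs3 == b * d * cov(X, Y))
```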


Desired Properties of Estimators

Toss a coin n times, and let $X_i$ denote the outcome of the i-th toss:

$X_i = 1$ if H and $X_i = 0$ if T.

The coin is not necessarily balanced. Let θ = prob(H).

We decide to estimate θ by the fraction of heads in n tosses:

$$\hat\theta = \frac{\sum_{i=1}^n X_i}{n} = \frac{\#\text{ of heads}}{n}$$

What can we say about the statistical properties of this estimator?

(a) $E(\hat\theta) = \theta$ for any θ

(b) $var(\hat\theta) = \dfrac{\theta(1-\theta)}{n}$

Unbiased Estimator: an estimator that satisfies property (a) is called an unbiased estimator.

bias = $E(\hat\theta) - \theta$

Best Estimator: an unbiased estimator that has the smallest variance among all unbiased estimators is called the best unbiased estimator.

Alternative unbiased estimator

$$\tilde\theta = \frac{X_1 + X_3 + X_5}{3}$$

$$E(\tilde\theta) = \frac{E(X_1) + E(X_3) + E(X_5)}{3} = \theta$$

so $\tilde\theta$ is also an unbiased estimator.

$$var(\tilde\theta) = \frac{\theta(1-\theta)}{3}$$

If n > 3, then $\hat\theta$ has a smaller variance than $\tilde\theta$.
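A simulation makes the variance comparison concrete. Assuming θ = 0.3 and n = 20 (illustrative values), the sketch draws many sets of tosses and compares the average and variance of $\hat\theta$ and $\tilde\theta$ with the formulas above.

```python
import random
import statistics


def compare(theta=0.3, n=20, reps=20000, seed=1):
    """Simulate theta_hat = (#heads)/n and theta_tilde = (X1 + X3 + X5)/3.
    theta, n, reps and seed are illustrative choices (n must be at least 5)."""
    rng = random.Random(seed)
    hats, tildes = [], []
    for _ in range(reps):
        tosses = [1 if rng.random() < theta else 0 for _ in range(n)]
        hats.append(sum(tosses) / n)
        tildes.append((tosses[0] + tosses[2] + tosses[4]) / 3)  # X1, X3, X5
    return hats, tildes


hats, tildes = compare()
print(statistics.fmean(hats), statistics.fmean(tildes))   # both near theta = 0.3
print(statistics.pvariance(hats), 0.3 * 0.7 / 20)         # vs theta(1-theta)/n
print(statistics.pvariance(tildes), 0.3 * 0.7 / 3)        # vs theta(1-theta)/3
```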

Statistical Properties of OLS estimators

Linear model: $Y_i = \beta_0 + \beta_1 X_i + u_i$

OLS estimators:

$$\hat\beta_0 = \bar Y - \hat\beta_1 \bar X, \qquad \hat\beta_1 = \frac{\sum_{i=1}^n (X_i - \bar X)(Y_i - \bar Y)}{\sum_{i=1}^n (X_i - \bar X)^2}$$

Best Linear Unbiased Estimator (BLUE)

An estimator is called the best linear unbiased estimator if it is linear in the $Y_i$, is unbiased, and has the smallest variance among all linear unbiased estimators.

Assumption 1. Conditional distribution of ui given Xi has a zero mean.

Assumption 2. (Xi,Yi) are independently and identically distributed.

Assumption 3. Large outliers are unlikely.

Assumption 1. $E(u_i|X_i) = 0 \Rightarrow E(Y_i|X_i) = \beta_0 + \beta_1 X_i$, and, by the law of iterated expectations,

$$E(u_i) = E[E(u_i|X_i)] = E[0] = 0$$

Example of individuals' wage rates: individuals with 12 years of education ($X_i = 12$) earn $\beta_0 + 12\beta_1$ on average. Some individuals earn more and some earn less than this average, and the average of the deviations from the mean is zero.

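Violation of Assumption 1.

The classical case is the simultaneous equations model, e.g., the supply-demand equilibrium model:

$$Q^s = a + bP + u^s, \qquad Q^d = \alpha - \beta P + u^d, \qquad Q = Q^s = Q^d$$

Solving for the equilibrium price gives

$$P = \frac{\alpha - a}{b + \beta} + \frac{u^d - u^s}{b + \beta}$$

so P depends on both error terms. Rearranging the equilibrium condition,

$$u^d = (a - \alpha) + (b + \beta)P + u^s$$

$$E(u^d|P) = (a - \alpha) + (b + \beta)P + E(u^s|P)$$

which in general varies with P, so $E(u^d|P) \neq 0$ and Assumption 1 fails for the demand equation.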

Feedback Policy

GDP growth depends on the interest rate, and the monetary authority sets the target interest rate considering GDP growth.

The Connecticut Huskies won their 100th consecutive game.

The performance of a team depends on the quality of its players. The quality of new players also depends on the performance of the team.

Omitted variable. An omitted variable may cause correlation between the error term and the explanatory variable. Consider the regression of the percentage of 10th graders who pass the math exam (math) on the percentage of students who are eligible for the free lunch program (lunch). The regression result is

$$\widehat{math} = 32.14 - 0.319\, lunch, \qquad n = 408, \quad R^2 = 0.171$$

This indicates that a 10 percentage point increase in the share of students eligible for free lunch reduces the passing percentage by about 3.19 percentage points. A policy implication would be that the government should tighten the eligibility criteria to increase the passing percentage. This conclusion does not seem right. It is likely that the explanatory variable (the percentage of eligible students) is correlated with the poverty level, school quality, and school resources, which are contained in the error term. This makes the OLS estimator biased.
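The mechanism can be illustrated with simulated data. The sketch below assumes a hypothetical data-generating process in which an omitted variable Z (think of poverty) lowers the outcome and is positively correlated with the regressor X. Regressing Y on X alone then mixes the effect of Z into the slope.

```python
import random
import statistics


def ols_slope(xs, ys):
    """OLS slope from a simple regression of ys on xs."""
    x_bar, y_bar = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    return sxy / sum((x - x_bar) ** 2 for x in xs)


rng = random.Random(42)
n = 20000
# Hypothetical process: Z is omitted from the regression and correlated with X.
zs = [rng.gauss(0, 1) for _ in range(n)]
xs = [0.8 * z + rng.gauss(0, 1) for z in zs]              # cov(X, Z) = 0.8, var(X) = 1.64
ys = [1.0 + 0.5 * x - 2.0 * z + rng.gauss(0, 1)           # true effect of X is +0.5
      for x, z in zip(xs, zs)]

short_slope = ols_slope(xs, ys)                            # regression of Y on X only
print(short_slope)                                         # near 0.5 - 2.0 * 0.8 / 1.64
```

For this setup the short-regression slope settles near $\beta_1 + \beta_Z\, cov(X,Z)/var(X) = 0.5 - 2(0.8)/1.64 \approx -0.48$, so the estimated effect even has the wrong sign, which is the kind of unexpected result discussed earlier.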

Assumption 2.

This assumption means that the sample is drawn randomly. This is possible in experiments, but with observational data we can only hope that the observations are reasonably independent.

Independence of $Y_i$ and $Y_j$ means that $u_i$ and $u_j$ are independent; that is, the $u_i$'s are i.i.d. random variables, with

$$E(u_i|X_i) = E(u_i) = 0$$

Homoscedasticity:

$$var(u_i|X_i) = var(u_i) = \sigma_u^2$$

Heteroscedasticity:

$$var(u_i|X_i) = \sigma_i^2$$

Theorem. Under Assumptions 1, 2, and 3, together with homoscedasticity, the OLS estimators of the coefficients are BLUE.

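Proof of unbiasedness

(a) The OLS estimators can be written as

$$\hat\beta_1 = \beta_1 + \sum_{i=1}^n w_i u_i, \qquad w_i = \frac{X_i - \bar X}{\sum_{j=1}^n (X_j - \bar X)^2}$$

$$\hat\beta_0 = \bar Y - \hat\beta_1 \bar X = (\beta_0 + \beta_1 \bar X + \bar u) - \hat\beta_1 \bar X = \beta_0 - (\hat\beta_1 - \beta_1)\bar X + \bar u$$

Proof.

Note that

$$\bar Y = \beta_0 + \beta_1 \bar X + \bar u$$

$$\sum_{i=1}^n (X_i - \bar X)\bar Y = \bar Y \sum_{i=1}^n (X_i - \bar X) = 0, \quad \text{and likewise} \quad \sum_{i=1}^n (X_i - \bar X)\beta_0 = 0$$

$$\sum_{i=1}^n (X_i - \bar X) X_i = \sum_{i=1}^n (X_i - \bar X)(X_i - \bar X) = \sum_{i=1}^n (X_i - \bar X)^2$$

Using these relationships, we can write

$$\sum_{i=1}^n (X_i - \bar X)(Y_i - \bar Y) = \sum_{i=1}^n (X_i - \bar X) Y_i = \sum_{i=1}^n (X_i - \bar X)(\beta_0 + \beta_1 X_i + u_i) = \beta_1 \sum_{i=1}^n (X_i - \bar X) X_i + \sum_{i=1}^n (X_i - \bar X) u_i = \beta_1 \sum_{i=1}^n (X_i - \bar X)^2 + \sum_{i=1}^n (X_i - \bar X) u_i$$

Therefore,

$$\hat\beta_1 = \frac{\beta_1 \sum_{i=1}^n (X_i - \bar X)^2 + \sum_{i=1}^n (X_i - \bar X) u_i}{\sum_{i=1}^n (X_i - \bar X)^2} = \beta_1 + \frac{\sum_{i=1}^n (X_i - \bar X) u_i}{\sum_{i=1}^n (X_i - \bar X)^2} = \beta_1 + \sum_{i=1}^n w_i u_i$$

Taking conditional expectations, and using $E(u_i|X_1, \dots, X_n) = E(u_i|X_i) = 0$ (Assumptions 1 and 2),

$$E(\hat\beta_1 | X_1, \dots, X_n) = \beta_1 + \sum_{i=1}^n w_i E(u_i | X_1, \dots, X_n) = \beta_1$$

$$E(\hat\beta_0 | X_1, \dots, X_n) = \beta_0 - E[(\hat\beta_1 - \beta_1)\bar X | X_1, \dots, X_n] + E[\bar u | X_1, \dots, X_n] = \beta_0$$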
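The decomposition $\hat\beta_1 = \beta_1 + \sum w_i u_i$ is an exact algebraic identity, which a simulation can confirm because there the error terms are known. The parameter values and sample below are hypothetical.

```python
import random

rng = random.Random(7)
beta0, beta1 = 2.0, 0.3                         # hypothetical true parameters
xs = [rng.uniform(8, 20) for _ in range(30)]    # hypothetical regressor values
us = [rng.gauss(0, 1.5) for _ in xs]            # error terms, known only in a simulation
ys = [beta0 + beta1 * x + u for x, u in zip(xs, us)]

n = len(xs)
x_bar, y_bar, u_bar = sum(xs) / n, sum(ys) / n, sum(us) / n
sxx = sum((x - x_bar) ** 2 for x in xs)
b1_hat = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
b0_hat = y_bar - b1_hat * x_bar

w = [(x - x_bar) / sxx for x in xs]             # weights w_i
b1_decomp = beta1 + sum(wi * ui for wi, ui in zip(w, us))
b0_decomp = beta0 - (b1_hat - beta1) * x_bar + u_bar
print(b1_hat, b1_decomp)   # equal up to floating-point rounding
print(b0_hat, b0_decomp)
```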

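Theorem. Variances and covariance of the coefficient estimators under homoscedasticity

Under the assumptions listed above, the variances and covariance of the OLS estimators are given by

$$\sigma^2_{\hat\beta_0} = \sigma_u^2 Q_0, \qquad \sigma^2_{\hat\beta_1} = \sigma_u^2 Q_1$$

$$\sigma_{\hat\beta_0, \hat\beta_1} = cov(\hat\beta_0, \hat\beta_1) = -\bar X\, var(\hat\beta_1) = -\sigma_u^2 \bar X Q_1$$

where

$$Q_0 = \frac{\sum_{i=1}^n X_i^2}{n \sum_{i=1}^n (X_i - \bar X)^2}, \qquad Q_1 = \frac{1}{\sum_{i=1}^n (X_i - \bar X)^2}$$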

Remarks:

1. The estimators become less precise (i.e., have higher variances) when there is more uncertainty in the error term (i.e., a higher value of $\sigma_u^2$).

2. The estimators become more precise (i.e., have lower variances) when the regressor X is more widely dispersed around its mean, i.e., when $\sum_{i=1}^n (X_i - \bar X)^2$ is larger.

3. The estimators become more precise (i.e., have lower variances) as the sample size n increases.

4. The variance of the intercept estimator increases when the values of the regressor X are far from the origin 0.

5. $\hat\beta_0$ and $\hat\beta_1$ are negatively (positively) correlated if $\bar X$ is positive (negative), because the regression line must pass through the point of sample means $(\bar X, \bar Y)$.

6. The variances of the coefficient estimators are unknown because they involve $\sigma_u^2$, which is unknown. To compute them, we need an estimator of $\sigma_u^2$.
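The variance formulas can be checked by Monte Carlo. This sketch holds the regressor fixed at $X_i = 1, \dots, 20$ (so the results are conditional on X, as in the theorem), assumes $\sigma_u = 2$ and true coefficients 1 and 0.5, and compares the simulated variances and covariance of the estimates with $\sigma_u^2 Q_0$, $\sigma_u^2 Q_1$, and $-\sigma_u^2 \bar X Q_1$. All settings are illustrative.

```python
import random
import statistics

rng = random.Random(3)
sigma_u = 2.0                                   # assumed error standard deviation
xs = [float(x) for x in range(1, 21)]           # regressor held fixed: X_i = 1, ..., 20
n = len(xs)
x_bar = sum(xs) / n
sxx = sum((x - x_bar) ** 2 for x in xs)
Q0 = sum(x * x for x in xs) / (n * sxx)
Q1 = 1 / sxx

b0s, b1s = [], []
for _ in range(20000):
    ys = [1.0 + 0.5 * x + rng.gauss(0, sigma_u) for x in xs]   # assumed true line
    y_bar = sum(ys) / n
    b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    b1s.append(b1)
    b0s.append(y_bar - b1 * x_bar)

m0, m1 = statistics.fmean(b0s), statistics.fmean(b1s)
cov01 = sum((a - m0) * (b - m1) for a, b in zip(b0s, b1s)) / len(b0s)
print(statistics.pvariance(b0s), sigma_u ** 2 * Q0)
print(statistics.pvariance(b1s), sigma_u ** 2 * Q1)
print(cov01, -x_bar * sigma_u ** 2 * Q1)
```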

Least squares estimator of $\sigma_u^2$

The variances of the least squares estimators of the coefficients and their covariance cannot be computed from the given data because $\sigma_u^2$ is unknown. How do we estimate it?

- $\sigma_u^2$ is the variance of the error term: $\sigma_u^2 = var(u_i) = E[(u_i - E(u_i))^2] = E(u_i^2)$.

- The expected value is the theoretical counterpart of the sample mean.

- If we had observed data on $u_i$, we could estimate the variance by the sample mean $\sum_{i=1}^n u_i^2 / n$.

- Since we do not observe the error terms, we use their estimates $\hat u_i$ and compute $\hat u_i^2$.

- A problem is that the residuals $\hat u_i$ cannot all take independent values. For example, $\sum_{i=1}^n \hat u_i = 0$. This means that if we know the values of the first n−1 residuals, the last one is automatically determined. This is called the loss of degrees of freedom.

- The number of degrees of freedom lost is equal to the number of parameters we estimate.

- In the simple linear regression model, it is two (the residuals satisfy both $\sum_{i=1}^n \hat u_i = 0$ and $\sum_{i=1}^n X_i \hat u_i = 0$).

- When we add up all the squared residuals, we are actually adding only n−2 independent values.

- The degrees of freedom are therefore n−2.

- We therefore estimate the variance by dividing the sum of squared residuals by n−2:

$$\hat\sigma_u^2 = \frac{\sum_{i=1}^n \hat u_i^2}{n - 2}$$

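Estimated variances and covariance of the coefficient estimators are obtained by replacing the unknown $\sigma_u^2$ with its unbiased estimate $\hat\sigma_u^2$:

$$\hat\sigma^2_{\hat\beta_0} = \hat\sigma_u^2 Q_0, \qquad \hat\sigma^2_{\hat\beta_1} = \hat\sigma_u^2 Q_1, \qquad \hat\sigma_{\hat\beta_0, \hat\beta_1} = -\bar X \hat\sigma^2_{\hat\beta_1}$$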
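Putting the pieces together, here is a minimal sketch of the full computation: estimate the coefficients, compute $\hat\sigma_u^2$ with n − 2 degrees of freedom, and plug it into $Q_0$ and $Q_1$. The function name `ols_with_se`, the output keys, and the data are hypothetical.

```python
import math


def ols_with_se(xs, ys):
    """OLS estimates, the sigma_u^2 estimate with n - 2 degrees of freedom,
    and estimated standard errors of beta0_hat and beta1_hat (homoscedastic formulas)."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    b0 = y_bar - b1 * x_bar
    resid = [y - b0 - b1 * x for x, y in zip(xs, ys)]
    s2 = sum(e * e for e in resid) / (n - 2)          # sigma_u^2 hat
    q0 = sum(x * x for x in xs) / (n * sxx)
    q1 = 1 / sxx
    return {
        "b0": b0, "b1": b1, "sigma2_u": s2,
        "se_b0": math.sqrt(s2 * q0), "se_b1": math.sqrt(s2 * q1),
        "cov_b0_b1": -x_bar * s2 * q1,
    }


xs = [1.0, 2.0, 3.0, 4.0, 5.0]   # hypothetical data
ys = [2.0, 3.0, 5.0, 4.0, 6.0]
print(ols_with_se(xs, ys))
```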

Remark: Goodness of Fit $R^2$

The idea of the $R^2$ measure of goodness of fit was to compare the SSR in models with and without information about the regressor and to measure the fractional reduction in the SSR:

$$R^2 = \frac{SSR_r - SSR_u}{SSR_r} = 1 - \frac{SSR_u}{SSR_r}$$

Another idea is to see how close the predicted values $\hat Y_i$ are to the observed $Y_i$. The closeness is measured by the sample correlation coefficient or its squared value:

$$corr(Y_i, \hat Y_i) = \frac{cov(Y_i, \hat Y_i)}{SD(Y_i)\, SD(\hat Y_i)}, \qquad R^2 = [corr(Y_i, \hat Y_i)]^2 = \frac{[cov(Y_i, \hat Y_i)]^2}{var(Y_i)\, var(\hat Y_i)}$$

To show that the last expression is the same as the previous expression for $R^2$, we first show

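$$\sum_{i=1}^n \hat u_i = \sum_{i=1}^n (Y_i - \hat\beta_0 - \hat\beta_1 X_i) = \sum_{i=1}^n (Y_i - \bar Y) - \hat\beta_1 \sum_{i=1}^n (X_i - \bar X) = 0 - 0 = 0$$

$$\sum_{i=1}^n X_i \hat u_i = \sum_{i=1}^n X_i (Y_i - \hat\beta_0 - \hat\beta_1 X_i) = 0$$

where the first result uses $\hat\beta_0 = \bar Y - \hat\beta_1 \bar X$, and the second is the first-order condition $\partial SSR / \partial \hat\beta_1 = 0$ derived earlier. Noting that

$$Y_i = \hat Y_i + \hat u_i \;\Rightarrow\; \bar Y = \bar{\hat Y} + \bar{\hat u} = \bar{\hat Y}$$

it is easy to show

$$cov(Y_i, \hat Y_i) = \frac{1}{n} \sum_{i=1}^n (Y_i - \bar Y)(\hat Y_i - \bar{\hat Y}) = \frac{1}{n} \sum_{i=1}^n (\hat Y_i + \hat u_i - \bar{\hat Y})(\hat Y_i - \bar{\hat Y}) = \frac{1}{n} \sum_{i=1}^n (\hat Y_i - \bar{\hat Y})^2$$

where the last equality holds because $\sum_{i=1}^n \hat u_i (\hat Y_i - \bar{\hat Y}) = \hat\beta_1 \sum_{i=1}^n \hat u_i (X_i - \bar X) = 0$, using $\sum_{i=1}^n \hat u_i = 0$ and $\sum_{i=1}^n X_i \hat u_i = 0$. This shows

$$var(\hat Y_i) = \frac{1}{n} \sum_{i=1}^n (\hat Y_i - \bar{\hat Y})^2 = cov(Y_i, \hat Y_i)$$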

Therefore,

$$R^2 = \frac{[cov(Y_i, \hat Y_i)]^2}{var(Y_i)\, var(\hat Y_i)} = \frac{var(\hat Y_i)}{var(Y_i)}$$

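Note that

$$n \times var(Y_i) = \sum_{i=1}^n (Y_i - \bar Y)^2 = SSR_r$$

Now we show that $n \times var(\hat Y_i) = SSR_r - SSR_u$:

$$\sum_{i=1}^n (Y_i - \bar Y)^2 = \sum_{i=1}^n (Y_i - \hat Y_i + \hat Y_i - \bar Y)^2 = \sum_{i=1}^n (Y_i - \hat Y_i)^2 + \sum_{i=1}^n (\hat Y_i - \bar Y)^2 + 2 \sum_{i=1}^n (Y_i - \hat Y_i)(\hat Y_i - \bar Y)$$

$$= \sum_{i=1}^n \hat u_i^2 + \sum_{i=1}^n (\hat Y_i - \bar Y)^2 + 2 \sum_{i=1}^n \hat u_i (\hat Y_i - \bar Y)$$

The last term is zero:

$$\sum_{i=1}^n \hat u_i (\hat Y_i - \bar Y) = \sum_{i=1}^n \hat u_i (\hat\beta_0 + \hat\beta_1 X_i - \bar Y) = (\hat\beta_0 - \bar Y) \sum_{i=1}^n \hat u_i + \hat\beta_1 \sum_{i=1}^n \hat u_i X_i = 0$$

because $\sum_{i=1}^n \hat u_i = 0$ and $\sum_{i=1}^n \hat u_i X_i = 0$, as shown before. Since $\bar{\hat Y} = \bar Y$, the middle term equals $n \times var(\hat Y_i)$, so $SSR_r = SSR_u + n \times var(\hat Y_i)$.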

Putting all these results together, we have

$$R^2 = \frac{[cov(Y_i, \hat Y_i)]^2}{var(Y_i)\, var(\hat Y_i)} = \frac{var(\hat Y_i)}{var(Y_i)} = \frac{SSR_r - SSR_u}{SSR_r} = 1 - \frac{SSR_u}{SSR_r}$$
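As a numerical check of this result: for any data set fitted by OLS with an intercept, the three expressions for $R^2$ should coincide, and the residuals should sum to zero. The data and helper names here are hypothetical; `cov` divides by n, as in the derivation.

```python
def mean(v):
    return sum(v) / len(v)


def cov(a, b):
    """Sample covariance dividing by n, as in the notes."""
    ma, mb = mean(a), mean(b)
    return sum((p - ma) * (q - mb) for p, q in zip(a, b)) / len(a)


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]          # hypothetical data
ys = [1.5, 3.1, 2.9, 4.8, 5.2, 7.4]
n = len(xs)
x_bar, y_bar = mean(xs), mean(ys)
b1 = cov(xs, ys) / cov(xs, xs)               # same as the OLS slope formula
b0 = y_bar - b1 * x_bar
y_hat = [b0 + b1 * x for x in xs]
u_hat = [y - f for y, f in zip(ys, y_hat)]

ssr_u = sum(u * u for u in u_hat)
ssr_r = sum((y - y_bar) ** 2 for y in ys)      # TSS

r2_ssr = 1 - ssr_u / ssr_r
r2_corr = cov(ys, y_hat) ** 2 / (cov(ys, ys) * cov(y_hat, y_hat))
r2_var = cov(y_hat, y_hat) / cov(ys, ys)
print(r2_ssr, r2_corr, r2_var)                 # equal up to rounding
print(sum(u_hat), sum(x * u for x, u in zip(xs, u_hat)))   # both approximately 0
```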