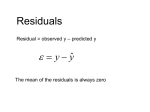

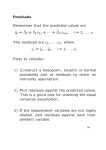

Residual Analysis

• Purposes
  – Examine functional form (linear vs. nonlinear model)
  – Evaluate violations of assumptions
• Graphical analysis of residuals

[Figure: Y plotted against X, with the point (X1, μY1) marked]
For one value X1, a population contains many Y values. Their mean is μY1.

A Population Regression Line
μY = α + βX

A Sample Regression Line
The sample line approximates the population regression line.
y = a + bx

Histogram of Y Values at X = X1
[Figure: histogram f(e) of the Y values at X = X1, centered on μY1 = α + βX1 on the line μY = α + βX]

Normal Distribution of Y Values when X = X1
The standard deviation of the normal distribution is the standard error of estimate.
[Figure: normal curve f(e) at X = X1, centered on μY1 = α + βX1 on the line μY = α + βX]

Normality & Constant Variance Assumptions
[Figure: normal curves f(e) with equal spread at X1 and X2]

A Normal Regression Surface
Every cross-sectional slice of the surface is a normal curve.
[Figure: regression surface f(e) over Y and X, with slices at X1 and X2]

Analysis of Residuals
A residual is the difference between the actual value of Y and the predicted value Ŷ.

Linear Regression and Correlation Assumptions
• The independent variables and the dependent variable have a linear relationship.
• The dependent variable must be continuous and at least interval-scale.

Linear Regression Assumptions
• Normality: for each X, the Y values are normally distributed about the regression line, so the residuals (e) are normally distributed with a mean of zero.
• Homoscedasticity (constant variance): the variation in the residuals must be the same for all values of X. The standard deviation of the residuals is the same regardless of the given value of X.
• Independence of errors: the residuals (e) are independent of each other. The size of the error for a particular value of X is not related to the size of the error for any other value of X.

Evaluating the Aptness of the Fitted Regression Model
Does the model appear linear?

Residual Plot for Linearity (Functional Form)
Aptness of the Fitted Model
[Figure: two plots of residuals (e) vs. X. A curved pattern indicates that an X² term should be added; a random scatter indicates correct specification.]

Residual Plots for Linearity of the Fitted Model
• Scatter plot of Y vs. X value
• Scatter plot of residuals vs. X value

Using SPSS to Test for Linearity of the Regression Model
• Analyze/Regression/Linear
  – Dependent - Sales
  – Independent - Customers
  – Save
    • Predicted Value (Unstandardized or Standardized)
    • Residual (Unstandardized or Standardized)
• Graphs/Scatter/Simple
  – Y-Axis: residual [ res_1 or zre_1 ]
  – X-Axis: Customer (independent variable)

[Table: SPSS case listing for the sales and customer problem (20 cases); values not recoverable from the source]

[Figure: Scatter Plot of Customer by Sales; SALES (y-axis) vs. CUSTOMER (x-axis)]

[Figure: Scatter Plot of Customer by Residuals; residual (y-axis) vs. CUSTOMER (x-axis)]

[Figure: Plot of Residuals vs R&D Expenditures (Electronic Firms); Residual (y-axis) vs. RDEXPEND (x-axis)]

The Linear Regression Assumptions
1. Normality of residuals (errors)
2. Homoscedasticity (constant variance)
3. Independence of residuals (errors)
These need to be verified using residual analysis.

Residual Plots for Normality
• Construct histogram of residuals
  – Stem-and-leaf plot
  – Box-and-whisker plot
  – Normal probability plot
• Scatter plot of residuals vs. X values
  – Simple regression
• Scatter plot of residuals vs. predicted Y values
  – Multiple regression

Residual Plot 1 for Normality
Construct histogram of residuals
• Nearly symmetric
• Centered near or at zero
• Shape is approximately normal
[Figure: histogram of RESIDUAL; Std. Dev = 1.61, Mean = 0.0, N = 31]

Using SPSS to Test for Normality
Histogram of Residuals
• Analyze/Regression/Linear
  – Dependent - Sales
  – Independent - Customers
  – Plot/Standardized Residual Plot: Histogram
  – Save
    • Predicted Value (Unstandardized or Standardized)
    • Residual (Unstandardized or Standardized)
• Graphs/Histogram
  – Variable - residual (Unstandardized or Standardized)

Histogram of Residuals of Sales and Customer Problem from regression output
[Figure: histogram, Dependent Variable: SALES; Frequency (y-axis) vs. Regression Standardized Residual (x-axis); Std. Dev = .97, Mean = 0.00, N = 20]

Histogram of Residuals of Sales and Customer Problem from graph output
[Figure: histogram of Unstandardized Residual; Std. Dev = .49, Mean = 0.00, N = 20]

Residual Plot 2 for Normality
Plot residuals vs. X values
• Points should be distributed about the horizontal line at 0
• Otherwise, normality is violated
[Figure: residuals (y-axis) vs. X, scattered about the horizontal line at 0]

Using SPSS to Test for Normality
Scatter Plot
• Simple Regression
  – Graphs/Scatter/Simple
    • Y-Axis: residual [ res_1 or zre_1 ]
    • X-Axis: Customers [ independent variable ]
• Multiple Regression
  – Graphs/Scatter/Simple
    • Y-Axis: residual [ res_1 or zre_1 ]
    • X-Axis: predicted Y values

[Figure: Scatter Plot of Customer by Residuals; residual (y-axis) vs. CUSTOMER (x-axis)]

The Electronic Firms
An accounting standards board investigating the treatment of research and development expenses by the nation's major electronic firms was interested in the relationship between a firm's research and development expenditures and its earnings.
Earnings = 6.840 + 10.671(rdexpend)

List of Data, Predicted Values and Residuals

| RDEXPEND | EARNINGS | PRE_1 (Predicted Value) | RES_1 (Residual) | ZPR_1 (Standardized Predicted Value) | ZRE_1 (Standardized Residual) |
|---|---|---|---|---|---|
| 15.00 | 221.00 | 166.90075 | 54.09925 | 1.84527 | 2.39432 |
| 8.50 | 83.00 | 97.54224 | -14.54224 | .48229 | -.64361 |
| 12.00 | 147.00 | 134.88913 | 12.11087 | 1.21620 | .53600 |
| 6.50 | 69.00 | 76.20116 | -7.20116 | .06291 | -.31871 |
| 4.50 | 41.00 | 54.86008 | -13.86008 | -.35647 | -.61342 |
| 2.00 | 26.00 | 28.18373 | -2.18373 | -.88070 | -.09665 |
| .50 | 35.00 | 12.17792 | 22.82208 | -1.19523 | 1.01006 |
| 1.50 | 40.00 | 22.84846 | 17.15154 | -.98554 | .75909 |
| 14.00 | 125.00 | 156.23021 | -31.23021 | 1.63558 | -1.38218 |
| 9.00 | 97.00 | 102.87751 | -5.87751 | .58713 | -.26013 |
| 7.50 | 53.00 | 86.87170 | -33.87170 | .27260 | -1.49909 |
| .50 | 12.00 | 12.17792 | -.17792 | -1.19523 | -.00787 |
| 2.50 | 34.00 | 33.51900 | .48100 | -.77585 | .02129 |
| 3.00 | 48.00 | 38.85427 | 9.14573 | -.67101 | .40477 |
| 6.00 | 64.00 | 70.86589 | -6.86589 | -.04194 | -.30387 |

[Figure: histogram (Electronic Firms), Dependent Variable: EARNINGS; Frequency (y-axis) vs. Regression Standardized Residual (x-axis); Std. Dev = .96, Mean = 0.00, N = 15]

[Figure: Plot of Standardized Residuals vs RDEXPEND (Electronic Firms); Standardized Residual (y-axis) vs. RDEXPEND (x-axis)]

Residual Plot for Homoscedasticity
[Figure: two plots of standardized residuals (SR) vs. X. A fan-shaped pattern indicates heteroscedasticity; an even band about 0 indicates constant variance (correct specification).]
Standardized residuals are used.
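The predicted values and residuals in the Electronic Firms listing can be reproduced outside SPSS. The following is a minimal sketch in plain Python, not the SPSS procedure itself. The names `fit_line`, `predicted`, `residuals`, `std_error` and `std_residuals` are illustrative, and it assumes that the standardized residual is the residual divided by the standard error of estimate (with n − 2 degrees of freedom), which appears to agree with the ZRE_1 column.

```python
from math import sqrt

# Electronic Firms data from the listing: R&D expenditures and earnings.
rdexpend = [15.0, 8.5, 12.0, 6.5, 4.5, 2.0, 0.5, 1.5,
            14.0, 9.0, 7.5, 0.5, 2.5, 3.0, 6.0]
earnings = [221, 83, 147, 69, 41, 26, 35, 40,
            125, 97, 53, 12, 34, 48, 64]


def fit_line(x, y):
    """Return the least-squares intercept a and slope b of y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


a, b = fit_line(rdexpend, earnings)

# Predicted value and residual (actual minus predicted) for each firm.
predicted = [a + b * xi for xi in rdexpend]
residuals = [yi - pi for yi, pi in zip(earnings, predicted)]

# Standard error of estimate with n - 2 degrees of freedom (assumption:
# this is the divisor behind the ZRE_1 column).
n = len(rdexpend)
std_error = sqrt(sum(e ** 2 for e in residuals) / (n - 2))
std_residuals = [e / std_error for e in residuals]

for x, e, z in zip(rdexpend, residuals, std_residuals):
    print(f"{x:6.2f}  {e:10.5f}  {z:8.5f}")
```

Plotting `std_residuals` against `rdexpend` gives the kind of plot used in the checks for linearity and constant variance.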
Using SPSS to Test for Homoscedasticity of Residuals
• Simple Regression
  – Graphs/Scatter/Simple
    • Y-Axis: residual [ res_1 or zre_1 ]
    • X-Axis: rdexpend [ independent variable ]
• Multiple Regression
  – Graphs/Scatter/Simple
    • Y-Axis: residual [ res_1 or zre_1 ]
    • X-Axis: predicted Y values

Test for Homoscedasticity
[Figure: Plot of Residuals vs Number (Dunton's World of Sound); Residual (y-axis) vs. NUMBER (x-axis)]

Test for Homoscedasticity
[Figure: Plot of Residuals vs R&D Expenditures (Electronic Firms); Residual (y-axis) vs. RDEXPEND (x-axis)]

[Figure: Scatter Plot of Customer by Residuals; residual (y-axis) vs. CUSTOMER (x-axis)]

Residual Plot for Independence
[Figure: two plots of standardized residuals (SR) vs. X, one showing a pattern that is not independent and one showing correct specification.]
The plots reflect the sequence in which the data were collected.

Two Types of Autocorrelation
• Positive autocorrelation: successive terms in a time series are directly related
• Negative autocorrelation: successive terms are inversely related

Positive autocorrelation: residuals tend to be followed by residuals with the same sign.
[Figure: residual (y − ŷ) vs. time period t, showing long runs of same-sign residuals]

Negative autocorrelation: residuals tend to change signs from one period to the next.
[Figure: residual (y − ŷ) vs. time period t, showing residuals alternating in sign]

Problems with autocorrelated time-series data
• The standard error of estimate (sy.x) and the standard error of the slope (sb) are biased downwards
• Invalid probability statements about the regression equation and slopes
• F and t tests won't be valid
• May imply that cycles exist
• May induce a falsely high or low agreement between 2 variables

Using SPSS to Test for Independence of Errors
• Graphs/Sequence
  – Variables: residual (res_1)
• Durbin-Watson statistic

[Figure: Time Sequence of Residuals (Dunton's World of Sound); Residual (y-axis) vs. sequence number (x-axis)]

[Figure: Time Sequence Plot of Residuals (Electronic Firms); Residual (y-axis) vs. sequence number (x-axis)]

Customers and sales for a period of 15 consecutive weeks:

| Week | Customers | Sales ($000) |
|---|---|---|
| 1 | 794 | 9 |
| 2 | 799 | 8 |
| 3 | 837 | 7 |
| 4 | 855 | 9 |
| 5 | 845 | 10 |
| 6 | 844 | 10 |
| 7 | 863 | 11 |
| 8 | 875 | 11 |
| 9 | 880 | 12 |
| 10 | 905 | 13 |
| 11 | 886 | 12 |
| 12 | 843 | 10 |
| 13 | 904 | 12 |
| 14 | 950 | 12 |
| 15 | 841 | 10 |

[Figure: Residuals over Time; residual (y-axis) vs. Time, weeks 1 to 15 (x-axis)]

Durbin-Watson Procedure
• Used to detect autocorrelation
  – Residuals in one time period are related to residuals in another period
  – Violation of the independence assumption
• Durbin-Watson test statistic

$$D = \frac{\sum_{i=2}^{n} (e_i - e_{i-1})^2}{\sum_{i=1}^{n} e_i^2}$$

H0: No positive autocorrelation exists (residuals are random)
H1: Positive autocorrelation exists
• Do not reject H0 if D > dU
• Reject H0 if D < dL
• The test is inconclusive if dL < D < dU

Testing for Positive Autocorrelation
[Figure: decision scale for D running from 0 to 4. Between 0 and dL there is positive autocorrelation; between dL and dU the test is inconclusive; above dU there is no evidence of autocorrelation.]

Rule of Thumb
• Positive autocorrelation - D will approach 0
• No autocorrelation - D will be close to 2
• Negative autocorrelation - D is greater than 2 and may approach a maximum of 4

Using SPSS with Autocorrelation
• Analyze/Regression/Linear
• Dependent; Independent
• Statistics/Durbin-Watson (use only time series data)
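The Durbin-Watson statistic can also be computed by hand from the residuals. Below is a minimal sketch in plain Python using the 15 weeks of customer and sales data; it is an illustration, not SPSS output. The helper names `fit_line`, `durbin_watson` and `classify` are made up for this example, and the bounds dL and dU are not computed here: they are assumed to be looked up in a Durbin-Watson table for the chosen significance level, sample size and number of independent variables.

```python
# Customers and sales ($000) for 15 consecutive weeks.
customers = [794, 799, 837, 855, 845, 844, 863, 875,
             880, 905, 886, 843, 904, 950, 841]
sales = [9, 8, 7, 9, 10, 10, 11, 11, 12, 13, 12, 10, 12, 12, 10]


def fit_line(x, y):
    """Return the least-squares intercept a and slope b of y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    return mean_y - b * mean_x, b


def durbin_watson(residuals):
    """Sum of squared successive differences over the sum of squared residuals."""
    numerator = sum((residuals[i] - residuals[i - 1]) ** 2
                    for i in range(1, len(residuals)))
    denominator = sum(e ** 2 for e in residuals)
    return numerator / denominator


def classify(d, d_lower, d_upper):
    """Apply the decision rule for positive autocorrelation.

    d_lower and d_upper are the table bounds dL and dU supplied by the caller.
    """
    if d < d_lower:
        return "positive autocorrelation"
    if d > d_upper:
        return "no evidence of positive autocorrelation"
    return "inconclusive"


a, b = fit_line(customers, sales)
# Residuals must stay in time order, since D compares neighbouring periods.
residuals = [y - (a + b * x) for x, y in zip(customers, sales)]
d = durbin_watson(residuals)
print(f"Durbin-Watson D = {d:.3f}")
```

Calling `classify(d, d_lower, d_upper)` with the table bounds then gives the test decision.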
[Table: SPSS Model Summary output, including the Durbin-Watson statistic; values not recoverable from the source apart from the fragment .883]

Using SPSS with Autocorrelation
• Analyze/Regression/Linear
• Dependent; Independent
• Statistics/Durbin-Watson (use only time series data)
• If DW indicates autocorrelation, then …
  – Analyze/Time Series/Autoregression
  – Cochrane-Orcutt
  – OK

Solutions for autocorrelation
• Use Final Parameters under Cochrane-Orcutt
• Use changes in the dependent and independent variables (first differences)
• Transform the variables
• Include an independent variable that measures the time of the observation
• Use lagged variables (once a lagged value of the dependent variable is introduced as an independent variable, the Durbin-Watson test is not valid)
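Two of the remedies listed above, first differences and the Cochrane-Orcutt transformation, can be sketched in plain Python. This is a rough illustration under stated assumptions, not the SPSS Autoregression procedure: it performs a single Cochrane-Orcutt step (SPSS iterates to its final parameters), it estimates the autocorrelation coefficient rho by regressing each residual on the previous one through the origin, and it reuses the 15-week customer and sales data. All function and variable names are made up for this example.

```python
customers = [794, 799, 837, 855, 845, 844, 863, 875,
             880, 905, 886, 843, 904, 950, 841]
sales = [9, 8, 7, 9, 10, 10, 11, 11, 12, 13, 12, 10, 12, 12, 10]


def fit_line(x, y):
    """Return the least-squares intercept a and slope b of y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    return mean_y - b * mean_x, b


def residuals_of(x, y):
    """Residuals (actual minus predicted) from the least-squares line."""
    a, b = fit_line(x, y)
    return [yi - (a + b * xi) for xi, yi in zip(x, y)]


def durbin_watson(e):
    """Durbin-Watson statistic for residuals e in time order."""
    return (sum((e[i] - e[i - 1]) ** 2 for i in range(1, len(e)))
            / sum(ei ** 2 for ei in e))


def quasi_difference(series, rho):
    """Return z_t - rho * z_(t-1); rho = 1 gives plain first differences."""
    return [series[i] - rho * series[i - 1] for i in range(1, len(series))]


# Remedy 1: regress changes in sales on changes in customers.
dx = quasi_difference(customers, 1.0)
dy = quasi_difference(sales, 1.0)
dw_first_diff = durbin_watson(residuals_of(dx, dy))

# Remedy 2: one Cochrane-Orcutt step.
e = residuals_of(customers, sales)
# Estimate rho from e_t = rho * e_(t-1) (regression through the origin).
rho = (sum(e[i] * e[i - 1] for i in range(1, len(e)))
       / sum(ei ** 2 for ei in e[:-1]))
x_star = quasi_difference(customers, rho)
y_star = quasi_difference(sales, rho)
a_star, b_co = fit_line(x_star, y_star)
a_co = a_star / (1.0 - rho)  # intercept on the original scale
dw_co = durbin_watson(residuals_of(x_star, y_star))

print(f"rho = {rho:.3f}, slope = {b_co:.4f}, intercept = {a_co:.3f}")
print(f"D after first differences = {dw_first_diff:.3f}")
print(f"D after one Cochrane-Orcutt step = {dw_co:.3f}")
```

Both transformations drop the first observation, so each refit uses 14 points. The Durbin-Watson statistic of the transformed regression should be checked again before relying on its t and F tests.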