* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Key Components Overview, part-of

Word-sense disambiguation wikipedia , lookup

Old Norse morphology wikipedia , lookup

Udmurt grammar wikipedia , lookup

English clause syntax wikipedia , lookup

Ojibwe grammar wikipedia , lookup

Lithuanian grammar wikipedia , lookup

Modern Greek grammar wikipedia , lookup

Old English grammar wikipedia , lookup

Macedonian grammar wikipedia , lookup

Swedish grammar wikipedia , lookup

Georgian grammar wikipedia , lookup

Modern Hebrew grammar wikipedia , lookup

Old Irish grammar wikipedia , lookup

Arabic grammar wikipedia , lookup

Kannada grammar wikipedia , lookup

Navajo grammar wikipedia , lookup

Lexical semantics wikipedia , lookup

Portuguese grammar wikipedia , lookup

Romanian nouns wikipedia , lookup

Italian grammar wikipedia , lookup

Compound (linguistics) wikipedia , lookup

French grammar wikipedia , lookup

Spanish grammar wikipedia , lookup

Chinese grammar wikipedia , lookup

Sotho parts of speech wikipedia , lookup

Zulu grammar wikipedia , lookup

Ancient Greek grammar wikipedia , lookup

Latin syntax wikipedia , lookup

Vietnamese grammar wikipedia , lookup

Esperanto grammar wikipedia , lookup

Serbo-Croatian grammar wikipedia , lookup

Malay grammar wikipedia , lookup

Scottish Gaelic grammar wikipedia , lookup

English grammar wikipedia , lookup

Yiddish grammar wikipedia , lookup

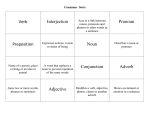

KEY COMPONENTS OVERVIEW
POS TAGGING
Heng Ji
September 9, 2016

Key NLP Components
• Baseline Search
  • Math basics, Information Retrieval
• Question Understanding
  • Lexical Analysis, Part-of-Speech Tagging, Parsing
• Deep Document Understanding
  • Syntactic Parsing
  • Semantic Role Labeling
  • Dependency Parsing
  • Name Tagging
  • Coreference Resolution
  • Relation Extraction
  • Temporal Information Extraction
  • Event Extraction
• Answer Ranking, Confidence Estimation
• Knowledge Base Construction, Population and Utilization

What We Have as Input
• Question: when and where did Billy Mays die?
• Source Collection: millions of news, web blogs, discussion forums, tweets, Wikipedia, …
• Question Parsing:
  • [Billy Mays, date-of-death]
  • [Billy Mays, place-of-death]

Question Analysis

Part-of-Speech Tagging and Syntactic Parsing
[Figure: parse tree (S, NP, VP nodes) for "School of Theatre and Dance presents Wonderful Town", tagged School/NN of/IN Theatre/NN and/CC Dance/NN presents/VBZ Wonderful/JJ Town/NN]

Semantic Role Labeling: Adding Semantics into Trees
[Figure: parse tree for "The Supreme Court gave states working leeway", tagged The/DT Supreme/JJ Court/NN gave/VBD states/NNS working/VBG leeway/NN, with the predicates marked and noun phrases labeled NP/ARG0, NP/ARG1 and NP/ARG2]

Core Arguments
• Arg0 = agent
• Arg1 = direct object / theme / patient
• Arg2 = indirect object / benefactive / instrument / attribute / end state
• Arg3 = start point / benefactive / instrument / attribute
• Arg4 = end point

Dependency Parsing
[Figure: dependency relations for "They hid the letter on the shelf"]

Name Tagging
• Since its inception in 2001, <name ID="1" type="organization">Red</name> has caused a stir in <name ID="2" type="location">Northeast Ohio</name> by stretching the boundaries of classical by adding multimedia elements to performances and looking beyond the expected canon of composers.
• Under the baton of <name ID="3" type="organization">Red</name> Artistic Director <name ID="4" type="person">Jonathan Sheffer</name>, <name ID="5" type="organization">Red</name> makes its debut appearance at <name ID="6" type="organization">Kent State University</name> on March 7 at 7:30 p.m.

Coreference
• But the little prince could not restrain admiration:
• "Oh! How beautiful you are!"
• "Am I not?" the flower responded, sweetly. "And I was born at the same moment as the sun . . ."
• The little prince could guess easily enough that she was not any too modest--but how moving--and exciting--she was!
• "I think it is time for breakfast," she added an instant later. "If you would have the kindness to think of my needs--"
• And the little prince, completely abashed, went to look for a sprinkling-can of fresh water. So, he tended the flower.

Relation Extraction
relation: a semantic relationship between two entities

| ACE relation type | Example |
|---|---|
| Agent-Artifact | Rubin Military Design, the makers of the Kursk |
| Discourse | each of whom |
| Employment/Membership | Mr. Smith, a senior programmer at Microsoft |
| Place-Affiliation | Salzburg Red Cross officials |
| Person-Social | relatives of the dead |
| Physical | a town some 50 miles south of Salzburg |
| Other-Affiliation | Republican senators |

Temporal Information Extraction
• In 1975, after being fired from Columbia amid allegations that he used company funds to pay for his son's bar mitzvah, Davis founded Arista
  • Is '1975' related to the employee_of relation between Davis and Arista?
  • If so, does it indicate START, END, HOLDS, …?
• Each classification instance represents a temporal expression in the context of the entity and slot value.
• We consider the following classes:
  • START: Rob joined Microsoft in 1999.
  • END: Rob left Microsoft in 1999.
  • HOLDS: In 1999 Rob was still working for Microsoft.
  • RANGE: Rob has worked for Microsoft for the last ten years.
  • NONE: Last Sunday Rob's friend joined Microsoft.
Event Extraction
• An event is a specific occurrence that implies a change of states
• event trigger: the main word which most clearly expresses an event occurrence
• event arguments: the mentions that are involved in an event (participants)
• event mention: a phrase or sentence within which an event is described, including trigger and arguments
• ACE defined 8 types of events, with 33 subtypes

| ACE event type/subtype | Event mention example |
|---|---|
| Life/Die | Kurt Schork died in Sierra Leone yesterday |
| Transaction/Transfer | GM sold the company in Nov 1998 to LLC |
| Movement/Transport | Homeless people have been moved to schools |
| Business/Start-Org | Schweitzer founded a hospital in 1913 |
| Conflict/Attack | the attack on Gaza killed 13 |
| Contact/Meet | Arafat's cabinet met for 4 hours |
| Personnel/Start-Position | She later recruited the nursing student |
| Justice/Arrest | Faison was wrongly arrested on suspicion of murder |

[Figure callouts on the examples: "trigger" and "Argument, role=victim"]

Putting Together: Candidate Answer Extraction
Mays, 50, had died in his sleep at his Tampa home the morning of June 28.
[Figure: dependency graph over this sentence. Node annotations: 50 = {NUM} 【Per:age】; Mays = {PER.Individual, NAM, Billy Mays} 【Query】; died = {Death-Trigger}; home = {FAC.Building-Grounds.NOM}; his = {PER.Individual.PRO, Mays}. Edge labels: amod, nsubj, aux, nn, poss, prep_at, prep_in, prep_of, located_in]

Ranking Answers/Confidence Estimation
• Problems:
  • different information sources may generate claims with varied trustability
  • various systems may generate erroneous, conflicting, redundant, complementary, ambiguously worded, or interdependent claims from the same set of documents

Ranking Answers
Hard Constraints
Used to capture the deep syntactic and semantic knowledge that is likely to pertain to the propositional content of claims.
• Node Constraints
  • e.g., entity type, subtype and mention type
• Path Constraints
  • Trigger phrases, relations and events, path length
  • Position of a particular node/edge type in the path
• Interdependent Claims
  • Conflicting slot fillers
  • Inter-dependent slot types

Ranking Answers
• Soft Features:
  • Cleanness
  • Informativeness
  • Local Knowledge Graph
  • Voting

NLP + Knowledge Base
• Knowledge Base (KB)
  • Attributes (a.k.a. "slots") derived from Wikipedia infoboxes are used to create the reference KB
• Source Collection
  • A large corpus of unstructured texts

Knowledge Base Linking (Wikification)
[Figure: query = "James Parsons"; NIL]

Knowledge Base Population (Slot Filling)
<query id="SF114">
  <name>Jim Parsons</name>
  <docid>eng-WL-11-174592-12943233</docid>
  <enttype>PER</enttype>
  <nodeid>E0300113</nodeid>
  <ignore>per:date_of_birth per:age per:country_of_birth per:city_of_birth</ignore>
</query>
School Attended: University of Houston

KB Slots
• Person: per:alternate_names, per:date_of_birth, per:age, per:country_of_birth, per:stateorprovince_of_birth, per:city_of_birth, per:origin, per:date_of_death, per:country_of_death, per:stateorprovince_of_death, per:city_of_death, per:cause_of_death, per:countries_of_residence, per:stateorprovinces_of_residence, per:cities_of_residence, per:schools_attended, per:title, per:member_of, per:employee_of, per:religion, per:spouse, per:children, per:parents, per:siblings, per:other_family, per:charges
• Organization: org:alternate_names, org:political/religious_affiliation, org:top_members/employees, org:number_of_employees/members, org:members, org:member_of, org:subsidiaries, org:parents, org:founded_by, org:founded, org:dissolved, org:country_of_headquarters, org:stateorprovince_of_headquarters, org:city_of_headquarters, org:shareholders, org:website

Text to Speech Synthesis
• ATT: http://www.research.att.com/~ttsweb/tts/demo.php
• IBM: http://www-306.ibm.com/software/pervasive/tech/demos/tts.shtml
• Cepstral: http://www.cepstral.com/cgi-bin/demos/general
• Rhetorical (= Scansoft): http://www.rhetorical.com/cgi-bin/demo.cgi
• Festival: http://www-2.cs.cmu.edu/~awb/festival_demos/index.html

[Figure: pipeline from Information Retrieval to Question Answering: ① Relevant Document Set, ② Sentence Set (tree representation), ③ Extracted Paths]

Outline
• Course Overview
• Introduction to NLP
• Introduction to Watson
• Watson in NLP View
• Machine Learning for NLP
• Why NLP is Hard

Models and Algorithms
• By models we mean the formalisms that are used to capture the various kinds of linguistic knowledge we need.
• Algorithms are then used to manipulate the knowledge representations needed to tackle the task at hand.

Some Early NLP History
• 1950s:
  • Foundational work: automata, information theory, etc.
  • First speech systems
  • Machine translation (MT) hugely funded by military (imagine that)
  • Toy models: MT using basically word-substitution
  • Optimism!
• 1960s and 1970s: NLP Winter
  • Bar-Hillel (FAHQT) and ALPAC reports kill MT
  • Work shifts to deeper models, syntax
  • … but toy domains / grammars (SHRDLU, LUNAR)
• 1980s/1990s: The Empirical Revolution
  • Expectations get reset
  • Corpus-based methods become central
  • Deep analysis often traded for robust and simple approximations
  • Evaluate everything

Two Generations of NLP
• Hand-crafted Systems: Knowledge Engineering [1950s– ]
  • Rules written by hand; adjusted by error analysis
  • Require experts who understand both the systems and domain
  • Iterative guess-test-tweak-repeat cycle
• Automatic, Trainable (Machine Learning) Systems [1985– ]
  • The tasks are modeled in a statistical way
  • More robust techniques based on rich annotations
  • Perform better than rules (Parsing 90% vs. 75% accuracy)

Corpora
• A corpus is a collection of text
  • Often annotated in some way
  • Sometimes just lots of text
  • Balanced vs. uniform corpora
• Examples
  • Newswire collections: 500M+ words
  • Brown corpus: 1M words of tagged "balanced" text
  • Penn Treebank: 1M words of parsed WSJ
  • Canadian Hansards: 10M+ words of aligned French / English sentences
  • The Web: billions of words of who knows what

Resources: Existing Corpora
• Brown corpus, LOB Corpus, British National Corpus
• Bank of English
• Wall Street Journal, Penn Treebank, NomBank, PropBank, BNC, ANC, ICE, WBE, Reuters Corpus
• Canadian Hansard: parallel corpus English-French
• York-Helsinki Parsed Corpus of Old English Poetry
• Tiger corpus: German
• CORIS/CODIS: contemporary written Italian
• MULTEXT: 1984 and The Republic in many languages
• MapTask

Paradigms
• In particular:
• State-space search
  • To manage the problem of making choices during processing when we lack the information needed to make the right choice
• Dynamic programming
  • To avoid having to redo work during the course of a state-space search
  • CKY, Earley, Minimum Edit Distance, Viterbi, Baum-Welch
• Classifiers
  • Machine learning based classifiers that are trained to make decisions based on features extracted from the local context

Information Units of Interest - Examples
• Explicit units:
  • Documents
  • Lexical units: words, terms (surface/base form)
• Implicit (hidden) units:
  • Word senses, name types
  • Document categories
  • Lexical syntactic units: part of speech tags
  • Syntactic relationships between words (parsing)
  • Semantic relationships

Data and Representations
• Frequencies of units
• Co-occurrence frequencies
  • Between all relevant types of units (term-doc, term-term, term-category, sense-term, etc.)
• Different representations and modeling
  • Sequences
  • Feature sets/vectors (sparse)

Learning Methods for NLP
• Supervised: identify hidden units (concepts) of explicit units
  • Syntactic analysis, word sense disambiguation, name classification, relations, categorization, …
  • Trained from labeled data
• Unsupervised: identify relationships and properties of explicit units (terms, docs)
  • Association, topicality, similarity, clustering
  • Without labeled data
• Semi-supervised: combinations

Avoiding/Reducing Manual Labeling
• Basic supervised setting: examples are annotated manually with labels (sense, text category, part of speech)
• Settings in which labeled data can be obtained without manual annotation:
  • Anaphora, target word selection
  • Example: The system displays the file on the monitor and prints it.
• Unsupervised/Semi-supervised Learning approaches
  • Sometimes referred to as unsupervised learning, though it actually addresses a supervised task of identifying an externally imposed class ("unsupervised" training)

Outline
• POS Tagging and HMM
• Formal Grammars
  • Context-free grammar
  • Grammars for English
  • Treebanks
• Parsing and CKY Algorithm

What is Part-of-Speech (POS)
• Generally speaking, Word Classes (= POS):
  • Verb, Noun, Adjective, Adverb, Article, …
• We can also include inflection:
  • Verbs: tense, number, …
  • Nouns: number, proper/common, …
  • Adjectives: comparative, superlative, …
  • …

Parts of Speech
• 8 (ish) traditional parts of speech
  • Noun, verb, adjective, preposition, adverb, article, interjection, pronoun, conjunction, etc.
• Called: parts-of-speech, lexical categories, word classes, morphological classes, lexical tags...
• Lots of debate within linguistics about the number, nature, and universality of these
• We'll completely ignore this debate.
7 Traditional POS Categories

| Tag | Category | Examples |
|---|---|---|
| N | noun | chair, bandwidth, pacing |
| V | verb | study, debate, munch |
| ADJ | adjective | purple, tall, ridiculous |
| ADV | adverb | unfortunately, slowly |
| P | preposition | of, by, to |
| PRO | pronoun | I, me, mine |
| DET | determiner | the, a, that, those |

POS Tagging
• The process of assigning a part-of-speech or lexical class marker to each word in a collection.

| WORD | tag |
|---|---|
| the | DET |
| koala | N |
| put | V |
| the | DET |
| keys | N |
| on | P |
| the | DET |
| table | N |

Penn TreeBank POS Tag Set
• Penn Treebank: hand-annotated corpus of Wall Street Journal, 1M words
• 46 tags
• Some particularities:
  • to/TO not disambiguated
  • Auxiliaries and verbs not distinguished

Penn Treebank Tagset
[Table]

Why is POS tagging useful?
• Speech synthesis:
  • How to pronounce "lead"?
  • INsult / inSULT
  • OBject / obJECT
  • OVERflow / overFLOW
  • DIScount / disCOUNT
  • CONtent / conTENT
• Stemming for information retrieval
  • Can search for "aardvarks" and get "aardvark"
• Parsing, speech recognition, etc.
  • Possessive pronouns (my, your, her) are likely to be followed by nouns
  • Personal pronouns (I, you, he) are likely to be followed by verbs
  • Need to know if a word is an N or V before you can parse
• Information extraction
  • Finding names, relations, etc.
• Machine Translation

Open and Closed Classes
• Closed class: a small fixed membership
  • Prepositions: of, in, by, …
  • Auxiliaries: may, can, will, had, been, …
  • Pronouns: I, you, she, mine, his, them, …
  • Usually function words (short common words which play a role in grammar)
• Open class: new ones can be created all the time
  • English has 4: Nouns, Verbs, Adjectives, Adverbs
  • Many languages have these 4, but not all!

Open Class Words
• Nouns
  • Proper nouns (Boulder, Granby, Eli Manning)
    • English capitalizes these.
  • Common nouns (the rest).
  • Count nouns and mass nouns
    • Count: have plurals, get counted: goat/goats, one goat, two goats
    • Mass: don't get counted (snow, salt, communism) (*two snows)
• Adverbs: tend to modify things
  • Unfortunately, John walked home extremely slowly yesterday
  • Directional/locative adverbs (here, home, downhill)
  • Degree adverbs (extremely, very, somewhat)
  • Manner adverbs (slowly, slinkily, delicately)
• Verbs
  • In English, have morphological affixes (eat/eats/eaten)

Closed Class Words
Examples:
• prepositions: on, under, over, …
• particles: up, down, on, off, …
• determiners: a, an, the, …
• pronouns: she, who, I, …
• conjunctions: and, but, or, …
• auxiliary verbs: can, may, should, …
• numerals: one, two, three, third, …

Prepositions from CELEX
[Table]

English Particles
[Table]

Conjunctions
[Table]

POS Tagging: Choosing a Tagset
• There are so many parts of speech and potential distinctions we can draw
• To do POS tagging, we need to choose a standard set of tags to work with
• Could pick very coarse tagsets
  • N, V, Adj, Adv.
• More commonly used set is finer grained, the "Penn TreeBank tagset", 45 tags
  • PRP$, WRB, WP$, VBG
• Even more fine-grained tagsets exist

Using the Penn Tagset
• The/DT grand/JJ jury/NN commented/VBD on/IN a/DT number/NN of/IN other/JJ topics/NNS ./.
• Prepositions and subordinating conjunctions marked IN ("although/IN I/PRP..")
• Except the preposition/complementizer "to" is just marked "TO".

POS Tagging
• Words often have more than one POS: back
  • The back door = JJ
  • On my back = NN
  • Win the voters back = RB
  • Promised to back the bill = VB
• The POS tagging problem is to determine the POS tag for a particular instance of a word.
These examples are from Dekang Lin.

How Hard is POS Tagging? Measuring Ambiguity
[Table]

Current Performance
• How many tags are correct?
  • About 97% currently
  • But baseline is already 90%
  • Baseline algorithm:
    • Tag every word with its most frequent tag
    • Tag unknown words as nouns
• How well do people do?

Quick Test: Agreement?
• the students went to class
• plays well with others
• fruit flies like a banana
Tags: DT: the, this, that; NN: noun; VB: verb; P: preposition; ADV: adverb

Quick Test
• the students went to class
  • DT NN VB P NN
• plays well with others
  • VB ADV P NN
  • NN NN P DT
• fruit flies like a banana
  • NN NN VB DT NN
  • NN VB P DT NN
  • NN NN P DT NN
  • NN VB VB DT NN

How to do it? History
[Figure: timeline with axis marks at 1960, 1970, 1980, 1990 and 2000. Entries:]
• Corpora: Brown Corpus created (EN-US), 1 million words; Brown Corpus tagged; LOB Corpus created (EN-UK), 1 million words; LOB Corpus tagged; Penn Treebank Corpus (WSJ, 4.5M); British National Corpus (tagged by CLAWS)
• Taggers: Greene and Rubin, rule based, 70%; HMM tagging (CLAWS), 93%-95%; DeRose/Church, efficient HMM, sparse data, 95%+; Transformation-based tagging (Eric Brill), rule based, 95%+; Tree-based statistics (Helmut Schmid), 96%+; Trigram tagger (Kempe), 96%+; Neural network, 96%+; Combined methods, 98%+
• POS tagging separated from other NLP

Two Methods for POS Tagging
1. Rule-based tagging
  • (ENGTWOL)
2. Stochastic
  • Probabilistic sequence models
    • HMM (Hidden Markov Model) tagging
    • MEMMs (Maximum Entropy Markov Models)

Rule-Based Tagging
• Start with a dictionary
• Assign all possible tags to words from the dictionary
• Write rules by hand to selectively remove tags
• Leaving the correct tag for each word.
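
The rule-based recipe above (dictionary lookup, assign every possible tag, then remove tags with hand-written rules) can be sketched in a few lines. This is a minimal illustration, not ENGTWOL or any published tagger: the six-word lexicon and the single elimination rule are the ones the slides use for "She promised to back the bill", while the function names and the choice to give unknown words the tag NN are assumptions made only for this sketch.

```python
# Toy lexicon: word -> set of possible tags (six entries only, for illustration).
LEXICON = {
    "she": {"PRP"},
    "promised": {"VBN", "VBD"},
    "to": {"TO"},
    "back": {"VB", "JJ", "RB", "NN"},
    "the": {"DT"},
    "bill": {"NN", "VB"},
}


def assign_all_tags(words):
    """Stage 1: look each word up and attach every tag the lexicon allows.

    Unknown words get NN (an assumption for this sketch)."""
    return [set(LEXICON.get(word.lower(), {"NN"})) for word in words]


def eliminate_vbn_after_initial_prp(candidates):
    """Stage 2 rule: eliminate VBN if VBD is an option when the
    VBN|VBD word follows a sentence-initial PRP."""
    if (
        len(candidates) >= 2
        and candidates[0] == {"PRP"}
        and {"VBN", "VBD"} <= candidates[1]
    ):
        candidates[1].discard("VBN")
    return candidates


def rule_based_tag(words):
    """Run both stages and return the remaining candidate tags per word."""
    return eliminate_vbn_after_initial_prp(assign_all_tags(words))


if __name__ == "__main__":
    sentence = "She promised to back the bill".split()
    for word, tags in zip(sentence, rule_based_tag(sentence)):
        print(word, sorted(tags))
```

With only one rule, "back" and "bill" stay ambiguous; a real rule-based tagger keeps adding rules until each word is left with a single tag.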
Rule-based taggers
• Early POS taggers all hand-coded
• Most of these (Harris, 1962; Greene and Rubin, 1971) and the best of the recent ones, ENGTWOL (Voutilainen, 1995), are based on a two-stage architecture
  • Stage 1: look up word in lexicon to give list of potential POSs
  • Stage 2: apply rules which certify or disallow tag sequences
• Rules originally handwritten; more recently Machine Learning methods can be used

Start With a Dictionary
• she: PRP
• promised: VBN, VBD
• to: TO
• back: VB, JJ, RB, NN
• the: DT
• bill: NN, VB
• Etc… for the ~100,000 words of English with more than 1 tag

Assign Every Possible Tag

| She | promised | to | back | the | bill |
|---|---|---|---|---|---|
| PRP | VBN, VBD | TO | VB, JJ, RB, NN | DT | NN, VB |

Write Rules to Eliminate Tags
Eliminate VBN if VBD is an option when VBN|VBD follows "<start> PRP"

| She | promised | to | back | the | bill |
|---|---|---|---|---|---|
| PRP | VBD (VBN eliminated) | TO | VB, JJ, RB, NN | DT | NN, VB |

POS tagging
• Training data (hand-tagged text):
  The/DT involvement/NN of/IN ion/NN channels/NNS in/IN B/NN and/CC T/NN lymphocyte/NN activation/NN is/VBZ supported/VBN by/IN many/JJ reports/NNS of/IN changes/NNS in/IN ion/NN fluxes/NNS and/CC membrane/NN …
• The training data is fed to a Machine Learning Algorithm
• Unseen text: We demonstrate that …
• Tagged output: We/PRP demonstrate/VBP that/IN …

Goal of POS Tagging
We want the best set of tags for a sequence of words (a sentence)
• W: a sequence of words
• T: a sequence of tags
Our goal:

$$\hat{T} = \arg\max_{T} P(T \mid W)$$

Example: P((NN NN P DET ADJ NN) | (heat oil in a large pot))

But, the Sparse Data Problem …
• Rich models often require vast amounts of data
• Count up instances of the string "heat oil in a large pot" in the training corpus, and pick the most common tag assignment to the string
• Too many possible combinations

POS Tagging as Sequence Classification
• We are given a sentence (an "observation" or "sequence of observations")
  • Secretariat is expected to race tomorrow
• What is the best sequence of tags that corresponds to this sequence of observations?
• Probabilistic view:
  • Consider all possible sequences of tags
  • Out of this universe of sequences, choose the tag sequence which is most probable given the observation sequence of n words w1…wn.

Getting to HMMs
• We want, out of all sequences of n tags t1…tn, the single tag sequence such that P(t1…tn|w1…wn) is highest:

$$\hat{t}_1 \ldots \hat{t}_n = \arg\max_{t_1 \ldots t_n} P(t_1 \ldots t_n \mid w_1 \ldots w_n)$$

• Hat ^ means "our estimate of the best one"
• argmax_x f(x) means "the x such that f(x) is maximized"

Getting to HMMs
• This equation is guaranteed to give us the best tag sequence
• But how to make it operational? How to compute this value?
• Intuition of Bayesian classification:
  • Use Bayes rule to transform this equation into a set of other probabilities that are easier to compute

Reminder: Apply Bayes' Theorem (1763)

$$\underbrace{P(T \mid W)}_{\text{posterior}} = \frac{\overbrace{P(W \mid T)}^{\text{likelihood}} \; \overbrace{P(T)}^{\text{prior}}}{\underbrace{P(W)}_{\text{marginal likelihood}}}$$

Our goal: to maximize the posterior!
Reverend Thomas Bayes, Presbyterian minister (1702-1761)

How to Count

$$\hat{T} = \arg\max_{T} P(T \mid W) = \arg\max_{T} \frac{P(W \mid T)\, P(T)}{P(W)} = \arg\max_{T} P(W \mid T)\, P(T)$$

P(W|T) and P(T) can be counted from a large hand-tagged corpus, and then smoothed to get rid of the zeroes.

Count P(W|T) and P(T)
Assume each word in the sequence depends only on its corresponding tag:

$$P(W \mid T) \approx \prod_{i=1}^{n} P(w_i \mid t_i)$$

Count P(T)
By the chain rule, each tag is conditioned on its full history:

$$P(t_1, \ldots, t_n) = P(t_1)\, P(t_2 \mid t_1)\, P(t_3 \mid t_1 t_2) \cdots P(t_n \mid t_1, \ldots, t_{n-1})$$

Make a Markov assumption and use N-grams over tags ...
P(T) is a product of the probabilities of the N-grams that make it up:

$$P(t_1, \ldots, t_n) \approx P(t_1) \prod_{i=2}^{n} P(t_i \mid t_{i-1})$$

Part-of-speech tagging with Hidden Markov Models

$$P(t_1 \ldots t_n \mid w_1 \ldots w_n) = \frac{P(w_1 \ldots w_n \mid t_1 \ldots t_n)\, P(t_1 \ldots t_n)}{P(w_1 \ldots w_n)}$$

$$P(w_1 \ldots w_n \mid t_1 \ldots t_n)\, P(t_1 \ldots t_n) \approx \prod_{i=1}^{n} \underbrace{P(w_i \mid t_i)}_{\text{output probability}} \; \underbrace{P(t_i \mid t_{i-1})}_{\text{transition probability}}$$

Here t1…tn are the tags and w1…wn are the words.

Analyzing "Fish sleep."

A Simple POS HMM
Transition probabilities (rows: from-state, columns: to-state):

| from \ to | noun | verb | end |
|---|---|---|---|
| start | 0.8 | 0.2 | |
| noun | 0.1 | 0.8 | 0.1 |
| verb | 0.2 | 0.1 | 0.7 |

Word Emission Probabilities P(word | state)
• A two-word language: "fish" and "sleep"
• Suppose in our training corpus,
  • "fish" appears 8 times as a noun and 5 times as a verb
  • "sleep" appears twice as a noun and 5 times as a verb
• Emission probabilities:
  • Noun
    • P(fish | noun): 0.8
    • P(sleep | noun): 0.2
  • Verb
    • P(fish | verb): 0.5
    • P(sleep | verb): 0.5

Viterbi Probabilities
Initial trellis (column 0 is before any token; only start has probability 1):

| state | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| start | 1 | | | |
| verb | 0 | | | |
| noun | 0 | | | |
| end | 0 | | | |

Token 1: fish
• verb: .2 * .5 = .1
• noun: .8 * .8 = .64
• start: 0, end: 0

Token 2: sleep
• If "fish" is a verb:
  • verb: .1 * .1 * .5 = .005
  • noun: .1 * .2 * .2 = .004
• If "fish" is a noun:
  • verb: .64 * .8 * .5 = .256
  • noun: .64 * .1 * .2 = .0128
• Take the maximum and set back pointers:
  • verb: max(.005, .256) = .256, back pointer to noun
  • noun: max(.004, .0128) = .0128, back pointer to noun

Token 3: end
• From verb: .256 * .7
• From noun: .0128 * .1
• Take the maximum and set back pointers: end = .256 * .7, back pointer to verb

Final trellis:

| state | 0 | 1 (fish) | 2 (sleep) | 3 (end) |
|---|---|---|---|---|
| start | 1 | 0 | 0 | 0 |
| verb | 0 | .1 | .256 | - |
| noun | 0 | .64 | .0128 | - |
| end | 0 | 0 | - | .256 * .7 |

Decode: fish = noun, sleep = verb

Markov Chain for a Simple Name Tagger
[Figure: Markov chain with states START, PER, LOC, X and END. Arcs carry transition probabilities; each state lists emission probabilities:]
• PER: George: 0.3, W.: 0.3, Bush: 0.3, Iraq: 0.1
• LOC: George: 0.2, Iraq: 0.8
• X: W.: 0.3, discussed: 0.7
• END: $: 1.0

Exercise
• Tag names in the following sentence:
  • George W. Bush discussed Iraq.

POS taggers
• Brill's tagger
  • http://www.cs.jhu.edu/~brill/
• TnT tagger
  • http://www.coli.uni-saarland.de/~thorsten/tnt/
• Stanford tagger
  • http://nlp.stanford.edu/software/tagger.shtml
• SVMTool
  • http://www.lsi.upc.es/~nlp/SVMTool/
• GENIA tagger
  • http://www-tsujii.is.s.u-tokyo.ac.jp/GENIA/tagger/
• More complete list at: http://www-nlp.stanford.edu/links/statnlp.html#Taggers
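
The "fish sleep" Viterbi walk-through can be reproduced with a short program. The sketch below hard-codes the transition and emission probabilities used in the worked example; the names `TRANS`, `EMIT` and `viterbi` are hypothetical, and the function assumes a non-empty sentence whose words all appear in the emission table. It is a minimal illustration of the take-the-maximum and back-pointer steps, not a general-purpose tagger (no smoothing, no log probabilities).

```python
START, END = "start", "end"

# Transition probabilities P(next state | state) from the worked example.
TRANS = {
    START: {"noun": 0.8, "verb": 0.2},
    "noun": {"noun": 0.1, "verb": 0.8, END: 0.1},
    "verb": {"noun": 0.2, "verb": 0.1, END: 0.7},
}

# Emission probabilities P(word | state) from the worked example.
EMIT = {
    "noun": {"fish": 0.8, "sleep": 0.2},
    "verb": {"fish": 0.5, "sleep": 0.5},
}


def viterbi(words, states=("noun", "verb")):
    """Return (best tag sequence, its probability) for a non-empty sentence."""
    # Column 1 of the trellis: transition out of start times emission.
    best = {s: TRANS[START].get(s, 0.0) * EMIT[s].get(words[0], 0.0)
            for s in states}
    backpointers = []  # one dict per later token: state -> best previous state

    for word in words[1:]:
        new_best, pointers = {}, {}
        for s in states:
            # Take the maximum over previous states and remember which one won.
            prev = max(states, key=lambda p: best[p] * TRANS[p].get(s, 0.0))
            new_best[s] = (best[prev] * TRANS[prev].get(s, 0.0)
                           * EMIT[s].get(word, 0.0))
            pointers[s] = prev
        backpointers.append(pointers)
        best = new_best

    # Final column: transition into the end state.
    last = max(states, key=lambda s: best[s] * TRANS[s].get(END, 0.0))
    probability = best[last] * TRANS[last].get(END, 0.0)

    # Follow the back pointers from the last tag to the first.
    tags = [last]
    for pointers in reversed(backpointers):
        tags.append(pointers[tags[-1]])
    tags.reverse()
    return tags, probability


if __name__ == "__main__":
    tags, probability = viterbi(["fish", "sleep"])
    print(tags, probability)
```

Running it on `["fish", "sleep"]` decodes fish as a noun and sleep as a verb, with path probability .256 * .7 (about 0.1792, up to floating-point rounding), matching the final trellis.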