
November 20, 2013

NORMED SPACES

RODICA D. COSTIN

Contents

1. The Triangle Inequality

2. Normed spaces

2.1. Examples in finite dimensions.

2.2. Examples in infinite dimensions.

3. The norm of a matrix

3.1. The normed linear space of matrices

3.2. Other norms for matrices

3.3. Remarks on infinite dimensions

3.4. Exercises

3.5. Proof of the Perron Theorem

3.6. The Perron-Frobenius Theorem


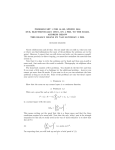

1. The Triangle Inequality

Let $(V, \langle\cdot,\cdot\rangle)$ be an inner product space over $F = \mathbb{R}$ or $\mathbb{C}$, finite or infinite dimensional. As in the case of vectors in $\mathbb{R}^3$, we can define a length of vectors in $V$ by

$$\|x\| = \sqrt{\langle x, x\rangle}$$

The length thus defined satisfies: $\|x\| \ge 0$ and $\|x\| = 0$ only for $x = 0$, and also $\|cx\| = |c|\,\|x\|$ for all $c \in F$ and $x \in V$. Let us not forget the very useful Cauchy-Schwarz inequality

$$|\langle x, y\rangle| \le \|x\|\,\|y\|$$

which in $\mathbb{R}^3$ tells us how the inner product measures the angle between two vectors.

There is one more important geometric property that the length satisfies: the triangle inequality

$$\|x + y\| \le \|x\| + \|y\|$$

For $x, y$ vectors in $\mathbb{R}^3$ this simply means that the sum of two sides of a triangle is at least as large as the third side (with equality only when the triangle degenerates to a segment: one of the vectors $x, y$ is a nonnegative scalar multiple of the other).

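Theorem 1. The triangle inequality holds in any inner product space, with equality if and only if one of $x, y$ is a nonnegative scalar multiple of the other.

Proof. Expanding, then using the Cauchy-Schwarz inequality:

$$\|x + y\|^2 = \langle x + y, x + y\rangle = \langle x, x\rangle + \langle x, y\rangle + \langle y, x\rangle + \langle y, y\rangle$$

$$= \|x\|^2 + 2\,\mathrm{Re}\,\langle x, y\rangle + \|y\|^2 \le \|x\|^2 + 2\|x\|\,\|y\| + \|y\|^2 = (\|x\| + \|y\|)^2$$

with equality only when $\mathrm{Re}\,\langle x, y\rangle = \|x\|\,\|y\|$. This requires equality in the Cauchy-Schwarz inequality, which holds if and only if $x, y$ are linearly dependent, and in addition the scalar factor relating them must be nonnegative. □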
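As a quick numerical sanity check of Theorem 1, here is a minimal sketch using the standard dot product on $\mathbb{R}^3$. The helper names `dot`, `length` and `add` and the sample vectors are illustrative choices, not part of the notes.

```python
import math

def dot(x, y):
    """Standard dot product on R^n (a real inner product)."""
    return sum(a * b for a, b in zip(x, y))

def length(x):
    """Length induced by the inner product: sqrt(<x, x>)."""
    return math.sqrt(dot(x, x))

def add(x, y):
    """Componentwise sum of two vectors."""
    return [a + b for a, b in zip(x, y)]

x = [1.0, 2.0, 2.0]      # length 3
y = [2.0, 0.0, 0.0]      # length 2

# Cauchy-Schwarz: |<x, y>| <= ||x|| ||y||
assert abs(dot(x, y)) <= length(x) * length(y)

# Triangle inequality, strict here because x and y are not parallel
assert length(add(x, y)) < length(x) + length(y)

# Equality when the second vector is a nonnegative multiple of the first
z = [2.0 * a for a in x]
assert math.isclose(length(add(x, z)), length(x) + length(z))
```

A check on a few vectors is of course not a proof; it only illustrates the strict and the equality cases.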

2. Normed spaces

There are many sets of functions, or of transformations, which have the structure of a linear space. We can often define a useful magnitude of these functions, having many of the properties of the length in inner product spaces, except that it does not come from an inner product.

In this section the elements of the vector/linear space will not be denoted by boldfaced letters, as these are more often functions, or linear transformations.

Definition 2. Consider a linear space $V$ over $F$. A norm on $V$ is a function $\|\cdot\| : V \to \mathbb{R}$ so that:

(i) it is positive definite: $\|x\| \ge 0$ and $\|x\| = 0$ only for $x = 0$,

(ii) it is positive homogeneous: $\|cx\| = |c|\,\|x\|$ for all $c \in F$ and $x \in V$,

(iii) it satisfies the triangle inequality (i.e. it is subadditive):

$$\|x + y\| \le \|x\| + \|y\|$$

Definition 3. A linear space $V$ equipped with a norm, $(V, \|\cdot\|)$, is called a normed space.

An inner product space is, in particular, a normed space, but the converse is not true. (A norm comes from an inner product if and only if it satisfies the parallelogram identity.)

2.1. Examples in finite dimensions. Other examples of useful norms for $\mathbb{R}^n$ and $\mathbb{C}^n$ (easily and usefully generalized to infinite dimensional spaces) include, for $p \ge 1$:

$$\|x\|_p = \Big(\sum_j |x_j|^p\Big)^{1/p} \tag{1}$$

The norm $\|x\|_2$ is the norm given by the inner product equal to the dot product of vectors, and it is called the Euclidean norm.

(The norm $\|x\|_1$ is also called the taxicab norm, or the Manhattan norm, alluding to the distance a taxicab needs to drive in a rectangular street grid.)

There are also weighted varieties: for $w_j > 0$, $j = 1, \dots, n$ the following are norms:

$$\|x\|_{p,w} = \Big(\sum_j w_j |x_j|^p\Big)^{1/p} \tag{2}$$

$$\|x\|_\infty = \max_j |x_j| \tag{3}$$
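The norms (1), (2) and (3) are straightforward to compute. The following is a minimal sketch in plain Python; the function names `p_norm` and `sup_norm` and the sample vector are assumptions made for illustration.

```python
def p_norm(x, p, w=None):
    """Weighted p-norm (sum_j w_j |x_j|^p)^(1/p); w defaults to all ones."""
    if p < 1:
        raise ValueError("p must be at least 1 for the triangle inequality to hold")
    if w is None:
        w = [1.0] * len(x)
    return sum(wj * abs(xj) ** p for wj, xj in zip(w, x)) ** (1.0 / p)

def sup_norm(x):
    """Sup norm: the largest absolute value among the components."""
    return max(abs(xj) for xj in x)

x = [3.0, -4.0]
print(p_norm(x, 1))                  # taxicab norm: 7.0
print(p_norm(x, 2))                  # Euclidean norm: 5.0
print(sup_norm(x))                   # 4.0
print(p_norm(x, 2, w=[4.0, 1.0]))    # weighted: sqrt(4*9 + 1*16) = sqrt(52)
```

Passing `w=None` recovers the unweighted norm (1) as the special case of (2) with all weights equal to 1.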

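Of all these, only the norms $\|\cdot\|_{2,w}$ come from an inner product:

$$\|x\|_{2,w} = \sqrt{\langle x, x\rangle_w}, \quad \text{where } \langle x, y\rangle_w = w_1 \overline{x_1}\, y_1 + \dots + w_n \overline{x_n}\, y_n$$

(the complex conjugates matter only for $F = \mathbb{C}$).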
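One way to see that a norm does not come from an inner product is to test the parallelogram identity $\|x+y\|^2 + \|x-y\|^2 = 2\|x\|^2 + 2\|y\|^2$ on a single pair of vectors. The sketch below does this for the taxicab and Euclidean norms on $\mathbb{R}^2$; the helper `parallelogram_defect` is a hypothetical name introduced here.

```python
def norm1(x):
    """Taxicab norm."""
    return sum(abs(a) for a in x)

def norm2(x):
    """Euclidean norm."""
    return sum(a * a for a in x) ** 0.5

def parallelogram_defect(norm, x, y):
    """||x+y||^2 + ||x-y||^2 - 2||x||^2 - 2||y||^2 (zero for inner-product norms)."""
    s = [a + b for a, b in zip(x, y)]
    d = [a - b for a, b in zip(x, y)]
    return norm(s) ** 2 + norm(d) ** 2 - 2 * norm(x) ** 2 - 2 * norm(y) ** 2

e1, e2 = [1.0, 0.0], [0.0, 1.0]
print(parallelogram_defect(norm2, e1, e2))   # approximately 0, up to rounding
print(parallelogram_defect(norm1, e1, e2))   # 4.0: the taxicab norm fails the identity
```

A single failing pair is enough to rule out an inner product; a vanishing defect on one pair proves nothing by itself.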

2.2. Examples in infinite dimensions. Consider a vector space of functions $V$, for example:

(i) $\mathcal{P}_n$, the space of polynomials of degree at most $n$ (which is finite dimensional),

(ii) $\mathcal{P}$, the space of all polynomials (infinite dimensional),

(iii) $C[a, b]$, the space of continuous functions on $[a, b]$,

(iv) $C^1[a, b]$, the space of continuous functions on $[a, b]$, differentiable on $(a, b)$, with continuous derivative on $[a, b]$.


On any of these spaces we can introduce a norm which is the continuous analogue of (1), called the $L^p$ norm:

$$\|f\|_p = \left(\int_a^b |f(t)|^p \, dt\right)^{1/p}, \quad \text{for } f \in V \tag{4}$$

or a weighted $L^p$ norm: if $w(t) > 0$ is continuous on $[a, b]$, the continuous analogue of the norm (2) is

$$\|f\|_{p,w} = \left(\int_a^b w(t)\,|f(t)|^p \, dt\right)^{1/p}, \quad \text{for } f \in V \tag{5}$$

The continuous analogue of the norm (3) is the sup-norm:

$$\|f\|_\infty = \max_{t \in [a,b]} |f(t)| \tag{6}$$

Of these, only the $L^2$ norm (weighted or not) comes from an inner product, namely

$$\langle f, g\rangle = \int_a^b \overline{f(t)}\, g(t) \, dt$$

and in the weighted case

$$\langle f, g\rangle_w = \int_a^b w(t)\, \overline{f(t)}\, g(t) \, dt$$
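The function norms (4) and (6) can be approximated numerically. The following is a rough sketch that uses the midpoint rule for the integral and grid sampling for the maximum; the names `lp_norm`, `sup_norm`, the grid size `n` and the test function $f(t) = t$ on $[0, 1]$ are all illustrative assumptions.

```python
import math

def lp_norm(f, a, b, p, n=10_000):
    """Approximate (integral_a^b |f(t)|^p dt)^(1/p) with the midpoint rule."""
    h = (b - a) / n
    total = sum(abs(f(a + (i + 0.5) * h)) ** p for i in range(n))
    return (total * h) ** (1.0 / p)

def sup_norm(f, a, b, n=10_000):
    """Approximate the max of |f(t)| over [a, b] by sampling n + 1 grid points."""
    return max(abs(f(a + i * (b - a) / n)) for i in range(n + 1))

def f(t):
    """Sample function f(t) = t."""
    return t

print(lp_norm(f, 0.0, 1.0, 1))   # close to 1/2
print(lp_norm(f, 0.0, 1.0, 2))   # close to 1/sqrt(3)
print(sup_norm(f, 0.0, 1.0))     # 1.0
```

Sampling can only underestimate the sup-norm, so for rapidly oscillating functions a finer grid (or an exact argument) would be needed.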

Theorem. On finite dimensional spaces all norms are equivalent, in the sense that if $\|\cdot\|'$ and $\|\cdot\|''$ are two norms on the finite dimensional vector space $V$, then there are two constants $c_1, c_2 > 0$ so that $\|x\|' \le c_2 \|x\|''$ and $\|x\|'' \le c_1 \|x\|'$ for all $x$.

Note: a sequence converges when distances are measured by a norm if

and only if the sequence converges when distances are measured by any

equivalent norm.

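Idea of the proof of the Theorem: it suffices to show that any norm on $\mathbb{R}^n$ (or $\mathbb{C}^n$) is equivalent to the Euclidean norm. Using the triangle inequality, one constant is found from the maximum of the norm on the vectors of the canonical basis; the other comes from the minimum of the norm on the Euclidean unit sphere, which is attained and positive by compactness. □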
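For the three most common norms on $\mathbb{R}^n$ the constants can be written down explicitly: $\|x\|_\infty \le \|x\|_2 \le \|x\|_1 \le \sqrt{n}\,\|x\|_2 \le n\,\|x\|_\infty$. The sketch below checks these standard inequalities on random vectors; the dimension, seed and tolerance are arbitrary choices made for illustration.

```python
import math
import random

def norm1(x):
    return sum(abs(a) for a in x)

def norm2(x):
    return math.sqrt(sum(a * a for a in x))

def norm_inf(x):
    return max(abs(a) for a in x)

random.seed(0)
n = 5
tol = 1e-12   # slack for floating-point rounding
for _ in range(1000):
    x = [random.uniform(-1.0, 1.0) for _ in range(n)]
    assert norm_inf(x) <= norm2(x) + tol
    assert norm2(x) <= norm1(x) + tol
    assert norm1(x) <= math.sqrt(n) * norm2(x) + tol
    assert norm2(x) <= math.sqrt(n) * norm_inf(x) + tol
```

Note that the constants depend on the dimension $n$, which is one reason the equivalence cannot be expected to survive in infinite dimensions.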

3. The norm of a matrix

Remark. From now on the term "linear transformation" will be replaced by its synonym "linear operator", which is more common in infinite dimensions.

An $n \times n$ matrix $M$, thought of as a linear operator from $\mathbb{R}^n$ to $\mathbb{R}^n$ (or from $\mathbb{C}^n$ to $\mathbb{C}^n$) acting by usual multiplication, $x \mapsto Mx$, deforms the space. The norm of $M$ is defined to be the maximal dilation factor that $M$ subjects vectors to.


For example, consider $\mathbb{R}^2$ with the Euclidean norm. The $2 \times 2$ diagonal matrix $M$ with diagonal entries 2 and 3 transforms $e_2$ into $3e_2$, a vector three times as large. No vector has a larger dilation, since

$$Mx = \begin{bmatrix} 2 & 0 \\ 0 & 3 \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \end{bmatrix} = \begin{bmatrix} 2x_1 \\ 3x_2 \end{bmatrix}$$

hence the length of $Mx$ is

$$\|Mx\| = \sqrt{4x_1^2 + 9x_2^2} \le 3\sqrt{x_1^2 + x_2^2} = 3\|x\|$$
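The dilation factor in this example can also be probed numerically by sampling unit vectors $u = (\cos\theta, \sin\theta)$. This is only an illustrative sketch; the function name `dilation` and the one-degree sampling step are assumptions.

```python
import math

def dilation(theta):
    """||M u|| for the unit vector u = (cos theta, sin theta) and M = diag(2, 3)."""
    u1, u2 = math.cos(theta), math.sin(theta)
    return math.hypot(2.0 * u1, 3.0 * u2)

# Sample the unit circle in one-degree steps
samples = [dilation(2.0 * math.pi * k / 360) for k in range(360)]
print(max(samples))   # largest sampled dilation, attained at u = e2 (about 3)
print(min(samples))   # smallest sampled dilation, attained at u = e1 (about 2)
```

The largest dilation occurs along $e_2$ and the smallest along $e_1$, matching the diagonal entries of $M$.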

Definition 4. Let $(V, \|\cdot\|)$ be a normed space, and $L : V \to V$ be a linear transformation. The norm $\|L\|$ of the linear operator $L$ is the largest possible dilation:

$$\|L\| = \sup_{x \ne 0} \frac{\|Lx\|}{\|x\|} \tag{7}$$

Equivalently:

$$\|L\| = \sup_{\|u\| = 1} \|Lu\| \tag{8}$$

and also, equivalently:

$$\|L\| = \inf\{C \mid \|Lx\| \le C\,\|x\| \ \text{for all } x \in V\} \tag{9}$$

Note that we have

$$\|Lx\| \le \|L\|\,\|x\| \tag{10}$$
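The equivalence of (7) and (8) is a one-line consequence of the homogeneity of the norm and the linearity of $L$; a short worked derivation, with $u$ denoting the normalized vector:

```latex
\frac{\|Lx\|}{\|x\|}
  = \left\| \frac{1}{\|x\|}\, Lx \right\|
  = \left\| L\!\left( \frac{x}{\|x\|} \right) \right\|
  = \|Lu\|,
\qquad u = \frac{x}{\|x\|}, \quad \|u\| = 1 .
```

So every quotient in (7) equals a value $\|Lu\|$ with $\|u\| = 1$, and conversely every unit vector $u$ gives the quotient $\|Lu\| / \|u\|$, hence the two suprema coincide.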

More generally, the norm of a linear transformation between two different normed spaces is defined in a similar way:

Definition 5. Let $L : V \to W$ be a linear transformation between two normed spaces $(V, \|\cdot\|_V)$ and $(W, \|\cdot\|_W)$. The norm $\|L\|$ of the linear operator $L$ (induced by the given norms on $V$ and $W$) is

$$\|L\| = \sup_{x \ne 0} \frac{\|Lx\|_W}{\|x\|_V} \tag{11}$$

In particular, this definition accommodates defining the norm of rectangular matrices.

This definition for the norm of a matrix is also called the operator norm. There are other possible useful norms, see §3.2.

3.0.1. An application: error estimates. Suppose that the matrix $M$ provides a linear model for the quantity $y$, and $y = Mx$ is the output corresponding to the input $x$. Suppose the input $x$ is affected by an error $\delta x$, which we cannot control, but whose magnitude $\|\delta x\|$ we can estimate. How big will the error $\delta y$ in the output be? Since $M(x + \delta x) = Mx + M\delta x$, we have $\delta y = M\delta x$ and we can estimate: $\|\delta y\| = \|M\delta x\| \le \|M\|\,\|\delta x\|$, so we expect the output error to be at most $\|M\|$ times bigger than the input error.
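A small numerical illustration of this estimate, as a sketch only: it reuses the diagonal matrix with entries 2 and 3 from the earlier example (operator norm 3), while the input `x` and the perturbation `dx` are made-up values.

```python
import math

def matvec(M, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in M]

def norm2(x):
    return math.sqrt(sum(a * a for a in x))

M = [[2.0, 0.0], [0.0, 3.0]]
norm_M = 3.0                       # operator norm of diag(2, 3)

x = [1.0, 1.0]                     # nominal input (hypothetical)
dx = [0.003, -0.004]               # input error, ||dx|| = 0.005

y = matvec(M, x)
y_perturbed = matvec(M, [a + b for a, b in zip(x, dx)])
dy = [a - b for a, b in zip(y_perturbed, y)]

print(norm2(dy))                   # actual output error
print(norm_M * norm2(dx))          # guaranteed bound ||M|| ||dx||
assert norm2(dy) <= norm_M * norm2(dx)
```

The bound is attained only when the error points in the direction of maximal dilation; for other directions, as here, the actual output error is smaller.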

3.0.2. The operator norm of a matrix induced by the Euclidean norm. Consider an $m \times n$ matrix $M$, and the Euclidean norms on $\mathbb{R}^n$, respectively $\mathbb{R}^m$. The definition (11) of the norm of $M$ can be reformulated in more familiar terms by noting that

$$\frac{\|Mx\|^2}{\|x\|^2} = \frac{\langle Mx, Mx\rangle}{\|x\|^2} = \frac{\langle x, M^*Mx\rangle}{\|x\|^2} = \text{Rayleigh quotient of } M^*M$$

Therefore:

Proposition 6. The norm of a matrix $M$ is the largest singular value of $M$ (the square root of the largest eigenvalue of $M^*M$). There is $x_{\max} \in V$ (an eigenvector of $M^*M$) so that $\|Mx_{\max}\| = \|M\|\,\|x_{\max}\|$.

In particular, the norm of a self-adjoint matrix is the largest absolute value among its eigenvalues:

Proposition 7. If $A = A^*$ then $\|A\| = \max\{|\lambda| \,;\, \lambda \in \sigma(A)\} = \rho(A)$.
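Proposition 6 suggests a simple way to estimate the operator norm of a real matrix: run power iteration on $M^T M$ to approximate its top eigenvector, then measure the dilation along it. The following is a minimal sketch under that assumption, not a robust numerical routine; the name `operator_norm`, the starting vector and the iteration count are illustrative.

```python
import math

def matvec(M, x):
    return [sum(a * b for a, b in zip(row, x)) for row in M]

def transpose(M):
    return [list(col) for col in zip(*M)]

def norm2(x):
    return math.sqrt(sum(a * a for a in x))

def operator_norm(M, iterations=500):
    """Estimate the largest singular value of a real matrix M by power
    iteration on M^T M. Assumes the starting vector (all ones) is not
    orthogonal to the top eigenvector of M^T M."""
    Mt = transpose(M)
    x = [1.0] * len(M[0])
    for _ in range(iterations):
        y = matvec(Mt, matvec(M, x))
        s = norm2(y)
        if s == 0.0:               # M x = 0: no information along this direction
            return 0.0
        x = [a / s for a in y]
    return norm2(matvec(M, x)) / norm2(x)

print(operator_norm([[2.0, 0.0], [0.0, 3.0]]))   # close to 3
print(operator_norm([[2.0, 1.0], [1.0, 2.0]]))   # symmetric, eigenvalues 1 and 3: close to 3
```

The second matrix is self-adjoint, so by Proposition 7 its norm is the largest absolute value of its eigenvalues, which agrees with the estimate.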

3.1. The normed linear space of matrices.

Exercise. Show that the norm of linear transformations satisfies:

(i) $\|L\| = 0$ if and only if $L$ is identically zero;

(ii) $\|cL\| = |c|\,\|L\|$ for any scalar $c$;

(iii) the triangle inequality: if $L, R$ are linear transformations on $V$ then

$$\|L + R\| \le \|L\| + \|R\|$$

This proves:

Theorem 8. Consider $F^n$, $F^m$ ($F = \mathbb{R}$ or $\mathbb{C}$) with the Euclidean norm. Let $M_{m,n}(F)$ be the set of all $m \times n$ matrices with entries in $F$. Equipped with the operator norm, $M_{m,n}(F)$ is a normed space over $F$.

The normed spaces of matrices are not inner product spaces (the norm

does not come from an inner product).
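One way to check this claim is through the parallelogram identity, which every norm coming from an inner product must satisfy. The matrices $L$, $R$ below are an illustrative choice, not taken from the notes; their operator norms follow from Proposition 7, since they are diagonal and self-adjoint.

```latex
L = \begin{bmatrix} 1 & 0 \\ 0 & 0 \end{bmatrix}, \quad
R = \begin{bmatrix} 0 & 0 \\ 0 & 1 \end{bmatrix}, \qquad
\|L\| = \|R\| = \|L + R\| = \|L - R\| = 1,
\\[4pt]
\|L + R\|^2 + \|L - R\|^2 = 2 \;\neq\; 4 = 2\|L\|^2 + 2\|R\|^2 .
```

Since the identity fails for this pair, the operator norm on $2 \times 2$ matrices cannot be induced by any inner product.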

Normed algebras. The spaces of square matrices (and of linear transformations) are more than linear spaces: there is an additional operation, multiplication of matrices (respectively, composition of functions).

Exercise. Show that if $L, R$ are linear transformations from $V$ to itself, then

$$\|LR\| \le \|L\|\,\|R\| \tag{12}$$

Relation (12) shows that composition/matrix multiplication is compatible with the operator norm.


A vector space having an additional multiplicative operation, satisfying the distributivity and associativity conditions we usually expect from well-behaved operations, is called an algebra (a formal statement of the axioms should be listed here, but we omit it).

An algebra with a norm satisfying (12) is called a normed algebra.

The normed space of square matrices $M_n(F)$ is a normed algebra.

3.2. Other norms for matrices. The Frobenius norm (or the Hilbert-Schmidt norm) is defined as

$$\|M\|_F = \sqrt{\operatorname{Tr}(MM^*)} = \sqrt{\sum_{k=1}^{r} \sigma_k^2}$$

where $\sigma_1, \dots, \sigma_r$ are the nonzero singular values of $M$. It is the same as the Euclidean norm of $M$ viewed as a vector with $mn$ components, see (13).

The Frobenius norm is easier to calculate than the operator norm, and it is invariant under unitary transformations (i.e. under changes of orthonormal bases), since $\|M\|_F = \|UMV^*\|_F$ if $U, V$ are unitary (because the matrices $M$ and $UMV^*$ have the same singular values).

The Frobenius norm is compatible with matrix multiplication, as relation (12) can be checked by direct calculation:

$$\|MN\|_F^2 = \sum_{i,j} |(MN)_{ij}|^2 = \sum_{i,j} \Big|\sum_k M_{ik} N_{kj}\Big|^2$$

and, using the Cauchy-Schwarz inequality,

$$\le \sum_{i,j} \Big(\sum_k |M_{ik}|^2\Big)\Big(\sum_l |N_{lj}|^2\Big) = \|M\|_F^2\, \|N\|_F^2$$

There are other matrix norms easier to calculate than the operator norm (9), for example $\|M\|_\infty = \max_{i,j} |M_{ij}|$ (but it is not compatible with matrix multiplication).
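A concrete pair of matrices shows the difference between the two norms with respect to relation (12). The sketch below uses the all-ones $2 \times 2$ matrix, an illustrative choice; the helper names `matmul`, `frobenius` and `max_entry` are assumptions.

```python
import math

def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def frobenius(A):
    """Frobenius norm: Euclidean norm of all entries."""
    return math.sqrt(sum(a * a for row in A for a in row))

def max_entry(A):
    """Largest absolute value among the entries."""
    return max(abs(a) for row in A for a in row)

J = [[1.0, 1.0], [1.0, 1.0]]
JJ = matmul(J, J)                          # [[2, 2], [2, 2]]

# Frobenius norm: ||J J||_F = 4 <= ||J||_F^2 = 4, so (12) holds (with equality)
print(frobenius(JJ), frobenius(J) ** 2)    # 4.0 4.0

# Max-entry norm: ||J J|| = 2 > ||J||^2 = 1, so (12) fails
print(max_entry(JJ), max_entry(J) ** 2)    # 2.0 1.0
```

Rescaling the max-entry norm by the dimension $n$ restores compatibility with multiplication, which is one reason the unscaled version is rarely used as a matrix norm in estimates involving products.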

Since $M_n(F)$ is finite dimensional, all the norms are equivalent. Therefore, to check convergence, any of the norms can be used. Depending on the practical applications some norms are more useful than others.

3.3. Remarks on infinite dimensions. In contrast to the finite-dimensional vector spaces, if $V$ is infinite dimensional there is no guarantee that the norm of $L$ exists, since in (8) the supremum may be $+\infty$.
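A standard illustration, given here as a worked sketch rather than as part of the notes: take the space $\mathcal{P}$ of all polynomials with the sup-norm (6) on $[0, 1]$, and let $D$ be the differentiation operator. Testing $D$ on the monomials $f_n(t) = t^n$ shows that its dilation factors are unbounded.

```latex
D : \mathcal{P} \to \mathcal{P}, \quad (Df)(t) = f'(t), \qquad f_n(t) = t^n \ \text{on } [0, 1]:
\\[4pt]
\|f_n\|_\infty = 1, \qquad
\|D f_n\|_\infty = \max_{t \in [0,1]} n\, t^{n-1} = n,
\qquad \text{so} \quad
\sup_{f \neq 0} \frac{\|Df\|_\infty}{\|f\|_\infty} \ge n \ \text{ for every } n \ge 1 .
```

Hence the supremum defining the norm of $D$ is $+\infty$ on this normed space.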

Definition 9. Linear operators which do have a norm are called bounded.

It can be proved that linear operators which are continuous are precisely

the bounded operators.

A result similar to Theorem 8 holds in infinite dimensions.


3.4. Exercises.

1. Find a $2 \times 2$ matrix whose norm is larger than the modulus of each of its eigenvalues.

2. Show that the norm of any matrix is greater than or equal to the modulus of each of its eigenvalues.

3. Show that the norm of any unitary matrix is 1.

4. a) Show that the operator norm of the matrix $M = [M_{ij}]_{i,j=1,\dots,n}$, when $F^n$ is equipped with the Euclidean norm, satisfies

$$\max_j \sqrt{\sum_i |M_{ij}|^2} \le \|M\| \le \|M\|_F := \sqrt{\sum_{i,j} |M_{ij}|^2} \tag{13}$$

b) Find similar estimates for the operator norm of a matrix in terms of its entries when $\mathbb{R}^n$ is equipped with the sup norm (3).

5. Show that

$$\|M^k\| \le \|M\|^k \quad \text{for } k = 0, 1, 2, 3, \dots$$

and that

$$\|e^{tM}\| \le e^{t\|M\|} \quad \text{for } t > 0$$

6. Consider the solution of the differential system

$$\frac{dx}{dt} = 5x + 2y, \qquad x(0) = 2$$

$$\frac{dy}{dt} = x + 4y, \qquad y(0) = 3$$

a) Without solving the system, find an a priori estimate on the growth of the solution: find constants $B, C > 0$ so that $\|(x(t), y(t))\| \le B e^{Ct}$ for all $t > 0$.

b) Find the exact solution and compare it to the estimate found.

7. Consider the solution of the discrete system

$$x_{k+1} = 5x_k + 2y_k, \qquad x_0 = 2$$

$$y_{k+1} = x_k + 4y_k, \qquad y_0 = 3$$

a) Without solving the system, find an a priori estimate on the growth of the solution: find constants $B, C > 0$ so that $\|(x_k, y_k)\| \le B\, C^k$ for all positive integers $k$.

b) Find the exact solution and compare it to the estimate found.