* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Statistical Inference

Psychometrics wikipedia , lookup

Degrees of freedom (statistics) wikipedia , lookup

Bootstrapping (statistics) wikipedia , lookup

Taylor's law wikipedia , lookup

History of statistics wikipedia , lookup

Gibbs sampling wikipedia , lookup

Foundations of statistics wikipedia , lookup

Statistical inference wikipedia , lookup

Resampling (statistics) wikipedia , lookup

Key points

An

estimate

is

an

indication of the value of

an unknown quantity

based on observed data.

A population is the entire

collection of people or

things you are interested

in;

A

census

is

a

measurement of all the

units in the population;

A population parameter is

a number that results from

measuring all the units in

the population;

A sampling frame is the

specific data from which

the sample is drawn, e.g.,

a telephone book;

A unit of analysis is the

type of object of interest,

e.g.,

arsons,

fire

departments, firefighters;

A sample is a subset of

some of the units in the

population;

A statistic is a number that

results from measuring all

the units in the sample;

Statistics derived from

samples are used to

estimate

population

parameters.

N = the number of cases in

the sampling frame

n = the number of cases in

the sample

NCn = the number of

combinations (subsets) of

n from N

f = n/N = the sampling

fraction

INFERENTIAL STATISTICS

Table of Contents

SAMPLE: ....................................................................................................................

SAMPLING .................................................................................................................

REASONS FOR SAMPLING.....................................................................................

ECONOMY ...........................................................................................................

TIME FACTOR: ...................................................................................................

VERY LARGE POPULATION: ..........................................................................

PARTLY ACCESSIBLE POPULATIONS: .........................................................

THE DESTRUCTIVE NATURE OF THE OBSERVATION: ............................

ACCURACY AND SAMPLING: ........................................................................

BIAS AND ERROR IN SAMPLING ........................................................................

SAMPLING ERROR..................................................................................................

NON SAMPLING ERROR ........................................................................................

POPULATION PARAMETER AND SAMPLE STATISTICS .............................

PROBABILITY OF RANDOM SAMPLING ..........................................................

Simple Random Sampling .........................................................................................

1.

Stratified Random sampling ..............................................................................

Systematic Random Sampling : .................................................................................

Cluster random Sampling: ........................................................................................

Multistage random sampling: ...................................................................................

Sequential Random Sampling : .................................................................................

NON PROBABILITY SAMPLING ............................................... Error! Bookmark

Purposive sampling: .................................................................................................

Quota Sampling ........................................................................................................

Convenience sampling: .............................................................................................

DIFFERENCES BETWEEN RANDOM AND NON RANDOM

SAMPLING .................................................................................................................

Sampling techniques: Advantages and disadvantages ............................................

How to Choose the Best Sampling Method ..............................................................

SAMPLING DISTRIBUTION ..................................................................................

Why the sampling distribution is important ..............................................................

1

SAMPLING

Central Limit Theorem ..............................................................................................

As you increase the

sample size, regardless of

the shape you create, the

distribution (i.e. look at

the histogram) becomes

more bell-shaped.

Variability of a Sampling Distribution: ....................................................................

SAMPLING DISTRIBUTION OF MEANS ...............................................................

Sampling distribution in case of without replacement: ............... Error! Bookmark

Sampling distribution of difference between means: ................................................

Sampling distribution of proportions ........................................................................

Statistics: The mean x

Sampling distribution of differences of proportions: ................................................

and standard deviation s

for the sample are Objectives ....................................................................................................................

statistics. They are used as

estimates

of

the

parameters. Statistics are

variables.

A sample mean, denoted

(pronounced “x-bar”), is

an

average

of

n

observations. It measures

the center of the observed

data values.

A

sample

standard

deviation, denoted, is an

average deviation of n

observations. It measures

the spread or dispersion of

the observed data values.

INFERENTIAL STATISTICS

2

SAMPLING

SAMPLING

SAMPLE:

A sample is a group of units selected from a larger group (the population). By studying the

sample it is hoped to draw valid conclusions about the larger group.

A sample is generally selected for study because the population is too large to study in its

entirety. The sample should be representative of the general population. This is often best

achieved by random sampling (probability sampling). Also, before collecting the sample, it is

important that the researcher carefully and completely defines the population, including a

description of the members to be included.

Example

In a classroom of 30 students in which half the students are male and half are female, a representative

sample might include six students: three males and three females.

SAMPLING

A process used in statistical analysis in which a predetermined number of observations will

be taken from a larger population. There are two major categories in sampling:

Probability sampling

Non-probability sampling

Examples

1. Conducting a poll to predict the winner of an upcoming election

2. Inspecting a sample of parts to determine if the entire lot meets requirements

3. Sometimes "measuring" or "testing" something destroys it. The government requires

automakers who want to sell cars in the U.S. to demonstrate that their cars can

survive certain crash tests. Obviously, the company can't be expected to crash every

car, to see if it survives! So the company crashes only a sample of cars.

REASONS FOR SAMPLING

ECONOMY:

There is an economic advantage of using a sample in research. Obviously, taking a sample

requires fewer resources than a census.

INFERENTIAL STATISTICS

3

SAMPLING

TIME FACTOR:

A sample may provide you with needed information quickly. For example, you are a Doctor

and a disease has broken out in a village within your area of jurisdiction, the disease is

contagious and it is killing within hours nobody knows what it is. You are required to

conduct quick tests to help save the situation. If you try a census of those affected, they may

be long dead when you arrive with your results. In such a case just a few of those already

infected could be used to provide the required information.

VERY LARGE POPULATION:

Many populations about which inferences must be made are quite large. For example,

consider the population of high school seniors in United States of America, a group

numbering 4,000,000. The responsible agency in the government has to plan for how they

will be absorbed into the different departments and even the private sector. The employers

would like to have specific knowledge about the student`s plans in order to make compatible

plans to absorb them during the coming year. But the big size of the population makes it

physically impossible to conduct a census. In such a case, selecting a representative sample

may be the only way to get the information required from high school seniors.

PARTLY ACCESSIBLE POPULATIONS:

There are some populations that are so difficult to get access to that only a sample can be

used. Like people in prison, like crashed aero planes in the deep seas, presidents etc. The

inaccessibility may be economic or time related. For example natural disasters like a flood

that occurs every 100 years or take the example of the flood that occurred in Noah`s days. It

has never occurred again.

THE DESTRUCTIVE NATURE OF THE OBSERVATION:

Sometimes the act of observing the desired characteristic of a unit of the population destroys

it for the intended use. Good examples of this occur in quality control. For example to test

the quality of a fuse, to determine whether it is defective, it must be destroyed. To obtain a

census of the quality of a lorry load of fuses, you have to destroy all of them. This is contrary

to the purpose served by quality-control testing. In this case, only a sample should be used to

assess the quality of the fuses.

ACCURACY AND SAMPLING:

A sample may be more accurate than a census. A sloppily conducted census can provide less

reliable information than a carefully obtained sample.

INFERENTIAL STATISTICS

4

SAMPLING

BIAS AND ERROR IN SAMPLING

Sampling bias is a tendency to favor the selection of units that have particular characteristics.

A sample is expected to mirror the population from which it comes; however, there is no

guarantee that any sample will be precisely representative of the population from which it

comes. Chance may dictate that a disproportionate number of untypical observations will be

made like for the case of testing fuses, the sample of fuses may consist of more or less faulty

fuses than the real population proportion of faulty cases.

SAMPLING ERROR

Sampling error is incurred when the statistical characteristics of a population are estimated

from a subset, or sample, of that population. Since the sample does not include all members

of the population, statistics on the sample, such as means and quantiles, generally differ from

parameters on the entire population. For example, if one measures the height of a thousand

individuals from a country of one million, the average height of the thousand is typically not

the same as the average height of all one million people in the country. Since sampling is

typically done to determine the characteristics of a whole population, the difference between

the sample and population values is considered a sampling error.“Increasing the sample

size can decrease the sampling error”

NON SAMPLING ERROR

A statistical error caused by human error to which a specific statistical analysis is exposed.

These errors can include, but are not limited to, data entry errors, biased questions in a

questionnaire, biased processing/decision making, inappropriate analysis conclusions and

false information provided by respondents.

POPULATION-PARAMETER AND SAMPLE-STATISTICS

A parameter is a value, usually unknown (and which therefore has to be estimated or tested),

used to represent a certain population characteristic. For example, the population mean is a

parameter that is often used to indicate the average value of a quantity.

Within a population, a parameter is a fixed value which does not vary. Each sample drawn

from the population has its own value of any statistic that is used to estimate this parameter.

For example, the mean of the data in a sample is used to give information about the overall

mean in the population from which that sample was drawn.

INFERENTIAL STATISTICS

5

SAMPLING

For example, say you want to know the mean income of the subscribers to a particular

magazine—a parameter of a population. You draw a random sample of 100 subscribers and

determine that their mean income is $27,500 (a statistic). You conclude that the population

means income μ is likely to be close to $27,500 as well. This example is one of statistical

inference.

“σ”

“σ2 ”

INFERENTIAL STATISTICS

6

SAMPLING

PROBABILITY OR RANDOM SAMPLING

Simple Random Sampling

In statistics, a simple random sample is a subset of individuals (a sample) chosen from a

larger set (a population). Each individual is chosen randomly and entirely by chance, such

that each individual has the same probability of being chosen at any stage during the

sampling process, and each subset of k individuals has the same probability of being chosen

for the sample as any other subset of k individuals. This process and technique is known as

simple random sampling. A simple random sample is an unbiased surveying technique.

In small populations and often in large ones, such sampling is typically done "without

replacement", i.e., one deliberately avoids choosing any member of the population more

than once. Although simple random sampling can be conducted with replacement instead,

this is less common and would normally be described more fully as simple random

sampling with replacement.

Example 1

Let’s say you have a population of 1,000 people and you wish to choose a simple random

sample of 50 people. First, each person is numbered 1 through 1,000. Then, you generate a

list of 50 random numbers and those individuals assigned those numbers are the ones you

include in the sample.

Example 2

Figure 1.1

An example of simple random sampling of 10 subjects, represented by the red ‘stickmen’,

selected at random from a total of 50 subjects.

INFERENTIAL STATISTICS

7

SAMPLING

Case Study: Selecting a simple random sample of students

A simple random sample of 25 students is to be selected from a school of 500 students. Using

a list of all 500 students, each student is given a number (1 to 500), and these numbers are

written on small pieces of paper. All the 500 papers are put in a box, after which the box is

shaken vigorously to ensure randomization. Then, 25 papers are taken out of the box, and the

numbers are recorded. The students belonging to these numbers will constitute the simple

random sample.

Stratified Random sampling:

Stratification is the process of dividing members of the population into homogeneous

subgroups before sampling.

Example 1

Figure 1.2

The 50 subjects in Figure 1.2 have been stratified (divided) into two subgroups – one of 30

subjects (outlined in blue), and one of 20 subjects (outlined in green). A sample of 10

subjects has been selected, but they have not been picked entirely at random. Instead, 6 have

been selected at random from the 30 blue subjects and 4 have been selected at random from

the 20 green subjects, to ensure that the blue and green individuals are proportionately

represented in the sample of 10 selected individuals.

Example 2

A survey is conducted on household water supply in a district comprising 2,000 households,

of which 400 (or 20%) are urban and 1,600 (or 80%) are rural. It is suspected that in urban

areas the access to safe water sources is much more satisfactory than in rural areas. A

decision is made to sample 200 household’s altogether, but to include 100 urban households

and 100 rural households.

INFERENTIAL STATISTICS

8

SAMPLING

Probability base and non-probability base:

Representation of the subgroups can be proportionate or disproportionate. For example, if

you wanted to sample 100 farmers from a population of farmers in which 90% are male and

10% are female, a proportionate stratified sample would select 90 males and 10 females. But

you may want to know more about the women farmers than is possible in a sample of only

ten subjects. So you can select a disproportionate stratified sample, for example, you could

select 50 males and 50 females.

Systematic Random Sampling:

It is a method of selecting sample members from a larger population, according to a random

starting point and a fixed, periodic interval. Typically, every "nth" member is selected from

the total population for inclusion in the sample population.

Example 1

An example of systematic sampling of every tenth subject selected systematically from a

total of 50 subjects.

Example 2

A systematic sample is to be selected from 1,200 students from the same school. The

required sample size is 100. The study population is 1,200 and the sample size is 100, so a

systematic sampling interval is found by dividing the study population by the sample size:

1,200 ÷ 100 = 12

the sampling interval is therefore 12.

The number of the first student to be included in the sample should be chosen randomly, for

example by blindly picking one out of twelve pieces of paper, numbered 1 to 12. If number 6

INFERENTIAL STATISTICS

9

SAMPLING

is picked, then every twelfth student will be included in the sample, starting with student

number 6, until 100 students have been selected.

Cluster random Sampling:

Cluster sampling is a sampling technique used when "natural" but relatively homogeneous

groupings are evident in a statistical population. It is often used in marketing research. In this

technique, the total population is divided into groups (or clusters) and a simple random

sample of the groups is selected. Then the required information is collected from a simple

random sample of the elements within each selected group.

Example 1

Let’s say that a researcher is studying the academic performance of high school students in

the United States and wanted to choose a cluster sample based on geography. First, the

researcher would divide the entire population of the United States into clusters, or states.

Then, the researcher would select either a simple random sample or a systematic random

sample of those clusters/states. Let’s say he/she chose a random sample of 15 states and

he/she wanted a final sample of 5,000 students. The researcher would then select those 5,000

high school students from those 15 states either through simple or systematic random

sampling.

Example 2

The most common cluster used in research is a geographical cluster. For example, a

researcher wants to survey academic performance of high school students in Spain.

1. He can divide the entire population (population of Spain) into different clusters

(cities).

INFERENTIAL STATISTICS

10

SAMPLING

2. Then the researcher selects a number of clusters depending on his research through

simple or systematic random sampling.

3. Then, from the selected clusters (randomly selected cities) the researcher can either

include all the high school students as subjects or he can select a number of subjects

from each cluster through simple or systematic random sampling.

The important thing to remember about this sampling technique is to give all the clusters

equal chances of being selected.

Multistage random sampling:

Multistage sampling is a complex form of cluster sampling. Cluster sampling is a type of

sampling which involves dividing the population into groups (or clusters). Then, one or more

clusters are chosen at random and everyone within the chosen cluster is sampled.

Example 1

For instance, when the polling organization samples US voters, they don’t do a SRS. Since

voter lists are compiled by counties, they might first do a sample of the counties and then

sample within the selected counties. This illustrates two stages. In some instances, they might

use even more stages. At each stage, they might do a stratified random sample on gender,

race, income level, or any other useful variable on which they could get information before

sampling.

INFERENTIAL STATISTICS

11

SAMPLING

Example 2

For example, household surveys conducted by the Australian Bureau of Statistics begin by

dividing metropolitan regions into 'collection districts' and selecting some of these collection

districts (first stage). The selected collection districts are then divided into blocks, and blocks

are chosen from within each selected collection district (second stage). Next, dwellings are

listed within each selected block, and some of these dwellings are selected (third stage). This

method makes it unnecessary to create a list of every dwelling in the region and necessary

only for selected blocks.

NON PROBABILITY SAMPLING

Sequential Random Sampling:

Sequential sampling is a non-probability sampling technique wherein the researcher picks a

single or a group of subjects in a given time interval, conducts his study, analyzes the results

then picks another group of subjects if needed and so on.

Example 1

If a business organization wanted to determine the need for a new product, it might use

sequential sampling as part of its research process. The business might distribute

questionnaires to a selected group of potential customers asking for response to questions or

scenarios that would help to measure the perceptions of the responders to the idea for the

potential product.

Example 2

A manufacturing plant might pull off the assembly line for close evaluation every fourth

product that was created with a new type of material or process. The testing of that sample

portion of the output would verify whether or not the new material or process contributed to

the making of a final product that met required specifications.

Purposive sampling:

A purposive, or judgmental, sample is one that is selected based on the knowledge of a

population and the purpose of the study. It is based on the researcher’s own expertise.

Example 1

If a researcher is studying the nature of school spirit as exhibited at a school pep rally, he or

she might interview people who did not appear to be caught up in the emotions of the crowd

or students who did not attend the rally at all. In this case, the researcher is using a purposive

sample because those being interviewed fit a specific purpose or description.

INFERENTIAL STATISTICS

12

SAMPLING

Example 2

In a study wherein a researcher wants to know what it takes to graduate summa cum laude in

college, the only people who can give the researcher first hand advise are the individuals who

graduated summa cum laude. With this very specific and very limited pool of individuals that

can be considered as a subject, the researcher must use judgmental sampling.

Quota Sampling

A quota sample is a survey design in which interviewers recruit respondents according to a

set of guidelines that will result in an overall sample with certain proportions of people with

various social characteristics.

Example 1

The requirement might be to produce a collection of interviews that is evenly divided

between men and women, has certain percentages of people from different races and age

categories etc.

Example 2

Let’s say, for example, that you want to obtain a proportional quota sample of 100 people

based on gender. First you would need to find out the proportion of the population that is

men and the proportion that is women. If you found out the larger population is 40% women

and 60% men, you would need a sample of 40 women and 60 men for a total of 100

respondents. You would start sampling and continue until you got those proportions and then

you would stop. So, if you’ve already got 40 women for the sample, but not 60 men, you

would continue to sample men and discard any legitimate women respondents that came

along.

Convenience sampling:

A convenience sample is simply one in which the researcher uses any subjects that are

available to participate in the research study. This could mean stopping people in a street

corner as they pass by or surveying passersby in a mall. It could also mean surveying friends,

students, or colleagues that the researcher has regular access to.

Example 1

Let’s say that a researcher and professor at a University are interested in studying drinking

behaviors among college students. The professor teaches a sociology 101 class to mostly

college freshmen and decides to use his or her class as the study sample. He or she passes out

surveys during class for the students to complete and hand in.

Example 2

INFERENTIAL STATISTICS

13

SAMPLING

Convenience sampling is often used when statistical data gathered from a specific group of

people is desired. For example, if a company wants to figure out what flavor of pizza sells

the best in college students, they could poll an average local college and reliably say that that

is an accurate representation of most college students.

DIFFERENCES BETWEEN RANDOM AND NON RANDOM SAMPLING

The differences between Probability (Random) Sampling and Non-Probability (NonRandom) Sampling are summarized below.

Probability (Random) Sampling

Non-Probability (Non-Random) Sampling

Allows the use of statistics, tests hypotheses

Exploratory research, generates hypotheses

Can estimate population parameters

Population parameters are not of interest

Eliminates bias

Adequacy of the sample can't be known

Must have random selection of units

Cheaper, easier, quicker to carry out

Sampling techniques: Advantages and disadvantages

Technique Descriptions

Advantages

Simple

Random sample from

whole population

Highly representative if Not

possible

without

all subjects participate; complete list of population

the ideal

members;

potentially

uneconomical to achieve;

can be disruptive to isolate

members from a group;

time-scale may be too long,

data/sample could change

Stratified

Random sample from

identifiable groups

(strata), subgroups,

etc.

Can ensure that specific

groups are represented,

even proportionally, in

the sample(s) (e.g., by

gender), by selecting

INFERENTIAL STATISTICS

14

Disadvantages

More complex, requires

greater effort than simple

random; strata must be

carefully defined

SAMPLING

individuals from strata

list

Cluster

Random samples of

successive clusters of

subjects (e.g., by

institution) until small

groups are chosen as

units

Possible

to

select

randomly when no single

list

of

population

members exists, but

local lists do; data

collected on groups may

avoid introduction of

confounding by isolating

members

Stage

Combination

of

cluster

(randomly

selecting clusters) and

random or stratified

random sampling of

individuals

Can make up probability Complex,

combines

sample by random at limitations of cluster and

stages

and

within stratified random sampling

groups; possible to select

random sample when

population lists are very

localized

Purposive

Hand-pick subjects on Ensures balance of group Samples are not easily

the basis of specific sizes when multiple defensible

as

being

characteristics

groups are to be selected representative

of

populations due to potential

subjectivity of researcher

Quota

Select individuals as

they come to fill a

quota

by

characteristics

proportional

to

populations

Ensures selection of Not possible to prove that

adequate numbers of the sample is representative

subjects with appropriate of designated population

characteristics

Snowball

Subjects with desired

traits or characteristics

give names of further

appropriate subjects

Possible

to

include

members of groups

where no lists or

identifiable clusters even

exist (e.g., drug abusers,

criminals)

INFERENTIAL STATISTICS

15

Clusters in a level must be

equivalent and some natural

ones are not for essential

characteristics

(e.g.,

geographic: numbers equal,

but unemployment rates

differ)

No way of knowing

whether the sample is

representative

of

the

population

SAMPLING

Volunteer,

accidental,

convenience

Either asking for volunteers, or

the consequence of not all those

selected finally participating, or

a set of subjects who just

happen to

Inexpensive way Can be highly

of

ensuring unrepresentative

sufficient numbers

of a study

be available

How to Choose the Best Sampling Method

In this section, we illustrate how to choose the best sampling method by working through a

sample problem. Here is the problem:

Problem Statement

At the end of every school year, the state administers a reading test to a sample of third

graders. The school system has 20,000 third graders, half boys and half girls. There are

1000 third-grade classes, each with 20 students.

The maximum budget for this research is $3600. The only expense is the cost to proctor

each test session. This amounts to $100 per session.

The purpose of the study is to estimate the reading proficiency of third graders, based on

sample data. School administrators want to maximize the precision of this estimate

without exceeding the $3600 budget. What sampling method should they use?

Finding the "best" sampling method is a four-step process. We work through each step

below.

List goals. This study has two main goals: (1) maximize quality production and (2)

stay within budget.

Identify potential sampling methods.

Test methods. A key part of the analysis is to test the ability of each potential

sampling method to satisfy the research goals. Specifically, we will want to know the

INFERENTIAL STATISTICS

16

SAMPLING

level of precision and the cost associated with each potential method. For our test, we

use the standard error to measure precision. The smaller the standard error, the greater

the precision.

Choose best method. In this example, the cost of each sampling method is identical,

so none of the methods has an advantage on cost. However, the methods do differ

with respect to precision (as measured by standard error). Cluster sampling provides

the most precision (i.e., the smallest standard error); so cluster sampling is the best

method.

SAMPLING DISTRIBUTION

1) The sampling distribution is a theoretical distribution of a sample statistic.

2.) There is a different sampling distribution for each sample statistic.

3) The sampling distribution of the mean is a special case of the sampling distribution.

4.) The Central Limit Theorem relates the parameters of the sampling distribution of the

mean to the population model and is very important in statistical thinking.

Why the sampling distribution is important?

We use the sampling distribution of a statistic to determine the probability that the value of

the statistic is like other possible sample values. It helps us determine the likelihood of error

in concluding there is a relationship when there is not, or in concluding that two statistics are

different.

The sampling distribution is derived assuming the null hypothesis is correct. The sampling

distribution says, if there is no relationship between x and y, these are the statistics we would

expect and their associated probabilities.

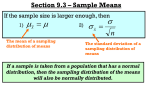

Central Limit Theorem

The central limit theorem states that the sampling distribution of any statistic will be normal

or nearly normal, if the sample size is large enough.

How large is "large enough"? As a rough rule of thumb, many statisticians say that a

sample size of 30 is large enough. If you know something about the shape of the sample

distribution, you can refine that rule. The sample size is large enough if any of the following

conditions apply.

The population distribution is normal.

The sampling distribution is symmetric, uni modal, without outliers, and the sample

size is 15 or less.

INFERENTIAL STATISTICS

17

SAMPLING

The sampling distribution is moderately skewed, uni modal, without outliers, and the

sample size is between 16 and 40.

The sample size is greater than 40, without outliers.

The exact shape of any normal curve is totally determined by its mean and standard

deviation. Therefore, if we know the mean and standard deviation of a statistic, we can find

the mean and standard deviation of the sampling distribution of the statistic (assuming that

the statistic came from a "large" sample).

Sampling distribution

Distribution of a sample statistic is called sampling distribution.

OR

A probability distribution of all the possible means of the samples is distributions of the

sample means; statisticians call this a sampling distribution of mean.

Suppose that we draw all possible samples of size n from a given population. Suppose further

that we compute a statistic (e.g., a mean, proportion, standard deviation) for each sample.

The probability distribution of this statistic is called a sampling distribution.

Variability of a Sampling Distribution:

Variability of sampling distribution is measured by its standard deviation or variance, it

depends upon three factors.

N: no of observations in the population.

n: no of observations in the sample

How random sample is chosen?

Sampling distribution will have roughly the same sampling error if population size is

much larger than the sample size, whether sampling is done with or without

replacement. Sampling error would be smaller if the sample represents a significant

figure (say, 1/10) of population, when we sample without replacement.

What is the difference between sampling and population distribution?

Sampling distribution is a distribution of sample statistic while population distribution is the

distribution of the population we selected for deducing our results, our area of interest.

Population distribution refers to the patterns that a population creates as they spread within

an area. A sampling distribution is a representative, random sample of that population.

INFERENTIAL STATISTICS

18

SAMPLING

SAMPLING DISTRIBUTION OF MEANS

In order to demonstrate the properties of sampling distribution, let us consider a simple

example. Suppose that our population consists of N=5 numbers 1, 2, 3, 4, 5. The mean (

and the standard deviation (σ) of this population are given by

CASE I: SAMPLING DISTRIBUTION WITH REPLACEMENT

When N=5, n=2

µ= ∑

=

=3

=1.4142

Suppose that we draw all possible samples of size n=2 with replacement and then for each

sample compute the sample mean x. There are Nn=52=25 samples of size 2 which can b

drawn with replacement. These samples are

Sample

mean

Sample

Mean

Sample

mean

sample

Mean

(1,1)

1

(2,3)

2.5

(3,5)

4

(5,2)

3.5

(1,2)

1.5

(2,4)

3

(4,1)

2.5

(5,3)

4

(1,3)

2

(2,5)

3.5

(4,2)

3

(5,4)

4.5

(1,4)

2.5

(3,1)

2

(4,3)

3.5

(5,5)

5

(1,5)

3

(3,2)

2.5

(4,4)

4

(2,1)

1.5

(3,3)

3

(4,5)

4.5

(2,2)

2

(3,4)

3.5

(5,1)

3

INFERENTIAL STATISTICS

19

SAMPLING

X

Tally

F

Pr(x)

∑

∑(x2.Pr(x))

1

I

1

1/25

1/25

1/25

1.5

II

2

2/25

3/25

9/25

2

III

3

3/25

6/25

36/25

2.5

IIII

4

4/25

10/25

100/25

3

IIII

5

5/25

15/25

225/25

3.5

IIII

4

4/25

14/25

196/25

4

III

3

3/25

12/25

144/25

4.5

II

2

2/25

9/25

81/25

5

I

1

1/25

5/25

25/25

∑=75/25=3

E(X)2=32.68

∑f=25

E(x) =∑ (x. Pr(x) = 3

V(x) =E(x2) –E (x) 2

=32.68-(3)2

=23.68

To prove functional relationships

1. E(x) = µ

µ=

=3

E(x) = µ

3=3

INFERENTIAL STATISTICS

20

SAMPLING

2. V(x)=

σ

= 6.88

σ2=47.36

V(x) =

σ

23.68=23.68

CASE II: Sampling distribution with replacement

N=5, n=3

Suppose that we draw all possible samples of size n=3 with replacement and then for each

sample compute the sample mean x. There are Nn=53=125 samples of size 3 which can b

drawn with replacement these samples are

Samples Mean Sample Mean Sample Mean

Sample Mean Sample Mean

1,1,1

1

2,1,2

2.66

3,1,3

2.33

4,1,4

3

5,1,5

3.33

1,1,2

1.33

2,1,3

2

3,1,4

2.66

4,1,5

3.33

5,2,1

2.66

1,1,3

1.66

2,1,4

2.33

3,1,5

3

4,2,1

2.33

5,2,2

3

1,1,4

2

2,1,5

2.66

3,2,1

2

4,2,2

2.66

5,2,3

3.33

1,1,5

2.33

2,2,1

1.33

3,2,2

2.33

4,2,3

3

5,2,4

3.66

1,2,1

1.33

2,2,2

2

3,2,3

2.66

4,2,4

3.33

5,2,5

4

1,2,2

1.66

2,2,3

2.33

3,2,4

3

4,2,5

3.66

5,3,1

3

1,2,3

2

2,2,4

2.66

3,2,5

3.33

4,3,1

2.66

5,3,2

3.33

1,2,4

2.33

2,2,5

3

3,3,1

2.33

4,3,2

3

5,3,3

3.66

INFERENTIAL STATISTICS

21

SAMPLING

1,2,5

2.66

2,3,1

2

3,3,2

2.66

4,3,3

3.33

5,3,4

4

1,3,1

1.66

2,3,2

2.33

3,3,3

3

4,3,4

3.66

5,3,5

4.33

1,3,2

2

2,3,3

2.66

3,3,4

3.33

4,3,5

4

5,4,1

3.33

1,3,3

2.33

2,3,4

3

3,3,5

3.66

4,4,1

3

5,4,2

3.66

1,3,4

2.66

2,3,5

3.33

3,4,1

2.66

4,4,2

3.33

5,4,3

4

1,3,5

3

2,4,1

2,33

3,4,2

3

4,4,3

3.66

5,4,4

4.33

1,4,1

2

2,4,2

2.66

3,4,3

3.33

4,4,4

4

5,4,5

4.66

1,4,2

2.33

2,4,3

3

3,4,4

3.66

4,4,5

4.33

5,5,1

3.66

1,4,3

2.66

2,4,4

3.33

3,4,5

4

4,5,1

3.33

5,5,2

4

1,4,4

3

2,4,5

3.66

3,5,1

3

4,5,2

3.66

5,5,3

4.33

1,4,5

3.33

2,5,1

2.66

3,5,2

3.33

4,5,3

4

5,5,4

4.66

1,5,1

2.33

2,5,2

3

3,5,3

3.66

4,5,4

4.33

5,5,5

5

1,5,2

2.66

2,5,3

3.33

3,5,4

4

4,5,5

4.66

1,5,3

3

2,5,4

3.66

3,5,5

4.33

5,1,1

2.33

1,5,4

3.33

2,5,5

4

4,1,1

2

5,1,2

2.66

1,5,5

3.66

3,1,1

1.33

4,1,2

2.33

5,1,3

3

2,1,1

1.33

3,1,2

2

4,1,3

2.66

5,1,4

3.33

X

Tally

F

Pr(x)

∑

1

I

1

1/125

1/25

1/125

1.33

IIII/

5

5/125

6.65/125

8.84/125

1.66

III

3

3/125

4.98/125

8.26/125

2

IIII/ IIII/

10

10/125

20/125

40/125

INFERENTIAL STATISTICS

22

∑(x2.Pr(x))

SAMPLING

2.33

IIII/

IIII/

IIII/ 15

15/125

34.95/125

81.42/125

2.66

IIII/

IIII/ 19

IIII/ III

19/125

50.54/125

134.42/125

3

IIII/

IIII/ 19

IIII/ IIII

19/125

57/125

171/125

3.33

IIII/

IIII/ 19

IIII/ IIII

19/125

63.27/125

210.52/125

3.66

IIII/

IIII/ 19

IIII/ IIII

19/125

69.54/125

254.41/125

4

IIII/ IIII/

10

10/125

40/125

160/125

4.33

IIII/ I

6

6/125

25.98/125

112.49/125

4.66

III

3

3/125

13.98/125

65.146/125

5

I

1

1/125

5/125

25/125

∑=392.89/125=3.1 E(X)2=10.18

∑f=125

E(x) =∑ (x. Pr(x)

=3.1

V(x) =E(x2) –E (x) 2

=-(10.18)–(3.14)2

=

0.32

To prove functional relationship

1. E(x) = µ

3.1=3.1 hence proved

µ=

= 3.1

2. V(x)=

INFERENTIAL STATISTICS

23

SAMPLING

σ2=V(x).n = (0.32). (3) = 0.96

V(x) =

=0.96/3 =0.32 hence proved

CASE III: SAMPLING DISTRIBUTION IN CASE OF WITHOUT

REPLACEMENT

Suppose now we draw all possible samples of size 2 from our population without

replacement, for each sample we will compute the sample mean. As N=1, 2, 3, 4, 5 with n=2

Sample

Mean(x)

Sample

Mean(x)

(1,2)

1.5

(2,4)

3

(1,3)

2

(2,5)

3.5

(1,4)

2.5

(3,4)

3.5

(1,5)

3

(3,5)

4

(2,3)

2.5

(4,5)

4.5

x

tally

F

Pr(x)

∑

∑(x2.Pr(x))

1.5

I

1

1/10

1.5/10

2.25/10

2

I

1

1/10

2/10

4/10

2.5

II

2

2/10

5/10

25/10

3

II

2

2/10

6/10

36/10

3.5

II

2

2/10

7/10

49/10

4

I

1

1/10

4/10

16/10

INFERENTIAL STATISTICS

24

SAMPLING

4.5

I

1

1/10

∑f=10

4.5/10

20.25/10

∑=30/10=3

E(X)2=15.25

Prove the results

1. E(X) =µ

∑

µ

=

=3

So E(X) =∑(x .Pr(x))

3=3

σ

2. V(x)=

V(x) =E(x2)-E(x) 2=15.25-(3)2=6.25

σ2= ∑(x-µ) 2/N

σ2 =

(1-3)2+ (2-3)2+ (3-3)2+ (4-3)2+ (5-3)2

σ2 =

σ2 = 2

6.25=6.25 hence proved

CASE IV: Sampling distribution in case of without replacement

A population consists of four numbers 2, 4, 6, 8 all the possible samples of size n=3 are given

below in the table which can be drawn without replacement from this population

Here N=4 and n=3 the number of possible samples of size 3 which can be drawn are as given

below

INFERENTIAL STATISTICS

25

SAMPLING

Samples

Mean

(2,4,6)

4

(2,4,8)

4.67

(2,6,8)

5.33

(4,6,8)

6

X

Tally

F

Pr(x)

∑

∑(x2.Pr(x))

4

I

1

1/4

4/4

16/4

4.67

I

1

1/4

4.67/4

21.80/4

5.33

I

1

1/4

5.33/4

28.40/4

6

I

1

1/4

6/4

36/4

∑=20/4=5

E(X)2=25.55

∑f=4

To prove the results:

1. E(x)=

Where E(x) = ∑ (x. Pr(x)) =5

µ=

=5

Hence 5=5

2. V(x) =

V(X) = E(x2)-E(x) 2

V(X) = 25.55-25 = 0.55

INFERENTIAL STATISTICS

26

SAMPLING

And σ2= ∑(x-µ) 2/N

2

+ (4-5)2+ (6-5)2+ (8-5)2

σ2 =

σ2 = 5

σ2 =

(

)=0.55

Hence proved

CASE V: SAMPLING DISTRIBUTION OF DIFFERENCE BETWEEN

MEANS

Suppose we have two infinite populations I and II with means µ1 andµ2 and standard

deviations σ1 andσ2 respectively. Let X1 be the mean of a sample of size n1 from population I

and X2 be the mean of the sample of size n2 from population II, independent of the sample I.

the means of samples, each of size n1 from the population will yield a sampling distribution

of X1 with mean µ1 and standard deviation σ1.similarly the means of samples each of size n2

from population II will yield a sampling distribution of X2.with a mean µ2 and standard

deviation σ2

From all combinations of these samples from the two populations we can obtain a

distribution of differences of means, X1-X2 which is called the sampling distribution of

differences of the means. The mean and standard deviation of this sampling distribution is

denoted by µ1-µ2 and σ1- σ2 are given by

µ1-µ2

= µx 1- µx

2

σ x1 - x 2 =V(X1-X2) = V(X1) + V(X2) = (σx1)2+ (σx2)2 x 2 = (σx1)2/n1+ (σx2)2/n2

Suppose that population I consists of 2 numbers (4, 6, 8) and population II consists of 3

numbers (1, 2, 3)

For population I and II: N1=3, n1=2, N2=3, n2=2

N1

X1

N2

X2

4, 4

4

1,1

1

4, 6

5

1,2

1.5

4,8

6

1,3

2

6,4

5

2,1

1.5

INFERENTIAL STATISTICS

27

SAMPLING

6,6

6

2,2

2

6,8

7

2,3

2.5

8,4

6

3,1

2

8,6

7

3,2

2.5

8,8

8

3,3

3

Difference Table X1-X2

x1

x2 1

1.5

2

1.5

2

2.5

2

2.5

3

4

3

2.5

2

2.5

2

1.5

2

1.5

1

5

4

3.5

3

3.5

3

2.5

3

2.5

2

6

5

4.5

4

4.5

4

3.5

4

3.5

3

5

4

3.5

3

3.5

3

2.5

3

2.5

2

6

5

4.5

4

4.5

4

3.5

4

3.5

3

7

6

5.5

5

5.5

5

4.5

5

4.5

4

6

5

4.5

4

4.5

4

3.5

4

3.5

3

7

6

5.5

5

5.5

5

4.5

5

4.5

4

8

7

6.5

6

6.5

6

5.5

6

5.5

5

X1-X2=d

TALLY

F

Pr(d)

d. Pr(d)

d2.Pr(d)

1

I

1

1/81

1/81

1/81

1.5

II

2

2/81

3/81

4.5/81

2

IIII/

5

5/81

10/81

20/81

2.5

IIII/I

6

6/81

15/81

37/81

3

IIII/ IIII/

10

10/81

30/81

90/81

INFERENTIAL STATISTICS

28

SAMPLING

3.5

IIII/IIII/

10

10/81

35/81

122.5/81

4

IIII/ IIII/ 13

III

13/81

52/81

208/81

4.5

IIII/IIII/

10

10/81

45/81

202.5/81

5

IIII/IIII/

10

10/81

50/81

250/81

5.5

IIII/I

6

6/81

33/81

181.5/81

6

IIII/

5

5/81

30/81

180/81

6.5

II

2

2/81

13/81

84/81

7

I

1

1/81

7/81

49/81

E(x1x2)=E(d)=4

E(X1)2-(X2)2=E(d2)= 17.6

∑f=81

Prove the results:

1. E(d) = µ1-µ2

E (d) = 2.5

µ1-µ2=∑ (X1-X2) / N

µ1-µ2=4

Hence proved 4 = 4

2. V(d) = (σ x1 )2/n1+ (σx2)2/n2

(σ1)2= (4-6)2 + (6-6)2+ (8-6)/3 =8/3

(σ2)2= (1-2)2 + (2-2)2+ (3-2)2/3=2/3

(σ x1 ) 2/n1+ (σx2)2/n2=8/3 .1/2 +5/3 .1/2

=5/3

=1.66

V (d) = E (d2) –E (d) 2

V (d) =17.6 – (4)2

V (d) =17.6 - 16

V (d) = 1.6

Hence proved

INFERENTIAL STATISTICS

29

SAMPLING

SAMPLE PROPORTION

Proportion is referred as a certain fraction of the total possessing certain attribute of our

interest; let us take an example to understand the concept of proportion

Suppose a student guesses at the answer on every question in a 300-question exam. If he gets

60 questions correct, then his proportion of correct guesses is 60/300=.20. If he gets 75

questions correct, then his proportion of correct guesses is 75/300=.25. The proportion of

correct guesses is simply the number of correct guesses divided by the total number of

questions.

Now, let X denote the number of successes out of a sample of n observations. If each

observation is a success with probability p independently of the other observations, then X is

a binomial random variable with parameters n and p. Furthermore, the proportion of

successes in the sample is also a random variable and is computed as

Sampling distribution of proportions:

Consider an experiment that results in a success on each trial with probability p or a failure

with a probability q=1-p. to obtain a sample of size n we perform n trials of experiment and

we are sampling from an infinite population. For example the population may be all possible

tosses of a fair coin in which the probability of getting head (success) is p=1/2 the mean

would be µ=np and the standard deviation σ=√

Question: 01 A population consists of five members .the marital status of each member

is given below, where M and S stand for married and single respectively.

Member

1

2

3

4

5

Marital

status

M

S

M

S

S

a) Determine the proportion of married members in the population

b) Select all possible samples of two members from this population (i) with replacement, (ii)

without replacement and compute the proportion of married members in each sample.

INFERENTIAL STATISTICS

30

SAMPLING

Solution:

a) Since there are 2 married members in the population

N=5, p=2/5

=0.4 or 40%

b) There are Nn=52 =25 possible samples of size n=2 which can be drawn with

replacement form the population these samples are given below

sample

p

sample

p

sample

p

sample

p

(1,1)

1

(2,4)

0

(4,2)

0

(5,5)

0

(1,2)

0.5

(2,5)

0

(4,3)

0.5

(1,3)

1

(3,1)

1

(4,4)

0

(1,4)

0.5

(3,2)

0.5

(4,5)

0

(1,5)

0.5

(3,3)

1

(5,1)

0.5

(2,1)

0.5

(3,4)

0.5

(5,2)

0

(2,2)

0

(3,5)

0.5

(5,3)

0.5

(2,3)

0.5

(4,1)

0.5

(5,4)

0

P

tally

f

Pr(p)

p.Pr(p)

p2

p2.Pr(p)

0

IIII/ IIII

9

9/25

0

0

0

0.5

IIII/ IIII/ 12

II

12/25

6/25

.25

3/25

1

IIII

4/25

4/25

1

4/25

4

∑f=25

E(p)=0.4

E(p2)=0.28

To prove the results:

1) E(p) = P

P=

0.4=0.4

INFERENTIAL STATISTICS

31

SAMPLING

2) V ( p ) = pq/n

V (p) =E (p2)-E (p) 2

=0.28-(0.4)2

=0.12

Pq/n= (0.4) (1-0.4)/2

=0.12

0.12=0.12 hence proved

In case of without replacement the samples drawn of sine n=2 are 10

Sample

Proportion

Sample

proportion

(1,2)

0.5

(2,4)

0

(1,3)

1

(2,5)

0

(1,4)

0.5

(3,4)

0.5

(1,5)

0.5

(3,5)

0.5

(2,3)

0.5

(4,5)

0

P

Tally

F

Pr(p)

p.Pr(p)

p2

p2.Pr(p)

0

III

3

3/10

O

0

0

0.5

IIII/ I

6

6/10

3/10

0.25

1.5/10

1

I

1

1/10

1/10

1

1/10

∑f=10

E(p)=0.4

E(p2)=0.25

Prove the results

1) E(p)=µp

0.4=0.4

INFERENTIAL STATISTICS

32

SAMPLING

2) V (p) = √

√

V (p) = √

–

=√

=√

√

= 0.3

√

√

√

=√

√

=√

= 0.3

Hence proved

SAMPLING DISTRIBUTION OF DIFFERENCES OF PROPORTIONS

Consider independent samples of size n1 and n2 drawn at random from two binomial

populations with parameters p1, q1 and p2 and q2 respectively, we denote proportion of

successes of each sample by P1 & P2. From all combination of these samples from the true

population we can obtain the sampling distributions of the differences of P1-P2 which is

called the sampling distribution of differences of proportions. The mean and standard

deviation are given below.

Mean: µp1-µp2 =p1-p2

Standard deviation: (σp1-p2) = √

=√

Question 1: let P1 denote the proportion of odd numbers in a random sample of size n1=2

with replacement from a finite population of size N1=3: 3, 6, 9.similarly, let P2 denote the

proportion of odd numbers in a random sample of size n2=3 with replacement from a finite

population of N2=2: (6, 7). Form sampling distributions of P1-P2 also find the mean and

variance of a sampling distribution of P1-P2 and verify the results.

Solution:

In population 1, N1=3, n1=2 there are Nn=32= 9 possible samples which can be drawn with

replacement from this population. These samples are

Samples

Proportion

Samples

Proportion

3,3

1

6,6

0

3,6

½

6,9

½

INFERENTIAL STATISTICS

33

SAMPLING

3,9

1

9,3

1

6,2

½

9,6

½

9,9

1

In population 2, N2=2 n2=3 there are Nn=23=8 possible samples which can be drawn from

this population. These samples are

Samples

Proportion

Sample

Proportion

6,6,6

0

7,6,6

1/3

6,6,7

1/3

7,6,7

2/3

6,7,6

1/3

7,7,6

2/3

6,7,7

2/3

7,7,7

1

Difference table =p1-p2

P1/p2

0

1/3

1/3

1/3

2/3

2/3

2/3

1

0

0

-1/3

-1/3

-1/3

-2/3

-2/3

-2/3

-1

1/2

1/2

1/6

1/6

1/6

-1/6

-1/6

-1/6

-1/2

P1-P2

tally

f

-1

-2/3

-1/2

-1/3

-1/6

I

III

IIII

III

IIII/

IIII/ II

IIII/

IIII/

IIII/ II

IIII/

0

1/6

1/3

INFERENTIAL STATISTICS

1/2

½

1/6

1/6

1/6

-1/6

-1/6

-1/6

-1/2

1/2

½

1/6

1/6

1/6

-1/6

-1/6

-1/6

-1/2

1/2

½

1/6

1/6

1/6

-1/6

-1/6

-1/6

-1/2

1

1

2/3

2/3

2/3

1/3

1/3

1/3

0

1

1

2/3

2/3

2/3

1/3

1/3

1/3

0

1

1

2/3

2/3

2/3

1/3

1/3

1/3

0

1

1

2/3

2/3

2/3

1/3

1/3

1/3

0

-1/72

-2/72

-2/72

-1/72

-2/72

(P1P2)2

1

4/6

¼

1/9

1/36

(P1-P2)2. F(P1P2)

1/72

4/216

1/72

1/216

1/216

5/72

12/72

0

2/72

0

1/36

0

1/216

12/72

4/72

1/9

4/216

P1-P2. f(p1-p2)

1

3

4

3

12

F(p1P2)

1/72

3/72

4/72

3/72

12/72

5

12

12

34

SAMPLING

½

2/3

1

IIII/ II

IIII

IIII/

IIII/ II

IIII

4

12

4/72

12/72

2/72

8/72

¼

4/9

1/72

16/216

4

4/72

4/72

1

4/72

∑f=72

∑(P1-p2). f(P1P2) = 12/72

∑(P1-P2)2. f(P1P2) = 48/216

Prove the results

Mean:

µp1-p2=∑ (P1-P2) f (P1-P2)

∑ (P1-P2) f (P1-P2) =12/72

=1/6

Variance:

(σp1-p2)2=∑ (P1-P2)2 f (P1-P2) – (µp1-p2)2

=48/216-1/36

=7/36

The proportion of odd numbers in population 1 and 2 are P1=2/3 and P2=1/2

respectively

Prove the results

1) µp1-p2= P1-P2

= 2/3-1/2

=1/6

2) (σp1-p2)=p1 (1-p1)/n1 + p2(1-p2)/n2

= (2/3) (1/3)/2 + (1/2) (1/2)/3

= (1/9) + (1/12)

=7/36

Which agrees with the results obtained above?

INFERENTIAL STATISTICS

35

SAMPLING

ASSESSMENT QUESTION

Question 1: A population consists of 4 numbers 3, 7, 11, 15 considering all possible samples

of size n=2 which can be drawn from this population find i) the population mean ii) the

population standard deviation iii) the mean of the sampling distribution of means iv) the

standard deviation of the sampling distribution of means. Verify iii) and IV) directly from i)

and ii) by one of the suitable formulae

1. Compute µx , (σx)2 and σx directly without forming the frequency/ sampling

distribution of means if the sampling is without replacement and thus verify the

results

Question 2: Random samples of size 2 are selected from the finite population consisting

of the numbers 3, 5, 7, 9, 11, 13.

a) Find the mean and standard deviation of this population.

b) List the 15 possible random samples (n = 2) that can be selected from this population and

calculate their means.

c) Use the results of part (b) to construct the sampling distribution of the means of these

samples.

d) Calculate the mean l and variance r ² of the probability distribution in part (c) and compare

them with the results obtained in part (a).

Question 3: A city currently does not have a National Football League team. Fifty-four

percent of all the city’s residents are in favor of attracting an NFL team. A random sample of

1000 of the city’s residents is selected, and asked if they would want an NFL team.

A. What is the probability the percentage of those residents polled who are in favor of

attracting an NFL team is less than 50%?

B. What is the probability the percentage of those residents polled who are in favor of

attracting an NFL team is more than 3% from the actual percentage of 54%?

Question4: Let P1 denote the proportion of even numbers in a random sample of size n1=2

without replacement from a population of size N1=3 consisting of value 4, 6, 9 similarly let

P2 denotes the proportion of even numbers in a random sample of size n2=2 without

replacement from a population size N2=3 consisting of values 2, 3, 5. Find the mean and the

variance of the differences of two proportions and verify the results.

1.µp1-p2=∑ (P1-P2) f (P1-P2)

2. (σp1-p2) = p1 (1-p1)/n1 + p2 (1-p2)/n2

Question5: A population consists of 6 values 1, 3, 5, 7, 9, 11.Take all the possible samples

of size 2 which can be drawn i) with replacement ii) without replacement from this

population. Find the sample means and form a sampling distribution of the mean in case of

with replacement. Compute mean and variance directly in case of without replacement. Find

the means and variances and verify the results

INFERENTIAL STATISTICS

36

SAMPLING

I) E(x) =µ, V(x) =

And for ii) E(x) =µ, V(X) =

Question 6: the weights of 1000 students of a college are normally distributed with a mean

68.5kg and standard deviation 2.7kg.if 200 random samples of 25 student each are obtained

from this population find the expected mean and standard deviation of the sampling

distribution of means if sampling is done i) with replacement, ii) without replacement also

verify the respective results.

Question7: draw all possible random samples of size n1=2 with replacement from a

population 3, 4, 5 similarly draw all possible random samples of size n2=2 with replacement

from another finite population 1,2,3 a) find sample means X1 and X2 and the possible

differences between the sample means of the two populations. B) Form a sampling

distribution of X1-X2 and compute its mean and variance and verify the results of difference

between means.

Question8: A population consists of 7 numbers 1, 1, 2, 3, 4, 5, 6 draw all possible samples of

size n=3 without replacement from this population and find the sample proportion of odd

numbers in the sample. Construct the sampling distribution of proportions and also verify

their respective results.

1) E(p)=µp

2) V (p) = √

√

Objectives

1. When each member of a population has an equally likely chance of being selected, this is

called:

a)

b)

c)

d)

A nonrandom sampling method

A quota sample

A snowball sample

An Equal probability selection method

2. Which of the following techniques yields a simple random sample?

a) Choosing volunteers from an introductory psychology class to participate

b) Listing the individuals by ethnic group and choosing a proportion from within each

ethnic group at random.

INFERENTIAL STATISTICS

37

SAMPLING

c) Numbering all the elements of a sampling frame and then using a random number

table to pick cases from the table.

d) Randomly selecting schools, and then sampling everyone within the school.

3. Which of the following is not true about stratified random sampling?

a) It involves a random selection process from identified subgroups

b) Proportions of groups in the sample must always match their population proportions

c) Disproportional stratified random sampling is especially helpful for getting large

enough subgroup samples when subgroup comparisons are to be done

d) Proportional stratified random sampling yields a representative sample

4. Which of the following statements are true?

a) The larger the sample size, the greater the sampling error

b) The more categories or breakdowns you want to make in your data analysis, the

larger the sample needed

c) The fewer categories or breakdowns you want to make in your data analysis, the

larger the sample needed

d) As sample size decreases, so does the size of the confidence interval

5. Which of the following formulae is used to determine how many people to include in the

original sampling?

a)

b)

c)

d)

Desired sample size/Desired sample size + 1

Proportion likely to respond/desired sample size

Proportion likely to respond/population size

Desired sample size/Proportion likely to respond

6. Which of the following sampling techniques is an equal probability selection method (i.e.,

EPSEM) in which every individual in the population has an equal chance of being selected?

a)

b)

c)

d)

Simple random sampling

Systematic sampling c. Proportional stratified sampling

Cluster sampling using the PPS technique

All of the above are EPSEM

7. Which of the following is not a form of nonrandom sampling?

a) Snowball sampling

INFERENTIAL STATISTICS

38

SAMPLING

b)

c)

d)

e)

Convenience sampling

Quota sampling

Purposive sampling

They are all forms of nonrandom sampling

8. Which of the following will give a more “accurate” representation of the population from

which a sample has been taken?

a)

b)

c)

d)

A large sample based on the convenience sampling technique

A small sample based on simple random sampling

A large sample based on simple random sampling

A small cluster sample

9. Sampling in qualitative research is similar to which type of sampling in quantitative

research?

a)

b)

c)

d)

Simple random sampling

Systematic sampling

Quota sampling

Purposive sampling

10. Which of the following would generally require the largest sample size?

a)

b)

c)

d)

e)

Cluster sampling

Simple random sampling

Systematic sampling

Proportional stratified sampling

Answers:

1. D

6. E

2. C

7. E

3. B

8. C

4. B

9. D

5. D

10. A

INFERENTIAL STATISTICS

39

SAMPLING

1) Choose the pair of symbols that complete the sentence-------------- is a parameter,

whereas-------------- is a statistic.

a) N, µ

b) n, s

c)

d)

2) In which of the following situations would x=

computing x?

√ be the correct formula to use for

a) Sampling from infinite population without replacement

b) Sampling from infinite or finite population without replacement

c) Sampling from finite population without replacement

d) Both b and c but not a

3) suppose that a population has standard deviation 5 what is the standard deviation of

the sampling distribution of the mean of sample size n=25

a) 5

b) 25

c) 1

d) 0.2

4) A border patrol check point stops every 10th passenger van is using

a) Stratified sampling

b) Cluster sampling

c) Systematic sampling

d) Sequential sampling

5) Standard error of the mean is the standard deviation of the

a) Population

b) Statistic

c) Sample

d) Sampling distribution of means

6) If samples of size n are drawn without replacement from a population of size N with

mean (µ) and variance( ), the standard error of the sample mean would be

a)

√

c) (N-n/N-1).

b)

d) (N-n/N-1).

INFERENTIAL STATISTICS

40

SAMPLING

7) in sampling without replacement

a) n<N

b) n>N

c) n≤N

d) n≥N

8) The standard error increases when the sample size is

a) Increased

b) Decreased

c) small

d) Large

9) The number of possible samples drawn by using with replacement as compared to

without replacement would be

a) more

b) Less

c) Equal

d) None

10) The difference between a statistic and a parameter is called

a) Sampling distribution

b) Sampling error

c) Systematic error

d) Non sampling error

11) Which of the following statements best describes the relationship between a parameter

and a statistic?

a)

b)

c)

d)

A parameter has a sampling distribution with the statistic as its mean.

A parameter has a sampling distribution that can be used to determine what

values the statistic is likely to have in repeated samples.

A parameter is used to estimate a statistic.

A statistic is used to estimate a parameter.

12) Sampling distribution of is the

a)

b)

c)

probability distribution of the sample mean

probability distribution of the sample proportion

mean of the sample

INFERENTIAL STATISTICS

41

SAMPLING

a. mean of the population

13) A simple random sample of 100 observations was taken from a large population. The

sample mean and the standard deviation were determined to be 80 and 12 respectively. The

standard error of the mean is

a. 1.20

b. 0.12

c. 8.00

d. 0.80

14) The probability distribution of all possible values of the sample proportion is the

A. probability density function of

B. sampling distribution of

C. same as , since it considers all possible values of the sample proportion

D. sampling distribution of

15) Since the sample size is always smaller than the size of the population, the sample mean

A. must always be smaller than the population mean

B. must be larger than the population mean

C. must be equal to the population mean

D. can be smaller, larger, or equal to the population mean

16) Standard deviation of all possible values is called the

A. standard error of proportion

B. standard error of the mean

C. mean deviation

D. central variation

INFERENTIAL STATISTICS

42

SAMPLING

17) As the sample size becomes larger, the sampling distribution of the sample mean

approaches a

A. binomial distribution

b. Poisson distribution

C. normal distribution

D. chi-square distribution

INFERENTIAL STATISTICS

43

SAMPLING

References:

http://www.southalabama.edu/coe/bset/johnson/dr_johnson/mcq/mc7.pdf

http://labspace.open.ac.uk/mod/oucontent/view.php?id=454418§ion=1.5.2

http://sociology.about.com/od/Q_Index/g/Quota-Sample.htm

http://www.stats.gla.ac.uk/steps/glossary/basic_definitions.html#sampdistn

http://www.investopedia.com/terms/s/sampling.asp

http://www.csulb.edu/~msaintg/ppa696/696sampl.htm#Why sample

http://schatz.sju.edu/methods/sampling/intro.html

https://www.google.com.pk/url?sa=t&rct=j&q=&esrc=s&source=web&cd=1&cad=rja

&ved=0CCcQFjAA&url=http%3A%2F%2Flibguides.usc.edu%2Floader.php%3Ftype

%3Dd%26id%3D675792&ei=OzujUoWnK4_KsgbHyYCwDg&usg=AFQjCNHi8T9Ua

REnJxzUbP_Y72YacNEoaQ&bvm=bv.57752919,d.Y

http://www.vtasq.org/pdf_ppt/program_presentations/Sampling%20Presentation%20

Oct%2026%202011.pdf

http://www.slideshare.net/samanshuaib7/savedfiles?s_title=sampling-ppt-myreport&user_login=mjfababaer

http://www.investopedia.com/terms/n/non-samplingerror.asp

http://www.cliffsnotes.com/math/statistics/sampling/populations-samples-parametersand-statistics

http://stattrek.com/sampling/sampling-distribution.aspx

http://people.uncw.edu/pricej/teaching/statistics/hyp_test.htm

http://stattrek.com/survey-research/compare-sampling-methods.aspx

INFERENTIAL STATISTICS

44

SAMPLING

STATISTICAL INFERENCE: HYPOTHESIS TESTING

Statistical inference:

Statistical inference is the process of drawing conclusions from data

that are subject to random variation, for example, observational

errors or sampling variation.

It is concerned with making predictions or inferences about a

population from observations and analyses of a sample. This means

But keep in mind that

sample should be large

enough to represent the

population or we can say it

should be a representative

part of it.

that by using sample results of a particular population, we can

conclude about whole population and its characteristics.

Hypothesis:

A statistical hypothesis is a claim (assertion, statement, belief or assumption) about an unknown

population parameter value.

For example, an investment company claims that the average return across all its investments is

20 percent and so on. To test such claims sample data are collected and analyzed. On the basis of

sample findings, hypothesized value of population parameter is accepted or rejected.

STEPS IN HYPOTHESIS TESTING

Specification of hypothesis:

The

Null Hypothesis

rejection

hypothesis

leads

of

to

null

the

An assumption to be tested for possible rejection is called null

acceptance of an alternative

hypothesis and is denoted by H0.

hypothesis.

Alternate Hypothesis

Any hypothesis that is different from the null hypothesis and is set up

in parallel to the null hypothesis, is called an alternative hypothesis

and is denoted by H1

INFERENTIAL STATISTICS

45

HYPOTHESIS TESTING

Types of Hypothesis

Directional:

Directional hypothesis are those where one can predict the direction (effect of one variable

on the other as 'Positive' or 'Negative')

For example, Girls perform better than boys (‗better than‘ shows the direction predicted)

One tail test:

A one tailed test looks for an increase or decrease in the parameter. In a one-tailed test, the

critical region will have just one part (the red area below). If our sample value lies in this

region, we reject the null hypothesis in favor of the alternative.

Suppose we are looking for a definite decrease. Then the critical region will be to the left.

Note, however, that in the one-tailed test the value of the parameter can be as high as you

like.

Non - Directional

Non Directional hypothesis are those where one does not predict the kind of effect but can

state a relationship between variable 1 and variable 2.

For example, there will be a difference in the performance of girls & boys (Not defining

what kind of difference)

Two tail test:

A two-tailed test looks for any change in the parameter (which can be any change- increase

or decrease).A two-tailed t-test divides distribution in half, placing half in the each tail. The

INFERENTIAL STATISTICS

46

HYPOTHESIS TESTING

null hypothesis in this case is a particular value, and there are two values for alternative

hypotheses, one positive and one negative. The critical value of t, tcrit, is written with both a

plus and minus sign (±). For example, the critical value of t when there are ten degrees of

freedom (DF=10) and

is set to .05, is tcri = ± 2.228. The sampling distribution model used

in a two-tailed t-test is illustrated below:

Level of significance:

The significance level is

usually denoted by α

The significance level of a statistical hypothesis test is a fixed

Significance Level = P (type

I error) = α

probability of wrongly rejecting the null hypothesis H0, if it is in

fact true. It is the probability of a type I error (explained

Usually, the significance

level is chosen to be 0.05 (or

equivalently, 5%).