Distance Methods - Publicera vid SLU

... seedling no. 1, no. 2, and no. 3 in a population where the individuals are distributed either in a square lattice or at random, are derived in chapter 2. Values of the means, standard deviations and medians of the distributions are also given in this chapter. These values are partly quoted from the ...

Statistics Using R with Biological Examples

... that it is completely free, making it wonderfully accessible to students and researchers. The R software is structured as a base program, which provides the basic functionality and can be extended with smaller, specialized program modules called packages. One of the biggest growth areas in contri ...

Statistical Inference

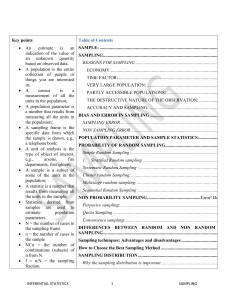

... In statistics, a simple random sample is a subset of individuals (a sample) chosen from a larger set (a population). Each individual is chosen randomly and entirely by chance, such that each individual has the same probability of being chosen at any stage during the sampling process, and each subset ...
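As a minimal sketch of the definition in this excerpt, the Python snippet below draws a simple random sample without replacement using the standard library. The function name `simple_random_sample`, the toy population of 20 labelled individuals and the seed are illustrative assumptions and do not come from the source.

```python
import random


def simple_random_sample(population, n, seed=None):
    """Draw n distinct individuals so that every subset of size n is equally likely.

    Hypothetical helper for illustration; `seed` only makes the draw repeatable.
    """
    rng = random.Random(seed)
    # random.sample selects without replacement, so no individual appears twice.
    return rng.sample(list(population), n)


# Assumed toy population: 20 individuals labelled 1..20.
population = list(range(1, 21))
sample = simple_random_sample(population, 5, seed=0)
print(sample)
```

Sampling without replacement is what gives every individual the same chance of inclusion and every subset of size `n` the same chance of being the sample.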

Survey Sampling

... Thus, the estimator is unbiased. Note that the mathematical definition of bias in (2.4) is not the same thing as the selection or measurement bias described in Chapter 1. All indicate a systematic deviation from the population value, but from different sources. Selection bias is due to the method of ...
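The excerpt does not show which estimator or which sampling design equation (2.4) refers to. As a hedged illustration only, the sketch below assumes the sample mean under simple random sampling without replacement and checks unbiasedness by enumerating every possible sample from a small, made-up population. The population values and the function name `expected_sample_mean` are assumptions, not taken from the source.

```python
from fractions import Fraction
from itertools import combinations


def expected_sample_mean(population, n):
    """Average the sample mean over all equally likely samples of size n."""
    samples = list(combinations(population, n))
    # Exact rational arithmetic avoids floating-point rounding in the comparison.
    means = [Fraction(sum(s), n) for s in samples]
    return sum(means) / len(means)


# Assumed toy population values, chosen only for illustration.
population = [3, 7, 8, 12, 20]
pop_mean = Fraction(sum(population), len(population))
exp_mean = expected_sample_mean(population, 2)

# Mathematical bias: expected value of the estimator minus the population value.
bias = exp_mean - pop_mean
print(bias)
```

This checks only the mathematical sense of bias. Selection or measurement bias arises from how the data are collected, so no calculation on the sampling distribution can detect it.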

Course Notes

... customer confidence (for safety concerns). • In a marketing project, store managers in Aiken, SC want to know which brand of coffee is most liked among the 18-24 year-old population. • In a clinical trial, physicians on a Drug and Safety Monitoring Board want to determine which of two drugs is more ...
