Homework #1 (with paper and pencil)

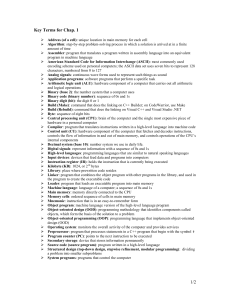

... Algorithm: step-by-step problem-solving process in which a solution is arrived at in a finite amount of time Assembler: program that translates a program written in assembly language into an equivalent program in machine language American Standard Code for Information Interchange (ASCII): most commo ...

Monica Borra 2

... Scalability: DataMPI achieves high scalability as Hadoop and 40% performance improvement. Flexible and the coding complexity of using DataMPI is on par with that of using traditional Hadoop ...

2. Computers: The Machines Behind Computing

... ◦ Increases computer _________________ by helping users share computer resources and performing repetitive tasks for users ...

Appendix

... – built by John Mauchly and J. Presper Eckert at the University of Pennsylvania – 17,480 vacuum tubes, 30 tons, 10’ high, 3’ wide, 100’ long – had to be rewired to change program ...

biological sequence analysis

... Essential Computing for Bio-Informatics Description: This course provides a broad yet intense overview of the discipline of Computer Science at a level suitable for a mature graduate student audience. It first discusses the most important mathematical computing models and uses them to illustrate the ...

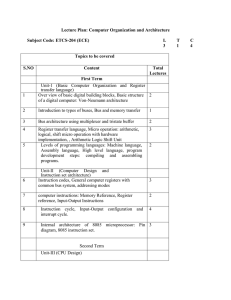

COA -ECE - Lecture plan

... logical, shift micro operation with hardware implementation, Arithmetic Logic Shift Unit. Levels of programming languages: machine language, assembly language, high-level language; program development steps: compiling and assembling programs. ...

F21/1947/2012 ANGELA WAITHERA NABA FEB 116 ASSIGNMENT

... speed of the computers. They were relatively smaller and cheaper than the previous generation. Fourth-generation computers were developed between 1972 and 1984. This period saw the development of large-scale and very-large-scale integrated circuits. There was development of programming languages like functional program ...

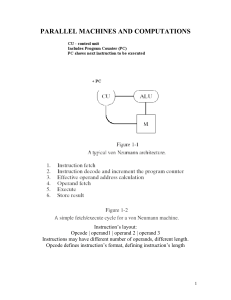

Parallel Machines and Computations (P1)

... SISD computers are the conventional von Neumann machines. In SIMD computers the same operations are performed on disjoint data items, which may be viewed as vectors. For example, a single vector add operation is used to produce a new vector C = A + B, whose elements are the component-wise addition of ...

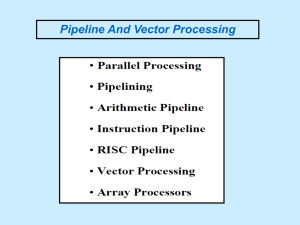

Slide 1

... The purpose of parallel processing is to speed up the computer processing capability and increase its throughput, that is, the amount of processing that can be accomplished during a given interval of time. The amount of hardware increases with parallel processing, and with it, the cost of the system ...

Generations of Computers

... These perform multi-tasking and allow many terminals to be connected to their services. Business, to process large amounts of data. PRICE: between $15,000 and $150,000 ...

Document

... CPU • “Brains” of the computer – Arithmetic calculations are performed using the Arithmetic/Logical Unit or ALU – Control unit decodes and executes instructions – Registers hold information and instructions for CPU to process ...

Intro to computers

... Computer algorithms are written in programming languages (e.g., Java). Such algorithms are precise enough for the computer – they require no judgment. Example: Compute the remainder of 23/7. ...

Computer Architecture

... Objectives of the course This course provides the basic knowledge necessary to • understand the hardware operation of digital computers: • It presents the various digital components used in the organization and design of digital computers. • Introduces the detailed steps that a designer must go thr ...

EECS 252 Graduate Computer Architecture Lec 01

... • Make programming easier by developing higher level programming languages, so that users did not need to use binary machine code instructions – First compilers in late 1950’s, for Fortran and Cobol ...

john von neumann

... arithmetically modified in the same way as data. Thus, data was the same as program. As a result of these techniques and several others, computing and programming became faster, more flexible, and more efficient, with the instructions in subroutines performing far more computational work. Frequently ...

Multicore, parallelism, and multithreading

... Form of computation in which many calculations are carried out simultaneously. Operating ...

CSCI 4550/8556 Computer Networks

... Hardware configurations differ from machine to machine (even with the same Flynn classification) Address spaces of processors vary among different architectures, and depend on memory organization, and should match target application domain. The communication model and language environments should id ...

Introduction to Programming 1

... to be carried out by a computer; an example of software • program execution: act of carrying out the instructions contained in a program • programming language: systematic set of rules used to describe computations in a format that is editable by humans; One is used to specify algorithms ...

Parallel computing

Parallel computing is a form of computation in which many calculations are carried out simultaneously, operating on the principle that large problems can often be divided into smaller ones, which are then solved at the same time. There are several different forms of parallel computing: bit-level, instruction-level, data, and task parallelism. Parallelism has been employed for many years, mainly in high-performance computing, but interest in it has grown lately due to the physical constraints preventing frequency scaling. As power consumption (and consequently heat generation) by computers has become a concern in recent years, parallel computing has become the dominant paradigm in computer architecture, mainly in the form of multi-core processors.

Parallel computing is closely related to concurrent computing. The two are frequently used together, and often conflated, though they are distinct: it is possible to have parallelism without concurrency (such as bit-level parallelism), and concurrency without parallelism (such as multitasking by time-sharing on a single-core CPU). In parallel computing, a computational task is typically broken down into several, often many, very similar subtasks that can be processed independently and whose results are combined afterwards, upon completion. In contrast, in concurrent computing, the various processes often do not address related tasks; when they do, as is typical in distributed computing, the separate tasks may have a varied nature and often require some inter-process communication during execution.

Parallel computers can be roughly classified according to the level at which the hardware supports parallelism, with multi-core and multi-processor computers having multiple processing elements within a single machine, while clusters, MPPs, and grids use multiple computers to work on the same task. Specialized parallel computer architectures are sometimes used alongside traditional processors, for accelerating specific tasks.

In some cases parallelism is transparent to the programmer, such as in bit-level or instruction-level parallelism, but explicitly parallel algorithms, particularly those that use concurrency, are more difficult to write than sequential ones, because concurrency introduces several new classes of potential software bugs, of which race conditions are the most common. Communication and synchronization between the different subtasks are typically some of the greatest obstacles to getting good parallel program performance.

A theoretical upper bound on the speed-up of a single program as a result of parallelization is given by Amdahl's law.
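To make the bound from Amdahl's law concrete, here is a minimal sketch in Python. It assumes the usual statement of the law: if a fraction p of a program's run time can be parallelized and the rest is strictly serial, the speed-up on n processing elements is 1 / ((1 - p) + p / n). The function names `amdahl_speedup` and `amdahl_limit` are illustrative and do not come from the text above.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Speed-up predicted by Amdahl's law.

    parallel_fraction: share of the sequential run time that can be
                       parallelized (between 0.0 and 1.0).
    workers:           number of processing elements (at least 1).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part is unaffected; only the parallel part shrinks.
    return 1.0 / (serial_fraction + parallel_fraction / workers)


def amdahl_limit(parallel_fraction: float) -> float:
    """Upper bound on the speed-up as the number of workers grows without limit."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if parallel_fraction == 1.0:
        return float("inf")
    return 1.0 / (1.0 - parallel_fraction)


if __name__ == "__main__":
    # Illustrative value only: assume 90% of the run time is parallelizable.
    p = 0.9
    for n in (1, 2, 4, 8, 16, 1024):
        print(f"{n:>5} workers -> speed-up {amdahl_speedup(p, n):.2f}")
    print(f"limit for p={p}: {amdahl_limit(p):.2f}")
```

Under that assumed 90% figure, the serial 10% caps the speed-up at 10 regardless of how many processing elements are added, which is why communication and synchronization overheads in the serial portion matter so much in practice.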