MPI Program Structure - Universitas Kuningan

... • Single Instruction Single Data (SISD): Serial Computers • Single Instruction Multiple Data (SIMD) - Vector processors and processor arrays ...

History of Computer Science

... Magnetic tapes and disks for storage Reductions in size and heat generation Increase in processing speed and reliability Increased use of high-level languages ...

Advanced Processor Technologies

... • It should be clear in the language when this is happening • It should be detectable statically • Ideally, it should be possible to check automatically the need for atomic ...

Parallelism - Electrical & Computer Engineering

... physical resources (i.e. hardware) to work on more than one thing at a time There are different types of parallelism that are important for GPU computing: Task parallelism – the ability to execute different tasks within a problem at the same time Data parallelism – the ability to execute parts ...

Chapter 1 Exercises and Answers

... language is a language made up of mnemonic codes that represent machine-language instructions. Programs written in assembly language are translated into machine language programs by a computer program called an assembler. Distinguish between assembly language and high-level languages. Whereas assemb ...

Introduction Of Hw AND Sw - GTU e

... – the extension, (usually 3 letters long), describes the type of program used for that file » doc(Word), xls(Excel), ppt(PowerPoint) ...

Instruction Set Architecture

... – This representation is called “machine language”. There’s a 1-to-1 mapping between these two representations, but this form can be stored in computer memory ...

Chapter 1 – Introduction to Computers and C++ Programming

... Enhance the functionality of Web servers Provide applications for consumer devices (such as cell phones, pagers and personal digital assistants) ...

ANTHONY MAIYO 22nd FEBRUARY 2013 F21/1946/2012

... They will be able to take commands in an audio visual way and carry out instructions. Many of the operations which require low human intelligence will be performed by these computers. Parallel processing is coming and showing the possibility that the power of many CPU’s can be used side by side and ...

powerpoint 1

... Network CPU: (stupid) brain of the computer can do very simple tasks VERY FAST add, write in memory... Goal: Perform elaborate tasks by putting together many simple tasks HOW ? ...

Systems Programming - Purdue University :: Computer Science

... We want to teach the students that computer programs are everywhere and not only in Windows, Linux, and Macintosh computers. The students also program in Robots Phones Embedded ...

Intro to computer programming

... Portable (Can be executed on more than one platforms/ environments) Written in one instruction to carry out several instructions in machine level E.g. discount_price = price – discount; needs a compiler : a system software that translates source program to object program - translates the cod ...

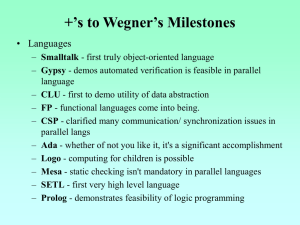

PPT - University of Virginia, Department of Computer Science

... Dijkstra: Threats to Computing Science “It is not only the performing artist who is, in a very real sense, shaped by the instrument he plays; this holds as well for the Reasoning Man…” - p. 8 “The quest for the ideal programming language and ideal man-machine interface that would make the software ...

Languages - Computer Science@IUPUI

... Managing allocation of memory, of processor MS_DOS VMS time, and of other resources for various tasks I/O handling: BIOS vs DOS services (Interrupts) Read/Write data from secondary storage ...

Assembly Programming and Computer Architecture for Software

... - Applied and practical - Platform diverse (Windows, Linux, Mac) and thus assembler diverse (MASM, NASM, GAS) – Flexible assembly programming education. - Discuss intricate differences between assemblers (MASM, NASM, GAS), differences between syntaxes (Intel, AT&T), and differences between OS platfo ...

program

... Main memory is often called RAM (Random Access Memory). Computer programs are loaded into RAM when they are run ...

cs1026_topic1 - Computer Science

... A bit is a binary digit – a 0 or 1 A string of 8 bits are a byte A kilobit is 1000 bits, a megabit is 1,000,000 and so on Computers can only do on or off, and a certain number of these at a time – hence a 64 bit processor a 32 bit processor, etc.. ...

Document

... This is used to store the result of an execution in the buffer until a later stage when it is suitable to write to the correct location. This is particular issue when writing to system memory which is slow to access ...

CS2 (Java) Exam 1 Review - Pennsylvania State University

... Central Processing Unit (CPU) Basic job: handle processing of instructions ...

CPS120 - Washtenaw Community College

... – and the operating system, which decides which programs – to run and when. Separation between Users and Hardware Computer programmers now created programs to ...

CSCE590/822 Data Mining Principles and Applications

... Process manipulates its portion of data to produce its ...

HPCC - Chapter1 - Auburn Engineering

... linking together 2 or more computers to jointly solve some computational problem since the early 1990s, an increasing trend to move away from expensive and specialized proprietary parallel supercomputers towards networks of workstations the rapid improvement in the availability of commodity high per ...

Week 6

... If the Pico-computer memory addresses 23, 50 and 51 contain the numbers 7, 1 and 23 respectively (described in the table below), and the processor has already fetched the LDA (Load Accumulator) instruction, then describe the register transfer operations, including any memory accessing, which will oc ...

Parallel computing

Parallel computing is a form of computation in which many calculations are carried out simultaneously, operating on the principle that large problems can often be divided into smaller ones, which are then solved at the same time. There are several different forms of parallel computing: bit-level, instruction-level, data, and task parallelism. Parallelism has been employed for many years, mainly in high-performance computing, but interest in it has grown lately due to the physical constraints preventing frequency scaling. As power consumption (and consequently heat generation) by computers has become a concern in recent years, parallel computing has become the dominant paradigm in computer architecture, mainly in the form of multi-core processors.

Parallel computing is closely related to concurrent computing. The two are frequently used together and often conflated, but they are distinct: it is possible to have parallelism without concurrency (such as bit-level parallelism), and concurrency without parallelism (such as multitasking by time-sharing on a single-core CPU).

In parallel computing, a computational task is typically broken down into several, often many, very similar subtasks that can be processed independently and whose results are combined upon completion. In concurrent computing, by contrast, the various processes often do not address related tasks; when they do, as is typical in distributed computing, the separate tasks may be varied in nature and often require some inter-process communication during execution.

Parallel computers can be roughly classified according to the level at which the hardware supports parallelism: multi-core and multi-processor computers have multiple processing elements within a single machine, while clusters, MPPs, and grids use multiple computers to work on the same task. Specialized parallel computer architectures are sometimes used alongside traditional processors to accelerate specific tasks.

In some cases parallelism is transparent to the programmer, such as in bit-level or instruction-level parallelism. Explicitly parallel algorithms, particularly those that use concurrency, are more difficult to write than sequential ones, because concurrency introduces several new classes of potential software bugs, of which race conditions are the most common. Communication and synchronization between the different subtasks are typically some of the greatest obstacles to getting good parallel program performance.

A theoretical upper bound on the speed-up of a single program as a result of parallelization is given by Amdahl's law.
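
As a rough illustration of Amdahl's law, the bound is commonly written as S(N) = 1 / ((1 - p) + p / N), where p is the fraction of the runtime that can be parallelized and N is the number of processing elements. The symbols p and N, the function name `amdahl_speedup`, and the 90% figure below are assumptions chosen for this sketch; they do not come from the text above.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speed-up under Amdahl's law.

    parallel_fraction: share of the original runtime that can be
        parallelized (hypothetical parameter name, between 0 and 1).
    workers: number of processing elements sharing the parallel part.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    # The serial part is unaffected by adding more workers.
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    # Assumed example: 90% of the program can run in parallel.
    for n in (1, 2, 4, 16, 256):
        print(f"{n:>4} workers: at most {amdahl_speedup(0.9, n):.2f}x")
```

Under this model the serial fraction dominates as N grows: with p = 0.9 the speed-up can never reach 10x, however many workers are added. The model also ignores the communication and synchronization costs mentioned above, so measured speed-ups are usually lower.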