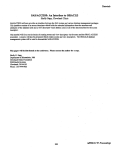

Building a Data Warehouse with SAS® Software in the Unix® Environment

Karen Grippo, Dun & Bradstreet, Basking Ridge, NJ
John Chen, Dun & Bradstreet, Basking Ridge, NJ
Lisa Brown, SAS Institute Inc., Cary, NC

ABSTRACT

A common trend in corporations today is "downsizing": moving applications from expensive mainframes to more affordable mid-range platforms. The Information Center of Dun & Bradstreet Information Systems, faced with the challenge of moving fulfillment off the mainframe, has chosen SAS software not only as the vehicle for its data analysis and delivery but also as its database management system.

Twenty gigabytes, extracted from mainframe legacy databases, have been loaded onto an HP-UX® platform as indexed SAS data files. Two data structures (a table mapping the data files to file systems and a data dictionary mapping data elements to data files) and a set of SAS macros have been developed to give the Information Center developers and business users transparent access to the data, freeing them from the need to know data file or data element locations.

In a future release of the SAS System, SAS Institute plans to support an integrated data dictionary. The Version 6 application presented in this paper had to define its own dictionary component. A post-Version 6 preview of several dictionary features is given at the end of this paper.

THE CHALLENGE

Like many corporations today, Dun & Bradstreet Information Systems is trying to reduce costs by moving applications from the mainframe to more affordable mid-range platforms. The Information Center of the Technology & Business Systems Division was given the challenge of reducing its use of mainframe MIPS by designing and implementing an alternate platform solution.

Historically, the role of the Information Center within the organization has been to provide customized development, analysis and fulfillment to both internal and external customers. The requests for information received are as varied as the data collected by Dun & Bradstreet, and in the course of fulfillment, probably every database and data source has been accessed at one time or another. Since its inception, the group has used the SAS System for its extensive data manipulation, analytical, and reporting capabilities.

THE EVALUATION PROCESS

Due to the diversity of projects and data requirements, some time and analysis were required to identify an application suitable for migration. Once the application and the supporting data elements were chosen, a database management system had to be selected. Several key requirements were identified: (1) The time required to load the database had to be minimized. Since commitments to customers would be at stake, a solution requiring more than one weekend to load the data would not be acceptable, and less than twelve hours was preferable in the event that the data had to be reloaded overnight. (2) The majority of projects involved appending Dun & Bradstreet data to files with fewer than 50,000 records, using the DUNS Number® as the primary key for retrieving data (the DUNS Number is a unique number, like the Social Security number, that is assigned to every company in the database). (3) The time required to implement the database and transition the group from the mainframe to the Unix platform should be as short as possible.

The evaluation process covered not only traditional RDBMSs (Sybase® and Informix®), but also a new product from Red Brick Systems® (a product optimized for data warehousing, querying and decision support), and lastly, the SAS System. The SAS System was chosen for several reasons: (1) The time quoted by Sybase and Informix for the database load was anywhere between 3 days and 1 week. Benchmarks with SAS software indicated that both the download of the raw data and the building and indexing of the SAS data files could be completed within a ten-hour window. (2) No other product offered as comprehensive a set of integrated tools as the SAS System - tools not only for complex data manipulation, but for analysis and presentation as well. Ultimately, SAS software provides tools that we can give to our business users to empower them to meet more of their own information delivery needs. (3) The level of SAS software expertise within the group meant an easier transition to the Unix platform. Less time would be required for implementing the data warehouse and less time would be required to begin fulfillment on the new platform. (4) The portability of SAS code meant that many of the existing mainframe procedures could be ported to the Unix environment with little or no change. Since the SAS language is virtually platform-independent, our developers could be productive immediately, with very little retraining necessary.

THE IMPLEMENTATION

Once the decision had been made to use SAS software as the data management tool, one operating system constraint became a very important issue. In the Unix operating system, the size of any file is restricted to 2 GB. Some DBMS packages, like Sybase, manage their own database space and thus have no limitation on the size of a table. The SAS System, however, does not manage its own space, dictating that the database files had to comply with the 2 GB limit.

One obvious choice, and probably the simplest, for segmenting the data would have been to split the data sequentially by the DUNS Number. However, a different approach was adopted with the following strategy. First, in an effort to minimize the size of the database, some data elements have been separated into smaller datasets, generically called "support" files. These support files contain data elements whose frequency of occurrence in the data is low relative to the amount of space they occupy. For example, the mailing address of a company occupies 65 bytes but is present in only 17% of the cases. By placing the mailing address fields in a separate data file, more than 1 GB of space is saved. In this manner, more than 10 separate support files have been created, saving 4+ GB in space.

Second, the remaining data elements, comprising a 600+ byte extract for over 19 million cases (12 GB), are segmented using five key business indicators which place each company into one of 13 different categories, called "main" segments. An example of a key business indicator is one which determines if a company is out of business or not. The advantage of our scheme is that certain categories of records can automatically be included or excluded, without even reading them, simply by knowing into which category they fall. For projects where we need to query the entire database, we frequently want to eliminate certain types of companies altogether (e.g. those which are out of business). In these circumstances, our database design allows us to avoid processing millions of records, using fewer valuable CPU resources and giving a faster turnaround. Some segments still exceed the 2 GB limit and have to be further subdivided by the DUNS Number. The entire segmentation scheme is illustrated in Figure 1.

Indexing the Database

It has been decided to index the data sets only on the DUNS Number for several reasons. First, the nature of the application being implemented is such that most of the projects involve taking a DUNS-numbered file of customer data and appending Dun & Bradstreet data using the DUNS Number as the primary key. For this type of operation, only an index on the DUNS Number is required. Second, it has been difficult to identify a reasonable number of fields on which to create secondary indexes. The decision-support type projects which required "sweeping" the entire database, looking for records matching certain selection criteria, have had insufficient commonality in terms of data elements used for selection. Creating too many indexes would add significant overhead in terms of space and require additional time to build the database. This might not be offset by gains in turnaround time. Thus, it has been decided to defer the issue of creating additional indexes until further analysis can be done. Since space is not an issue, it has been decided not to compress the datasets, allowing for faster retrieval.

As described above, the "main" database is segmented by business indicators, comprising 13 categories broken into 21 data segments. Retrieving a record using the DUNS Number index could potentially require searching all 21, querying each until a particular DUNS Number is located - not a desirable solution. To resolve this issue, a more efficient two-level indexing scheme has been developed. The first level is a SAS data file, indexed by DUNS Number, containing all 19+ million cases and pointers (in the form of a code) to the main segments in which they are located. At the second level, the main segments themselves are indexed by the DUNS Number. This allows the retrieval of data for any DUNS Number in only two steps: (a) use the first-level index to determine which main segment contains each DUNS Number and then, (b) follow the pointers to the appropriate segments, using the DUNS Number to directly access the correct record in each segment. This indexing scheme is illustrated in Figure 2.

DATA ACCESS METHODOLOGY

The complexity of the database design just described translates to complexity in the SAS code required to retrieve data. As an application developer or business user wishing to retrieve data, you would have to know, for each element you wanted to extract, whether it is in a main segment or a support file, and if it is in a support file, which one. "Main" data elements must be retrieved from multiple files since the data is segmented. To further complicate matters, since a given file cannot exceed 2 GB, data files need to be split into two as they reach the size limit. Developers would have to constantly monitor the state of the database and adjust their code as changes occurred - an undesirable situation and a nightmare for program maintenance.

To address this problem, metadata (that is, data about the data), in the form of two data structures, and a set of SAS macros have been created. This new data access methodology makes the number, type and location of the SAS data files, as well as the location of individual data elements, transparent to the application developer and the business user.

The first data structure is an ASCII file containing the name of each SAS data file in the database, the file system on which it resides, and a code indicating whether it is a main or support file. Any time a data file is added, deleted or moved, a change is made to this table. Certain naming conventions have also been adopted. File system names have to be 8 characters or less so that they can also be used as SAS librefs. The main files are named after the 13 categories, with a number added at the end to indicate how many sub-segments that category contains. For example, if the categories are given as A, B, ..., M then the SAS data files might be named A1, B1, B2, B3, ..., M1, indicating that category A maps to one dataset, B to three, and M to one.

The second data structure is a data dictionary. Created using PROC CONTENTS and some manually entered information, it is a SAS data file containing one record for each variable in the database with its name, type, length, and the segment from which it comes. Additional information, such as the mainframe source of the data element and a long text description of the variable, has been added to help users understand the contents of the database and for the creation of a hardcopy data dictionary for documentation.

A set of SAS macros utilizes these two data structures. The main macro, APND, defines the user's interface to the database. The user simply provides the names of the variables requested (up to a maximum of 100), the name of the input SAS data file containing the DUNS Numbers to which data will be appended, and the name of the SAS data file that will contain the output. The macros do all the work. The variables requested are matched to the data dictionary to determine in which datasets they reside. A PROC SQL step is constructed for each dataset, extracting the appropriate data elements. All the individual files are then combined into one data set and returned to the user. This data retrieval process is illustrated in Figure 3.

The design of this data access methodology embodies object-oriented concepts, even though SAS software is not traditionally considered an object-oriented language. These two data structures, plus the SAS macros which utilize them, encapsulate everything any program needs to know to extract data from the database. Programs become virtually maintenance-free.

A PREVIEW OF THE SAS INTEGRATED DATA DICTIONARY

SAS Institute has recognized the need for its internal, as well as user-written, applications to have a method of storing and retrieving metadata about structures within the SAS System. A post-Version 6 release of the SAS System will provide this functionality in the form of a data dictionary. The goal at SAS Institute is to make the data dictionary extensible enough that it can be useful for internal as well as user-written applications.

The Dun & Bradstreet application presented in this paper has a component which manages metadata and provides some of the features that will be available in the SAS data dictionary. The advantage of using a data dictionary provided by SAS Institute is that it will be fully integrated into the product. Some of the attributes of an integrated data dictionary are:

• The metadata set up for an application like the Dun & Bradstreet application could be recognized by other components of the product (PROC REPORT, for example).
• The validation rules would be enforced throughout the system. If a variable, for example, has a value constraint, then that constraint would be enforced by any application (PROC FSEDIT, PROC APPEND, etc.) which could update the file.

The SAS data dictionary will provide the ability to define metadata for libraries, SAS files and variables, as well as external files. The metadata can be information typically found in the SAS System, such as variable name, variable type and variable format, or it can be information that is not part of the Version 6 metadata. This includes metadata that will be provided by SAS Institute, such as:

• Prose descriptions of libraries, datasets, and variables
• Icon associations for variables
• User-written constraints on variable values
• Drill-down indicator
• Classification status

Metadata can also be extended to include information that is specific to an application. An example of this type of metadata is the segment a variable comes from, as defined in the Dun & Bradstreet application.

Another type of metadata that is important to most applications is information describing how different structures relate to each other. There are many relationships within the SAS System that will be described in the data dictionary, such as the link between a library and its SAS files or a dataset and its variables. There will also be relationships that allow for special behavior within the product, such as a relationship that can propagate changes to metadata among variables. For instance, if the same variable is used in several datasets and there is a desire to keep the format and the label the same for each occurrence of the variable, a relationship could be defined that would automatically propagate any changes to the format or label of one occurrence of the variable to all the others.

As with the other types of metadata described above, applications may extend the power of the SAS Institute data dictionary by defining their own relationships among the metadata. Complex interconnections among the structures in the SAS System can be defined by creating relationships that connect them.

The Dun & Bradstreet application uses several of the features that will be provided by the SAS data dictionary. The benefit they would receive by using the dictionary is that the functionality would be automated as well as integrated into the SAS System.

SUMMARY

The task of building an alternate platform solution is difficult and complex. The SAS System may not be an obvious choice for implementing a data warehouse, but our experience illustrates that it is certainly a viable alternative. The SAS System provides traditional database management features such as indexing, data compression, support for SQL, and the creation of data views. With the SAS Macro Language, you have a powerful facility for building the infrastructure of a data warehouse and for building a sophisticated mechanism for transparent data access.

ACKNOWLEDGMENTS

SAS is a registered trademark of SAS Institute Inc. in the USA and other countries. ® indicates USA registration. Brand and product names are registered trademarks or trademarks of their respective companies.

Figure 1. Data Segmentation. Support files (primary key: DUNS Number; each has a different layout), for example Mailing Address, SIC Codes and Public Records. Main segments (primary key: DUNS Number; each has the same layout; segmented by 5 indicators): Type 1, Type 2, ..., Type 13. A main segment that is still too large must be sub-segmented, for example Type 2 into Type 2a, Type 2b and Type 2c.

Figure 2. Indexing Scheme. The first level maps each DUNS Number to a file pointer (for example Type 2a, Type 13 or Type 1). At the second level, each main segment (Type 1, Type 2a, ..., Type 13) is indexed by DUNS Number.
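The two-level indexing scheme described under "Indexing the Database" can be illustrated with a small sketch. The paper gives no code for it, so what follows is a Python illustration of the idea rather than the authors' SAS implementation; the segment names, DUNS Numbers and record contents are hypothetical sample values, and in-memory dictionaries stand in for indexed SAS data files.

```python
# Illustrative Python sketch of a two-level lookup; not the authors' SAS code.
# All names and sample values below are hypothetical.

# Second level: each main segment is keyed (indexed) by DUNS Number.
segments = {
    "type1": {"000000001": {"name": "Acme Tools"}},
    "type2a": {"000000002": {"name": "Birch Supply"}},
    "type13": {"000000003": {"name": "Cedar Freight"}},
}

# First level: one entry per DUNS Number, pointing at the segment that holds it.
first_level = {
    duns: segment_name
    for segment_name, records in segments.items()
    for duns in records
}


def lookup(duns):
    """Return the record for a DUNS Number in two steps, or None if absent."""
    segment_name = first_level.get(duns)      # step (a): find the segment
    if segment_name is None:
        return None
    return segments[segment_name].get(duns)   # step (b): fetch the record


def lookup_by_scanning(duns):
    """The approach the scheme avoids: probe every segment in turn."""
    probes = 0
    for records in segments.values():
        probes += 1
        if duns in records:
            return records[duns], probes
    return None, probes


print(lookup("000000003"))
print(lookup_by_scanning("000000003"))
```

With the first-level table, the number of probes per DUNS Number stays at two regardless of how many segments exist, whereas the scanning variant may touch every segment before it finds a match.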
Figure 3. Data Appendage Flow. Step 1: the user requests data, for example %apnd(name, mailaddr, sic1). Step 2: the requested data elements are mapped to the data dictionary, which gives a data type for each variable (for example, name maps to main, mailaddr to mail, and sic1 to sics). Step 3: each data type is mapped to its database tables and locations through the file system map. The final step produces the output data set.
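The retrieval flow of Figure 3 can be sketched in a few lines. This is a hedged Python illustration of the general technique (look up each requested variable in a data dictionary, resolve its data type to physical files through a file system map, extract from each file, then combine), not the APND macro itself, which the paper describes as building a PROC SQL step per dataset. The dictionary entries, file system names, data file names and sample rows are all hypothetical.

```python
# Illustrative Python sketch of a dictionary-driven append; not the APND macro.
# Variable names, data types, file-system names and rows are hypothetical.

# Data dictionary: variable -> data type (main segments or a support file).
DATA_DICTIONARY = {
    "name": "main",
    "phone": "main",
    "mailaddr": "mail",
    "sic1": "sics",
}

# File system map: data type -> list of (file system, data file) pairs.
FILE_SYSTEM_MAP = {
    "main": [("fs1", "a1"), ("fs2", "b1"), ("fs2", "b2")],
    "mail": [("fs3", "mail")],
    "sics": [("fs3", "sics")],
}

# Stand-in for the stored data files: (file system, data file) -> rows by DUNS.
DATABASE = {
    ("fs1", "a1"): {"000000001": {"name": "Acme Tools", "phone": "555-0100"}},
    ("fs2", "b1"): {"000000002": {"name": "Birch Supply", "phone": "555-0101"}},
    ("fs2", "b2"): {},
    ("fs3", "mail"): {"000000002": {"mailaddr": "PO Box 7"}},
    ("fs3", "sics"): {"000000001": {"sic1": "5072"}},
}


def plan(variables):
    """Group the requested variables by the data type that holds them."""
    grouped = {}
    for var in variables:
        grouped.setdefault(DATA_DICTIONARY[var], []).append(var)
    return grouped


def append_data(variables, duns_numbers):
    """Return one output row per input DUNS Number with the requested variables."""
    # Start every row with missing values, so unmatched variables stay None.
    output = {
        duns: {"duns": duns, **{var: None for var in variables}}
        for duns in duns_numbers
    }
    for data_type, wanted in plan(variables).items():
        for location in FILE_SYSTEM_MAP[data_type]:   # one extract per data file
            rows = DATABASE[location]
            for duns in duns_numbers:
                if duns in rows:
                    for var in wanted:
                        output[duns][var] = rows[duns].get(var)
    return [output[duns] for duns in duns_numbers]


result = append_data(["name", "mailaddr", "sic1"], ["000000001", "000000002"])
for row in result:
    print(row)
```

In this arrangement the caller names only variables and DUNS Numbers. Moving or splitting a data file would change an entry in the file system map, and adding a variable would change the data dictionary, while calling code stays the same, which is the maintenance benefit the paper attributes to its metadata-driven design.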