Languages and Compilers

(SProg og Oversættere)

Bent Thomsen

Department of Computer Science

Aalborg University

With acknowledgement to Norm Hutchinson whose slides this lecture is based on.

1

Today’s lecture

• Three topics

– Treating Compilers and Interpreters as black-boxes

• Tombstone- or T- diagrams

– A first look inside the black-box

• Your guided tour

– Some Language Design Issues

2

Terminology

Q: Which programming languages play a role in this picture?

input

source program

Translator

is expressed in the

source language

output

object program

is expressed in the

target language

is expressed in the

implementation language

A: All of them!

3

Tombstone Diagrams

What are they?

– diagrams consisting of a set of “puzzle pieces” that we can use to reason about language processors and programs

– different kinds of pieces

– combination rules (not all diagrams are “well formed”)

Program P implemented in L

P

L

Machine implemented in hardware

M

Translator implemented in L

S -> T

L

Language interpreter in L

M

L

4

Tombstone diagrams: Combination rules

P

M

M

P

L

M

OK!

P

S

P

T

S -> T

M

OK!

M OK!

OK!

WRONG!

P

L

WRONG!

S -> T

M
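The combination rules can also be read as simple checks on the languages written on the pieces. The following is a minimal sketch, not part of the original slides: the class `Tombstones` and its methods are hypothetical names chosen for illustration, and languages and machines are just strings.

```java
// Hypothetical model of tombstone pieces; all names here are illustrative assumptions.
class Tombstones {
    /** Program P expressed in some language. */
    static final class Program {
        final String name, language;
        Program(String name, String language) { this.name = name; this.language = language; }
    }

    /** Translator S -> T, itself expressed in implLanguage. */
    static final class Translator {
        final String source, target, implLanguage;
        Translator(String source, String target, String implLanguage) {
            this.source = source; this.target = target; this.implLanguage = implLanguage;
        }
    }

    /** A program can run directly on machine M only if it is expressed in M's machine code. */
    static boolean canRunOn(Program p, String machine) {
        return p.language.equals(machine);
    }

    /** Translates p with t, where t itself is executed on the given machine. */
    static Program translate(Program p, Translator t, String machine) {
        if (!t.implLanguage.equals(machine))
            throw new IllegalArgumentException("translator cannot run on " + machine);
        if (!p.language.equals(t.source))
            throw new IllegalArgumentException("program is not in the translator's source language");
        return new Program(p.name, t.target);
    }

    public static void main(String[] args) {
        Program tetrisC = new Program("Tetris", "C");
        Translator cToX86 = new Translator("C", "x86", "x86");
        Program tetrisX86 = translate(tetrisC, cToX86, "x86");
        System.out.println(tetrisX86.name + " in " + tetrisX86.language
                + ", runs on x86: " + canRunOn(tetrisX86, "x86"));
        System.out.println("C source runs on x86 directly: " + canRunOn(tetrisC, "x86"));
    }
}
```

A cross compiler fits the same model: a `Translator("C", "PPC", "x86")` runs on x86, but its output only passes `canRunOn` for PPC.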

5

Compilation

Example: Compilation of C programs on an x86 machine

Tetris

C

C -> x86

x86

x86

Tetris

x86

Tetris

x86

x86

6

What is Tetris?

Tetris®: The World's Most Popular Video Game. Since its commercial introduction in 1987, Tetris® has been established as the largest selling and most recognized global brand in the history of the interactive game software industry. Simple, entertaining, and yet challenging, Tetris® can be found on more than 60 platforms. Over 65 million Tetris® units have been sold worldwide to date.

7

Cross compilation

Example: A C “cross compiler” from x86 to PPC

A cross compiler is a compiler which runs on one machine (the host

machine) but emits code for another machine (the target machine).

Tetris

C

C -> PPC

x86

x86

Tetris

PPC

download

Tetris

PPC

PPC

Host ≠ Target

Q: Are cross compilers useful? Why would/could we use them?

8

Two Stage Compilation

A two-stage translator is a composition of two translators. The

output of the first translator is provided as input to the second

translator.

Tetris

Tetris

Tetris

Java Java->JVM JVM JVM->x86 x86

x86

x86

x86

x86

9

Compiling a Compiler

Observation: A compiler is a program!

Therefore it can be provided as input to a language processor.

Example: compiling a compiler.

Java->x86

Java->x86

C -> x86

x86

C

x86

x86

10

Interpreters

An interpreter is a language processor implemented in software, i.e.

as a program.

Terminology: abstract (or virtual) machine versus real machine

Example: The Java Virtual Machine

Tetris

JVM

JVM

x86

x86

Q: Why are abstract machines useful?

11

Interpreters

Q: Why are abstract machines useful?

1) Abstract machines provide better platform independence

Tetris

JVM

JVM

x86

x86

Tetris

JVM

JVM

PPC

PPC

12

Interpreters

Q: Why are abstract machines useful?

2) Abstract machines are useful for testing and debugging.

Example: Testing the “Ultima” processor using hardware emulation

P

Ultima

Ultima

x86

x86

P

Ultima

Ultima

Functional equivalence

Note: we don’t have to implement the Ultima emulator in x86 machine code; we can write it in a high-level language and compile it.

13

Interpreters versus Compilers

Q: What are the tradeoffs between compilation and interpretation?

Compilers typically offer more advantages when

– programs are deployed in a production setting

– programs are “repetitive”

– the instructions of the programming language are complex

Interpreters typically are a better choice when

– we are in a development/testing/debugging stage
– programs are run once and then discarded
– the instructions of the language are simple
– the execution speed is overshadowed by other factors
• e.g. on a web server where communication costs dominate execution time

14

Interpretive Compilers

Why?

A tradeoff between fast(er) compilation and a reasonable runtime

performance.

How?

Use an “intermediate language”

• more high-level than machine code => easier to compile to

• more low-level than source language => easy to implement as an

interpreter

Example: A “Java Development Kit” for machine M

Java->JVM

M

JVM

M
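To make the idea of an intermediate language concrete, here is a minimal sketch, not taken from the slides: a tiny "compiler" translates arithmetic expressions into stack code, and a tiny "interpreter" executes that code. The instruction names `PUSH`, `ADD` and `MUL` and the class `InterpretiveCompiler` are assumptions made for this illustration; they are not JVM instructions.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical two-stage setup: source text -> stack code -> result.
class InterpretiveCompiler {
    private final String src;
    private int pos = 0;
    private final List<String> code = new ArrayList<>();

    private InterpretiveCompiler(String src) { this.src = src.replace(" ", ""); }

    /** Stage 1: compile an expression such as "1 + 2 * 3" into intermediate stack code. */
    static List<String> compile(String source) {
        InterpretiveCompiler c = new InterpretiveCompiler(source);
        c.expr();
        return c.code;
    }

    // expr ::= term ('+' term)*
    private void expr() {
        term();
        while (pos < src.length() && src.charAt(pos) == '+') { pos++; term(); code.add("ADD"); }
    }

    // term ::= number ('*' number)*
    private void term() {
        number();
        while (pos < src.length() && src.charAt(pos) == '*') { pos++; number(); code.add("MUL"); }
    }

    private void number() {
        int start = pos;
        while (pos < src.length() && Character.isDigit(src.charAt(pos))) pos++;
        if (start == pos) throw new IllegalArgumentException("number expected at position " + pos);
        code.add("PUSH " + src.substring(start, pos));
    }

    /** Stage 2: interpret the intermediate code on a small stack machine. */
    static int interpret(List<String> program) {
        Deque<Integer> stack = new ArrayDeque<>();
        for (String instr : program) {
            if (instr.startsWith("PUSH ")) {
                stack.push(Integer.parseInt(instr.substring(5)));
            } else {
                int right = stack.pop(), left = stack.pop();
                stack.push(instr.equals("ADD") ? left + right : left * right);
            }
        }
        return stack.pop();
    }

    public static void main(String[] args) {
        List<String> code = compile("1 + 2 * 3");
        System.out.println(code);            // [PUSH 1, PUSH 2, PUSH 3, MUL, ADD]
        System.out.println(interpret(code)); // 7
    }
}
```

The stack code is easy to generate (one instruction per operand or operator) and easy to interpret (one loop with a stack), which is the tradeoff the slide describes.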

15

Interpretive Compilers

Example: Here is how we use our “Java Development Kit” to run a

Java program P

P

Java

javac

P

Java->JVM JVM

M

M

java

P

JVM

JVM

M

M

16

Portable Compilers

Example: Two different “Java Development Kits”

Kit 1:

Java->JVM

M

JVM

M

Java->JVM

JVM

JVM

M

Kit 2:

Q: Which one is “more portable”?

17

Portable Compilers

In the previous example we have seen that portability is

not an “all or nothing” kind of deal.

It is useful to talk about a “degree of portability” as the

percentage of code that needs to be re-written when

moving to a dissimilar machine.

In practice 100% portability is as good as impossible.

18

Example: a “portable” compiler kit

Portable Compiler Kit:

Java->JVM

Java

Java->JVM

JVM

JVM

Java

Q: Suppose we want to run this kit on some machine M. How could

we go about realizing that goal? (with the least amount of effort)

19

Example: a “portable” compiler kit

Java->JVM

Java

Java->JVM

JVM

JVM

Java

Q: Suppose we want to run this kit on some machine M. How could

we go about realizing that goal? (with the least amount of effort)

JVM

Java

reimplement

JVM

C

C->M

M

M

JVM

M

20

Example: a “portable” compiler kit

This is what we have now:

Java->JVM

Java

Java->JVM

JVM

JVM

Java

JVM

M

Now, how do we run our Tetris program?

Tetris

Tetris

Java Java->JVM JVM

JVM

JVM

M

M

Tetris

JVM

JVM

M

M

21

Bootstrapping

Remember our “portable compiler kit”:

Java->JVM

Java

Java->JVM

JVM

JVM

Java

JVM

M

We haven’t used this yet!

Java->JVM

Java

Same language!

Q: What can we do with a compiler written in

itself? Is that useful at all?

22

Bootstrapping

Java->JVM

Java

Same language!

Q: What can we do with a compiler written in

itself? Is that useful at all?

• By implementing the compiler in (a subset of) its own language, we

become less dependent on the target platform => more portable

implementation.

• But… “chicken and egg problem”? How do we get around that?

=> BOOTSTRAPPING: requires some work to make the first “egg”.

There are many possible variations on how to bootstrap a compiler

written in its own language.

23

Bootstrapping an Interpretive Compiler to

Generate M code

Our “portable compiler kit”:

Java->JVM

Java

Java->JVM

JVM

JVM

Java

JVM

M

Goal: we want to get a “completely native” Java compiler on machine M

P

P

Java->M

Java

M

M

M

24

Bootstrapping an Interpretive Compiler to

Generate M code (first approach)

Step 1: implement

Java ->M

Java

by rewriting Java ->JVM

Java

Step 2: compile it

Java->M

Java ->M

Java Java->JVM JVM

JVM

JVM

M

M

Step 3: Use this to compile again

25

Bootstrapping an Interpretive Compiler to

Generate M code (first approach)

Step 3: “Self compile” the Java->M (in Java) compiler

Java->M

Java->M

Java->M

Java

M

JVM

JVM

M

M

This is our desired

compiler!

Step 4: use this to compile the P program

P

Java

Java->M

M

P

M
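The steps of the first approach can be replayed as a small calculation on tombstones. This is a sketch under stated assumptions, not the lecture's own code: `BootstrapDemo`, `Translator` and `compile` are hypothetical names, and "runnable on M" is modelled as a set containing M code and JVM code (the latter via the JVM interpreter from the kit).

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

// Hypothetical replay of the bootstrapping steps; names are illustrative assumptions.
class BootstrapDemo {
    /** Translator tombstone: source -> target, expressed in impl. */
    static final class Translator {
        final String source, target, impl;
        Translator(String source, String target, String impl) {
            this.source = source; this.target = target; this.impl = impl;
        }
        @Override public String toString() { return source + "->" + target + " in " + impl; }
    }

    /** What can execute on machine M: M code directly, JVM code via the JVM interpreter in M. */
    static final Set<String> RUNNABLE = new HashSet<>(Arrays.asList("M", "JVM"));

    /** Compiles the translator `subject` with `compiler`; the result is expressed in compiler.target. */
    static Translator compile(Translator subject, Translator compiler) {
        if (!RUNNABLE.contains(compiler.impl))
            throw new IllegalStateException("cannot run " + compiler);
        if (!subject.impl.equals(compiler.source))
            throw new IllegalArgumentException(compiler + " cannot read " + subject);
        return new Translator(subject.source, subject.target, compiler.target);
    }

    public static void main(String[] args) {
        Translator javac = new Translator("Java", "JVM", "JVM");   // from the portable kit
        Translator javaToM = new Translator("Java", "M", "Java");  // step 1: written by hand
        Translator step2 = compile(javaToM, javac);                // step 2
        Translator step3 = compile(javaToM, step2);                // step 3: self compile
        System.out.println(step2); // Java->M in JVM
        System.out.println(step3); // Java->M in M
    }
}
```

The same source text `javaToM` is compiled twice; only the compiler used changes, which is why the second result is expressed in M code.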

26

Bootstrapping an Interpretive Compiler to

Generate M code (second approach)

Idea: we will build a two-stage Java -> M compiler.

P

Java

P

Java->JVM JVM

M

M

M

We will make this by

compiling

Java->JVM

JVM

JVM->M

M

M

P

M

To get this we implement

JVM->M

Java

and compile it

27

Bootstrapping an Interpretive Compiler to

Generate M code (second approach)

Step 1: implement

JVM->M

Java

Step 2: compile it

JVM->M

JVM->M

Java Java->JVM JVM

JVM

JVM

M

M

Step 3: compile this

28

Bootstrapping an Interpretive Compiler to

Generate M code (second approach)

Step 3: “Self compile” the JVM->M (in JVM) compiler

JVM->M

JVM->M

JVM JVM->M

M

JVM

JVM

M

M

This is the second

stage of our

compiler!

Step 4: use this to compile the Java compiler

29

Bootstrapping an Interpretive Compiler to

Generate M code

Step 4: Compile the Java->JVM compiler into machine code

Java->JVM

Java->JVM

JVM JVM->M

M

M

M

The first stage of

our compiler!

We are DONE!

P

Java

P

Java->JVM JVM

M

M

M

JVM->M

P

M

M

M

30

Full Bootstrap

A full bootstrap is necessary when we are building a new compiler

from scratch.

Example:

We want to implement an Ada compiler for machine M. We don’t

currently have access to any Ada compiler (not on M, nor on any

other machine).

Idea: Ada is very large, so we will implement the compiler in a subset of Ada (Ada-S) and bootstrap it from an Ada-S compiler written in another language (e.g. C).

v1

Step 1: build a compiler for Ada-S

Ada-S ->M

in another language

C

31

Full Bootstrap

Step 1a: build a compiler (v1) for Ada-S in another language.

v1

Ada-S ->M

C

Step 1b: Compile v1 compiler on M

v1

v1

Ada-S ->M

Ada-S->M

C->M

C

M

M

This compiler can be used for

M

bootstrapping on machine M but we

do not want to rely on it permanently!

32

Full Bootstrap

Step 2a: Implement v2 of Ada-S compiler in Ada-S

v2

Ada-S ->M

Q: Is it hard to rewrite the compiler in Ada-S?

Ada-S

Step 2b: Compile v2 compiler with v1 compiler

v2

v2

v1 Ada-S->M

Ada-S ->M

M

Ada-S Ada-S ->M

M

We are now no longer dependent

M

on the availability of a C compiler!

33

Full Bootstrap

Step 3a: Build a full Ada compiler in Ada-S

v3

Ada->M

Ada-S

Step 3b: Compile with v2 compiler

v3

v3

v2

Ada->M

Ada->M

M

Ada-S Ada-S ->M

M

M

From this point on we can maintain the compiler in Ada.

We write subsequent versions v4, v5, ... of the compiler in Ada and compile each with the previous version.

34

Half Bootstrap

We discussed full bootstrap which is required when we have no

access to a compiler for our language at all.

Q: What if we have access to a compiler for our language on a different machine HM but want to develop one for TM?

We have:

Ada->HM

HM

We want:

Ada->HM

Ada

Ada->TM

TM

Idea: We can use cross compilation from HM to TM to bootstrap

the TM compiler.

35

Half Bootstrap

Idea: We can use cross compilation from HM to TM to bootstrap the TM compiler.

Step 1: Implement Ada->TM compiler in Ada

Ada->TM

Ada

Step 2: Compile on HM

Ada->TM

Ada->TM

Ada Ada->HM HM

HM

HM

Cross compiler:

running on HM but

emits TM code

36

Half Bootstrap

Step 3: Cross compile our TM compiler.

Ada->TM

Ada

Ada->TM

Ada->TM

HM

HM

DONE!

TM

From now on we can develop subsequent versions of the compiler

completely on TM

37

Bootstrapping to Improve Efficiency

The efficiency of programs and compilers:

Efficiency of programs:

- memory usage

- runtime

Efficiency of compilers:

- Efficiency of the compiler itself

- Efficiency of the emitted code

Idea: We start from a simple compiler (generating inefficient code) and develop a more sophisticated version of it. We can then use bootstrapping to improve the performance of the compiler.

38

Bootstrapping to Improve Efficiency

We have:

Step 1

Ada->Mslow

Ada

Ada-> Mslow

Mslow

We implement:

Ada->Mfast

Ada

Ada->Mfast

Ada->Mfast

Ada Ada-> Mslow Mslow

Mslow

M

Step 2

Ada->Mfast

Ada->Mfast

Ada Ada-> Mfast Mfast

Mslow

Fast compiler that

emits fast code!

M

39

Conclusion

• To write a good compiler you may be writing several simpler ones first
• You have to think about the source language, the target language and the implementation language.
• Strategies for implementing a compiler
1. Write it in machine code
2. Write it in a lower level language and compile it using an existing compiler
3. Write it in the same language that it compiles and bootstrap
• The work of a compiler writer is never finished, there is always version 1.x and version 2.0 and …

40

Compilation

So far we have treated language processors (including

compilers) as “black boxes”

Now we take a first look “inside the box”: how compilers are built.

And we take a look at the different “phases” and their

relationships

41

The “Phases” of a Compiler

Source Program

Syntax Analysis

Error Reports

Abstract Syntax Tree

Contextual Analysis

Error Reports

Decorated Abstract Syntax Tree

Code Generation

Object Code

42

Different Phases of a Compiler

The different phases can be seen as different

transformation steps to transform source code into

object code.

The different phases correspond roughly to the different

parts of the language specification:

• Syntax analysis <-> Syntax

• Contextual analysis <-> Contextual constraints

• Code generation <-> Semantics

43

Example Program

We now look at each of the three different phases in a little

more detail. We look at each of the steps in transforming

an example Triangle program into TAM code.

! This program is useless except for

! illustration

let var n: integer;

var c: char

in begin

c := '&';

n := n+1

end

44

1) Syntax Analysis

Source Program

Syntax Analysis

Error Reports

Abstract Syntax Tree

Note: Not all compilers construct an explicit representation of an AST (e.g. a “single pass compiler” generally has no need to construct one).

45

1) Syntax Analysis -> AST

Program

LetCommand

SequentialCommand

SequentialDeclaration

AssignCommand

AssignCommand

VarDecl

VarDecl

SimpleT

Ident

n

Ident

Integer

Char.Expr

BinaryExpr

VNameExp Int.Expr

SimpleT SimpleV

Ident

Ident

c

Char

SimpleV

Ident Char.Lit Ident

c

‘&’

n

Ident Op Int.Lit

n

+

1

46

2) Contextual Analysis -> Decorated AST

Abstract Syntax Tree

Contextual Analysis

Error Reports

Decorated Abstract Syntax Tree

Contextual analysis:

• Scope checking: verify that all applied occurrences of

identifiers are declared

• Type checking: verify that all operations in the program are

used according to their type rules.

Annotate AST:

• Applied identifier occurrences => declaration

• Expressions => Type
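As a rough illustration of the two checks, the sketch below keeps a table of declarations and reports scope and type errors for a few assignments. It is a simplification made up for this purpose: `MiniChecker` and its methods are hypothetical, types are plain strings, and no AST is built or decorated.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical miniature checker; not the Triangle Checker class.
class MiniChecker {
    /** Declared variables: name -> type ("int" or "char"). */
    private final Map<String, String> declarations = new HashMap<>();
    final List<String> errors = new ArrayList<>();

    void declare(String name, String type) { declarations.put(name, type); }

    /** Scope check: an applied occurrence must have a declaration. Returns its type, or null. */
    String typeOfVariable(String name) {
        String type = declarations.get(name);
        if (type == null) errors.add("SCOPE ERROR: undeclared variable " + name);
        return type;
    }

    /** Type of "left + right": both operands must be int. */
    String typeOfAdd(String leftType, String rightType) {
        if ("int".equals(leftType) && "int".equals(rightType)) return "int";
        if (leftType != null && rightType != null) errors.add("TYPE ERROR: + expects int operands");
        return null;
    }

    /** Type check for "name := expression", given the type already inferred for the expression. */
    void checkAssign(String name, String exprType) {
        String varType = typeOfVariable(name);
        if (varType != null && exprType != null && !varType.equals(exprType))
            errors.add("TYPE ERROR: incompatible types in assignment to " + name);
    }

    public static void main(String[] args) {
        MiniChecker checker = new MiniChecker();
        checker.declare("n", "int");
        checker.declare("c", "char");
        checker.checkAssign("c", "char");                                                // c := '&'
        checker.checkAssign("n", checker.typeOfAdd(checker.typeOfVariable("n"), "int")); // n := n+1
        checker.checkAssign("c", "int");                                                 // type error
        checker.checkAssign("foo", "int");                                               // scope error
        checker.errors.forEach(System.out::println);
    }
}
```

A real contextual analyzer would store the inferred type and the link to the declaration in the AST nodes instead of passing strings around.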

47

2) Contextual Analysis -> Decorated AST

Program

LetCommand

SequentialCommand

SequentialDeclaration

VarDecl

Ident

n Integer

AssignCommand

BinaryExpr :int

Char.Expr

VNameExp Int.Expr

VarDecl

SimpleT

Ident

AssignCommand

:char

:int

SimpleT SimpleV

SimpleV

:char

Ident

Ident

c Char

:int

Ident Char.Lit Ident

c

‘&’

:int

n

Ident Op Int.Lit

n

+ 1

48

Contextual Analysis

Finds scope and type errors.

Example 1:

AssignCommand ***TYPE ERROR (incompatible types in

:int

assigncommand)

:char

Example 2:

foo not found

SimpleV ***SCOPE ERROR: undeclared variable foo

Ident

foo

49

3) Code Generation

Decorated Abstract Syntax Tree

Code Generation

Object Code

• Assumes that program has been thoroughly

checked and is well formed (scope & type rules)

• Takes into account semantics of the source

language as well as the target language.

• Transforms source program into target code.

50

3) Code Generation

let var n: integer;

var c: char

in begin

c := '&';

n := n+1

end

VarDecl address = 0[SB]

PUSH 2

LOADL 38

STORE 1[SB]

LOAD 0[SB]

LOADL 1

CALL add

STORE 0[SB]

POP 2

HALT

SimpleT

Ident

Ident

n Integer
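The translation of the example can be sketched as follows. This is only an illustration in the simplified instruction notation of the slide: `MiniEncoder` and its methods are hypothetical, each variable is assumed to occupy one word addressed relative to SB in declaration order, and only the two statement forms of the example are supported.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical miniature code generator; not the Triangle Encoder class.
class MiniEncoder {
    private final Map<String, Integer> addresses = new LinkedHashMap<>(); // variable -> offset from SB
    private final List<String> body = new ArrayList<>();

    /** Allocates one word per declared variable, in declaration order. */
    void declare(String name) { addresses.put(name, addresses.size()); }

    private String address(String name) { return addresses.get(name) + "[SB]"; }

    /** var := 'literal' */
    void assignCharLiteral(String var, char literal) {
        body.add("LOADL " + (int) literal);   // character code of the literal
        body.add("STORE " + address(var));
    }

    /** var := var + amount */
    void assignIncrement(String var, int amount) {
        body.add("LOAD " + address(var));
        body.add("LOADL " + amount);
        body.add("CALL add");
        body.add("STORE " + address(var));
    }

    /** Wraps the statement code in allocation and deallocation of the variables. */
    List<String> program() {
        List<String> all = new ArrayList<>();
        all.add("PUSH " + addresses.size());
        all.addAll(body);
        all.add("POP " + addresses.size());
        all.add("HALT");
        return all;
    }

    public static void main(String[] args) {
        MiniEncoder encoder = new MiniEncoder();
        encoder.declare("n");                 // address 0[SB]
        encoder.declare("c");                 // address 1[SB]
        encoder.assignCharLiteral("c", '&');  // c := '&'
        encoder.assignIncrement("n", 1);      // n := n+1
        encoder.program().forEach(System.out::println);
    }
}
```

The addresses come from the declarations, which is why the code generator relies on the decorated AST rather than on the source text.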

51

Compiler Passes

• A pass is a complete traversal of the source program, or

a complete traversal of some internal representation of

the source program.

• A pass can correspond to a “phase” but it does not have

to!

• Sometimes a single “pass” corresponds to several phases

that are interleaved in time.

• What and how many passes a compiler does over the

source program is an important design decision.

52

Single Pass Compiler

A single pass compiler makes a single pass over the source text,

parsing, analyzing and generating code all at once.

Dependency diagram of a typical Single Pass Compiler:

Compiler Driver

calls

Syntactic Analyzer

calls

Contextual Analyzer

calls

Code Generator

53

Multi Pass Compiler

A multi pass compiler makes several passes over the program. The

output of a preceding phase is stored in a data structure and used by

subsequent phases.

Dependency diagram of a typical Multi Pass Compiler:

Compiler Driver

calls

calls

calls

Syntactic Analyzer

Contextual Analyzer

Code Generator

input

output input

output input

output

Source Text

AST

Decorated AST

Object Code

54

Example: The Triangle Compiler Driver

public class Compiler {

    public static void compileProgram(...) {
        Parser parser = new Parser(...);
        Checker checker = new Checker(...);
        Encoder generator = new Encoder(...);

        Program theAST = parser.parse();   // syntax analysis: source text -> AST
        checker.check(theAST);             // contextual analysis: decorates the AST
        generator.encode(theAST);          // code generation: decorated AST -> object code
    }

    public static void main(String[] args) {
        ... compileProgram(...) ...
    }
}

55

Compiler Design Issues

                        Single Pass                  Multi Pass
Speed                   better                       worse
Memory                  better for large programs    (potentially) better for small programs
Modularity              worse                        better
Flexibility             worse                        better
“Global” optimization   impossible                   possible
Source Language         single pass compilers are not possible for many programming languages

56

Language Issues

Example Pascal:

Pascal was explicitly designed to be easy to implement

with a single pass compiler:

– Every identifier must be declared before its first use.

?

var n:integer;

procedure inc;

begin

n:=n+1

end

procedure inc;

begin

n:=n+1

end; { Undeclared variable: n is declared only after inc }

var n:integer;

57

Language Issues

Example Pascal:

– Every identifier must be declared before it is used.

– How to handle mutual recursion then?

procedure ping(x:integer);

begin

... pong(x-1); ...

end;

procedure pong(x:integer);

begin

... ping(x); ...

end;

58

Language Issues

Example Pascal:

– Every identifier must be declared before it is used.

– How to handle mutual recursion then?

procedure pong(x:integer); forward;

procedure ping(x:integer);

begin

... pong(x-1); ...

end;

OK!

procedure pong(x:integer);

begin

... ping(x); ...

end;

59

Language Issues

Example Java:

– identifiers can be used before they are declared.

– thus a Java compiler needs at least two passes

class Example {

void inc() { n = n + 1; }

int n;

void use() { n = 0 ; inc(); }

}
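The difference between the two rules can be sketched with a toy model of the class above. This is an assumption-laden illustration, not how javac works internally: `TwoPassResolver` and `Member` are hypothetical, and a member is reduced to the name it declares plus the identifiers its body uses.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical toy model of declaration-before-use versus two-pass resolution.
class TwoPassResolver {
    /** One class member: the name it declares and the identifiers its body uses. */
    static final class Member {
        final String declares;
        final List<String> uses;
        Member(String declares, String... uses) {
            this.declares = declares;
            this.uses = Arrays.asList(uses);
        }
    }

    /** Pascal-style rule: a use is legal only if the declaration came earlier in the text. */
    static List<String> undeclaredInOnePass(List<Member> members) {
        Set<String> seen = new HashSet<>();
        List<String> undeclared = new ArrayList<>();
        for (Member m : members) {
            seen.add(m.declares);
            for (String use : m.uses)
                if (!seen.contains(use)) undeclared.add(use);
        }
        return undeclared;
    }

    /** Java-style rule: pass 1 collects every declaration, pass 2 checks the uses. */
    static List<String> undeclaredInTwoPasses(List<Member> members) {
        Set<String> declared = new HashSet<>();
        for (Member m : members) declared.add(m.declares);
        List<String> undeclared = new ArrayList<>();
        for (Member m : members)
            for (String use : m.uses)
                if (!declared.contains(use)) undeclared.add(use);
        return undeclared;
    }

    /** The members of class Example, in textual order: inc uses n, then n, then use. */
    static List<Member> example() {
        return Arrays.asList(
                new Member("inc", "n"),
                new Member("n"),
                new Member("use", "n", "inc"));
    }

    public static void main(String[] args) {
        System.out.println(undeclaredInOnePass(example()));   // [n]
        System.out.println(undeclaredInTwoPasses(example())); // []
    }
}
```

With a single pass, the use of `n` inside `inc` is seen before its declaration; collecting declarations first removes the problem at the cost of a second traversal.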

60

Scope of Variable

• The range of the program that can reference that variable (i.e. access the corresponding data object by the variable’s name)
• A variable is local to a program unit or block if it is declared there
• A variable is nonlocal to a program unit if it is visible there but not declared there

61

Static vs. Dynamic Scope

• Under static, sometimes

called lexical, scope, sub1

will always reference the x

defined in big

• Under dynamic scope, the x

it references depends on the

dynamic state of execution

procedure big;

var x: integer;

procedure sub1;

begin {sub1}

... x ...

end; {sub1}

procedure sub2;

var x: integer;

begin {sub2}

...

sub1;

...

end; {sub2}

begin {big}

...

sub1;

sub2;

...

end; {big}

62

Static Scoping

• Scope computed at compile time, based on program text

• To resolve a variable name used in the program, we must find the statement declaring that variable

• Subprograms and blocks generate hierarchy of scopes

– Subprogram or block that declares current subprogram or

contains current block is its static parent

• General procedure to find declaration:

– First see if variable is local; if yes, done

– If non-local to current subprogram or block recursively search

static parent until declaration is found

– If no declaration is found this way, undeclared variable error

detected
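The search procedure can be sketched as a walk along static parents. The class `StaticScope` below is a hypothetical illustration, and the example reuses the `big`, `sub1` and `sub2` procedures from the "Static vs. Dynamic Scope" slide.

```java
import java.util.HashSet;
import java.util.Set;

// Hypothetical model of a scope hierarchy; names are illustrative assumptions.
class StaticScope {
    final String name;
    final StaticScope staticParent;   // declaring subprogram or enclosing block; null for the outermost unit
    private final Set<String> locals = new HashSet<>();

    StaticScope(String name, StaticScope staticParent) {
        this.name = name;
        this.staticParent = staticParent;
    }

    StaticScope declare(String variable) {
        locals.add(variable);
        return this;
    }

    /** Returns the scope that declares the variable, searching outwards through static parents. */
    StaticScope resolve(String variable) {
        for (StaticScope s = this; s != null; s = s.staticParent)
            if (s.locals.contains(variable)) return s;
        throw new IllegalStateException("undeclared variable " + variable);
    }

    public static void main(String[] args) {
        StaticScope big = new StaticScope("big", null).declare("x");
        StaticScope sub1 = new StaticScope("sub1", big);
        StaticScope sub2 = new StaticScope("sub2", big).declare("x");
        System.out.println(sub1.resolve("x").name); // big
        System.out.println(sub2.resolve("x").name); // sub2
    }
}
```

The result depends only on the nesting of the program text, so a compiler can compute it without running the program.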

63

Example

program main;

var x : integer;

procedure sub1;

var x : integer;

begin { sub1 }

…x…

end; { sub1 }

begin { main }

…x…

end; { main }

64

Dynamic Scope

• Now generally thought to have been a mistake

• Main example of use: original versions of LISP

– Scheme uses static scope

– Perl allows variables to be declared to have dynamic scope

• Determined by the calling sequence of program units,

not static layout

• Name bound to corresponding variable most recently

declared among still active subprograms and blocks

65

Example

program main;

var x : integer;

procedure sub1;

begin { sub1 }

…x…

end; { sub1 }

procedure sub2;

var x : integer;

begin { sub2 }

… call sub1 …

end; { sub2 }

begin { main }

… call sub2…

end; { main }
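Under dynamic scope the lookup follows the chain of active calls instead of the program text. The sketch below is a hypothetical illustration (`DynamicScope` and `Frame` are made-up names) that replays the example: when `sub1` is called from `sub2`, its `x` is the one declared in `sub2`; when it is called directly from `main`, it is the one declared in `main`.

```java
import java.util.ArrayDeque;
import java.util.Arrays;
import java.util.Deque;
import java.util.HashSet;
import java.util.Set;

// Hypothetical model of dynamic scoping via a stack of active program units.
class DynamicScope {
    /** One active program unit and the variables it declares. */
    static final class Frame {
        final String unit;
        final Set<String> declared;
        Frame(String unit, Set<String> declared) { this.unit = unit; this.declared = declared; }
    }

    private final Deque<Frame> callStack = new ArrayDeque<>();

    void enter(String unit, String... declaredVariables) {
        callStack.push(new Frame(unit, new HashSet<>(Arrays.asList(declaredVariables))));
    }

    void exit() { callStack.pop(); }

    /** Unit whose declaration of the variable is the most recent among the still active units. */
    String resolve(String variable) {
        for (Frame f : callStack)               // iterates from the most recently entered unit
            if (f.declared.contains(variable)) return f.unit;
        throw new IllegalStateException("undeclared variable " + variable);
    }

    public static void main(String[] args) {
        DynamicScope run = new DynamicScope();
        run.enter("main", "x");
        run.enter("sub2", "x");
        run.enter("sub1");                      // sub1 called from sub2
        System.out.println(run.resolve("x"));   // sub2
        run.exit();
        run.exit();
        run.enter("sub1");                      // sub1 called directly from main
        System.out.println(run.resolve("x"));   // main
    }
}
```

The same reference to `x` in `sub1` resolves differently depending on the caller, which is what makes dynamically scoped programs hard to reason about from the text alone.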

66

Binding

• Binding: an association between an attribute and its

entity

• Binding Time: when does it happen?

• … and, when can it happen?

67

Binding of Data Objects and Variables

• Attributes of data objects and variables have different

binding times

• If a binding is made before run time and remains fixed

through execution, it is called static

• If the binding first occurs or can change during

execution, it is called dynamic
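Java itself offers a compact illustration of the two kinds of binding, here applied to method calls rather than to data objects. The classes `Shape` and `Circle` and the `describe` methods below are hypothetical; the behaviour shown (overloads chosen at compile time from the static type, overridden methods chosen at run time from the object) is standard Java.

```java
// Hypothetical example classes; only the binding behaviour is the point.
class BindingDemo {
    static class Shape {
        String name() { return "shape"; }
    }

    static class Circle extends Shape {
        @Override String name() { return "circle"; }
    }

    // Two overloads: the compiler picks one from the declared (static) type of the argument.
    static String describe(Shape s)  { return "describe(Shape)"; }
    static String describe(Circle c) { return "describe(Circle)"; }

    public static void main(String[] args) {
        Shape s = new Circle();           // static type Shape, dynamic type Circle
        System.out.println(describe(s));  // describe(Shape): bound at compile time
        System.out.println(s.name());     // circle: bound at run time
    }
}
```

The first binding is fixed before execution and cannot change; the second is made during execution and depends on the object that `s` refers to at that moment.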

68

Binding Time

Static
• Language definition time
• Language implementation time
• Program writing time
• Compile time
• Link time
• Load time

Dynamic
• Run time
– At the start of execution (program)
– On entry to a subprogram or block
– When the expression is evaluated
– When the data is accessed

69

X = X + 10

• Set of types for variable X
• Type of variable X
• Set of possible values for variable X
• Value of variable X
• Scope of X
– lexical or dynamic scope
• Representation of constant 10
– Value (10)
– Value representation (1010₂)
• big-endian vs. little-endian
– Type (int)
– Storage (4 bytes)
• stack or global allocation
• Properties of the operator +
– Overloaded or not

70

Little- vs. Big-Endians

• Big-endian

– A computer architecture in which, within a given multi-byte numeric

representation, the most significant byte has the lowest address (the word is stored

`big-end-first').

– Motorola and Sun processors

• Little-endian

– a computer architecture in which, within a given 16- or 32-bit word, bytes at lower

addresses have lower significance (the word is stored `little-end-first').

– Intel processors

from The Jargon Dictionary - http://info.astrian.net/jargon
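The two byte orders can be observed with the standard `java.nio` classes. In this small sketch the class `EndianDemo` and its `encode` helper are made-up names; `ByteBuffer` and `ByteOrder` are part of the Java standard library.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.util.Arrays;

// Shows the byte layout of a 4-byte int under both byte orders.
class EndianDemo {
    /** Encodes a 32-bit int into 4 bytes, lowest address first, using the given byte order. */
    static byte[] encode(int value, ByteOrder order) {
        return ByteBuffer.allocate(4).order(order).putInt(value).array();
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(encode(10, ByteOrder.BIG_ENDIAN)));    // [0, 0, 0, 10]
        System.out.println(Arrays.toString(encode(10, ByteOrder.LITTLE_ENDIAN))); // [10, 0, 0, 0]
    }
}
```

In the big-endian layout the most significant byte (0) sits at the lowest address; in the little-endian layout the least significant byte (10) does.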

71

Binding Times summary

• Language definition time:

– language syntax and semantics, scope discipline

• Language implementation time:

– interpreter versus compiler,

– aspects left flexible in definition,

– set of available libraries

• Compile time:

– some initial data layout, internal data structures

• Link time (load time):

– binding of values to identifiers across program modules

• Run time (execution time):

– actual values assigned to non-constant identifiers

The Programming language designer and compiler implementer

have to make decisions about binding times

72

Syntax Design Criteria

• Readability

– syntactic differences reflect

semantic differences

– verbose, redundant

• Writeability

– concise

• Ease of translation

– simple language

– simple semantics

• Lack of ambiguity

– dangling else

– Fortran’s A(I,J)

• Ease of verifiability

– simple semantics

73

Lexical Elements

• Character set
• Identifiers
• Operators
• Keywords
• Noise words
• Elementary data
– numbers
• integers
• floating point
– strings
– symbols
• Delimiters
• Comments
• Blank space
• Layout
– Free- and fixed-field formats

74

Some nitty gritty decisions

• Primitive data

– Integers, floating points, bit strings

– Machine dependent or independent (standards like IEEE)

– Boxed or unboxed

• Character set

– ASCII, EBCDIC, UNICODE

• Identifiers

– Length, special start symbol (#,$...), type encoded in start letter

• Operator symbols

– Infix, prefix, postfix, precedence

• Comments

– REM, /* …*/, //, !, …

• Blanks

• Delimiters and brackets

• Reserved words or Keywords

75

Syntactic Elements

• Definitions
• Declarations
• Expressions
• Statements
• Separate subprogram definitions (Module system)
• Separate data definitions
• Nested subprogram definitions
• Separate interface definitions

76

Overall Program Structure

• Subprograms

– shallow definitions

• C

– nested definitions

• Pascal

• Data (OO)

– shallow definitions

• C++, Java, Smalltalk

• Separate Interface

– C, Fortran

– ML, Ada

• Mixed data and programs

– C

– Basic

• Others

– Cobol

• Data description separated

from executable statements

• Data and procedure division

77

Some more Programming Language Design Issues

• A Programming model (sometimes called the computer)

is defined by the language semantics

– More about this in the semantics course

• Programming model given by the underlying system

– Hardware platform and operating system

• The mapping between these two programming models

(or computers) that the language processing system must

define can be influenced in both directions

– E.g. low level features in high level languages

• Pointers, arrays, for-loops

– Hardware support for fast procedure calls

78

Programming Language Implementation

• Develop layers of machines, each more primitive than

the previous

• Translate between successive layers

• End at basic layer

• Ultimately hardware machine at bottom

• To design programming languages and compilers, we

thus need to understand a bit about computers ;-)

79

Why So Many Computers?

• It is economically feasible to produce in hardware (or

firmware) only relatively simple computers

• More complex or abstract computers are built in

software

• There are exceptions

– EDS machine to run prolog (or rather WAM)

– Alice Machine to run Hope

80

Machines

• Hardware computer: built out of wires, gates, circuit boards, etc.
– An elaboration of the Von Neumann Machine
• Software simulated computer: one that is implemented in software, which runs on top of another computer

Von Neumann Machine
• Data
• Primitive Operations
• Sequence Control
• Data Access
• Storage Management
• Operating Environment

81

Memory and data

• Memory

– Registers

• PC, data or address

– Main memory (fixed length words 32 or 64 bits)

– Cache

– External

• Disc, CD-ROM, memory stick, tape drives

– Order of magnitude in access speed

• Nanoseconds vs. milliseconds

• Built-in data types

– integers, floating point, fixed length strings, fixed length bit

strings

82

Hardware computer

• Operations

– Arithmetic on primitive data
– Tests (test for zero, positive or negative)
– Primitive access and modification
– Jumps (unconditional, conditional, return)

• Sequence control

– Next instruction in PC (location counter)

– Some instructions modify PC

• Data access

– Reading and writing

– Words from main memory, Blocks from external storage

• Storage management

– Wait for data or multi-programming

– Paging

– Cache (32K usually gives 95% hit rate)

83

Virtual Computers

• How can we execute programs written in the high-level

computer, given that all we have is the low-level

computer?

– Compilation

• Translate instructions of the high-level computer into those of the low-level computer

– Simulation (interpretation)

• create a virtual machine

– Sometimes the simulation is done by hardware

• This is called firmware

84

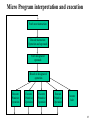

Micro Program interpretation and execution

Fetch next instruction
Decode instruction (operation and operands)
Fetch designated operands
Branch to designated operation
Execute primitive operation (one branch per primitive operation) or execute halt

85

A Six-Level Computer

Level 5: Application Level (Applications)
– Compilers, Editors, Navigators
Level 4: Assembly Language Level
– Assembler, Linker, Loader
Level 3: Operating System Machine Level
– Operating System
Level 2: Instruction Set Architecture Level
– Microprogram or hardware
Level 1: Microarchitecture Level
– Hardware
Level 0: Digital Logic Level

The figure groups the upper levels as Software and the lower levels as Hardware.

from Andrew S. Tanenbaum, Structured Computer Organization, 4th Edition, Prentice Hall, 1999.

86

Keep in mind

There are many issues influencing the design of a new programming language:
– Choice of paradigm
– Syntactic preferences
– Even the compiler implementation
• e.g. number of passes
• available tools
There are many issues influencing the design of a new compiler:
– Number of passes
– The source, target and implementation language
– Available tools

87

Some advice from an expert

• Programming languages are for people
• Design for yourself and your friends
• Give the programmer as much control as possible
• Aim for brevity

88