Tuning IBM System x Servers for Performance

... 6.3.5 IBM CPU passthru card; 6.4 64-bit computing; 6.5 Processor performance ...

Dept. of CSE, BUAA

... Robustness is the child of transparency and simplicity. Design for simplicity; add complexity only where you must. Design for transparency; spend effort early to save effort later. In interface design, obey the Rule of Least Surprise. Programmer time is expensive; conserve it in preference to m ...

Windows Embedded CE 6.0 MCTS Exam Preparation Kit

... represents the current view of Microsoft Corporation on the issues discussed as of the date of publication. Because Microsoft must respond to changing market conditions, it should not be interpreted to be a commitment on the part of Microsoft, and Microsoft cannot guarantee the accuracy of any infor ...

Chapter 3

... processing the request and generating the response are both handled by a single servlet class ...

Threads: User vs. Kernel Threads, Java Threads

... Question: What will the output be? Answer: It is impossible to tell for sure. If you know the implementation of the JVM on your particular machine, you may be able to tell, but if you write this code to run anywhere, you can't expect to know what happens ...
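The same nondeterminism shows up outside the JVM. The following is a minimal sketch in C with POSIX threads (an analogue, not the Java code the snippet refers to): two threads print a line and record when they finish, and the order depends entirely on the scheduler. The names `worker` and `run_two_threads` are hypothetical.

```c
#include <pthread.h>
#include <stdio.h>

/* Records the order in which the threads finished; guarded by order_lock. */
static int finish_order[2];
static int finish_count = 0;
static pthread_mutex_t order_lock = PTHREAD_MUTEX_INITIALIZER;

static void *worker(void *arg) {
    int id = *(const int *)arg;
    /* Which line appears first is up to the scheduler, not the program. */
    printf("thread %d running\n", id);
    pthread_mutex_lock(&order_lock);
    finish_order[finish_count++] = id;
    pthread_mutex_unlock(&order_lock);
    return NULL;
}

/* Starts two threads, joins every thread that was created, and copies the
   observed finishing order into out. Returns 0 on success, -1 on failure. */
int run_two_threads(int out[2]) {
    pthread_t t[2];
    int ids[2] = {0, 1};
    int created = 0;
    finish_count = 0;
    for (int i = 0; i < 2; i++) {
        if (pthread_create(&t[i], NULL, worker, &ids[i]) != 0)
            break;
        created++;
    }
    /* Join before returning: the threads read ids[], which lives on this stack frame. */
    for (int i = 0; i < created; i++)
        pthread_join(t[i], NULL);
    if (created != 2)
        return -1;
    out[0] = finish_order[0];
    out[1] = finish_order[1];
    return 0;
}
```

Running this repeatedly may yield either `0, 1` or `1, 0`; the only portable guarantee is that both threads finish before `pthread_join` returns.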

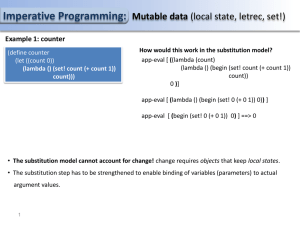

PS14

... Imperative Programming Evaluator: Adding while expressions. Question 1: Can a while expression be supported in a functional programming evaluator? No! In functional programming there is no change of state. In particular, the result of evaluating the condition clause of a while loop will always remain ...
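The argument hinges on state: a while loop can only terminate if evaluating its body changes something the condition reads. A minimal sketch of an imperative evaluator in C makes this concrete. The tiny expression language (`NUM`, `VAR`, `ADD`, `LESS`, `SET`, `SEQ`, `WHILE`) and the fixed-size mutable `Env` are hypothetical choices for illustration, not the evaluator from the original problem set.

```c
#include <stdlib.h>

typedef enum { NUM, VAR, ADD, LESS, SET, SEQ, WHILE } Kind;

typedef struct Expr {
    Kind kind;
    int value;            /* NUM: the literal; VAR/SET: the variable's slot index */
    struct Expr *a, *b;   /* operands; for WHILE, a = condition and b = body */
} Expr;

/* Mutable environment: SET overwrites a slot in place. */
typedef struct { int slots[8]; } Env;

/* Hypothetical constructor; nodes are never freed in this sketch. */
Expr *mk(Kind kind, int value, Expr *a, Expr *b) {
    Expr *e = malloc(sizeof *e);
    if (!e) abort();
    e->kind = kind; e->value = value; e->a = a; e->b = b;
    return e;
}

int eval(const Expr *e, Env *env) {
    switch (e->kind) {
    case NUM:  return e->value;
    case VAR:  return env->slots[e->value];
    case ADD:  return eval(e->a, env) + eval(e->b, env);
    case LESS: return eval(e->a, env) < eval(e->b, env);
    case SET:  return env->slots[e->value] = eval(e->a, env);  /* state change */
    case SEQ:  eval(e->a, env); return eval(e->b, env);
    case WHILE:
        /* The condition is re-evaluated each iteration; its value can change
           only because the body mutated env. Without SET, the loop would
           either never run or never stop. */
        while (eval(e->a, env))
            eval(e->b, env);
        return 0;
    }
    return 0;
}
```

For example, `i := 0; s := 0; while (i < 5) { s := s + i; i := i + 1 }` leaves `s` at 10 and `i` at 5, and it only terminates because `SET` updates `i` in the shared environment.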

Clustered Objects - Computer Science

... Gamsa observes that, prior to addressing specific data structures and algorithms of the operating system in the small, a more fundamental restructuring of the operating system can reduce the impact of sharing induced by the workload by minimizing sharing in the operating system structure [49]. Gamsa ...
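One common way to minimize sharing in an operating-system structure is to give each processor its own copy of a frequently updated object. The following is a hand-rolled C11 sketch of that idea, a per-processor replicated counter. It is not Gamsa's Clustered Objects implementation. `NCPUS`, the 64-byte `CACHE_LINE`, and the `cc_*` functions are hypothetical, and the caller is assumed to pass its current processor number.

```c
#include <stdatomic.h>

#define NCPUS 4         /* hypothetical processor count */
#define CACHE_LINE 64   /* assumed cache-line size in bytes */

/* One copy per processor, aligned so that increments from different
   processors never write to the same cache line (no false sharing). */
typedef struct {
    _Alignas(CACHE_LINE) atomic_long count;
} Rep;

typedef struct {
    Rep reps[NCPUS];
} ClusteredCounter;

void cc_init(ClusteredCounter *c) {
    for (int i = 0; i < NCPUS; i++)
        atomic_init(&c->reps[i].count, 0);
}

/* Common, fast path: touch only the calling processor's local copy. */
void cc_inc(ClusteredCounter *c, unsigned cpu) {
    atomic_fetch_add_explicit(&c->reps[cpu % NCPUS].count, 1, memory_order_relaxed);
}

/* Rare, slow path: a global read visits every copy and sums them. */
long cc_read(ClusteredCounter *c) {
    long sum = 0;
    for (int i = 0; i < NCPUS; i++)
        sum += atomic_load_explicit(&c->reps[i].count, memory_order_relaxed);
    return sum;
}
```

The design trades a more expensive read for increments that never contend across processors. This only pays off when updates vastly outnumber reads, which is the kind of workload-driven trade-off the snippet describes.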

Socket Programming

... listen is non-blocking: it returns immediately. int s = accept(sock, &name, &namelen); where s (integer) is the new socket, used for data transfer; sock (integer) is the original socket, the one being listened on; name (struct sockaddr) is the address of the active participant ...
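A minimal POSIX sketch of the passive side shows the distinction: `listen` only marks the socket as passive and returns at once, while `accept` blocks until a client arrives and then hands back a new descriptor. The helper names `open_listener` and `accept_client` are hypothetical. The sketch assumes IPv4 on the loopback address and lets the kernel pick the port when `port` is 0.

```c
#include <arpa/inet.h>
#include <netinet/in.h>
#include <string.h>
#include <sys/socket.h>
#include <unistd.h>

/* Creates a TCP socket bound to 127.0.0.1:port and marks it passive.
   listen() does not wait for clients; it returns immediately.
   Returns the listening descriptor, or -1 on error. */
int open_listener(unsigned short port, unsigned short *bound_port) {
    int sock = socket(AF_INET, SOCK_STREAM, 0);
    if (sock < 0)
        return -1;
    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_LOOPBACK);
    addr.sin_port = htons(port);
    if (bind(sock, (struct sockaddr *)&addr, sizeof addr) < 0 || listen(sock, 5) < 0) {
        close(sock);
        return -1;
    }
    /* Report the actual port, useful when port 0 asked the kernel to choose. */
    socklen_t len = sizeof addr;
    if (bound_port && getsockname(sock, (struct sockaddr *)&addr, &len) == 0)
        *bound_port = ntohs(addr.sin_port);
    return sock;
}

/* Blocks until a client connects. Returns a NEW socket for data transfer;
   `sock` stays open and keeps listening. `name` receives the client address. */
int accept_client(int sock, struct sockaddr_in *name) {
    socklen_t namelen = sizeof *name;
    return accept(sock, (struct sockaddr *)name, &namelen);
}
```

A typical server loops on `accept_client`, serving each returned descriptor and closing it, while the listening socket stays open for the next client.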