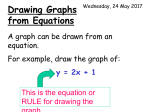

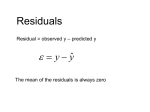

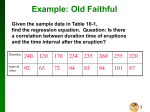

Inference for Regression
• Today we will talk about the conditions necessary to make valid inferences with regression.
• We will also discuss the various types of inference that can be made with regression.
• All of this will be discussed in the context of simple linear regression.

Conditions for Valid Inference
If these conditions hold:
• Independence
• Normal errors
• Errors have mean zero
• Errors have equal SD
Then we can:
• Find CI’s for the slope and intercept
• Find a CI for the mean of y at a given x
• Find a prediction interval (PI) for an individual y at a given x

Checking the Conditions
• After performing regression, we can check the conditions for valid inference.
• Independence – good sampling and experimental techniques give independence.
• Mean zero errors – verify using a residual plot.
• Errors with constant standard deviation (homoscedasticity) – verify with a residual plot.
• Normal errors – verify with a normal quantile plot of the residuals.

Residual Plot
• Residuals are sample estimates of the errors (in y) made by the line.
• Residual = observed y – predicted y
• Predicted y’s are determined by the equation of the least squares line.
• A residual plot is a scatter plot of the residuals vs. x.

Examining Residual Plots
• A residual plot indicates that the conditions of homoscedastic, mean-zero errors hold if the points appear randomly scattered around zero with a constant spread in the y direction.
• There should not be an obvious pattern to the residuals, nor should they “fan out”.
• Fanning out indicates nonconstant variability, called heteroscedasticity.

Residual Normal Quantile Plot
• A normal quantile plot of the residuals should be examined to check for nonnormal errors.
• Plots that indicate skewness or heavy tails show that regression inference using the normal distribution or the t-distribution will not be valid.

What about independence?
• Independence means that the (x, y) measurements do not depend on one another.
• A simple random sample will achieve independence.
• Common cases without independence: the same individual measured twice, or related persons included in the sample.
• You are responsible for ensuring independence by planning your study well.
• If achieving independence is impossible, a professional statistician can help you perform valid inference using more advanced techniques.

Examples of Unmet Conditions
• Trying to fit a straight line to the yield data is an obvious mistake because the data show curvature.
• This shows up on the residual plot as curvature NOT CENTERED AT ZERO.

Examples of Unmet Conditions
• This data set has unequal spread, so the errors have unequal standard deviation.
• This shows up on the residual plot as a “horn” or “fanning out” pattern.
• If residuals are not centered at zero or exhibit curvature - STOP - normality is inconsequential; seek the help of a professional statistician.

Back to Hand-Span Example
• We fit a line to the data.
• Check conditions:
– Independence: used different people in a simple random sample - OK
– Errors have mean zero: checked residual plot - OK
– Errors have equal SD: checked residual plot - OK
– Errors have light tails - OK
• Since the conditions hold we can:
– Form CI’s for the population slope and intercept
– Form CI’s for the mean span of all individuals of a given height
– Form a prediction interval for the hand-span of an individual of a given height

Complete Regression Output

      Source |       SS       df       MS              Number of obs =      12
    ---------+------------------------------           F(  1,    10) =   33.21
       Model |   44.703722     1   44.703722           Prob > F      =  0.0002
    Residual |  13.4629447    10  1.34629447           R-squared     =  0.7685
    ---------+------------------------------           Adj R-squared =  0.7454
       Total |  58.1666667    11  5.28787879           Root MSE      =  1.1603

    ------------------------------------------------------------------------------
      Spancm |      Coef.   Std. Err.       t     P>|t|       [95% Conf. Interval]
    ---------+--------------------------------------------------------------------
    Heightin |   .5148221   .0893419     5.762   0.000       .3157559    .7138884
       _cons |  -14.01285   6.114214    -2.292   0.045      -27.63616   -.3895273
    ------------------------------------------------------------------------------

• Top right: R² = .7685 (adjusted R² = .7454), RMSE = 1.1603
• Bottom part: the Heightin row corresponds to the slope, the _cons row to the intercept
• Coef. column tells us Span (cm) = -14.01 + .51 Height (in)
• t and P>|t| test H0: b1 (slope) = 0 and H0: b0 (intercept) = 0, giving the t statistic and p-value
• 95% Conf. Interval gives the 95% confidence interval for the slope and intercept
• 95% confident that the population slope is in (.32, .71)
• 95% confident that the population intercept is in (-27.64, -.39)

Inference on the Slope
• Why test H0: b1 (slope) = 0?
• If the population slope is zero, then the line is useless - knowing x gives me no additional information about y.
• Example: If Span (cm) = .51 + 0 Height, then knowing height tells me nothing about span; all I know is that the population mean of hand spans is approximately .51 cm.

Inference on the Intercept
• Why test H0: b0 (intercept) = 0?
• This depends on the situation. Note that the estimate for y when x = 0 is the intercept.
• y = b0 + b1 (0) = b0
• Example: Hand span for a person with height 0 is -14.01 cm
• What? Regression is valid only within the range of observed data. We didn’t observe anyone with height 0. The line fits the observed region - it may or may not fit other regions.

Confidence Interval for the Mean
• At each value of x, we have a population of values for y. We would like to form a confidence interval for the mean of y for this population.
• Example: Estimate the mean hand-span of persons of height 70.5 inches
• Center of the interval will come from the line
• Span = -14.01 + .5148 (70.5) = 22.28 cm
• Endpoints of the interval can be calculated; however, we will use a plot to estimate them.

Prediction Interval for an Individual
• Suppose I have an individual of height 70.5 inches. Can I form an interval that I can be 95% confident will include the hand span of this individual?
• This is called a prediction interval.
• By necessity, it is wider than a CI for the mean - predicting an individual is more challenging.
• Center at the line, endpoints estimated with a plot

Confidence and Prediction Intervals
• CI for mean hand-span at height = 70.5 looks to be approx (21, 23)
• Widens as we approach the edge of the data - less certain at edges
• PI for hand-span of an individual of height 70.5 inches looks to be approx (19, 25)
• PI wider than CI
• PI includes most or all data

Crab Meat Example
• Easy to get total weight - really want weight of meat
• Want to predict meat weight based on total weight
• Scatterplot (total, meat) shows linearity
• Fitting a straight line looks like an appropriate thing to do

Crab Meat Conditions
• Residual plot looks evenly spread and centered at zero
• Residuals are normal (or light tailed)
• Independence - took a simple random sample of crabs in the tank farm - never measured the same crab twice
• Conditions for inference are met

Crab Meat Output

      Source |       SS       df       MS              Number of obs =      12
    ---------+------------------------------           F(  1,    10) =   30.52
       Model |  104104.859     1  104104.859           Prob > F      =  0.0003
    Residual |  34114.0577    10  3411.40577           R-squared     =  0.7532
    ---------+------------------------------           Adj R-squared =  0.7285
       Total |  138218.917    11  12565.3561           Root MSE      =  58.407

    ------------------------------------------------------------------------------
      Meatmg |      Coef.   Std. Err.       t     P>|t|       [95% Conf. Interval]
    ---------+--------------------------------------------------------------------
    Totalwtg |   14.88321   2.694187     5.524   0.000       8.880184    20.88623
       _cons |   12.71896   36.47862     0.349   0.735      -68.56048     93.9984
    ------------------------------------------------------------------------------

• R² = .7532 (total weight explains about 75% of the variability in meat weight); adjusted R² = .7285
• RMSE = 58.407 (estimated standard deviation of the errors)
• Equation: Meat weight (mg) = 12.72 + 14.88 Total Weight (g)
• Reject null of zero slope, 95% CI for slope (8.88, 20.89)
• Fail to reject null of zero intercept (good!), 95% CI (-68, 94)

Crab Meat CI’s and PI’s
• Confidence intervals for mean of meat weight given total weight
• Prediction intervals for an individual crab’s meat weight given total weight
• For Total weight = 10, mean is in (100, 200) and individual in (0, 300)

Review and Preview
• We’re trying to find mathematical relationships between variables.
• Start with scatterplots - if linear, correlation gives a good measure of the nature and strength of the relationship.
• Simple linear regression finds the equation of the least squares line.
• Under certain conditions, an array of inferences can be performed.

Review and Preview
• Next time, we’ll discuss other types of regression.
• Conditions for inference and nature of inferences will be the same.

Using StataQuest: Handspan Example
Getting a Scatterplot
• Open the data set heightspan.dta
• Go to Graphs: Scatterplots: Plot Y vs. X
• Y=Spancm, X=Heightin
• Click OK
Finding the correlation
• Go to Statistics: Correlation: Pearson (regular)
• Choose Spancm and Heightin, click OK
• r=.8767, p-val=.0002 for H0: r=0

StataQuest: Handspan Example
Performing Regression
• Go to Statistics: Simple Regression
• Dependent=Spancm
• Independent=Heightin
• Click OK.
Checking Conditions
• We use these plots:
– Plot fitted model
– Plot residual vs. an X, choose Heightin
– Normal Quantile plot of residuals
• For PI’s and CI’s choose Plot fitted model
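
The intervals and t statistics in the hand-span output can be checked by hand. The sketch below is illustrative only and is not part of StataQuest. It recomputes the 95% CI's for the slope and intercept as estimate ± t* × standard error, and the fitted span at height 70.5 inches, from the coefficients and standard errors reported in the output. The function names are made up for this example. The critical value t* = 2.228139 for 10 degrees of freedom is taken from a standard t table, not computed.

```python
# Values copied from the hand-span regression output.
N_OBS = 12
SLOPE, SLOPE_SE = 0.5148221, 0.0893419
INTERCEPT, INTERCEPT_SE = -14.01285, 6.114214

# Assumption: two-sided 95% critical value of the t-distribution with
# n - 2 = 10 degrees of freedom, read from a standard t table.
T_CRIT_DF10 = 2.228139


def t_interval(estimate, std_err, t_crit):
    """Return (lower, upper) for estimate +/- t_crit * std_err."""
    margin = t_crit * std_err
    return estimate - margin, estimate + margin


def t_statistic(estimate, std_err):
    """t statistic for H0: the population coefficient equals zero."""
    return estimate / std_err


def predicted_span(height_in):
    """Point on the fitted line; the center of both the CI and the PI."""
    return INTERCEPT + SLOPE * height_in


slope_ci = t_interval(SLOPE, SLOPE_SE, T_CRIT_DF10)
intercept_ci = t_interval(INTERCEPT, INTERCEPT_SE, T_CRIT_DF10)

print("degrees of freedom:", N_OBS - 2)
print("slope t statistic:", round(t_statistic(SLOPE, SLOPE_SE), 3))
print("intercept t statistic:", round(t_statistic(INTERCEPT, INTERCEPT_SE), 3))
print("95% CI for slope:", tuple(round(v, 4) for v in slope_ci))
print("95% CI for intercept:", tuple(round(v, 4) for v in intercept_ci))
print("fitted span at 70.5 in:", round(predicted_span(70.5), 2))
```

The printed values should agree with the t and [95% Conf. Interval] columns of the output up to rounding. The endpoints of the CI for the mean and of the PI at a given height need additional quantities (the mean and spread of the observed heights) that the output does not report directly, which is why the slides read them from the fitted-model plot.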