* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Parametric Statistics

Degrees of freedom (statistics) wikipedia , lookup

Inductive probability wikipedia , lookup

Bootstrapping (statistics) wikipedia , lookup

Taylor's law wikipedia , lookup

Foundations of statistics wikipedia , lookup

Confidence interval wikipedia , lookup

History of statistics wikipedia , lookup

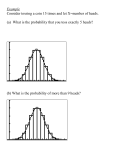

Parametric Statistics

Descriptive statistics
Hypothesis testing

Definitions
• Population: the entire set about which information is needed; Greek letters (μ, σ) are used for its parameters
• Sample: the subset actually studied; ideally a random sample
• Parameter: a numerical characteristic of a population
• Inferential statistics
• Discrete and continuous variables
• Distribution: the pattern of variation of a variable
• One-sided probability: a comparison of two data sets in one direction (i.e., a > b); two-sided probability: a ≠ b

Detection Limit
• Action limit: 2s; 97.7% certain that the observed signal is not random noise.
• Detection limit: 3s; 93.3% certain to detect a signal above the 2s limit when the analyte is at this concentration.
• Quantitation limit: 10s; the signal required for 10% RSD.
• Type I error: identifying random noise as signal.
• Type II error: failing to identify a signal that is present.

Numerical Descriptive Statistics
Types of numerical summary statistics:
• Measures of location
  – Measures of center
  – Other measures
• Measures of variability

Probability Density Function
• Probability density function, $f(x)$: the probability of obtaining result $x$ for the variable in your experiment (the relative frequency, for discrete measurements)
• The total $\sum_i f(x_i) = 1$: the sum of all relative frequencies is 1
• Distribution function: the probability that $x$ is less than or equal to $x_i$:
  – $F(x_i) = P(x \le x_i) = \sum_{j:\, x_j \le x_i} f(x_j)$

Discrete Data PDFs
• Binomial distribution: $f(x) = \frac{n!}{x!\,(n-x)!}\, p^x (1-p)^{n-x}$
• The probability of getting the result of interest (a “success”) $x$ times out of $n$ trials, if the probability of that result in any one trial is $p$
• Note that here $x$ is a discrete variable: integer values only

Uses of the Binomial Distribution
• Quality assurance
• Genetics
• Experimental design

Binomial PDF: Example
• n = 6; number of dice rolled
• p = 1/6; probability of rolling a 2 on any die
• x = [0 1 2 3 4 5 6]; possible results, the number of 2s out of 6
[Figure: graph of f(x) versus x for rolling a “2”]

Binomial PDF: Example 2
• n = 8; number of puppies in a litter
• p = 1/2; probability of any pup being male
• x = [0 1 2 3 4 5 6 7 8]; the number of males out of 8
[Figure: graph of f(x) versus x]

Binomial PDF: Characteristics
• Shape is determined by the values of n and p (the parameters of the distribution function)
  – Symmetric only if p = 0.5
  – Approaches Poisson’s distribution if n is very large and p is very small
  – Approaches the normal distribution if n is large and p is not small
• Mean number of “successes” (the expectation value): $\langle x \rangle = np$
• Variance: $np(1-p)$

Poisson Distribution
• Can be derived as a limiting form of the binomial distribution
  – when $n \to \infty$ while the mean $\lambda = np$ remains constant
  – i.e., a large number of trials, each with very small $p$
• Can be derived directly from basic assumptions
• The assumptions determine the real situations where Poisson’s distribution is useful
Siméon D. Poisson (1781-1840)

Poisson’s Assumptions
• The study counts events in a time interval (or another kind of interval)
• The time interval is small
• The probability of one success is proportional to the length of the time interval
• The number of successes in one time interval is independent of the number of successes in any other time interval

Derive Poisson from Basic Assumptions
• Derivation by induction
  – To find an expression for p(x), first find p(0), then p(k) and p(k+1), then generalize to p(x).
• Basic properties used:

Poisson’s Assumptions: Example
• The probability of one photon arriving in the time interval Δt is proportional to Δt when Δt is small
• The probability that more than one photon arrives in Δt is negligible for small Δt
• The number of photons that arrive in one time interval is independent of the number of photons that arrive in any other nonoverlapping interval

Normal Approximation to Poisson’s Distribution
• http://www.stat.ucla.edu/~dinov/courses_students.dir/Applets.dir/NormalApprox2PoissonApplet.html

Measures of Center
• Mode
• Median
• Population mean (μ) and sample average (x̄)

Measures of Spread
• Variance: the square of the standard deviation
• Standard deviation:
  – Population standard deviation (σ): large sample sets, where the population mean (μ) is known
  – Sample standard deviation (s): small sample sets, where the sample average (x̄) is used
  – Pooled standard deviation ($s_{\text{pooled}}$): used when several small sets have the same sources of indeterminate error (i.e., the same type of measurement on different samples)

Standard Error of the Mean
• The uncertainty in the average ($\sigma_m$), which differs from the standard deviation σ (the variation of each individual measurement); if n = 1, $\sigma_m = \sigma$
• i. If σ is known, the uncertainty in the mean is $\sigma_m = \sigma/\sqrt{n}$
• ii. If σ is unknown, use the t-score to compensate for the uncertainty in s: $\pm\, t s/\sqrt{n}$
• t: from a table for the chosen % confidence level and n − 1 degrees of freedom (one degree of freedom is used to calculate the mean)

Chebyshev and Empirical Rules
• Chebyshev’s Rule: the proportion of observations within k standard deviations of the mean, where k > 1, is at least $1 - 1/k^2$, i.e., at least 75%, 89%, and 94% of the data are within 2, 3, and 4 standard deviations of the mean, respectively.
• Empirical Rule: if data follow a bell-shaped curve, then approximately 68%, 95%, and 99.7% of the data are within 1, 2, and 3 standard deviations of the mean, respectively.
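As a quick numerical check of Chebyshev’s Rule and the Empirical Rule, the minimal Python sketch below compares Chebyshev’s guaranteed minimum, 1 − 1/k², with the exact fraction of a normal distribution that lies within k standard deviations of the mean, erf(k/√2). The function names are illustrative and not taken from the slides.

```python
import math


def chebyshev_lower_bound(k: float) -> float:
    """Minimum fraction of ANY distribution within k SDs of the mean (Chebyshev, k > 1)."""
    if k <= 1:
        raise ValueError("Chebyshev's bound is only informative for k > 1")
    return 1.0 - 1.0 / k**2


def normal_fraction_within(k: float) -> float:
    """Exact fraction of a normal (bell-shaped) distribution within k SDs: erf(k / sqrt(2))."""
    return math.erf(k / math.sqrt(2.0))


# Compare the distribution-free bound with the normal-curve values for k = 2, 3, 4.
for k in (2, 3, 4):
    print(f"k = {k}: Chebyshev >= {chebyshev_lower_bound(k):.3f}, "
          f"normal = {normal_fraction_within(k):.4f}")
```

The Chebyshev bound holds for any distribution, which is why it is much looser (75%, 89%, 94%) than the normal-curve fractions (about 95.4%, 99.7%, and 99.99% for k = 2, 3, 4); `normal_fraction_within(1)` gives the Empirical Rule’s 68%.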
Z-score
• z-scores answer the question “how many standard deviations away from the mean is this observation?”

z               0.000   1.000   2.000   3.000
P (one-sided)   0.500   0.841   0.977   0.999
P (two-sided)   0.000   0.683   0.954   0.997

Confidence Interval
• The range of uncertainty in a value at a stated percent confidence
• Percent confidence: how certain we are that the value lies within the stated range
• σ known: $\bar{x} \pm z\sigma/\sqrt{N}$
• σ unknown: $\bar{x} \pm t s/\sqrt{N}$
• Look up the appropriate z or t values to use: http://math.uc.edu/~brycw/classes/148/tables.htm

Inferential Statistics
• Comparing two sample means

T-test (Student’s t)
• Used to calculate the confidence interval of a measurement when the population standard deviation σ is not known
• Used to compare two averages
• Corrects for the uncertainty in the sample standard deviation (s) caused by taking a small number of samples

Comparison Tests
• Comparing the sample to the true value
• Comparing two experimental averages

Significance Testing
• Confidence interval
• Statistical hypotheses
• H₀ and H₁

Comparison Test: Comparing the Sample to the True Value
Method #1
• If the difference between the measured value and the true value (μ) is greater than the uncertainty in the measurement, then there is a significant difference between the two values at that confidence level.
Method #2
• The experimental t-score, $t_{\text{exp}} = \frac{|\bar{x} - \mu|}{s}\sqrt{N}$, is compared to the critical t-score, $t_{\text{crit}}$ (found in a table)
• There is a significant difference if $t_{\text{exp}} > t_{\text{crit}}$
• $t_{\text{crit}}$ is chosen for N − 1 degrees of freedom at the desired confidence level
• If the experimental value may be greater or less than the true value, use a two-sided t-score. If …

Comparison Tests: Comparing Two Experimental Averages
t-test:
• Use the pooled standard deviation and calculate the experimental t as: $t_{\text{exp}} = \frac{|\bar{x}_1 - \bar{x}_2|}{s_{\text{pooled}}}\sqrt{\frac{N_1 N_2}{N_1 + N_2}}$
• If $t_{\text{exp}}$ is greater than $t_{\text{crit}}$, then there is a significant difference between the two means
• $t_{\text{crit}}$ is determined at the appropriate confidence level from a table, for $N_1 + N_2 - 2$ degrees of freedom

[T-table]
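The two comparison tests can be sketched in Python with only the standard library. This is a minimal sketch, assuming the usual textbook forms $t_{\text{exp}} = |\bar{x} - \mu|\sqrt{N}/s$ (sample vs. true value, N − 1 degrees of freedom) and the pooled-SD form for two averages (N₁ + N₂ − 2 degrees of freedom). The critical values are a small excerpt of two-sided 95% values from a standard t-table, the function names are illustrative, and the replicate data are hypothetical.

```python
import math
from statistics import mean, stdev

# Two-sided 95% critical t values for df = 1..10, from a standard t-table.
# Illustrative excerpt only; use a full table for other df or confidence levels.
T_CRIT_95_TWO_SIDED = {
    1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
    6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228,
}


def t_vs_true_value(sample, mu):
    """Method #2: experimental t for a sample average vs. a known true value (df = N - 1)."""
    n = len(sample)
    return abs(mean(sample) - mu) / stdev(sample) * math.sqrt(n), n - 1


def pooled_sd(a, b):
    """Pooled standard deviation of two samples (N1 + N2 - 2 degrees of freedom)."""
    n1, n2 = len(a), len(b)
    ss = (n1 - 1) * stdev(a) ** 2 + (n2 - 1) * stdev(b) ** 2
    return math.sqrt(ss / (n1 + n2 - 2))


def t_two_means(a, b):
    """Experimental t for comparing two averages using the pooled SD (df = N1 + N2 - 2)."""
    n1, n2 = len(a), len(b)
    t = abs(mean(a) - mean(b)) / pooled_sd(a, b) * math.sqrt(n1 * n2 / (n1 + n2))
    return t, n1 + n2 - 2


def is_significant(t_exp, df):
    """Two-sided test at 95% confidence: significant if t_exp > t_crit."""
    return t_exp > T_CRIT_95_TWO_SIDED[df]  # KeyError if df is outside the excerpt


# Hypothetical replicate measurements, for illustration only.
a = [10.1, 10.3, 10.2, 10.4]
b = [10.6, 10.8, 10.7, 10.9]
t_exp, df = t_two_means(a, b)
print(f"t_exp = {t_exp:.3f}, df = {df}, significant: {is_significant(t_exp, df)}")
```

For these made-up data, $t_{\text{exp}}$ is about 5.48 with 6 degrees of freedom, well above the tabulated 2.447, so the two averages would be judged significantly different at 95% confidence.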