* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Figure 15.1 A distributed multimedia system

Survey

Document related concepts

Transcript

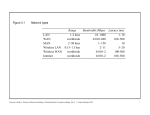

Slides for Chapter 6: Operating System Support
From Coulouris, Dollimore and Kindberg, Distributed Systems: Concepts and Design, Edition 3, © Addison-Wesley 2001

Outline
Introduction
The operating system layer
Protection
Processes and threads
Communication and invocation
Operating system architecture
Summary

Instructor’s Guide for Coulouris, Dollimore and Kindberg Distributed Systems: Concepts and Design Edn. 3 © Addison-Wesley Publishers 2000

6.1 Introduction
In this chapter we continue to focus on remote invocations without real-time guarantees.
An important theme of the chapter is the role of the system kernel.
The chapter aims to give the reader an understanding of the advantages and disadvantages of splitting functionality between protection domains (kernel and user-level code).
We shall examine the relation between the operating system layer and the middleware layer, and in particular how well the following requirements of middleware can be met by the operating system:
Efficient and robust access to physical resources
The flexibility to implement a variety of resource-management policies

Introduction (2)
The task of any operating system is to provide problem-oriented abstractions of the underlying physical resources (for example, sockets rather than raw network access):
The processors
Memory
Communications
Storage media
The operating system takes over the physical resources on a single node and manages them to present these resource abstractions through the system call interface.

Introduction (3)
Network operating systems: they have a network capability built into them and so can be used to access remote resources. Access is network-transparent for some, but not all, types of resource.
Multiple system images: the nodes running a network operating system retain autonomy in managing their own processing resources.
Single system image: one could envisage an operating system in which users are never concerned with where their programs run, or with the location of any resources. The operating system has control over all the nodes in the system. An operating system that produces a single system image like this for all the resources in a distributed system is called a distributed operating system.

Introduction (4): Middleware and network operating systems
In fact, there are no distributed operating systems in general use, only network operating systems.
The first reason is that users have much invested in their application software, which often meets their current problem-solving needs.
The second reason against the adoption of distributed operating systems is that users tend to prefer to have a degree of autonomy for their machines, even in a closely knit organization.
The combination of middleware and network operating systems provides an acceptable balance between the requirement for autonomy and network-transparent resource access.

Figure 6.1 System layers: applications and services; middleware; on each of Node 1 and Node 2, an OS (kernel, libraries and servers) providing processes, threads, communication, ...; and computer and network hardware. The OS and hardware layers on each node form its platform.

The operating system layer
Our goal in this chapter is to examine the impact of particular OS mechanisms on middleware’s ability to deliver distributed resource sharing to users.
Kernels and server processes are the components that manage resources and present clients with an interface to the resources. They require:
Encapsulation: they should provide a useful service interface to their resources
Protection
Concurrent processing
Communication
Scheduling

Figure 6.2 Core OS functionality
Process manager: handles the creation of and operations upon processes
Thread manager: thread creation, synchronization and scheduling
Communication manager: communication between threads attached to different processes on the same computer
Memory manager: management of physical and virtual memory
Supervisor: dispatching of interrupts, system call traps and other exceptions

6.3 Protection
We said above that resources require protection from illegitimate accesses. Note that the threat to a system’s integrity does not come only from maliciously contrived code. Benign code that contains a bug or which has unanticipated behavior may cause part of the rest of the system to behave incorrectly.
Protecting a file consists of two sub-problems:
The first is to ensure that each of the file’s two operations (read and write) can be performed only by clients with the right to perform it.
The other type of illegitimate access, which we shall address here, is where a misbehaving client sidesteps the operations that a resource exports. An example is an operation such as setFilePointRandomly: this is a meaningless operation that would upset normal use of the file, and files would never be designed to export it.
We can protect resources from illegitimate invocations such as setFilePointRandomly by using a type-safe programming language (Java or Modula-3), or by relying on the kernel and hardware support, as described next.

Kernel and protection
The kernel is a program that is distinguished by the facts that it always runs and its code is executed with complete access privileges for the physical resources on its host computer.
A kernel process executes with the processor in supervisor (privileged) mode; the kernel arranges that other processes execute in user (unprivileged) mode.
A kernel also sets up address spaces to protect itself and other processes from the accesses of an aberrant process, and to provide processes with their required virtual memory layout.
A process can safely transfer from a user-level address space to the kernel’s address space via an exception such as an interrupt or a system call trap.
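The first sub-problem, letting only clients with the right to perform an operation invoke it, can be sketched as a resource that exports just read and write and checks each caller's rights. This is a minimal Python sketch, assuming a hypothetical `ProtectedFile` class and a rights table keyed by client name. Python marks `_data` as private only by convention, so the sketch does not give the enforced protection that a type-safe language or the kernel provides.

```python
class ProtectedFile:
    """Hypothetical file resource that exports only read and write."""

    def __init__(self, data=b"", rights=None):
        self._data = bytearray(data)   # private state, by convention only
        self._pos = {}                 # per-client file pointer
        self._rights = rights or {}    # client name -> set of permitted ops

    def _check(self, client, op):
        # Reject the invocation unless the client holds the right for op.
        if op not in self._rights.get(client, set()):
            raise PermissionError(f"{client} may not {op}")

    def read(self, client, n):
        self._check(client, "read")
        pos = self._pos.get(client, 0)
        chunk = bytes(self._data[pos:pos + n])
        self._pos[client] = pos + len(chunk)
        return chunk

    def write(self, client, data):
        self._check(client, "write")
        pos = self._pos.get(client, 0)
        self._data[pos:pos + len(data)] = data  # overwrite, extending if needed
        self._pos[client] = pos + len(data)
        return len(data)
```

There is deliberately no method that moves a file pointer arbitrarily: clients can only do what the two exported operations allow.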
6.4 Processes and threads
A thread is the operating system abstraction of an activity (the term derives from the phrase “thread of execution”).
An execution environment is the unit of resource management: a collection of local kernel-managed resources to which its threads have access. An execution environment primarily consists of:
An address space
Thread synchronization and communication resources such as semaphores and communication interfaces
Higher-level resources such as open files and windows

6.4.1 Address spaces
An address space consists of regions, separated by inaccessible areas of virtual memory.
Regions do not overlap.
Each region is specified by the following properties:
Its extent (lowest virtual address and size)
Read/write/execute permissions for the process’s threads
Whether it can be grown upwards or downwards

Figure 6.3 Address space (from the top at 2^N down to 0): auxiliary regions, stack, heap, text.

6.4.1 Address spaces (2)
A mapped file is one that is accessed as an array of bytes in memory. The virtual memory system ensures that accesses made in memory are reflected in the underlying file storage.
A shared memory region is one that is backed by the same physical memory as one or more regions belonging to other address spaces.
The uses of shared regions include the following:
Libraries
Kernel
Data sharing and communication
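The region properties listed above (extent, permissions, direction of growth, no overlap) can be modelled with a small data structure. This is a simplified Python sketch with hypothetical names (`Region`, `AddressSpace`, `SegmentationFault`); real kernels keep this information in region and page tables, and access checks are enforced by the memory management hardware rather than by code like this.

```python
from dataclasses import dataclass


class SegmentationFault(Exception):
    """Raised for an access to an inaccessible (unmapped) address."""


@dataclass
class Region:
    start: int            # lowest virtual address
    size: int             # extent in bytes
    perms: str            # any subset of "rwx"
    grows: str = "none"   # "up", "down" or "none"

    @property
    def end(self):
        return self.start + self.size  # one past the highest address


class AddressSpace:
    def __init__(self, bits=32):
        self.limit = 2 ** bits  # valid addresses are 0 .. 2**bits - 1
        self.regions = []

    def _fits(self, start, end, ignore=None):
        # Inside the address space and not overlapping any other region.
        if start < 0 or end > self.limit:
            return False
        return all(end <= r.start or r.end <= start
                   for r in self.regions if r is not ignore)

    def add(self, region):
        if not self._fits(region.start, region.end):
            raise ValueError("region overlaps another or lies outside the space")
        self.regions.append(region)

    def grow(self, region, amount):
        # Stacks typically grow downwards, heaps upwards.
        if region.grows == "up":
            start = region.start
        elif region.grows == "down":
            start = region.start - amount
        else:
            raise ValueError("region is not growable")
        if not self._fits(start, start + region.size + amount, ignore=region):
            raise ValueError("growth would overlap a neighbouring region")
        region.start, region.size = start, region.size + amount

    def check(self, addr, op):
        # Return the region that permits op ("r", "w" or "x") at addr.
        for r in self.regions:
            if r.start <= addr < r.end:
                if op in r.perms:
                    return r
                raise PermissionError(f"'{op}' not permitted at {addr:#x}")
        raise SegmentationFault(f"{addr:#x} is not mapped")
```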
6.4.2 Creation of a new process
The creation of a new process has traditionally been an indivisible operation provided by the operating system, for example the UNIX fork system call.
For a distributed system, the design of the process creation mechanism has to take account of the utilization of multiple computers.
The creation of a new process can be separated into two independent aspects:
The choice of a target host
The creation of an execution environment

Choice of process host
The choice of the node at which the new process will reside (the process allocation decision) is a matter of policy.
Transfer policy: determines whether to situate a new process locally or remotely, for example depending on whether the local node is lightly or heavily loaded.
Location policy: determines which node should host a new process selected for transfer. This decision may depend on the relative loads of nodes, on their machine architectures and on any specialized resources they may possess.

Choice of process host (2)
Process location policies may be static or adaptive.
In load-sharing systems, a load manager collects information about the nodes and uses it to allocate new processes to nodes. Load-sharing systems may be:
Centralized: one load manager component
Hierarchical: several load managers organized in a tree structure
Decentralized: nodes exchange information with one another directly to make allocation decisions
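The separation between a transfer policy and a location policy can be expressed as two functions. The sketch below assumes a threshold-based transfer policy and a least-loaded location policy, with each node's load reduced to a single number; the `Node` fields, the threshold of 2.0 and the function names are illustrative assumptions, not the algorithm of any particular system. It behaves like a centralized load manager that already knows every node's load.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    load: float                      # assumed metric, e.g. run-queue length
    arch: str = "x86"
    resources: frozenset = frozenset()


def transfer_policy(local, threshold=2.0):
    """Transfer (place remotely) only when the local node is heavily loaded."""
    return local.load > threshold


def location_policy(nodes, arch, needs=frozenset()):
    """Pick the least loaded node with the right architecture and resources."""
    eligible = [n for n in nodes if n.arch == arch and needs <= n.resources]
    if not eligible:
        return None
    return min(eligible, key=lambda n: n.load)


def allocate(local, others, arch="x86", needs=frozenset(), threshold=2.0):
    if not transfer_policy(local, threshold):
        return local                 # lightly loaded: run the process locally
    target = location_policy(others, arch, needs)
    if target is None:
        return local                 # no suitable remote node: fall back
    return target
```

Because the location policy reads current loads, this is an adaptive policy; a static one would ignore `load` and use a fixed mapping.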
Choice of process host (3)
In sender-initiated load-sharing algorithms, the node that requires a new process to be created is responsible for initiating the transfer decision.
In receiver-initiated algorithms, a node whose load is below a given threshold advertises its existence to other nodes so that more heavily loaded nodes can transfer work to it.
Migratory load-sharing systems can shift load at any time, not just when a new process is created. They use a mechanism called process migration.

Creation of a new execution environment
There are two approaches to defining and initializing the address space of a newly created process:
The address space is of a statically defined format. For example, it could contain just a program text region, a heap region and a stack region. Address space regions are initialized from an executable file or filled with zeroes as appropriate.
The address space is defined with respect to an existing execution environment. For example, the newly created child process physically shares the parent’s text region, and has heap and stack regions that are copies of the parent’s in extent (as well as in initial contents).
When parent and child share a region, the page frames belonging to the parent’s region are mapped simultaneously into the corresponding child region.

Figure 6.4 Copy-on-write: (a) before a write, region RB of process B’s address space has been copied from region RA of process A’s address space; the pages are initially write-protected at the hardware level, and A’s and B’s page tables both map the shared frame. (b) After a write by process B, a page fault is taken and handled by the kernel.
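Copy-on-write can be simulated with reference-counted frames and a write-protect bit in each page-table entry. In this hypothetical sketch, `Frame`, `PTE` and `Kernel` are illustrative names and page tables are plain dictionaries from page number to entry: copying a region shares the frames and write-protects them, the first write to a shared page faults, and the fault handler gives the writer a private copy.

```python
from dataclasses import dataclass


class Frame:
    """A physical page frame and the number of page-table entries mapping it."""

    def __init__(self, data):
        self.data = bytearray(data)  # bytearray(...) always makes a fresh copy
        self.refs = 1


@dataclass
class PTE:
    frame: Frame
    writable: bool  # False: the (simulated) hardware traps writes to the page


class Kernel:
    def map_new(self, table, page, data):
        table[page] = PTE(Frame(data), True)

    def copy_region(self, parent, child):
        # Share every frame and write-protect it in both page tables.
        for page, pte in parent.items():
            pte.writable = False
            pte.frame.refs += 1
            child[page] = PTE(pte.frame, False)

    def read(self, table, page, offset):
        return table[page].frame.data[offset]

    def write(self, table, page, offset, value):
        pte = table[page]
        if not pte.writable:
            self._write_fault(pte)   # page fault on a write-protected page
        pte.frame.data[offset] = value

    def _write_fault(self, pte):
        if pte.frame.refs > 1:
            # Still shared: allocate a new frame holding a copy of the data.
            pte.frame.refs -= 1
            pte.frame = Frame(pte.frame.data)
        pte.writable = True          # sole user of the frame may now write
```

After B's write, A's entry is still write-protected; A's next write faults too, but the handler finds the frame is no longer shared and simply re-enables writing without copying.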
In Figure 6.4 (b), the page fault handler allocates a new frame for process B and copies the original frame’s data into it byte by byte.

6.4.3 Threads
A thread is a simplified form of a process: it contains the information needed to use the CPU, namely a program counter, a register set and stack space. Threads belonging to the same program (task) share its code section, data section and operating system resources. An operating system that can run several threads at the same time is said to support multithreading.
The next key aspect of a process to consider in more detail is its threads, and in particular the advantages of enabling client and server processes to possess more than one thread.

Figure 6.5 Client and server with threads: in the client, thread 1 generates results and thread 2 makes requests to the server; in the server, an input-output thread handles the receipt and queuing of requests, which a worker pool of N threads then processes.
A disadvantage of this architecture is its inflexibility: the fixed number of worker threads in the pool may be too few to deal with the current rate of requests.
Another disadvantage is the high level of switching between the I/O and worker threads as they manipulate the shared queue.

Figure 6.6 Alternative server threading architectures (see also Figure 6.5)
a. Thread-per-request: a worker thread is created for each request. Advantage: the threads do not contend for a shared queue, and throughput is potentially maximized. Disadvantage: the overheads of the thread creation and destruction operations.
b. Thread-per-connection: associates a thread with each connection.
c. Thread-per-object: associates a thread with each remote object.
In each of these last two architectures, the server benefits from lowered thread-management overheads compared with the thread-per-request architecture. Their disadvantage is that clients may be delayed while a worker thread has several outstanding requests but another thread has no work to perform.

Figure 6.7 State associated with execution environments and threads
Execution environment: address space tables; communication interfaces, open files; semaphores, other synchronization objects; list of thread identifiers
Thread: saved processor registers; priority and execution state (such as BLOCKED); software interrupt handling information; execution environment identifier
Both: pages of address space resident in memory; hardware cache entries

A comparison of processes and threads
Creating a new thread within an existing process is cheaper than creating a process.
More importantly, switching to a different thread within the same process is cheaper than switching between threads belonging to different processes.
Threads within a process may share data and other resources conveniently and efficiently compared with separate processes.
But, by the same token, threads within a process are not protected from one another.

A comparison of processes and threads (2)
The overheads associated with creating a process are in general considerably greater than those of creating a new thread: a new execution environment must first be created, including address space tables.
The second performance advantage of threads concerns switching between threads, that is, running one thread instead of another at a given processor.
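The worker-pool server of Figure 6.5 can be sketched with Python's standard `queue.Queue` and `threading.Thread`. This is an illustrative sketch: the `serve` function and its `handler` argument are hypothetical, the I/O thread reads requests from a list instead of the network, and a `None` entry is used as an assumed shutdown marker. Because the workers are threads of one process, they share the `results` dictionary directly, which is convenient but unprotected, so updates are made under a lock.

```python
import queue
import threading


def serve(requests, handler, n_workers=4):
    """Worker-pool server: one I/O thread queues requests, N workers serve them."""
    work = queue.Queue()          # the shared request queue
    results = {}
    lock = threading.Lock()

    def io_thread():
        # Receipt and queuing; a real server would read these from sockets.
        for req_id, payload in requests:
            work.put((req_id, payload))
        for _ in range(n_workers):
            work.put(None)        # one shutdown marker per worker

    def worker():
        while True:
            item = work.get()     # blocks until a request is available
            if item is None:
                break
            req_id, payload = item
            result = handler(payload)
            with lock:            # threads share memory, so guard the update
                results[req_id] = result

    threads = [threading.Thread(target=worker) for _ in range(n_workers)]
    threads.append(threading.Thread(target=io_thread))
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Every worker takes requests from the one `work` queue, which is where the switching between the I/O and worker threads described for Figure 6.5 takes place.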
A context switch is the transition between contexts that takes place when switching between threads, or when a single thread makes a system call or takes another type of exception. It involves the following:
The saving of the processor’s original register state, and the loading of the new state
In some cases, a transfer to a new protection domain; this is known as a domain transition

Thread scheduling
In preemptive scheduling, a thread may be suspended at any point to make way for another thread.
In non-preemptive scheduling, a thread runs until it makes a call to the threading system (for example, a system call).
The advantage of non-preemptive scheduling is that any section of code that does not contain a call to the threading system is automatically a critical section. Race conditions are thus conveniently avoided.
Non-preemptively scheduled threads cannot take advantage of a multiprocessor, since they run exclusively.

Thread implementation
When no kernel support for multi-threaded processes is provided, a user-level threads implementation suffers from the following problems:
The threads within a process cannot take advantage of a multiprocessor.
A thread that takes a page fault blocks the entire process and all threads within it.
Threads within different processes cannot be scheduled according to a single scheme of relative prioritization.
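Non-preemptive scheduling can be illustrated with Python generators standing in for user-level threads: a thread runs until it executes `yield`, which plays the role of the call to the threading system. This is a toy sketch under that assumption (the names `run`, `worker`, `incrementer` and `shared` are hypothetical); it does not save or restore real processor registers.

```python
from collections import deque


def run(threads):
    """Round-robin, non-preemptive scheduler for generator-based threads."""
    ready = deque(threads)            # the READY queue
    while ready:
        thread = ready.popleft()
        try:
            next(thread)              # runs until the thread's next yield
        except StopIteration:
            continue                  # thread finished; drop it
        ready.append(thread)          # it yielded voluntarily: requeue it


def worker(name, steps, log):
    for i in range(steps):
        log.append((name, i))         # no yield here, so no switch can occur
        yield                         # the "call to the threading system"


shared = {"count": 0}


def incrementer(n):
    for _ in range(n):
        value = shared["count"]       # read ...
        shared["count"] = value + 1   # ... then write: a critical section
        yield
```

Because `incrementer` never yields between reading and writing `shared["count"]`, the update cannot be interleaved with another thread's and needs no lock. The same property means these threads never run in parallel on a multiprocessor.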
Thread implementation (2)
User-level threads implementations have significant advantages over kernel-level implementations:
Certain thread operations are significantly less costly. For example, switching between threads belonging to the same process does not necessarily involve a system call, that is, a relatively expensive trap to the kernel.
Given that the thread-scheduling module is implemented outside the kernel, it can be customized or changed to suit particular application requirements. Variations in scheduling requirements occur largely because of application-specific considerations such as the real-time nature of multimedia processing.
Many more user-level threads can be supported than could reasonably be provided by default by a kernel.

The four types of event that the kernel notifies to the user-level scheduler
Virtual processor allocated: the kernel has assigned a new virtual processor to the process, and this is the first timeslice upon it; the scheduler can load the SA with the context of a READY thread, which can thus recommence execution.
SA blocked: an SA has blocked in the kernel, and the kernel is using a fresh SA to notify the scheduler; the scheduler sets the state of the corresponding thread to BLOCKED and can allocate a READY thread to the notifying SA.
SA unblocked: an SA that was blocked in the kernel has become unblocked and is ready to execute at user level again; the scheduler can now return the corresponding thread to the READY list. In order to create the notifying SA, the kernel either allocates a new virtual processor to the process or preempts another SA in the same process. In the latter case, it also communicates the preemption event to the scheduler, which can re-evaluate its allocation of threads to SAs.
SA preempted: the kernel has taken away the specified SA from the process (although it may do this to allocate a processor to a fresh SA in the same process); the scheduler places the preempted thread in the READY list and re-evaluates the thread allocation.

Figure 6.10 Scheduler activations: (A) assignment of virtual processors to processes A and B by the kernel; (B) events between the user-level scheduler and the kernel: P added, SA preempted, SA unblocked, SA blocked, P idle, P needed. Key: P = processor; SA = scheduler activation.
A scheduler activation (SA) is a call from the kernel to a process.

6.5.1 Invocation performance
Invocation performance is a critical factor in distributed system design.
Network technologies continue to improve, but invocation times have not decreased in proportion with increases in network bandwidth.
This section explains how software overheads often predominate over network overheads in invocation times.

Figure 6.11 Invocations between address spaces
(a) System call: a thread transfers control from user level to the kernel via a trap instruction, across the protection domain boundary, and control returns via privileged instructions.
(b) RPC/RMI within one computer: thread 1 in user address space 1 invokes thread 2 in user address space 2 via the kernel.
(c) RPC/RMI between computers: thread 1 (user 1, kernel 1) invokes thread 2 (user 2, kernel 2) across the network.
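The user-level scheduler's reactions to the four upcalls can be sketched as a class with one method per event. This is a hypothetical, single-process model: SAs and threads are plain strings, the `UserLevelScheduler` class and its method names are illustrative, and the kernel side (which actually creates SAs and virtual processors) is not modelled.

```python
from collections import deque


class UserLevelScheduler:
    """Reacts to the four kernel upcalls (scheduler activations)."""

    def __init__(self, threads):
        self.ready = deque(threads)  # READY threads
        self.running = {}            # SA id -> thread currently loaded on it
        self.blocked = {}            # SA id blocked in the kernel -> its thread

    def _dispatch(self, sa):
        # Load the SA with the context of a READY thread, if there is one.
        if self.ready:
            self.running[sa] = self.ready.popleft()

    def virtual_processor_allocated(self, sa):
        self._dispatch(sa)

    def sa_blocked(self, blocked_sa, notifying_sa):
        # The thread becomes BLOCKED; reuse the fresh SA for a READY thread.
        self.blocked[blocked_sa] = self.running.pop(blocked_sa)
        self._dispatch(notifying_sa)

    def sa_unblocked(self, unblocked_sa, notifying_sa, preempted_sa=None):
        # The unblocked thread is returned to the READY list.
        self.ready.append(self.blocked.pop(unblocked_sa))
        if preempted_sa is not None:
            # The kernel preempted another of our SAs to create notifying_sa.
            self.sa_preempted(preempted_sa)
        self._dispatch(notifying_sa)

    def sa_preempted(self, sa):
        # Put the preempted thread back on the READY list.
        self.ready.append(self.running.pop(sa))
```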
Figure 6.12 RPC delay against parameter size: client delay plotted against requested data size (0 to 2000 bytes, with the packet size marked). The delay is roughly proportional to the size until the size reaches a threshold at about the network packet size.

The following are the main components accounting for remote invocation delay, besides network transmission times:
Marshalling: marshalling and unmarshalling, which involve copying and converting data, become a significant overhead as the amount of data grows.
Data copying: potentially, even after marshalling, message data is copied several times in the course of an RPC:
1. across the user-kernel boundary, between the client or server address space and kernel buffers;
2. across each protocol layer (for example, RPC/UDP/IP/Ethernet);
3. between the network interface and kernel buffers.
Packet initialization: this involves initializing protocol headers and trailers, including checksums. The cost is therefore proportional, in part, to the amount of data sent.
Thread scheduling and context switching:
1. several system calls (that is, context switches) are made during an RPC, as stubs invoke the kernel’s communication operations;
2. one or more server threads is scheduled;
3. if the operating system employs a separate network manager process, then each Send involves a context switch to one of its threads.
Waiting for acknowledgements: the choice of RPC protocol may influence delay, particularly when large amounts of data are sent.

A lightweight remote procedure call
The LRPC design is based on optimizations concerning data copying and thread scheduling.
Client and server are able to pass arguments and values directly via an A stack. The same stack is used by the client and server stubs.
In LRPC, arguments are copied once: when they are marshalled onto the A stack. In an equivalent RPC, they are copied four times.

Figure 6.13 A lightweight remote procedure call: the client and server share an A stack at user level, above the kernel. 1. The client stub copies the arguments onto the A stack. 2. It traps to the kernel. 3. The kernel makes an upcall to the server stub. 4. The server executes the procedure and copies the results. 5. Control returns (via a trap).

6.5.2 Asynchronous operation
A common technique to defeat high latencies is asynchronous operation, which arises in two programming models:
Concurrent invocations
Asynchronous invocations
An asynchronous invocation is one that is performed asynchronously with respect to the caller. That is, it is made with a non-blocking call, which returns as soon as the invocation request message has been created and is ready for dispatch.
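Concurrent invocations can be sketched with Python's `concurrent.futures.ThreadPoolExecutor`: `submit` returns a `Future` immediately, so the client can issue several requests before any reply has arrived. Here `remote_call`, `serialized` and `concurrent_calls` are hypothetical names, and `time.sleep` merely models network and server latency; a real client would use the non-blocking calls of its own RPC or RMI library.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def remote_call(x, latency=0.05):
    """Stand-in for an RPC; the sleep models network and server latency."""
    time.sleep(latency)
    return x * 2


def serialized(args):
    # Each invocation blocks until its reply has arrived.
    return [remote_call(a) for a in args]


def concurrent_calls(args, pool):
    # submit() does not block: it returns a Future for each request at once.
    futures = [pool.submit(remote_call, a) for a in args]
    # The caller could do other work here before collecting the replies.
    return [f.result() for f in futures]
```

With four calls of 50 ms each, `serialized` takes at least 200 ms, whereas `concurrent_calls` on a pool of four threads overlaps the waits, which is the effect sketched in Figure 6.14.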
Figure 6.14 Times for serialized and concurrent invocations: with serialized invocations, the client processes the arguments, marshals and sends each request, then receives and unmarshals the reply and processes the results before starting the next invocation, while the server receives, unmarshals, executes the request, marshals and sends the reply. With concurrent invocations, the client sends further requests before earlier replies have arrived, so transmission and server execution are pipelined.

6.6 Operating system architecture
An open distributed system should make it possible to:
Run only that system software at each computer that is necessary for it to carry out its particular role in the system architecture
Allow the software implementing any particular service to be changed independently of other facilities
Allow for alternatives of the same service to be provided, when this is required to suit different users or applications
Introduce new services without harming the integrity of existing ones

Figure 6.15 Monolithic kernel and microkernel: servers S1, S2, S3, S4, ... appear as kernel code and data in the monolithic kernel, and as dynamically loaded server programs running on top of the microkernel. The microkernel provides only the most basic abstractions, principally address spaces, threads and local interprocess communication.
Where these designs differ primarily is in the decision as to what functionality belongs in the kernel and what is to be left to server processes that can be dynamically loaded to run on top of it.

Figure 6.16 The role of the microkernel: middleware runs on language support subsystems and an OS emulation subsystem, which in turn run on the microkernel above the hardware. The microkernel supports middleware via subsystems.

Comparison
The chief advantage of a microkernel-based operating system is its extensibility.
In addition, a relatively small kernel is more likely to be free of bugs than one that is larger and more complex.
The advantage of a monolithic design is the relative efficiency with which operations can be invoked.
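The division of labour in a microkernel design, where the kernel offers only basic mechanisms and services are servers that can be loaded and replaced independently, can be sketched as a message router. This is a toy model under stated assumptions: `Microkernel`, `load`, `unload` and `send` are hypothetical names, servers are Python callables rather than separate processes, and address spaces and threads are not modelled, so there is no protection boundary between servers.

```python
class Microkernel:
    """Toy microkernel: it only routes requests to registered servers."""

    def __init__(self):
        self._servers = {}  # service name -> handler standing in for a server

    def load(self, name, handler):
        # Dynamically load, or replace, a server without changing the kernel.
        self._servers[name] = handler

    def unload(self, name):
        self._servers.pop(name, None)

    def send(self, name, request):
        # Stand-in for local IPC: deliver the request and return the reply.
        server = self._servers.get(name)
        if server is None:
            raise LookupError(f"no server registered for {name!r}")
        return server(request)
```

Replacing the handler registered under a name corresponds to changing one service independently of the others; in a monolithic design the same change would mean modifying the kernel itself.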