* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Floating Point Computation

Survey

Document related concepts

Transcript

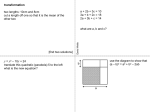

Floating Point Computation Jyun-Ming Chen 1 Contents • Sources of Computational Error • Computer Representation of (Floating-point) Numbers • Efficiency Issues 2 Sources of Computational Error • Converting a mathematical problem to numerical problem, one introduces errors due to limited computation resources: – round off error (limited precision of representation) – truncation error (limited time for computation) • Misc. – Error in original data – Blunder (programming/data input error) – Propagated error 3 Supplement: Error Classification (Hildebrand) • Gross error: caused by human or mechanical mistakes • Roundoff error: the consequence of using a number specified by n correct digits to approximate a number which requires more than n digits (generally infinitely many digits) for its exact specification. • Truncation error: any error which is neither a gross error nor a roundoff error. • Frequently, a truncation error corresponds to the fact that, whereas an exact result would be afforded (in the limit) by an infinite sequence of steps, the process is truncated after a certain finite number of steps. 4 Common measures of error • Definitions – total error = round off + truncation – Absolute error = | numerical – exact | – Relative error = Abs. error / | exact | • If exact is zero, rel. error is not defined 5 Ex: Round off error Representation consists of finite number of digits Implication: real-number is discrete (more later) R 6 Watch out for printf !! • By default, “%f” prints out 6 digits behind decimal point. 7 Ex: Numerical Differentiation • Evaluating first derivative of f(x) f ( x h) f ( x ) f ' ( x ) h f " ( x ) h2 2 f ( x h) f ( x ) f ' ( x) f " ( x) h2 h f ( x h ) f ( x ) f ' ( x) , for small h h Truncation error 8 Numerical Differentiation (cont) • Select a problem with known answer – So that we can evaluate the error! f ( x) x 3 f ' ( x) 3 x 2 f ' (10) 300 9 Numerical Differentiation (cont) • Error analysis – h (truncation) error • What happened at h = 0.00001?! 10 Ex: Polynomial Deflation • F(x) is a polynomial with 20 real roots f ( x) ( x 1)( x 2) ( x 20) • Use any method to numerically solve a root, then deflate the polynomial to 19th degree • Solve another root, and deflate again, and again, … • The accuracy of the roots obtained is getting worse each time due to error propagation 11 Computer Representation of Floating Point Numbers Floating point VS. fixed point Decimal-binary conversion Standard: IEEE 754 (1985) 12 Floating VS. Fixed Point • Decimal, 6 digits (positive number) – fixed point: with 5 digits after decimal point • 0.00001, … , 9.99999 – Floating point: 2 digits as exponent (10-base); 4 digits for mantissa (accuracy) • 0.001x10-99, … , 9.999x1099 • Comparison: – Fixed point: fixed accuracy; simple math for computation (sometimes used in graphics programs) – Floating point: trade accuracy for larger range of representation 13 Decimal-Binary Conversion • Ex: 134 (base 10) • Ex: 0.125 (base 10) • Ex: 0.1 (base 10) 14 Floating Point Representation f b e • Fraction, f – Usually normalized so that b 1 f 1 • Base, b – 2 for personal computers – 16 for mainframe –… • Exponent, e 15 Understanding Your Platform 16 Padding How about 17 IEEE 754-1985 • Purpose: make floating system portable • Defines: the number representation, how calculation performed, exceptions, … • Single-precision (32-bit) • Double-precision (64-bit) 18 Number Representation • S: sign of mantissa • Range (roughly) – Single: 10-38 to 1038 – Double: 10-307 to 10307 • Precision (roughly) – Single: 7 significant decimal digits – Double: 15 significant decimal digits Describe how these are obtained 19 Implication • When you write your program, make sure the results you printed carry the meaningful significant digits. 20 Implicit One • Normalized mantissa to increase one extra bit of precision • Ex: –3.5 21 Exponent Bias • Ex: in single precision, exponent has 8 bits – 0000 0000 (0) to 1111 1111 (255) • Add an offset to represent +/ – numbers – Effective exponent = biased exponent – bias – Bias value: 32-bit (127); 64-bit (1023) – Ex: 32-bit • 1000 0000 (128): effective exp.=128-127=1 22 Ex: Convert – 3.5 to 32-bit FP Number 23 Examine Bits of FP Numbers • Explain how this program works 24 The “Examiner” • Use the previous program to – Observe how ME work – Test subnormal behaviors on your computer/compiler – Convince yourself why the subtraction of two nearly equal numbers produce lots of error – NaN: Not-a-Number !? 25 Design Philosophy of IEEE 754 • [s|e|m] • S first: whether the number is +/- can be tested easily • E before M: simplify sorting • Represent negative by bias (not 2’s complement) for ease of sorting – [biased rep] –1, 0, 1 = 126, 127, 128 – [2’s compl.] –1, 0, 1 = 0xFF, 0x00, 0x01 • More complicated math for sorting, increment/decrement 26 Exceptions • Overflow: – ±INF: when number exceeds the range of representation • Underflow – When the number are too close to zero, they are treated as zeroes • Dwarf – The smallest representable number in the FP system • Machine Epsilon (ME) – A number with computation significance (more later) 27 Extremities More later • E : (1…1) – M (0…0): infinity – M not all zeros; NaN (Not a Number) • E : (0…0) – M (0…0): clean zero – M not all zero: dirty zero (see next page) 28 Not-a-Number • Numerical exceptions – Sqrt of a negative number – Invalid domain of trigonometric functions –… • Often cause program to stop running 29 Extremities (32-bit) • Max: 01 1 1 1 1 1 1 0 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1. (1.111…1)2254-127=(10-0.000…1) 21272128 • Min (w/o stepping into dirty-zero) 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1. (1.000…0)21-127=2-126 30 a.k.a.: also known as Dirty-Zero (a.k.a. denormals) • No “Implicit One” • IEEE 754 did not specify compatibility for denormals • If you are not sure how to handle them, stay away from them. Scale your problem properly – “Many problems can be solved by pretending as if they do not exist” 31 Dirty-Zero (cont) denormals R 0 dwarf 2-126 00000000 10000000 00000000 00000000 2-126 -127 00000000 01000000 00000000 00000000 2 00000000 00100000 00000000 00000000 2-128 00000000 00010000 00000000 00000000 2-129 (Dwarf: the smallest representable) 32 Drawf (32-bit) Value: 2-149 33 Machine Epsilon (ME) • Definition – smallest non-zero number that makes a difference when added to 1.0 on your working platform • This is not the same as the dwarf 34 Computing ME (32-bit) 1+eps Getting closer to 1.0 ME: (00111111 10000000 00000000 00000001) –1.0 = 2-23 1.12 10-7 35 Effect of ME 36 Significance of ME • Never terminate the iteration on that 2 FP numbers are equal. • Instead, test whether |x-y| < ME 37 Numerical Scaling • Number Density: there are as many IEEE 754 numbers between [1.0, 2.0] as there are in [256, 512] • Revisit: – “roundoff” error – ME: a measure of density near the 1.0 • Implication: – Scale your problem so that intermediate results lie between 1.0 and 2.0 (where numbers are dense; and where roundoff error is smallest) R 38 Scaling (cont) • Performing computation on denser portions of real line minimizes the roundoff error – but don’t over do it; switch to double precision will easily increase the precision – The densest part is near subnormal, if density is defined as numbers per unit length 39 How Subtraction is Performed on Your PC • Steps: – convert to Base 2 – Equalize the exponents by adjusting the mantissa values; truncate the values that do not fit – Subtract mantissa – normalize 40 Subtraction of Nearly Equal Numbers • Base 10: 1.24446 – 1.24445 1. Significant loss of accuracy (most bits are unreliable) 1110111 – 0100011 1010100… 41 Theorem of Loss Precision • x, y be normalized floating point machine numbers, and x>y>0 y p • If 2 1 2q x then at most p, at least q significant binary bits are lost in the subtraction of x-y. • Interpretation: – “When two numbers are very close, their subtraction introduces a lot of numerical error.” 42 Implications • When you program: • You should write these instead: f ( x) x 1 1 f ( x) ( x 1 1) g ( x) ln( x) 1 x g ( x) ln( x) ln( e) ln( ) e 2 2 x 2 11 x 2 11 x2 x 2 11 Every FP operation introduces error, but the subtraction of nearly equal numbers is the worst and should be avoided whenever possible 43 Efficiency Issues • Horner Scheme • program examples 44 Horner Scheme • For polynomial evaluation • Compare efficiency 45 Accuracy vs. Efficiency 46 Good Coding Practice 47 On Arrays … 48 Issues of PI • 3.14 is often not accurate enough – 4.0*atan(1.0) is a good substitute 49 Compare: 50 Exercise • Explain why 100, 000 0.1 10,000 i 0 • Explain why converge when implemented numerically 1 1 1 1 1 2 3 4 n 1 n 51 Exercise • Why Me( ) does not work as advertised? • Construct the 64-bit version of everything – Bit-Examiner – Dme( ); • 32-bit: int and float. Can every int be represented by float (if converted)? 52