Download procomm2016-workshop-handout - The Technical Writing Project

Comparison (grammar) wikipedia , lookup

Ojibwe grammar wikipedia , lookup

Arabic grammar wikipedia , lookup

Modern Greek grammar wikipedia , lookup

Zulu grammar wikipedia , lookup

Old Norse morphology wikipedia , lookup

Ukrainian grammar wikipedia , lookup

Modern Hebrew grammar wikipedia , lookup

Navajo grammar wikipedia , lookup

Udmurt grammar wikipedia , lookup

Lithuanian grammar wikipedia , lookup

Macedonian grammar wikipedia , lookup

Old Irish grammar wikipedia , lookup

Old English grammar wikipedia , lookup

Georgian grammar wikipedia , lookup

French grammar wikipedia , lookup

Portuguese grammar wikipedia , lookup

Chinese grammar wikipedia , lookup

Hungarian verbs wikipedia , lookup

English clause syntax wikipedia , lookup

Sotho parts of speech wikipedia , lookup

Kannada grammar wikipedia , lookup

Lexical semantics wikipedia , lookup

Swedish grammar wikipedia , lookup

Esperanto grammar wikipedia , lookup

Russian grammar wikipedia , lookup

Italian grammar wikipedia , lookup

Scottish Gaelic grammar wikipedia , lookup

Ancient Greek grammar wikipedia , lookup

Spanish grammar wikipedia , lookup

Danish grammar wikipedia , lookup

Malay grammar wikipedia , lookup

Polish grammar wikipedia , lookup

Serbo-Croatian grammar wikipedia , lookup

Latin syntax wikipedia , lookup

Yiddish grammar wikipedia , lookup

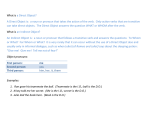

WORKSHOP: TEACHING TECHNICAL WRITING WITH DATA-DRIVEN LEARNING
Ryan K. Boettger & Stefanie Wulff

Practicing Corpus Queries

1. Load the untagged BNC sample files. Create a concordance of technical. Sort it by the right-hand context. What kinds of nouns does technical modify?

2. Go to the “Word List” ribbon and create a frequency list of the BNC sample you have currently loaded.
   a. How many tokens/types are in this sample?
   b. What are the most frequent words?

3. Go to the “Collocates” ribbon and enter technical in the search window. Set the collocate parameter to 0 for the left-hand collocates, and 1 for the right-hand collocates.
   a. What are the top collocates?
   b. How does the list of top collocates change when you sort by frequency vs. a statistical association measure? Which output do you find more meaningful?

4. Load the critical reviews of the TWP sample as a reference corpus, then go to the “Keyword List” ribbon and create a keyword list.
   a. What are the most distinctive words for the general, British English corpus in contrast to the critical reviews written by American English students?

Clear the keyword list settings and unload all corpus files (or simply close AntConc and open it again).

5. Load the critical reviews of the TWP sample. First, create a word list. What are the most frequent words in the critical reviews?

6. Create a concordance of *ly and sort the output by the search term, R1, and R2.
   a. What are the top adverbs in the critical reviews?
   b. Unload the critical reviews and load the white papers of the TWP sample instead. Repeat your search. What adverbs do you see now? How does the output differ?

7. Add the critical reviews to the corpus files loaded so that you now have the entire TWP sample loaded (104 files). Create a word list.
   a. How many types/tokens does this sample contain?
   b. What is the most frequent lexical noun?

8. Look for L1 collocates of research using the “Collocates” function.
   a. Sort by the keyness statistic. What are the most strongly associated collocates of research?
   b. Sort by frequency instead. How does the output differ?

9. Taking advantage of the * (which means “0 or more of anything”), how could one look for instances of passive voice?
   a. How many hits does your search expression retrieve?
   b. Do you retrieve a lot of false hits (i.e., instances that do not contain any passives)?
   c. What hits does your search expression not retrieve that it should?

10. How could one look for instances of split infinitives (like to boldly go …)?
   a. How many hits does your query retrieve?
   b. Do you retrieve a lot of false hits (i.e., instances that do not contain any split infinitives)?
   c. What hits does your search expression not retrieve that it should?

11. Look for instances of “naked” this plus copula be in sentence-initial position (i.e., look for This is).
   a. How many hits do you retrieve?
   b. Go to the “Concordance Plot” ribbon and look at where in the texts the instances occur. Do the instances cluster in a specific part of the texts?
   c. Go back to the concordance display and click on any concordance line. This will get you to the “File View” ribbon and show you the larger context in which the instance occurs. As you examine the instances more closely, what meta-discoursal function, if any, does This is seem to perform in the students’ writing?
   d. Repeat the search with This means instead. Is This means as frequent as This is? Does it occur in the same place in texts? Looking at the contexts more closely, can you make out a function that This means performs?

Clear the keyword list settings and unload all corpus files (or simply close AntConc and open it again).

12. Load the BNC sample that is tagged for parts of speech. (You should have 370 files loaded.)
   a. Create a word list. How many tokens/types does this sample contain? What are the top ten words?
   b. Create a concordance of research. How many hits do you retrieve?
   c. How can you take advantage of the POS-tags and regular syntax to only find hits of research as a verb? (Consult the list of POS-tags in the BNC below.)

13. How can you use the POS-tags and regular syntax to find all adverbs in the sample? How many hits do you retrieve?

14. Can you write a search expression that finds all adverbs ending in -ly, and only those hits? How many hits does your search expression retrieve? (Tip: you will have to combine POS-tags and regular expressions; see below!)

15. Can you combine POS-tags and regular syntax to write a more accurate search expression that finds instances of passive voice (and no false hits)?

16. Can you combine POS-tags and regular syntax to write a more accurate search expression that finds instances of split infinitives (and no false hits)?

17. Can you combine POS-tags and regular syntax to write a more accurate search expression that finds instances of the naked This? Can you maybe even write a search expression that finds This followed by any verb, not just a form of be (like This means, This implied, and This encourages)?
   a. Sort the concordance by the right-hand context. What are the most frequent verbs occurring with the naked This?
   b. How could you look for instances of attended This, i.e. cases where This is followed by a noun phrase (as in This example is boring)?

Regular Expressions

Activate the “Regex” button on top of the search window when integrating these into your search expression in AntConc.

*      0 or more
+      1 or more
?      0 or 1
.      any character (except a new line)
(a|b)  a or b
\s     white space
\b     word boundary
\w     word character

Part-of-speech (POS)-tags in the British National Corpus (BNC)

AJ0  adjective (general or positive), e.g. good, old
AJC  comparative adjective, e.g. better, older
AJS  superlative adjective, e.g. best, oldest
AT0  article, e.g. the, a, an, no. Note the inclusion of no: articles are defined as determiners which typically begin a noun phrase but cannot appear as its head.
AV0  adverb (general, not sub-classified as AVP or AVQ), e.g. often, well, longer, furthest. Note that adverbs, unlike adjectives, are not tagged as positive, comparative, or superlative. This is because of the relative rarity of comparative or superlative forms.
AVP  adverb particle, e.g. up, off, out. This tag is used for all prepositional adverbs, whether or not they are used idiomatically in phrasal verbs such as come out here, or I can’t hold out any longer.
AVQ  wh-adverb, e.g. when, how, why. The same tag is used whether the word is used interrogatively or to introduce a relative clause.
CJC  coordinating conjunction, e.g. and, or, but.
CJS  subordinating conjunction, e.g. although, when.
CJT  the subordinating conjunction that, when introducing a relative clause, as in the day that follows Christmas. Some theories treat that here as a relative pronoun; others as a conjunction. We have adopted the latter analysis.
CRD  cardinal numeral, e.g. one, 3, fifty-five, 6609.
DPS  possessive determiner form, e.g. your, their, his.
DT0  general determiner: a determiner which is not a DTQ, e.g. this both in This is my house and This house is mine. A determiner is defined as a word which typically occurs either as the first word in a noun phrase, or as the head of a noun phrase.
DTQ  wh-determiner, e.g. which, what, whose. The same tag is used whether the word is used interrogatively or to introduce a relative clause.
EX0  existential there, the word there appearing in the constructions there is..., there are ....
ITJ  interjection or other isolate, e.g. oh, yes, mhm, wow.
NN0  common noun, neutral for number, e.g. aircraft, data, committee. Singular collective nouns such as committee take this tag on the grounds that they can be followed by either a singular or a plural verb.
NN1  singular common noun, e.g. pencil, goose, time, revelation.
NN2  plural common noun, e.g. pencils, geese, times, revelations.
NP0  proper noun, e.g. London, Michael, Mars, IBM. Note that no distinction is made for number in the case of proper nouns, since plural proper names are a comparative rarity.
ORD  ordinal numeral, e.g. first, sixth, 77th, next, last. No distinction is made between ordinals used in nominal and adverbial roles. next and last are included in this category, as general ordinals.
PNI  indefinite pronoun, e.g. none, everything, one (pronoun), nobody. This tag is applied to words which always function as heads of noun phrases. Words like some and these, which can also occur before a noun head in an article-like function, are tagged as determiners, DT0 or AT0.
PNP  personal pronoun, e.g. I, you, them, ours. Note that possessive pronouns such as ours and theirs are included in this category.
PNQ  wh-pronoun, e.g. who, whoever, whom. The same tag is used whether the word is used interrogatively or to introduce a relative clause.
PNX  reflexive pronoun, e.g. myself, yourself, itself, ourselves.
POS  the possessive or genitive marker ’s or ’. Note that this marker is tagged as a distinct word. For example, Peter’s or someone else’s is tagged <w NP0>Peter<w POS>'s <w CJC>or <w PNI>someone <w AV0>else<w POS>'s.
PRF  the preposition of. This word has a special tag of its own, because of its high frequency and its almost exclusively postnominal function.
PRP  preposition, other than of, e.g. about, at, in, on behalf of, with. Note that prepositional phrases like on behalf of or in spite of are treated as single words.
TO0  the infinitive marker to.
UNC  unclassified items which are not appropriately classified as items of the English lexicon. Examples include foreign (non-English) words; special typographical symbols; formulae; hesitation fillers such as errm in spoken language.
VBB  the present tense forms of the verb be, except for is or ’s: am, are, ’m, ’re, be (subjunctive or imperative), ai (as in ain’t).
VBD  the past tense forms of the verb be, was, were.
VBG  the -ing form of the verb be, being.
VBI  the infinitive form of the verb be, be.
VBN  the past participle form of the verb be, been.
VBZ  the -s form of the verb be, is, ’s.
VDB  the finite base form of the verb do, do.
VDD  the past tense form of the verb do, did.
VDG  the -ing form of the verb do, doing.
VDI  the infinitive form of the verb do, do.
VDN  the past participle form of the verb do, done.
VDZ  the -s form of the verb do, does.
VHB  the finite base form of the verb have, have, ’ve.
VHD  the past tense form of the verb have, had, ’d.
VHG  the -ing form of the verb have, having.
VHI  the infinitive form of the verb have, have.
VHN  the past participle form of the verb have, had.
VHZ  the -s form of the verb have, has, ’s.
VM0  modal auxiliary verb, e.g. can, could, will, ’ll, ’d, wo (as in won’t).
VVB  the finite base form of lexical verbs, e.g. forget, send, live, return. This tag is used for imperatives and the present subjunctive forms, but not for the infinitive (VVI).
VVD  the past tense form of lexical verbs, e.g. forgot, sent, lived, returned.
VVG  the -ing form of lexical verbs, e.g. forgetting, sending, living, returning.
VVI  the infinitive form of lexical verbs, e.g. forget, send, live, return.
VVN  the past participle form of lexical verbs, e.g. forgotten, sent, lived, returned.
VVZ  the -s form of lexical verbs, e.g. forgets, sends, lives, returns.
XX0  the negative particle not or n’t.
ZZ0  alphabetical symbols, e.g. A, a, B, b, c, d.
PUL  left bracket (i.e. ( or [ )
PUN  any mark of separation ( . ! , : ; - ? ... )
PUQ  quotation mark ( ‘ ’ )
PUR  right bracket (i.e. ) or ] )

References and Resources

British National Corpus: http://www.natcorp.ox.ac.uk/
Technical Writing Project: http://www.technicalwritingproject.com/
AntConc: http://www.laurenceanthony.net/software.html
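
Supplementary sketch: combining POS-tags and regular expressions

The regular expression elements and POS-tags listed above can also be tried out outside AntConc. The following Python sketch is one possible illustration, not the workshop's own solution. It assumes the tagged files use the <w TAG>word format shown in the POS entry of the tag list; the sample sentence, the function names, and the exact patterns are hypothetical and may need adjusting to match how your sample files encode tags. AntConc's own regex flavor may also differ in details from Python's.

```python
import re

# Hypothetical tagged text in the "<w TAG>word" format shown in the tag list.
# The sentence itself is invented for illustration; it is not BNC data.
SAMPLE = (
    "<w DT0>This <w VBZ>is <w AV0>clearly <w AT0>a <w AJ0>technical "
    "<w NN1>report <w PNP>They <w VVB>research <w AJ0>early <w NN2>drafts "
    "<w AV0>often <w AT0>The <w NN1>research <w VBD>was <w AV0>quickly "
    "<w VVN>reviewed"
)


def adverbs(text):
    """Every word tagged AV0 (general adverb)."""
    return re.findall(r"<w AV0>(\w+)", text)


def ly_adverbs(text):
    """Words tagged AV0 that end in -ly; \\b marks the end of the word."""
    return re.findall(r"<w AV0>(\w*ly)\b", text)


def verb_research(text):
    """Forms of 'research' carrying any lexical-verb tag (VVB, VVD, ...)."""
    return re.findall(r"<w VV[BDGINZ]>(research\w*)", text)


def passives(text):
    """A form of 'be', an optional AV0 adverb, then a past participle (VVN)."""
    pattern = r"<w VB[BDGINZ]>(\w+)\s*(?:<w AV0>\w+\s*)?<w VVN>(\w+)"
    return re.findall(pattern, text)


if __name__ == "__main__":
    print(adverbs(SAMPLE))
    print(ly_adverbs(SAMPLE))
    print(verb_research(SAMPLE))
    print(passives(SAMPLE))
```

Because the patterns match on the tag as well as the word, early (tagged AJ0) is not returned as an -ly adverb, and the noun research (tagged NN1) is not returned as a verb. The passive pattern is deliberately narrow: it allows at most one intervening adverb and only lexical past participles, so it will miss other passive constructions.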