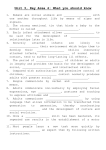

30/09/2016

Intro 2 Artificial Intelligence: Lecture 01
Introduction: definition of AI and agents
Lubica Benuskova
Reading: AIMA 2nd ed., Chapters 1 and 2

Textbook
• Textbook: Artificial Intelligence: A Modern Approach (3rd Edition), by Stuart Russell and Peter Norvig, Prentice Hall, USA, 2010. (I will call it AIMA.)
• http://aima.cs.berkeley.edu/ has many useful materials (PDFs of some chapters, program code, etc.).
• It is the leading textbook in AI, used in over 1200 universities in over 100 countries.

Course completion requirements (UUI)
1. Labs: L.I.S.T. http://capek.ii.fmph.uniba.sk/list/
   1. Mgr. Peter Gergeľ, email: [email protected]
   2. Mgr. Juraj Holas, email: [email protected]
2. Lectures at: http://dai.fmph.uniba.sk/~benus/UvodUI/
3. Assessment: labs 30 %, projects 20 %, exam 50 %.
4. Exam: written.
5. Dates: we will follow the regulations strictly. Exams are held only during the examination period, and for a resit you are entitled to use any of the posted dates.
6. The date marked as the last one really is the last one; there will be no additional date after it.
7. Grades are entered into the student record book (index) only during the grade-entry sessions designated for that purpose.

Schedule (syllabus)
1. Definition of AI, agent, simple agents, LEGO robots
2. Logical agents (propositional logic)
3. Search (DFS, BFS, etc.), games and adversarial search (Minimax, etc.)
4. Constraint-satisfaction problems (CSP)
5. Optimization and evolutionary robotics
6. Uncertainty, probability, naïve Bayes model, fuzzy logic
7. Regression and perceptron
8. Multi-layer perceptron (MLP) – theory
9. Multi-layer perceptron (MLP) – applications
10. Reinforcement learning
11. k-means, k-NN, SOM, PCA, applications
12. Spiking neural networks (SNN)
13. Robotics (closing the circle) and future of AI

What is Artificial Intelligence (AI)?
• Here is a fairly uncontroversial definition:
  – AI is the study and creation of machines that perform tasks normally associated with intelligence.
• People are interested in AI for several different reasons:
  – Cognitive scientists and psychologists are interested in finding out how people (i.e. our brains) work. Machine simulations can help with this task.
  – Engineers are interested in building machines which can do useful things. These things (often) require intelligence.
• The tasks to be performed could involve thinking, or acting, or some combination of these.

What is Intelligence?
• Intelligence has been defined in many different ways, including logic, abstract thought, understanding, self-awareness, communication, learning, having emotional knowledge, planning, and problem solving (Wikipedia, 2014).
• Intelligence derives from the Latin verb intelligere, meaning to comprehend or perceive. A form of this verb, intellectus, became the medieval technical term for understanding.
• Intelligence is a very general (mental) capability through which organisms possess the abilities to learn, form concepts, understand, and reason, including the capacities to recognise and categorise patterns, comprehend ideas, make plans, solve problems, and use language to communicate.

An embodied approach to AI
• How hard is it to build something like us?
• One way of measuring the difficulty of a task is to look at how long evolution in nature took to discover a "solution", i.e. us.
• Most of evolutionary time was spent designing robust systems for physical survival.
• In this light, what's 'distinctively human' seems relatively unimportant…

Embodied AI: starting from the beginning
• Proponents of embodied AI believe that in order to reproduce human intelligence, we need to retrace an evolutionary process:
  – We should begin by building robust systems that perform very simple tasks, but in the real world.
  – When we've solved this problem, we can progressively add new functionality to our systems, to allow them to perform more complex tasks.
  – At every stage, we need to have a robust, working real-world system.
• Maybe systems of this kind will provide a good framework on which to implement distinctively human capabilities.
• This line of thought is associated with a researcher called Rodney Brooks (Australian, living in USA).

Disciplines contributing to AI
• Artificial Intelligence (AI) has origins in several disciplines, some old, some recent:
  – Computer Science: Alan Turing, John von Neumann, …
  – Mathematics: notions of proof, algorithms, probability, …
  – Robotics (cybernetics): construction of embodied agents
  – Economics: formal theory of rational decisions
  – Neuroscience: study of the brain structure and function
  – Experimental psychology: models of human information processing
  – Linguistics: Noam Chomsky, language, concepts, …
  – Philosophy: 'Reasoning': Aristotle, Boole, …; 'The mind': Descartes, Locke, Hume, …

Agents and environments
• Recall our general definition of AI: AI is the study and creation of machines that perform tasks normally associated with intelligence.
• In this course we will use the term agent to refer to these machines.
  – The term agent is very general: it covers humans, robots, softbots, computer programs, vacuum cleaners, thermostats, etc.
  – An agent has a set of sensors, a set of actuators, and operates in an environment.
[Figure: an agent ("intellect") connected to its environment through sensors and actuators]

The agent function
• If we want, we can define an agent and its environment formally.
  – We can define a set of actions A which the agent can perform.
  – We can define a set of percepts P which the agent can receive. (Assume that there's one percept per time point.)
• A simple agent function could simply map from percepts to actions: f : P → A
• A more complex (and general) agent function maps from percept histories to actions, which allows us to model an agent with a memory for previous experience: f : P* → A
• The agent program runs on the physical architecture to produce f.

An example: the vacuum-cleaner world
• Here's a simple example: a formal definition of a robot vacuum cleaner, operating in a two-room environment (room A, room B).
  – Its sensors tell it which room it's in, and whether it's clean or dirty.
  – It can do four actions: move left, move right, suck, do-nothing.
[Figure: two adjacent rooms, Room A and Room B]

Preliminaries for defining the agent function
• To define the agent function, we need a syntax for percepts and actions.
  – Assume percepts have the form [location; state]: e.g. [A; Dirty].
  – Assume the following four actions: Left, Right, Suck, NoOp.
• We also need a way of specifying the function itself.
  – Assume a simple lookup table, which lists percept histories in the first column, and actions in the second column.

A vacuum-cleaner agent
• Here is an example agent function for the vacuum-cleaner agent:
  Percept history    Action
  …                  …
  …                  …
• And here is an agent's pseudo-code which implements this function:
[Figure: pseudo-code of the vacuum-cleaner agent]

Evaluating the agent function
• It is useful to evaluate the agent function, to see how well it performs.
• To do this, we need to specify a performance measure, which is defined as a function of the agent's environment over time.
• Some example performance measures:
  – one point per square cleaned up in time T?
  – one point per clean square per time step, minus one per move?
  – a penalty for more than k dirty squares?
  – etc.

Formalising the agent's environment
• As well as a formal description of the agent, we can give a formal description of its environment.
• Environments vary along several different dimensions:
  – Fully observable vs partially observable
  – Deterministic vs stochastic (i.e. involving chance)
  – Episodic vs sequential
  – Offline vs online
  – Discrete vs continuous
  – Single-agent vs multi-agent
• The environment type largely determines the agent design.

Interim summary
• Perception comprises the processes used to interpret the environment of an agent: processes that turn stimulation into meaningful features.
• Action is any process that changes the environment, including the position of the agent in the environment!
• In order to specify a scenario in which an agent performs a certain task, we need to define the so-called PEAS description of the agent/task, i.e.:
  – a Performance measure;
  – an Environment;
  – a set of Actuators;
  – a set of Sensors.

Some different types of agents
• Just as we can identify different types of environment, we can identify different types of agents.
• In order of increasing complexity/adaptability we have:
  – Simple reflex agents
  – Model-based agents (or reflex agents with state)
  – Goal-based agents
  – Utility-based agents
• All these can be turned into learning agents (which we will deal with in the second half of the semester).

Simple reflex agents
• A simple reflex agent is one whose behaviour at each moment is a function of its sensory inputs at that moment.
  – It has no memory for previous sensory inputs.
  – It doesn't maintain any state.
• For example, here's a simple reflex vacuum-cleaner agent:
[Figure: a simple reflex vacuum-cleaner agent]
• Some AI researchers are interested in modelling simple biological organisms like cockroaches, ants, etc.
• These creatures are basically simple reflex agents (cf. Lecture 1):
  – They sense the world, and their actions are linked directly to what they sense.
  – They don't have any internal representations of the world.
  – They don't do any 'reasoning'.
  – They don't do any 'planning'.
• Recall, the agent function for a simple reflex agent is the mapping from the current set of percepts to an action, i.e. f : P → A

Braitenberg vehicle
• One very simple simulated organism is a Braitenberg vehicle.
• The discs on the front are light sensors.
  – Lots of light → strong signal.
  – Less light → weaker signal.
• The sensors are connected to the motors driving the wheels in one of two ways: A – same-side connections; B – cross connections.
• From these simple conditions can you work out the behaviour?

Simple agents – complex behaviour
• Often you can get complex behaviour emerging from a simple action function being executed in a complex environment.
• If an agent is working in the real world, its behaviour is a result of interactions between its agent function and the environment it is in.
• We can distinguish between
  – Algorithmic complexity
  – Behavioural complexity
• It seems plausible to require that 'intelligent' behaviour be complex. However, this complexity could relate to the complexity of the environment and not the agent itself.

The subsumption architecture
• Rodney Brooks developed an influential model of the architecture of a reflex agent function. The basic idea is that there are lots of separate reflex functions, built on top of one another.
[Figure: layered reflex behaviours, e.g. "Grab object"]
• Each agent function f : P → A is called a behaviour.
  – e.g. 'wander around', 'avoid obstacle' are all concrete behaviours.
• One behaviour's output can override that of less urgent behaviours.
• The subsumption architecture can be nicely motivated from biology.
  – New, more sophisticated behaviours model new evolutionary developments of the organisms.
  – They sit on top of existing, more primitive control systems.
  – For instance, people have simple reflexes which override more complicated tasks they perform.

An example of the subsumption architecture
• Say you're building a robot whose task is to grab any drink can it sees.
• Behaviour 1: move around the environment at random.
• Behaviour 2: if you bump into an obstacle, inhibit Behaviour 1, and avoid the obstacle.
• Behaviour 3: if you see a drink can directly ahead of you, inhibit Behaviour 1, and extend your arm.
• Behaviour 4: if something appears between your fingers, inhibit Behaviour 3 and execute a grasp action.

Interactions between behaviours and the world
• Notice how the 'reach' and 'grasp' actions interact with one another:
  – There's no notion of a 'planned sequence' of actions, where the robot first reaches its arm and then grasps: the 'reach' and 'grasp' behaviours are entirely separate.
  – However, the physical consequences of executing the 'reach' behaviour happen to trigger the 'grasp' behaviour.
• The physical consequences of the 'reach' behaviour are due to:
  – the way the world is;
  – the way the robot's hardware is set up.
• Notice that if you just offered the robot a drink can, it would also behave appropriately (by grasping it).

Emergent behaviours
• Behaviours which result from the interaction between the agent function and the environment can be termed emergent behaviours.
• Some particularly interesting emergent behaviours occur when several agents are placed in the same environment.
  – The actions of each individual agent change the environment which the other agents perceive;
  – So they potentially influence the behaviour of all the other agents.
  – Swarming and flocking are good examples.
• It's very hard to experiment with emergent behaviours except by building simulations and seeing how they work. Often even simulations are not enough: we need to build real robots.

Embodied AI: importance of practical work
• Roboticists stress the importance of working in the physical world. It's not enough to develop a theory of how robots work, or to build simulations of a robot in an environment; you actually have to build them.
  – It's very hard to simulate all the relevant aspects of a robot's physical environment.
  – The physical world is far harder to work with than we might think: there are always unexpected problems.
  – There are also often unexpected benefits from working with real physical systems.
• So, we're going to do some practical work during labs with robots very similar to LEGO robots.

The LEGO Mindstorms project
• Researchers at the MIT Media Lab have been using LEGO for prototyping robotic systems for quite a long time.
  – One of these projects, headed by Fred Martin, led to a collaboration with LEGO, which resulted in the Mindstorms product.
  – The active collaboration between MIT researchers and LEGO continued for some years, and was quite productive.
• Mindstorms is now a very popular product, which is used by many schools and universities, and comes with a range of different operating systems and programming languages.
• We're now onto the second generation of Mindstorms, called NXT.

Mindstorms components 1
• As well as a lot of different ordinary LEGO pieces, the Mindstorms kit comes with some special ones.
• 1. The NXT brick: a microcontroller, with four inputs and three outputs.
  – The heart of the NXT is a 32-bit ARM microcontroller chip. This has a CPU, ROM, RAM and I/O routines.
  – To run, the chip needs an operating system. This is known as its firmware.
  – The chip also needs a program for the O/S to run. The program needs to be given in bytecode (one step up from assembler).
  – Both the firmware and the bytecode are downloaded onto the NXT from an ordinary computer, via a USB link.

Mindstorms components 2
• 2. A number of different sensors.
  – Two touch sensors: basically on-off buttons.
    • These are often connected to whisker sensors.
  – A light sensor, which detects light intensity.
    • This can be used either to pick up ambient light, or to detect the reflectance of a (close) object.
    • The sensor shines a beam of light outwards. If there's a close object, it picks up the light reflected off the object in question.
  – A sonar sensor, which calculates the distance of surfaces in front of it.
  – A microphone, which records sound intensity.

Mindstorms components 3
• 3. Three servomotors (actuators).
  – The motors all deliver rotational force (i.e. torque).
  – Motors can be programmed to turn at particular speeds, either forwards or backwards.
  – They can also be programmed to lock, or to freewheel.
  – Servomotors come with rotation sensors. So, they can be programmed to rotate a precise angle and then stop.

A simple mobile robot
• The robots you will be working with all have the same design.
  – They're navigational robots, i.e. they move around in their environment (as contrasted with e.g. manipulatory robots).
  – They are turtles: they have a chassis with two separately controllable wheels at the front, and a pivot wheel at the back.
  – They have a light sensor underneath, for sensing the 'colour' of the ground at this point.
  – They have two whisker sensors on the front, for sensing contact with objects 'to the left' and 'to the right'.
  – They also have a microphone and a sonar device (but there's only space to plug in one of these at a time).
• What commands would you need to give to a turtle to make it turn?

The NXC programming language
• We'll be programming the robots using a language based on C, called NXC. Here's a simple example program.
[Figure: a simple example NXC program]

The NXC program development and execution
• Program development cycle:
  – Turn the robot on, and connect it to your machine's USB port.
  – Start the NXTCC app. (It's in the Applications folder.)
  – Open the editor, write some NXC source code, save it.
  – Then compile the code. ('Compile' menu button in the editor)
  – When this compiles cleanly, it creates a bytecode file called [filename].sym.
  – When you've got it compiling cleanly, download the bytecode file to your robot. ('Download' menu button in the editor)
• See http://www.cs.otago.ac.nz/cosc343/lego.php for more info.
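The question of how to make a turtle turn can be explored with a small numerical sketch. The block below is written in Python rather than NXC, purely for illustration. It assumes the standard differential-drive model for a chassis with two separately controllable wheels; the function name `turtle_motion`, the parameter names, and the 0.12 m distance between the wheels are hypothetical and are not taken from the course robots.

```python
def turtle_motion(left_speed, right_speed, track_width=0.12):
    """Differential-drive kinematics for a two-wheeled turtle (illustrative).

    left_speed, right_speed: ground speed of each drive wheel in m/s
        (negative values mean the wheel runs backwards).
    track_width: distance between the two drive wheels in metres
        (assumed value, not measured on a real robot).

    Returns (forward_speed, turn_rate): forward speed of the chassis in m/s
    and turn rate in rad/s, positive meaning a turn to the left.
    """
    forward_speed = (left_speed + right_speed) / 2.0
    turn_rate = (right_speed - left_speed) / track_width
    return forward_speed, turn_rate


# Equal wheel speeds: the turtle drives straight ahead.
straight = turtle_motion(0.1, 0.1)

# Opposite wheel speeds: the turtle spins on the spot, turning left.
spin_left = turtle_motion(-0.1, 0.1)

# One wheel stopped: the turtle pivots around that wheel, turning right.
pivot_right = turtle_motion(0.1, 0.0)
```

Under this model a turn is produced by any difference between the two wheel speeds: running the motors in opposite directions turns the robot on the spot, while stopping one motor makes it pivot around the stationary wheel.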
Threads in NXC
• Threads are called tasks.
• Every program has to contain a task called main. This is started when the program is executed. Threads can then be started and halted by program commands.
• Mutex variables are used to synchronise tasks.
• In the example, the main thread initiates two tasks, by placing them in a ready queue, and then exits:
[Figure: example NXC program with a main task that starts two tasks]

NXC threads and the subsumption architecture
• Given that NXC is multithreaded, it's possible to run several different behaviours (tasks) simultaneously.
• Each behaviour can be looking for input, and computing output.
• Behaviours can also trigger other behaviours, suppress their output, and so on.
• So it's possible to implement an architecture very much like Brooks' subsumption architecture on the LEGO robots.

Subroutines in NXC
• NXC supports subroutines, with a pretty standard syntax:
[Figure: example NXC subroutine]
• Other standard things:
  – Variables: declaration, initialisation
  – Data structures: arrays
  – Control structures: if, do, while, for, etc.
  – Arithmetic expressions
  – The preprocessor: #define, #include

NXC documentation
• On the 343 webpage: links to the NXT and NXC home pages and detailed information about how to develop and download NXC code.
• Two other useful guides:
  – Programming LEGO NXT robots using NXC, by Daniele Benedettelli
  – NXC programmer's guide, by John Hansen (the creator of NXC).

Challenge
• The physical capabilities of a LEGO robot are very limited.
  – The information about the world which the robot receives via its sensors is minimal.
  – It has very simple motor capabilities.
  – It doesn't have much memory (256K FLASH, 64K RAM).
• So, the challenge is this: to program this simple robot to produce complex behaviours.
• Thus, we are going to do some practical exercises.

Model-based / Reflex agents with state
• We can modify a reflex agent to maintain a state.
  – 'Important' sensory inputs can cause changes in the agent's state, while other ('irrelevant') inputs can leave its state unchanged.
  – A state can be a complex representation (model) of the world.
  – The agent's actions can now be based on a combination of its current state and its current sensory inputs:
[Figure: model-based agent]

Goal-based agents
• A reflex agent may behave as if it has goals, but it doesn't represent them explicitly. This means it can't reason about how to achieve its goals.
• A goal-based agent is a huge step up. In this kind of agent,
  – One or more goal states are defined.
  – The agent's state includes a representation of the state of the world.
  – The agent has the ability to reason about the consequences of its actions, along the following lines:
    • 'In state S1, if I do action A1, the next state will be S2'
• Now the agent can search amongst all the actions in its repertoire for an action which achieves one of its goals.
[Figure: goal-based agent]

Utility-based agents
• A utility function maps a new state, which is a consequence of an action A, onto a single number: a measure of the agent's happiness.
• The agent's happiness is inversely related to the cost of the actions, and influences the choice of subsequent actions.
[Figure: utility-based agent]

Learning agent
[Figure: learning agent]

Summary and next reading
• In this lecture (reading AIMA 2nd ed., chapters 1 and 2, chap. 25.7):
  – We looked at definitions of intelligence and AI
  – We introduced a formal definition of an agent
  – We introduced a taxonomy of different environment types
  – We looked at some different agent architectures
• Next lecture, we will introduce logical agents, i.e. model-based agents based on propositional logic.
• Next lecture & lab reading: AIMA 2nd ed., Chap. 7.1 – 7.5.
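The goal-based reasoning described in this lecture ('In state S1, if I do action A1, the next state will be S2') can be made concrete for the two-room vacuum-cleaner world. The following is a minimal Python sketch, not the pseudo-code from the slides. The state encoding `(location, a_dirty, b_dirty)`, the helper names `result`, `is_goal` and `plan`, and the use of breadth-first search are all assumptions made for illustration; only the four action names come from the lecture.

```python
from collections import deque

# The four actions named in the lecture.
ACTIONS = ("Left", "Right", "Suck", "NoOp")


def result(state, action):
    """Transition model: the state that follows from doing `action` in `state`.

    A state is a tuple (location, a_dirty, b_dirty), where location is "A"
    or "B" and the two flags say whether each room is dirty (assumed encoding).
    """
    location, a_dirty, b_dirty = state
    if action == "Left":
        return ("A", a_dirty, b_dirty)
    if action == "Right":
        return ("B", a_dirty, b_dirty)
    if action == "Suck":
        if location == "A":
            return (location, False, b_dirty)
        return (location, a_dirty, False)
    return state  # NoOp leaves the world unchanged


def is_goal(state):
    """Goal test: both rooms are clean."""
    _, a_dirty, b_dirty = state
    return not a_dirty and not b_dirty


def plan(start):
    """Breadth-first search for a shortest action sequence that reaches a goal."""
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, actions = frontier.popleft()
        if is_goal(state):
            return actions
        for action in ACTIONS:
            next_state = result(state, action)
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, actions + [action]))
    return None  # no action sequence reaches a goal state


# The agent starts in room A with both rooms dirty.
steps = plan(("A", True, True))
```

Unlike a simple reflex agent, this agent never maps a percept directly to an action: it uses its model of the world (`result`) to predict the consequences of actions and chooses a sequence that ends in a goal state. A utility-based agent could be sketched along the same lines by scoring each predicted state with a number instead of applying a yes/no goal test.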