Evolutionary Computation: Genetic Algorithms

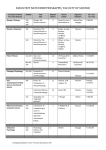

Lecture 9
Evolutionary Computation: Genetic algorithms

■ Introduction, or can evolution be intelligent?
■ Simulation of natural evolution
■ Genetic algorithms
■ Case study: maintenance scheduling with genetic algorithms
■ Summary

Negnevitsky, Pearson Education, 2002

Can evolution be intelligent?

■ Intelligence can be defined as the capability of a system to adapt its behaviour to an ever-changing environment. According to Alan Turing, the form or appearance of a system is irrelevant to its intelligence.

■ Evolutionary computation simulates evolution on a computer. The result of such a simulation is a series of optimisation algorithms, usually based on a simple set of rules. Optimisation iteratively improves the quality of solutions until an optimal, or at least feasible, solution is found.

■ The behaviour of an individual organism is an inductive inference about some yet unknown aspects of its environment. If, over successive generations, the organism survives, we can say that this organism is capable of learning to predict changes in its environment.

■ The evolutionary approach is based on computational models of natural selection and genetics. We call them evolutionary computation, an umbrella term that combines genetic algorithms, evolution strategies and genetic programming.

Simulation of natural evolution

■ On 1 July 1858, Charles Darwin presented his theory of evolution before the Linnean Society of London. This day marks the beginning of a revolution in biology.

■ Darwin’s classical theory of evolution, together with Weismann’s theory of natural selection and Mendel’s concept of genetics, now represents the neo-Darwinian paradigm.

■ Neo-Darwinism is based on processes of reproduction, mutation, competition and selection. The power to reproduce appears to be an essential property of life.
The power to mutate is also guaranteed in any living organism that reproduces itself in a continuously changing environment. Processes of competition and selection normally take place in the natural world, where expanding populations of different species are limited by a finite space.

■ Evolution can be seen as a process leading to the maintenance of a population’s ability to survive and reproduce in a specific environment. This ability is called evolutionary fitness.

■ Evolutionary fitness can also be viewed as a measure of the organism’s ability to anticipate changes in its environment.

■ The fitness, or the quantitative measure of the ability to predict environmental changes and respond adequately, can be considered as the quality that is optimised in natural life.

How is a population with increasing fitness generated?

■ Let us consider a population of rabbits. Some rabbits are faster than others, and we may say that these rabbits possess superior fitness, because they have a greater chance of avoiding foxes, surviving and then breeding.

■ If two parents have superior fitness, there is a good chance that a combination of their genes will produce an offspring with even higher fitness. Over time the entire population of rabbits becomes faster to meet their environmental challenges in the face of foxes.

Simulation of natural evolution

■ All methods of evolutionary computation simulate natural evolution by creating a population of individuals, evaluating their fitness, generating a new population through genetic operations, and repeating this process a number of times.

■ We will start with Genetic Algorithms (GAs) as most of the other evolutionary algorithms can be viewed as variations of genetic algorithms.

Genetic Algorithms

■ In the early 1970s, John Holland introduced the concept of genetic algorithms.
His aim was to make computers do what nature does. Holland was concerned with algorithms that manipulate strings of binary digits.

■ Each artificial “chromosome” consists of a number of “genes”, and each gene is represented by 0 or 1:

1 0 1 1 0 1 0 0 0 0 0 1 0 1 0 1

■ Nature has an ability to adapt and learn without being told what to do. In other words, nature finds good chromosomes blindly. GAs do the same. Two mechanisms link a GA to the problem it is solving: encoding and evaluation.

■ The GA uses a measure of fitness of individual chromosomes to carry out reproduction. As reproduction takes place, the crossover operator exchanges parts of two single chromosomes, and the mutation operator changes the gene value in some randomly chosen location of the chromosome.

Basic genetic algorithms

Step 1: Represent the problem variable domain as a chromosome of a fixed length, choose the size of a chromosome population N, the crossover probability pc and the mutation probability pm.

Step 2: Define a fitness function to measure the performance, or fitness, of an individual chromosome in the problem domain. The fitness function establishes the basis for selecting chromosomes that will be mated during reproduction.

Step 3: Randomly generate an initial population of chromosomes of size N: x1, x2, ..., xN

Step 4: Calculate the fitness of each individual chromosome: f(x1), f(x2), ..., f(xN)

Step 5: Select a pair of chromosomes for mating from the current population. Parent chromosomes are selected with a probability related to their fitness.

Step 6: Create a pair of offspring chromosomes by applying the genetic operators: crossover and mutation.

Step 7: Place the created offspring chromosomes in the new population.
Step 8: Repeat Step 5 until the size of the new chromosome population becomes equal to the size of the initial population, N.

Step 9: Replace the initial (parent) chromosome population with the new (offspring) population.

Step 10: Go to Step 4, and repeat the process until the termination criterion is satisfied.

Genetic algorithms

■ A GA represents an iterative process. Each iteration is called a generation. A typical number of generations for a simple GA can range from 50 to over 500. The entire set of generations is called a run.

■ Because GAs use a stochastic search method, the fitness of a population may remain stable for a number of generations before a superior chromosome appears.

■ A common practice is to terminate a GA after a specified number of generations and then examine the best chromosomes in the population. If no satisfactory solution is found, the GA is restarted.

Genetic algorithms: case study

A simple example will help us to understand how a GA works. Let us find the maximum value of the function $15x - x^2$ where parameter x varies between 0 and 15. For simplicity, we may assume that x takes only integer values. Thus, chromosomes can be built with only four genes:

| Integer | Binary code | Integer | Binary code | Integer | Binary code |
|---|---|---|---|---|---|
| 1 | 0001 | 6 | 0110 | 11 | 1011 |
| 2 | 0010 | 7 | 0111 | 12 | 1100 |
| 3 | 0011 | 8 | 1000 | 13 | 1101 |
| 4 | 0100 | 9 | 1001 | 14 | 1110 |
| 5 | 0101 | 10 | 1010 | 15 | 1111 |

Suppose that the size of the chromosome population N is 6, the crossover probability pc equals 0.7, and the mutation probability pm equals 0.001.
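The ten steps and the example parameters above can be put together in a short Python sketch. It is only an illustration under stated assumptions: the function being maximised, 15x − x², is used directly as the fitness, mutation is applied gene by gene with probability pm, and the names (`run_ga`, `select`, `crossover`, `mutate`) and the fixed random seed are hypothetical choices, not part of the lecture.

```python
import random

CHROMOSOME_LENGTH = 4   # four genes encode the integers 0..15
POPULATION_SIZE = 6     # N
CROSSOVER_PROB = 0.7    # pc
MUTATION_PROB = 0.001   # pm


def decode(chromosome):
    """Convert a bit string such as '0111' to its integer value."""
    return int(chromosome, 2)


def fitness(chromosome):
    """Assumed fitness: the function being maximised, 15x - x^2."""
    x = decode(chromosome)
    return 15 * x - x * x


def select(population, rng):
    """Step 5: pick a parent with probability proportional to its fitness."""
    weights = [fitness(c) for c in population]
    if sum(weights) == 0:  # every chromosome has zero fitness: choose uniformly
        return rng.choice(population)
    return rng.choices(population, weights=weights, k=1)[0]


def crossover(a, b, rng):
    """Step 6: single-point crossover; the parents are cloned otherwise."""
    if rng.random() < CROSSOVER_PROB:
        point = rng.randint(1, CHROMOSOME_LENGTH - 1)
        return a[:point] + b[point:], b[:point] + a[point:]
    return a, b


def mutate(chromosome, rng):
    """Step 6: flip each gene independently with probability pm (assumption)."""
    return "".join(
        ("1" if gene == "0" else "0") if rng.random() < MUTATION_PROB else gene
        for gene in chromosome
    )


def run_ga(generations=50, seed=0):
    rng = random.Random(seed)
    # Step 3: random initial population of N chromosomes.
    population = [
        "".join(rng.choice("01") for _ in range(CHROMOSOME_LENGTH))
        for _ in range(POPULATION_SIZE)
    ]
    for _ in range(generations):  # Step 10: fixed number of generations
        new_population = []
        while len(new_population) < POPULATION_SIZE:  # Steps 5 to 8
            parent_a = select(population, rng)
            parent_b = select(population, rng)
            child_a, child_b = crossover(parent_a, parent_b, rng)
            new_population += [mutate(child_a, rng), mutate(child_b, rng)]
        population = new_population  # Step 9: offspring replace parents
    return population


if __name__ == "__main__":
    final = run_ga()
    best = max(final, key=fitness)
    print(best, decode(best), fitness(best))
```

With only six chromosomes and such a low mutation rate, a run can settle on a chromosome that is not the optimum, which is why the lecture recommends examining the best chromosomes after a fixed number of generations and restarting if necessary.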
The fitness function in our example is defined by

$$f(x) = 15x - x^2$$

The fitness function and chromosome locations

| Chromosome label | Chromosome string | Decoded integer | Chromosome fitness | Fitness ratio, % |
|---|---|---|---|---|
| X1 | 1100 | 12 | 36 | 16.5 |
| X2 | 0100 | 4 | 44 | 20.2 |
| X3 | 0001 | 1 | 14 | 6.4 |
| X4 | 1110 | 14 | 14 | 6.4 |
| X5 | 0111 | 7 | 56 | 25.7 |
| X6 | 1001 | 9 | 54 | 24.8 |

[Figure: f(x) plotted against x for x from 0 to 15. (a) Chromosome initial locations. (b) Chromosome final locations.]

■ In natural selection, only the fittest species can survive, breed, and thereby pass their genes on to the next generation. GAs use a similar approach, but unlike nature, the size of the chromosome population remains unchanged from one generation to the next.

■ The last column in the table shows the ratio of the individual chromosome’s fitness to the population’s total fitness. This ratio determines the chromosome’s chance of being selected for mating. The chromosome’s average fitness improves from one generation to the next.

Roulette wheel selection

The most commonly used chromosome selection technique is roulette wheel selection.

[Figure: roulette wheel with segment boundaries at 0, 16.5, 36.7, 43.1, 49.5, 75.2 and 100. Segment sizes: X1 16.5%, X2 20.2%, X3 6.4%, X4 6.4%, X5 25.7%, X6 24.8%.]

Crossover operator

■ In our example, we have an initial population of 6 chromosomes. Thus, to establish the same population in the next generation, the roulette wheel would be spun six times.

■ Once a pair of parent chromosomes is selected, the crossover operator is applied.

■ First, the crossover operator randomly chooses a crossover point where two parent chromosomes “break”, and then exchanges the chromosome parts after that point. As a result, two new offspring are created.
■ If a pair of chromosomes does not cross over, then chromosome cloning takes place, and the offspring are created as exact copies of each parent.

[Figure: crossover. Parent pairs: X6i (1001) with X2i (0100), X1i (1100) with X5i (0111), and X2i (0100) with X5i (0111).]

Mutation operator

■ Mutation represents a change in the gene.

■ Mutation is a background operator. Its role is to provide a guarantee that the search algorithm is not trapped on a local optimum.

■ The mutation operator flips a randomly selected gene in a chromosome.

■ The mutation probability is quite small in nature, and is kept low for GAs, typically in the range between 0.001 and 0.01.

[Figure: mutation. X1'i (1111) becomes X1"i (1011) and X2i (0100) becomes X2"i (0110); X6'i (1000), X2'i (0101), X5'i (0100) and X5i (0111) are unchanged.]

The genetic algorithm cycle

| Generation i | String | Fitness | Generation (i + 1) | String | Fitness |
|---|---|---|---|---|---|
| X1i | 1100 | f = 36 | X1i+1 | 1000 | f = 56 |
| X2i | 0100 | f = 44 | X2i+1 | 0101 | f = 50 |
| X3i | 0001 | f = 14 | X3i+1 | 1011 | f = 44 |
| X4i | 1110 | f = 14 | X4i+1 | 0100 | f = 44 |
| X5i | 0111 | f = 56 | X5i+1 | 0110 | f = 54 |
| X6i | 1001 | f = 54 | X6i+1 | 0111 | f = 56 |

[Figure: the genetic algorithm cycle. Generation i passes through crossover and mutation to give generation (i + 1).]

Genetic algorithms: case study

■ Suppose it is desired to find the maximum of the “peak” function of two variables:

$$f(x, y) = (1 - x)^2 e^{-x^2 - (y + 1)^2} - (x - x^3 - y^3) e^{-x^2 - y^2}$$

where parameters x and y vary between −3 and 3.
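As a small illustration, the “peak” function can be evaluated in Python as follows. The sketch assumes the function has the form f(x, y) = (1 − x)² exp(−x² − (y + 1)²) − (x − x³ − y³) exp(−x² − y²); the name `peak` is a hypothetical choice.

```python
import math


def peak(x, y):
    """Assumed form of the "peak" function on -3 <= x, y <= 3."""
    first = (1 - x) ** 2 * math.exp(-x ** 2 - (y + 1) ** 2)
    second = (x - x ** 3 - y ** 3) * math.exp(-x ** 2 - y ** 2)
    return first - second


if __name__ == "__main__":
    # Evaluate the surface at a few sample points inside the search range.
    for point in [(0.0, 0.0), (1.0, 0.0), (-0.6, -0.8)]:
        print(point, peak(*point))
```

A GA would call such a function on the decoded values of x and y to obtain each chromosome's fitness.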
■ The first step is to represent the problem variables as a chromosome, with parameters x and y as a concatenated binary string:

1 0 0 0 1 0 1 0 (x)   0 0 1 1 1 0 1 1 (y)

■ We also choose the size of the chromosome population, for instance 6, and randomly generate an initial population.

■ The next step is to calculate the fitness of each chromosome. This is done in two stages.

■ First, a chromosome, that is a string of 16 bits, is partitioned into two 8-bit strings: 1 0 0 0 1 0 1 0 and 0 0 1 1 1 0 1 1

■ Then these strings are converted from binary (base 2) to decimal (base 10):

$$(10001010)_2 = 1 \times 2^7 + 0 \times 2^6 + 0 \times 2^5 + 0 \times 2^4 + 1 \times 2^3 + 0 \times 2^2 + 1 \times 2^1 + 0 \times 2^0 = (138)_{10}$$

and

$$(00111011)_2 = 0 \times 2^7 + 0 \times 2^6 + 1 \times 2^5 + 1 \times 2^4 + 1 \times 2^3 + 0 \times 2^2 + 1 \times 2^1 + 1 \times 2^0 = (59)_{10}$$

■ Now the range of integers that can be handled by 8 bits, that is the range from 0 to $2^8 - 1$, is mapped to the actual range of parameters x and y, that is the range from −3 to 3:

$$\frac{6}{256 - 1} = 0.0235294$$

■ To obtain the actual values of x and y, we multiply their decimal values by 0.0235294 and subtract 3 from the results:

$$x = (138)_{10} \times 0.0235294 - 3 = 0.2470588$$

and

$$y = (59)_{10} \times 0.0235294 - 3 = -1.6117647$$

■ Using decoded values of x and y as inputs in the mathematical function, the GA calculates the fitness of each chromosome.

■ To find the maximum of the “peak” function, we will use crossover with the probability equal to 0.7 and mutation with the probability equal to 0.001. As we mentioned earlier, a common practice in GAs is to specify the number of generations. Suppose the desired number of generations is 100. That is, the GA will create 100 generations of 6 chromosomes before stopping.
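The two-stage decoding described above (partition the 16-bit string, convert each half to decimal, then scale to the range from −3 to 3) can be sketched in Python. The function name `decode_chromosome` and its keyword parameters are hypothetical; only the arithmetic comes from the lecture.

```python
def decode_chromosome(bits, low=-3.0, high=3.0, bits_per_param=8):
    """Split a bit string into x and y parts and map each to [low, high]."""
    step = (high - low) / (2 ** bits_per_param - 1)  # 6 / 255 = 0.0235294...
    x_int = int(bits[:bits_per_param], 2)            # first 8 bits encode x
    y_int = int(bits[bits_per_param:], 2)            # last 8 bits encode y
    return x_int * step + low, y_int * step + low


if __name__ == "__main__":
    x, y = decode_chromosome("1000101000111011")
    print(round(x, 7), round(y, 7))
```

For the chromosome used in the lecture, the two halves decode to 138 and 59, so the sketch should reproduce the values of x and y derived above up to rounding.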
[Figure: chromosome locations on the surface of the “peak” function: initial population]

[Figure: chromosome locations on the surface of the “peak” function: first generation]

[Figure: chromosome locations on the surface of the “peak” function: local maximum]

[Figure: chromosome locations on the surface of the “peak” function: global maximum]

[Figure: performance graph (best and average fitness against generations) for 100 generations of 6 chromosomes, pc = 0.7, pm = 0.001: local maximum]

[Figure: performance graph (best and average fitness against generations) for 100 generations of 6 chromosomes, pc = 0.7, pm = 0.01: global maximum]

[Figure: performance graph (best and average fitness against generations) for 20 generations of 60 chromosomes, pc = 0.7, pm = 0.001]

Case study: maintenance scheduling

■ Maintenance scheduling problems are usually solved using a combination of search techniques and heuristics.

■ These problems are complex and difficult to solve.

■ They are NP-complete and cannot be solved by combinatorial search techniques.

■ Scheduling involves competition for limited resources, and is complicated by a great number of badly formalised constraints.

Steps in the GA development

1. Specify the problem, define constraints and optimum criteria;
2. Represent the problem domain as a chromosome;
3. Define a fitness function to evaluate the chromosome performance;
4. Construct the genetic operators;
5. Run the GA and tune its parameters.
Case study: scheduling of 7 units in 4 equal intervals

The problem constraints:

■ The maximum loads expected during four intervals are 80, 90, 65 and 70 MW;

■ Maintenance of any unit starts at the beginning of an interval and finishes at the end of the same or adjacent interval. The maintenance cannot be aborted or finished earlier than scheduled;

■ The net reserve of the power system must be greater than or equal to zero at any interval.

The optimum criterion is the maximum of the net reserve at any maintenance period.

Case study: unit data and maintenance requirements

| Unit number | Unit capacity, MW | Number of intervals required for unit maintenance |
|---|---|---|
| 1 | 20 | 2 |
| 2 | 15 | 2 |
| 3 | 35 | 1 |
| 4 | 40 | 1 |
| 5 | 15 | 1 |
| 6 | 15 | 1 |
| 7 | 10 | 1 |

Case study: unit gene pools

| Unit | Gene pool |
|---|---|
| Unit 1 | 1100, 0110, 0011 |
| Unit 2 | 1100, 0110, 0011 |
| Unit 3 | 1000, 0100, 0010, 0001 |
| Unit 4 | 1000, 0100, 0010, 0001 |
| Unit 5 | 1000, 0100, 0010, 0001 |
| Unit 6 | 1000, 0100, 0010, 0001 |
| Unit 7 | 1000, 0100, 0010, 0001 |

Chromosome for the scheduling problem

| Unit 1 | Unit 2 | Unit 3 | Unit 4 | Unit 5 | Unit 6 | Unit 7 |
|---|---|---|---|---|---|---|
| 0110 | 0011 | 0001 | 1000 | 0100 | 0010 | 1000 |

Case study: the crossover operator

| | Unit 1 | Unit 2 | Unit 3 | Unit 4 | Unit 5 | Unit 6 | Unit 7 |
|---|---|---|---|---|---|---|---|
| Parent 1 | 0110 | 0011 | 0001 | 1000 | 0100 | 0010 | 1000 |
| Parent 2 | 1100 | 0110 | 0100 | 0001 | 0010 | 1000 | 0100 |
| Child 1 | 0110 | 0011 | 0001 | 1000 | 0010 | 1000 | 0100 |
| Child 2 | 1100 | 0110 | 0100 | 0001 | 0100 | 0010 | 1000 |

Case study: the mutation operator

| | Unit 1 | Unit 2 | Unit 3 | Unit 4 | Unit 5 | Unit 6 | Unit 7 |
|---|---|---|---|---|---|---|---|
| Before mutation | 1100 | 0110 | 0100 | 0001 | 0100 | 0010 | 1000 |
| After mutation | 1100 | 0110 | 0001 | 0001 | 0100 | 0010 | 1000 |

[Figure: performance graph (best and average fitness against generations) and the best maintenance schedule created in a population of 20 chromosomes, N = 20, pc = 0.7, pm = 0.001. (a) 50 generations; net reserves in the four time intervals: 15, 35, 35 and 25 MW.]

[Figure: performance graph (best and average fitness against generations) and the best maintenance schedule created in a population of 20 chromosomes, N = 20, pc = 0.7, pm = 0.001. (b) 100 generations.]

[Figure: performance graph (best and average fitness against generations) and the best maintenance schedule created in a population of 100 chromosomes, N = 100, pc = 0.7, pm = 0.001. (a) Mutation rate is 0.001; net reserves in the four time intervals: 35, 25, 25 and 25 MW.]

[Figure: performance graph (best and average fitness against generations) and the best maintenance schedule created in a population of 100 chromosomes, N = 100, pc = 0.7, pm = 0.01. (b) Mutation rate is 0.01; net reserves in the four time intervals: 25, 25, 30 and 30 MW.]
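The net reserves of a schedule follow from the unit capacities, the maximum loads and the chromosome encoding given in the case study. The Python sketch below evaluates the example chromosome for the scheduling problem. It assumes that the net reserve in an interval is the total installed capacity minus the capacity under maintenance minus the maximum load, and that a chromosome's fitness is its lowest net reserve across the four intervals; the lecture does not spell out the evaluation function, so both the formula and the names (`net_reserves`, `schedule_fitness`) are illustrative assumptions.

```python
CAPACITY_MW = [20, 15, 35, 40, 15, 15, 10]  # units 1..7, from the unit data
MAX_LOAD_MW = [80, 90, 65, 70]              # intervals 1..4
GENE_LENGTH = 4                             # one bit per interval for each unit


def net_reserves(chromosome):
    """Return the net reserve (MW) in each interval for a 28-bit schedule."""
    genes = [
        chromosome[i:i + GENE_LENGTH]
        for i in range(0, len(chromosome), GENE_LENGTH)
    ]
    total_capacity = sum(CAPACITY_MW)
    reserves = []
    for interval, load in enumerate(MAX_LOAD_MW):
        # A '1' in a unit's gene means the unit is under maintenance then.
        under_maintenance = sum(
            capacity
            for capacity, gene in zip(CAPACITY_MW, genes)
            if gene[interval] == "1"
        )
        reserves.append(total_capacity - under_maintenance - load)
    return reserves


def schedule_fitness(chromosome):
    """Assumed fitness: the smallest net reserve over all intervals."""
    return min(net_reserves(chromosome))


if __name__ == "__main__":
    # Example chromosome from the case study, units 1 to 7 in order.
    example = "".join(["0110", "0011", "0001", "1000", "0100", "0010", "1000"])
    print(net_reserves(example), schedule_fitness(example))
```

Under these assumptions a schedule that violates the reserve constraint shows up as a negative value, and a GA maximising this fitness pushes the worst interval upwards, in line with the stated optimum criterion.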