* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Repeated Measures ANOVA

Survey

Document related concepts

Transcript

Chapter 5-18. Correlated Data: Repeated Measures ANOVA

Before generalized estimating equations (GEE) and multilevel models became popular,

researchers analyzed repeated measures data using repeated measures analysis of variance

(repeated measures ANOVA).

There is no need to use repeated measures ANOVA anymore, because GEE regression models

and multilevel regression models are easier to fit and are not as limited.

Still, it is useful to know about repeated measures ANOVA since these models occasionally

shown up in published papers (e.g., Tonetti et al, N Engl J Med, 2007). These analyses were the

standard approach to repeated measures analysis a few dacades ago, before the new approaches

were developed.

Isoproterenol Dataset

We will use the 11.2.Isoproterenol.dta dataset provided with the Dupont (2002, p.338) textbook,

described as,

“Lang et al. (1995) studied the effect of isoproterenol, a β-adrenergic agonist, on forearm

blood flow in a group of 22 normotensive men. Nine of the study subjects were black and

13 were white. Each subject’s blood flow was measured at baseline and then at

escalating doses of isoproterenol.”

Reading the data in,

File

Open

Find the directory where you copied the course CD

Find the subdirectory datasets & do-files

Single click on 11.2.Isoproterenol.dta

Open

use "C:\Documents and Settings\u0032770.SRVR\Desktop\

regressionclass\datasets & do-files\progesterone.dta", clear

which must be all on one line, or use:

cd "C:\Documents and Settings\u0032770.SRVR\Desktop\"

cd "Biostats & Epi With Stata\datasets & do-files"

use 11.2.Isoproterenol.dta, clear

_________________

Source: Stoddard GJ. Biostatistics and Epidemiology Using Stata: A Course Manual [unpublished manuscript] University of Utah

School of Medicine, 2010.

Chapter 5-18 (revision 16 May 2010)

p. 1

Listing the data,

Data

Describe data

List data

Options tab: Display numeric codes rather than label values

OK

list , nolabel

1.

2.

3.

4.

5.

6.

7.

8.

9.

10.

11.

12.

13.

14.

15.

16.

17.

18.

19.

20.

21.

22.

+---------------------------------------------------------------------+

| id

race

fbf0

fbf10

fbf20

fbf60

fbf150

fbf300

fbf400 |

|---------------------------------------------------------------------|

| 1

1

1

1.4

6.4

19.1

25

24.6

28 |

| 2

1

2.1

2.8

8.3

15.7

21.9

21.7

30.1 |

| 3

1

1.1

2.2

5.7

8.2

9.3

12.5

21.6 |

| 4

1

2.44

2.9

4.6

13.2

17.3

17.6

19.4 |

| 5

1

2.9

3.5

5.7

11.5

14.9

19.7

19.3 |

|---------------------------------------------------------------------|

| 6

1

4.1

3.7

5.8

19.8

17.7

20.8

30.3 |

| 7

1

1.24

1.2

3.3

5.3

5.4

10.1

10.6 |

| 8

1

3.1

.

.

15.45

.

.

31.3 |

| 9

1

5.8

8.8

13.2

33.3

38.5

39.8

43.3 |

| 10

1

3.9

6.6

9.5

20.2

21.5

30.1

29.6 |

|---------------------------------------------------------------------|

| 11

1

1.91

1.7

6.3

9.9

12.6

12.7

15.4 |

| 12

1

2

2.3

4

8.4

8.3

12.8

16.7 |

| 13

1

3.7

3.9

4.7

10.5

14.6

20

21.7 |

| 14

2

2.46

2.7

2.54

3.95

4.16

5.1

4.16 |

| 15

2

2

1.8

4.22

5.76

7.08

10.92

7.08 |

|---------------------------------------------------------------------|

| 16

2

2.26

3

2.99

4.07

3.74

4.58

3.74 |

| 17

2

1.8

2.9

3.41

4.84

7.05

7.48

7.05 |

| 18

2

3.13

4

5.33

7.31

8.81

11.09

8.81 |

| 19

2

1.36

2.7

3.05

4

4.1

6.95

4.1 |

| 20

2

2.82

2.6

2.63

10.03

9.6

12.65

9.6 |

|---------------------------------------------------------------------|

| 21

2

1.7

1.6

1.73

2.96

4.17

6.04

4.17 |

| 22

2

2.1

1.9

3

4.8

7.4

16.7

21.2 |

+---------------------------------------------------------------------+

We see that the data are in wide format, with variables

id

patient ID (1 to 22)

race race (1=white, 2=black)

fbf0 forearm blood flow (ml/min/dl) at ioproterenol dose 0 ng/min

fbf10 forearm blood flow (ml/min/dl) at ioproterenol dose 10 ng/min

…

fbf400 forearm blood flow (ml/min/dl) at ioproterenol dose 400 ng/min

In this dataset, each of the several occasions represents an increasing dose, so can be thought of

as an effect across dose, rather than as an effect across time.

Chapter 5-18 (revision 16 May 2010)

p. 2

Paired Sample t Test

If we are willing to limit the analysis to two repeated measurements, and do not care about

covariates, than we can analyze these data with a paired sample t test.

Let’s compare the no dose forearm blood flow, fbf0, to the initial dose (10 ng/min), ignoring race

for now.

Statistics

Summaries, tables & tests

Classical tests of hypotheses

Mean comparison test, paired data

First variable: fbf10

Second variable: fbf0

OK

ttest fbf10 = fbf0

Paired t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------diff |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Ho: mean(fbf10 - fbf0) = mean(diff) = 0

Ha: mean(diff) < 0

t =

2.9957

P < t =

0.9964

Ha: mean(diff) != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean(diff) > 0

t =

2.9957

P > t =

0.0036

The paired t test is identically the one sample t test on the absolute change scores (fbf10 – fbf0).

To verify this,

capture drop diff10

gen diff10 = fbf10-fbf0

list fbf0 fbf10 diff10 in 1/5

1.

2.

3.

4.

5.

+-------------------------+

| fbf0

fbf10

diff10 |

|-------------------------|

|

1

1.4

.4 |

| 2.1

2.8

.7 |

| 1.1

2.2

1.1 |

| 2.44

2.9

.46 |

| 2.9

3.5

.5999999 |

+-------------------------+

Chapter 5-18 (revision 16 May 2010)

p. 3

Statistics

Summaries, tables & tests

Classical tests of hypotheses

One sample mean comparison test

Variable name: diff10

Hypothesized mean: 0

OK

ttest diff10 = 0

One-sample t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------diff10 |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Degrees of freedom: 20

Ho: mean(diff10) = 0

Ha: mean < 0

t =

2.9957

P < t =

0.9964

Ha: mean != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean > 0

t =

2.9957

P > t =

0.0036

Comparing this to the paired t test output from above,

Paired t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------diff |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Ho: mean(fbf10 - fbf0) = mean(diff) = 0

Ha: mean(diff) < 0

t =

2.9957

P < t =

0.9964

Ha: mean(diff) != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean(diff) > 0

t =

2.9957

P > t =

0.0036

we see that the t test and p value are identically the same. For both tests, the hypothesis being

tested is:

H 0 : 0 , where is the population mean difference, estimated by the sample

mean difference, d .

Chapter 5-18 (revision 16 May 2010)

p. 4

The fbf0 and fbf10 variables are correlated. To see this, we can compute the Pearson correlation

coefficient, using

Statistics

Summaries, tables & tests

Summary statistics

Pairwise correlations

Main tab: Variables: fbf0 fbf10

Print number of observatinos for each entry

Print significance level for each entry

OK

pwcorr fbf0 fbf10, obs sig

|

fbf0

fbf10

-------------+-----------------fbf0 |

1.0000

|

|

22

|

fbf10 |

0.8927

1.0000

|

0.0000

|

21

21

The paired t test takes the correlation structure of the data into account by being a test on

difference scores. The standard error of the difference, by definition, includes the correlation

coefficient of the two variables. The formula is (van Belle, 2002, p.61):

Var(difference) Var(Yi1 Yi 2 ) 12 22 2 1 2

where ρ is the correlation between the two variables.

Let’s verify this:

Statistics

Summaries, tables & tests

Summary statistics

Summary statistics

Main tab: Variables: fbf0 fbf10 diff10

OK

summarize fbf0 fbf10 diff10

Variable |

Obs

Mean

Std. Dev.

Min

Max

-------------+-------------------------------------------------------fbf0 |

22

2.496364

1.140741

1

5.8

fbf10 |

21

3.057143

1.770755

1.2

8.8

diff10 |

21

.5895238

.9018064 -.3999999

3

Chapter 5-18 (revision 16 May 2010)

p. 5

Applying the above formula for the variance of two variables

Var(difference) Var(Yi1 Yi 2 ) 12 22 2 1 2

display 1.770755^2+1.160719^2-2*0.8927*1.770755*1.160719

.81322181

Taking the square root to convert this to a standard deviation

display sqrt(.81322181)

.90178812

which is identically the standard deviation of the difference shown in either t test output, accurate

to 4 decimal places. (Accuracy was limited to 4 decimal places since that is all we used for the

correlation coefficient in the calculation.)

Paired t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------diff |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Ho: mean(fbf10 - fbf0) = mean(diff) = 0

Ha: mean(diff) < 0

t =

2.9957

P < t =

0.9964

Ha: mean(diff) != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean(diff) > 0

t =

2.9957

P > t =

0.0036

Which, of course, is also identical the standard deviation computed in the summarize command.

Variable |

Obs

Mean

Std. Dev.

Min

Max

-------------+-------------------------------------------------------fbf0 |

22

2.496364

1.140741

1

5.8

fbf10 |

21

3.057143

1.770755

1.2

8.8

diff10 |

21

.5895238

.9018064 -.3999999

3

Chapter 5-18 (revision 16 May 2010)

p. 6

Let’s compare this to an independent groups t test on the same data. We will limit the analysis to

only those observations which have a non-missing value for both fbf0 and fbf10, so that we will

be using identical samples to compare the mean difference between the two versions of the t test.

Statistics

Summaries, tables & tests

Classical tests of hypotheses

Two sample mean comparison test

Main tab: First variable: fbf10

Second variable: fbf0

by/if/in tab: Restrict to observations: fbf0~=. & fbf10~=.

OK

ttest fbf10 == fbf0 if fbf0~=. & fbf10~=., unpaired

Two-sample t test with equal variances

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------combined |

42

2.762381

.232776

1.508561

2.29228

3.232482

---------+-------------------------------------------------------------------diff |

.5895238

.4620266

-.3442668

1.523314

-----------------------------------------------------------------------------diff = mean(fbf10) - mean(fbf0)

t =

1.2760

Ho: diff = 0

degrees of freedom =

40

Ha: diff < 0

Pr(T < t) = 0.8953

Ha: diff != 0

Pr(|T| > |t|) = 0.2093

Ha: diff > 0

Pr(T > t) = 0.1047

Comparing this to the paired t test computed above,

Paired t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------diff |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Ho: mean(fbf10 - fbf0) = mean(diff) = 0

Ha: mean(diff) < 0

t =

2.9957

P < t =

0.9964

Ha: mean(diff) != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean(diff) > 0

t =

2.9957

P > t =

0.0036

First, notice that both approaches produce an identical effect, with the same mean difference.

Second, notice the large difference in p values, with significance being lost with the independent

samples approach.

Chapter 5-18 (revision 16 May 2010)

p. 7

For both the independent samples t test and paired t test, the t statistic is computed using

t

effect

standard error of effect

where the effect is the difference in means in the first test and the mean of the differences in the

second test.

The t statistics are thus,

display .5895238 / .4620266

display .5895238 / .1967903

1.2759521

2.9956954

which match the t test outputs.

The difference, then, is completely due to the way the standard error is computed.

For the independent samples t test, which assumes the correlation between the two variables, fbf0

and fbf10, is zero, we have something very close to (not exactly this because a pooled estimate of

the variance is used),

Var(difference) Var(Yi1 Yi 2 ) 12 22 2 1 2

12 22 2(0) 1 2

12 22

For the paired t test, which estimates the population correlation with the sample correlation

coefficient, we have

Var(difference) Var(Yi1 Yi 2 ) 12 22 2 1 2

so we get to substract off something to provide a smaller variance, which in turn produces a

smaller standard error, which in turn provides a larger t statistic. (We substract something off

only in the typical case when the correlation is positive, as discussed in the ANCOVA chapter,

chapter 5-16.)

Conclusion From This Illustration

To correctly model repeated measures data, the model needs to take the correlation structure of

the data into account.

Chapter 5-18 (revision 16 May 2010)

p. 8

Oneway Repeated Measures ANOVA With Two Repeated Measurements

We will show that the oneway repeated measures ANOVA is identically the paired t test, when

no covariates are included. Thus it extends the paired t test to allow for covariates.

Restoring the longitudinal data in wide format and listing it,

use 11.2.Isoproterenol.dta, clear

list , nolabel

1.

2.

3.

4.

5.

6.

7.

8.

9.

10.

11.

12.

13.

14.

15.

16.

17.

18.

19.

20.

21.

22.

+---------------------------------------------------------------------+

| id

race

fbf0

fbf10

fbf20

fbf60

fbf150

fbf300

fbf400 |

|---------------------------------------------------------------------|

| 1

1

1

1.4

6.4

19.1

25

24.6

28 |

| 2

1

2.1

2.8

8.3

15.7

21.9

21.7

30.1 |

| 3

1

1.1

2.2

5.7

8.2

9.3

12.5

21.6 |

| 4

1

2.44

2.9

4.6

13.2

17.3

17.6

19.4 |

| 5

1

2.9

3.5

5.7

11.5

14.9

19.7

19.3 |

|---------------------------------------------------------------------|

| 6

1

4.1

3.7

5.8

19.8

17.7

20.8

30.3 |

| 7

1

1.24

1.2

3.3

5.3

5.4

10.1

10.6 |

| 8

1

3.1

.

.

15.45

.

.

31.3 |

| 9

1

5.8

8.8

13.2

33.3

38.5

39.8

43.3 |

| 10

1

3.9

6.6

9.5

20.2

21.5

30.1

29.6 |

|---------------------------------------------------------------------|

| 11

1

1.91

1.7

6.3

9.9

12.6

12.7

15.4 |

| 12

1

2

2.3

4

8.4

8.3

12.8

16.7 |

| 13

1

3.7

3.9

4.7

10.5

14.6

20

21.7 |

| 14

2

2.46

2.7

2.54

3.95

4.16

5.1

4.16 |

| 15

2

2

1.8

4.22

5.76

7.08

10.92

7.08 |

|---------------------------------------------------------------------|

| 16

2

2.26

3

2.99

4.07

3.74

4.58

3.74 |

| 17

2

1.8

2.9

3.41

4.84

7.05

7.48

7.05 |

| 18

2

3.13

4

5.33

7.31

8.81

11.09

8.81 |

| 19

2

1.36

2.7

3.05

4

4.1

6.95

4.1 |

| 20

2

2.82

2.6

2.63

10.03

9.6

12.65

9.6 |

|---------------------------------------------------------------------|

| 21

2

1.7

1.6

1.73

2.96

4.17

6.04

4.17 |

| 22

2

2.1

1.9

3

4.8

7.4

16.7

21.2 |

+---------------------------------------------------------------------+

We discarded the difference score between fbf0 and fbf10, which we no longer need.

To fit a repeated measures ANOVA, the data must first be converted to long format.

Data

Create or change variables

Other variable transformation commands

Convert data between wide and long

Main tab: long format from wide

ID variable(s) – the i() option: id

Subobservation identifier variable – the j() option: dose

Base (stub) names of X_ij variables: fbf

OK

reshape long fbf , i(id) j(dose)

Chapter 5-18 (revision 16 May 2010)

p. 9

Listing the data for the first two subjects, with the separator line drawn between subjects

list if id<=2 , sepby(id)

1.

2.

3.

4.

5.

6.

7.

8.

9.

10.

11.

12.

13.

14.

+--------------------------+

| id

dose

race

fbf |

|--------------------------|

| 1

0

White

1 |

| 1

10

White

1.4 |

| 1

20

White

6.4 |

| 1

60

White

19.1 |

| 1

150

White

25 |

| 1

300

White

24.6 |

| 1

400

White

28 |

|--------------------------|

| 2

0

White

2.1 |

| 2

10

White

2.8 |

| 2

20

White

8.3 |

| 2

60

White

15.7 |

| 2

150

White

21.9 |

| 2

300

White

21.7 |

| 2

400

White

30.1 |

+--------------------------+

Computing a repeated measures ANOVA on the first two repeated measurements (doses 0 and

10),

Statistics

Linear models and related

ANOVA

Analysis of variance and covariance

Model tab: Dependent variable: fbf

Model: id dose

Model variables: all categorical

Repeated measures variables: dose

by/if/in tab: If (expression): dose==0 | dose==10

OK

anova fbf id dose if dose==0 | dose==10 , repeated(dose)

Chapter 5-18 (revision 16 May 2010)

p. 10

Number of obs =

43

Root MSE

= .637673

R-squared

=

Adj R-squared =

0.9129

0.8172

Source | Partial SS

df

MS

F

Prob > F

-----------+---------------------------------------------------Model | 85.2847538

22 3.87657972

9.53

0.0000

|

id | 81.9059936

21 3.90028541

9.59

0.0000

dose | 3.64915245

1 3.64915245

8.97

0.0071

|

Residual | 8.13254708

20 .406627354

-----------+---------------------------------------------------Total | 93.4173008

42 2.22422145

Between-subjects error term:

Levels:

Lowest b.s.e. variable:

id

22

id

(21 df)

Repeated variable: dose

Huynh-Feldt epsilon

=

Greenhouse-Geisser epsilon =

Box's conservative epsilon =

1.0000

1.0000

1.0000

------------ Prob > F -----------Source |

df

F

Regular

H-F

G-G

Box

-----------+---------------------------------------------------dose |

1

8.97

0.0071

0.0071

0.0071

0.0071

Residual |

20

-----------+----------------------------------------------------

Comparing this to the paired t test computed above, we see that the term in the anova table for

dose has an identical p value to the paired t test.

Paired t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------diff |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Ho: mean(fbf10 - fbf0) = mean(diff) = 0

Ha: mean(diff) < 0

t =

2.9957

P < t =

0.9964

Ha: mean(diff) != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean(diff) > 0

t =

2.9957

P > t =

0.0036

The id term is just ignored in this output.

Ignore the second table for now, which is not useful until more than two repeated measurements

are included in the model. That table will be explained below.

Chapter 5-18 (revision 16 May 2010)

p. 11

To see the underlying regression model that the anova fitted, we can use,

anova, regress

regress

// Stata version 10

// Stata version 11

Source |

SS

df

MS

-------------+-----------------------------Model | 85.2847538

22 3.87657972

Residual | 8.13254708

20 .406627354

-------------+-----------------------------Total | 93.4173008

42 2.22422145

Number of obs

F( 22,

20)

Prob > F

R-squared

Adj R-squared

Root MSE

=

=

=

=

=

=

43

9.53

0.0000

0.9129

0.8172

.63767

-----------------------------------------------------------------------------fbf |

Coef.

Std. Err.

t

P>|t|

[95% Conf. Interval]

-------------+---------------------------------------------------------------id |

2 |

1.25

.6376734

1.96

0.064

-.0801634

2.580163

3 |

.45

.6376734

0.71

0.489

-.8801633

1.780163

4 |

1.47

.6376734

2.31

0.032

.1398367

2.800163

5 |

2

.6376734

3.14

0.005

.6698367

3.330163

6 |

2.7

.6376734

4.23

0.000

1.369837

4.030163

7 |

.02

.6376734

0.03

0.975

-1.310163

1.350163

8 |

2.194762

.7871611

2.79

0.011

.5527725

3.836751

9 |

6.1

.6376734

9.57

0.000

4.769837

7.430164

10 |

4.05

.6376734

6.35

0.000

2.719837

5.380163

11 |

.605

.6376734

0.95

0.354

-.7251634

1.935163

12 |

.95

.6376734

1.49

0.152

-.3801634

2.280163

13 |

2.6

.6376734

4.08

0.001

1.269837

3.930163

14 |

1.38

.6376734

2.16

0.043

.0498367

2.710163

15 |

.7

.6376734

1.10

0.285

-.6301634

2.030163

16 |

1.43

.6376734

2.24

0.036

.0998366

2.760163

17 |

1.15

.6376734

1.80

0.086

-.1801634

2.480163

18 |

2.365

.6376734

3.71

0.001

1.034837

3.695163

19 |

.83

.6376734

1.30

0.208

-.5001633

2.160163

20 |

1.51

.6376734

2.37

0.028

.1798365

2.840163

21 |

.45

.6376734

0.71

0.489

-.8801633

1.780163

22 |

.8

.6376734

1.25

0.224

-.5301634

2.130163

|

10.dose |

.5895238

.1967903

3.00

0.007

.1790265

1.000021

_cons |

.9052381

.4615141

1.96

0.064

-.0574635

1.86794

Comparing this to the paired t test computed above, we see that the term in the anova table for

dose has an identical p value to the paired t test, and identical standard error, and the same effect

size (mean difference) .

Paired t test

-----------------------------------------------------------------------------Variable |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------fbf10 |

21

3.057143

.3864103

1.770755

2.251105

3.863181

fbf0 |

21

2.467619

.2532897

1.160719

1.939266

2.995972

---------+-------------------------------------------------------------------diff |

21

.5895238

.1967903

.9018064

.1790265

1.000021

-----------------------------------------------------------------------------Ho: mean(fbf10 - fbf0) = mean(diff) = 0

Ha: mean(diff) < 0

t =

2.9957

P < t =

0.9964

Chapter 5-18 (revision 16 May 2010)

Ha: mean(diff) != 0

t =

2.9957

P > |t| =

0.0071

Ha: mean(diff) > 0

t =

2.9957

P > t =

0.0036

p. 12

We discover that the model created an indicator term for each subject, which makes the dose

comparison a “stratified” analysis by subject.

Recall from the chapter on conditional logistic regression, that stratifying by the matching

variable (subject in this case) inflated the odds ratio (the effect measure). In least squares

estimation, such as anova and linear regression, the effect measure, or mean difference is not

inflated.

However, the model is still subject to overfitting, since each indicator term for subject counts one

toward the N/10 = # allowed predictors rule to avoid overfitting. Clearly, this rule is violated in

this example.

From the output, we see that R-squared = 0.9129, which is R = 0.9555. The Pearson correlation

calculated above between dose 0 and dose 10 was r = 0.8927. Thus the R is inflated due to

overfitting. The adjusted R-squared = 0.8172, which is R = 0.9040, did a nice job of removing

the effect of overfitting.

Chapter 5-18 (revision 16 May 2010)

p. 13

Two-way Repeated Measures ANOVA With One Repeated Measurements Factor and One

Between Groups Factor.

Next, we will extend this paired t test to allow for a between subjects covariate, by including race

in the model. That is, we are interested to know if the change from 0 dose to dose 10 differs

between blacks and whites.

This could be described as “a twoway repeated measures ANOVA with one repeated measures

factor and one between groups factor.”

Computing the ANOVA,

anova fbf race

if dose==0 |

*

anova fbf race

if dose==0 |

/ id|race dose dose*race ///

dose==10 ,repeated(dose)

// Stata version 10

/ id|race dose dose#race ///

dose==10 ,repeated(dose)

// Stata version 11

Number of obs =

Root MSE

=

43

.64235

R-squared

=

Adj R-squared =

0.9161

0.8145

Source | Partial SS

df

MS

F

Prob > F

-----------+---------------------------------------------------Model | 85.5776555

23 3.72076763

9.02

0.0000

|

race | 5.07251403

1 5.07251403

1.32

0.2648

id|race | 77.0659916

20 3.85329958

-----------+---------------------------------------------------dose | 3.28830185

1 3.28830185

7.97

0.0109

dose#race | .292901785

1 .292901785

0.71

0.4100

|

Residual |

7.8396453

19

.41261291

-----------+---------------------------------------------------Total | 93.4173008

42 2.22422145

Between-subjects error term:

Levels:

Lowest b.s.e. variable:

Covariance pooled over:

id|race

22

id

race

(20 df)

(for repeated variable)

Repeated variable: dose

Huynh-Feldt epsilon

*Huynh-Feldt epsilon reset

Greenhouse-Geisser epsilon

Box's conservative epsilon

=

to

=

=

1.0526

1.0000

1.0000

1.0000

------------ Prob > F -----------Source |

df

F

Regular

H-F

G-G

Box

-----------+---------------------------------------------------dose |

1

7.97

0.0109

0.0109

0.0109

0.0109

dose#race |

1

0.71

0.4100

0.4100

0.4100

0.4100

Residual |

19

----------------------------------------------------------------

We’ll put off the interpretation of the race and dose terms in this model, saving that for below.

The dose × race interaction term, however, has a very intuitive interpretation. It tells us that

blacks change differently than whites from no dose to initial dose (dose of 10).

Chapter 5-18 (revision 16 May 2010)

p. 14

In fact, for the two repeated measurements case, this interaction term is identically an

independent groups t test on the change score. Let’s verify this.

The change score is easier to compute in wide format, so we’ll first return to that

reshape wide fbf , i(id) j(dose)

list in 1/5

1.

2.

3.

4.

5.

+----------------------------------------------------------------------+

| id

fbf0

fbf10

fbf20

fbf60

fbf150

fbf300

fbf400

race |

|----------------------------------------------------------------------|

| 1

1

1.4

6.4

19.1

25

24.6

28

White |

| 2

2.1

2.8

8.3

15.7

21.9

21.7

30.1

White |

| 3

1.1

2.2

5.7

8.2

9.3

12.5

21.6

White |

| 4

2.44

2.9

4.6

13.2

17.3

17.6

19.4

White |

| 5

2.9

3.5

5.7

11.5

14.9

19.7

19.3

White |

+----------------------------------------------------------------------+

Then, computing the change score,

capture drop diff10

gen diff10 = fbf10-fbf0

list fbf0 fbf10 diff10 in 1/5

1.

2.

3.

4.

5.

+-------------------------+

| fbf0

fbf10

diff10 |

|-------------------------|

|

1

1.4

.4 |

| 2.1

2.8

.7 |

| 1.1

2.2

1.1 |

| 2.44

2.9

.46 |

| 2.9

3.5

.5999999 |

+-------------------------+

Finally, computing the independent groups t test on the change score,

ttest diff10, by(race)

Two-sample t test with equal variances

-----------------------------------------------------------------------------Group |

Obs

Mean

Std. Err.

Std. Dev.

[95% Conf. Interval]

---------+-------------------------------------------------------------------White |

12

.7341667

.3088259

1.069804

.0544455

1.413888

Black |

9

.3966667

.2071634

.6214902

-.081053

.8743863

---------+-------------------------------------------------------------------combined |

21

.5895238

.1967903

.9018064

.1790265

1.000021

---------+-------------------------------------------------------------------diff |

.3375

.4005753

-.5009138

1.175914

-----------------------------------------------------------------------------diff = mean(White) - mean(Black)

t =

0.8425

Ho: diff = 0

degrees of freedom =

19

Ha: diff < 0

Pr(T < t) = 0.7950

Ha: diff != 0

Pr(|T| > |t|) = 0.4100

Ha: diff > 0

Pr(T > t) = 0.2050

We now see that the p value is identical to the dose × race interaction term p value, which

verifies that the interaction term is identically an independent groups t test on the change score.

Chapter 5-18 (revision 16 May 2010)

p. 15

Repeated Measures ANOVA With More Than Two Measurements In the Repeated

Measurements Factor

Now, let’s use all dose levels. We reshape the data to long format and leave off the if statement

to allow all doses to be included.

reshape long fbf , i(id) j(dose)

anova fbf race

*

anova fbf race

/ id|race dose dose*race ,repeated(dose) // Version 10

/ id|race dose dose#race ,repeated(dose) // Version 11

Number of obs =

150

Root MSE

= 3.33212

R-squared

=

Adj R-squared =

0.8915

0.8606

Source | Partial SS

df

MS

F

Prob > F

-----------+---------------------------------------------------Model | 10580.8603

33 320.632131

28.88

0.0000

|

race | 2093.30331

1 2093.30331

16.44

0.0006

id|race | 2546.14177

20 127.307089

-----------+---------------------------------------------------dose | 3898.97947

6 649.829911

58.53

0.0000

dose#race | 1164.13439

6 194.022398

17.47

0.0000

|

Residual | 1287.95004

116 11.1030176

-----------+---------------------------------------------------Total | 11868.8104

149 79.6564455

Between-subjects error term:

Levels:

Lowest b.s.e. variable:

Covariance pooled over:

id|race

22

id

race

(20 df)

(for repeated variable)

Repeated variable: dose

Huynh-Feldt epsilon

=

Greenhouse-Geisser epsilon =

Box's conservative epsilon =

0.3092

0.2732

0.1667

------------ Prob > F -----------Source |

df

F

Regular

H-F

G-G

Box

-----------+---------------------------------------------------dose |

6

58.53

0.0000

0.0000

0.0000

0.0000

dose#race |

6

17.47

0.0000

0.0000

0.0000

0.0005

Residual |

116

----------------------------------------------------------------

When you have more than two time points, you should always make a correction to the p value of

a repeated measures ANOVA to account for departures from “sphericity”. Sphericity is a

complex form of the analogous “equal variances” assumption of the independent groups t test

and ANOVA. The farther the departure from this assumption being justified, the greater the

difference of the sphericity corrected and non-corrected p values. This adjustment is reported in

the second table.

Chapter 5-18 (revision 16 May 2010)

p. 16

Each of the three methods adjust the degrees of freedom by computing an epsilon which is then

multiplied by the unadjusted degrees of freedom—instead of (t-1) and (n-1)(t-1) degrees of

freedom, the adjusted degrees of freedom are (t-1) and (n-1)(t-1) degrees of freedom The

most widely used adjustment is the Greenhouse-Geisser adjustment. (Twisk, 2003, p.25)

You’ll notice in the case of the two repeated measurements above, the three epilsons were 1, so

no adjustment took place. In this second example with more than two repeated measurements,

the epsilons are less than 1, reducing the degrees of freedom, and making it more difficult to get a

significant p value.

Twisk (2003, p.25) describes the sphericity assumption as,

“This assumption is also known as the ‘compound symmetry’ assumption. It applies,

firstly, when all correlations in outcome variable Y between repeated measurements are

equal, irrespective of the time interval between the measurements. Secondly, the

variances of otucome variable Y must be the same at each of the repeated measurements.”

SPSS describes the sphericity assumption in its repeated measures output as,

“the error covariance matrix of the orthonormalized transformed dependent variables is

proportional to an identity matrix”.

The best approach for reporting is just to assume that those who know about it are familiar with

the term, and those who don’t do not want to know. You could describe the analysis as:

Statistical Methods

The repeated measurements of forearm blood flow across the of isoproterenol dose levels

were analyzed using a two-way repeated measures analysis of variance, with the

Greenhouse-Geisser adjustment to the p values to account for any violation of the

sphericity assumption. The repeated measures factor was dose level and the

between groups factor was race.

Example

Tonetti et al (N Engl J Med, 2007) reported a repeated measures analysis of variance with a

Greenhouse-Geisser adjustment to the F-test, as well as a time × group interaction term to test the

hypot. This is how they stated it in their Statistical Methods section,

“We performed a repeated-measures analysis of variance to determine differences in

flow-mediated dilatation (the primary outcome) and all secondary and other outcomes

between the two groups and over time, using a conservative F-test for the interaction

between time and treatment group (SPSS software version 13). The Greenhouse-Geisser

correction for the F test was used to adjust the degrees of freedom for deviations form

sphericity (one of the assumptions of repeated-measures analysis of variance [ANOVA].”

Chapter 5-18 (revision 16 May 2010)

p. 17

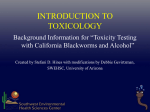

Repeated measures data are frequently displayed using profile plots, which is just a graph of the

means across time. Here is such a plot with 95% confidence intervals.

* -- profile plot

use 11.2.Isoproterenol.dta, clear // wide format

#delimit ;

collapse (mean) mfbf0=fbf0 mfbf10=fbf10 mfbf20=fbf20 mfbf60=fbf60

mfbf150=fbf150 mfbf300=fbf300 mfbf400=fbf400

(sd) sfbf0=fbf0 sfbf10=fbf10 sfbf20=fbf20 sfbf60=fbf60

sfbf150=fbf150 sfbf300=fbf300 sfbf400=fbf400

(count) nfbf0=fbf0 nfbf10=fbf10 nfbf20=fbf20 nfbf60=fbf60

nfbf150=fbf150 nfbf300=fbf300 nfbf400=fbf400

, by(race);

#delimit cr

foreach i of numlist 0 10 20 60 150 300 400 {

gen fbflcl`i'=mfbf`i'-invttail(nfbf`i'-1,0.025)*sfbf`i'/sqrt(nfbf`i')

gen fbfucl`i'=mfbf`i'+invttail(nfbf`i'-1,0.025)*sfbf`i'/sqrt(nfbf`i')

}

reshape long mfbf sfbf nfbf fbflcl fbfucl, i(race) j(dose)

list

capture drop dose2

gen dose2=dose

replace dose2=dose2+5 if race==2

order dose dose2

#delimit ;

twoway

(scatter mfbf dose2 if race==1

, connect(direct) clpattern(solid)clcolor(blue) clwidth(medium)

msymbol(square) mlcolor(blue) mfcolor(blue) msize(medium))

(scatter mfbf dose2 if race==2

, connect(direct) clpattern(solid)clcolor(green) clwidth(medium)

msymbol(triangle) mlcolor(green) mfcolor(green) msize(medium))

(rcap fbfucl fbflcl dose2 if race==1

, blcolor(blue) blwidth(medium) blpattern(solid) )

(rcap fbfucl fbflcl dose2 if race==2

, blcolor(green) blwidth(medium) blpattern(solid) )

,

xlabel(0 10 20 60 150 300 400)

xtitle("Isoproterenol dose (ng/min)", height(5))

ylabel(0(5)25,angle(horizontal))

ytitle("Mean Forearm Blood Flow (ml/min/dl)")

legend(order(1 "White" 2 "Black"))

note("Shown are means and 95% confidence intervals")

;

#delimit cr

Chapter 5-18 (revision 16 May 2010)

p. 18

1.

2.

3.

4.

5.

6.

7.

8.

9.

10.

11.

12.

13.

14.

+---------------------------------------------------------------------+

| dose

dose2

race

mfbf

sfbf

nfbf

fbflcl

fbfucl |

|---------------------------------------------------------------------|

|

0

0

White

2.7146

1.3943

13

1.872018

3.557212 |

|

10

10

White

3.4167

2.2307

12

1.999342

4.833992 |

|

20

20

White

6.4583

2.7348

12

4.720738

8.19593 |

|

60

60

White

14.658

7.3405

13

10.22189

19.09349 |

| 150

150

White

17.25

8.8903

12

11.60138

22.89862 |

|---------------------------------------------------------------------|

| 300

300

White

20.2

8.4396

12

14.83772

25.56228 |

| 400

400

White

24.408

8.7034

13

19.14828

29.6671 |

|

0

5

Black

2.1811

.5571

9

1.752886

2.609337 |

|

10

15

Black

2.5778

.73786

9

2.010605

3.144951 |

|

20

25

Black

3.2111

1.0397

9

2.411905

4.010317 |

|---------------------------------------------------------------------|

|

60

65

Black

5.3022

2.1676

9

3.636074

6.96837 |

| 150

155

Black

6.2344

2.2373

9

4.514739

7.95415 |

| 300

305

Black

9.0567

4.0413

9

5.950222

12.16311 |

| 400

405

Black

7.7678

5.4942

9

3.544545

11.99101 |

+---------------------------------------------------------------------+

Mean Forearm Blood Flow (ml/min/dl)

25

20

15

10

5

0

01020

60

150

Isoproterenol dose (ng/min)

(ng/min)White

300

400

Black

Shown are means and 95% confidence intervals

Exercise Look at the profile plots in the Tonetti et al (N Engl J Med, 2007) paper. Their plots

are just like this, only they chose to use standard errors, rather than confidence intervals. Using

the standard errors is just as common as using the confidence intervals; however, standard errors

mislead the reader into thinking the separation of the two lines is greater than it really is.

Chapter 5-18 (revision 16 May 2010)

p. 19

Overlapping Confidence Intervals

When the confidence intervals around statistics, such as group means, do not overlap, this

indicates that the difference between the means is statistically significant. However, the converse

is not always true. (see box).

Overlapping Confidence Intervals Do Not Imply Nonsignificance

van Belle (2002, p.39) explains,

“It is sometimes claimed that if two independent statistics have overlapping confidence

intervals, then they are not significantly different. This is certainly true if there is

substantial overlap. However, the overlap can be surprisingly large and the means still

significantly different. … Confidence intervals associated with statistics can overlap as

much as 29% and the statistics can still be significantly different.”

Chapter 5-18 (revision 16 May 2010)

p. 20

____________________________________________________________________________

Interpretation (a bit advanced, but might be interesting to MStat students)

Just what do the results mean?

The repeated measures ANOVA we have seen is called the “univariate approach”. There is also

a “multivariate approach”, called MANOVA. The MANOVA approach compares each repeated

measure to the preceding repeated measure, and so has an intuitive interpretation. However, the

univariate approach is more powerful and thus more popular.

The univariate approach is simply a sums of squares approach. The sums of squares for the

between factor (between whites and blacks) is computed as (Twisk, 2003, p.26-27):

T

SSb N ( yt y ) 2

t 1

where N is the number of subjects, T is the number of repeated measurements, yt is the average

of outcome variable Y at time-point t, and y is the overall average of outcome variable Y.

The sums of squares for the within subjects, or repeated measures, factor is computed as:

T

N

SS w ( yit yt )2

t 1 i 1

where N is the number of subjects, T is the number of repeated measurements, yit is the value of

outcome variable Y for individual i at time-point t, and yt is the average value of outcome

variable Y at time-point t.

The within subjects sums of squares, then, is simply a way to determine if all the subjects’ scores

are equal across the dose levels (all on a horizontal line).

The between groups sums of squares, then, is simply a way to determine if subjects’ scores

across dose level in one group are different from the other group.

The between groups factor, race in this example, may be significant simply because the baseline

repeated measure was different, forearm blood flow for dose = 0 in this example. Computing

change scores from baseline is one way to adjust for this. However, it is generally only the

interaction term that is of interest, which does not require equal baseline values.

_____________________________________________________________________________

Chapter 5-18 (revision 16 May 2010)

p. 21

Limitations of the Repeated Measures ANOVA Approach

This approach does not use any information about the spacing between repeated measurements.

Thus it does not take into account the unequal intervals in the dose in this example, treating them

the same as if they were incremented by a constant amount.

Secondly, this approach always uses a listwise deletion of missing data, so a subject is lost if any

time point is missing.

The two methods will consider next, GEE and multilevel (mixed effects) models, overcome both

limitations. For that reason, and because these other methdos are easier to use, there really is no

need to analyze your data with repeated measures ANOVA.

References

Dupont WD. (2002). Statistical Modeling for Biomedical Researchers: a Simple

Introduction to the Analysis of Complex Data. Cambridge, Cambridge University

Press.

Fleiss JL. (1986). The Design and Analysis of Clinical Experiments. New York, John

Wiley & Sons.

Frison L, Pocock S. (1992). Repeated measures in clinical trials: analysis using mean summary

statistics and its implications for design. Statistics in Medicine 11:1685-1704.

Lang CC, Stein CM, Brown RM, et al. (1995). Attenuation of isoproterenol-mediated

vasodilation in blacks. N Engl J Med 333:155-60.

Tonetti MS, D’Aiuto F, Nibali L et al. (2007). Treatment of periodontitis and endothelial

function. N Engl J Med 356(9):911-20.

Twisk JWR. (2003). Applied Longitudinal Data Analysis for Epidemiology: A Practical

Guide. Cambridge, Cambridge University Press.

van Belle G. (2002). Statistical Rules of Thumb. New York, John Wiley & Sons.

Chapter 5-18 (revision 16 May 2010)

p. 22