Introduction to the Theory of Computation

Chapter 10.2

Takagi Lab. M1

Sho Nishimura

Outline

• Probabilistic algorithms, probabilistic Turing machines, and the complexity class BPP

• Example of a probabilistic algorithm: primality testing

• Example of a probabilistic algorithm: equivalence of read-once branching programs

Probabilistic algorithms

• A probabilistic algorithm: an algorithm designed to use the outcome of a random process

– Some problems seem to be more easily solvable by probabilistic algorithms than by deterministic algorithms

A Probabilistic Turing Machine

• Def 10.3: a probabilistic Turing machine $M$

– A type of nondeterministic TM in which each nondeterministic step is called a coin-flip step and has two legal next moves.

• The probability of branch $b$: $\Pr[b] = 2^{-k}$ ($k$: the number of coin-flip steps that occur on branch $b$)

– The probability that $M$ accepts the input $w$:

$\Pr[M \text{ accepts } w] = \sum_{b \text{ is an accepting branch}} \Pr[b]$

• The probability that $M$ rejects the input $w$ is the complement: $\Pr[M \text{ rejects } w] = 1 - \Pr[M \text{ accepts } w]$
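The sum over accepting branches can be illustrated with a minimal Python sketch. It assumes, purely for illustration, that every branch makes exactly `k` coin flips; `toy_machine` is a hypothetical stand-in for $M$ and does not come from the slides.

```python
from fractions import Fraction
from itertools import product

def acceptance_probability(machine, w, k):
    """Sum Pr[b] = 2^-k over all accepting branches b.

    Assumption: every branch uses exactly k coin flips, so each of the
    2^k flip sequences is one branch of probability 2^-k.
    """
    accepting = sum(1 for flips in product((0, 1), repeat=k) if machine(w, flips))
    return Fraction(accepting, 2 ** k)

def toy_machine(w, flips):
    # Hypothetical machine: accepts unless every coin flip came up 0.
    return any(flips)

prob_accept = acceptance_probability(toy_machine, "abc", 3)
prob_reject = 1 - prob_accept
```

Here seven of the eight branches accept, so the rejection probability is the remaining single branch.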

A Probabilistic Turing Machine

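• accept/reject: error probability $\epsilon$ ($0 \le \epsilon < \frac{1}{2}$)

• “$M$ recognizes language $A$ with error probability $\epsilon$”:

1. $w \in A \rightarrow \Pr[M \text{ accepts } w] \ge 1 - \epsilon$

2. $w \notin A \rightarrow \Pr[M \text{ rejects } w] \ge 1 - \epsilon$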

Class BPP

• Def 10.4: class BPP

– BPP is the class of languages that are recognized by probabilistic polynomial time Turing machines with an error probability of $\frac{1}{3}$.

Actually, any constant error probability $\epsilon$ ($0 < \epsilon < \frac{1}{2}$) would yield an equivalent definition.

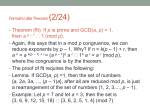

Lemma 10.5:Amplification Lemma

• Lemma 10.5: Amplification Lemma

– $\epsilon$: a fixed constant strictly between 0 and $\frac{1}{2}$

– For any polynomial $poly(n)$, a probabilistic polynomial time TM $M_1$ that operates with error probability $\epsilon$ has an equivalent probabilistic polynomial time TM $M_2$ that operates with an error probability of $2^{-poly(n)}$.

Lemma 10.5:Amplification Lemma

• How to prove Lemma 10.5?

– $M_2$ simulates $M_1$ by running $M_1$ a polynomial number of times and taking the majority vote of the outcomes.

• The error probability decreases exponentially with the number of runs.

Lemma 10.5:Amplification Lemma

(Formal proof)

• Given $M_1$ (error prob.: $\epsilon < \frac{1}{2}$) and a polynomial $poly(n)$

→ construct $M_2$ (error prob.: $2^{-poly(n)}$)

– $M_2$ = “On input $x$,

1. Calculate $k$ (described later)

2. Run $2k$ independent simulations of $M_1$ on input $x$

3. If most accept, then accept; otherwise reject.”
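The construction of $M_2$ can be sketched in Python as a majority-vote wrapper. This is only an illustration under stated assumptions: `noisy_is_even` is a hypothetical $M_1$ that answers "is x even?" and errs with probability `eps`; the names `amplify` and `rng` are not from the slides.

```python
import random

def amplify(m1, k, rng):
    """Build M2: run 2k independent simulations of m1 and take the majority."""
    def m2(x):
        accepts = sum(1 for _ in range(2 * k) if m1(x, rng))
        return accepts > k  # "most accept": strictly more than half of the 2k runs
    return m2

def noisy_is_even(x, rng, eps=0.3):
    # Hypothetical M1: the correct answer is flipped with probability eps.
    truth = (x % 2 == 0)
    return truth if rng.random() >= eps else not truth

rng = random.Random(0)          # fixed seed so the experiment is repeatable
m2 = amplify(noisy_is_even, k=50, rng=rng)
trials = 200
errors = sum(1 for _ in range(trials) if not m2(10))  # 10 is even, so accept is correct
```

With an error probability of 0.3 per run, a single run of `noisy_is_even` is wrong about 30% of the time, while the 100-run majority vote should be wrong only very rarely.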

Lemma 10.5:Amplification Lemma

(Formal proof)

• How to calculate $k$ (the bound)?

– $S$: any sequence of results $M_2$ might obtain in Stage 2

• $p_S$: the probability that $S$ is obtained

• $c$: number of correct results, $w$: number of wrong results ($c + w = 2k$)

• $S$ is a bad sequence if $c \le w$

– $\epsilon_x$: the probability that $M_1$ is wrong on input $x$

– → If $S$ is a bad sequence, then

$p_S \le \epsilon_x^{w} (1 - \epsilon_x)^{c}$

Lemma 10.5:Amplification Lemma

(Formal proof)

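• How to calculate $k$ (the bound)?

– If $S$ is a bad sequence, then

$p_S \le \epsilon_x^{w} (1 - \epsilon_x)^{c} \le \epsilon^{w} (1 - \epsilon)^{c}$

• $\epsilon_x \le \epsilon < \frac{1}{2}$ and $c \le w$, therefore $\epsilon_x (1 - \epsilon_x) \le \epsilon (1 - \epsilon)$

– Also,

$p_S \le \epsilon^{w} (1 - \epsilon)^{c} \le \epsilon^{k} (1 - \epsilon)^{k}$

• $k \le w$ and $\epsilon < 1 - \epsilon$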

Lemma 10.5:Amplification Lemma

(Formal proof)

• How to calculate 𝑘(boundary)?

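– The number of bad sequences is at most $2^{2k}$

– Therefore,

$\Pr[M_2 \text{ outputs incorrectly on input } x] = \sum_{\text{bad } S} p_S \le 2^{2k} \cdot \epsilon^{k} (1 - \epsilon)^{k} = (4\epsilon(1 - \epsilon))^{k}$

• This upper bound decreases exponentially in $k$

– Because $4\epsilon(1 - \epsilon) < 1$ (as $\epsilon < \frac{1}{2}$)

– Specific value that allows us to bound the error probability by $2^{-t}$:

$k \ge \dfrac{t}{-\log_2(4\epsilon(1 - \epsilon))}$

(the denominator is positive because $\log_2(4\epsilon(1 - \epsilon)) < 0$)

(QED)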
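As a numeric check of the bound, the following sketch computes a sufficient $k$ for a target error of $2^{-t}$ and compares the bound $(4\epsilon(1-\epsilon))^k$ with the exact probability of a bad sequence. It assumes the worst case $\epsilon_x = \epsilon$; the function names are illustrative, not from the slides.

```python
from math import ceil, comb, log2

def runs_needed(eps, t):
    """Smallest integer k with (4*eps*(1-eps))**k <= 2**-t."""
    alpha = -log2(4 * eps * (1 - eps))  # positive because 4*eps*(1-eps) < 1
    return ceil(t / alpha)

def exact_error(eps, k):
    """Exact probability that at most k of the 2k runs are correct (c <= w)."""
    n = 2 * k
    return sum(comb(n, c) * (1 - eps) ** c * eps ** (n - c) for c in range(k + 1))

eps, t = 1 / 3, 10
k = runs_needed(eps, t)               # M2 then performs 2k simulations
bound = (4 * eps * (1 - eps)) ** k
exact = exact_error(eps, k)
```

For $\epsilon = 1/3$ and $t = 10$ this gives $k = 59$. The exact error is noticeably smaller than the bound, which is expected because counting all $2^{2k}$ sequences as bad is a loose estimate.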

Primality

• A prime number ↔ a composite number

– Testing whether an integer is prime or not

• A polynomial time algorithm is now known (the “AKS primality test”), but it is difficult

• A much simpler probabilistic polynomial time algorithm for primality testing exists

• A number = ∏ factors

– No probabilistic polynomial time algorithm for finding factors is known to exist

• The algorithm is based on “Fermat’s little theorem” (Theorem 10.6)

Primality

• Notations

– “$x$ and $y$ are equivalent modulo $p$”: $x \equiv y \pmod p$

• $x \bmod p$: the smallest nonnegative $y$ such that $x \equiv y \pmod p$

– Every number is equivalent modulo $p$ to some member of the set $Z_p^+ = \{0, \ldots, p - 1\}$

• $Z_p = \{1, \ldots, p - 1\}$

• If $x \equiv p - 1 \pmod p$, then we may write $x \equiv -1 \pmod p$

Theorem 10.6:Fermat’s Little Theorem

• Theorem 10.6:Fermat’s little theorem

– If $p$ is prime and $a \in Z_p^+$

– Then $a^{p-1} \equiv 1 \pmod p$

• Example

– $p = 7, a = 2$ → $2^{7-1} = 64 \equiv 1 \pmod 7$

– $p = 6, a = 2$ → $2^{6-1} = 32 \equiv 2 \pmod 6$ (6 is not prime, so the congruence may fail)
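The two examples can be reproduced with Python's built-in three-argument `pow`, which performs modular exponentiation. This is a small sketch; `passes_fermat_test` is an illustrative name, and the bases are taken from $\{1, \ldots, p-1\}$ as an assumption, since base 0 never satisfies the congruence.

```python
def passes_fermat_test(p, a):
    """True if a^(p-1) is congruent to 1 modulo p."""
    return pow(a, p - 1, p) == 1

# Every base 1..6 satisfies the congruence for the prime 7.
prime_results = [passes_fermat_test(7, a) for a in range(1, 7)]

# For the composite 6 and base 2 the congruence fails: 2^5 = 32, and 32 mod 6 = 2.
composite_value = pow(2, 6 - 1, 6)
composite_result = passes_fermat_test(6, 2)
```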

Fermat’s Little Theorem, pseudoprimes,

and the Carmichael numbers

• A Fermat test

– If $a^{p-1} \equiv 1 \pmod p$, then we say $p$ passes the Fermat test at $a$

• Some composites pass this test.

– Pseudoprimes

– The Carmichael numbers

Correct definition of

Pseudoprime and the Carmichael numbers

• Pseudoprime

– a composite number that passes a test or sequence of tests that fail for most composite numbers

– cf. Fermat pseudoprime to a base $a$ ($psp(a)$)

• A composite $n$ such that $a^{n-1} \equiv 1 \pmod n$

• The Carmichael number

– An odd composite $n$ which satisfies Fermat’s little theorem for every choice of $a$ which is relatively prime to $n$

Reference: Wolfram MathWorld

http://mathworld.wolfram.com/

Algorithm “PSEUDOPRIME”

• Go through all Fermat tests?

→ would require exponential time

• How to reduce the number of tests?

– “If a number is not pseudoprime, it fails at least half of all tests.” (cf. Problem 10.16)

• Probabilistic algorithm containing a parameter $k$ that determines the error probability

Algorithm “PSEUDOPRIME”

• PSEUDOPRIME = “On input $p$:

1. Select $a_1, \ldots, a_k$ randomly in $Z_p^+$

2. Compute $a_i^{p-1} \bmod p$ for each $i$

3. If all computed values are 1, then accept; otherwise, reject”

• Works properly?

– $p$ is prime → passes all tests and is accepted

– $p$ is not pseudoprime → it passes at most half of all tests

• Probability it passes each test $\le \frac{1}{2}$

• Probability it passes all randomly selected tests $\le 2^{-k}$

• Time complexity?: polynomial
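A minimal Python sketch of PSEUDOPRIME follows. The split into `passes_all_fermat_tests` (deterministic, given the bases) and `pseudoprime` (random choice of bases) is a design choice made here for testability, not something the slides prescribe. Bases are drawn from $\{1, \ldots, p-1\}$, which is an assumption of this sketch, and $p \ge 2$ is required.

```python
import random

def passes_all_fermat_tests(p, bases):
    """Stages 2 and 3: accept iff a^(p-1) mod p equals 1 for every base a."""
    return all(pow(a, p - 1, p) == 1 for a in bases)

def pseudoprime(p, k, rng=random):
    """Stage 1: pick k random bases, then run the Fermat tests on them."""
    bases = [rng.randrange(1, p) for _ in range(k)]
    return passes_all_fermat_tests(p, bases)

accepts_17 = passes_all_fermat_tests(17, [2, 3, 5, 7])
accepts_18 = passes_all_fermat_tests(18, [2, 3, 5, 7])
```

Because `pow(a, p - 1, p)` uses repeated squaring, each test takes time polynomial in the number of bits of `p`.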

Algorithm “PSEUDOPRIME”

• E.g., check the primality of $p = 17$ and $p = 18$ ($k = 4$)

– $p = 17$

1. $a_1 = 2, a_2 = 3, a_3 = 5, a_4 = 7$

2. $a_1^{16} \equiv 1, a_2^{16} \equiv 1, a_3^{16} \equiv 1, a_4^{16} \equiv 1 \pmod{17}$

3. Accept

– $p = 18$

1. $a_1 = 2, a_2 = 3, a_3 = 5, a_4 = 7$

2. $a_1^{17} \equiv 14, a_2^{17} \equiv 9, a_3^{17} \equiv 11, a_4^{17} \equiv 13 \pmod{18}$

3. Reject

Algorithm “PSEUDOPRIME”

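• E.g., check the primality of $p = 2821 = 7 \times 13 \times 31$ ($k = 3$)

1. $a_1 = 2, a_2 = 3, a_3 = 5$

2. $2^{2820} \equiv 1, 3^{2820} \equiv 1, 5^{2820} \equiv 1 \pmod{2821}$

3. Accept……?

Actually, 2821 is one of the Carmichael numbers. Only bases that share a factor with 2821 fail the Fermat test:

$7^{2820} \equiv 2016, 13^{2820} \equiv 2171, 31^{2820} \equiv 1457 \pmod{2821}$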
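The behaviour of 2821 can be checked exhaustively. The sketch below (variable names are illustrative) separates the bases by whether they are relatively prime to 2821 and records which ones pass the Fermat test.

```python
from math import gcd

n = 2821  # 7 * 13 * 31

# Bases relatively prime to n that FAIL the Fermat test (none, for a Carmichael number).
coprime_failures = [a for a in range(1, n) if gcd(a, n) == 1 and pow(a, n - 1, n) != 1]

# Bases sharing a factor with n that PASS the Fermat test (there are none).
noncoprime_passes = [a for a in range(1, n) if gcd(a, n) != 1 and pow(a, n - 1, n) == 1]

passing_bases = sum(1 for a in range(1, n) if pow(a, n - 1, n) == 1)
residues = (pow(7, n - 1, n), pow(13, n - 1, n), pow(31, n - 1, n))
```

So 2160 of the 2820 candidate bases pass, which is why PSEUDOPRIME is likely to accept this composite number.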

Improve “PSEUDOPRIME”

• How to deal with the Carmichael numbers?

– The number 1 has exactly two square roots, $\pm 1$, modulo any prime $p$

– For many composites, including all the Carmichael numbers, 1 has 4 or more square roots

• E.g., $\pm 1, \pm 8$ are the square roots of 1 modulo 21

• If $p$ passes the Fermat test at $a$, we can obtain a square root of 1 from $a$

– $a^{p-1} \bmod p = 1$, so $a^{\frac{p-1}{2}} \bmod p$ is a square root of 1
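The square roots of 1 can be listed by brute force for small moduli. This sketch (the helper name is illustrative) reproduces the example modulo 21 and contrasts it with a prime modulus and with the Carmichael number 2821.

```python
def square_roots_of_one(n):
    """All x in {0, ..., n-1} with x*x congruent to 1 modulo n."""
    return [x for x in range(n) if (x * x) % n == 1]

roots_mod_7 = square_roots_of_one(7)       # prime: only 1 and -1 (= 6)
roots_mod_21 = square_roots_of_one(21)     # 1, 8, 13 (= -8), 20 (= -1)
roots_mod_2821 = square_roots_of_one(2821)
```

Modulo 2821 there are eight square roots of 1, two choices for each of the three prime factors.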

Algorithm “PRIME”

• PRIME = “On input $p$,

1. If $p$ is even, accept if $p = 2$; otherwise, reject

2. Select $a_1, \ldots, a_k$ randomly in $Z_p^+$

3. For each $i$ from 1 to $k$:

4. Compute $a_i^{p-1} \bmod p$ and reject if different from 1

5. Let $p - 1 = st$ where $s$ is odd and $t = 2^h$

6. Compute the sequence $a_i^{s \cdot 2^0}, a_i^{s \cdot 2^1}, \ldots, a_i^{s \cdot 2^h}$ modulo $p$

7. If some element of the sequence is not 1, find the last element that is not 1 and reject if it is not $-1$

8. All tests passed, so accept”
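The stages above can be sketched in Python as follows. Two points are assumptions of this sketch rather than part of the slides: bases are drawn from $\{1, \ldots, p-1\}$, and inputs below 2 are rejected up front because the slides do not discuss them.

```python
import random

def is_witness(a, p):
    """True if base a proves the odd number p > 2 composite at stage 4 or 7."""
    if pow(a, p - 1, p) != 1:            # stage 4: the Fermat test fails
        return True
    s, h = p - 1, 0
    while s % 2 == 0:                    # stage 5: p - 1 = s * 2**h with s odd
        s //= 2
        h += 1
    seq = [pow(a, s * 2 ** i, p) for i in range(h + 1)]   # stage 6
    non_ones = [v for v in seq if v != 1]
    # Stage 7: the last element that is not 1 must be -1, i.e. p - 1.
    return bool(non_ones) and non_ones[-1] != p - 1

def prime(p, k, rng=random):
    if p < 2:                            # assumption: not covered by the slides
        return False
    if p % 2 == 0:                       # stage 1
        return p == 2
    # Stages 2, 3 and 8: accept iff none of k random bases is a witness.
    return not any(is_witness(rng.randrange(1, p), p) for _ in range(k))

witness_2741 = is_witness(2, 2741)   # 2741 is prime
witness_2821 = is_witness(2, 2821)   # 2821 is a Carmichael number
```

Base 2 is a stage 7 witness for 2821 even though 2821 passes the Fermat test at 2.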

Algorithm “PRIME”

• Example ($p = 2741$, $k = 1$)

– $a_1 = 2$ is selected, 2741 passes the Fermat test at 2: $2^{2740} \equiv 1 \pmod{2741}$

– $2741 - 1 = 685 \times 2^2$ ($s = 685$, $t = 4$, $h = 2$)

– The sequence:

$2^{685} \equiv 656$, $2^{685 \times 2^1} \equiv 2740 \equiv -1$, $2^{685 \times 2^2} \equiv 1 \pmod{2741}$

• The last element that is not 1 is “$2^{685 \times 2^1}$ modulo 2741”, which is $-1$.

– Therefore accept

Algorithm “PRIME”

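• Example ($p = 2821$, $k = 1$)

– $a_1 = 2$ is selected, 2821 passes the Fermat test at 2.

– $2821 - 1 = 705 \times 2^2$ ($s = 705$, $t = 4$, $h = 2$)

– The sequence:

$2^{705} \equiv 2605$, $2^{705 \times 2^1} \equiv 1520$, $2^{705 \times 2^2} \equiv 1 \pmod{2821}$

• The last element that is not 1 is “$2^{705 \times 2^1}$ modulo 2821”, which is 1520 and not $-1$.

– Therefore reject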

Algorithm “PRIME”

• Works correctly?

– If $p$ is even, obviously it works

– Theorem 10.7: If $p$ is an odd prime number, $\Pr[\text{PRIME accepts } p] = 1$

– Theorem 10.8: If $p$ is an odd composite number, $\Pr[\text{PRIME accepts } p] \le 2^{-k}$

• $a_i$ is a (compositeness) witness

– if the algorithm rejects at either stage 4 or stage 7 when using $a_i$

Theorem 10.7(proof)

• If $a$ were a stage 4 witness, $p$ would be composite (Fermat’s little theorem)

• If $a$ were a stage 7 witness?

– $\exists b \in Z_p^+$ s.t. $b \not\equiv \pm 1 \pmod p$ and $b^2 \equiv 1 \pmod p$

– Therefore $b^2 - 1 \equiv 0 \pmod p$

– Factor this: $(b + 1)(b - 1) \equiv 0 \pmod p$

– Which implies: $(b + 1)(b - 1) = cp$ ($c$: some positive integer)

– $b - 1$, $b + 1$: strictly between 0 and $p$ (because $b \not\equiv \pm 1 \pmod p$)

– A multiple of a prime can’t be expressed as a product of numbers that are smaller than it is.

– Therefore $p$ is composite.

• Hence an odd prime has no witnesses, and PRIME always accepts it.

The Chinese Remainder Theorem

• The Chinese remainder theorem

– If $p$ and $q$ are relatively prime, a one-to-one correspondence between $Z_{pq}$ and $Z_p \times Z_q$ exists

• Each number $r \in Z_{pq}$ corresponds to a pair $(a, b)$ where $a \in Z_p$, $b \in Z_q$ such that $r \equiv a \pmod p$, $r \equiv b \pmod q$
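The correspondence can be tabulated for small moduli. This brute-force sketch works with the full residue sets $\{0, \ldots, pq-1\}$, $\{0, \ldots, p-1\}$ and $\{0, \ldots, q-1\}$, which is an assumption about notation made here; `crt_table` is an illustrative name.

```python
from math import gcd

def crt_table(p, q):
    """Map each r in {0, ..., pq-1} to the pair (r mod p, r mod q)."""
    assert gcd(p, q) == 1, "p and q must be relatively prime"
    return {r: (r % p, r % q) for r in range(p * q)}

table = crt_table(3, 7)
pairs = set(table.values())                  # all 21 pairs occur exactly once
inverse = {pair: r for r, pair in table.items()}

# The number that is -1 modulo 3 and +1 modulo 7:
r = inverse[(2, 1)]
```

The value found is 8, one of the nontrivial square roots of 1 modulo 21 mentioned earlier: it is $\pm 1$ modulo each factor but not $\pm 1$ modulo 21.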

Theorem 10.8

• Theorem 10.8

– If $p$ is an odd composite number, $\Pr[\text{PRIME accepts } p] \le 2^{-k}$

Theorem 10.8(proof)

• We show: if $p$ is an odd composite number and $a$ is selected randomly in $Z_p^+$,

$\Pr[a \text{ is a witness}] \ge \frac{1}{2}$

– At least as many witnesses as non-witnesses exist in $Z_p^+$

• By finding a unique witness for each non-witness.

• Once the above is proved, $\Pr[\text{PRIME accepts } p] \le 2^{-k}$ follows, because the $k$ bases are selected independently.

Theorem 10.8(proof)

• For every non-witness, the sequence (stage 6) is either all 1s, or contains $-1$ at some position followed by 1s

– Among all non-witnesses of the second kind, find a non-witness $h$ for which the $-1$ appears in the largest position $j$ in the sequence. (position of the first element: 0)

• $h^{s \cdot 2^j} \equiv -1 \pmod p$

Theorem 10.8(proof)

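• $p$: composite → either $p$ is the power of a prime, or $p = qr$ ($q$, $r$: relatively prime). Consider the case $p = qr$ first.

• According to the Chinese remainder theorem: some number $t \in Z_p$ exists whereby $t \equiv h \pmod q$, $t \equiv 1 \pmod r$

• Therefore $t^{s \cdot 2^j} \equiv -1 \pmod q$, $t^{s \cdot 2^j} \equiv 1 \pmod r$

• Hence $t$ is a witness.

– $t^{s \cdot 2^j} \not\equiv \pm 1 \pmod p$, $t^{s \cdot 2^{j+1}} \equiv 1 \pmod p$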

Theorem 10.8(proof)

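• One witness $t$ → more witnesses?

– $dt \bmod p$ is a unique witness for each non-witness $d$

• Really a witness?: $d$ is a non-witness, therefore (by the maximality of $j$)

$d^{s \cdot 2^j} \equiv \pm 1$, $d^{s \cdot 2^{j+1}} \equiv 1 \pmod p$

and so

$(dt)^{s \cdot 2^j} \not\equiv \pm 1$, $(dt)^{s \cdot 2^{j+1}} \equiv 1 \pmod p$

• Unique?: if $d_1$, $d_2$ are distinct non-witnesses, then $d_1 t \bmod p \ne d_2 t \bmod p$

– $t^{s \cdot 2^{j+1}} \equiv t \cdot t^{s \cdot 2^{j+1} - 1} \equiv 1 \pmod p$

– If $t d_1 \equiv t d_2 \pmod p$, then

$d_1 = t d_1 \cdot t^{s \cdot 2^{j+1} - 1} \bmod p = t d_2 \cdot t^{s \cdot 2^{j+1} - 1} \bmod p = d_2$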

Theorem 10.8(proof)

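• $p$: composite → either $p$ is the power of a prime, or $p = qr$ ($q$, $r$: relatively prime). Remaining case: $p$ is the power of a prime.

– $p = q^e$ where $q$ is prime and $e > 1$

– Let $t = 1 + q^{e-1}$, and expand $t^p$ using the binomial theorem:

$t^p = (1 + q^{e-1})^p = 1 + p \cdot q^{e-1} + \text{multiples of higher powers of } q^{e-1}$

– This is equivalent to 1 mod $p$

– If $t^{p-1} \equiv 1 \pmod p$ held, then $t^p \equiv t \not\equiv 1 \pmod p$, a contradiction

– Therefore $t$ is a (stage 4) witness.

(QED)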
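The prime-power case can be checked numerically for a few small values. This is only a spot check under the slide's definitions ($p = q^e$, $t = 1 + q^{e-1}$) for odd primes $q$; the helper name is illustrative.

```python
def prime_power_check(q, e):
    """Return (p, t, t^p mod p, t^(p-1) mod p) for p = q^e and t = 1 + q^(e-1)."""
    p = q ** e
    t = 1 + q ** (e - 1)
    return p, t, pow(t, p, p), pow(t, p - 1, p)

results = [prime_power_check(q, e) for q, e in [(3, 2), (3, 3), (5, 2), (7, 2)]]

# t^p is 1 modulo p in every case, while t^(p-1) is not, so t fails the Fermat test.
all_tp_is_one = all(tp == 1 for _, _, tp, _ in results)
all_fail_fermat = all(tp1 != 1 for _, _, _, tp1 in results)
```

For example, with $p = 9$ the witness is $t = 4$: $4^9 \equiv 1$ but $4^8 \equiv 7 \pmod 9$.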

PRIMES ∈ BPP

• PRIMES={𝑛| 𝑛 is a prime number in binary}

• Theorem 10.9: 𝑃𝑅𝐼𝑀𝐸𝑆 ∈BPP

One-sided error

• The algorithm “PRIME” has one-sided error

– When the algorithm rejects → the input is definitely composite.

– When the algorithm accepts → the answer may be incorrect

• One-sided error is a common feature of probabilistic algorithms

– A special complexity class, “RP”, is designated for it

Class RP

• Definition 10.10: Class RP

– RP is the class of languages that are recognized by probabilistic polynomial time Turing machines where inputs in the language are accepted with a probability of at least $\frac{1}{2}$ and inputs not in the language are rejected with a probability of 1.

• Ex. COMPOSITES ∈ RP

Branching Programs

• A branching program

– Model of computation used in complexity theory

and in certain practical areas(ex. CAD).

– Testing whether two branching programs are

equivalent

• Generally it is coNP-complete

• A certain restriction on the class of branching programs

→a probabilistic polynomial time algorithm for testing

equivalence

Branching Programs

• Definition 10.11: A branching program

– A branching program is a directed acyclic graph where all nodes are labeled by variables, except for two output nodes labeled 0 or 1. The nodes that are labeled by variables are called query nodes. Every query node has two outgoing edges, one labeled 0 and the other labeled 1. Both output nodes have no outgoing edges. One of the nodes in a branching program is designated the start node.

Read-once Branching Programs

• A read-once branching program

– A branching program that can query each variable

at most one time on every directed path from the

start node to an output node

Theorem 10.13

• $EQ_{ROBP} = \{\langle B_1, B_2 \rangle \mid B_1 \text{ and } B_2 \text{ are equivalent read-once branching programs}\}$

• Theorem 10.13

– $EQ_{ROBP} \in$ BPP

Theorem 10.13

• Proof idea

– “Assign random values to the variables $x_1, \ldots, x_m$, and accept if $B_1$ and $B_2$ agree on the assignment”?

• If $B_1$ and $B_2$ disagree only on a single assignment out of the $2^m$ possible assignments, a random Boolean assignment finds it only with probability $2^{-m}$

→ this doesn’t work.

– “Randomly select a non-Boolean assignment to the variables and evaluate $B_1$ and $B_2$ in a suitably defined manner”

Theorem 10.13(proof)

• Assign polynomials over $x_1, \ldots, x_m$ to the nodes/edges of a read-once branching program $B$

– Start node: assign the constant function 1

– A node labeled $x$ has been assigned polynomial $p$

→ outgoing 1-edge: assign the polynomial $xp$,

outgoing 0-edge: assign the polynomial $(1 - x)p$

– A node: assign the sum of the polynomials of the incoming edges

– $B$ itself: assign the polynomial of the output node labeled 1

Theorem 10.13(proof)

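[Figure: an example read-once branching program with its assigned polynomials. Recoverable labels: $p = 1$; $p = 1 - x_1$; $p = (1 - x_1)(1 - x_2)$; $p = x_1$; $p = x_1(1 - x_2)$; output polynomial $p = (1 - x_1)(1 - x_2)x_3 + x_1(1 - x_2)x_3 + x_1 x_2$]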

Theorem 10.13(proof)

• $\mathcal{F}$: a finite field with at least $3m$ elements

• Algorithm:

$D$ = “On input $\langle B_1, B_2 \rangle$:

1. Select elements $a_1, \ldots, a_m$ randomly from $\mathcal{F}$

2. Evaluate the assigned polynomials $p_1$, $p_2$ at $a_1$ through $a_m$

3. If $p_1(a_1, \ldots, a_m) = p_2(a_1, \ldots, a_m)$ then accept; otherwise reject”

• This runs in polynomial time

• Error probability: at most $\frac{1}{3}$
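Algorithm $D$ can be sketched in Python under some assumptions that are not in the slides: the field $\mathcal{F}$ is taken to be the integers modulo a prime `f`, a branching program is a dict mapping each query node to `(variable, 0-child, 1-child)`, and the output nodes are the strings `"0"` and `"1"`. The polynomial is never expanded; its value is propagated from the start node along the edges.

```python
import random

def eval_bp_polynomial(bp, start, assignment, f):
    """Value, modulo the prime f, of the polynomial assigned to output node '1'."""
    order, seen = [], set()
    def visit(node):                       # depth-first search, post-order
        if node in seen or node in ("0", "1"):
            return
        seen.add(node)
        _, lo, hi = bp[node]
        visit(lo)
        visit(hi)
        order.append(node)
    visit(start)
    value = {node: 0 for node in order}
    value["0"] = value["1"] = 0
    value[start] = 1                       # start node: the constant 1
    for node in reversed(order):           # topological order, parents first
        var, lo, hi = bp[node]
        x = assignment[var] % f
        value[hi] = (value[hi] + x * value[node]) % f         # 1-edge: x * p
        value[lo] = (value[lo] + (1 - x) * value[node]) % f   # 0-edge: (1 - x) * p
    return value["1"]

def probably_equivalent(bp1, s1, bp2, s2, variables, f, rng=random):
    """One run of D: compare both polynomials at a random point of F^m."""
    a = {v: rng.randrange(f) for v in variables}
    return eval_bp_polynomial(bp1, s1, a, f) == eval_bp_polynomial(bp2, s2, a, f)

# Hypothetical examples: x1 AND x2 in two query orders, and x1 OR x2.
and_12 = {"n1": ("x1", "0", "n2"), "n2": ("x2", "0", "1")}
and_21 = {"m1": ("x2", "0", "m2"), "m2": ("x1", "0", "1")}
or_12 = {"o1": ("x1", "o2", "1"), "o2": ("x2", "0", "1")}

point = {"x1": 2, "x2": 5}
and_value = eval_bp_polynomial(and_12, "n1", point, 7)
or_value = eval_bp_polynomial(or_12, "o1", point, 7)
```

With $m = 2$ variables, the prime 7 satisfies the requirement of at least $3m = 6$ field elements. The two AND programs agree at every point, while AND and OR differ at the non-Boolean point $(2, 5)$.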

Theorem 10.13(proof)

• Relation between a read-once branching

program 𝐵 and the assigned polynomial 𝑝?

– For any Boolean assignments to 𝐵’s variables, all

polynomials assigned to its nodes evaluate to

either 1 or 0

• The polynomials that evaluate to 1 are those on the

computation path for the assignment

– Since $B$ is read-once, the polynomial is written as

$p = \sum \prod_i y_i$ (each $y_i$ is $x_i$, $1 - x_i$, or 1)

• $y_i = 1$: when a path doesn’t contain variable $x_i$

Theorem 10.13(proof)

• split “𝑦𝑖 = 1” in the polynomial 𝑝:

𝑦𝑖 = 1 = 𝑥𝑖 + (1 − 𝑥𝑖 )

• The end result is an equivalent polynomial 𝑞

that contains a product term for each

assignment on which 𝐵 evaluates to 1

Theorem 10.13(proof)

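• $B_1$ and $B_2$ are equivalent: this algorithm always accepts

– They evaluate to 1 on exactly the same assignments, so $q_1$ and $q_2$ are equal. Therefore $p_1$ and $p_2$ are always the same

• $B_1$ and $B_2$ are inequivalent: this algorithm rejects with a probability of at least $\frac{2}{3}$

– Shown by Lemma 10.15, applied to the nonzero polynomial $p_1 - p_2$ with $d = 1$ and $f \ge 3m$: the probability that it evaluates to 0 is at most $\frac{m}{f} \le \frac{1}{3}$

• We need Lemma 10.14 to prove Lemma 10.15

(QED)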

Lemma 10.14

• Lemma 10.14

– For every 𝑑 ≥ 0, a degree-𝑑 polynomial 𝑝 on a

single variable 𝑥 either has at most 𝑑 roots, or is

everywhere equal to 0.

Lemma 10.14(proof)

• Induction on 𝑑

– Basis:𝑑 = 0

• A polynomial of degree 0 is constant. If that constant is

not 0, the polynomial has no roots.

– Induction step: Assume true for 𝑑 − 1 and prove

true for 𝑑

• If $p$ is a non-zero polynomial of degree $d$ with a root at $a$

→ the polynomial $(x - a)$ divides $p$ evenly.

• Then the polynomial $p/(x - a)$ is a non-zero polynomial of degree $d - 1$, and it has at most $d - 1$ roots by virtue of the induction hypothesis. Together with the root $a$, $p$ has at most $d$ roots.

(QED)

Lemma 10.15

• Lemma 10.15

– Let $\mathcal{F}$ be a finite field with $f$ elements and let $p$ be a nonzero polynomial on the variables $x_1$ through $x_m$, where each variable has degree at most $d$. If $a_1$ through $a_m$ are selected randomly in $\mathcal{F}$, then

$\Pr[p(a_1, \ldots, a_m) = 0] \le \dfrac{md}{f}$
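For a small field the probability in the lemma can be computed exactly by enumeration. The sketch below assumes the field is the integers modulo a prime `f`; the two sample polynomials are hypothetical illustrations with $m = 2$ and $d = 1$.

```python
from fractions import Fraction
from itertools import product

def zero_fraction(poly, m, f):
    """Exact fraction of points in F^m (F = integers mod prime f) where poly is 0."""
    zeros = sum(1 for a in product(range(f), repeat=m) if poly(*a) % f == 0)
    return Fraction(zeros, f ** m)

m, d, f = 2, 1, 5
bound = Fraction(m * d, f)                                  # md / f = 2/5

frac_product = zero_fraction(lambda x1, x2: x1 * x2, m, f)       # zero when x1 = 0 or x2 = 0
frac_shifted = zero_fraction(lambda x1, x2: x1 * x2 - 1, m, f)   # zero when x1 * x2 = 1
```

Both fractions stay below the bound $md/f = 2/5$; the polynomial $x_1 x_2$ comes close to it with $9/25$.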

Lemma 10.15(proof)

• Induction on 𝑚

– Basis: 𝑚 = 1

By Lemma 10.14, 𝑝 has at most 𝑑 roots, so the

probability that 𝑎1 is one of them is at most 𝑑/𝑓

Lemma 10.15(proof)

• Induction on 𝑚

– Induction step: assume true for $m - 1$ and prove true for $m$

• $x_1$: one of $p$’s variables, then

$p = \sum_{i=0}^{d} x_1^{i} \times p_i(x_2, \ldots, x_m)$

• If $p(a_1, \ldots, a_m) = 0$, there are two possible cases.

1. All $p_i$ evaluate to 0

2. Some $p_i$ doesn’t evaluate to 0 and $a_1$ is a root of the single variable polynomial obtained by evaluating $p_0$ through $p_d$ on $a_2$ through $a_m$

Lemma 10.15(proof)

• Induction on 𝑚

– Bound the probability of the first case

• $p$ is non-zero, so one of the $p_j$ must be non-zero

• $\Pr[\text{first case}] \le \Pr[p_j \text{ evaluates to } 0]$

• By the induction hypothesis, $\Pr[p_j \text{ evaluates to } 0] \le (m - 1)d/f$

– Bound the probability of the second case

• On the assignment $a_2, \ldots, a_m$, $p$ reduces to a non-zero polynomial in the single variable $x_1$.

• The basis of the induction shows the probability is at most $d/f$

– Therefore the probability that $a_1, \ldots, a_m$ is a root of the polynomial is at most $(m - 1)d/f + d/f = md/f$

(QED)

Pseudorandom generators

• Here, we assume these algorithms use true

randomness

– True randomness is difficult (or impossible) to obtain

• → pseudorandom generators

– Deterministic algorithms whose output appears random

• Probabilistic algorithms may work equally well with pseudorandom generators

– Proving this is difficult

• Sometimes they may not work well with certain

pseudorandom generators

• Sophisticated pseudorandom generators

– Under the assumption of a one-way function

Summary

• A probabilistic Turing machine

– A type of nondeterministic TM with coin-flip steps

• class BPP

– The complexity class associated with polynomial

probabilistic TMs

• Examples of probabilistic algorithms

– Primality testing

– Checking equivalence of read-once branching

programs

• One-sided error and class RP

Thank you for listening!
