
Multiple Regression Analysis

$$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + u$$


Parallels with Simple Regression

$\beta_0$ is still the intercept

$\beta_1$ to $\beta_k$ are all called slope parameters

u is still the error term (or disturbance)

We still need a zero conditional mean assumption, so now assume that

$$E(u \mid x_1, x_2, \dots, x_k) = 0$$

We are still minimizing the sum of squared residuals, so there are now k + 1 first-order conditions
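To see what the k + 1 first-order conditions say, here is a minimal sketch on simulated data (the coefficients 1, 2 and -0.5, the sample size and the seed are arbitrary assumptions). It forms the normal equations X'X b = X'y directly and checks that, at the solution, the residuals sum to zero and are orthogonal to each regressor, which is exactly what the first-order conditions require. `solve` and `ols` are hypothetical helper names written in plain Python for this illustration, so no particular numerical library is assumed; real work would use a statistics package.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])  # k + 1 parameters
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)  # the k + 1 normal equations X'X b = X'y


random.seed(0)
n = 200
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [random.gauss(0, 1) for _ in range(n)]
y = [1.0 + 2.0 * a - 0.5 * b + random.gauss(0, 1) for a, b in zip(x1, x2)]

b_hat = ols([x1, x2], y)
u_hat = [yi - (b_hat[0] + b_hat[1] * a + b_hat[2] * b) for yi, a, b in zip(y, x1, x2)]

# The k + 1 = 3 first-order conditions evaluated at the OLS solution
foc = [
    sum(u_hat),                             # residuals sum to zero
    sum(a * u for a, u in zip(x1, u_hat)),  # residuals orthogonal to x1
    sum(b * u for b, u in zip(x2, u_hat)),  # residuals orthogonal to x2
]
print(b_hat)
print(foc)  # each entry is zero up to floating-point rounding
```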


Interpreting Multiple Regression

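$$\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x_1 + \hat{\beta}_2 x_2 + \dots + \hat{\beta}_k x_k,$$

so

$$\Delta\hat{y} = \hat{\beta}_1 \Delta x_1 + \hat{\beta}_2 \Delta x_2 + \dots + \hat{\beta}_k \Delta x_k,$$

so holding $x_2, \dots, x_k$ fixed implies that

$$\Delta\hat{y} = \hat{\beta}_1 \Delta x_1,$$

that is, each $\beta$ has a ceteris paribus interpretation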
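A tiny worked example of the ceteris paribus reading, using made-up coefficient values (hypothetical, not estimated from any data): when only $x_1$ moves, the fitted value changes by $\hat{\beta}_1 \Delta x_1$; once $x_2$ moves too, the change also picks up $\hat{\beta}_2 \Delta x_2$.

```python
# Hypothetical fitted coefficients (b0_hat, b1_hat, b2_hat), chosen only for illustration
b_hat = (1.0, 2.0, -0.5)


def y_hat(x1, x2):
    """Fitted value from the estimated equation y_hat = b0_hat + b1_hat*x1 + b2_hat*x2."""
    return b_hat[0] + b_hat[1] * x1 + b_hat[2] * x2


# Raise x1 by 3 while holding x2 fixed at 4: the change is b1_hat * 3
delta = y_hat(8.0, 4.0) - y_hat(5.0, 4.0)
print(delta)  # 6.0

# Change both x1 (by 3) and x2 (by 2): the change is b1_hat * 3 + b2_hat * 2
delta_both = y_hat(8.0, 6.0) - y_hat(5.0, 4.0)
print(delta_both)  # 5.0
```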


A “Partialling Out” Interpretation

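Consider the case where k = 2, i.e.

$$\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x_1 + \hat{\beta}_2 x_2,$$

then

$$\hat{\beta}_1 = \frac{\sum_i \hat{r}_{i1} y_i}{\sum_i \hat{r}_{i1}^2},$$

where $\hat{r}_{i1}$ are the residuals from the estimated regression

$$\hat{x}_1 = \hat{\gamma}_0 + \hat{\gamma}_2 x_2$$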

Economics 20 - Prof. Anderson


“Partialling Out” continued

The previous equation implies that regressing y on x1 and x2 gives the same effect of x1 as regressing y on the residuals from a regression of x1 on x2

This means only the part of $x_{i1}$ that is uncorrelated with $x_{i2}$ is being related to $y_i$, so we're estimating the effect of x1 on y after x2 has been “partialled out”
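The partialling-out result can be checked numerically. This sketch assumes simulated data in which x1 is correlated with x2 (all parameter values and the seed are arbitrary), fits the multiple regression through the normal equations, and compares its $\hat{\beta}_1$ with $\sum_i \hat{r}_{i1} y_i / \sum_i \hat{r}_{i1}^2$ built from the residuals of regressing x1 on x2. `solve` and `ols` are hypothetical helper names written in plain Python for illustration.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(1)
n = 300
x2 = [random.gauss(0, 1) for _ in range(n)]
x1 = [0.6 * b + random.gauss(0, 1) for b in x2]  # x1 is correlated with x2
y = [1.0 + 2.0 * a + 1.5 * b + random.gauss(0, 1) for a, b in zip(x1, x2)]

# Multiple regression of y on x1 and x2
b_multi = ols([x1, x2], y)

# Step 1: regress x1 on x2 and keep the residuals r1 (the part of x1 unrelated to x2)
g0, g2 = ols([x2], x1)
r1 = [a - (g0 + g2 * b) for a, b in zip(x1, x2)]

# Step 2: b1_hat = sum(r1 * y) / sum(r1 ** 2)
b1_partial = sum(r * yi for r, yi in zip(r1, y)) / sum(r * r for r in r1)

print(b_multi[1], b1_partial)  # the two agree up to rounding
```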


Simple vs Multiple Reg Estimate

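Compare the simple regression $\tilde{y} = \tilde{\beta}_0 + \tilde{\beta}_1 x_1$ with the multiple regression $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x_1 + \hat{\beta}_2 x_2$

Generally, $\tilde{\beta}_1 \neq \hat{\beta}_1$ unless:

$\hat{\beta}_2 = 0$ (i.e. no partial effect of $x_2$) OR

$x_1$ and $x_2$ are uncorrelated in the sample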
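A numerical sketch of when the two estimates differ, assuming simulated data with correlated regressors and a nonzero coefficient on x2 (arbitrary values; `solve` and `ols` are hypothetical plain-Python helpers). It also checks the standard algebraic identity $\tilde{\beta}_1 = \hat{\beta}_1 + \hat{\beta}_2 \tilde{\delta}_1$, where $\tilde{\delta}_1$ is the slope from regressing x2 on x1; the gap vanishes exactly when $\hat{\beta}_2 = 0$ or $\tilde{\delta}_1 = 0$ (no sample correlation).

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(2)
n = 300
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [0.5 * a + random.gauss(0, 1) for a in x1]  # x2 is correlated with x1
y = [1.0 + 2.0 * a + 1.5 * b + random.gauss(0, 1) for a, b in zip(x1, x2)]

b_multi = ols([x1, x2], y)  # [b0_hat, b1_hat, b2_hat]
b_simple = ols([x1], y)     # [b0_tilde, b1_tilde]
d = ols([x1], x2)           # [d0_tilde, d1_tilde]: regression of x2 on x1

print(b_simple[1], b_multi[1])         # differ: b2_hat != 0 and x1, x2 are correlated
print(b_multi[1] + b_multi[2] * d[1])  # equals b_simple[1] up to rounding
```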


Goodness-of-Fit

We can think of each observation as being made up of an explained part and an unexplained part, $y_i = \hat{y}_i + \hat{u}_i$. We then define the following:

$\sum_i (y_i - \bar{y})^2$ is the total sum of squares (SST)

$\sum_i (\hat{y}_i - \bar{y})^2$ is the explained sum of squares (SSE)

$\sum_i \hat{u}_i^2$ is the residual sum of squares (SSR)

Then SST = SSE + SSR
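A quick numerical check of SST = SSE + SSR on simulated data (arbitrary parameter values; `solve` and `ols` are hypothetical plain-Python helpers). The identity relies on the regression including an intercept, so that the residuals sum to zero and are uncorrelated with the fitted values.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(3)
n = 150
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [0.4 * a + random.gauss(0, 1) for a in x1]
y = [1.0 + 2.0 * a + 1.5 * b + random.gauss(0, 2) for a, b in zip(x1, x2)]

coef = ols([x1, x2], y)
y_fit = [coef[0] + coef[1] * a + coef[2] * b for a, b in zip(x1, x2)]  # explained part
u_hat = [yi - fi for yi, fi in zip(y, y_fit)]                          # unexplained part
y_bar = sum(y) / n

sst = sum((yi - y_bar) ** 2 for yi in y)
sse = sum((fi - y_bar) ** 2 for fi in y_fit)
ssr = sum(u * u for u in u_hat)
print(sst, sse + ssr)  # equal up to rounding
```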


Goodness-of-Fit (continued)

How do we think about how well our sample regression line fits our sample data?

We can compute the fraction of the total sum of squares (SST) that is explained by the model; call this the R-squared of the regression

$$R^2 = SSE/SST = 1 - SSR/SST$$


Goodness-of-Fit (continued)

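We can also think of $R^2$ as being equal to the squared correlation coefficient between the actual $y_i$ and the fitted values $\hat{y}_i$:

$$R^2 = \frac{\left(\sum_i (y_i - \bar{y})(\hat{y}_i - \bar{\hat{y}})\right)^2}{\left(\sum_i (y_i - \bar{y})^2\right)\left(\sum_i (\hat{y}_i - \bar{\hat{y}})^2\right)}$$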
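Under a simulated setup (arbitrary values; `solve` and `ols` are hypothetical plain-Python helpers), this sketch computes $R^2$ three ways: as SSE/SST, as 1 - SSR/SST, and as the squared sample correlation between $y_i$ and $\hat{y}_i$. With an intercept in the model, all three agree up to rounding.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(5)
n = 150
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [0.4 * a + random.gauss(0, 1) for a in x1]
y = [1.0 + 2.0 * a + 1.5 * b + random.gauss(0, 2) for a, b in zip(x1, x2)]

coef = ols([x1, x2], y)
y_fit = [coef[0] + coef[1] * a + coef[2] * b for a, b in zip(x1, x2)]
y_bar = sum(y) / n
f_bar = sum(y_fit) / n  # equals y_bar because the model has an intercept

sst = sum((yi - y_bar) ** 2 for yi in y)
sse = sum((fi - y_bar) ** 2 for fi in y_fit)
ssr = sum((yi - fi) ** 2 for yi, fi in zip(y, y_fit))

r2_from_sse = sse / sst
r2_from_ssr = 1 - ssr / sst
cov = sum((yi - y_bar) * (fi - f_bar) for yi, fi in zip(y, y_fit))
r2_from_corr = cov ** 2 / (sst * sum((fi - f_bar) ** 2 for fi in y_fit))
print(r2_from_sse, r2_from_ssr, r2_from_corr)
```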


More about R-squared

$R^2$ can never decrease when another independent variable is added to a regression, and it usually will increase

Because $R^2$ will usually increase with the number of independent variables, it is not a good way to compare models with different numbers of regressors
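To illustrate that $R^2$ cannot fall when a regressor is added, this sketch (simulated data with arbitrary values; `solve`, `ols` and `r_squared` are hypothetical plain-Python helpers) adds a variable that is pure noise, generated independently of y. The larger model still reports an $R^2$ at least as high.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


def r_squared(cols, y):
    """R^2 = 1 - SSR/SST for the OLS regression of y on an intercept plus cols."""
    coef = ols(cols, y)
    fit = [coef[0] + sum(c * col[i] for c, col in zip(coef[1:], cols)) for i in range(len(y))]
    y_bar = sum(y) / len(y)
    sst = sum((v - y_bar) ** 2 for v in y)
    ssr = sum((v - f) ** 2 for v, f in zip(y, fit))
    return 1 - ssr / sst


random.seed(4)
n = 100
x1 = [random.gauss(0, 1) for _ in range(n)]
y = [1.0 + 2.0 * a + random.gauss(0, 1) for a in x1]
noise = [random.gauss(0, 1) for _ in range(n)]  # generated independently of y

r2_small = r_squared([x1], y)
r2_big = r_squared([x1, noise], y)
print(r2_small, r2_big)  # r2_big is never below r2_small
```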


Assumptions for Unbiasedness

Population model is linear in parameters:

$$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + u$$

We can use a random sample of size n, $\{(x_{i1}, x_{i2}, \dots, x_{ik}, y_i): i = 1, 2, \dots, n\}$, from the population model, so that the sample model is

$$y_i = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \dots + \beta_k x_{ik} + u_i$$

$E(u \mid x_1, x_2, \dots, x_k) = 0$, implying that all of the explanatory variables are exogenous

None of the x’s is constant, and there are no exact linear relationships among them


Too Many or Too Few Variables

What happens if we include variables in our specification that don’t belong?

There is no effect on the unbiasedness of our parameter estimates: OLS remains unbiased, although the variances of the estimators can increase

What if we exclude a variable from our specification that does belong?

OLS will usually be biased
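A small Monte Carlo sketch of the first point, under assumed values: the true model is y = 1 + 2x1 + u, and we also include an irrelevant x2 that is correlated with x1. Averaged over many simulated samples, the estimate of $\beta_1$ stays centered on 2. The replication count, sample size, seed and helper names (`solve`, `ols`) are illustrative choices, not part of the original slides.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(6)
reps, n = 500, 50
estimates = []
for _ in range(reps):
    x1 = [random.gauss(0, 1) for _ in range(n)]
    x2 = [0.7 * a + random.gauss(0, 1) for a in x1]       # correlated with x1 but irrelevant
    y = [1.0 + 2.0 * a + random.gauss(0, 1) for a in x1]  # true beta2 = 0
    estimates.append(ols([x1, x2], y)[1])                  # b1_hat from the overspecified model

mean_b1 = sum(estimates) / reps
print(mean_b1)  # close to the true beta1 = 2.0
```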


Omitted Variable Bias

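Suppose the true model is given as

$$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + u,$$

but we estimate

$$y = \tilde{\beta}_0 + \tilde{\beta}_1 x_1 + \tilde{u},$$

then

$$\tilde{\beta}_1 = \frac{\sum_i (x_{i1} - \bar{x}_1) y_i}{\sum_i (x_{i1} - \bar{x}_1)^2}$$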


Omitted Variable Bias (cont)

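Recall the true model, so that

$$y_i = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + u_i,$$

so the numerator becomes

$$\sum_i (x_{i1} - \bar{x}_1)(\beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + u_i) = \beta_1 \sum_i (x_{i1} - \bar{x}_1)^2 + \beta_2 \sum_i (x_{i1} - \bar{x}_1) x_{i2} + \sum_i (x_{i1} - \bar{x}_1) u_i$$

(the $\beta_0$ term drops out because $\sum_i (x_{i1} - \bar{x}_1) = 0$)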


Omitted Variable Bias (cont)

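$$\tilde{\beta}_1 = \beta_1 + \beta_2 \frac{\sum_i (x_{i1} - \bar{x}_1) x_{i2}}{\sum_i (x_{i1} - \bar{x}_1)^2} + \frac{\sum_i (x_{i1} - \bar{x}_1) u_i}{\sum_i (x_{i1} - \bar{x}_1)^2}$$

Since $E(u_i) = 0$ (conditional on the sample values of the x’s), taking expectations we have

$$E(\tilde{\beta}_1) = \beta_1 + \beta_2 \frac{\sum_i (x_{i1} - \bar{x}_1) x_{i2}}{\sum_i (x_{i1} - \bar{x}_1)^2}$$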


Omitted Variable Bias (cont)

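Consider the regression of $x_2$ on $x_1$:

$$\tilde{x}_2 = \tilde{\delta}_0 + \tilde{\delta}_1 x_1,$$

then

$$\tilde{\delta}_1 = \frac{\sum_i (x_{i1} - \bar{x}_1) x_{i2}}{\sum_i (x_{i1} - \bar{x}_1)^2},$$

so

$$E(\tilde{\beta}_1) = \beta_1 + \beta_2 \tilde{\delta}_1$$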
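The result $E(\tilde{\beta}_1) = \beta_1 + \beta_2 \tilde{\delta}_1$ can be checked by simulation. This sketch assumes a true model with $\beta_1 = 2$, $\beta_2 = 1.5$ and $x_2 = 0.5 x_1 + v$ (all arbitrary choices), then repeatedly runs the short regression of y on x1 alone. Its average lands near $2 + 1.5 \times 0.5 = 2.75$ rather than 2, while the per-sample gap $\tilde{\beta}_1 - (\beta_1 + \beta_2 \tilde{\delta}_1)$ averages to about zero. `slope` is a hypothetical helper for the simple-regression slope.

```python
import random


def slope(x, y):
    """Simple-regression OLS slope: sum((x - x_bar) * y) / sum((x - x_bar) ** 2)."""
    x_bar = sum(x) / len(x)
    return sum((a - x_bar) * b for a, b in zip(x, y)) / sum((a - x_bar) ** 2 for a in x)


random.seed(10)
beta1, beta2 = 2.0, 1.5
reps, n = 1000, 100
b1_tilde, gaps = [], []
for _ in range(reps):
    x1 = [random.gauss(0, 1) for _ in range(n)]
    x2 = [0.5 * a + random.gauss(0, 1) for a in x1]
    y = [1.0 + beta1 * a + beta2 * b + random.gauss(0, 1) for a, b in zip(x1, x2)]
    bt = slope(x1, y)   # short regression that omits x2
    d1 = slope(x1, x2)  # delta1_tilde from regressing x2 on x1
    b1_tilde.append(bt)
    gaps.append(bt - (beta1 + beta2 * d1))

print(sum(b1_tilde) / reps)  # near beta1 + beta2 * 0.5 = 2.75, not 2.0
print(sum(gaps) / reps)      # near 0
```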


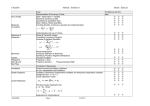

Summary of Direction of Bias

| | Corr(x1, x2) > 0 | Corr(x1, x2) < 0 |
|---|---|---|
| $\beta_2 > 0$ | Positive bias | Negative bias |
| $\beta_2 < 0$ | Negative bias | Positive bias |


Omitted Variable Bias Summary

Two cases where the bias is equal to zero:

$\beta_2 = 0$, that is, $x_2$ doesn’t really belong in the model

$x_1$ and $x_2$ are uncorrelated in the sample

If the correlation between $x_2$ and $x_1$ and the partial effect of $x_2$ on $y$ ($\beta_2$) go in the same direction, the bias will be positive

If they go in opposite directions, the bias will be negative


The More General Case

Technically, we can only sign the bias in the more general case if all of the included x’s are uncorrelated

Typically, then, we work through the bias assuming the x’s are uncorrelated, as a useful guide even if this assumption is not strictly true


Variance of the OLS Estimators

Now we know that the sampling distribution of our estimate is centered around the true parameter

We want to think about how spread out this distribution is

This variance is much easier to think about under an additional assumption, so assume

$$\operatorname{Var}(u \mid x_1, x_2, \dots, x_k) = \sigma^2$$

(Homoskedasticity)


Variance of OLS (cont)

Let $x$ stand for $(x_1, x_2, \dots, x_k)$

Assuming that $\operatorname{Var}(u \mid x) = \sigma^2$ also implies that $\operatorname{Var}(y \mid x) = \sigma^2$

The 4 assumptions for unbiasedness, plus this homoskedasticity assumption, are known as the Gauss-Markov assumptions


Variance of OLS (cont)

Given the Gauss-Markov assumptions,

$$\operatorname{Var}(\hat{\beta}_j) = \frac{\sigma^2}{SST_j (1 - R_j^2)},$$

where $SST_j = \sum_i (x_{ij} - \bar{x}_j)^2$ and $R_j^2$ is the $R^2$ from regressing $x_j$ on all other x’s
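The variance formula can be cross-checked against the matrix expression $\sigma^2 [(X'X)^{-1}]_{jj}$, the usual homoskedastic OLS variance. This sketch treats $\sigma^2 = 1$ as known and uses simulated, correlated x1 and x2 (arbitrary values); `solve` and `ols` are hypothetical plain-Python helpers. Note that $SST_1(1 - R_1^2)$ is just the residual sum of squares from regressing x1 on x2 with an intercept.

```python
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(7)
n, sigma2 = 200, 1.0  # sigma2 is treated as known here, for illustration
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [0.6 * a + random.gauss(0, 1) for a in x1]

# Matrix route: Var(b1_hat) = sigma2 * [(X'X)^{-1}]_{11}, read off by solving X'X z = e_1
X = [[1.0, a, b] for a, b in zip(x1, x2)]
xtx = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
z = solve(xtx, [0.0, 1.0, 0.0])
var_matrix = sigma2 * z[1]

# Slide formula: sigma2 / (SST_1 * (1 - R_1^2)), with R_1^2 from regressing x1 on x2
x1_bar = sum(x1) / n
sst1 = sum((a - x1_bar) ** 2 for a in x1)
g = ols([x2], x1)
ssr1 = sum((a - (g[0] + g[1] * b)) ** 2 for a, b in zip(x1, x2))
r2_1 = 1 - ssr1 / sst1
var_formula = sigma2 / (sst1 * (1 - r2_1))

print(var_matrix, var_formula)  # agree up to rounding
```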


Components of OLS Variances

The error variance: a larger $\sigma^2$ implies a larger variance for the OLS estimators

The total sample variation: a larger $SST_j$ implies a smaller variance for the estimators

Linear relationships among the independent variables: a larger $R_j^2$ implies a larger variance for the estimators


Misspecified Models

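Consider again the misspecified model

$$\tilde{y} = \tilde{\beta}_0 + \tilde{\beta}_1 x_1, \quad \text{so that} \quad \operatorname{Var}(\tilde{\beta}_1) = \frac{\sigma^2}{SST_1}$$

Thus, $\operatorname{Var}(\tilde{\beta}_1) < \operatorname{Var}(\hat{\beta}_1)$ unless $x_1$ and $x_2$ are uncorrelated, in which case they’re the same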
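A numerical sketch of the comparison, assuming $\sigma^2 = 1$ is known and x1, x2 are simulated with positive correlation (arbitrary values; `centered` is a hypothetical helper). With only one other regressor, $R_1^2$ equals the squared sample correlation of x1 and x2, and the multiple-regression variance exceeds the simple-regression variance by the factor $1/(1 - R_1^2)$.

```python
import random


def centered(v):
    """Deviations from the sample mean."""
    m = sum(v) / len(v)
    return [a - m for a in v]


random.seed(8)
n, sigma2 = 200, 1.0  # sigma2 treated as known, for illustration
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [0.6 * a + random.gauss(0, 1) for a in x1]

c1, c2 = centered(x1), centered(x2)
sst1 = sum(a * a for a in c1)
# With one other regressor, R_1^2 from regressing x1 on x2 is their squared sample correlation
r2_1 = sum(a * b for a, b in zip(c1, c2)) ** 2 / (sst1 * sum(b * b for b in c2))

var_tilde = sigma2 / sst1               # simple regression of y on x1 (x2 omitted)
var_hat = sigma2 / (sst1 * (1 - r2_1))  # multiple regression on x1 and x2
print(r2_1, var_tilde, var_hat)
```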


Misspecified Models (cont)

While the variance of the estimator is smaller for the misspecified model, unless $\beta_2 = 0$ the misspecified model is biased

As the sample size grows, the variance of each estimator shrinks to zero, making the variance difference less important


Estimating the Error Variance

We don’t know what the error variance, $\sigma^2$, is, because we don’t observe the errors, $u_i$

What we observe are the residuals, $\hat{u}_i$

We can use the residuals to form an estimate of the error variance


Error Variance Estimate (cont)

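$$\hat{\sigma}^2 = \frac{\sum_i \hat{u}_i^2}{n - k - 1} = \frac{SSR}{df},$$

thus,

$$\operatorname{se}(\hat{\beta}_j) = \frac{\hat{\sigma}}{\left[SST_j (1 - R_j^2)\right]^{1/2}}$$

df = n - (k + 1), or df = n - k - 1

df (i.e. degrees of freedom) is the (number of observations) - (number of estimated parameters)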
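Putting the pieces together in a sketch on simulated data (true $\sigma^2 = 1$, k = 2, other values arbitrary; `solve` and `ols` are hypothetical plain-Python helpers): $\hat{\sigma}^2$ divides SSR by df = n - k - 1, and $\operatorname{se}(\hat{\beta}_1)$ from the formula above is cross-checked against the matrix version $\sqrt{\hat{\sigma}^2 [(X'X)^{-1}]_{11}}$.

```python
import math
import random


def solve(a, rhs):
    """Solve the square system a @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(a, rhs)]  # augmented matrix [a | rhs]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def ols(cols, y):
    """OLS of y on an intercept plus the regressors in cols; returns [b0, b1, ..., bk]."""
    X = [[1.0] + [col[i] for col in cols] for i in range(len(y))]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


random.seed(9)
n, k = 500, 2
x1 = [random.gauss(0, 1) for _ in range(n)]
x2 = [0.6 * a + random.gauss(0, 1) for a in x1]
y = [1.0 + 2.0 * a + 1.5 * b + random.gauss(0, 1) for a, b in zip(x1, x2)]  # true sigma^2 = 1

coef = ols([x1, x2], y)
u_hat = [yi - (coef[0] + coef[1] * a + coef[2] * b) for yi, a, b in zip(y, x1, x2)]
df = n - k - 1                               # observations minus estimated parameters
sigma2_hat = sum(u * u for u in u_hat) / df  # SSR / df
sigma_hat = math.sqrt(sigma2_hat)

# se(b1_hat) = sigma_hat / sqrt(SST_1 * (1 - R_1^2)), R_1^2 from regressing x1 on x2
x1_bar = sum(x1) / n
sst1 = sum((a - x1_bar) ** 2 for a in x1)
g = ols([x2], x1)
r2_1 = 1 - sum((a - (g[0] + g[1] * b)) ** 2 for a, b in zip(x1, x2)) / sst1
se_b1 = sigma_hat / math.sqrt(sst1 * (1 - r2_1))

# Cross-check: sqrt(sigma2_hat * [(X'X)^{-1}]_{11})
X = [[1.0, a, b] for a, b in zip(x1, x2)]
xtx = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
se_matrix = math.sqrt(sigma2_hat * solve(xtx, [0.0, 1.0, 0.0])[1])

print(df, sigma2_hat, se_b1, se_matrix)
```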


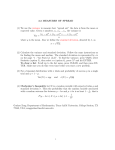

The Gauss-Markov Theorem

Given our 5 Gauss-Markov assumptions, it can be shown that OLS is “BLUE”, that is, it has the smallest variance among all linear unbiased estimators:

Best

Linear

Unbiased

Estimator

Thus, if the assumptions hold, use OLS
