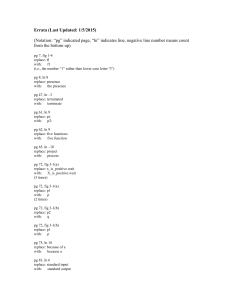

Errata1

... block numbers in the table refer to the original block numbers, i.e., before the deletion or insertion. For example, when block 4 is deleted, we continue referring to the 3 blocks following block 4 as 5, 6, 7, even though these blocks get shifted to the left and thus become the logical blocks 4, 5, ...
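
The distinction between original and logical block numbers in the snippet above can be sketched as follows. This is only an illustration under assumptions: the helper name `logical_positions` and the seven-block range are hypothetical and do not come from the errata.

```python
def logical_positions(original_blocks, deleted):
    """Map each surviving original block number to its logical number after a deletion."""
    survivors = [b for b in original_blocks if b != deleted]
    # Logical numbers stay contiguous, starting from the first original number.
    start = original_blocks[0]
    return {orig: start + i for i, orig in enumerate(survivors)}


# Hypothetical file with original blocks 1..7; block 4 is deleted.
mapping = logical_positions(list(range(1, 8)), deleted=4)
for orig, logical in mapping.items():
    print(f"original block {orig} -> logical block {logical}")
```

Blocks 5, 6, 7 keep their original names in the table while their logical positions shift left by one.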

Thread

... – One thread (the main thread) listens on the server port for client connection requests and assigns (creates) a thread for each client connected – Each client is served in its own thread on the server – The listening thread should provide client information (e.g. at least the connected socket) to t ...
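
The thread-per-client structure described above might look like the following minimal sketch. It assumes an echo service, which the snippet does not specify. The names `handle_client`, `serve`, and `start_server` are hypothetical. For demonstration the listening loop runs in a background thread, whereas the snippet has the main thread do the listening.

```python
import socket
import threading


def handle_client(conn, addr):
    """Serve one client in its own thread (assumed behaviour: echo the data back)."""
    with conn:
        while True:
            data = conn.recv(1024)
            if not data:          # client closed the connection
                break
            conn.sendall(data)


def serve(listener, stop_event):
    """Listening loop: accept connections and create one thread per client."""
    listener.settimeout(0.2)      # wake up periodically to check stop_event
    with listener:
        while not stop_event.is_set():
            try:
                conn, addr = listener.accept()
            except socket.timeout:
                continue
            conn.settimeout(None)  # make sure the client socket blocks
            # Hand the connected socket (the client information) to the new thread.
            threading.Thread(target=handle_client, args=(conn, addr), daemon=True).start()


def start_server(host="127.0.0.1", port=0):
    """Bind, listen, and run the listening loop; returns (port, stop_event)."""
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    listener.bind((host, port))
    listener.listen()
    stop_event = threading.Event()
    threading.Thread(target=serve, args=(listener, stop_event), daemon=True).start()
    return listener.getsockname()[1], stop_event
```

Calling `start_server()` returns the chosen port and an event; setting the event asks the listening loop to stop.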

... – One thread (the main thread) listens on the server port for client connection requests and assigns (creates) a thread for each client connected – Each client is served in its own thread on the server – The listening thread should provide client information (e.g. at least the connected socket) to t ...

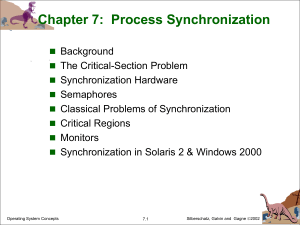

Chapter 5: Process Synchronization

... critical section next cannot be postponed indefinitely 3. Bounded Waiting - A bound must exist on the number of times that other processes are allowed to enter their critical sections after a process has made a request to enter its critical section and before that request is granted ...

Martingale problem approach to Markov processes

... considered, it was clear that one has to consider situations other than i.i.d. (independent and identically distributed). ...

The C++ language, STL

... In C++, a struct and a class are almost the same thing. Both can have methods. public, private and protected can be used with both. Inheritance is the same. Both are stored in the same way in memory. The only difference is that members are public by default in a struct and private by default in a cla ...

ppt

... • Occurs if the effect of multiple threads on shared data depends on the order in which the threads are scheduled ...
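
To make the order dependence concrete, the sketch below enumerates every interleaving of two threads that each increment a shared counter in three steps (load, add, store). It is a deterministic simulation, not real threading, and the names `run_schedule` and `all_schedules` are hypothetical.

```python
from itertools import combinations


def run_schedule(schedule):
    """Run two simulated threads' load/add/store steps in the given order of thread ids."""
    shared = 0
    local = {0: None, 1: None}   # each thread's private register
    pc = {0: 0, 1: 0}            # each thread's next step
    for tid in schedule:
        if pc[tid] == 0:
            local[tid] = shared      # load the shared value
        elif pc[tid] == 1:
            local[tid] += 1          # add one in the private register
        else:
            shared = local[tid]      # store the result back
        pc[tid] += 1
    return shared


def all_schedules():
    """Yield every interleaving of three steps of thread 0 with three steps of thread 1."""
    for slots in combinations(range(6), 3):
        yield tuple(0 if i in slots else 1 for i in range(6))


outcomes = {run_schedule(s) for s in all_schedules()}
print(sorted(outcomes))
```

Some schedules leave the counter at 2 and others at 1 (a lost update), so the result depends on how the steps are scheduled.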

dist-prog2

... Most parallel languages talk about processes: – these can be on different processors or on different computers ...

Thread

... single chain of instruction execution Interprocess communication share common resources synchronization Concurrent programming: introduces these concepts in programming languages (i.e. new programming primitives) investigates how to build compound systems safely ...

MAITA Project CyberPanel review

... • To modify/observe a component find a residence of the component and modify/observe it in the residence • To modify/observe a component find a migration path and modify/observe it during the transmission Maita Final, Dec. 5, 2002 -- **Not for distribution** ...

ch7

... struct process *L; // list of processes waiting on this semaphore } semaphore; Assume two simple operations: block() suspends the process that invokes it. (places the process in a waiting queue associated with the semaphore) This allows the CPU scheduler to switch in a process that could actua ...
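
A semaphore with a waiting list, in the spirit of the snippet above, could be sketched as below. Several things are assumptions because the snippet is truncated: the wait/signal bodies follow the common textbook convention (a negative value counts the waiters), the list is served in FIFO order, and the class name `QueueSemaphore` is hypothetical. A per-waiter `threading.Event` stands in for `block()` and the matching wakeup.

```python
import threading
from collections import deque


class QueueSemaphore:
    """Counting semaphore that suspends waiters on a list instead of busy waiting."""

    def __init__(self, value):
        self.value = value
        self.waiting = deque()           # plays the role of the list L
        self._guard = threading.Lock()   # makes wait/signal atomic

    def wait(self):
        with self._guard:
            self.value -= 1
            if self.value >= 0:
                return                   # resource available, no need to block
            ticket = threading.Event()
            self.waiting.append(ticket)  # join the waiting list
        ticket.wait()                    # block(): suspend until woken

    def signal(self):
        with self._guard:
            self.value += 1
            if self.value <= 0:
                # At least one waiter exists; wake the one that waited longest (FIFO).
                self.waiting.popleft().set()
```

Serving the list in FIFO order is one choice among many; it bounds how long any waiter can be passed over.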

Module 7: Process Synchronization

... link field in each process control block (PCB). This list can use a FIFO to ensure bounded waiting. However, the list may use any queueing strategy. ...

Chapter 7 Process Synchronization

... Starvation – indefinite blocking. A process may never be removed from the semaphore queue in which it is suspended. ...

Bobtail: Avoiding Long Tails in the Cloud

... enter the BOOST state, which allows a VM to automatically receive first execution priority when it wakes due to an I/O interrupt event. VMs in the same BOOST state run in FIFO order. Even with this optimization, Xen’s credit scheduler is known to be unfair to latency-sensitive workloads [24, 8]. As ...

... enter the BOOST state, which allows a VM to automatically receive first execution priority when it wakes due to an I/O interrupt event. VMs in the same BOOST state run in FIFO order. Even with this optimization, Xen’s credit scheduler is known to be unfair to latency-sensitive workloads [24, 8]. As ...

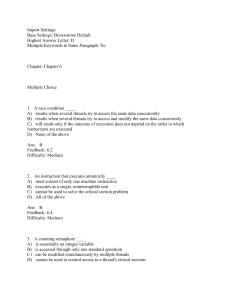

Import Settings: Base Settings: Brownstone Default Highest Answer

... section if a thread is currently executing in its critical section. Furthermore, only those threads that are not executing in their critical sections can participate in the decision on which process will enter its critical section next. Finally, a bound must exist on the number of times that other t ...

Chapter 5

... 3. Bounded Waiting - A bound must exist on the number of times that other processes are allowed to enter their critical sections after a process has made a request to enter its critical section and before that request is granted ...

Chapter 5: Process Synchronization

... Preemptive – allows preemption of process when running in kernel mode ...

Chapter 6 Slides

... – c – integer expression evaluated when the wait operation is executed. – value of c (priority number) stored with the name of the process that is suspended. – when x.signal is executed, process with smallest associated priority number is resumed next. Check two conditions to establish correctness of ...
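
The conditional-wait construct described above can be sketched with a heap keyed on the priority number. This is a simplified illustration: the class name `PriorityCondition` is hypothetical, ties are broken by arrival order (an assumption), and the surrounding monitor lock that a real monitor would release during the wait is left out.

```python
import heapq
import itertools
import threading


class PriorityCondition:
    """Condition variable where signal() resumes the waiter with the smallest c."""

    def __init__(self):
        self._guard = threading.Lock()
        self._heap = []                       # entries: (c, arrival number, event)
        self._arrivals = itertools.count()    # tie-breaker for equal priorities

    def wait(self, c):
        """Suspend the caller; c is evaluated by the caller when wait is executed."""
        wakeup = threading.Event()
        with self._guard:
            heapq.heappush(self._heap, (c, next(self._arrivals), wakeup))
        wakeup.wait()

    def signal(self):
        """Resume the suspended waiter with the smallest priority number, if any."""
        with self._guard:
            if self._heap:
                _, _, wakeup = heapq.heappop(self._heap)
                wakeup.set()

    def waiting(self):
        """Number of currently suspended waiters."""
        with self._guard:
            return len(self._heap)
```

With waiters suspended at priorities 30, 10, and 20, three successive `signal()` calls resume them in the order 10, 20, 30.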

Research Statement - Singapore Management University

... decentralized resource allocation and scheduling problems. In this framework, jobs are represented by agents, and machine timeslots are treated as resources bid on by job agents through an auctioneer. Given the temporal dependency between tasks, resource bids are combinatorial. I developed different ...
