1.10 Euler's Method

... generate a sequence of approximations y1 , y2 , . . . to the value of the exact solution at the points x1 , x2 , . . . , where xn+1 = xn + h, n = 0, 1, . . . , and h is a real number. We emphasize that numerical methods do not generate a formula for the solution to the differential equation. Rather ...
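The recurrence described in this excerpt can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `euler`, its parameters, and the test problem y' = y, y(0) = 1 are hypothetical and do not come from the excerpt; the update rule y_{n+1} = y_n + h f(x_n, y_n) is the standard Euler step.

```python
def euler(f, x0, y0, h, n_steps):
    """Approximate the solution of y' = f(x, y), y(x0) = y0.

    Returns lists xs, ys where ys[n] approximates the exact solution at xs[n].
    No formula for the solution is produced, only values at the grid points.
    """
    xs, ys = [x0], [y0]
    for _ in range(n_steps):
        x, y = xs[-1], ys[-1]
        ys.append(y + h * f(x, y))  # y_{n+1} = y_n + h * f(x_n, y_n)
        xs.append(x + h)            # x_{n+1} = x_n + h
    return xs, ys


# Hypothetical test problem (an assumption): y' = y, y(0) = 1, exact solution e^x.
xs, ys = euler(lambda x, y: y, 0.0, 1.0, 0.1, 10)
```

For this test problem each step multiplies the current value by 1 + h, so the approximation at x = 1 is (1.1)^10, which underestimates e; a smaller h narrows the gap at the cost of more steps.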

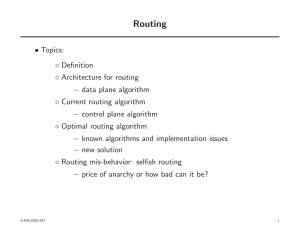

Thomas L. Magnanti and Georgia Perakis

... of the problem. Clearly, L and l depend on the feasible set K, i.e., L = L(K) and l = l(K), and are part of our input data. ...

probability

... probability of obtaining at least 9 Heads away from 50 is 0.0886; probability of obtaining at least 10 Heads away from 50 is 0.0569; probability of obtaining at least 11 Heads away from 50 is 0.0352; probability of obtaining at least 12 Heads away from 50 is 0.0210; probability of obtaining at l ...
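Figures of this kind can be checked with an exact binomial sum. The sketch below assumes 100 tosses of a fair coin, which the excerpt does not state but which is consistent with the quoted values; the function name `prob_at_least_k_away` is hypothetical.

```python
from math import comb


def prob_at_least_k_away(k, n=100):
    """P(|heads - n/2| >= k) for n tosses of a fair coin (n assumed even)."""
    center = n // 2
    # Count the outcomes whose number of heads is at least k away from the center.
    favourable = sum(
        comb(n, heads) for heads in range(n + 1) if abs(heads - center) >= k
    )
    return favourable / 2 ** n


probabilities = {k: prob_at_least_k_away(k) for k in (9, 10, 11, 12)}
```

Under that assumption the computed values agree with the excerpt to the four decimal places shown.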

Monte Carlo sampling of solutions to inverse problems

... of the model space by using a method described by Wiggins [1969, 1972] in which the model space was sampled according to the prior distribution ρ(m). This approach is superior to a uniform sampling by crude Monte Carlo. However, the peaks of the prior distribution are typically much less pronounced ...

AWA* - A Window Constrained Anytime Heuristic Search

... terms of information content and performs a global competition among all the partially explored paths to select a new node. In practice, the heuristic errors are usually distance dependent [Pearl, 1984]. Therefore, the nodes lying in the same locality are expected to have comparable errors, whereas ...

Variance and Standard Deviation - Penn Math

... distribution is the mean or expected value E(X). The next one is the variance Var(X) = σ²(X). The square root of the variance, σ, is called the standard deviation. For a continuous random variable X with probability density function f(x) defined on [A, B] we saw: ...
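As an illustration of these quantities, and not a reconstruction of the formula elided from the excerpt, the mean and variance of a density on [A, B] can be approximated numerically using the standard definitions E(X) = ∫ x f(x) dx and Var(X) = ∫ (x − E(X))² f(x) dx. The function name, the midpoint rule, and the uniform density on [0, 1] are assumptions made for this sketch.

```python
def mean_and_variance(pdf, a, b, n=10_000):
    """Approximate E(X) and Var(X) for a density pdf on [a, b] (midpoint rule)."""
    h = (b - a) / n
    mids = [a + (i + 0.5) * h for i in range(n)]  # midpoints of n equal subintervals
    mean = sum(x * pdf(x) for x in mids) * h
    var = sum((x - mean) ** 2 * pdf(x) for x in mids) * h
    return mean, var


# Hypothetical example: the uniform density f(x) = 1 on [0, 1].
mean, var = mean_and_variance(lambda x: 1.0, 0.0, 1.0)
std_dev = var ** 0.5  # the standard deviation is the square root of the variance
```

For the uniform density the exact values are 1/2 and 1/12, so the numerical result gives a quick sanity check on the definitions.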

A Simplex Algorithm Whose Average Number of Steps Is Bounded

... shown that the number of steps is usually much smaller. This has been observed while solving practical problems, as well as those generated in a laboratory. It has been a challenge to confirm these findings theoretically. Tremendous effort has been made in the direction of studying properties of con ...

slides

... local optima (where all the neighbouring solutions are non-improving) by guiding a steepest descent local search (or steepest ascent hill climbing) algorithm; • Uses memory to: – prevent the search from revisiting previously visited solutions; – explore the unvisited areas of the solution space; ...

Simulated annealing

Simulated annealing (SA) is a generic probabilistic metaheuristic for the global optimization problem of locating a good approximation to the global optimum of a given function in a large search space. It is often used when the search space is discrete (e.g., all tours that visit a given set of cities). For certain problems, simulated annealing may be more efficient than exhaustive enumeration, provided that the goal is merely to find an acceptably good solution in a fixed amount of time, rather than the best possible solution.

The name and inspiration come from annealing in metallurgy, a technique involving heating and controlled cooling of a material to increase the size of its crystals and reduce their defects. Both are attributes of the material that depend on its thermodynamic free energy. Heating and cooling the material affects both the temperature and the thermodynamic free energy. While the same amount of cooling brings the same amount of decrease in temperature, it will bring a bigger or smaller decrease in the thermodynamic free energy depending on the rate at which it occurs, with a slower rate producing a bigger decrease.

This notion of slow cooling is implemented in the simulated annealing algorithm as a slow decrease in the probability of accepting worse solutions as it explores the solution space. Accepting worse solutions is a fundamental property of metaheuristics because it allows for a more extensive search for the optimal solution.

The method was independently described by Scott Kirkpatrick, C. Daniel Gelatt and Mario P. Vecchi in 1983, and by Vlado Černý in 1985. The method is an adaptation of the Metropolis–Hastings algorithm, a Monte Carlo method to generate sample states of a thermodynamic system, invented by M.N. Rosenbluth and published in a paper by N. Metropolis et al. in 1953.
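The idea of slowly lowering the probability of accepting worse solutions can be sketched as follows. This is a minimal illustration, not the formulation of the cited papers: the acceptance rule exp(−Δ/T), the geometric cooling schedule, all parameter values, and the toy cost function are assumptions chosen for brevity.

```python
import math
import random


def simulated_annealing(cost, neighbour, start, t0=10.0, cooling=0.995,
                        n_steps=5000, seed=0):
    """Minimise cost by random local moves, sometimes accepting worse states."""
    rng = random.Random(seed)
    current, current_cost = start, cost(start)
    best, best_cost = current, current_cost
    t = t0
    for _ in range(n_steps):
        candidate = neighbour(current, rng)
        candidate_cost = cost(candidate)
        delta = candidate_cost - current_cost
        # Always accept improvements; accept a worse candidate with
        # probability exp(-delta / t), which shrinks as t decreases.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current, current_cost = candidate, candidate_cost
            if current_cost < best_cost:
                best, best_cost = current, current_cost
        t *= cooling  # geometric cooling schedule (an assumption)
    return best, best_cost


# Hypothetical toy problem: minimise (x - 7)^2 over the integers, moving by +/-1.
toy_cost = lambda x: (x - 7) ** 2
toy_neighbour = lambda x, rng: x + rng.choice((-1, 1))
best, best_cost = simulated_annealing(toy_cost, toy_neighbour, start=40)
```

The function returns the best state seen rather than the final one, so the result is never worse than the starting point. On a single-minimum toy problem like this, plain descent would do as well; the occasional uphill moves matter when the cost function has many local optima.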