Best Keyword Cover Search

... recent years, we observe the increasing availability and importance of keyword rating in object evaluation for the better decision making. This motivates us to investigate a generic version of Closest Keywords search called Best Keyword Cover which considers inter-objects distance as well as the key ...

The x

... ✦ Thus, the zeros of f′ are x = –1 and x = 1. ✦ Also note that f′ is not defined at x = 0, so we have four intervals to consider: (–∞, –1), (–1, 0), (0, 1), and (1, ∞). Example 4, page 247 ...

Information Gathering and Reward Exploitation of Subgoals for

... help of roadmap GM, we first extract a path transitioning to the closest m ∈ M from current state s. To do so, we store a list of all outgoing edges for each subgoal according to GM. If s is not a subgoal, the path will be the only path from s to the subgoal in the same partition; otherwise s is a ...

The x

... is increasing and where it is decreasing. Solution 2. To determine the sign of f′(x) in the intervals we found (–∞, –2), (–2, 4), and (4, ∞), we compute f′(c) at a convenient test point in each interval. ✦ Let's consider the values –3, 0, and 5: f′(–3) = 3(–3)² – 6(–3) – 24 = 27 + 18 – 24 = 21 > ...

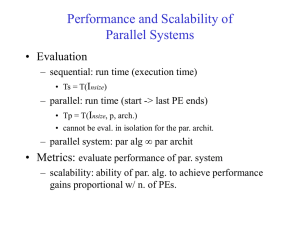

Seven Challenges in Parallel SAT Solving

... 1996). As we have seen with the previous challenge, finding a good decomposition prior to solving the formula is a hard problem as it is hard to predict the hardness of each of the subproblems. In the second category of decompositions, the instance itself is decomposed such that none of the computin ...

Lecture Notes for Algorithm Analysis and Design

... Clearly it grows exponentially with n. You can also prove that Fn = 1 + ...

Case Adaptation by Segment Replanning for Case

... This paper introduces a domain-independent and anytime behavior adaptation process, called CASER (Case Adaptation by Segment Replanning), that finds an easy-to-generate solution with low quality and adapts this solution to improve it. It follows the same idea as PbR. However, this method does not req ...

Simulated annealing

Simulated annealing (SA) is a generic probabilistic metaheuristic for the global optimization problem of locating a good approximation to the global optimum of a given function in a large search space. It is often used when the search space is discrete (e.g., all tours that visit a given set of cities). For certain problems, simulated annealing may be more efficient than exhaustive enumeration, provided that the goal is merely to find an acceptably good solution in a fixed amount of time, rather than the best possible solution.

The name and inspiration come from annealing in metallurgy, a technique involving heating and controlled cooling of a material to increase the size of its crystals and reduce their defects. Both are attributes of the material that depend on its thermodynamic free energy. Heating and cooling the material affects both the temperature and the thermodynamic free energy. While the same amount of cooling brings the same decrease in temperature, it brings a bigger or smaller decrease in the thermodynamic free energy depending on the rate at which it occurs, with a slower rate producing a bigger decrease.

This notion of slow cooling is implemented in the simulated annealing algorithm as a slow decrease in the probability of accepting worse solutions as it explores the solution space. Accepting worse solutions is a fundamental property of metaheuristics because it allows for a more extensive search for the optimal solution.

The method was independently described by Scott Kirkpatrick, C. Daniel Gelatt and Mario P. Vecchi in 1983, and by Vlado Černý in 1985. The method is an adaptation of the Metropolis–Hastings algorithm, a Monte Carlo method to generate sample states of a thermodynamic system, invented by M.N. Rosenbluth and published in a paper by N. Metropolis et al. in 1953.
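The slowly decreasing probability of accepting worse solutions can be illustrated with a minimal Python sketch. Several choices here are assumptions made for illustration, not details from the text above: the geometric cooling schedule (`t *= alpha`), the Metropolis-style acceptance probability `exp(-delta / t)`, the parameter defaults, and the hypothetical toy problem (`toy_cost`, `toy_neighbor`) over the integers from -50 to 50.

```python
import math
import random


def simulated_annealing(cost, neighbor, initial, t_start=10.0, t_end=1e-3,
                        alpha=0.95, steps_per_temp=50, rng=None):
    """Minimize `cost`, starting from `initial`.

    `neighbor(state, rng)` must return a candidate state near `state`.
    The geometric cooling schedule is an illustrative assumption.
    """
    rng = rng or random.Random()
    current, current_cost = initial, cost(initial)
    best, best_cost = current, current_cost
    t = t_start
    while t > t_end:
        for _ in range(steps_per_temp):
            candidate = neighbor(current, rng)
            candidate_cost = cost(candidate)
            delta = candidate_cost - current_cost
            # Always accept improvements; accept a worse candidate with
            # probability exp(-delta / t), which shrinks as t decreases.
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                current, current_cost = candidate, candidate_cost
                if current_cost < best_cost:
                    best, best_cost = current, current_cost
        t *= alpha  # slow cooling: lower the temperature gradually
    return best, best_cost


def toy_cost(x):
    # Hypothetical objective with its minimum at x = 7.
    return (x - 7) ** 2


def toy_neighbor(x, rng):
    # Move one step left or right, staying inside [-50, 50].
    return max(-50, min(50, x + rng.choice((-1, 1))))


if __name__ == "__main__":
    best, best_cost = simulated_annealing(
        toy_cost, toy_neighbor, initial=-40, rng=random.Random(0))
    print(best, best_cost)
```

At high temperature almost any move is accepted, so the search wanders widely; as `t` approaches `t_end`, worse moves are rarely accepted and the search settles near a low-cost state. The function tracks the best state seen, so the returned cost is never worse than the cost of the starting state.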