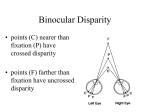

Computational Vision
CSCI 363, Fall 2012
Lecture 16: Stereopsis

Random Dot Stereogram

Transformed

Linear Systems
•Linear functions: F(x1 + x2) = F(x1) + F(x2) and F(ax) = aF(x).
•Linear systems are nice to work with because you can predict (or compute) the responses of the system relatively easily.
•For example, if you double the input, the output doubles.
•Fourier transforms are linear operations. (The Fourier transform of the sum of two images is the sum of the Fourier transforms of each image.)
•Gabor filters are linear filters.
•Neurons are not linear.

Threshold and Saturation
Threshold non-linearity: Neurons do not respond until the input reaches a minimum level (threshold).
Saturation non-linearity: Neurons have a maximum firing rate. The response saturates once the neuron reaches this maximum.
Figure: Response vs. input strength, marking the threshold, the linear response region, and saturation.

Phase and Half-wave Rectification
Phase non-linearity: Complex cells are insensitive to the phase (position) of a grating within the receptive field. Complex cells do not linearly sum inputs within the receptive field.
Half-wave rectification: Cortical cells have a low spontaneous firing rate, so there cannot be as large a negative response as a positive response. The bottom half of the waveform is clipped off. This can be alleviated with pairs of matched cells that are 180 deg out of phase with one another. The difference in their responses acts like a linear response.
Figure: Response vs. time.

Lateral Inhibition
•There is evidence that a spatial frequency channel is inhibited by other channels tuned to nearby frequencies. (This is also true for orientation tuning.)
•This is accomplished by lateral inhibitory connections within the cortex, known as lateral inhibition.
•This can cause interesting effects, such as repulsion of perceived orientation when two lines of similar orientation are shown close together.
•If you adapt to one spatial frequency, there is an increased sensitivity at other nearby frequencies.
•Inhibitory interactions can help to make tuning curves narrower.

Random Dot Stereogram

Binocular Stereo
•The image in each of our two eyes is slightly different.
•Images of objects in the plane of fixation fall on corresponding locations on the two retinas.
•Images of objects in front of the plane of fixation are shifted outward on each retina. They have crossed disparity.
•Images of objects behind the plane of fixation are shifted inward on each retina. They have uncrossed disparity.

Crossed and uncrossed disparity
Figure: (1) uncrossed (negative) disparity; plane of fixation; (2) crossed (positive) disparity.

Stereo processing
To determine depth from stereo disparity:
1) Extract the "features" from the left and right images.
2) For each feature in the left image, find the corresponding feature in the right image.
3) Measure the disparity between the two images of the feature.
4) Use the disparity to compute the 3D location of the feature.

The Correspondence problem
•How do you determine which features from one image match features in the other image? (This is known as the correspondence problem.)
•This could be accomplished if each image has well-defined shapes or colors that can be matched.
•Problem: Random dot stereograms.
Figure: Making a stereogram (left image, right image).

Random Dot Stereogram

Problem with Random Dot Stereograms
•Around 1960, Bela Julesz developed the random dot stereogram.
•The stereogram consists of two fields of random dots, identical except for a region in one of the images in which the dots are shifted by a small amount.
•When one image is viewed by the left eye and the other by the right eye, the shifted region is seen at a different depth.
•There are no cues such as color, shape, texture, or shading to use for matching.
•How do you know which dot from the left image matches which dot from the right image?

Using Constraints to Solve the Problem
To solve the correspondence problem, we need to make some assumptions (constraints) about how the matching is accomplished.
Constraints used by many computer vision stereo algorithms:
1) Uniqueness: Each point has at most one match in the other image.
2) Similarity: Each feature matches a similar feature in the other image (i.e., you cannot match a white dot with a black dot).
3) Continuity: Disparity tends to vary slowly across a surface. (Note: this is violated at depth edges.)
4) Epipolar constraint: Given a point in the image of one eye, the matching point in the image for the other eye must lie along a single line.

The epipolar constraint
Figure: Feature in left image; possible matches in right image.

It matters where you look
•If the observer is fixating a point along a horizontal plane through the middle of the eyes, the possible positions of a matching point in the other image lie along a horizontal line.
•If the observer is looking upward or downward, the line will be tilted.
•Most stereo algorithms for machine vision assume the epipolar lines are horizontal.
•For biological systems, the stereo computation must take into account where the eyes are looking (e.g., upward or downward).
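
To make the correspondence problem and the constraints above concrete, here is a minimal Python sketch. It is not the algorithm from the lecture. It builds a random dot stereogram in which a central square is shifted horizontally, then recovers the disparity by window matching along horizontal epipolar lines. The function names (`make_random_dot_stereogram`, `box_sum`, `match_disparity`), the sum-of-absolute-differences similarity measure, the window size, and the disparity range are all assumptions chosen for illustration.

```python
import numpy as np


def make_random_dot_stereogram(size=64, shift=4, seed=0):
    """Return (left, right, true_disparity) for a shifted-central-square stereogram."""
    rng = np.random.default_rng(seed)
    left = rng.integers(0, 2, size=(size, size)).astype(float)
    right = left.copy()
    lo, hi = size // 4, 3 * size // 4
    # Copy the central square into the right image, shifted `shift` pixels leftward.
    right[lo:hi, lo - shift:hi - shift] = left[lo:hi, lo:hi]
    # Fill the strip uncovered by the shift with fresh random dots.
    right[lo:hi, hi - shift:hi] = rng.integers(0, 2, size=(hi - lo, shift))
    # Convention assumed here: left pixel (r, c) matches right pixel (r, c - d).
    true_disp = np.zeros((size, size), dtype=int)
    true_disp[lo:hi, lo:hi] = shift
    return left, right, true_disp


def box_sum(img, half):
    """Sum img over a (2*half+1) x (2*half+1) window around each pixel (zero-padded)."""
    k = 2 * half + 1
    padded = np.pad(img, half)
    out = np.zeros_like(img)
    for dr in range(k):
        for dc in range(k):
            out += padded[dr:dr + img.shape[0], dc:dc + img.shape[1]]
    return out


def match_disparity(left, right, max_disp=8, half=3):
    """For each left pixel, pick the horizontal shift with the most similar window."""
    rows, cols = left.shape
    costs = np.empty((max_disp + 1, rows, cols))
    for d in range(max_disp + 1):
        # Epipolar constraint (assumed horizontal): only same-row shifts are tested.
        shifted = np.full_like(right, 0.5)  # neutral value where no right pixel exists
        shifted[:, d:] = right[:, :cols - d]
        # Similarity: sum of absolute differences over a local window. Using a
        # window also leans on continuity (nearby pixels share a disparity).
        costs[d] = box_sum(np.abs(left - shifted), half)
    # Keep one match per left pixel (a one-directional form of uniqueness).
    return costs.argmin(axis=0)


left, right, true_disp = make_random_dot_stereogram()
estimate = match_disparity(left, right)
inner = slice(20, 44)  # interior of the shifted square, away from depth edges
accuracy = (estimate[inner, inner] == true_disp[inner, inner]).mean()
print(f"fraction of interior pixels with correct disparity: {accuracy:.3f}")
```

Single dots are ambiguous, since any white dot is similar to every other white dot, so the sketch compares small windows of dots instead. Near the borders of the shifted square the window straddles two disparities and the continuity assumption fails, which is why the check is restricted to the interior.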