* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download a An example

Psychometrics wikipedia , lookup

History of statistics wikipedia , lookup

Bootstrapping (statistics) wikipedia , lookup

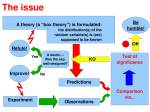

Foundations of statistics wikipedia , lookup

Taylor's law wikipedia , lookup

Statistical hypothesis testing wikipedia , lookup

Gibbs sampling wikipedia , lookup

Resampling (statistics) wikipedia , lookup

Today

• Null and alternative hypotheses
• 1- and 2-tailed tests
• Regions of rejection
• Sampling distributions
• The Central Limit Theorem
• Standard errors
• z-tests for sample means
• The 5 steps of hypothesis-testing
• Type I and Type II error

(not necessarily in this order)

Hypothesis testing

• Approach hypothesis testing from the standpoint of theory.
• If our theory about some phenomenon is correct, then things should be a certain way.
• If the commercial really works, then we should see an increase in sales (that cannot easily be attributed to chance).
• Hypotheses are stated in terms of parameters (e.g., “the average difference between Groups A and B is zero in the population”).

Hypothesis testing

• We will always observe some kind of effect, even if nothing interesting is going on.
• It could be due to chance fluctuations (sampling error), or there really could be an effect in the population.
• Inferential statistics help us decide.
• If we conclude, on the basis of statistics, that an effect should not be attributed to chance, the effect is termed statistically significant.

[Figure: histogram of “How tall are you in inches?” for Gender: F; y-axis: Frequency; Mean = 64.28, Std. Dev. = 3.077, N = 47]

• Say we know $\mu$ and $\sigma$, and that they are $\mu$ = 64.28” and $\sigma$ = 3.1”, as in the female sample.
• We want to know if the 74-inch-tall person is female.
• Use logic to make a good guess.
• If the person is female, then her distribution has $\mu$ = 64.28” and $\sigma$ = 3.1” (assuming normality).
• That implies that “her” z-score is:

$$z = \frac{x - \mu}{\sigma} = \frac{74 - 64.28}{3.1} = 3.14, \qquad p \approx .001$$

• Very unlikely that this person is female!
• We could do this because we made the assumption of normality, and assumed $\mu$ = 64.28” and $\sigma$ = 3.1”.

Hypothesis testing

• A hypothesis is a theory-based prediction about population parameters.
• Researchers begin with a theory.
• Then they define the implications of the theory.
• Then they test the implications using if-then logic (e.g., if the theory is true, then the population mean should be greater than 3.8).

Hypothesis testing

• Null hypothesis: represents the “status quo” situation. Usually, the hypothesis of no difference or no relationship. E.g.:

$$H_0: \mu = 0$$

• Alternative hypothesis: what we are predicting will occur. Usually, the most scientifically interesting hypothesis. E.g.:

$$H_1: \mu \neq 0$$

Conventions

• By convention, the null and alternative hypotheses are mutually exclusive and exhaustive. E.g.:

$$H_0: \mu = 40\% \qquad H_1: \mu \neq 40\%$$

• Not everyone follows this convention.

Hypothesis testing

• This is an example of a 2-tailed hypothesis test:

$$H_0: \mu = 40\% \qquad H_1: \mu \neq 40\%$$

• Null distribution:

[Figure: Null Distribution for Recidivism; x-axis: Recidivism Percentage, 0 to 100]

1-tailed tests

• Say we had the following hypotheses:

$$H_0: \mu \le 0 \qquad H_1: \mu > 0$$

• We would reject the null hypothesis only if the observed mean is sufficiently positive.
• “Sufficiently” because sample means will always differ somewhat by chance. We care about the population, not samples.
• If we conclude that chance variability isn’t driving the effect, then we say the effect is statistically significant.

An example...

• Say we want to know if UNC students’ IQ differs from the national average. We know:

$$\mu = 500 \qquad \sigma = 100$$

• We pick a student at random (our “sample”), and give her an IQ test. She scores 700.
• Was her score drawn from the U.S. population at large, or from another (more intelligent) distribution?

An example...

• The null hypothesis is that she is part of the U.S. population distribution of IQ test-takers. Nothing special.
• The alternative is that she is from some other (more intelligent) population distribution.

$$H_0: \mu \le 500 \qquad H_1: \mu > 500$$

• 1-tailed, because we are interested only in whether UNC students are more intelligent than average.

An example...

• First, draw the null distribution:

[Figure: Null Distribution of IQ scores (U.S. population); x-axis: IQ, 100 to 900]

• Then define the region(s) of rejection:

[Figure: Null Distribution of IQ scores (U.S. population) with the region of rejection, of area $\alpha$, marked in the upper tail; x-axis: IQ]

An example...

• How did I find the “critical value” of IQ? By knowing alpha, knowing how to use Table E10, and a little algebra...
• First, find z given p, then...

$$z = \frac{x - \mu_0}{\sigma_x}$$
$$z\sigma_x = x - \mu_0$$
$$x = z\sigma_x + \mu_0$$
$$x = 1.645(100) + 500$$
$$x = 664.5$$

An example...

• Our student’s IQ score is 700. Does it fall in the region of rejection? ...Yes!

[Figure: Null Distribution of IQ scores (U.S. population) with the observed score of 700 inside the region of rejection; x-axis: IQ]

An example...

• We could have done this by comparing z-scores instead of raw scores.

$$z = \frac{x - \mu_0}{\sigma_x} = \frac{700 - 500}{100} = 2.0$$

• 2.0 > 1.645, so we reject $H_0$.

An example...

• We also could have done this by comparing a p-value to $\alpha$ instead of comparing raw scores or z-scores.
• The p-value corresponding to a z-score of 2.0 is .0228.
• .0228 < .05, so we reject $H_0$.
• A UNC student with an IQ of 700 would be very rare if drawn from the null population with $\mu$ = 500. In fact, even more rare than we are willing to tolerate (remember, $\alpha$ = .05).

3 Decision rules in this example

• We need to know if we should reject $H_0$. These three rules all yield the same conclusion. Reject $H_0$ if...

$$x_{obs} > x_{critical}$$
$$z_{obs} > z_{critical}$$
$$p < \alpha$$

But...

• Wait a minute: we did all that with only one student??
• The sample was far too small (N = 1) to make such bold claims about UNC.
• We need a representative sample, $N \gg 1$.
• The logic of hypothesis testing is exactly the same with samples as it is with individuals.
• But, we need to know about sampling distributions...
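
The three decision rules for the single-student example can be checked numerically. The following is only a minimal sketch using Python's standard library: the variable names are illustrative, and `NormalDist` stands in for the table lookup that the slides do by hand with Table E10.

```python
from statistics import NormalDist

MU_0 = 500.0    # population mean under H0 (from the slides)
SIGMA = 100.0   # population standard deviation (from the slides)
ALPHA = 0.05    # 1-tailed alpha (from the slides)
X_OBS = 700.0   # the student's observed score

std_normal = NormalDist()  # standard normal distribution: mean 0, sd 1

# Critical z for an upper-tailed test, then the same cutoff in raw-score units.
z_critical = std_normal.inv_cdf(1 - ALPHA)
x_critical = MU_0 + z_critical * SIGMA

# Observed z and its upper-tail p-value.
z_obs = (X_OBS - MU_0) / SIGMA
p_value = 1 - std_normal.cdf(z_obs)

# The three equivalent decision rules: reject H0 if any (hence all) hold.
decisions = {
    "x_obs > x_critical": X_OBS > x_critical,
    "z_obs > z_critical": z_obs > z_critical,
    "p < alpha": p_value < ALPHA,
}

print(f"z_critical = {z_critical:.3f}, x_critical = {x_critical:.1f}")
print(f"z_obs = {z_obs:.2f}, p = {p_value:.4f}")
for rule, holds in decisions.items():
    print(f"{rule}: {holds}")
```

All three comparisons agree because they are the same cutoff expressed in three different units: raw score, z-score, and tail probability.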
Sampling distributions

• Sampling distribution: A distribution of some statistic.
• “Sampling distribution of _____” (mean / variance / z, t, etc.)

The Central Limit Theorem

• Given a population with mean $\mu$ and variance $\sigma_x^2$, the sampling distribution of the mean (the distribution of sample means) will have a mean equal to $\mu$ and a variance equal to:

$$\sigma_{\bar{x}}^2 = \frac{\sigma_x^2}{N}$$

...and thus a standard deviation of:

$$\sigma_{\bar{x}} = \frac{\sigma_x}{\sqrt{N}}$$

The distribution will approach normality as N increases. [from Howell, p. 267]

The Central Limit Theorem

$$\sigma_{\bar{x}} = \frac{\sigma_x}{\sqrt{N}}$$

• ...is called the standard error of the mean, or simply standard error.

The Central Limit Theorem

• As sample size increases, the standard error decreases.

[Figure: three panels titled “Null Distribution of IQ scores (U.S. population)” for N = 1, N = 5, and N = 20; x-axis: IQ, 100 to 900]

The Central Limit Theorem

• Another example...

Back to the UNC IQ example...

• Let’s say that we collect a sample of N = 4 UNC students.
• Their IQs are 700, 710, 680, and 670.
• Now the mean is $\bar{x} = 690$.
• Is there enough evidence to claim that UNC students are brighter than average?
• Now the question is, “if the population mean is 500, how extreme would a sample mean of 690 be (given that N = 4)?”

In terms of z-scores...

$$z = \frac{\bar{x} - \mu_0}{\sigma_{\bar{x}}} = \frac{\bar{x} - \mu_0}{\sigma_x / \sqrt{N}} = \frac{690 - 500}{100 / \sqrt{4}} = 3.8$$

• The critical value for z is still +1.645 (because it’s a 1-tailed test and $\alpha$ = .05).
• 3.8 > 1.645, so reject $H_0$.
• Conclusion: UNC students are likely brighter than average (we’ll never really know for sure).

Another example

• Your theory says that Benadryl should alter reaction time on some task, but you are not sure how.
The null and alternative hypotheses might be:

$$H_0: \mu = 0.09\ \text{sec} \qquad H_1: \mu \neq 0.09\ \text{sec}$$

• We’re given that $\sigma$ = .032 seconds
• We’re given that N = 400
• We’re given that $\alpha$ = .01

Finding critical z’s for a 2-tailed test

[Figure: Standard Normal Distribution; x-axis: z, y-axis: Density; a region of rejection of area $\alpha/2$ in each tail, bounded by z = -2.575 and z = +2.575]

Finding critical reaction times

$$\sigma_x = .032\ \text{sec}$$
$$\sigma_{\bar{x}} = \frac{.032}{\sqrt{400}} = \frac{.032}{20} = .0016$$

[Figure: Reaction Time Sampling Distribution of the Mean; x-axis: seconds, 0.082 to 0.098, y-axis: Density; a region of rejection of area $\alpha/2$ in each tail]

Another example

• We collect data from our 400 subjects and find the mean RT to be .097 seconds.
• .097 is different from .09, but different enough?

$$z = \frac{\bar{x} - \mu_0}{\sigma_{\bar{x}}} = \frac{.097 - .09}{.032 / \sqrt{400}} = \frac{.007}{.0016} = 4.375$$

• 4.375 > 2.575, so reject $H_0$. Benadryl probably does have an effect on reaction time. Specifically, it slows people down.

N = 1: a special case?

$$z = \frac{\bar{x} - \mu_0}{\sigma_{\bar{x}}} = \frac{\bar{x} - \mu_0}{\sigma_x / \sqrt{N}}$$

• When N = 1,

$$\bar{x} = x \qquad \sigma_{\bar{x}} = \frac{\sigma_x}{\sqrt{N}} = \frac{\sigma_x}{\sqrt{1}} = \sigma_x$$

...and:

$$z = \frac{x - \mu_0}{\sigma_x}$$

The 5 steps of hypothesis testing

1. Specify null and alternative hypotheses.
2. Identify a test statistic.
3. Specify the sampling distribution and sample size.
4. Specify alpha and the region(s) of rejection.
5. Collect data, compute the test statistic, and make a decision regarding $H_0$.

1. Null and alternative hypotheses

• Specify $H_0$ and $H_1$ in terms of population parameters.
• $H_0$ is presumed to be true in the absence of evidence against it.
• $H_1$ is adopted if $H_0$ is rejected.

$$H_0: \mu = 0.09\ \text{sec} \qquad H_1: \mu \neq 0.09\ \text{sec}$$

2. Identify a test statistic

• Identify a test statistic that is useful for discriminating between different hypotheses about the population parameter of interest, taking into account the hypothesis being tested and the information known.
• E.g., z, t, F, and $\chi^2$.

3. Sampling distribution and N

• Specify the sampling distribution and sample size.
• The sampling distribution here refers to the distribution of all possible values of the test statistic obtained under the assumption that $H_0$ is true.
• E.g., “N = 48. The sampling distribution is the standard normal distribution (distribution of z statistics), because we are testing a hypothesis about the population mean when $\sigma$ is known.”

4. Specify $\alpha$ and the rejection regions

• Alpha ($\alpha$) is the probability of incorrectly rejecting $H_0$ (rejecting the null hypothesis when it is really true).
• Regions of rejection are those ranges of the test statistic’s sampling distribution which, if encountered, would lead to rejecting $H_0$.
• The regions of rejection are determined by $\alpha$ and by whether the test is 1-tailed or 2-tailed.

5. Collect data, compute the test statistic, make a decision

• For example...

$$z = \frac{\bar{x} - \mu_0}{\sigma_x / \sqrt{N}} = \frac{2}{5 / \sqrt{48}} = \frac{2}{.722} = 2.77$$

• E.g., “2.77 > 1.96, so reject $H_0$ and conclude that...”
• Always couch the conclusion in terms of the original problem.

The 5 steps: Example

• Let’s say you think a certain standardized achievement test is biased against Asian-Americans. You know that for the non-Asian-American population...

$$\mu = 100 \qquad \sigma = 10$$

• In the sample...

$$N = 28$$

The 5 steps: Example

1. Specify null and alternative hypotheses.

$$H_0: \mu \ge 100 \qquad H_1: \mu < 100$$

2. Identify a test statistic. We want to compare a sample mean to a hypothesized value, and we know $\sigma$, so we use a z-test.

The 5 steps: Example

3. Specify the sampling distribution and sample size. The sampling distribution of z is the standard normal distribution.

$$N = 28$$

4. Specify alpha and the region(s) of rejection.

$$\alpha = .05$$

The regions of rejection are harder...

The 5 steps: Example

5. Collect data, compute the test statistic, make a decision. We collect data. Say the mean is 97.1. Does 97.1 fall in the region of rejection?

$$z = \frac{\bar{x} - \mu}{\sigma_{\bar{x}}} = \frac{\bar{x} - \mu}{\sigma_x / \sqrt{N}} = \frac{97.1 - 100}{10 / \sqrt{28}} = \frac{-2.9}{1.89} = -1.53$$

Type I and Type II errors

• There are two ways to make an incorrect decision in hypothesis testing: Type I and Type II errors.
• Type I error: Concluding that the null hypothesis is false when it is really true.
• We control the probability of making a Type I error (alpha).
• Alpha ($\alpha$): The risk of incorrectly rejecting a true null hypothesis.
• Why not make $\alpha$ really, really small? The smaller we make $\alpha$, the more likely it becomes that we will make a Type II error.

Type I and Type II errors

• Type II error: Retaining (failing to reject) the null hypothesis when it is really false.
• Beta ($\beta$): The probability of incorrectly retaining a false null hypothesis.

Next time...

• Power
• Effect size
• Statistical significance vs. practical significance
• Confidence intervals
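
As a recap, the z-tests for sample means worked through earlier (the N = 4 UNC IQ sample and the Benadryl reaction-time study) can be reproduced with a small helper. This is a sketch under stated assumptions, not part of the original slides: the function name `z_test` and its parameters are hypothetical, the 1-tailed branch covers only the upper-tailed case used in the IQ example, and `NormalDist` replaces the table lookup.

```python
from math import sqrt
from statistics import NormalDist, mean


def z_test(sample_mean, mu_0, sigma, n, alpha, tails):
    """One-sample z-test for a mean when sigma is known (hypothetical helper).

    tails=2 tests H1: mu != mu_0; tails=1 tests H1: mu > mu_0 (upper tail only).
    """
    std_normal = NormalDist()
    standard_error = sigma / sqrt(n)           # sigma_xbar = sigma_x / sqrt(N)
    z = (sample_mean - mu_0) / standard_error  # observed test statistic
    if tails == 2:
        z_critical = std_normal.inv_cdf(1 - alpha / 2)
        reject = abs(z) > z_critical
        p_value = 2 * (1 - std_normal.cdf(abs(z)))
    elif tails == 1:
        z_critical = std_normal.inv_cdf(1 - alpha)
        reject = z > z_critical
        p_value = 1 - std_normal.cdf(z)
    else:
        raise ValueError("tails must be 1 or 2")
    return {
        "standard_error": standard_error,
        "z": z,
        "z_critical": z_critical,
        "p_value": p_value,
        "reject_h0": reject,
    }


# UNC IQ example: N = 4, 1-tailed, alpha = .05 (values from the slides).
iq_result = z_test(mean([700, 710, 680, 670]), mu_0=500, sigma=100,
                   n=4, alpha=0.05, tails=1)

# Benadryl example: N = 400, 2-tailed, alpha = .01 (values from the slides).
rt_result = z_test(0.097, mu_0=0.09, sigma=0.032, n=400, alpha=0.01, tails=2)

for name, result in [("IQ", iq_result), ("Reaction time", rt_result)]:
    print(f"{name}: z = {result['z']:.3f}, "
          f"critical z = {result['z_critical']:.3f}, "
          f"reject H0 = {result['reject_h0']}")
```

The computed 2-tailed critical value for $\alpha$ = .01 is about 2.576, which differs slightly from the tabled 2.575 used in the slides because of rounding in the table; the decision is unaffected.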