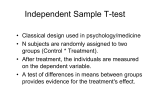

RMTD 404 Lecture 7: Two Independent Samples t Test

Probably the most common experimental design involving two groups is one in which participants are randomly assigned to treatment and control conditions. Because such observations are likely to have minimal dependence between treatment and control participants (although some dependence is possible: people might know one another, or there may be unintentional similarities among subgroups within each condition), observations are said to be independent between the two groups. Hence, along with wanting to compare the means of two groups and not knowing the population variance, this design involves observations from two independent samples. The appropriate statistic is then the two independent samples t-test.

The relevant sampling distribution for this test is the sampling distribution of differences between two means ($\bar{X}_1 - \bar{X}_2$). To create such a distribution, we would collect many pairs of samples (one from each group), compute the mean of each of the two samples, and record the difference between these means. The sampling distribution is the distribution of a very large number of such differences.

Our interest is in determining whether it is likely that the two samples came from different populations. Hence, the most common null hypothesis is that $\mu_{X_1} = \mu_{X_2}$, or equivalently that $\mu_{X_1} - \mu_{X_2} = 0$, for the two-tailed case. The null hypothesis says that the two samples come from populations with the same mean; that is, there is no difference between the two means in the population.

The variance of the sampling distribution of the differences between two means is obtained from the variance sum law, which states that the variance of a sum or difference of two independent variables is equal to the sum of their variances.

$$\sigma^2_{X_1 \pm X_2} = \sigma^2_{X_1} + \sigma^2_{X_2}$$

Note that this is only true when the variables are independent.
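The variance sum law for independent variables can be checked with a quick simulation. The following is only a sketch: the normal populations, their standard deviations (3 and 4), the number of draws, and the seed are all assumed values chosen for illustration, not part of the lecture.

```r
# Simulation sketch of the variance sum law for two independent variables.
# Assumed (hypothetical) populations: normal with SDs 3 and 4.
set.seed(404)                              # arbitrary seed, for repeatability
n_draws <- 100000

x1 <- rnorm(n_draws, mean = 50, sd = 3)    # population variance 9
x2 <- rnorm(n_draws, mean = 50, sd = 4)    # population variance 16, drawn independently

var_sum  <- var(x1 + x2)                   # variance of the sum
var_diff <- var(x1 - x2)                   # variance of the difference
expected <- 3^2 + 4^2                      # sum of the two variances

print(c(sum = var_sum, difference = var_diff, expected = expected))
```

Both simulated variances should land near the sum of the two variances; in particular, the variance of the difference is not the difference of the variances.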
The variance sum law in this simple form does not apply to the standard error of the matched-pairs (two dependent samples) t-test. In that case, the variance equals the sum of the variances plus or minus two times the product of the correlation between the two variables and their standard deviations.

$$\sigma^2_{X_1 \pm X_2} = \sigma^2_{X_1} + \sigma^2_{X_2} \pm 2\rho\,\sigma_{X_1}\sigma_{X_2}$$

Applying the variance sum law to the sampling distribution of the differences between two means, we have the following.

$$\sigma^2_{\bar{X}_1 - \bar{X}_2} = \sigma^2_{\bar{X}_1} + \sigma^2_{\bar{X}_2} = \frac{\sigma^2_{X_1}}{N_1} + \frac{\sigma^2_{X_2}}{N_2}$$

The final point about the sampling distribution of the differences between two means concerns its mean. The sum or difference of two independent normal distributions is itself normally distributed, with a mean equal to the sum or difference of the means of the respective distributions. Hence, we know that the sampling distribution of the difference between two means is normally distributed with a mean equal to the difference between the population means (i.e., $\mu_{\bar{X}_1 - \bar{X}_2} = \mu_{X_1} - \mu_{X_2}$), given a large sample.

We can extend our t statistic in the same way we did in the previous example. We are interested in comparing an observed statistic to the parameter it estimates, and we know the standard error of that statistic. Hence, we can write a t-test for two independent samples as follows.

$$t = \frac{(\bar{X}_1 - \bar{X}_2) - (\mu_{X_1} - \mu_{X_2})}{s_{\bar{X}_1 - \bar{X}_2}} = \frac{(\bar{X}_1 - \bar{X}_2) - (\mu_{X_1} - \mu_{X_2})}{\sqrt{s^2_{\bar{X}_1} + s^2_{\bar{X}_2}}} = \frac{(\bar{X}_1 - \bar{X}_2) - (\mu_{X_1} - \mu_{X_2})}{\sqrt{\dfrac{s^2_{X_1}}{N_1} + \dfrac{s^2_{X_2}}{N_2}}}$$

Incidentally, if we know the two population variances, we can use the z statistic version of the test.

$$z = \frac{(\bar{X}_1 - \bar{X}_2) - (\mu_{X_1} - \mu_{X_2})}{\sqrt{\dfrac{\sigma^2_{X_1}}{N_1} + \dfrac{\sigma^2_{X_2}}{N_2}}}$$

Given that we typically test a null hypothesis that $\mu_{X_1} - \mu_{X_2} = 0$, we can drop $\mu_{X_1}$ and $\mu_{X_2}$ out of the t-statistic equation.

$$t = \frac{\bar{X}_1 - \bar{X}_2}{\sqrt{\dfrac{s^2_{X_1}}{N_1} + \dfrac{s^2_{X_2}}{N_2}}}$$

It is important to note that this statistic is only valid when:
(a) the observations are independent between samples,
(b) the populations are normally distributed or the sample size is large enough to rely on the central limit theorem for normalizing the sampling distribution,
(c) the sample sizes are equal ($N_1 = N_2$), and
(d) the variances of the two populations are equal ($\sigma^2_{X_1} = \sigma^2_{X_2}$).

Recall that the shape of the t distribution changes as sample size increases, and we describe the shape of the distribution using degrees of freedom. For the two independent samples t-test there are two sources of degrees of freedom, one for the variance of each sample. Hence, the degrees of freedom for the two independent samples t-test is simply the sum of the degrees of freedom of the two variances that are being estimated.

$$df_1 = N_1 - 1 \qquad df_2 = N_2 - 1$$

$$df_{total} = df_1 + df_2 = (N_1 - 1) + (N_2 - 1) = N_1 + N_2 - 2$$

Graphically, here's what we do:

[Figure: the null sampling distribution of $\bar{X}_1 - \bar{X}_2$, centered at $\mu_{X_1} - \mu_{X_2} = 0$. The observed difference $\bar{X}_1 - \bar{X}_2$ is converted to $t$ and compared with $t_{CV}$ and its p-value.]

Example using SPSS:

IV: Gender (1 = Male; 2 = Female)
DV: Reading Achievement

Group Statistics (Follow-up Reading std score, by COMPOSITE SEX)

| Sex | N | Mean | Std. Deviation | Std. Error Mean |
|---|---|---|---|---|
| Male | 117 | 50.4083 | 10.37854 | .95950 |
| Female | 138 | 51.3812 | 9.03615 | .76921 |

Independent Samples Test (Follow-up Reading std score)

| | Levene's F | Levene's Sig. | t | df | Sig. (2-tailed) | Mean Difference | Std. Error Difference | 95% CI Lower | 95% CI Upper |
|---|---|---|---|---|---|---|---|---|---|
| Equal variances assumed | 5.490 | .020 | -.800 | 253 | .424 | -.97287 | 1.21585 | -3.36734 | 1.42160 |
| Equal variances not assumed | | | -.791 | 231.910 | .430 | -.97287 | 1.22976 | -3.39580 | 1.45006 |

When the sample sizes are unequal, we must pool the variances in the formula for the standard error by weighting each variance by its degrees of freedom. We do this weighting because the simple average of the two variances would give each person in the smaller sample more influence than each person in the larger sample. We call this weighted average the pooled variance estimate.

$$s^2_p = \frac{(N_1 - 1)\,s^2_{X_1} + (N_2 - 1)\,s^2_{X_2}}{N_1 + N_2 - 2}$$

When $N_1 = N_2 = N$:

$$s^2_p = \frac{(N - 1)\,s^2_{X_1} + (N - 1)\,s^2_{X_2}}{2N - 2} = \frac{(N - 1)\,(s^2_{X_1} + s^2_{X_2})}{2(N - 1)} = \frac{s^2_{X_1} + s^2_{X_2}}{2}$$

Hence, the correction (pooling) makes no difference when the sample sizes are equal.
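The pooled variance estimate described above can be sketched in R using the standard deviations and sample sizes from the Group Statistics table. The function name `pooled_var` and the equal-N case with 100 per group are hypothetical, introduced only for illustration.

```r
# Pooled variance: each sample variance weighted by its degrees of freedom.
# pooled_var is a hypothetical helper name, not an SPSS or base R function.
pooled_var <- function(s1, s2, n1, n2) {
  ((n1 - 1) * s1^2 + (n2 - 1) * s2^2) / (n1 + n2 - 2)
}

# Standard deviations and Ns from the Group Statistics table (male, female).
sp2        <- pooled_var(s1 = 10.37854, s2 = 9.03615, n1 = 117, n2 = 138)
simple_avg <- (10.37854^2 + 9.03615^2) / 2

# Assumed equal-N case (100 per group): pooling reduces to the simple average.
sp2_equal_n <- pooled_var(s1 = 10.37854, s2 = 9.03615, n1 = 100, n2 = 100)

print(c(pooled = sp2, simple_average = simple_avg, pooled_equal_n = sp2_equal_n))
```

With the unequal sample sizes from the example, the pooled estimate is pulled toward the variance of the larger (female) sample, so it falls below the simple average.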
Because pooling makes no difference when the sample sizes are equal, you can always use the correction for unequal sample sizes.

We then substitute the pooled variance estimate into the t statistic.

$$t = \frac{\bar{X}_1 - \bar{X}_2}{\sqrt{\dfrac{s^2_p}{N_1} + \dfrac{s^2_p}{N_2}}} = \frac{\bar{X}_1 - \bar{X}_2}{\sqrt{s^2_p\left(\dfrac{1}{N_1} + \dfrac{1}{N_2}\right)}}$$

Note that all the pooled variance estimate accomplishes is weighting each variance by its degrees of freedom so that all observations in the study make an equal contribution to the magnitude of the standard error.

* SPSS always uses the pooled variance in its "Equal variances assumed" results, whether or not the sample sizes are equal.

Two Independent Samples t Test: (Unequal $\sigma^2$)

When the population variances are equal (a.k.a. homogeneous variances), the pooled variance estimate allows us to average sample variances that have different values to produce an estimate of the population variance. But when the population variances are unequal (a.k.a. heterogeneous variances), we cannot simply average the variances, because the resulting statistic does not follow a t distribution with $N_1 + N_2 - 2$ degrees of freedom. The most common solution to this problem was developed by Welch and Satterthwaite. Their correction adjusts the degrees of freedom for the t-test via the following formula.

$$df' = \operatorname{int}\left[\frac{\left(\dfrac{s_1^2}{N_1} + \dfrac{s_2^2}{N_2}\right)^2}{\dfrac{\left(s_1^2/N_1\right)^2}{N_1 - 1} + \dfrac{\left(s_2^2/N_2\right)^2}{N_2 - 1}}\right]$$

or

$$df' = \operatorname{int}\left[\frac{\left(\dfrac{s_1^2}{N_1} + \dfrac{s_2^2}{N_2}\right)^2}{\dfrac{s_1^4}{N_1^2\,(N_1 - 1)} + \dfrac{s_2^4}{N_2^2\,(N_2 - 1)}}\right]$$

Here int denotes taking the result as an integer, as when using printed t tables; SPSS reports the unrounded value.

The Welch-Satterthwaite correction adjusts the degrees of freedom to a value between the degrees of freedom for the smaller sample and the degrees of freedom for the combined sample.

An obvious question is: how do you know whether to use this correction? The problem is that we never know whether the population variances are equal, because we cannot observe those parameters. The answer is that we compare the two sample variances to determine the likelihood that they could have been produced by populations with the same variance, which is another hypothesis testing problem.

$$H_0: \sigma_1^2 = \sigma_2^2$$

In SPSS, we look at Levene's test to decide whether the two sample variances are from the same population.
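The pooled and Welch-Satterthwaite computations can be sketched in R from the summary statistics in the SPSS Group Statistics table. This is an illustration under the assumption that the rounded means, standard deviations, and sample sizes in that table are sufficient inputs; the variable names are hypothetical.

```r
# Summary statistics from the Group Statistics table.
n1 <- 117; m1 <- 50.4083; s1 <- 10.37854   # males
n2 <- 138; m2 <- 51.3812; s2 <- 9.03615    # females

mean_diff <- m1 - m2

# Equal variances assumed: pooled standard error, df = N1 + N2 - 2.
sp2       <- ((n1 - 1) * s1^2 + (n2 - 1) * s2^2) / (n1 + n2 - 2)
se_pooled <- sqrt(sp2 * (1 / n1 + 1 / n2))
t_pooled  <- mean_diff / se_pooled
df_pooled <- n1 + n2 - 2

# Equal variances not assumed: separate variances, Welch-Satterthwaite df
# (left unrounded, as SPSS reports it).
v1 <- s1^2 / n1
v2 <- s2^2 / n2
se_welch <- sqrt(v1 + v2)
t_welch  <- mean_diff / se_welch
df_welch <- (v1 + v2)^2 / (v1^2 / (n1 - 1) + v2^2 / (n2 - 1))

print(round(c(t_pooled = t_pooled, df_pooled = df_pooled,
              t_welch = t_welch, df_welch = df_welch), 3))
```

The results should agree, to within rounding, with the two rows of the SPSS Independent Samples Test table (t = -.800 with df = 253, and t = -.791 with df = 231.910). Note that the corrected degrees of freedom fall between $N_1 - 1 = 116$ and $N_1 + N_2 - 2 = 253$.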
If we reject the null hypothesis of equal variances, we conclude that the sample variances are unlikely to have come from a common population, and we use the Satterthwaite correction for the t-test's degrees of freedom. If we retain the null hypothesis, we conclude that the sample variances could have come from a common population, and we use the equal variance degrees of freedom for the t-test.

This is the example we saw earlier:

Independent Samples Test (Follow-up Reading std score)

| | Levene's F | Levene's Sig. | t | df | Sig. (2-tailed) | Mean Difference | Std. Error Difference | 95% CI Lower | 95% CI Upper |
|---|---|---|---|---|---|---|---|---|---|
| Equal variances assumed | 5.490 | .020 | -.800 | 253 | .424 | -.97287 | 1.21585 | -3.36734 | 1.42160 |
| Equal variances not assumed | | | -.791 | 231.910 | .430 | -.97287 | 1.22976 | -3.39580 | 1.45006 |

Here Levene's test is significant (F = 5.490, p = .020), so at α = .05 we would reject the hypothesis of equal variances and read the "Equal variances not assumed" row.

To summarize, we've mentioned three assumptions that need to be considered when using a t-test.

1. Normality of the sampling distribution of the differences. For equal sample sizes, violating this assumption has only a small impact. If the distributions are skewed, then serious problems arise unless the variances are similar.
2. Homogeneity of variance. For equal sample sizes, violating this assumption has only a small impact.
3. Equality of sample size. When sample sizes are unequal and variances are non-homogeneous, there are large differences between the assumed and true Type I error rates.

Confidence Intervals

We've discussed two approaches to interpreting a hypothesis test so far: (a) determine whether the p-value is smaller than the α that you've chosen and (b) determine whether the observed statistic is more extreme than the critical value. A third and slightly different approach to interpreting a hypothesis test is referred to as constructing a confidence interval around a parameter estimate.
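Approaches (a) and (b) always lead to the same decision for a given two-tailed test. A minimal sketch in R, assuming α = .05 and using the observed t and degrees of freedom from the "Equal variances assumed" row of the SPSS output (the variable names are hypothetical):

```r
t_obs <- -0.800   # observed t, "Equal variances assumed" row
df    <- 253
alpha <- 0.05     # assumed significance level

# Approach (a): compare the two-tailed p-value with alpha.
p_value     <- 2 * pt(-abs(t_obs), df)
reject_by_p <- p_value < alpha

# Approach (b): compare |t| with the two-tailed critical value.
t_cv         <- qt(1 - alpha / 2, df)
reject_by_cv <- abs(t_obs) > t_cv

print(c(p_value = p_value, t_cv = t_cv))
print(c(reject_by_p = reject_by_p, reject_by_cv = reject_by_cv))
```

The p-value should be close to the .424 that SPSS reports, and both approaches retain the null hypothesis.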
Note that the examples we have used so far all determine the degree to which a point estimate of a parameter could have come from a particular null distribution. The notion of confidence intervals approaches the issue from a slightly different angle. Instead of determining the likelihood that the observed statistic could have come from a null population, we construct an interval around the point estimate that identifies the range of parameter values that could have produced that statistic.

Let's look at an example of a confidence interval. Below is the formula for a t statistic.

$$t = \frac{\bar{X} - \mu_0}{s_{\bar{X}}} = \frac{\bar{X} - \mu_0}{s_X / \sqrt{N}}$$

Once we've collected data, we know the values of the sample mean, the sample standard deviation, and the sample size. The only unknowns are the parameter ($\mu_0$) and the t value. Typically, we solve for t by plugging in a null value for $\mu_0$. But what if we plugged in a value for t instead? Then we could solve for $\mu_0$. The best way to do this is to choose a critical value from the t distribution that is of interest to you. Doing so allows us to identify the range of parameters that could have produced the observed mean and would not have led us to reject the null hypothesis.

Graphically, here's what I'm saying:

[Figure: two sampling distributions centered at the lower and upper limits, $\mu_L = \bar{X} - t_{cv}\,s_{\bar{X}}$ and $\mu_U = \bar{X} + t_{cv}\,s_{\bar{X}}$, with the observed mean $\bar{X}$ between them and the tail areas $\alpha$ marked.]

Hence, we can create confidence intervals around an observed statistic (point estimate) that encompass parameter values that were likely to have produced the statistic. Strictly speaking, we are not estimating the range of population parameters, because only a single population parameter produced the observed statistic. Rather, we are estimating how much the observed statistic could vary due to random variation, and hence we are estimating the range of parameter values that could have produced the observed statistic, given its likely variation due to sampling error. The general formula for creating such a confidence interval is:
$$CI_{(1-\alpha)\%}: \text{parameter} = \text{statistic} \pm \text{critical value}_{(1-\alpha)\%} \times SE_{\text{statistic}}$$

This equation is read as: the $100 \times (1 - \alpha)$% confidence interval (CI) around the observed statistic equals the observed statistic plus and minus the critical value associated with α times the standard error of the statistic. In the case of a mean when the population variance is unknown:

$$CI_{(1-\alpha)\%}: \mu = \bar{X} \pm t_{cv,(1-\alpha)\%} \times SE_{\bar{X}}$$

An example: suppose we wanted to create a 95% confidence interval around an observed mean LUC GRE score of 565 when the standard deviation equals 75 and the sample size is 300. We first obtain the critical t value that leaves .025 in each tail (a two-tailed test with α = .05, so half of the alpha goes into each tail), which is approximately 1.96 for a sample this large. The standard error of the mean equals 4.33:

$$SE_{\bar{X}} = \frac{75}{\sqrt{300}} = 4.33$$

Plugging the known values into the CI equation gives us:

$$CI_{95\%} = 565 \pm 1.96 \times 4.33 \qquad LL = 556.51 \qquad UL = 573.49$$

Hence, we can say that population means ranging from 556.5 to 573.5 could have produced the observed mean of 565 within the middle 95% of their sampling distributions. We would write this as:

$$CI_{95\%}: 556.51 \le \mu \le 573.49$$

We can draw similar confidence intervals around the difference between two means.

$$CI_{(1-\alpha)\%}: \mu_1 - \mu_2 = (\bar{X}_1 - \bar{X}_2) \pm t_{cv} \times s_{\bar{X}_1 - \bar{X}_2}$$

Look at the CI in the example from SPSS output:

Independent Samples Test (Follow-up Reading std score)

| | Levene's F | Levene's Sig. | t | df | Sig. (2-tailed) | Mean Difference | Std. Error Difference | 95% CI Lower | 95% CI Upper |
|---|---|---|---|---|---|---|---|---|---|
| Equal variances assumed | 5.490 | .020 | -.800 | 253 | .424 | -.97287 | 1.21585 | -3.36734 | 1.42160 |
| Equal variances not assumed | | | -.791 | 231.910 | .430 | -.97287 | 1.22976 | -3.39580 | 1.45006 |

Let's try this out in R, using the "Equal variances not assumed" row:

    CI.L = round(-.97287 - 1.22976*1.96, 2)   # lower limit
    CI.L
    [1] -3.38
    CI.U = round(-.97287 + 1.22976*1.96, 2)   # upper limit
    CI.U
    [1] 1.44

Using 1.96 in place of the exact critical t for 231.910 degrees of freedom gives limits close to the SPSS values of -3.396 and 1.450.

Some practice in R…

Review…
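As a starting point for practice, the two confidence intervals from this section can be sketched in R. The helper name `ci` is hypothetical; the GRE interval uses the 1.96 critical value from the text, while the interval for the difference assumes the exact critical t from `qt()` with the Welch-Satterthwaite degrees of freedom reported by SPSS.

```r
# Hypothetical helper: statistic plus and minus critical value times SE.
ci <- function(estimate, se, crit) {
  c(lower = estimate - crit * se, upper = estimate + crit * se)
}

# GRE example: mean 565, SD 75, N = 300, critical value 1.96 as in the text.
se_gre <- 75 / sqrt(300)
ci_gre <- ci(565, se_gre, 1.96)

# Difference between means, "Equal variances not assumed" row of the output.
t_cv_welch <- qt(0.975, df = 231.910)   # exact critical t instead of 1.96
ci_diff    <- ci(-0.97287, 1.22976, t_cv_welch)

print(round(ci_gre, 2))
print(round(ci_diff, 3))
```

The first interval should match the hand calculation (556.51 to 573.49), and the second should agree with the SPSS limits for the "Equal variances not assumed" row to about three decimal places. Because that interval contains zero, it leads to the same conclusion as the t-test: the null hypothesis of no difference is retained.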