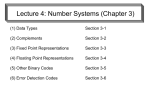

IT 252

Computer Organization

and Architecture

Number Representation

Chia-Chi Teng

Where Are We Now?

CS142 & 124

IT344

Review (do you remember from 124/104?)

• 8 bit signed 2’s complement binary # -> decimal #

• 0111 1111 = ?

• 1000 0000 = ?

• 1111 1111 = ?

• Decimal # -> 8 bit signed 2’s complement binary #

• 32 = ?

• -2 = ?

• 200 = ?

Decimal Numbers: Base 10

Digits: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9

Example:

$3271 = (3 \times 10^3) + (2 \times 10^2) + (7 \times 10^1) + (1 \times 10^0)$

Numbers: positional notation

• Number Base B ⇒ B symbols per digit:

• Base 10 (Decimal): 0, 1, 2, 3, 4, 5, 6, 7, 8, 9

• Base 2 (Binary): 0, 1

• Number representation:

• $d_{31}d_{30} \ldots d_1d_0$ is a 32 digit number

• value = $d_{31} \times B^{31} + d_{30} \times B^{30} + \ldots + d_1 \times B^1 + d_0 \times B^0$

• Binary: 0, 1 (in binary, digits are called “bits”)

• 0b11010 $= 1 \times 2^4 + 1 \times 2^3 + 0 \times 2^2 + 1 \times 2^1 + 0 \times 2^0 = 16 + 8 + 2 = 26$

• #s often written 0b…

• Here 5 digit binary # turns into a 2 digit decimal #

• Can we find a base that converts to binary easily?
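The positional formula above can be evaluated directly in code. The following is a minimal sketch; the name `positional_value` and the most-significant-digit-first array layout are assumptions made for illustration, not something the slides define.

```c
#include <stddef.h>

/* Hypothetical helper: value of a digit sequence in base `base`.
 * digits[0] is the most significant digit; each entry must be < base. */
unsigned long positional_value(const unsigned *digits, size_t n, unsigned base)
{
    unsigned long value = 0;
    for (size_t i = 0; i < n; i++)
        value = value * base + digits[i];   /* Horner's rule */
    return value;
}
```

Called with the digits 1, 1, 0, 1, 0 and base 2 it gives 26, matching the 0b11010 example.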

Hexadecimal Numbers: Base 16

• Hexadecimal:

0, 1, 2, 3, 4, 5, 6, 7, 8, 9, A, B, C, D, E, F

• Normal digits + 6 more from the alphabet

• In C, written as 0x… (e.g., 0xFAB5)

• Conversion: Binary ⇔ Hex

• 1 hex digit represents 16 decimal values

• 4 binary digits represent 16 decimal values

⇒ 1 hex digit replaces 4 binary digits

• One hex digit is a “nibble”. Two is a “byte”

• 2 bits is a “half-nibble”. Shave and a haircut…

• Example:

• 1010 1100 0011 (binary) = 0x_____ ?

Decimal vs. Hexadecimal vs. Binary

Examples:

1010 1100 0011 (binary)

= 0xAC3

10111 (binary)

= 0001 0111 (binary)

= 0x17

0x3F9

= 11 1111 1001 (binary)

How do we convert between

hex and Decimal?

MEMORIZE!

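| Decimal | Hex | Binary |
|---|---|---|
| 00 | 0 | 0000 |
| 01 | 1 | 0001 |
| 02 | 2 | 0010 |
| 03 | 3 | 0011 |
| 04 | 4 | 0100 |
| 05 | 5 | 0101 |
| 06 | 6 | 0110 |
| 07 | 7 | 0111 |
| 08 | 8 | 1000 |
| 09 | 9 | 1001 |
| 10 | A | 1010 |
| 11 | B | 1011 |
| 12 | C | 1100 |
| 13 | D | 1101 |
| 14 | E | 1110 |
| 15 | F | 1111 |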
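The binary-to-hex examples can be reproduced mechanically by grouping bits in fours from the right. This is only a sketch: `bin_to_hex` is a hypothetical helper name, and it assumes the input is a string of '0' and '1' characters with optional spaces.

```c
#include <stddef.h>

/* Convert a string of '0'/'1' characters (other characters are skipped)
 * to upper-case hex. `out` needs one char per 4 bits plus the terminator.
 * Returns the number of hex digits written. */
size_t bin_to_hex(const char *bits, char *out)
{
    static const char hex[] = "0123456789ABCDEF";
    size_t nbits = 0;
    for (const char *p = bits; *p; p++)
        if (*p == '0' || *p == '1')
            nbits++;

    size_t count = (4 - nbits % 4) % 4;   /* implied leading zeros */
    size_t ndigits = 0;
    unsigned nibble = 0;
    for (const char *p = bits; *p; p++) {
        if (*p != '0' && *p != '1')
            continue;
        nibble = (nibble << 1) | (unsigned)(*p - '0');
        if (++count == 4) {               /* one full nibble collected */
            out[ndigits++] = hex[nibble];
            nibble = 0;
            count = 0;
        }
    }
    out[ndigits] = '\0';
    return ndigits;
}
```

With the input "1010 1100 0011" the output buffer holds "AC3", and "10111" is padded to 0001 0111 and yields "17".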

Precision and Accuracy

Don’t confuse these two terms!

Precision is a count of the number of bits in a computer word used to represent a value.

Accuracy is a measure of the difference between the actual value of a number and its computer representation.

High precision permits high accuracy but doesn’t guarantee it. It is possible to have high precision but low accuracy.

Example:

float pi = 3.14;

pi will be represented using all bits of the significand (highly precise), but is only an approximation (not accurate).

What to do with representations of numbers?

• Just what we do with numbers!

• Add them

• Subtract them

• Multiply them

• Divide them

• Compare them

• Example: 10 + 7 = 17

          1 1        (carries)
          1 0 1 0    (10)
    +     0 1 1 1    (7)
    -------------
        1 0 0 0 1    (17)

• …so simple to add in binary that we can

build circuits to do it!

• subtraction just as you would in decimal

• Comparison: How do you tell if X > Y ?
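To make the "we can build circuits to do it" remark concrete, here is a sketch of bit-by-bit addition in the style of a ripple-carry adder. The function names are illustrative assumptions, and the 8-bit width is chosen only to keep the example small.

```c
#include <stdint.h>

/* One-bit full adder: returns the sum bit, stores the carry-out. */
static unsigned full_adder(unsigned a, unsigned b, unsigned cin, unsigned *cout)
{
    *cout = (a & b) | (a & cin) | (b & cin);
    return a ^ b ^ cin;
}

/* Add two 8-bit values one bit position at a time, passing the carry
 * along as a ripple-carry circuit would. The carry out of the top bit
 * is discarded. */
uint8_t ripple_add8(uint8_t x, uint8_t y)
{
    unsigned carry = 0;
    uint8_t sum = 0;
    for (int i = 0; i < 8; i++) {
        unsigned bit = full_adder((x >> i) & 1u, (y >> i) & 1u, carry, &carry);
        sum |= (uint8_t)(bit << i);
    }
    return sum;
}
```

`ripple_add8(10, 7)` gives 17, the same result as the worked example.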

Visualizing (Mathematical) Integer Addition

• Integer Addition

• 4-bit integers u, v

• Compute true sum Add4(u , v)

• Values increase linearly with u and v

• Forms planar surface

[Figure: 3-D surface plot “Integer Addition” of Add4(u , v); the u and v axes are ticked from 0 to 14 and the sum axis from 0 to 32.]

Visualizing Unsigned Addition

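• Wraps Around

• If true sum ≥ $2^w$

• At most once

[Figure: 3-D surface plot of UAdd4(u , v) over u and v (axes ticked 0 to 14, sum axis 0 to 16). A side diagram marks the true sum from 0 to $2^{w+1}$; values from $2^w$ upward are labeled “Overflow” and map back onto the modular sum.]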
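The wrap-around can be checked with a small sketch of 4-bit unsigned addition. The name `uadd4` follows the plot's UAdd4 label, but the signature and the overflow flag are assumptions added for illustration.

```c
/* w-bit unsigned addition for w = 4: returns the modular sum and
 * reports through *overflow whether the true sum reached 2^w. */
unsigned uadd4(unsigned u, unsigned v, int *overflow)
{
    const unsigned w = 4;
    const unsigned modulus = 1u << w;              /* 2^w = 16 */
    unsigned true_sum = (u % modulus) + (v % modulus);
    *overflow = true_sum >= modulus;               /* wraps at most once */
    return true_sum % modulus;
}
```

For example, 9 + 12 has true sum 21, so the modular sum is 5 and the overflow flag is set.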

BIG IDEA: Bits can represent anything!!

• Characters?

• 26 letters ⇒ 5 bits ($2^5 = 32$)

• upper/lower case + punctuation ⇒ 7 bits (in 8) (“ASCII”)

• standard code to cover all the world’s languages ⇒ 8,16,32 bits (“Unicode”) www.unicode.com

• Logical values?

• 0 ⇒ False, 1 ⇒ True

• colors ? Ex: Red (00), Green (01), Blue (11)

• locations / addresses? commands?

• MEMORIZE: N bits ⇔ at most $2^N$ things
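The rule also runs in the other direction: given a number of things, the smallest N with $2^N$ at least that many is the number of bits required. A minimal sketch follows; `bits_needed` is a hypothetical helper name.

```c
/* Smallest number of bits that can distinguish `things` distinct items,
 * i.e. the smallest N with 2^N >= things. */
unsigned bits_needed(unsigned long things)
{
    unsigned bits = 0;
    while (bits < 8 * sizeof things && (1ul << bits) < things)
        bits++;
    return bits;
}
```

`bits_needed(26)` is 5, which matches the 26-letter example.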

How to Represent Negative Numbers?

• So far, unsigned numbers

• Obvious solution: define leftmost bit to be sign!

• 0 ⇒ +, 1 ⇒ –

• Rest of bits can be numerical value of number

• Representation called sign and magnitude

• x86 uses 32-bit integers. $+1_{\text{ten}}$ would be:

0000 0000 0000 0000 0000 0000 0000 0001

• And $-1_{\text{ten}}$ in sign and magnitude would be:

1000 0000 0000 0000 0000 0000 0000 0001

Shortcomings of sign and magnitude?

• Arithmetic circuit complicated

• Special steps depending whether signs are

the same or not

• Also, two zeros

• 0x00000000 = $+0_{\text{ten}}$

• 0x80000000 = $-0_{\text{ten}}$

• What would two 0s mean for programming?

• Therefore sign and magnitude abandoned

Another try: complement the bits

• Example:

$7_{10} = 00111_2$, $-7_{10} = 11000_2$

• Called One’s Complement

• Note: positive numbers have leading 0s, negative numbers have leading 1s.

00000 00001 ... 01111 10000 ... 11110 11111

• What is -00000 ? Answer: 11111

• How many positive numbers in N bits?

• How many negative numbers?

Standard Negative Number Representation

• What is result for unsigned numbers if tried

to subtract large number from a small one?

• Would try to borrow from string of leading 0s,

so result would have a string of leading 1s

3 - 4 ⇒ 00…0011 – 00…0100 = 11…1111

• With no obvious better alternative, pick

representation that made the hardware simple

• As with sign and magnitude,

leading 0s positive, leading 1s negative

000000...xxx is ≥ 0, 111111...xxx is < 0

except 1…1111 is -1, not -0 (as in sign & mag.)

• This representation is Two’s Complement

2’s Complement Number “line”: N = 5

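[Figure: number wheel for N = 5. From 00000 (0) the patterns count up through 00001 (1) and 00010 (2) to 01111 (15); in the other direction they count down through 11111 (-1), 11110 (-2), 11101 (-3) and 11100 (-4) to 10001 (-15) and 10000 (-16).]

• $2^{N-1}$ non-negatives

• $2^{N-1}$ negatives

• one zero

• how many positives?

00000 00001 ... 01111 10000 ... 11110 11111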

Numeric Ranges

• Unsigned Values

• UMin = 0 (000…0)

• UMax = $2^w - 1$ (111…1)

• Two’s Complement Values

• TMin = $-2^{w-1}$ (100…0)

• TMax = $2^{w-1} - 1$ (011…1)

• Other Values

• Minus 1 (111…1)

Values for W = 16

| | Decimal | Hex | Binary |
|---|---|---|---|
| UMax | 65535 | FF FF | 11111111 11111111 |
| TMax | 32767 | 7F FF | 01111111 11111111 |
| TMin | -32768 | 80 00 | 10000000 00000000 |
| -1 | -1 | FF FF | 11111111 11111111 |
| 0 | 0 | 00 00 | 00000000 00000000 |

Values for Different Word Sizes

| W | UMax | TMax | TMin |
|---|---|---|---|
| 8 | 255 | 127 | -128 |
| 16 | 65,535 | 32,767 | -32,768 |
| 32 | 4,294,967,295 | 2,147,483,647 | -2,147,483,648 |
| 64 | 18,446,744,073,709,551,615 | 9,223,372,036,854,775,807 | -9,223,372,036,854,775,808 |

• Observations

• |TMin| = TMax + 1 (asymmetric range)

• UMax = 2 * TMax + 1

C Programming

#include <limits.h>

Declares constants, e.g., ULONG_MAX, LONG_MAX, LONG_MIN

Values platform specific
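Because the `<limits.h>` constants depend on the platform, the formulas for UMax, TMax and TMin can also be computed for any word size w. The sketch below uses hypothetical helper names (`umax`, `tmax`, `tmin`) and assumes 1 ≤ w ≤ 64.

```c
#include <stdint.h>

/* UMax = 2^w - 1 (w = 64 is special-cased to avoid shifting by 64). */
uint64_t umax(unsigned w)
{
    return w == 64 ? UINT64_MAX : ((uint64_t)1 << w) - 1;
}

/* TMax = 2^(w-1) - 1, i.e. UMax with the top bit cleared. */
int64_t tmax(unsigned w)
{
    return (int64_t)(umax(w) >> 1);
}

/* TMin = -2^(w-1) = -TMax - 1 (the asymmetric range). */
int64_t tmin(unsigned w)
{
    return -tmax(w) - 1;
}
```

The results for w = 8, 16, 32 and 64 agree with the word-size table, and `umax(w) == 2 * tmax(w) + 1` holds for each.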

Unsigned & Signed Numeric Values

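| X | B2U(X) | B2T(X) |
|---|---|---|
| 0000 | 0 | 0 |
| 0001 | 1 | 1 |
| 0010 | 2 | 2 |
| 0011 | 3 | 3 |
| 0100 | 4 | 4 |
| 0101 | 5 | 5 |
| 0110 | 6 | 6 |
| 0111 | 7 | 7 |
| 1000 | 8 | –8 |
| 1001 | 9 | –7 |
| 1010 | 10 | –6 |
| 1011 | 11 | –5 |
| 1100 | 12 | –4 |
| 1101 | 13 | –3 |
| 1110 | 14 | –2 |
| 1111 | 15 | –1 |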

• Equivalence

• Same encodings for

nonnegative values

• Uniqueness

• Every bit pattern represents

unique integer value

• Each representable integer

has unique bit encoding

• Can Invert Mappings

• $U2B(x) = B2U^{-1}(x)$: bit pattern for unsigned integer

• $T2B(x) = B2T^{-1}(x)$: bit pattern for two’s comp integer

Two’s Complement Formula

• Can represent positive and negative numbers

in terms of the bit value times a power of 2:

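$d_{31} \times -(2^{31}) + d_{30} \times 2^{30} + \ldots + d_2 \times 2^2 + d_1 \times 2^1 + d_0 \times 2^0$

• Example: $1101_{\text{two}}$

$= 1 \times -(2^3) + 1 \times 2^2 + 0 \times 2^1 + 1 \times 2^0$

$= -2^3 + 2^2 + 0 + 2^0$

$= -8 + 4 + 0 + 1$

$= -8 + 5$

$= -3_{\text{ten}}$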
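The formula translates almost term by term into code: every bit contributes a positive power of 2 except the top bit, whose weight is negative. This is a sketch with an assumed helper name `b2t` (borrowed from the B2T column used elsewhere in the slides) and an assumed width parameter `w` between 1 and 32.

```c
#include <stdint.h>

/* Value of the low `w` bits of `bits` read as two's complement
 * (1 <= w <= 32): bit w-1 has weight -(2^(w-1)), the rest are positive. */
int64_t b2t(uint32_t bits, unsigned w)
{
    int64_t value = 0;
    for (unsigned i = 0; i + 1 < w; i++)
        if ((bits >> i) & 1u)
            value += (int64_t)1 << i;
    if ((bits >> (w - 1)) & 1u)
        value -= (int64_t)1 << (w - 1);
    return value;
}
```

`b2t(0xD, 4)` evaluates 1101 as -8 + 4 + 0 + 1 = -3, as in the worked example.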

Two’s Complement shortcut: Negation

*Check out www.cs.berkeley.edu/~dsw/twos_complement.html

• Change every 0 to 1 and 1 to 0 (invert or

complement), then add 1 to the result

• Proof*: Sum of number and its (one’s) complement must be $111\ldots111_{\text{two}}$

However, $111\ldots111_{\text{two}} = -1_{\text{ten}}$

Let x’ ⇒ one’s complement representation of x

Then x + x’ = -1 ⇒ x + x’ + 1 = 0 ⇒ -x = x’ + 1

• Example: -3 to +3 to -3

x : 1111 1111 1111 1111 1111 1111 1111 1101two

x’: 0000 0000 0000 0000 0000 0000 0000 0010two

+1: 0000 0000 0000 0000 0000 0000 0000 0011two

()’: 1111 1111 1111 1111 1111 1111 1111 1100two

+1: 1111 1111 1111 1111 1111 1111 1111 1101two

You should be able to do this in your head…
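The invert-and-add-1 shortcut is a one-liner in C. The sketch below works on the raw 32-bit pattern as an unsigned value so that wrap-around is well defined; `negate_bits` is a name chosen here for illustration.

```c
#include <stdint.h>

/* Two's complement negation on a raw 32-bit pattern:
 * complement every bit, then add 1. */
uint32_t negate_bits(uint32_t x)
{
    return ~x + 1u;
}
```

Applying it to 0xFFFFFFFD (the pattern for -3) gives 0x00000003, and applying it again gives 0xFFFFFFFD back. The one pattern that maps to itself besides zero is 0x80000000, which reflects the asymmetric range.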

What if too big?

• Binary bit patterns above are simply

representatives of numbers. Strictly speaking

they are called “numerals”.

• Numbers really have an ∞ number of digits

• with almost all being same (00…0 or 11…1) except

for a few of the rightmost digits

• Just don’t normally show leading digits

• If result of add (or -, *, / ) cannot be

represented by these rightmost HW bits,

overflow is said to have occurred.

[Figure: number line of 5-bit patterns 00000, 00001, 00010, … 11110, 11111, labeled “unsigned”.]

Peer Instruction Question

X = 1111 1111 1110 1100two

Y = 0011 1010 0000 0000two

A. X > Y (if signed)

B. X > Y (if unsigned)

C. X = -19 (if signed)

| Choice | ABC |
|---|---|
| 0: | FFF |
| 1: | FFT |
| 2: | FTF |
| 3: | FTT |
| 4: | TFF |
| 5: | TFT |
| 6: | TTF |
| 7: | TTT |

Peer Instruction Question

A: False (X negative)

B: True

C: False(X = -20)

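The three statements in the question can be checked by reading the same 16-bit patterns both ways. This is a sketch with assumed helper names; the signed reading is computed arithmetically rather than by a cast so that it does not rely on implementation-defined conversions.

```c
#include <stdint.h>

/* Read a 16-bit pattern as two's complement. */
int32_t to_signed16(uint16_t bits)
{
    return (bits & 0x8000u) ? (int32_t)bits - 65536 : (int32_t)bits;
}

int greater_signed(uint16_t x, uint16_t y)
{
    return to_signed16(x) > to_signed16(y);
}

int greater_unsigned(uint16_t x, uint16_t y)
{
    return x > y;
}
```

With X = 0xFFEC and Y = 0x3A00, `greater_signed` returns 0, `greater_unsigned` returns 1, and `to_signed16(0xFFEC)` is -20.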

Number summary...

META: We often make design

decisions to make HW simple

• We represent “things” in computers as particular bit patterns: N bits ⇒ $2^N$ things

• Decimal for human calculations, binary for computers, hex to write binary more easily

• 1’s complement - mostly abandoned

00000 00001 ... 01111 10000 ... 11110 11111

• 2’s complement universal in computing: cannot avoid, so learn

00000 00001 ... 01111 10000 ... 11110 11111

• Overflow: numbers ∞; computers finite, errors!

Information units

• Basic unit is the bit (has value 0 or 1)

• Bits are grouped together in units and operated on together:

• Byte = 8 bits

• Word = 4 bytes

• Double word = 2 words

• etc.

Encoding Byte Values

• Byte = 8 bits

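• Binary: $00000000_2$ to $11111111_2$

• Decimal: $0_{10}$ to $255_{10}$

First digit must not be 0 in C

• Hexadecimal: $00_{16}$ to $FF_{16}$

Base 16 number representation

Use characters ‘0’ to ‘9’ and ‘A’ to ‘F’

Write $FA1D37B_{16}$ in C as 0xFA1D37B

• Or 0xfa1d37b

| Hex | Decimal | Binary |
|---|---|---|
| 0 | 0 | 0000 |
| 1 | 1 | 0001 |
| 2 | 2 | 0010 |
| 3 | 3 | 0011 |
| 4 | 4 | 0100 |
| 5 | 5 | 0101 |
| 6 | 6 | 0110 |
| 7 | 7 | 0111 |
| 8 | 8 | 1000 |
| 9 | 9 | 1001 |
| A | 10 | 1010 |
| B | 11 | 1011 |
| C | 12 | 1100 |
| D | 13 | 1101 |
| E | 14 | 1110 |
| F | 15 | 1111 |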

Memory addressing

• Memory is an array of information units

– Each unit has the same size

– Each unit has its own address

– Address of a unit and contents of the unit at that address are different

| address | contents |
|---|---|
| 0 | 123 |
| 1 | -17 |
| 2 | 0 |

Addressing

• In most of today’s computers, the basic unit that can be addressed is a byte. (How many bits are in a byte?)

– x86 (and pretty much every CPU today) is byte addressable

• The address space is the set of all memory units that a program can reference

– The address space is usually tied to the length of the registers

– x86 has 32-bit registers. Hence its address space is 4G bytes

– Older micros (minis) had 16-bit registers, hence 64 KB address space (too small)

– Some current (Alpha, Itanium, Sparc, Athlon) machines have 64-bit registers, hence an enormous address space

Machine Words

• Machine Has “Word Size”

• Nominal size of integer-valued data

Including addresses

• Many current machines still use 32-bit (4-byte) words

Limits addresses to 4GB

Becoming too small for memory-intensive applications

• New or high-end systems use 64-bit (8-byte) words

Potential address space $1.8 \times 10^{19}$ bytes

x86-64 machines support 48-bit addresses: 256 Terabytes

• Machines support multiple data formats

Fractions or multiples of word size

Always integral number of bytes

Addressing words

• Although machines are byte-addressable, 4 byte integers are the

most commonly used units

• Every 32-bit integer starts at an address divisible by 4

int at address 0

int at address 4

int at address 8

Word-Oriented Memory Organization

• Addresses Specify Byte Locations

• Address of first byte in word

• Addresses of successive words differ by 4 (32-bit) or 8 (64-bit)

| Bytes Addr. | 32-bit Words | 64-bit Words |
|---|---|---|
| 0000 | Addr = 0000 | Addr = 0000 |
| 0001 | | |
| 0002 | | |
| 0003 | | |
| 0004 | Addr = 0004 | |
| 0005 | | |
| 0006 | | |
| 0007 | | |
| 0008 | Addr = 0008 | Addr = 0008 |
| 0009 | | |
| 0010 | | |
| 0011 | | |
| 0012 | Addr = 0012 | |
| 0013 | | |
| 0014 | | |
| 0015 | | |

Data Representations

• Sizes of C Objects (in Bytes)

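| C Data Type | Typical 32-bit | Intel IA32 | x86-64 |
|---|---|---|---|
| char | 1 | 1 | 1 |
| short | 2 | 2 | 2 |
| int | 4 | 4 | 4 |
| long | 4 | 4 | 8 |
| long long | 8 | 8 | 8 |
| float | 4 | 4 | 4 |
| double | 8 | 8 | 8 |
| long double | 8 | 10/12 | 10/16 |
| char * | 4 | 4 | 8 |

• char *: or any other pointer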

Byte Ordering

How should bytes within multi-byte

word be ordered in memory?

Conventions

Big Endian: Sun, PPC Mac, Internet

Least significant byte has highest address

Little Endian: x86

Least significant byte has lowest address

Byte Ordering Example

• Big Endian

• Least significant byte has highest address

• Big End First

• Little Endian

• Least significant byte has lowest address

• Little End First

• Example

• Variable x has 4-byte representation 0x01234567

• Address given by &x is 0x100

| Address | 0x100 | 0x101 | 0x102 | 0x103 |
|---|---|---|---|---|
| Big Endian | 01 | 23 | 45 | 67 |
| Little Endian | 67 | 45 | 23 | 01 |
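The two layouts can be produced explicitly with shifts, independent of the machine the code runs on. The following is a sketch; the function names are assumptions, and `host_is_little_endian` is a common probing idiom rather than anything taken from the slides.

```c
#include <stdint.h>

/* Most significant byte at the lowest address. */
void store_big_endian(uint32_t value, unsigned char out[4])
{
    for (int i = 0; i < 4; i++)
        out[i] = (unsigned char)(value >> (8 * (3 - i)));
}

/* Least significant byte at the lowest address. */
void store_little_endian(uint32_t value, unsigned char out[4])
{
    for (int i = 0; i < 4; i++)
        out[i] = (unsigned char)(value >> (8 * i));
}

/* Report the host's own ordering by looking at the first byte of a word. */
int host_is_little_endian(void)
{
    uint32_t probe = 1;
    return *(unsigned char *)&probe == 1;
}
```

Storing 0x01234567 gives the bytes 01 23 45 67 with `store_big_endian` and 67 45 23 01 with `store_little_endian`.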

Big-endian vs. little-endian

• Byte order within a word:

[Figure: the value of Word #0 drawn as four bytes, most significant on the left, each labeled with the memory byte address (0 to 3) that holds it. Little-endian (we’ll use this): 3 2 1 0. Big-endian: 0 1 2 3.]

Reading Byte-Reversed Listings

• Disassembly

• Text representation of binary machine code

• Generated by program that reads the machine code

• Example Fragment

| Address | Instruction Code | Assembly Rendition |
|---|---|---|
| 8048365: | 5b | pop %ebx |
| 8048366: | 81 c3 ab 12 00 00 | add $0x12ab,%ebx |
| 804836c: | 83 bb 28 00 00 00 00 | cmpl $0x0,0x28(%ebx) |

Deciphering Numbers

Value: 0x12ab

Pad to 32 bits: 0x000012ab

Split into bytes: 00 00 12 ab

Reverse: ab 12 00 00
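Reading the number back out of a byte-reversed listing is the same procedure in reverse. A minimal sketch follows, assuming the four immediate bytes are given in the order they appear in the listing (lowest address first); `load_little_endian` is a hypothetical name.

```c
#include <stdint.h>

/* Reassemble a 32-bit value from four bytes in little-endian order. */
uint32_t load_little_endian(const unsigned char bytes[4])
{
    uint32_t value = 0;
    for (int i = 3; i >= 0; i--)
        value = (value << 8) | bytes[i];   /* highest address is most significant */
    return value;
}
```

For the bytes ab 12 00 00 from the add instruction, the result is 0x12ab.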

Examining Data Representations

Code to Print Byte Representation of Data

Casting pointer to unsigned char * creates byte array

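    #include <stdio.h>

    typedef unsigned char *pointer;

    /* Print each byte of an object together with its address. */
    void show_bytes(pointer start, int len)
    {
        int i;
        for (i = 0; i < len; i++)
            /* %p expects a void * and usually prints its own 0x prefix */
            printf("%p\t0x%.2x\n", (void *)(start + i), start[i]);
        printf("\n");
    }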

Printf directives:

%p:Print pointer

%x: Print Hexadecimal

show_bytes Execution Example

int a = 15213;

printf("int a = 15213;\n");

show_bytes((pointer) &a, sizeof(int));

Result (Linux):

int a = 15213;

0x11ffffcb8 0x6d

0x11ffffcb9 0x3b

0x11ffffcba 0x00

0x11ffffcbb 0x00

Representing & Manipulating Sets

• Representation

• Width w bit vector represents subsets of {0, …, w–1}

• $a_j = 1$ if $j \in A$

01101001 { 0, 3, 5, 6 }

76543210

01010101 { 0, 2, 4, 6 }

76543210

• Operations

• & Intersection: 01000001 { 0, 6 }

• | Union: 01111101 { 0, 2, 3, 4, 5, 6 }

• ^ Symmetric difference: 00111100 { 2, 3, 4, 5 }

• ~ Complement: 10101010 { 1, 3, 5, 7 }
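The set operations map one-to-one onto C's bitwise operators. The sketch below uses an 8-bit vector to match the examples; the type name `bitset8` and the function names are assumptions for illustration.

```c
#include <stdint.h>

typedef uint8_t bitset8;   /* bit j is 1 exactly when element j is in the set */

int     set_contains(bitset8 s, unsigned j)      { return (s >> j) & 1u; }
bitset8 set_intersection(bitset8 a, bitset8 b)   { return a & b; }
bitset8 set_union(bitset8 a, bitset8 b)          { return a | b; }
bitset8 set_symdiff(bitset8 a, bitset8 b)        { return a ^ b; }
/* The cast drops the extra high bits created by integer promotion. */
bitset8 set_complement(bitset8 a)                { return (bitset8)~a; }
```

With a = 0x69 ({ 0, 3, 5, 6 }) and b = 0x55 ({ 0, 2, 4, 6 }), the four operations give 0x41, 0x7D, 0x3C and (for the complement of b) 0xAA, matching the bit patterns listed above.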

Bit-Level Operations in C

• Operations &, |, ~, ^ Available in C

• Apply to any “integral” data type

long, int, short, char, unsigned

• View arguments as bit vectors

• Arguments applied bit-wise

• Examples (Char data type)

• ~0x41 --> 0xBE

~$01000001_2$ --> $10111110_2$

• ~0x00 --> 0xFF

~$00000000_2$ --> $11111111_2$

• 0x69 & 0x55 --> 0x41

$01101001_2$ & $01010101_2$ --> $01000001_2$

• 0x69 | 0x55 --> 0x7D

$01101001_2$ | $01010101_2$ --> $01111101_2$

Contrast: Logic Operations in C

• Contrast to Logical Operators

• &&, ||, !

View 0 as “False”

Anything nonzero as “True”

Always return 0 or 1

Early termination

• Examples (char data type)

• !0x41 --> 0x00

• !0x00 --> 0x01

• !!0x41 --> 0x01

• 0x69 && 0x55 --> 0x01

• 0x69 || 0x55 --> 0x01

• p && *p (avoids null pointer access)
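The contrast between the bitwise and logical operators is easy to see side by side. This is a small sketch with hypothetical function names; the char-sized examples are wrapped in functions only so the results can be checked.

```c
/* Bitwise complement flips every bit; the cast keeps the low 8 bits. */
unsigned char bitwise_not(unsigned char x) { return (unsigned char)~x; }

/* Logical NOT collapses any value to 0 or 1. */
int logical_not(unsigned char x) { return !x; }

/* Early termination: *p is only read when p is non-null. */
int points_to_nonzero(const int *p) { return p && *p; }
```

`bitwise_not(0x41)` is 0xBE while `logical_not(0x41)` is 0, and `points_to_nonzero` can safely be called with a null pointer because the right-hand operand of `&&` is never evaluated in that case.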

Shift Operations

• Left Shift: x << y

• Shift bit-vector x left y positions

Throw away extra bits on left

Fill with 0’s on right

• Right Shift: x >> y

• Shift bit-vector x right y positions

Throw away extra bits on right

• Logical shift

Fill with 0’s on left

• Arithmetic shift

Replicate most significant bit on left

| Argument x | 01100010 | 10100010 |
|---|---|---|
| << 3 | 00010000 | 00010000 |
| Log. >> 2 | 00011000 | 00101000 |
| Arith. >> 2 | 00011000 | 11101000 |

• Undefined Behavior

• Shift amount < 0 or ≥ word size
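The shift examples can be reproduced on 8-bit values. In C, `>>` on an unsigned operand is a logical shift, while the result of `>>` on a negative signed operand is implementation-defined, so this sketch builds the arithmetic shift by hand. The function names are assumptions, and shift amounts are assumed to be between 0 and 7.

```c
#include <stdint.h>

uint8_t shl8(uint8_t x, unsigned y)         { return (uint8_t)(x << y); }
uint8_t logical_shr8(uint8_t x, unsigned y) { return (uint8_t)(x >> y); }

/* Arithmetic right shift built from the logical one: if the sign bit
 * is set, fill the y vacated high bits with 1s. */
uint8_t arith_shr8(uint8_t x, unsigned y)
{
    uint8_t shifted = (uint8_t)(x >> y);
    if (x & 0x80u)
        shifted |= (uint8_t)(0xFFu << (8 - y));
    return shifted;
}
```

For x = 0xA2 (10100010), `logical_shr8(x, 2)` is 0x28 (00101000) and `arith_shr8(x, 2)` is 0xE8 (11101000).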

The CPU - Instruction Execution Cycle

• The CPU executes a program by repeatedly following this cycle

1. Fetch the next instruction, say instruction i

2. Execute instruction i

3. Compute address of the next instruction, say j

4. Go back to step 1

• Of course we’ll optimize this but it’s the basic concept

What’s in an instruction?

• An instruction tells the CPU

– the operation to be performed via the OPCODE

– where to find the operands (source and destination)

• For a given instruction, the ISA specifies

– what the OPCODE means (semantics)

– how many operands are required and their types, sizes

etc.(syntax)

• Operand is either

– register (integer, floating-point, PC)

– a memory address

– a constant

Reference slides

You ARE responsible for the

material on these slides (they’re

just taken from the reading

anyway) ; we’ve moved them to

the end and off-stage to give

more breathing room to lecture!

Kilo, Mega, Giga, Tera, Peta, Exa, Zetta, Yotta

physics.nist.gov/cuu/Units/binary.html

• Common use prefixes (all SI, except K [= k in SI])

| Name | Abbr | Factor | SI size |
|---|---|---|---|
| Kilo | K | $2^{10}$ = 1,024 | $10^{3}$ = 1,000 |
| Mega | M | $2^{20}$ = 1,048,576 | $10^{6}$ = 1,000,000 |
| Giga | G | $2^{30}$ = 1,073,741,824 | $10^{9}$ = 1,000,000,000 |
| Tera | T | $2^{40}$ = 1,099,511,627,776 | $10^{12}$ = 1,000,000,000,000 |
| Peta | P | $2^{50}$ = 1,125,899,906,842,624 | $10^{15}$ = 1,000,000,000,000,000 |
| Exa | E | $2^{60}$ = 1,152,921,504,606,846,976 | $10^{18}$ = 1,000,000,000,000,000,000 |
| Zetta | Z | $2^{70}$ = 1,180,591,620,717,411,303,424 | $10^{21}$ = 1,000,000,000,000,000,000,000 |
| Yotta | Y | $2^{80}$ = 1,208,925,819,614,629,174,706,176 | $10^{24}$ = 1,000,000,000,000,000,000,000,000 |

• Confusing! Common usage of “kilobyte” means 1024 bytes, but the “correct” SI value is 1000 bytes

• Hard Disk manufacturers & Telecommunications are the only computing groups that use SI factors, so what is advertised as a 30 GB drive will actually only hold about $28 \times 2^{30}$ bytes, and a 1 Mbit/s connection transfers $10^6$ bps.

kibi, mebi, gibi, tebi, pebi, exbi, zebi, yobi

en.wikipedia.org/wiki/Binary_prefix

• New IEC Standard Prefixes [only to exbi officially]

| Name | Abbr | Factor |
|---|---|---|
| kibi | Ki | $2^{10}$ = 1,024 |
| mebi | Mi | $2^{20}$ = 1,048,576 |
| gibi | Gi | $2^{30}$ = 1,073,741,824 |
| tebi | Ti | $2^{40}$ = 1,099,511,627,776 |
| pebi | Pi | $2^{50}$ = 1,125,899,906,842,624 |
| exbi | Ei | $2^{60}$ = 1,152,921,504,606,846,976 |
| zebi | Zi | $2^{70}$ = 1,180,591,620,717,411,303,424 |
| yobi | Yi | $2^{80}$ = 1,208,925,819,614,629,174,706,176 |

As of this writing, this proposal has yet to gain widespread use…

• International Electrotechnical Commission (IEC) in

1999 introduced these to specify binary quantities.

• Names come from shortened versions of the

original SI prefixes (same pronunciation) and bi is

short for “binary”, but pronounced “bee” :-(

• Now SI prefixes only have their base-10 meaning

and never have a base-2 meaning.

The way to remember #s

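• What is $2^{34}$? How many bits addresses (i.e., what’s ceil $\log_2$ = lg of) 2.5 TiB?

• Answer! $2^{XY}$ means…

| X | Prefix | Approx. |
|---|---|---|
| 0 | --- | |
| 1 | kibi | ~$10^{3}$ |
| 2 | mebi | ~$10^{6}$ |
| 3 | gibi | ~$10^{9}$ |
| 4 | tebi | ~$10^{12}$ |
| 5 | pebi | ~$10^{15}$ |
| 6 | exbi | ~$10^{18}$ |
| 7 | zebi | ~$10^{21}$ |
| 8 | yobi | ~$10^{24}$ |

| Y | Factor |
|---|---|
| 0 | 1 |
| 1 | 2 |
| 2 | 4 |
| 3 | 8 |
| 4 | 16 |
| 5 | 32 |
| 6 | 64 |
| 7 | 128 |
| 8 | 256 |
| 9 | 512 |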

MEMORIZE!
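The X/Y rule can be written down as a lookup: split the exponent into tens (the prefix) and units (the factor). The sketch below answers both questions on the slide; `pow2_name` and `address_bits` are hypothetical helper names, and exponents are assumed to stay in the range 0 to 89.

```c
#include <stdio.h>

/* Describe 2^n as "<2^Y> <prefix>" where n = 10*X + Y.
 * Writes into buf (of the given size) and returns it. */
char *pow2_name(unsigned n, char *buf, size_t size)
{
    static const char *prefix[] = { "", " kibi", " mebi", " gibi", " tebi",
                                    " pebi", " exbi", " zebi", " yobi" };
    snprintf(buf, size, "%u%s", 1u << (n % 10), prefix[n / 10]);
    return buf;
}

/* Bits needed to address `bytes` bytes: ceil(lg(bytes)). */
unsigned address_bits(unsigned long long bytes)
{
    unsigned bits = 0;
    while (bits < 64 && (1ull << bits) < bytes)
        bits++;
    return bits;
}
```

`pow2_name(34, ...)` produces "16 gibi". Since 2.5 TiB is $5 \times 2^{39}$ bytes, which lies between $2^{41}$ and $2^{42}$, `address_bits(5ULL << 39)` is 42.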

Which base do we use?

• Decimal: great for humans, especially when

doing arithmetic

• Hex: if a human is looking at long strings of binary numbers, it’s much easier to convert to hex and look at 4 bits/symbol

• Terrible for arithmetic on paper

• Binary: what computers use;

you will learn how computers do +, -, *, /

• To a computer, numbers always binary

• Regardless of how number is written:

• $32_{\text{ten}} == 32_{10}$ == 0x20 == $100000_2$ == 0b100000

• Use subscripts “ten”, “hex”, “two” in book,

slides when might be confusing

Two’s Complement for N=32

| Bit pattern (two) | Value (ten) |
|---|---|
| 0000 ... 0000 0000 0000 0000 | 0 |
| 0000 ... 0000 0000 0000 0001 | 1 |
| 0000 ... 0000 0000 0000 0010 | 2 |
| ... | |
| 0111 ... 1111 1111 1111 1101 | 2,147,483,645 |
| 0111 ... 1111 1111 1111 1110 | 2,147,483,646 |
| 0111 ... 1111 1111 1111 1111 | 2,147,483,647 |
| 1000 ... 0000 0000 0000 0000 | –2,147,483,648 |
| 1000 ... 0000 0000 0000 0001 | –2,147,483,647 |
| 1000 ... 0000 0000 0000 0010 | –2,147,483,646 |
| ... | |
| 1111 ... 1111 1111 1111 1101 | –3 |
| 1111 ... 1111 1111 1111 1110 | –2 |
| 1111 ... 1111 1111 1111 1111 | –1 |

• One zero; 1st bit called sign bit

• 1 “extra” negative: no positive $2{,}147{,}483{,}648_{\text{ten}}$

Two’s comp. shortcut: Sign extension

• Convert 2’s complement number rep.

using n bits to more than n bits

• Simply replicate the most significant bit

(sign bit) of smaller to fill new bits

• 2’s comp. positive number has infinite 0s

• 2’s comp. negative number has infinite 1s

• Binary representation hides leading bits;

sign extension restores some of them

• 16-bit $-4_{\text{ten}}$ to 32-bit:

$1111\ 1111\ 1111\ 1100_{\text{two}}$

$1111\ 1111\ 1111\ 1111\ 1111\ 1111\ 1111\ 1100_{\text{two}}$
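Sign extension can be done by hand on the raw bit patterns. The sketch below widens a 16-bit pattern to 32 bits; the function name is an assumption, and in everyday C the same effect comes from converting an `int16_t` to `int32_t`.

```c
#include <stdint.h>

/* Widen a 16-bit two's complement pattern to 32 bits by copying
 * bit 15 (the sign bit) into all 16 new high-order bits. */
uint32_t sign_extend_16_to_32(uint16_t x)
{
    uint32_t wide = x;
    if (x & 0x8000u)
        wide |= 0xFFFF0000u;
    return wide;
}
```

The 16-bit pattern for -4, 0xFFFC, becomes 0xFFFFFFFC, while a non-negative pattern such as 0x0004 is simply padded with zeros.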

Preview: Signed vs. Unsigned Variables

• Java and C declare integers int

• Use two’s complement (signed integer)

• Also, C declaration unsigned int

• Declares an unsigned integer

• Treats 32-bit number as unsigned

integer, so most significant bit is part of

the number, not a sign bit