
Processes, Threads, Synchronization

CS 519: Operating System Theory

Computer Science, Rutgers University

Fall 2013

Process

Process = system abstraction for the set of resources required for executing a program

= a running instance of a program

= memory image + registers (+ I/O state)

The stack + registers form the execution context


Process Image

Each variable must be assigned a storage class

Global (static) variables: allocated in the global region at compile time

Local variables and parameters: allocated dynamically on the stack

Dynamically created objects: allocated from the heap

[Figure: memory layout of a process image: Code, Globals, Stack, Heap]

What About The OS Image?

Recall that one of the functions of an OS is to provide a virtual machine interface that makes programming the machine easier

So, a process memory image must also contain the OS

OS data space is used to store things like file descriptors for files being accessed by the process, status of I/O devices, etc.

[Figure: memory holding the OS image (Code, Globals, Heap, Stack) together with the process image (Code, Globals, Heap, Stack)]

What Happens When There Is More Than One Running Process?

[Figure: memory holding the OS and the images (Code, Globals, Stack, Heap) of processes P0, P1 and P2]

Process Control Block

Each process has per-process state maintained by the OS

Identification: process, parent process, user, group, etc.

Execution contexts: threads

Address space: virtual memory

I/O state: file handles (file system), communication endpoints (network), etc.

Accounting information

For each process, this state is maintained in a process control block (PCB)

This is just data in the OS data space

Process Creation

How to create a process? System call.

In UNIX, a process can create another process using the fork() system call

int pid = fork();    /* this is in C */

The creating process is called the parent and the new process is called the child

The child process is created as a copy of the parent process (process image and process control structure) except for the identification and scheduling state

Parent and child processes run in two different address spaces

By default, there is no memory sharing

Process creation is expensive because of this copying

The exec() call is provided for the newly created process to run a different program than that of the parent
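
The fork/exec/wait sequence can be sketched in C as follows. This is a minimal illustration, assuming a POSIX system with sh on the PATH; the function name spawn_and_wait and the command "exit 7" are hypothetical choices made for the example, not part of the lecture.

```c
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Fork a child, have it exec "sh -c 'exit 7'", and return the child's
 * exit status to the caller (or -1 on error). */
int spawn_and_wait(void)
{
    pid_t pid = fork();
    if (pid < 0)
        return -1;                      /* fork failed */

    if (pid == 0) {
        /* Child: replace the copied image with a different program. */
        execlp("sh", "sh", "-c", "exit 7", (char *)NULL);
        _exit(127);                     /* reached only if exec failed */
    }

    /* Parent: fork() returned the child's PID; wait for it to finish. */
    int status;
    if (waitpid(pid, &status, 0) < 0)
        return -1;
    return WIFEXITED(status) ? WEXITSTATUS(status) : -1;
}
```

Both processes return from the same fork() call; only the return value (0 in the child, the child's PID in the parent) tells them apart.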


System Call In Monolithic OS

[Figure: in user mode, id = fork() issues a trap; the interrupt vector for the trap instruction supplies the PC and PSW; the code for the fork system call runs in kernel mode, next to the in-kernel file system (monolithic OS); iret returns to user mode]

Process Creation

[Figure: the fork() code and the PCBs, shown for a sequence of fork(), exec() and fork() calls]

Example of Process Creation Using Fork

The UNIX shell is a command-line interpreter whose basic purpose is to let users run applications on a UNIX system

cmd arg1 arg2 ... argn

Process Termination

One process can wait for another process to finish using the wait() system call

Can wait for a child to finish as shown in the example

Can also wait for an arbitrary process if it knows its PID

Can kill another process using the kill() system call

What happens when kill() is invoked?

What if the victim process does not want to die?

Process Swapping

May want to swap out entire process

Thrashing if too many processes competing for resources

To swap out a process

Suspend its execution

Copy all of its information to backing store (except for PCB)

To swap a process back in

Copy needed information back into memory, e.g. page table, thread control blocks

Restore state to blocked or ready

Must check whether event(s) has (have) already occurred

Process State Diagram

[Figure: process state diagram: a ready (in memory) process becomes suspended (swapped out) on swap out and returns on swap in]

Signals

OS may need to “upcall” into user processes

Signals

UNIX mechanism to upcall when an event of interest occurs

Potentially interesting events are predefined: e.g., segmentation violation, message arrival, kill, etc.

When interested in “handling” a particular event (signal), a process indicates its interest to the OS and gives the OS a procedure that should be invoked in the upcall.

Signals (Cont’d)

When an event of interest occurs, the kernel handles the event first, then modifies the process’s stack to look as if the process’s code made a procedure call to the signal handler.

When the user process is scheduled next, it executes the handler first

From the handler, the user process returns to where it was when the event occurred
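
Registering a handler and receiving the upcall can be sketched in C as follows. This is an illustrative sketch assuming a POSIX system; the names on_usr1 and signal_demo are hypothetical, and SIGUSR1 is chosen only because it is reserved for application use.

```c
#define _POSIX_C_SOURCE 200809L   /* expose sigaction() under strict ISO C modes */
#include <signal.h>
#include <string.h>

static volatile sig_atomic_t got_signal = 0;

/* The procedure handed to the OS; it runs as the "upcall". */
static void on_usr1(int signo)
{
    (void)signo;
    got_signal = 1;
}

/* Register interest in SIGUSR1, send it to ourselves, and report whether
 * the handler ran. Returns 1 if it did, 0 if not, -1 on error. */
int signal_demo(void)
{
    struct sigaction sa;
    memset(&sa, 0, sizeof sa);
    sa.sa_handler = on_usr1;
    sigemptyset(&sa.sa_mask);
    if (sigaction(SIGUSR1, &sa, NULL) < 0)
        return -1;

    got_signal = 0;
    if (raise(SIGUSR1) != 0)
        return -1;
    /* raise() returns only after the handler has returned, so execution
     * resumes here, where it was when the event occurred. */
    return got_signal;
}
```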

[Figure: process stack with frames A and B, shown without and with the Handler frame]

Inter-Process Communication

Most operating systems provide several abstractions for inter-process communication: message passing, shared memory, etc.

Communication requires synchronization between processes (i.e. data must be produced before it is consumed)

Synchronization can be implicit (message passing) or explicit (shared memory)

Explicit synchronization can be provided by the OS (semaphores, monitors, etc.) or can be achieved exclusively in user-mode (if processes share memory)

Message Passing Implementation

process 1: X = 1; send(process2, &X)

process 2: receive(process1, &Y); print Y

[Figure: X is copied from process 1 into kernel buffers (1st copy) and from the kernel buffers into Y in process 2 (2nd copy)]

Two copy operations in a conventional implementation
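
The two copies can be made concrete with a UNIX pipe, used here as a stand-in for the slide's send/receive. This is a sketch under that assumption (POSIX system; the name pipe_message_demo is hypothetical): write() copies the value into a kernel buffer and read() copies it out again.

```c
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* "process 1" (the child) sends x through a kernel buffer; "process 2"
 * (the parent) receives it into y. Returns y, or -1 on error. */
int pipe_message_demo(void)
{
    int fds[2];                 /* fds[0]: read end, fds[1]: write end */
    if (pipe(fds) < 0)
        return -1;

    pid_t pid = fork();
    if (pid < 0) {
        close(fds[0]);
        close(fds[1]);
        return -1;
    }

    if (pid == 0) {                         /* sender */
        int x = 1;
        close(fds[0]);
        /* 1st copy: user buffer -> kernel pipe buffer */
        ssize_t n = write(fds[1], &x, sizeof x);
        _exit(n == (ssize_t)sizeof x ? 0 : 1);
    }

    int y = -1;                             /* receiver */
    close(fds[1]);
    /* 2nd copy: kernel pipe buffer -> user buffer. read() blocks until
     * data arrives, which is the implicit synchronization. */
    ssize_t n = read(fds[0], &y, sizeof y);
    close(fds[0]);
    waitpid(pid, NULL, 0);
    return n == (ssize_t)sizeof y ? y : -1;
}
```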

Shared Memory Implementation

process 1: X = 1

process 2: print Y

[Figure: X in process 1 and Y in process 2 both refer to the same shared region of physical memory; the kernel is not on the data path]

No copying, but synchronization is necessary

Inter-Process Communication

More on shared memory and message passing later

Synchronization after we talk about threads


A Tree of Processes On A Typical UNIX System

Process: Summary

System abstraction – the set of resources required for executing a program (an instantiation of a program)

Execution context

Address space

File handles, communication endpoints, etc.

Historically, all of the above “lumped” into a single abstraction

More recently, split into several abstractions

Threads, address space, protection domain, etc.

OS process management:

Supports creation of processes and interprocess communication (IPC)

Allocates resources to processes according to specific policies

Interleaves the execution of multiple processes to increase system utilization

Threads

Thread of execution: stack + registers (including PC)

Informally: where an execution stream is currently at in the program and the method invocation chain that brought the execution stream to the current place

Example: A called B, which called C, which called B, which called C

The PC should be pointing somewhere inside C at this point

The stack should contain 5 activation records: A/B/C/B/C

Process model discussed thus far implies a single thread


Multi-Threading

Why limit ourselves to a single thread?

Think of a web server that must service a large stream of requests

If only have one thread, can only process one request at a time

What to do when reading a file from disk?

Multi-threading model

Each process can have multiple threads

Each thread has a private stack

Registers are also private

All threads of a process share the code, the global data and heap


Process Address Space Revisited

[Figure: (a) single-threaded address space: OS, Code, Globals, one Stack, Heap; (b) multi-threaded address space: OS, Code, Globals, one Stack per thread, Heap]

Multi-Threading (cont)

Implementation

Each thread is described by a thread-control block (TCB)

A TCB typically contains

Thread ID

Space for saving registers

Pointer to thread-specific data not on stack

Observation

Although the model is that each thread has a private stack, threads actually share the process address space

There’s no memory protection!

Threads could potentially write into each other’s stack

Posix Thread (Pthread) API

thread creation and termination

pthread_create(&tid, NULL, start_fn, arg);

pthread_exit(status);

thread join

pthread_join(tid, &status);

mutual exclusion

pthread_mutex_lock(&lock);

pthread_mutex_unlock(&lock);

condition variable

pthread_cond_wait(&c, &lock);

pthread_cond_signal(&c);
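
A minimal sketch of the creation and join calls, assuming a POSIX threads implementation. The start routine start_fn doubles its argument purely for illustration, and create_and_join is a hypothetical helper name.

```c
#include <pthread.h>
#include <stdint.h>

/* Start routine: receives arg from pthread_create and returns a status
 * that pthread_join hands back to the joiner. */
static void *start_fn(void *arg)
{
    intptr_t n = (intptr_t)arg;
    return (void *)(n * 2);
}

/* Create one thread, join it, and return its status (or -1 on error). */
int create_and_join(int n)
{
    pthread_t tid;
    void *status;

    if (pthread_create(&tid, NULL, start_fn, (void *)(intptr_t)n) != 0)
        return -1;
    if (pthread_join(tid, &status) != 0)
        return -1;
    return (int)(intptr_t)status;
}
```

Returning from the start routine has the same effect as calling pthread_exit() with that value.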

26

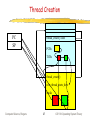

Thread Creation

PC

SP

thread_create() code

PCBs

TCBs

thread_create()

new_thread_starts_here

stacks

Computer Science, Rutgers

27

CS 519: Operating System Theory

Context Switching

Suppose a process has multiple threads but a uniprocessor machine has only 1 CPU: what to do?

In fact, even if we only had one thread per process, we would have to do something about running multiple processes …

We multiplex the multiple threads on the single CPU

At any instant in time, only one thread is running

At some point in time, the OS may decide to stop the currently running thread and allow another thread to run

This switching from one running thread to another is called context switching

Diagram of Thread State


Context Switching (cont)

How to do a context switch?

Save state of currently executing thread

Copy all “live” registers to the thread control block

Restore state of thread to run next

Copy values of live registers from thread control block to registers

When does context switching take place?
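
The save/restore step can be observed from user space with the ucontext routines, where swapcontext() saves the current registers into one structure and restores them from another. This is only an analogy for what a kernel does with TCBs, sketched under the assumption of a glibc-style system that provides <ucontext.h>; the names worker and run_switch_demo are hypothetical.

```c
#include <ucontext.h>

static ucontext_t main_ctx, worker_ctx;     /* play the role of two TCBs */
static char worker_stack[64 * 1024];        /* private stack for the worker */
static int trace[4];
static int n;

static void worker(void)
{
    trace[n++] = 2;
    swapcontext(&worker_ctx, &main_ctx);    /* save worker, restore main */
    trace[n++] = 4;
}                                           /* returning resumes uc_link */

/* Switch back and forth twice; returns 1234 if the steps ran in order. */
int run_switch_demo(void)
{
    n = 0;
    getcontext(&worker_ctx);
    worker_ctx.uc_stack.ss_sp = worker_stack;
    worker_ctx.uc_stack.ss_size = sizeof worker_stack;
    worker_ctx.uc_link = &main_ctx;         /* context to resume when worker ends */
    makecontext(&worker_ctx, worker, 0);

    trace[n++] = 1;
    swapcontext(&main_ctx, &worker_ctx);    /* save main, restore worker */
    trace[n++] = 3;
    swapcontext(&main_ctx, &worker_ctx);    /* worker resumes after its swap */
    return trace[0] * 1000 + trace[1] * 100 + trace[2] * 10 + trace[3];
}
```

Each side sees its swapcontext() call simply return later, which is the same view a thread has of a real context switch.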


Context Switching (cont)

When does context switching occur?

When the OS decides that a thread has run long enough and that another thread should be given the CPU

Remember how the OS gets control of the CPU back when it is executing user code?

When a thread performs an I/O operation and needs to block to wait for the completion of this operation

To wait for some other thread

Thread synchronization

How Is the Switching Code Invoked?

user thread executing
clock interrupt
PC modified by hardware to “vector” to interrupt handler
user thread state is saved for later resume
clock interrupt handler is invoked
disable interrupt checking
check whether current thread has run “long enough”
if yes, post asynchronous software trap (AST)
enable interrupt checking
exit interrupt handler
enter “return-to-user” code
check whether AST was posted
if not, restore user thread state and return to executing user thread; if AST was posted, call context switch code

Why need AST?

How Is the Switching Code Invoked? (cont)

user thread executing
system call to perform I/O
user thread state is saved for later resume
OS code to perform system call is invoked
I/O operation started (by invoking I/O driver)
set thread status to waiting
move thread’s TCB from run queue to wait queue associated with specific device
call context switching code

Context Switching

At entry to the context switch code (CS), the return address is either in a register or on the stack (in the current activation record)

CS saves this return address to the TCB instead of the current PC

To the thread, it looks like CS just took a while to return!

If the context switch was initiated from an interrupt, the thread never knows that it has been context switched out and back in unless it is looking at the “wall” clock

Context Switching (cont)

Even that is not quite the whole story

When a thread is switched out, what happens to it?

How do we find it to switch it back in?

This is what the TCB is for. System typically has

A run queue that points to the TCBs of threads ready to run

A blocked queue per device to hold the TCBs of threads blocked waiting for an I/O operation on that device to complete

When a thread is switched out at a timer interrupt, it is still ready to run so its TCB stays on the run queue

When a thread is switched out because it is blocking on an I/O operation, its TCB is moved to the blocked queue of the device

Ready Queue And Various I/O Device Queues

Switching Between Threads of Different Processes

What if switching to a thread of a different process?

Caches, TLB, page table, etc.?

Caches

Physical addresses: no problem

Virtual addresses: cache must either have process tag or must flush cache on context switch

TLB

Each entry must have process tag or must flush TLB on context switch

Page table

Typically have page table pointer (register) that must be reloaded on context switch

Threads & Signals

What happens if kernel wants to signal a process when all of its threads are blocked?

When there are multiple threads, which thread should the kernel deliver the signal to?

OS writes into process control block that a signal should be delivered

Next time any thread from this process is allowed to run, the signal is delivered to that thread as part of the context switch

What happens if kernel needs to deliver multiple signals?

Thread Implementation

Kernel-level threads (lightweight processes)

Kernel sees multiple execution contexts

Thread management done by the kernel

User-level threads

Implemented as a thread library, which contains the code for thread creation, termination, scheduling and switching

Kernel sees one execution context and is unaware of thread activity

Can be preemptive or not

User-Level Thread Implementation

[Figure: one process above the kernel containing code, data and thread stacks; thread 1 and thread 2 each have their own pc and sp]

User-Level vs. Kernel-Level Threads

Advantages of user-level threads

Performance: low-cost thread operations (do not require crossing protection domains)

Flexibility: scheduling can be application specific

Portability: user-level thread library easy to port

Disadvantages of user-level threads

If a user-level thread is blocked in the kernel, the entire process (all threads of that process) is blocked

Cannot take advantage of multiprocessing (the kernel assigns one process to only one processor)

User-Level vs. Kernel-Level Threads

[Figure: with user-level threads, thread scheduling is done in user space and the kernel does only process scheduling onto the processor; with kernel-level threads, both thread scheduling and process scheduling are done in the kernel]

User-Level vs. Kernel-Level Threads

No reason why we should not have both

Most systems now support kernel threads

User-level threads are available as linkable libraries

[Figure: user-level threads with their own thread scheduling in user space, running on kernel-level threads; the kernel does thread scheduling and process scheduling onto the processor]

Kernel Support for User-Level Threads

Even kernel threads are not quite the right abstraction for supporting user-level threads

Mismatch between where the scheduling information is available (user) and where scheduling on real processors is performed (kernel)

When the kernel thread is blocked, the corresponding physical processor is lost to all user-level threads although there may be some ready to run.

Why Kernel Threads Are Not The Right Abstraction

[Figure: user-level threads and user-level scheduling above the user/kernel boundary; below it, a blocked kernel thread, kernel-level scheduling and the physical processor]

Scheduler Activations: Kernel Support for User-Level Threads

Each process contains a user-level thread system (ULTS) that controls the scheduling of the allocated processors

Kernel allocates processors to processes as scheduler activations (SAs). An SA is similar to a kernel thread, but it also transfers control from the kernel to the ULTS on a kernel event as described below

Kernel notifies a process whenever the number of allocated processors changes or when an SA is blocked due to the user-level thread running on it (e.g., for I/O or on a page fault)

The process notifies the kernel when it needs more or fewer SAs (processors)

Ex.: (1) Kernel notifies ULTS that user-level thread blocked by creating an SA and upcalling the process; (2) ULTS removes the state from the old SA, tells the kernel that it can be reused, and decides which user-level thread to run on the new SA

User-Level Threads On Top of Scheduler Activations

[Figure: user-level threads (one blocked, one active) and user-level scheduling above the user/kernel boundary; below it, scheduler activations (one blocked, one active), kernel-level scheduling and the physical processor]

Source: T. Anderson et al. “Scheduler Activations: Effective Kernel Support for the User-Level Management of Parallelism”. ACM TOCS, 1992.

Threads vs. Processes

Why multiple threads?

Can’t we use multiple processes to do whatever it is that we do with multiple threads?

Of course, we need to be able to share memory (and other resources) between multiple processes …

But this sharing is already supported by threads

Operations on threads (creation, termination, scheduling, etc.) are cheaper than the corresponding operations on processes

This is because thread operations do not involve manipulations of other resources associated with processes (I/O descriptors, address space, etc.)

Inter-thread communication is supported through shared memory without kernel intervention

Why not? Have multiple other resources, why not threads?

Thread/Process Operation Latencies

Operation      User-level threads (μs)   Kernel threads (μs)   Processes (μs)
Null fork      34                        948                   11,300
Signal-wait    37                        441                   1,840

VAX uniprocessor running UNIX-like OS, 1992.

Operation      Kernel threads (μs)   Processes (μs)
Null fork      45                    108

2.8-GHz Pentium 4 uniprocessor running Linux, 2004.

Synchronization

Synchronization

Problem

Threads must share data

Data consistency must be maintained


The Critical Section Problem

When a process executes code that manipulates shared data (or resource), we say that the process is in its critical section (for that shared data)

The execution of critical sections must be mutually exclusive: at any time, only one process is allowed to execute in its critical section (even with multiple CPUs)

Each process must request permission to enter a critical section

Terminologies

Critical section: a section of code which reads or writes shared data

Race condition: potential for interleaved execution of a critical section by multiple threads

Results are non-deterministic

Mutual exclusion: synchronization mechanism to avoid race conditions by ensuring exclusive execution of critical sections

Deadlock: permanent blocking of threads

Starvation: execution but no progress

The Critical Section Problem

• The section of code implementing the request to enter a CS is called the entry section

• The critical section might be followed by an exit section

• The remaining code is the remainder section

• The critical section problem: to design a protocol that, if executed by concurrent processes, ensures that their action will not depend on the order in which their execution is interleaved (possibly on many processors)

Framework for Critical Section Solution Analysis

• Each thread executes at nonzero speed, but no assumption on the relative speed of n threads

• Structure of a concurrent thread:

repeat
    entry section
    critical section
    exit section
    remainder section
forever

• No assumption about order of interleaved execution

• Basically, a ME solution must specify the entry and exit sections

Requirements for Mutual Exclusion

• No assumptions on hardware: speed, # of processors

• Mutual exclusion is maintained – that is, only one thread at a time can be executing inside a CS

• Execution of CS takes a finite time

• A thread/process not in CS cannot prevent other threads/processes from entering the CS

• Entering CS cannot be delayed indefinitely: no deadlock or starvation

What about thread failures?

If all three criteria (ME, progress, bounded waiting) are satisfied, then a valid solution will provide robustness against failure of a thread in its remainder section (RS)

Because failure in RS is just like having an infinitely long RS

However, no valid solution can provide robustness against a thread failing in its critical section (CS)

A thread Ti that fails in its CS does not signal that fact to other threads: for them Ti is still in its CS

Synchronization Primitives

Most common primitives

Locks (mutual exclusion)

Condition variables

Semaphores

Monitors

Need

Semaphores, or

Locks and condition variables, or

Monitors


Solutions for Mutual Exclusion

• software reservation: a thread must register its intent to enter CS and then wait until no other thread has registered a similar intention before proceeding

• spin-locks using memory-interlocked instructions: require special hardware to ensure that a given location can be read, modified and written without interruption (e.g. TST: test&set instruction)

• OS-based mechanisms for ME: semaphores, monitors, message passing, lock files

Locks

Mutual exclusion: want to be the only thread modifying a set of data items

Can look at it as exclusive access to data items or to a piece of code

Have three components: Acquire, Release, Waiting

Example

public class BankAccount
{
    Lock aLock = new Lock();
    int balance = 0;
    ...
    public void deposit(int amount)
    {
        aLock.acquire();
        balance = balance + amount;
        aLock.release();
    }

    public void withdrawal(int amount)
    {
        aLock.acquire();
        balance = balance - amount;
        aLock.release();
    }
}

Implementing Locks Inside OS Kernel

From Nachos (with some simplifications)

public class Lock {
    private KThread lockHolder = null;
    private ThreadQueue waitQueue =
        ThreadedKernel.scheduler.newThreadQueue(true);

    public void acquire() {
        KThread thread = KThread.currentThread();  // Get thread object (TCB)
        if (lockHolder != null) {                  // Gotta wait
            waitQueue.waitForAccess(thread);       // Put thread on wait queue
            KThread.sleep();                       // Context switch
        }
        else {
            lockHolder = thread;                   // Got the lock
        }
    }

Implementing Locks Inside OS Kernel (cont)

    public void release() {
        if ((lockHolder = waitQueue.nextThread()) != null)
            lockHolder.ready();    // Wake up a waiting thread
    }

This implementation is not quite right … what’s missing?

Implementing Locks Inside OS Kernel (cont)

    public void release() {
        boolean intStatus = Machine.interrupt().disable();
        if ((lockHolder = waitQueue.nextThread()) != null)
            lockHolder.ready();
        Machine.interrupt().restore(intStatus);
    }

Unfortunately, disabling interrupts only works for uniprocessors.

Implementing Locks At User-Level

Why?

Expensive to enter the kernel

Parallel programs on multiprocessor systems

What’s the problem?

Can’t disable interrupt …

Many software algorithms for mutual exclusion

See any OS book

Disadvantages: very difficult to get correct

So what do we do?


Implementing Locks At User-Level

Simple with a “little bit” of help from the hardware

Atomic read-modify-write instructions

Test-and-set

Atomically read a variable and, if the value of the variable is currently 0, set it to 1

Fetch-and-increment

Compare-and-swap

Hardware Solutions: Interrupt Disabling

• On a uniprocessor, mutual exclusion is preserved but efficiency of execution is degraded

• while in CS, execution cannot be interleaved with other processes in RS

• On a multiprocessor, mutual exclusion is not preserved

• CS is atomic but not mutually exclusive

• Generally not an acceptable solution

Process Pi:
repeat
    disable interrupts
    critical section
    enable interrupts
    remainder section
forever

Hardware Solutions: Special Machine Instructions

• Normally, an access to a memory location excludes other accesses to that same location

• Extension: designers have proposed machine instructions that perform two actions atomically (indivisibly) on the same memory location (ex: reading and writing)

• The execution of such an instruction is also mutually exclusive (even with multiple CPUs)

• They can be used to provide mutual exclusion but need to be complemented by other mechanisms to satisfy the other two requirements of the CS problem (and avoid starvation and deadlock)

Test-and-Set Instruction

• A C++ description of test-and-set:

bool testset(int& i)
{
    if (i==0) {
        i=1;
        return true;
    } else {
        return false;
    }
}

• An algorithm that uses test&set for mutual exclusion:

Process Pi:
repeat
    repeat{}
    until testset(b);
    CS
    b:=0;
    RS
forever

Test-and-Set Instruction (cont.)

Shared variable b is initialized to 0

Only the first Pi that sets b enters CS

Mutual exclusion is preserved

• if Pi enters CS, the other Pj are busy waiting

When Pi exits CS, the selection of the next Pj that enters CS is arbitrary

No bounded waiting

Starvation is possible

Using xchg for Mutual Exclusion

• Shared variable b is initialized to 0

• Each Pi has a local variable k

• The only Pi that can enter CS is the one that finds b=0

• This Pi excludes all the other Pj by setting b to 1

Process Pi:
repeat
    k:=1
    repeat xchg(k,b)
    until k=0;
    CS
    b:=0;
    RS
forever

Atomic Read-Modify-Write Instructions

Test-and-set

Read a memory location and, if the value is currently 0, set it to 1

Fetch-and-increment

Return the value of a memory location

Increment the value by 1 (in memory, not the value returned)

Compare-and-swap

Compare the value of a memory location with an old value

If the same, replace with a new value
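
These three operations map onto C11 atomics roughly as follows. The wrapper names are hypothetical; the sketch assumes a C11 compiler with <stdatomic.h>. Note that atomic_exchange stores 1 unconditionally, which leaves memory in the same state as "set to 1 if currently 0" when the location only ever holds 0 or 1.

```c
#include <stdatomic.h>
#include <stdbool.h>

/* Test-and-set: atomically store 1 and return the previous value. */
int test_and_set(atomic_int *loc)
{
    return atomic_exchange(loc, 1);
}

/* Fetch-and-increment: return the old value; memory holds old + 1. */
int fetch_and_increment(atomic_int *loc)
{
    return atomic_fetch_add(loc, 1);
}

/* Compare-and-swap: if *loc == expected, store desired and return true;
 * otherwise leave *loc unchanged and return false. */
bool compare_and_swap(atomic_int *loc, int expected, int desired)
{
    return atomic_compare_exchange_strong(loc, &expected, desired);
}
```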


Mutual Exclusion Machine Instructions

Advantages

Applicable to any number of processes/threads on either a single processor or multiple processors sharing main memory

It is simple and easy to verify

It can be used to support multiple critical sections

Mutual Exclusion Machine Instructions

Disadvantages

Busy-waiting consumes processor time

Starvation is possible when a process leaves a critical section and more than one process is waiting.

Deadlock

If a low priority process has the critical region and a higher priority process needs it, the higher priority process will obtain the processor just to wait for the critical region


Implementing Spin Locks Using Test&Set

#define UNLOCKED 0
#define LOCKED 1

Spin_acquire(lock)
{
    while (test_and_set(lock) == LOCKED)
        ;    /* spin until the old value was UNLOCKED */
}

Spin_release(lock)
{
    lock = UNLOCKED;
}

Problems? Lots of memory traffic if TAS always sets; lots of traffic when lock is released; no ordering guarantees. Solutions?

Spin Locks Using Test and Test&Set

Spin_acquire(lock)
{
    while (1) {
        while (lock == LOCKED)
            ;    /* spin on ordinary reads */
        if (test_and_set(lock) == UNLOCKED)
            break;
    }
}

Spin_release(lock)
{
    lock = UNLOCKED;
}

Better, since TAS is guaranteed not to generate traffic unnecessarily. But there is still lots of traffic after a release. Still no ordering guarantees.
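
A runnable version of the test and test&set lock can be sketched with C11 atomics and POSIX threads. This is an illustration, not the lecture's code: atomic_exchange stands in for the test-and-set instruction, and the worker, counter and run_spin_demo names (and the thread and iteration counts) are assumptions made for the example.

```c
#include <pthread.h>
#include <stdatomic.h>

#define UNLOCKED 0
#define LOCKED   1

static atomic_int lock = UNLOCKED;
static long counter;                /* shared data protected by lock */

static void spin_acquire(atomic_int *l)
{
    for (;;) {
        /* Test: spin on plain loads until the lock looks free. */
        while (atomic_load(l) == LOCKED)
            ;
        /* Test&set: only now attempt the atomic read-modify-write. */
        if (atomic_exchange(l, LOCKED) == UNLOCKED)
            return;
    }
}

static void spin_release(atomic_int *l)
{
    atomic_store(l, UNLOCKED);
}

static void *worker(void *arg)
{
    (void)arg;
    for (int i = 0; i < 10000; i++) {
        spin_acquire(&lock);
        counter++;                  /* critical section */
        spin_release(&lock);
    }
    return NULL;
}

/* Run 4 threads that each add 10000 to counter; returns the final value,
 * or -1 if a thread could not be created. */
long run_spin_demo(void)
{
    pthread_t t[4];
    int created = 0;

    counter = 0;
    while (created < 4 && pthread_create(&t[created], NULL, worker, NULL) == 0)
        created++;
    for (int i = 0; i < created; i++)
        pthread_join(t[i], NULL);
    return created == 4 ? counter : -1;
}
```

Without the lock, the increments from different threads could interleave and the final count would be unpredictable.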


OS Solutions: Semaphores

Synchronization tool (provided by the OS) that does not require busy waiting

A semaphore S is an integer variable that, apart from initialization, can only be accessed through two atomic and mutually exclusive operations:

wait(S)

signal(S)

Avoids busy waiting

when a thread has to wait, the OS will put it in a blocked queue of threads waiting for that semaphore

Semaphores

• Internally, a semaphore is a record (structure):

type semaphore = record
    count: integer;
    queue: list of threads
end;
var S: semaphore;

• When a thread must wait for a semaphore S, it is blocked and put on the semaphore’s queue

• The signal operation removes (according to a fair policy like FIFO) one thread from the queue and puts it in the list of ready threads

Semaphore Operations

wait(S):
    S.count--;
    if (S.count<0) {
        block this thread
        place this thread in S.queue
    }

signal(S):
    S.count++;
    if (S.count<=0) {
        remove a thread P from S.queue
        place this thread P on ready list
    }

• S.count must be initialized to a nonnegative value (depending on application)
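
A counting semaphore with blocking (rather than spinning) waiters can be sketched at user level from a pthread mutex and condition variable. This is a variant of the operations above, not a transcription: here count never goes negative, and the queue of blocked threads is kept by the condition variable instead of an explicit list. The type and function names are hypothetical.

```c
#include <pthread.h>

typedef struct {
    int count;                  /* number of waits that can still proceed */
    pthread_mutex_t mutex;      /* makes wait/signal mutually exclusive */
    pthread_cond_t nonzero;     /* blocked threads sleep here */
} semaphore;

int semaphore_init(semaphore *s, int initial)
{
    s->count = initial;
    if (pthread_mutex_init(&s->mutex, NULL) != 0)
        return -1;
    if (pthread_cond_init(&s->nonzero, NULL) != 0)
        return -1;
    return 0;
}

void semaphore_wait(semaphore *s)
{
    pthread_mutex_lock(&s->mutex);
    while (s->count == 0)                       /* block instead of spinning */
        pthread_cond_wait(&s->nonzero, &s->mutex);
    s->count--;
    pthread_mutex_unlock(&s->mutex);
}

void semaphore_signal(semaphore *s)
{
    pthread_mutex_lock(&s->mutex);
    s->count++;
    pthread_cond_signal(&s->nonzero);           /* wake one waiter, if any */
    pthread_mutex_unlock(&s->mutex);
}
```

The while loop around pthread_cond_wait() rechecks the count after every wakeup, which is needed because condition variables permit spurious wakeups.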

Semaphores: Observations

S.count >= 0

the number of threads that can execute wait(S) without being blocked is S.count

S.count < 0

the number of threads waiting on S is |S.count|

Atomicity and mutual exclusion

no two threads can be in wait(S) and signal(S) (on the same S) at the same time (even with multiple CPUs)

The code defining wait(S) and signal(S) must be executed in critical sections

Semaphores: Implementation

• Key observation: the critical sections defined by wait(S) and signal(S) are very short (typically 10 instructions)

• Uniprocessor solutions:

disable interrupts during these operations (i.e., for a very short period)

does not work on a multiprocessor machine.

• Multiprocessor solutions:

use software or hardware mutual exclusion solutions in the OS.

the amount of busy waiting is small.

Using Semaphores for Solving Critical

Section Problems

For n threads

Initialize S.count to 1

Only one thread is allowed into

CS (mutual exclusion)

To allow k threads into CS, we

initialize S.count to k

Process Pi:

repeat

wait(S);

CS

signal(S);

RS

forever
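The Process Pi loop above can be sketched with POSIX unnamed semaphores, where sem_wait and sem_post play the roles of wait(S) and signal(S). This is a minimal sketch assuming a Linux-like system where sem_init is supported; the names worker, shared_counter, run_mutex_demo, NTHREADS, and ITERS are hypothetical, and the infinite loop is replaced by a fixed number of iterations so the result can be checked.

```c
#include <pthread.h>
#include <semaphore.h>

#define NTHREADS 4        /* hypothetical: number of competing threads */
#define ITERS    100000   /* hypothetical: CS entries per thread */

static sem_t S;                 /* plays the role of semaphore S */
static long shared_counter;     /* touched only inside the critical section */

static void *worker(void *arg) {
    (void)arg;
    for (int i = 0; i < ITERS; i++) {
        sem_wait(&S);           /* wait(S): enter the critical section */
        shared_counter++;       /* CS */
        sem_post(&S);           /* signal(S): leave the critical section */
        /* RS: remainder section would go here */
    }
    return NULL;
}

/* Runs the workers and returns the final counter, or -1 on setup failure. */
long run_mutex_demo(void) {
    pthread_t tid[NTHREADS];
    shared_counter = 0;
    if (sem_init(&S, 0, 1) != 0) return -1;   /* S.count = 1: one thread in CS */
    for (int i = 0; i < NTHREADS; i++)
        if (pthread_create(&tid[i], NULL, worker, NULL) != 0) return -1;
    for (int i = 0; i < NTHREADS; i++)
        pthread_join(tid[i], NULL);
    sem_destroy(&S);
    return shared_counter;
}
```

Calling run_mutex_demo() from main should return NTHREADS * ITERS, since no increment is lost while the semaphore admits one thread at a time. Initializing the semaphore to k instead of 1 would admit up to k threads, which would no longer protect the counter.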

Using Semaphores to Synchronize Threads

We have two threads: P1 and P2

Problem: Statement S1 in P1 must be performed

before statement S2 in P2

Solution: define a semaphore “synch”

Initialize synch to 0

P1 code:

S1;

signal(synch);

P2 code:

wait(synch);

S2;
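The ordering pattern above can be sketched with a POSIX semaphore initialized to 0. This is an illustration under assumptions: the names p1, p2, trace, next_slot, and run_order_demo are hypothetical, S1 and S2 are modeled as writes into a small trace array, and P2 is deliberately started first to show that it still runs S2 only after S1.

```c
#include <pthread.h>
#include <semaphore.h>

static sem_t synch;       /* the "synch" semaphore, initialized to 0 */
static int trace[2];      /* records the order in which S1 and S2 ran */
static int next_slot;

static void *p1(void *arg) {
    (void)arg;
    trace[next_slot++] = 1;     /* S1 */
    sem_post(&synch);           /* signal(synch) */
    return NULL;
}

static void *p2(void *arg) {
    (void)arg;
    sem_wait(&synch);           /* wait(synch): blocks until S1 has run */
    trace[next_slot++] = 2;     /* S2 */
    return NULL;
}

/* Returns 1 if S1 ran before S2, 0 otherwise, -1 on setup failure. */
int run_order_demo(void) {
    pthread_t t1, t2;
    next_slot = 0;
    if (sem_init(&synch, 0, 0) != 0) return -1;
    /* Start P2 first on purpose; it must still wait for P1. */
    if (pthread_create(&t2, NULL, p2, NULL) != 0) return -1;
    if (pthread_create(&t1, NULL, p1, NULL) != 0) return -1;
    pthread_join(t1, NULL);
    pthread_join(t2, NULL);
    sem_destroy(&synch);
    return trace[0] == 1 && trace[1] == 2;
}
```

Because synch starts at 0, the wait in P2 cannot complete until P1 has executed S1 and signaled, whichever thread the scheduler runs first.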

Binary Semaphores

•Similar to general (counting) semaphores except that “count”

is Boolean valued

•Counting semaphores can be implemented using binary

semaphores

•More difficult to use than counting semaphores (e.g., they cannot be initialized to an integer k > 1)

Binary Semaphore Operations

waitB(S):
    if (S.value = 1) {
        S.value := 0;
    } else {
        place this process in S.queue
        block this process
    }

signalB(S):
    if (S.queue is empty) {
        S.value := 1;
    } else {
        remove a process P from S.queue
        place process P on the ready list
    }

Problems with Semaphores

• Semaphores are a powerful tool for enforcing mutual exclusion and coordinating threads

• Problem: wait(S) and signal(S) are scattered

among several threads

•It is difficult to understand their effects

•Usage must be correct in all threads

•One badly coded (or malicious) thread can cause the entire collection of threads to fail

Monitors

• Are high-level language constructs that provide

equivalent functionality to semaphores but are

easier to control

• Found in many concurrent programming languages

• Concurrent Pascal, Modula-3, uC++, Java...

• Can be implemented using semaphores

Monitors

Is a software module containing:

•one or more procedures

•an initialization sequence

•local data variables

Characteristics:

•local variables accessible only by monitor’s procedures

•a process enters the monitor by invoking one of its procedures

•only one process can be in the monitor at any one time

Monitors

• The monitor ensures mutual exclusion

• no need to program this constraint explicitly

• Shared data are protected by placing them in the

monitor

•The monitor locks the shared data on process entry

• Process/thread synchronization is done using

condition variables, which represent conditions a

process may need to wait for before executing in the

monitor

Condition Variables

A condition variable is always associated with a

condition and a lock

Typically used to wait for a condition to take on a given

value

Three operations:

cond_wait(lock, cond_var)

cond_signal(cond_var)

cond_broadcast(cond_var)

Condition Variables

Local to the monitor (accessible only within the monitor)

Can be accessed and changed only by two functions:

•cwait(a): blocks execution of the calling thread on

condition (variable) a

• the process can resume execution only if another process

executes csignal(a)

•csignal(a): resume execution of some process blocked

on condition (variable) a.

• If several such processes exist: choose any one

• If no such process exists: do nothing

Condition Variables

cond_wait(lock, cond_var)

Release the lock

Sleep on cond_var

When awakened by the system, reacquire the lock and return

cond_signal(cond_var)

If at least 1 thread is sleeping on cond_var, wake 1 up

Otherwise, no effect

cond_broadcast(cond_var)

If at least 1 thread is sleeping on cond_var, wake everyone up

Otherwise, no effect
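The difference between waking one sleeper and waking all of them can be sketched with the Pthread equivalents of these operations. This is a minimal sketch under assumptions: the names gate_open, gate_cv, passed, waiter, run_broadcast_demo, and NWAITERS are hypothetical, and the condition being waited for is simply a flag that one thread sets.

```c
#include <pthread.h>

#define NWAITERS 3   /* hypothetical: number of threads waiting on the gate */

static pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER;
static pthread_cond_t gate_cv = PTHREAD_COND_INITIALIZER;
static int gate_open;   /* the condition the waiters sleep on */
static int passed;      /* how many waiters got through */

static void *waiter(void *arg) {
    (void)arg;
    pthread_mutex_lock(&lock);
    while (!gate_open)                          /* re-check after every wake-up */
        pthread_cond_wait(&gate_cv, &lock);     /* releases lock while asleep */
    passed++;                                   /* lock is held again here */
    pthread_mutex_unlock(&lock);
    return NULL;
}

/* Returns the number of waiters that passed the gate, or -1 on failure. */
int run_broadcast_demo(void) {
    pthread_t tid[NWAITERS];
    gate_open = 0;
    passed = 0;
    for (int i = 0; i < NWAITERS; i++)
        if (pthread_create(&tid[i], NULL, waiter, NULL) != 0) return -1;

    pthread_mutex_lock(&lock);
    gate_open = 1;
    pthread_cond_broadcast(&gate_cv);           /* wake every sleeping waiter */
    pthread_mutex_unlock(&lock);

    for (int i = 0; i < NWAITERS; i++)
        pthread_join(tid[i], NULL);
    return passed;
}
```

A single pthread_cond_signal here would wake at most one sleeping waiter, so the others could sleep forever; broadcast fits cases where one state change satisfies every waiter. The while loop also covers waiters that arrive after the broadcast, since they see gate_open already set and never sleep.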

POSIX Thread (Pthread) API

thread creation and termination

    pthread_create(&tid, NULL, start_fn, arg);
    pthread_exit(status);

thread join

    pthread_join(tid, &status);

mutual exclusion

    pthread_mutex_lock(&lock);
    pthread_mutex_unlock(&lock);

condition variable

    pthread_cond_wait(&c, &lock);
    pthread_cond_signal(&c);

Condition Variables (example)

thread 1

    pthread_mutex_lock(&lock);
    while (!my_condition)
        pthread_cond_wait(&c, &lock);
    do_critical_section();
    pthread_mutex_unlock(&lock);

thread 2

    pthread_mutex_lock(&lock);
    my_condition = true;
    pthread_mutex_unlock(&lock);
    pthread_cond_signal(&c);

Producer/Consumer Example

Producer

    lock(lock_bp)
    while (free_bp.is_empty())
        cond_wait(lock_bp, cond_freebp_empty)
    buffer = free_bp.get_buffer()
    unlock(lock_bp)

    … produce data in buffer …

    lock(lock_bp)
    data_bp.add_buffer(buffer)
    cond_signal(cond_databp_empty)
    unlock(lock_bp)

Consumer

    lock(lock_bp)
    while (data_bp.is_empty())
        cond_wait(lock_bp, cond_databp_empty)
    buffer = data_bp.get_buffer()
    unlock(lock_bp)

    … consume data in buffer …

    lock(lock_bp)
    free_bp.add_buffer(buffer)
    cond_signal(cond_freebp_empty)
    unlock(lock_bp)
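The producer/consumer scheme above, with a pool of free buffers and a pool of filled buffers guarded by one lock, can be sketched in C with Pthreads. This is an illustration under assumptions: pool_t, get_buffer, add_buffer, buffers, consumed_sum, run_pc_demo, NBUF, and NITEMS are hypothetical names, each pool is a simple stack of buffer indices, and "producing" just means writing an integer into the buffer.

```c
#include <pthread.h>

#define NBUF   4      /* hypothetical: number of buffers in circulation */
#define NITEMS 1000   /* hypothetical: items produced and consumed */

typedef struct { int slots[NBUF]; int n; } pool_t;  /* stack of buffer indices */

static pthread_mutex_t lock_bp = PTHREAD_MUTEX_INITIALIZER;
static pthread_cond_t cond_freebp_empty = PTHREAD_COND_INITIALIZER;
static pthread_cond_t cond_databp_empty = PTHREAD_COND_INITIALIZER;
static pool_t free_bp, data_bp;
static int buffers[NBUF];
static long consumed_sum;

static int  get_buffer(pool_t *p)        { return p->slots[--p->n]; }
static void add_buffer(pool_t *p, int b) { p->slots[p->n++] = b; }

static void *producer(void *arg) {
    (void)arg;
    for (int i = 1; i <= NITEMS; i++) {
        pthread_mutex_lock(&lock_bp);
        while (free_bp.n == 0)                       /* no free buffer yet */
            pthread_cond_wait(&cond_freebp_empty, &lock_bp);
        int b = get_buffer(&free_bp);
        pthread_mutex_unlock(&lock_bp);

        buffers[b] = i;                              /* produce data in buffer */

        pthread_mutex_lock(&lock_bp);
        add_buffer(&data_bp, b);
        pthread_cond_signal(&cond_databp_empty);     /* data pool is non-empty */
        pthread_mutex_unlock(&lock_bp);
    }
    return NULL;
}

static void *consumer(void *arg) {
    (void)arg;
    for (int i = 0; i < NITEMS; i++) {
        pthread_mutex_lock(&lock_bp);
        while (data_bp.n == 0)                       /* nothing to consume yet */
            pthread_cond_wait(&cond_databp_empty, &lock_bp);
        int b = get_buffer(&data_bp);
        pthread_mutex_unlock(&lock_bp);

        consumed_sum += buffers[b];                  /* consume data in buffer */

        pthread_mutex_lock(&lock_bp);
        add_buffer(&free_bp, b);
        pthread_cond_signal(&cond_freebp_empty);     /* free pool is non-empty */
        pthread_mutex_unlock(&lock_bp);
    }
    return NULL;
}

/* Returns the sum of all consumed items, or -1 on setup failure. */
long run_pc_demo(void) {
    pthread_t prod, cons;
    free_bp.n = 0;
    data_bp.n = 0;
    consumed_sum = 0;
    for (int b = 0; b < NBUF; b++) add_buffer(&free_bp, b);
    if (pthread_create(&prod, NULL, producer, NULL) != 0) return -1;
    if (pthread_create(&cons, NULL, consumer, NULL) != 0) return -1;
    pthread_join(prod, NULL);
    pthread_join(cons, NULL);
    return consumed_sum;
}
```

The lock is held only while a buffer changes pools, not while data is produced or consumed, so the two threads can work on different buffers at the same time. Each buffer is owned by exactly one thread between its get and add calls, which is why the unlocked accesses to buffers[b] are safe in this sketch.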

Monitors

•Waiting processes are either in the entrance queue or in a condition queue

•A process puts itself into condition queue cn by issuing cwait(cn)

•csignal(cn) brings into the monitor one process from the condition cn queue

•csignal(cn) blocks the calling process and puts it in the urgent queue (unless csignal is the last operation of the monitor procedure)

Deadlock

[Figure sequence over three slides: two parties, A and B, acquiring Lock A and Lock B. In the final frame one side requests Lock A then Lock B while the other requests Lock B then Lock A.]

Deadlock (Cont’d)

Deadlock can occur whenever multiple parties are competing for

exclusive access to multiple resources

How can we deal with deadlocks?

Deadlock prevention

Design a system without one of mutual exclusion, hold and wait, no

preemption or circular wait (four necessary conditions)

To prevent circular wait, impose a strict ordering on resources. For instance, if a thread needs to lock variables A and B, it always locks A first, then B

Deadlock avoidance

Deny requests that may lead to unsafe states (Banker’s algorithm)

Running the algorithm on all resource requests is expensive

Deadlock detection and recovery

Check for circular wait periodically. If circular wait is found, abort all

deadlocked processes (extreme solution but very common)

Checking for circular wait is expensive
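The lock-ordering rule mentioned under deadlock prevention can be sketched in C. This is an illustration under assumptions: account_t, lock_pair, unlock_pair, transfer, run_ordering_demo, and TRANSFERS are hypothetical names, the global order is defined by comparing the addresses of the two objects (one common way to pick an order, not the only one), and the two objects passed to lock_pair are assumed to be distinct.

```c
#include <pthread.h>
#include <stdint.h>

#define TRANSFERS 100000   /* hypothetical: transfers per thread */

typedef struct {
    pthread_mutex_t lock;
    long balance;
} account_t;

static account_t A = { PTHREAD_MUTEX_INITIALIZER, 1000 };
static account_t B = { PTHREAD_MUTEX_INITIALIZER, 1000 };

/* Always lock the lower-addressed object first, whatever the argument
   order, so no circular wait can form. Assumes x != y. */
static void lock_pair(account_t *x, account_t *y) {
    account_t *first  = ((uintptr_t)x < (uintptr_t)y) ? x : y;
    account_t *second = (first == x) ? y : x;
    pthread_mutex_lock(&first->lock);
    pthread_mutex_lock(&second->lock);
}

static void unlock_pair(account_t *x, account_t *y) {
    pthread_mutex_unlock(&x->lock);
    pthread_mutex_unlock(&y->lock);
}

static void transfer(account_t *from, account_t *to, long amount) {
    lock_pair(from, to);
    from->balance -= amount;
    to->balance   += amount;
    unlock_pair(from, to);
}

static void *a_to_b(void *arg) {
    (void)arg;
    for (int i = 0; i < TRANSFERS; i++) transfer(&A, &B, 1);
    return NULL;
}

static void *b_to_a(void *arg) {
    (void)arg;
    for (int i = 0; i < TRANSFERS; i++) transfer(&B, &A, 1);
    return NULL;
}

/* Returns the combined balance after both threads finish, or -1 on failure. */
long run_ordering_demo(void) {
    pthread_t t1, t2;
    if (pthread_create(&t1, NULL, a_to_b, NULL) != 0) return -1;
    if (pthread_create(&t2, NULL, b_to_a, NULL) != 0) return -1;
    pthread_join(t1, NULL);
    pthread_join(t2, NULL);
    return A.balance + B.balance;
}
```

If transfer instead locked from and then to, the two threads would request the locks in opposite orders and could each end up holding one lock while waiting for the other. With a single global order, both threads contend for the same first lock, so one of them always makes progress.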
