* Your assessment is very important for improving the workof artificial intelligence, which forms the content of this project

Download Mean, Mode, Median, and Standard Deviation

Survey

Document related concepts

Transcript

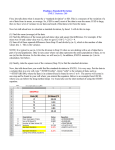

Mean, Mode, Median, and Standard Deviation

The Mean and Mode

The sample mean is the average and is computed as the sum of all the observed outcomes from the sample divided by the total number of events. We use x̄ as the symbol for the sample mean. In math terms,

$$\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i$$

where n is the sample size and the x_i correspond to the observed values.

Example

Suppose you randomly sampled six acres in the Desolation Wilderness for a non-indigenous weed and came up with the following counts of this weed in this region:

34, 43, 81, 106, 106, 115

We compute the sample mean by adding the counts and dividing by the number of samples, 6:

$$\bar{x} = \frac{34 + 43 + 81 + 106 + 106 + 115}{6} = \frac{485}{6} \approx 80.83$$

We can say that the sample mean count of the non-indigenous weed is 80.83.

The mode of a set of data is the number with the highest frequency. In the above example 106 is the mode, since it occurs twice and the rest of the outcomes occur only once.

The population mean is the average of the entire population and is usually impossible to compute. We use the Greek letter μ for the population mean.

Median and Trimmed Mean

One problem with using the mean is that it often does not depict the typical outcome. If there is one outcome that is very far from the rest of the data, then the mean will be strongly affected by this outcome. Such an outcome is called an outlier. An alternative measure is the median. The median is the middle score. If we have an even number of events, we take the average of the two middle values. The median is better for describing the typical value. It is often used for income and home prices.

Example

Suppose you randomly selected 10 house prices in the South Lake Tahoe area. You are interested in the typical house price. In units of $100,000 the prices were

2.7, 2.9, 3.1, 3.4, 3.7, 4.1, 4.3, 4.7, 4.7, 40.8

If we computed the mean, we would say that the average house price is $744,000. Although this number is true, it does not reflect the price for available housing in South Lake Tahoe.
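As a quick check on the arithmetic, Python's standard `statistics` module can reproduce the weed-count mean and mode and set the house-price mean beside the median. This is a minimal sketch; the variable names are illustrative, and house prices are kept in units of $100,000 as in the example.

```python
from statistics import mean, median, mode

# Weed counts from six randomly sampled acres
weeds = [34, 43, 81, 106, 106, 115]
# South Lake Tahoe house prices, in units of $100,000
prices = [2.7, 2.9, 3.1, 3.4, 3.7, 4.1, 4.3, 4.7, 4.7, 40.8]

weed_mean = mean(weeds)        # 485 / 6
weed_mode = mode(weeds)        # most frequent count
price_mean = mean(prices)      # pulled upward by the 40.8 outlier
price_median = median(prices)  # average of the two middle values

print(f"weed mean    = {weed_mean:.2f}")
print(f"weed mode    = {weed_mode}")
print(f"price mean   = ${price_mean * 100_000:,.0f}")
print(f"price median = ${price_median * 100_000:,.0f}")
```

The gap between the printed mean ($744,000) and median ($390,000) is entirely the effect of the single 40.8 value.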
A closer look at the data shows that the house valued at 40.8 × $100,000 = $4.08 million skews the data. Instead, we use the median. Since there is an even number of outcomes, we take the average of the middle two:

$$\text{median} = \frac{3.7 + 4.1}{2} = 3.9$$

The median house price is $390,000. This better reflects what house shoppers should expect to spend.

There is an alternative value that is also resistant to outliers. This is called the trimmed mean, which is the mean computed after discarding a fixed percentage of the data from each end, for example the top 5% and the bottom 5%. We can also use the trimmed mean if we are concerned with outliers skewing the data; however, the median is used more often since more people understand it.

Example

At a ski rental shop, data was collected on the number of rentals on each of ten consecutive Saturdays:

44, 50, 38, 96, 42, 47, 40, 39, 46, 50

To find the sample mean, add them and divide by 10:

$$\bar{x} = \frac{44 + 50 + 38 + 96 + 42 + 47 + 40 + 39 + 46 + 50}{10} = \frac{492}{10} = 49.2$$

Notice that the mean value is not a value of the sample. To find the median, first sort the data:

38, 39, 40, 42, 44, 46, 47, 50, 50, 96

Notice that there are two middle numbers, 44 and 46. To find the median we take the average of the two:

$$\text{median} = \frac{44 + 46}{2} = 45$$

Notice also that the mean is larger than all but three of the data points. The mean is influenced by outliers, while the median is robust.

Variance, Standard Deviation and Coefficient of Variation

The mean, mode, median, and trimmed mean do a nice job of telling where the center of the data set is, but often we are interested in more. For example, a pharmaceutical engineer develops a new drug that regulates iron in the blood. Suppose she finds out that the average iron content after taking the medication is the optimal level. This does not mean that the drug is effective. There is a possibility that half of the patients have dangerously low iron content while the other half have dangerously high content. Instead of the drug being an effective regulator, it is a deadly poison.
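Returning briefly to the ski rental example, its mean, median, and a trimmed mean can be reproduced in a few lines. The `trimmed_mean` helper below is hypothetical, written for illustration: it cuts int(proportion × n) values from each end of the sorted data, which is one common convention, but textbooks and libraries round the trim count differently. With only ten Saturdays a 5% trim removes nothing, so a 10% trim (one value from each end) is shown as well.

```python
from statistics import mean, median

def trimmed_mean(data, proportion):
    """Hypothetical helper: mean after cutting int(proportion * n) values
    from each end of the sorted data (one of several trimming conventions)."""
    values = sorted(data)
    k = int(proportion * len(values))  # values removed from EACH end
    if 2 * k >= len(values):
        raise ValueError("proportion leaves no data to average")
    return mean(values[k:len(values) - k])

# Rentals on ten consecutive Saturdays
rentals = [44, 50, 38, 96, 42, 47, 40, 39, 46, 50]

print(mean(rentals))                # 49.2
print(median(rentals))              # 45.0
print(trimmed_mean(rentals, 0.05))  # 49.2 (5% of 10 rounds down to 0, nothing cut)
print(trimmed_mean(rentals, 0.10))  # 44.75 (38 and 96 removed)
```

Dropping the busiest Saturday (96) and the slowest (38) pulls the mean from 49.2 down to 44.75, close to the median of 45.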
What the engineer needs is a measure of how far the data is spread apart. This is what the variance and standard deviation do. First we show the formulas for these measurements. Then we will go through the steps on how to use the formulas. We define the variance to be

$$s^2 = \frac{\sum (x - \bar{x})^2}{n - 1}$$

and the standard deviation to be

$$s = \sqrt{\frac{\sum (x - \bar{x})^2}{n - 1}}$$

Variance and Standard Deviation: Step by Step

1. Calculate the mean, x̄.
2. Write a table that subtracts the mean from each observed value.
3. Square each of the differences.
4. Add this column.
5. Divide by n − 1, where n is the number of items in the sample. This is the variance.
6. To get the standard deviation, take the square root of the variance.

Example

The owner of the Ches Tahoe restaurant is interested in how much people spend at the restaurant. He examines 10 randomly selected receipts for parties of four and writes down the following data:

44, 50, 38, 96, 42, 47, 40, 39, 46, 50

He calculated the mean by adding and dividing by 10 to get x̄ = 49.2. Below is the table for getting the standard deviation:

| x | x − 49.2 | (x − 49.2)² |
|---|---|---|
| 44 | −5.2 | 27.04 |
| 50 | 0.8 | 0.64 |
| 38 | −11.2 | 125.44 |
| 96 | 46.8 | 2190.24 |
| 42 | −7.2 | 51.84 |
| 47 | −2.2 | 4.84 |
| 40 | −9.2 | 84.64 |
| 39 | −10.2 | 104.04 |
| 46 | −3.2 | 10.24 |
| 50 | 0.8 | 0.64 |
| Total | | 2599.6 |

Now

$$\frac{2599.6}{10 - 1} \approx 288.8$$

Hence the variance is about 289 and the standard deviation is the square root of 289, which is 17. Since the standard deviation can be thought of as measuring how far the data values lie from the mean, we take the mean and move one standard deviation in either direction. The mean for this example was 49.2 and the standard deviation was about 17. We have:

49.2 − 17 = 32.2 and 49.2 + 17 = 66.2

What this means is that most of the patrons probably spend between $32.20 and $66.20.

The sample standard deviation will be denoted by s and the population standard deviation will be denoted by the Greek letter σ. The sample variance will be denoted by s² and the population variance will be denoted by σ². The variance and standard deviation describe how spread out the data is.
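The six steps above map directly onto a short script. This sketch follows the steps literally for the restaurant receipts rather than calling a library routine, so each column of the table is visible; Python's `statistics.variance` and `statistics.stdev` use the same n − 1 divisor and should give the same results.

```python
import math

# Ten receipts for parties of four, in dollars
receipts = [44, 50, 38, 96, 42, 47, 40, 39, 46, 50]

# Step 1: the sample mean
n = len(receipts)
x_bar = sum(receipts) / n

# Steps 2-4: subtract the mean, square each difference, add the column
deviations = [x - x_bar for x in receipts]
squares = [d * d for d in deviations]
sum_sq = sum(squares)

# Step 5: divide by n - 1 to get the sample variance
variance = sum_sq / (n - 1)

# Step 6: the standard deviation is the square root of the variance
sd = math.sqrt(variance)

for x, d, sq in zip(receipts, deviations, squares):
    print(f"{x:>4} {d:>7.1f} {sq:>9.2f}")
print(f"sum of squares = {sum_sq:.1f}")
print(f"variance = {variance:.2f}, sd = {sd:.2f}")
print(f"mean +/- 1 SD: {x_bar - sd:.1f} to {x_bar + sd:.1f}")
```

The sum of squares comes out to 2599.6, the variance to about 288.8 (289 when rounded), and the standard deviation to about 17.0, giving a one-SD range of roughly 32.2 to 66.2.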
If the data all lies close to the mean, then the standard deviation will be small, while if the data is spread out over a large range of values, s will be large. Having outliers will increase the standard deviation.

One of the flaws of the standard deviation is that it depends on the units that are used. One way of handling this difficulty is the coefficient of variation, which is the standard deviation divided by the mean, times 100%:

$$CV = \frac{s}{\bar{x}} \times 100\%$$

In the above example, it is

$$CV = \frac{17}{49.2} \times 100\% \approx 34.6\%$$

This tells us that the standard deviation of the restaurant bills is 34.6% of the mean.

Chebyshev's Theorem

A mathematician named Chebyshev came up with bounds on how much of the data must lie close to the mean. In particular, for any k greater than 1, the proportion of the data that lies within k standard deviations of the mean is at least

$$1 - \frac{1}{k^2}$$

For example, if k = 2 this number is

$$1 - \frac{1}{2^2} = 0.75$$

This tells us that at least 75% of the data lies within 2 standard deviations of the mean. In the above example, we can say that at least 75% of the diners spent between 49.2 − 2(17) = 15.2 and 49.2 + 2(17) = 83.2.

Z-4: Mean, Standard Deviation, And Coefficient Of Variation

Written by Madelon F. Zady, EdD, Assistant Professor, Clinical Laboratory Science Program, University of Louisville, Louisville, Kentucky, June 1999

Don't be caught in your skivvies when you talk about CV's, or confuse STD's with SD's. Do you know what they mean when they talk about mean? These are the bread and butter statistical calculations. Make sure you're doing them right.

Contents: Mean or average; Standard deviation; Degrees of freedom; Variance; Normal distribution; Coefficient of variation; Alternate formulae; References; Self-assessment exercises; About the Author

Many of the terms covered in this lesson are also found in the lessons on Basic QC Practices, which appear on this website. It is highly recommended that you study these lessons online or in hard copy [1].
The importance of this current lesson, however, resides in the process. The lesson sets up a pattern to be followed in future lessons.

Mean or average

The simplest statistic is the mean or average. Years ago, when laboratories were beginning to assay controls, it was easy to calculate a mean and use that value as the "target" to be achieved. For example, given the following ten analyses of a control material (90, 91, 89, 84, 88, 93, 80, 90, 85, 87), the mean or Xbar is 877/10 or 87.7. [The term Xbar refers to a symbol having a line or bar over the X (X̄); however, we will use the term instead of the symbol in the text of these lessons because it is easier to present.]

The mean value characterizes the "central tendency" or "location" of the data. Although values close to the mean are the most likely to be observed, many of the actual values are different from the mean. When assaying control materials, it is obvious that technologists will not achieve the mean value each and every time a control is analyzed. The values observed will show a dispersion or distribution about the mean, and this distribution needs to be characterized to set a range of acceptable control values.

Standard deviation

The dispersion of values about the mean is predictable and can be characterized mathematically through a series of manipulations, as illustrated below, where the individual x-values are shown in column A.
| Column A: X value | Column B: X value − Xbar | Column C: (X − Xbar)² |
|---|---|---|
| 90 | 90 − 87.7 = 2.30 | (2.30)² = 5.29 |
| 91 | 91 − 87.7 = 3.30 | (3.30)² = 10.89 |
| 89 | 89 − 87.7 = 1.30 | (1.30)² = 1.69 |
| 84 | 84 − 87.7 = −3.70 | (−3.70)² = 13.69 |
| 88 | 88 − 87.7 = 0.30 | (0.30)² = 0.09 |
| 93 | 93 − 87.7 = 5.30 | (5.30)² = 28.09 |
| 80 | 80 − 87.7 = −7.70 | (−7.70)² = 59.29 |
| 90 | 90 − 87.7 = 2.30 | (2.30)² = 5.29 |
| 85 | 85 − 87.7 = −2.70 | (−2.70)² = 7.29 |
| 87 | 87 − 87.7 = −0.70 | (−0.70)² = 0.49 |
| ΣX = 877 | Σ(X − Xbar) = 0 | Σ(X − Xbar)² = 132.10 |

The first mathematical manipulation is to sum (Σ) the individual points and calculate the mean or average, which is 877 divided by 10, or 87.7 in this example. The second manipulation is to subtract the mean value from each control value, as shown in column B. This term, shown as X value − Xbar, is called the difference score. As can be seen here, individual difference scores can be positive or negative, and the sum of the difference scores is always zero. The third manipulation is to square the difference scores to make all the terms positive, as shown in column C. Next the squared difference scores are summed. Finally, the predictable dispersion or standard deviation (SD or s) can be calculated as follows:

$$s = \left[\frac{\sum (X - \bar{X})^2}{n - 1}\right]^{1/2} = \left[\frac{132.10}{10 - 1}\right]^{1/2} = 3.83$$

Degrees of freedom

The "n − 1" term in the above expression represents the degrees of freedom (df). Loosely interpreted, the term "degrees of freedom" indicates how much freedom or independence there is within a group of numbers. For example, if you were to sum four numbers to get a total, you have the freedom to select any numbers you like. However, if the sum of the four numbers is stipulated to be 92, the choice of the first 3 numbers is fairly free (as long as they are low numbers), but the last choice is restricted by the condition that the sum must equal 92. For example, if the first three numbers chosen at random are 28, 18, and 36, these numbers add up to 82, which is 10 short of the goal. For the last number there is no freedom of choice.
The number 10 must be selected to make the sum come out to 92. Therefore, the degrees of freedom have been limited by 1 and only n − 1 degrees of freedom remain. In the SD formula, the degrees of freedom are n minus 1 because the mean of the data has already been calculated (which imposes one condition or restriction on the data set).

Variance

Another statistical term that is related to the distribution is the variance, which is the standard deviation squared (variance = SD²). Although a square root can in principle be positive or negative, the SD is by convention the positive root, so it is never negative; squaring it gives the variance, in which the question of sign does not arise. One common application of the variance is its use in the F-test to compare the variances of two methods and determine whether there is a statistically significant difference in the imprecision between the methods. In many applications, however, the SD is preferred because it is expressed in the same concentration units as the data. Using the SD, it is possible to predict the range of control values that should be observed if the method remains stable. As discussed in an earlier lesson, laboratorians often use the SD to impose "gates" on the expected normal distribution of control values.

Normal or Gaussian distribution

Traditionally, after the discussion of the mean, standard deviation, degrees of freedom, and variance, the next step was to describe the normal distribution (a frequency polygon) in terms of the standard deviation "gates." The figure here is a representation of the frequency distribution of a large set of laboratory values obtained by measuring a single control material. This distribution shows the shape of a normal curve. Note that a "gate" consisting of ±1SD accounts for about 68% of the distribution or 68% of the area under the curve, ±2SD accounts for about 95%, and ±3SD accounts for more than 99%.
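The percentages for the SD "gates" can be checked against the standard normal distribution with `statistics.NormalDist` (Python 3.8 or later). The sketch also sets them beside Chebyshev's bound, 1 − 1/k², from the first tutorial in this document, which holds for any distribution, and counts how many of that tutorial's ten restaurant receipts actually fall within 2 SD. It is an illustration of the percentages only, not a QC procedure.

```python
from statistics import NormalDist, mean, stdev

std_normal = NormalDist(mu=0.0, sigma=1.0)

def coverage_normal(k):
    """Fraction of a normal distribution lying within +/- k SD of the mean."""
    return std_normal.cdf(k) - std_normal.cdf(-k)

def chebyshev_bound(k):
    """Chebyshev's lower bound 1 - 1/k**2; only informative for k > 1."""
    return max(0.0, 1 - 1 / k**2)

for k in (1, 2, 3):
    print(f"+/-{k} SD: normal {coverage_normal(k):.1%}, "
          f"Chebyshev at least {chebyshev_bound(k):.1%}")

# One-sided tail beyond +2 SD, and the multiplier that gives exactly 95%
print(f"upper tail beyond +2 SD: {1 - std_normal.cdf(2):.2%}")
print(f"k for exactly 95% coverage: {std_normal.inv_cdf(0.975):.2f}")

# Restaurant receipts from the first tutorial: fraction within 2 SD
receipts = [44, 50, 38, 96, 42, 47, 40, 39, 46, 50]
m, s = mean(receipts), stdev(receipts)
inside = sum(abs(x - m) <= 2 * s for x in receipts) / len(receipts)
print(f"receipts within 2 SD: {inside:.0%}")
```

The exact normal figures are about 68.3%, 95.4%, and 99.7%; the familiar "95% with 2.5% in each tail" corresponds to ±1.96 SD. Chebyshev's guarantee is much weaker (75% at ±2 SD) because it assumes nothing about the shape of the data, and 9 of the 10 receipts fall inside that range.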
At ±2SD (strictly, ±1.96SD), 95% of the distribution is inside the "gates," 2.5% of the distribution is in the lower or left tail, and the same amount (2.5%) is present in the upper tail. Some authors call this polygon an error curve to illustrate that small errors from the mean occur more frequently than large ones. Other authors refer to this curve as a probability distribution.

Coefficient of variation

Another way to describe the variation of a test is to calculate the coefficient of variation, or CV. The CV expresses the variation as a percentage of the mean and is calculated as follows:

$$CV\% = \frac{SD}{\bar{X}} \times 100$$

In the laboratory, the CV is preferred when the SD increases in proportion to concentration. For example, the data from a replication experiment may show an SD of 4 units at a concentration of 100 units and an SD of 8 units at a concentration of 200 units. The CVs are 4.0% at both levels, and the CV is more useful than the SD for describing method performance at concentrations in between. However, not all tests will demonstrate imprecision that is constant in terms of CV. For some tests, the SD may be constant over the analytical range.

The CV also provides a general "feeling" about the performance of a method. CVs of 5% or less generally give us a feeling of good method performance, whereas CVs of 10% and higher sound bad. However, you should look carefully at the mean value before judging a CV. At very low concentrations, the CV may be high, and at high concentrations the CV may be low. For example, a bilirubin test with an SD of 0.1 mg/dL at a mean value of 0.5 mg/dL has a CV of 20%, whereas an SD of 1.0 mg/dL at a concentration of 20 mg/dL corresponds to a CV of 5.0%.

Alternate formulae

The lessons on Basic QC Practices cover these same terms (see QC - The data calculations), but use a different form of the equation for calculating cumulative or lot-to-date means and SDs.
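To see what such a different form involves, the sketch below computes this lesson's control data (mean, SD, and CV) the way the lesson does, then recomputes the mean and SD from running totals (n, Σx, Σx²), which is the kind of bookkeeping a lot-to-date calculation relies on. Splitting the ten values into two "periods" of five is an assumption made purely for illustration, the `period_totals` helper is hypothetical, and the running-total SD uses the standard raw-score form of the formula.

```python
import math

controls = [90, 91, 89, 84, 88, 93, 80, 90, 85, 87]

# This lesson's approach: mean first, then squared difference scores
n = len(controls)
x_bar = sum(controls) / n
ss = sum((x - x_bar) ** 2 for x in controls)
sd = math.sqrt(ss / (n - 1))
cv = 100 * sd / x_bar

# Lot-to-date approach: keep only running totals for each period
def period_totals(values):
    """Hypothetical helper returning (n, sum of x, sum of x squared)."""
    return len(values), sum(values), sum(x * x for x in values)

periods = [controls[:5], controls[5:]]  # illustrative split into two periods
totals = [period_totals(p) for p in periods]
n_t = sum(t[0] for t in totals)
sum_x_t = sum(t[1] for t in totals)
sum_x2_t = sum(t[2] for t in totals)

cum_mean = sum_x_t / n_t
# Raw-score form: the mean is never computed separately
cum_sd = math.sqrt((n_t * sum_x2_t - sum_x_t ** 2) / (n_t * (n_t - 1)))

print(f"mean = {x_bar:.1f}, SD = {sd:.2f}, CV = {cv:.1f}%")
print(f"cumulative mean = {cum_mean:.1f}, cumulative SD = {cum_sd:.2f}")
```

Both routes give a mean of 87.7 and an SD of about 3.83 (CV about 4.4%), because the running totals carry everything the deviation form needs. Totals for a new period can simply be added on, which is what makes this form convenient for lot-to-date statistics; with very large values, subtracting two large totals can lose floating-point precision, so treat this as a sketch rather than production code.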
Guidelines in the literature recommend that cumulative means and SDs be used in calculating control limits [2-4]; therefore, it is important to be able to perform these calculations. The cumulative mean can be expressed as

$$\bar{X} = \frac{\left(\sum x_i\right)_t}{n_t}$$

which appears similar to the prior mean term except for the "t" subscripts, which refer to data from different time periods. The idea is to add the x_i and n terms from groups of data in order to calculate the mean of the combined groups. The cumulative or lot-to-date standard deviation can be expressed as follows:

$$s_t = \sqrt{\frac{n_t \left(\sum x_i^2\right)_t - \left(\sum x_i\right)_t^2}{n_t\,(n_t - 1)}}$$

This equation looks quite different from the prior equation in this lesson, but in reality, it is equivalent. The cumulative standard deviation formula is derived from an SD formula called the Raw Score Formula. Instead of first calculating the mean or Xbar, the Raw Score Formula calculates Xbar inside the square root sign. Oftentimes in reading about statistics, an unfamiliar formula may be presented. You should realize that the mathematics in statistics is often redundant. Each procedure builds upon the previous procedure. Formulae that seem to be different are derived from mathematical manipulations of standard expressions with which you are often already acquainted.

References

1. Westgard JO, Barry PL, Quam EF. Basic QC practices: Training in statistical quality control for healthcare laboratories. Madison, WI: Westgard Quality Corporation, 1998.
2. Westgard JO, Barry PL, Hunt MR, Groth T. A multirule Shewhart chart for quality control in clinical chemistry. Clin Chem 1981;27:493-501.
3. Westgard JO, Klee GG. Quality management. Chapter 17 in: Burtis and Ashwood, eds. Tietz Textbook of Clinical Chemistry, 3rd ed. Philadelphia, PA: Saunders, 1999.
4. NCCLS document C24-A2. Statistical quality control for quantitative measurements: Principles and definitions. Wayne, PA: National Committee for Clinical Laboratory Standards, 1999.

Self-assessment exercises

1. Manually calculate the mean, SD, and CV for the following data: 44, 47, 48, 43, 48.
2. Use the SD Calculator to calculate the mean, SD, and CV for the following data: 203, 202, 204, 201, 197, 200, 198, 196, 206, 198, 196, 192, 205, 190, 207, 198, 201, 195, 209, 186.
3. If the data above were for a cholesterol control material, calculate the control limits that would contain 95% of the expected values.
4. If control limits (or SD "gates") were set as the mean ± 2.5 SD, what percentage of the control values are expected to exceed these limits? [Hint: you need to find a table of areas under a normal curve.]
5. Describe how to calculate cumulative control limits.
6. (Optional) Show the equivalence of the regular SD formula and the Raw Score formula. [Hint: start with the regular formula, substitute a summation term for Xbar, multiply both sides by n/n, then rearrange.]

About the author: Madelon F. Zady

Madelon F. Zady is an Assistant Professor at the University of Louisville, School of Allied Health Sciences, Clinical Laboratory Science program, and has over 30 years of experience in teaching. She holds BS, MAT and EdD degrees from the University of Louisville, has taken other advanced course work from the School of Medicine and School of Education, and has also taken advanced courses in statistics. She is a registered MT(ASCP) and a credentialed CLS(NCA) and has worked part-time as a bench technologist for 14 years. She is a member of the American Society for Clinical Laboratory Science, the Kentucky State Society for Clinical Laboratory Science, the American Educational Research Association, and the National Science Teachers Association. Her teaching areas are clinical chemistry and statistics. Her research areas are metacognition and learning theory.