* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Database Buffer Cache

Entity–attribute–value model wikipedia , lookup

Serializability wikipedia , lookup

Microsoft SQL Server wikipedia , lookup

Open Database Connectivity wikipedia , lookup

Ingres (database) wikipedia , lookup

Extensible Storage Engine wikipedia , lookup

Functional Database Model wikipedia , lookup

Microsoft Jet Database Engine wikipedia , lookup

Concurrency control wikipedia , lookup

Relational model wikipedia , lookup

Versant Object Database wikipedia , lookup

Oracle Database wikipedia , lookup

Database model wikipedia , lookup

Oracle Architecture

------------------------------------------------------------------------------------------------------------------------------------------

Oracle server: An Oracle server includes an Oracle Instance and an Oracle

database.

Oracle database: An Oracle database consists of files.

Sometimes these are referred to as operating system files, but they are

actually database files that store the database information that a firm or

organization needs in order to operate.

The redo log files are used to recover the database in the event of

application program failures, instance failures and other minor failures.

The archived redo log files are used to recover the database if a disk fails.

Other files not shown in the figure include:

o The required parameter file that is used to specify parameters for configuring

an Oracle instance when it starts up.

o The optional password file authenticates special users of the database – these

are termed privileged users and include database administrators.

o Alert and Trace Log Files – these files store information about errors and

actions taken that affect the configuration of the database

Oracle instance: An Oracle Instance consists of two different sets of components:

The first component set is the set of background processes (PMON, SMON,

RECO, DBW0, LGWR, CKPT, D000 and others).

o These will be covered later in detail – each background process is a computer

program.

o These processes perform input/output and monitor other Oracle processes to

provide good performance and database reliability.

The second component set includes the memory structures that comprise

the Oracle instance.

o When an instance starts up, a memory structure called the System Global Area

(SGA) is allocated.

o At this point the background processes also start.

An Oracle Instance provides access to one and only one Oracle database.

Storage Structure

An Oracle database is made up of physical and logical structures. Physical

structures can be seen and operated on from the operating system, such as the

physical files that store data on a disk.

Logical structures are created and recognized by Oracle Database and are not

known to the operating system. The primary logical structure in a database, a

tablespace, contains physical files. The applications developer or user may be

aware of the logical structure, but is not usually aware of this physical structure.

The database administrator (DBA) must understand the relationship between the

physical and logical structures of a database.

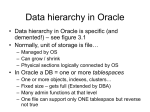

Logical Storage Structures

This section discusses logical storage structures. The following logical storage

structures enable Oracle Database to have fine-grained control of disk space use:

Tablespace

A database is divided into logical storage units called tablespaces. A tablespace is

the logical container for a segment. Each tablespace contains at least one data

file.

Segment

A segment is a set of extents allocated for a user object (for example, a table or

index), undo data, or temporary data.

Extent

An extent is a specific number of logically contiguous data blocks, obtained in a

single allocation, used to store a specific type of information.

Data blocks

At the finest level of granularity, Oracle Database data is stored in data blocks.

One data block corresponds to a specific number of bytes on disk.

Table spaces___________________________

A database consists of one or more tablespaces. A tablespace is a logical grouping

of one or more physical datafiles or tempfiles, and is the primary structure by

which the database manages storage.

There are various types of tablespaces, including the following:

Permanent tablespaces

These tablespaces are used to store system and user data. Permanent tablespaces

consist of one or more datafiles. In Oracle Database XE, all your application data is

by default stored in the tablespace named USERS. This tablespace consists of a

single datafile that automatically grows (autoextends) as your applications store

more data.

Online and Offline Tablespaces

A tablespace can be online (accessible) or offline (not accessible). A tablespace is

generally online, so that users can access the information in the tablespace.

However, sometimes a tablespace is taken offline to make a portion of the

database unavailable while allowing normal access to the remainder of the

database. This makes many administrative tasks easier to perform.

Temporary tablespaces

Temporary tablespaces improve the concurrency of multiple sort operations, and

reduce their overhead. Temporary tablespaces are the most efficient tablespaces

for disk sorts. Temporary tablespaces consist of one or more tempfiles. Oracle

Database XE automatically manages storage for temporary tablespaces.

Undo tablespace

Oracle Database XE transparently creates and automatically manages undo data

in this tablespace.

When a transaction modifies the database, Oracle Database XE makes a copy of

the original data before modifying it. The original copy of the modified data is

called undo data. This information is necessary for the following reasons:

To undo any uncommitted changes made to the database in the event that a

rollback operation is necessary. A rollback operation can be the result of a user

specifically issuing a ROLLBACK statement to undo the changes of a misguided or

unintentional transaction, or it can be part of a recovery operation.

To provide read consistency, which means that each user can get a consistent

view of data, even while other uncommitted changes may be occurring against

the data. For example, if a user issues a query at 10:00 a.m. and the query runs

for 15 minutes, then the query results should reflect the entire state of the data

at 10:00 a.m., regardless of updates or inserts by other users during the query.

The SYSTEM Tablespace

The SYSTEM tablespace is a necessary administrative tablespace included with

the database when it is created. Oracle Database uses SYSTEM to manage the

database.

The SYSTEM tablespace includes the following information, all owned by the SYS

user:

The data dictionary

Tables and views that contain administrative information about the database

Compiled stored objects such as triggers, procedures, and packages

The SYSTEM tablespace is managed as any other tablespace, but requires a

higher level of privilege and is restricted in some ways. For example, you cannot

rename or drop the SYSTEM tablespace.

By default, Oracle Database sets all newly created user tablespaces to be locally

managed. In a database with a locally managed SYSTEM tablespace, you cannot

create dictionary-managed tablespaces (which are deprecated). However, if you

execute the CREATE DATABASE statement manually and accept the defaults,

then the SYSTEM tablespace is dictionary managed. You can migrate an existing

dictionary-managed SYSTEM tablespace to a locally managed format.

The SYSAUX Tablespace

The SYSAUX tablespace is an auxiliary tablespace to the SYSTEM tablespace. The

SYSAUX tablespace provides a centralized location for database metadata that

does not reside in the SYSTEM tablespace. It reduces the number of tablespaces

created by default, both in the seed database and in user-defined databases.

Several database components, including Oracle Enterprise Manager and Oracle

Streams, use the SYSAUX tablespace as their default storage location. Therefore,

the SYSAUX tablespace is created automatically during database creation or

upgrade.

During normal database operation, the database does not allow the SYSAUX

tablespace to be dropped or renamed. If the SYSAUX tablespace becomes

unavailable, then core database functionality remains operational. The database

features that use the SYSAUX tablespace could fail, or function with limited

capability.

Undo Tablespaces

An undo tablespace is a locally managed tablespace reserved for system-managed

undo data (see "Undo Segments"). Like other permanent tablespaces, undo

tablespaces contain data files. Undo blocks in these files are grouped in extents.

Automatic Undo Management Mode

Undo tablespaces require the database to be in the default automatic undo

management mode. This mode eliminates the complexities of manually

administering undo segments. The database automatically tunes itself to provide

the best possible retention of undo data to satisfy long-running queries that may

require this data.

An undo tablespace is automatically created with a new installation of Oracle

Database. Earlier versions of Oracle Database may not include an undo tablespace

and use legacy rollback segments instead, known as manual undo management

mode. When upgrading to Oracle Database 11g, you can enable automatic undo

management mode and create an undo tablespace. Oracle Database contains an

Undo Advisor that provides advice on and helps automate your undo

environment.

A database can contain multiple undo tablespaces, but only one can be in use at a

time. When an instance attempts to open a database, Oracle Database

automatically selects the first available undo tablespace. If no undo tablespace is

available, then the instance starts without an undo tablespace and stores undo

data in the SYSTEM tablespace. Storing undo data in SYSTEM is not

recommended.

Automatic Undo Retention

The undo retention period is the minimum amount of time that Oracle Database

attempts to retain old undo data before overwriting it. Undo retention is

important because long-running queries may require older block images to supply

read consistency. Also, some Oracle Flashback features can depend on undo

availability.

In general, it is desirable to retain old undo data as long as possible. After a

transaction commits, undo data is no longer needed for rollback or transaction

recovery. The database can retain old undo data if the undo tablespace has space

for new transactions. When available space is low, the database begins to

overwrite old undo data for committed transactions.

Oracle Database automatically provides the best possible undo retention for the

current undo tablespace. The database collects usage statistics and tunes the

retention period based on these statistics and the undo tablespace size. If the

undo tablespace is configured with the AUTOEXTEND option, and if the

maximum size is not specified, then undo retention tuning is different. In this

case, the database tunes the undo retention period to be slightly longer than the

longest-running query, if space allows.

Read Consistency

Read consistency, as supported by Oracle, does the following:

Guarantees that the set of data seen by a statement is consistent with respect to

a single point in time and does not change during statement execution

(statement-level read consistency)

Ensures that readers of database data do not wait for writers or other readers of

the same data

Ensures that writers of database data do not wait for readers of the same data

Ensures that writers only wait for other writers if they attempt to update identical

rows in concurrent transactions

The simplest way to think of Oracle's implementation of read consistency is to

imagine each user operating a private copy of the database, hence the

multiversion consistency model.

Read Consistency, Undo Records, and Transactions

To manage the multiversion consistency model, Oracle must create a readconsistent set of data when a table is queried (read) and simultaneously updated

(written). When an update occurs, the original data values changed by the update

are recorded in the database undo records. As long as this update remains part of

an uncommitted transaction, any user that later queries the modified data views

the original data values. Oracle uses current information in the system global area

and information in the undo records to construct a read-consistent view of a

table's data for a query.

Only when a transaction is committed are the changes of the transaction made

permanent. Statements that start after the user's transaction is committed only

see the changes made by the committed transaction.

The transaction is key to Oracle's strategy for providing read consistency. This unit

of committed (or uncommitted) SQL statements:

Dictates the start point for read-consistent views generated on behalf of readers

Controls when modified data can be seen by other transactions of the database

for reading or updating

Temporary Tablespaces

A temporary tablespace contains transient data that persists only for the duration

of a session. No permanent schema objects can reside in a temporary tablespace.

The database stores temporary tablespace data in temp files.

Temporary tablespaces can improve the concurrency of multiple sort operations

that do not fit in memory. These tablespaces also improve the efficiency of space

management operations during sorts.

When the SYSTEM tablespace is locally managed, a default temporary tablespace

is included in the database by default during database creation. A locally managed

SYSTEM tablespace cannot serve as default temporary storage.

You can specify a user-named default temporary tablespace when you create a

database by using the DEFAULT TEMPORARY TABLESPACE extension to the

CREATE DATABASE statement. If SYSTEM is dictionary managed, and if a

default temporary tablespace is not defined at database creation, then SYSTEM

is the default temporary storage. However, the database writes a warning in the

alert log saying that a default temporary tablespace is recommended.

Managing Space in Tablespaces

Tablespaces allocate space in extents. Tablespaces can use two different methods

to keep track of their free and used space:

Locally managed tablespaces: Extent management by the tablespace

Dictionary managed tablespaces: Extent management by the data dictionary

When you create a tablespace, you choose one of these methods of space

management. Later, you can change the management method with the

DBMS_SPACE_ADMIN PL/SQL package.

Locally Managed Tablespaces

A tablespace that manages its own extents maintains a bitmap in each datafile to

keep track of the free or used status of blocks in that datafile. Each bit in the

bitmap corresponds to a block or a group of blocks. When an extent is allocated

or freed for reuse, Oracle changes the bitmap values to show the new status of

the blocks. These changes do not generate rollback information because they do

not update tables in the data dictionary (except for special cases such as

tablespace quota information).

Locally managed tablespaces have the following advantages over dictionary

managed tablespaces:

Local management of extents automatically tracks adjacent free space,

eliminating the need to coalesce free extents.

Local management of extents avoids recursive space management operations.

Such recursive operations can occur in dictionary managed tablespaces if

consuming or releasing space in an extent results in another operation that

consumes or releases space in a data dictionary table or rollback segment.

The sizes of extents that are managed locally can be determined automatically by

the system. Alternatively, all extents can have the same size in a locally managed

tablespace and override object storage options.

The LOCAL clause of the CREATE TABLESPACE or CREATE TEMPORARY

TABLESPACE statement is specified to create locally managed permanent or

temporary tablespaces, respectively.

Segment Space Management in Locally Managed Tablespaces

When you create a locally managed tablespace using the CREATE TABLESPACE

statement, the SEGMENT SPACE MANAGEMENT clause lets you specify how free

and used space within a segment is to be managed. Your choices are:

AUTO

This keyword tells Oracle that you want to use bitmaps to manage the free space

within segments. A bitmap, in this case, is a map that describes the status of each

data block within a segment with respect to the amount of space in the block

available for inserting rows. As more or less space becomes available in a data

block, its new state is reflected in the bitmap. Bitmaps enable Oracle to manage

free space more automatically; thus, this form of space management is called

automatic segment-space management.

Locally managed tablespaces using automatic segment-space management can be

created as smallfile (traditional) or bigfile tablespaces. AUTO is the default.

MANUAL

This keyword tells Oracle that you want to use free lists for managing free space

within segments. Free lists are lists of data blocks that have space available for

inserting rows.

Dictionary Managed Tablespaces

If you created your database with an earlier version of Oracle, then you could be

using dictionary managed tablespaces. For a tablespace that uses the data

dictionary to manage its extents, Oracle updates the appropriate tables in the

data dictionary whenever an extent is allocated or freed for reuse. Oracle also

stores rollback information about each update of the dictionary tables. Because

dictionary tables and rollback segments are part of the database, the space that

they occupy is subject to the same space management operations as all other

data.

Multiple Block Sizes

Oracle supports multiple block sizes in a database. The standard block size is used

for the SYSTEM tablespace. This is set when the database is created and can be

any valid size. You specify the standard block size by setting the initialization

parameter DB_BLOCK_SIZE. Legitimate values are from 2K to 32K.

In the initialization parameter file or server parameter, you can configure

subcaches within the buffer cache for each of these block sizes. Subcaches can

also be configured while an instance is running. You can create tablespaces having

any of these block sizes. The standard block size is used for the system tablespace

and most other tablespaces.

Tablespace Modes

The tablespace mode determines the accessibility of the tablespace.

Read/Write and Read-Only Tablespaces

Every tablespace is in a write mode that specifies whether it can be written to.

The mutually exclusive modes are as follows:

Read/write mode

Users can read and write to the tablespace. All tablespaces are initially created as

read/write. The SYSTEM and SYSAUX tablespaces and temporary tablespaces

are permanently read/write, which means that they cannot be made read-only.

Read-only mode

Write operations to the data files in the tablespace are prevented. A read-only

tablespace can reside on read-only media such as DVDs or WORM drives.

Read-only tablespaces eliminate the need to perform backup and recovery of

large, static portions of a database. Read-only tablespaces do not change and thus

do not require repeated backup. If you recover a database after a media failure,

then you do not need to recover read-only tablespaces.

Online and Offline Tablespaces

A tablespace can be online (accessible) or offline (not accessible) whenever the

database is open. A tablespace is usually online so that its data is available to

users. The SYSTEM tablespace and temporary tablespaces cannot be taken

offline.

A tablespace can go offline automatically or manually. For example, you can take

a tablespace offline for maintenance or backup and recovery. The database

automatically takes a tablespace offline when certain errors are encountered, as

when the database writer (DBW) process fails in several attempts to write to a

data file. Users trying to access tables in an offline tablespace receive an error.

When a tablespace goes offline, the database does the following:

The database does not permit subsequent DML statements to reference objects

in the offline tablespace. An offline tablespace cannot be read or edited by any

utility other than Oracle Database.

Active transactions with completed statements that refer to data in that

tablespace are not affected at the transaction level.

The database saves undo data corresponding to those completed statements in a

deferred undo segment in the SYSTEM tablespace. When the tablespace is

brought online, the database applies the undo data to the tablespace, if needed.

Tablespace File Size

A tablespace is either a bigfile tablespace or a smallfile tablespace. These

tablespaces are indistinguishable in terms of execution of SQL statements that do

not explicitly refer to data files or temp files. The difference is as follows:

A smallfile tablespace can contain multiple data files or temp files, but the files

cannot be as large as in a bigfile tablespace. This is the default tablespace type.

A bigfile tablespace contains one very large data file or temp file. This type of

tablespaces can do the following:

Increase the storage capacity of a database

The maximum number of data files in a database is limited (usually to 64 KB files),

so increasing the size of each data file increases the overall storage.

Reduce the burden of managing many data files and temp files

Bigfile tablespaces simplify file management with Oracle Managed Files and

Automatic Storage Management (Oracle ASM) by eliminating the need for adding

new files and dealing with multiple files.

Perform operations on tablespaces rather than individual files

Bigfile tablespaces make the tablespace the main unit of the disk space

administration, backup and recovery, and so on.

Bigfile tablespaces are supported only for locally managed tablespaces with

ASSM. However, locally managed undo and temporary tablespaces can be bigfile

tablespaces even when segments are manually managed.

SEGMENT

A segment is a set of extents that contains all the data for a specific logical storage

structure within a tablespace. For example, for each table, Oracle allocates one or

more extents to form that table's data segment, and for each index, Oracle

allocates one or more extents to form its index segment.

Oracle Database manages segment space automatically or manually. This section

assumes the use of ASSM.

Types of segment

User Segments

A single data segment in a database stores the data for one user object. There are

different types of segments. Examples of user segments include:Table, table

partition, or table cluster

Multiple segment

Temporary Segments:

When processing a query, Oracle Database often requires temporary workspace

for intermediate stages of SQL statement execution. Typical operations that may

require a temporary segment include sorting, hashing, and merging bitmaps.

While creating an index, Oracle Database also places index segments into

temporary segments and then converts them into permanent segments when the

index is complete.Oracle Database does not create a temporary segment if an

operation can be performed in memory. However, if memory use is not possible,

then the database automatically allocates a temporary segment on disk

3. Undo Segments

Oracle Database maintains records of the actions of transactions, collectively

known as undo data. Oracle Database uses undo to do the following:

Roll back an active transaction

Recover a terminated transaction

Provide read consistency

Perform some logical flashback operations

Oracle Database stores undo data inside the database rather than in external logs.

Undo data is stored in blocks that are updated just like data blocks, with changes

to these blocks generating redo. In this way, Oracle Database can efficiently

access undo data without needing to read external logs.

Undo data is stored in an undo tablespace. Oracle Database provides a fully

automated mechanism, known as automatic undo management mode, for

managing undo segments and space in an undo tablespace.

Undo Segments and Transactions

When a transaction starts, the database binds (assigns) the transaction to an

undo segment, and therefore to a transaction table, in the current undo

tablespace. In rare circumstances, if the database instance does not have a

designated undo tablespace, then the transaction binds to the system undo

segment.

Extents

An extent is a logical unit of database storage space allocation made up of a

number of contiguous data blocks. One or more extents in turn make up a

segment. When the existing space in a segment is completely used, Oracle

allocates a new extent for the segment.

When Extents Are Allocated

When you create a table, Oracle allocates to the table's data segment an initial

extent of a specified number of data blocks. Although no rows have been inserted

yet, the Oracle data blocks that correspond to the initial extent are reserved for

that table's rows.

If the data blocks of a segment's initial extent become full and more space is

required to hold new data, Oracle automatically allocates an incremental extent

for that segment. An incremental extent is a subsequent extent of the same or

greater size than the previously allocated extent in that segment.

For maintenance purposes, the header block of each segment contains a directory

of the extents in that segment.

Db Block: The Oracle Server manages data at the smallest unit in what is termed

a block or data block. Data are actually stored in blocks.

Block header

This part contains general information about the block, including disk address and

segment type. For blocks that are transaction-managed, the block header

contains active and historical transaction information.

A transaction entry is required for every transaction that updates the block.

Oracle Database initially reserves space in the block header for transaction

entries. In data blocks allocated to segments that support transactional changes,

free space can also hold transaction entries when the header space is depleted.

The space required for transaction entries is operating system dependent.

However, transaction entries in most operating systems require approximately 23

bytes.

Table directory

For a heap-organized table, this directory contains metadata about tables whose

rows are stored in this block. Multiple tables can store rows in the same block.

Row directory

For a heap-organized table, this directory describes the location of rows in the

data portion of the block.

After space has been allocated in the row directory, the database does not

reclaim this space after row deletion. Thus, a block that is currently empty but

formerly had up to 50 rows continues to have 100 bytes allocated for the row

directory. The database reuses this space only when new rows are inserted in the

block.

Row Data

This portion of the data block contains table or index data. Rows can span blocks.

Free Space

Free space is allocated for insertion of new rows and for updates to rows that

require additional space (for example, when a trailing null is updated to a nonnull

value).

In data blocks allocated for the data segment of a table or cluster, or for the index

segment of an index, free space can also hold transaction entries. A transaction

entry is required in a block for each INSERT, UPDATE, DELETE, and

SELECT...FOR UPDATE statement accessing one or more rows in the block. The

space required for transaction entries is operating system dependent; however,

transaction entries in most operating systems require approximately 23 bytes.

Datafile: Tablespaces are stored in datafiles which are physical disk objects.

A datafile can only store objects for a single tablespace, but a tablespace

may have more than one datafile – this happens when a disk drive device fills up

and a tablespace needs to be expanded, then it is expanded to a new disk drive.

The DBA can change the size of a datafile to make it smaller or later. The file

can also grow in size dynamically as the tablespace grows.

Thus, the Oracle database architecture includes both logical and physical

structures as follows:

Physical: Control files; Redo Log Files; Datafiles; Operating System Blocks.

Logical: Tablespaces; Segments; Extents; Data Blocks.

Physical Structure

As was noted above, an Oracle database consists of physical files. The database

itself has:

Datafiles – these contain the organization's actual data.

A tablespace in an Oracle database consists of one or more physical datafiles. A

datafile can be associated with only one tablespace and only one database.

Oracle creates a datafile for a tablespace by allocating the specified amount of disk

space plus the overhead required for the file header. When a datafile is created, the

operating system under which Oracle runs is responsible for clearing old

information and authorizations from a file before allocating it to Oracle. If the file

is large, this process can take a significant amount of time. The first tablespace in

any database is always the SYSTEM tablespace, so Oracle automatically allocates

the first datafiles of any database for the SYSTEM tablespace during database

creation.

Datafile Contents

When a datafile is first created, the allocated disk space is formatted but does not

contain any user data. However, Oracle reserves the space to hold the data for

future segments of the associated tablespace—it is used exclusively by Oracle. As

the data grows in a tablespace, Oracle uses the free space in the associated datafiles

to allocate extents for the segment.

The data associated with schema objects in a tablespace is physically stored in one

or more of the datafiles that constitute the tablespace. Note that a schema object

does not correspond to a specific datafile; rather, a datafile is a repository for the

data of any schema object within a specific tablespace. Oracle allocates space for

the data associated with a schema object in one or more datafiles of a tablespace.

Therefore, a schema object can span one or more datafiles. Unless table striping is

used (where data is spread across more than one disk), the database administrator

and end users cannot control which datafile stores a schema object.

Size of Datafiles

You can alter the size of a datafile after its creation or you can specify that a

datafile should dynamically grow as schema objects in the tablespace grow. This

functionality enables you to have fewer datafiles for each tablespace and can

simplify administration of datafiles.

Temporary Datafiles

Locally managed temporary tablespaces have temporary datafiles (tempfiles),

which are similar to ordinary datafiles, with the following exceptions:

Tempfiles are always set to NOLOGGING mode.

You cannot make a tempfile read only.

You cannot create a tempfile with the ALTER DATABASE statement.

Media recovery does not recognize tempfiles:

o BACKUP CONTROLFILE does not generate any information for

tempfiles.

o CREATE CONTROLFILE cannot specify any information about

tempfiles.

When you create or resize tempfiles, they are not always guaranteed

allocation of disk space for the file size specified. On certain file systems

(for example, UNIX) disk blocks are allocated not at file creation or

resizing, but before the blocks are accessed.

Control Files

The database control file is a small binary file necessary for the database to start

and operate successfully. A control file is updated continuously by Oracle during

database use, so it must be available for writing whenever the database is open. If

for some reason the control file is not accessible, then the database cannot function

properly.

Each control file is associated with only one Oracle database.

Control File Contents

A control file contains information about the associated database that is required

for access by an instance, both at startup and during normal operation. Control file

information can be modified only by Oracle; no database administrator or user can

edit a control file.

Among other things, a control file contains information such as:

The database name

The timestamp of database creation

The names and locations of associated datafiles and redo log files

Tablespace information

Datafile offline ranges

The log history

Archived log information

Backup set and backup piece information

Backup datafile and redo log information

Datafile copy information

The current log sequence number

Checkpoint information

The database name and timestamp originate at database creation. The database

name is taken from either the name specified by the DB_NAME initialization

parameter or the name used in the CREATE DATABASE statement.

Each time that a datafile or a redo log file is added to, renamed in, or dropped from

the database, the control file is updated to reflect this physical structure change.

These changes are recorded so that:

Oracle can identify the datafiles and redo log files to open during database

startup

Oracle can identify files that are required or available in case database

recovery is necessary

Therefore, if you make a change to the physical structure of your database (using

ALTER DATABASE statements), then you should immediately make a backup of

your control file.

Control files also record information about checkpoints. Every three seconds, the

checkpoint process (CKPT) records information in the control file about the

checkpoint position in the redo log. This information is used during database

recovery to tell Oracle that all redo entries recorded before this point in the redo

log group are not necessary for database recovery; they were already written to the

datafiles.

Multiplexed Control Files

As with redo log files, Oracle enables multiple, identical control files to be open

concurrently and written for the same database. By storing multiple control files

for a single database on different disks, you can safeguard against a single point of

failure with respect to control files. If a single disk that contained a control file

crashes, then the current instance fails when Oracle attempts to access the damaged

control file. However, when other copies of the current control file are available on

different disks, an instance can be restarted without the need for database recovery.

If all control files of a database are permanently lost during operation, then the

instance is aborted and media recovery is required. Media recovery is not

straightforward if an older backup of a control file must be used because a current

copy is not available. It is strongly recommended that you adhere to the following:

Use multiplexed control files with each database

Store each copy on a different physical disk

Use operating system mirroring

Monitor backups

The control file serves the following purposes:

It contains information about data files, online redo log files, and so on that

are required to open the database.

The control file tracks structural changes to the database. For example, when

an administrator adds, renames, or drops a data file or online redo log file,

the database updates the control file to reflect this change.

It contains metadata that must be accessible when the database is not open.

For example, the control file contains information required to recover the

database, including checkpoints. A checkpoint indicates the SCN in the redo

stream where instance recovery would be required to begin Every committed

change before a checkpoint SCN is guaranteed to be saved on disk in the

data files. At least every three seconds the checkpoint process records

information in the control file about the checkpoint position in the online

redo log.

Oracle Database reads and writes to the control file continuously during

database use and must be available for writing whenever the database is

open. For example, recovering a database involves reading from the control

file the names of all the data files contained in the database. Other

operations, such as adding a data file, update the information stored in the

control file

Online Redo Log

The most crucial structure for recovery is the online redo log, which consists of

two or more preallocated files that store changes to the database as they occur. The

online redo log records changes to the data files.

Use of the Online Redo Log

The database maintains online redo log files to protect against data loss.

Specifically, after an instance failure the online redo log files enable Oracle

Database to recover committed data not yet written to the data files.

Oracle Database writes every transaction synchronously to the redo log buffer,

which is then written to the online redo logs. The contents of the log include

uncommitted transactions, undo data, and schema and object management

statements.

Oracle Database uses the online redo log only for recovery. However,

administrators can query online redo log files through a SQL interface in the

Oracle LogMiner utility Redo log files are a useful source of historical information

about database activity.

How Oracle Database Writes to the Online Redo Log

The online redo log for a database instance is called a redo thread. In singleinstance configurations, only one instance accesses a database, so only one redo

thread is present. In an Oracle Real Application Clusters (Oracle RAC)

configuration, however, two or more instances concurrently access a database, with

each instance having its own redo thread. A separate redo thread for each instance

avoids contention for a single set of online redo log files.

An online redo log consists of two or more online redo log files. Oracle Database

requires a minimum of two files to guarantee that one is always available for

writing while the other is being archived (if the database is in ARCHIVELOG

mode).

Online Redo Log Switches

Oracle Database uses only one online redo log file at a time to store records written

from the redo log buffer. The online redo log file to which the log writer (LGWR)

process is actively writing is called the current online redo log file.

A log switch occurs when the database stops writing to one online redo log file and

begins writing to another. Normally, a switch occurs when the current online redo

log file is full and writing must continue. However, you can configure log switches

to occur at regular intervals, regardless of whether the current online redo log file

is filled, and force log switches manually.

Log writer writes to online redo log files circularly. When log writer fills the last

available online redo log file, the process writes to the first log file, restarting the

cycle.

Multiple Copies of Online Redo Log Files

Oracle Database can automatically maintain two or more identical copies of the

online redo log in separate locations. An online redo log group consists of an

online redo log file and its redundant copies. Each identical copy is a member of

the online redo log group. Each group is defined by a number, such as group 1,

group 2, and so on.

Maintaining multiple members of an online redo log group protects against the loss

of the redo log. Ideally, the locations of the members should be on separate disks

so that the failure of one disk does not cause the loss of the entire online redo log.

In , A_LOG1 and B_LOG1 are identical members of group 1, while A_LOG2 and

B_LOG2 are identical members of group 2. Each member in a group must be the

same size. LGWR writes concurrently to group 1 (members A_LOG1 and

B_LOG1), then writes concurrently to group 2 (members A_LOG2 and B_LOG2),

then writes to group 1, and so on. LGWR never writes concurrently to members of

different groups.

Oracle recommends that you multiplex the online redo log. The loss of log files

can be catastrophic if recovery is required. When you multiplex the online redo

log, the database must increase the amount of I/O it performs. Depending on

your system, this additional I/O may impact overall database performance.

Structure of the Online Redo Log

Online redo log files contain redo records. A redo record is made up of a group of

change vectors, each of which describes a change to a data block. For example, an

update to a salary in the employees table generates a redo record that describes

changes to the data segment block for the table, the undo segment data block, and

the transaction table of the undo segments.

The redo records have all relevant metadata for the change, including the

following:

SCN and time stamp of the change

Transaction ID of the transaction that generated the change

SCN and time stamp when the transaction committed (if it committed)

Type of operation that made the change

Name and type of the modified data segment

Archived Redo Log Files

An archived redo log file is a copy of a filled member of an online redo log group.

This file is not considered part of the database, but is an offline copy of an online

redo log file created by the database and written to a user-specified location.

Archived redo log files are a crucial part of a backup and recovery strategy. You

can use archived redo log files to:

Recover a database backup

Update a standby database (see "Computer Failures")

Archiving is the operation of generating an archived redo log file. Archiving is

either automatic or manual and is only possible when the database is in

ARCHIVELOG mode.

An archived redo log file includes the redo entries and the log sequence number of

the identical member of the online redo log group. In files A_LOG1 and B_LOG1

are identical members of Group 1. If the database is in ARCHIVELOG mode, and if

automatic archiving is enabled, then the archiver process (ARCn) will archive one

of these files. If A_LOG1 is corrupted, then the process can archive B_LOG1. The

archived redo log contains a copy of every group created since you enabled

archiving.

Parameter Files

o start a database instance, Oracle Database must read either a server parameter

file, which is recommended, or a text initialization parameter file, which is a

legacy implementation. These files contain a list of configuration parameters.

To create a database manually, you must start an instance with a parameter file and

then issue a CREATE DATABASE command. Thus, the instance and parameter file

can exist even when the database itself does not exist.

Initialization Parameters

Initialization parameters are configuration parameters that affect the basic

operation of an instance. The instance reads initialization parameters from a file at

startup.

Oracle Database provides many initialization parameters to optimize its operation

in diverse environments. Only a few of these parameters must be explicitly set

because the default values are adequate in most cases.

Functional Groups of Initialization Parameters

Most initialization parameters belong to one of the following functional groups:

Parameters that name entities such as files or directories

Parameters that set limits for a process, database resource, or the database

itself

Parameters that affect capacity, such as the size of the SGA (these

parameters are called variable parameters)

Variable parameters are of particular interest to database administrators because

they can use these parameters to improve database performance.

Basic and Advanced Initialization Parameters

Initialization parameters are divided into two groups: basic and advanced. In most

cases, you must set and tune only the approximately 30 basic parameters to obtain

reasonable performance. The basic parameters set characteristics such as the

database name, locations of the control files, database block size, and undo

tablespace.

In rare situations, modification to the advanced parameters may be required for

optimal performance. The advanced parameters enable expert DBAs to adapt the

behavior of the Oracle Database to meet unique requirements.

Oracle Database provides values in the starter initialization parameter file provided

with your database software, or as created for you by the Database Configuration

Assistant. You can edit these Oracle-supplied initialization parameters and add

others, depending on your configuration and how you plan to tune the database.

For relevant initialization parameters not included in the parameter file, Oracle

Database supplies defaults.

Server Parameter Files

A server parameter file is a repository for initialization parameters that is managed

by Oracle Database. A server parameter file has the following key characteristics:

Only one server parameter file exists for a database. This file must reside on

the database host.

The server parameter file is written to and read by only by Oracle Database,

not by client applications.

The server parameter file is binary and cannot be modified by a text editor.

Initialization parameters stored in the server parameter file are persistent.

Any changes made to the parameters while a database instance is running

can persist across instance shutdown and startup.

A server parameter file eliminates the need to maintain multiple text initialization

parameter files for client applications. A server parameter file is initially built from

a text initialization parameter file using the CREATE SPFILE statement. It can

also be created directly by the Database Configuration Assistant.

Text Initialization Parameter Files

A text initialization parameter file is a text file that contains a list of initialization

parameters. This type of parameter file, which is a legacy implementation of the

parameter file, has the following key characteristics:

When starting up or shutting down a database, the text initialization

parameter file must reside on the same host as the client application that

connects to the database.

A text initialization parameter file is text-based, not binary.

Oracle Database can read but not write to the text initialization parameter

file. To change the parameter values you must manually alter the file with a

text editor.

Changes to initialization parameter values by ALTER SYSTEM are only in

effect for the current instance. You must manually update the text

initialization parameter file and restart the instance for the changes to be

known.

db_name=sample

control_files=/disk1/oradata/sample_cf.dbf

db_block_size=8192

open_cursors=52

undo_management=auto

shared_pool_size=280M

pga_aggregate_target=29M

Modification of Initialization Parameter Values

You can adjust initialization parameters to modify the behavior of a

database. The classification of parameters as static or dynamic

Static parameters include DB_BLOCK_SIZE, DB_NAME, and

COMPATIBLE. Dynamic parameters are grouped into session-level

parameters, which affect only the current user session, and system-level

parameters, which affect the database and all sessions. For example,

MEMORY_TARGET is a system-level parameter, while

NLS_DATE_FORMAT is a session-level parameter

The scope of a parameter change depends on when the change takes effect. When

an instance has been started with a server parameter file, you can use the ALTER

SYSTEM SET statement to change values for system-level parameters as follows:

SCOPE=MEMORY

Changes apply to the database instance only. The change will not persist if

the database is shut down and restarted.

SCOPE=SPFILE

Changes are written to the server parameter file but do not affect the current

instance. Thus, the changes do not take effect until the instance is restarted.

SCOPE=BOTH

Changes are written both to memory and to the server parameter file. This is

the default scope when the database is using a server parameter file.

The database prints the new value and the old value of an initialization parameter

to the alert log. As a preventative measure, the database validates changes of basic

parameter to prevent illegal values from being written to the server parameter file.

Diagnostic Files

Oracle Database includes a fault diagnosability infrastructure for preventing,

detecting, diagnosing, and resolving database problems. Problems include critical

errors such as code bugs, metadata corruption, and customer data corruption.

The goals of the advanced fault diagnosability infrastructure are the following:

Detecting problems proactively

Limiting damage and interruptions after a problem is detected

Reducing problem diagnostic and resolution time

Simplifying customer interaction with Oracle Support

Automatic Diagnostic Repository

Automatic Diagnostic Repository (ADR) is a file-based repository that stores

database diagnostic data such as trace files, the alert log, and Health Monitor

reports. Key characteristics of ADR include:

Unified directory structure

Consistent diagnostic data formats

Unified tool set

The preceding characteristics enable customers and Oracle Support to correlate and

analyze diagnostic data across multiple Oracle instances, components, and

products.

ADR is located outside the database, which enables Oracle Database to access and

manage ADR when the physical database is unavailable. An instance can create

ADR before a database has been created.

Problems and Incidents

ADR proactively tracks problems, which are critical errors in the database. Critical

errors manifest as internal errors, such as ORA-600, or other severe errors. Each

problem has a problem key, which is a text string that describes the problem.

When a problem occurs multiple times, ADR creates a time-stamped incident for

each occurrence. An incident is uniquely identified by a numeric incident ID.

When an incident occurs, ADR sends an incident alert to Enterprise Manager.

Diagnosis and resolution of a critical error usually starts with an incident alert.

Because a problem could generate many incidents in a short time, ADR applies

flood control to incident generation after certain thresholds are reached. A floodcontrolled incident generates an alert log entry, but does not generate incident

dumps. In this way, ADR informs you that a critical error is ongoing without

overloading the system with diagnostic data.

See Also:

Oracle Database Administrator's Guide for detailed information about the fault

diagnosability infrastructure

ADR Structure

The ADR base is the ADR root directory. The ADR base can contain multiple

ADR homes, where each ADR home is the root directory for all diagnostic data—

traces, dumps, the alert log, and so on—for an instance of an Oracle product or

component. For example, in an Oracle RAC environment with shared storage and

ASM, each database instance and each ASM instance has its own ADR home.

illustrates the ADR directory hierarchy for a database instance. Other ADR homes

for other Oracle products or components, such as ASM or Oracle Net Services, can

exist within this hierarchy, under the same ADR base.

ADR Directory Structure for an Oracle Database Instance

Alert Log

Each database has an alert log, which is an XML file containing a chronological

log of database messages and errors. The alert log contents include the following:

All internal errors (ORA-600), block corruption errors (ORA-1578), and

deadlock errors (ORA-60)

Administrative operations such as DDL statements and the SQL*Plus

commands STARTUP, SHUTDOWN, ARCHIVE LOG, and RECOVER

Several messages and errors relating to the functions of shared server and

dispatcher processes

Errors during the automatic refresh of a materialized view

Oracle Database uses the alert log as an alternative to displaying information in the

Enterprise Manager GUI. If an administrative operation is successful, then Oracle

Database writes a message to the alert log as "completed" along with a time stamp.

Oracle Database creates an alert log in the alert subdirectory when you first start

a database instance, even if no database has been created yet. The following

example shows a portion of a text-only alert log:

Trace Files

A trace file is an administrative file that contain diagnostic data used to investigate

problems. Also, trace files can provide guidance for tuning applications or an

instance, as explained in

Types of Trace Files

Each server and background process can periodically write to an associated trace

file. The files information on the process environment, status, activities, and errors.

The SQL trace facility also creates trace files, which provide performance

information on individual SQL statements. To enable tracing for a client identifier,

service, module, action, session, instance, or database, you must execute the

appropriate procedures in the DBMS_MONITOR package or use Oracle Enterprise

Manager.

A dump is a special type of trace file. Whereas a trace tends to be continuous

output of diagnostic data, a dump is typically a one-time output of diagnostic data

in response to an event (such as an incident). When an incident occurs, the

database writes one or more dumps to the incident directory created for the

incident. Incident dumps also contain the incident number in the file name.

Locations of Trace Files

ADR stores trace files in the trace subdirectory, as shown in. Trace file names

are platform-dependent and use the extension .trc.

Typically, database background process trace file names contain the Oracle SID,

the background process name, and the operating system process number. An

example of a trace file for the RECO process is mytest_reco_10355.trc.

Server process trace file names contain the Oracle SID, the string ora, and the

operating system process number. An example of a server process trace file name

is mytest_ora_10304.trc.

Sometimes trace files have corresponding trace map (.trm) files. These files

contain structural information about trace files and are used for searching and

navigation.

Password file – specifies which *special* users are authenticated to startup/shut

down an Oracle Instance.

Memory Management and Memory Structures

Oracle Database Memory Management

Memory management - focus is to maintain optimal sizes for memory structures.

Memory is managed based on memory-related initialization parameters.

These values are stored in the init.ora file for each database.

Three basic options for memory management are as follows:

Automatic memory management:

o DBA specifies the target size for instance memory.

o The database instance automatically tunes to the target memory size.

o Database redistributes memory as needed between the SGA and the

instance PGA.

Automatic shared memory management:

o This management mode is partially automated.

o DBA specifies the target size for the SGA.

o DBA can optionally set an aggregate target size for the PGA or

managing PGA work areas individually.

Manual memory management:

o Instead of setting the total memory size, the DBA sets many

initialization parameters to manage components of the SGA and

instance PGA individually.

If you create a database with Database Configuration Assistant (DBCA) and

choose the basic installation option, then automatic memory management is the

default.

The memory structures include three areas of memory:

System Global Area (SGA) – this is allocated when an Oracle Instance

starts up.

Program Global Area (PGA) – this is allocated when a Server Process

starts up.

User Global Area (UGA) – this is allocated when a user connects to

create a session.

System Global Area

The SGA is a read/write memory area that stores information shared by all

database processes and by all users of the database (sometimes it is called the

Shared Global Area).

o This information includes both organizational data and control information

used by the Oracle Server.

o The SGA is allocated in memory and virtual memory.

o The size of the SGA can be established by a DBA by assigning a value to

the parameter SGA_MAX_SIZE in the parameter file—this is an optional

parameter.

The SGA is allocated when an Oracle instance (database) is started up based on

values specified in the initialization parameter file (either PFILE or SPFILE).

The SGA has the following mandatory memory structures:

Database Buffer Cache

Redo Log Buffer

Java Pool

Streams Pool

Shared Pool – includes two components:

o Library Cache

o Data Dictionary Cache

Other structures (for example, lock and latch management, statistical

data)

Additional optional memory structures in the SGA include:

Large Pool

The SHOW SGA SQL command will show you the SGA memory allocations.

This is a recent clip of the SGA for the DBORCL database at SIUE.

In order to execute SHOW SGA you must be connected with the special

privilege SYSDBA (which is only available to user accounts that are members of

the DBA Linux group).

SQL> connect / as sysdba

Connected.

SQL> show sga

Total System Global Area 1610612736 bytes

Fixed Size

Variable Size

Database Buffers

Redo Buffers

2084296 bytes

1006633528 bytes

587202560 bytes

14692352 bytes

Early versions of Oracle used a Static SGA. This meant that if modifications to

memory management were required, the database had to be shutdown,

modifications were made to the init.ora parameter file, and then the database had

to be restarted.

Oracle 11g uses a Dynamic SGA. Memory configurations for the system global

area can be made without shutting down the database instance. The DBA can

resize the Database Buffer Cache and Shared Pool dynamically.

Several initialization parameters are set that affect the amount of random access

memory dedicated to the SGA of an Oracle Instance. These are:

SGA_MAX_SIZE: This optional parameter is used to set a limit on the

amount of virtual memory allocated to the SGA – a typical setting might be 1

GB; however, if the value for SGA_MAX_SIZE in the initialization parameter file

or server parameter file is less than the sum the memory allocated for all

components, either explicitly in the parameter file or by default, at the time the

instance is initialized, then the database ignores the setting for SGA_MAX_SIZE.

For optimal performance, the entire SGA should fit in real memory to eliminate

paging to/from disk by the operating system.

Buffer Caches

A number of buffer caches are maintained in memory in order to improve system

response time.

Database Buffer Cache

The Database Buffer Cache is a fairly large memory object that stores the actual

data blocks that are retrieved from datafiles by system queries and other data

manipulation language commands.

The purpose is to optimize physical input/output of data.

When Database Smart Flash Cache (flash cache) is enabled, part of the buffer

cache can reside in the flash cache.

This buffer cache extension is stored on a flash disk device, which is a solid

state storage device that uses flash memory.

The database can improve performance by caching buffers in flash memory

instead of reading from magnetic disk.

Database Smart Flash Cache is available only in Solaris and Oracle Enterprise

Linux.

A query causes a Server Process to look for data.

The first look is in the Database Buffer Cache to determine if the requested

information happens to already be located in memory – thus the information would

not need to be retrieved from disk and this would speed up performance.

If the information is not in the Database Buffer Cache, the Server Process

retrieves the information from disk and stores it to the cache.

Keep in mind that information read from disk is read a block at a time, NOT a

row at a time, because a database block is the smallest addressable storage space

on disk.

Database blocks are kept in the Database Buffer Cache according to a Least

Recently Used (LRU) algorithm and are aged out of memory if a buffer cache

block is not used in order to provide space for the insertion of newly needed

database blocks.

There are three buffer states:

Unused - a buffer is available for use - it has never been used or is currently

unused.

Clean - a buffer that was used earlier - the data has been written to disk.

Dirty - a buffer that has modified data that has not been written to disk.

Each buffer has one of two access modes:

Pinned - a buffer is pinned so it does not age out of memory.

Free (unpinned).

The buffers in the cache are organized in two lists:

the write list and,

the least recently used (LRU) list.

The write list (also called a write queue) holds dirty buffers – these are

buffers that hold that data that has been modified, but the blocks have not been

written back to disk.

The LRU list holds unused, free clean buffers, pinned buffers, and free dirty

buffers that have not yet been moved to the write list. Free clean buffers do not

contain any useful data and are available for use. Pinned buffers are currently

being accessed.

When an Oracle process accesses a buffer, the process moves the buffer to the

most recently used (MRU) end of the LRU list – this causes dirty buffers to age

toward the LRU end of the LRU list.

When an Oracle user process needs a data row, it searches for the data in the

database buffer cache because memory can be searched more quickly than hard

disk can be accessed. If the data row is already in the cache (a cache hit), the

process reads the data from memory; otherwise a cache miss occurs and data must

be read from hard disk into the database buffer cache.

Before reading a data block into the cache, the process must first find a free

buffer. The process searches the LRU list, starting at the LRU end of the list. The

search continues until a free buffer is found or until the search reaches the

threshold limit of buffers.

Each time a user process finds a dirty buffer as it searches the LRU, that buffer is

moved to the write list and the search for a free buffer continues.

When a user process finds a free buffer, it reads the data block from disk into the

buffer and moves the buffer to the MRU end of the LRU list.

If an Oracle user process searches the threshold limit of buffers without finding a

free buffer, the process stops searching the LRU list and signals the DBWn

background process to write some of the dirty buffers to disk. This frees up some

buffers.

Database Buffer Cache Block Size

The block size for a database is set when a database is created and is determined

by the init.ora parameter file parameter named DB_BLOCK_SIZE.

Typical block sizes are 2KB, 4KB, 8KB, 16KB, and 32KB.

The size of blocks in the Database Buffer Cache matches the block size for the

database.

The DBORCL database uses an 8KB block size.

This figure shows that the use of non-standard block sizes results in multiple

database buffer cache memory allocations.

Because tablespaces that store oracle tables can use different (non-standard) block

sizes, there can be more than one Database Buffer Cache allocated to match block

sizes in the cache with the block sizes in the non-standard tablespaces.

The size of the Database Buffer Caches can be controlled by the parameters

DB_CACHE_SIZE and DB_nK_CACHE_SIZE to dynamically change the

memory allocated to the caches without restarting the Oracle instance.

You can dynamically change the size of the Database Buffer Cache with the

ALTER SYSTEM command like the one shown here:

ALTER SYSTEM SET DB_CACHE_SIZE = 96M;

You can have the Oracle Server gather statistics about the Database Buffer Cache

to help you size it to achieve an optimal workload for the memory allocation. This

information is displayed from the V$DB_CACHE_ADVICE view. In order for

statistics to be gathered, you can dynamically alter the system by using the

ALTER SYSTEM SET DB_CACHE_ADVICE (OFF, ON, READY)

command. However, gathering statistics on system performance always incurs

some overhead that will slow down system performance.

SQL> ALTER SYSTEM SET db_cache_advice = ON;

SQL> SELECT name, block_size, advice_status FROM

v$db_cache_advice;

NAME

BLOCK_SIZE ADV

-------------------- ---------- --DEFAULT

8192 ON

SQL> ALTER SYSTEM SET db_cache_advice = OFF;

KEEP Buffer Pool

This pool retains blocks in memory (data from tables) that are likely to be reused

throughout daily processing. An example might be a table containing user names

and passwords or a validation table of some type.

The DB_KEEP_CACHE_SIZE parameter sizes the KEEP Buffer Pool.

RECYCLE Buffer Pool

This pool is used to store table data that is unlikely to be reused throughout daily

processing – thus the data blocks are quickly removed from memory when not

needed.

The DB_RECYCLE_CACHE_SIZE parameter sizes the Recycle Buffer Pool.

Redo Log Buffer

The Redo Log Buffer memory object stores images of all changes made to

database blocks.

Database blocks typically store several table rows of organizational data. This

means that if a single column value from one row in a block is changed, the block

image is stored. Changes include INSERT, UPDATE, DELETE, CREATE,

ALTER, or DROP.

LGWR writes redo sequentially to disk while DBWn performs scattered writes

of data blocks to disk.

o Scattered writes tend to be much slower than sequential writes.

o Because LGWR enable users to avoid waiting for DBWn to complete

its slow writes, the database delivers better performance.

The Redo Log Buffer as a circular buffer that is reused over and over. As the

buffer fills up, copies of the images are stored to the Redo Log Files that are

covered in more detail in a later module.

Large Pool

The Large Pool is an optional memory structure that primarily relieves the

memory burden placed on the Shared Pool. The Large Pool is used for the

following tasks if it is allocated:

Allocating space for session memory requirements from the User Global

Area where a Shared Server is in use.

Transactions that interact with more than one database, e.g., a distributed

database scenario.

Backup and restore operations by the Recovery Manager (RMAN)

process.

o RMAN uses this only if the BACKUP_DISK_IO = n and

BACKUP_TAPE_IO_SLAVE = TRUE parameters are set.

o If the Large Pool is too small, memory allocation for backup will fail

and memory will be allocated from the Shared Pool.

Parallel execution message buffers for parallel server operations. The

PARALLEL_AUTOMATIC_TUNING = TRUE parameter must be set.

The Large Pool size is set with the LARGE_POOL_SIZE parameter – this is not

a dynamic parameter. It does not use an LRU list to manage memory.

Java Pool

The Java Pool is an optional memory object, but is required if the database has

Oracle Java installed and in use for Oracle JVM (Java Virtual Machine).

The size is set with the JAVA_POOL_SIZE parameter that defaults to 24MB.

The Java Pool is used for memory allocation to parse Java commands and to

store data associated with Java commands.

Storing Java code and data in the Java Pool is analogous to SQL and PL/SQL

code cached in the Shared Pool.

Streams Pool

This pool stores data and control structures to support the Oracle Streams feature

of Oracle Enterprise Edition.

Oracle Steams manages sharing of data and events in a distributed environment.

It is sized with the parameter STREAMS_POOL_SIZE.

If STEAMS_POOL_SIZE is not set or is zero, the size of the pool grows

dynamically

Shared Pool

The Shared Pool is a memory structure that is shared by all system users.

It caches various types of program data. For example, the shared pool stores

parsed SQL, PL/SQL code, system parameters, and data dictionary information.

The shared pool is involved in almost every operation that occurs in the

database. For example, if a user executes a SQL statement, then Oracle Database

accesses the shared pool.

It consists of both fixed and variable structures.

The variable component grows and shrinks depending on the demands placed

on memory size by system users and application programs.

Memory can be allocated to the Shared Pool by the parameter

SHARED_POOL_SIZE in the parameter file. The default value of this parameter

is 8MB on 32-bit platforms and 64MB on 64-bit platforms. Increasing the value of

this parameter increases the amount of memory reserved for the shared pool.

You can alter the size of the shared pool dynamically with the ALTER SYSTEM

SET command. An example command is shown in the figure below. You must

keep in mind that the total memory allocated to the SGA is set by the

SGA_TARGET parameter (and may also be limited by the SGA_MAX_SIZE if

it is set), and since the Shared Pool is part of the SGA, you cannot exceed the

maximum size of the SGA. It is recommended to let Oracle optimize the Shared

Pool size.

The Shared Pool stores the most recently executed SQL statements and used data

definitions. This is because some system users and application programs will tend

to execute the same SQL statements often. Saving this information in memory can

improve system performance.

The Shared Pool includes several cache areas described below.

Library Cache

Memory is allocated to the Library Cache whenever an SQL statement is parsed

or a program unit is called. This enables storage of the most recently used SQL

and PL/SQL statements.

If the Library Cache is too small, the Library Cache must purge statement

definitions in order to have space to load new SQL and PL/SQL statements.

Actual management of this memory structure is through a Least-Recently-Used

(LRU) algorithm. This means that the SQL and PL/SQL statements that are

oldest and least recently used are purged when more storage space is needed.

The Library Cache is composed of two memory subcomponents:

Shared SQL: This stores/shares the execution plan and parse tree for

SQL statements, as well as PL/SQL statements such as functions, packages,

and triggers. If a system user executes an identical statement, then the

statement does not have to be parsed again in order to execute the statement.

Private SQL Area: With a shared server, each session issuing a SQL

statement has a private SQL area in its PGA.

o Each user that submits the same statement has a private SQL area

pointing to the same shared SQL area.

o Many private SQL areas in separate PGAs can be associated with

the same shared SQL area.

o This figure depicts two different client processes issuing the same

SQL statement – the parsed solution is already in the Shared SQL

Area.

Data Dictionary Cache

The Data Dictionary Cache is a memory structure that caches data dictionary

information that has been recently used.

This cache is necessary because the data dictionary is accessed so often.

Information accessed includes user account information, datafile names, table

descriptions, user privileges, and other information.

The database server manages the size of the Data Dictionary Cache internally and

the size depends on the size of the Shared Pool in which the Data Dictionary Cache

resides. If the size is too small, then the data dictionary tables that reside on disk

must be queried often for information and this will slow down performance.

Server Result Cache

The Server Result Cache holds result sets and not data blocks. The server result

cache contains the SQL query result cache and PL/SQL function result cache,

which share the same infrastructure.

SQL Query Result Cache

This cache stores the results of queries and query fragments.

Using the cache results for future queries tends to improve performance.

For example, suppose an application runs the same SELECT statement

repeatedly. If the results are cached, then the database returns them immediately.

In this way, the database avoids the expensive operation of rereading blocks and

recomputing results.

PL/SQL Function Result Cache

The PL/SQL Function Result Cache stores function result sets.

Without caching, 1000 calls of a function at 1 second per call

would take 1000 seconds.

With caching, 1000 function calls with the same inputs could take 1

second total.

Good candidates for result caching are frequently invoked

functions that depend on relatively static data.

PL/SQL function code can specify that results be cached.

Program Global Area (PGA)

A PGA is:

a nonshared memory region that contains data and control information

exclusively for use by an Oracle process.

A PGA is created by Oracle Database when an Oracle process is started.

One PGA exists for each Server Process and each Background Process. It

stores data and control information for a single Server Process or a single

Background Process.

It is allocated when a process is created and the memory is scavenged by the

operating system when the process terminates. This is NOT a shared part of

memory – one PGA to each process only.

The collection of individual PGAs is the total instance PGA, or instance PGA.

Database initialization parameters set the size of the instance PGA, not

individual PGAs.

The Program Global Area is also termed the Process Global Area (PGA) and is

a part of memory allocated that is outside of the Oracle Instance.

The content of the PGA varies, but as shown in the figure above, generally

includes the following:

Private SQL Area: Stores information for a parsed SQL statement – stores

bind variable values and runtime memory allocations. A user session issuing