* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

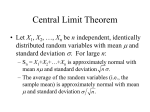

Download DENSITY NOTATION 1. densities Let X be a random variable on (Ω

Survey

Document related concepts

Transcript

DENSITY NOTATION

JOHN THICKSTUN

1. densities

Let X be a random variable on (Ω, ℱ, P) with pushforward measure X∗P. This measure is called the law of X and

$$P(X \in A) = \int_{\{X \in A\}} dP = \int_A d(X_* P).$$

If the law of X is absolutely continuous with respect to Lebesgue measure, that is X∗P ≪ dx, then there exists a Radon-Nikodym derivative d(X∗P)/dx : ℝ → ℝ such that

$$\int_A d(X_* P) = \int_A \frac{d(X_* P)}{dx}\,dx.$$

This derivative is called a density and is commonly denoted by p(x), so we write

$$P(X \in A) = \int_A p(x)\,dx.$$

Similarly, by the law of the unconscious statistician we write

$$EX = \int_{-\infty}^{\infty} x\,p(x)\,dx.$$
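As a numerical illustration of the two formulas above, the following sketch integrates a density to obtain P(X ∈ A) and EX. The normal law, its parameters, the interval A = [0, 3], and the helper names `normal_pdf` and `integrate` are all assumptions chosen for the example; none of them come from the text.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density p(x) of a normal law with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def normal_cdf(x, mu, sigma):
    """Closed-form distribution function, used only as a reference value."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def integrate(f, a, b, n=20_000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

mu, sigma = 1.5, 2.0  # illustrative parameters (assumed)

# P(X in A) for A = [0, 3], computed as the integral of p over A.
prob_A = integrate(lambda x: normal_pdf(x, mu, sigma), 0.0, 3.0)
exact_A = normal_cdf(3.0, mu, sigma) - normal_cdf(0.0, mu, sigma)

# EX as the integral of x p(x); the real line is truncated to mu +/- 12 sigma.
lo, hi = mu - 12.0 * sigma, mu + 12.0 * sigma
mean = integrate(lambda x: x * normal_pdf(x, mu, sigma), lo, hi)

print(prob_A, exact_A, mean)
```

The truncation of the real line is harmless here only because the normal tails beyond twelve standard deviations are negligible; a heavier-tailed density would need more care.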

Let 𝒢 ⊂ ℱ be a (sub)σ-algebra. We can define a unique (up to null sets) conditional expectation E{X|𝒢}, which for any G ∈ 𝒢 satisfies

$$\int_G E\{X \mid \mathcal{G}\}\,dP = \int_G X\,dP.$$

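This relationship is neatly expressed by the following commutative diagram.

$$
\begin{array}{ccc}
(\Omega, \mathcal{F}) & \xrightarrow{\;X\;} & \mathbb{R} \\
{\scriptstyle E\{\cdot \mid \mathcal{G}\}} \big\downarrow & \nearrow {\scriptstyle E\{X \mid \mathcal{G}\}} & \\
(\Omega, \mathcal{G}) & &
\end{array}
$$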

That is, E{X|𝒢} = Y for some 𝒢-measurable Y : Ω → ℝ. Conversely, if Y is a random variable with σ(Y) = 𝒢, then we define E{X|Y} = E{X|𝒢}, and E{X|Y} = h(Y) for some measurable h : ℝ → ℝ. Furthermore, this allows us to define a pointwise conditional expectation E{X|Y = y} = h(y). We might want to extend this definition to a more general E{X|Y ∈ A}, but here we must be very careful: see, for example, the Borel-Kolmogorov paradox.
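On a finite probability space the conditional expectation can be computed by hand, which makes the defining property easy to check. The sketch below assumes a fair six-sided die with X(ω) = ω² and Y(ω) = ω mod 3; these choices, and the helper names, are hypothetical and serve only to illustrate E{X|Y} = h(Y).

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical finite probability space: a fair six-sided die.
omega = [1, 2, 3, 4, 5, 6]
P = {w: Fraction(1, 6) for w in omega}

def X(w):
    return w * w   # the random variable being conditioned

def Y(w):
    return w % 3   # sigma(Y) is generated by the blocks {Y = y}

def h(y):
    """h(y) = E{X | Y = y}: the P-weighted average of X over the block {Y = y}."""
    block = [w for w in omega if Y(w) == y]
    mass = sum(P[w] for w in block)
    return sum(X(w) * P[w] for w in block) / mass

def cond_exp(w):
    """E{X|Y} = h(Y), a sigma(Y)-measurable function on omega."""
    return h(Y(w))

def integral(f, event):
    """Integral of f over an event with respect to P (a finite sum here)."""
    return sum(f(w) * P[w] for w in event)

# Every event in sigma(Y) is a union of blocks {Y = y}; enumerate them all.
values = sorted({Y(w) for w in omega})
events = []
for r in range(len(values) + 1):
    for ys in combinations(values, r):
        events.append([w for w in omega if Y(w) in ys])

# Defining property: the two integrals agree on every G in sigma(Y).
checks = [integral(cond_exp, G) == integral(X, G) for G in events]
print(all(checks), [h(y) for y in values])
```

Exact rational arithmetic is used so that the equality of the two integrals can be tested without a tolerance.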

Recall that P(X ∈ A) = E{1_{X∈A}}, and from this observation we can extend our definition of conditional expectation to a definition of conditional probability. In particular, we define P(·|𝒢) : ℱ × Ω → ℝ by

$$P(X \in A \mid \mathcal{G}) = E\{\mathbf{1}_{\{X \in A\}} \mid \mathcal{G}\}.$$

This construction is not a priori a probability measure. We say that the conditional probability is regular if it does in fact satisfy the axioms of probability. We assume from this point on that our conditional probabilities are regular, which allows us to write

$$P(X \in A \mid \mathcal{G}) = \int_{\{X \in A\}} dP(\cdot \mid \mathcal{G}) = \int_A dX_* P(\cdot \mid \mathcal{G}).$$

If X∗P(·|𝒢) ≪ dx then we define a conditional density p(x|𝒢) = dX∗P(·|𝒢)/dx and write

$$P(X \in A \mid \mathcal{G}) = \int_A p(x \mid \mathcal{G})\,dx.$$

If Y generates 𝒢 then E{X|𝒢} = E{X|Y}, and we write

$$P(X \in A \mid Y) = \int_A p(x \mid y)\,dx.$$

And likewise,

$$E\{X \mid Y\} = \int_{-\infty}^{\infty} x\,p(x \mid y)\,dx.$$
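To make the conditional formulas concrete, the sketch below assumes that (X, Y) is a standard bivariate normal pair with correlation ρ = 0.6, for which the conditional density p(x|y) is known to be normal with mean ρy and variance 1 − ρ². The Gaussian model, the value of ρ, and the function names are assumptions for illustration only.

```python
import math

RHO = 0.6  # assumed correlation of the illustrative Gaussian pair

def normal_pdf(x, mu, var):
    """Normal density with mean mu and variance var."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def integrate(f, a, b, n=20_000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

def cond_density(x, y):
    """p(x|y) under the assumed model: normal, mean RHO*y, variance 1 - RHO^2."""
    return normal_pdf(x, RHO * y, 1.0 - RHO ** 2)

def cond_prob(a, b, y):
    """P(X in [a, b] | Y = y) as the integral of p(x|y) over [a, b]."""
    return integrate(lambda x: cond_density(x, y), a, b)

def cond_mean(y):
    """E{X | Y = y} as the integral of x p(x|y); the line is truncated to [-12, 12]."""
    return integrate(lambda x: x * cond_density(x, y), -12.0, 12.0)

print(cond_prob(0.0, 1.0, 1.0), cond_mean(1.0), cond_mean(-2.0))
```

In this model the function h with E{X|Y} = h(Y) is linear, h(y) = ρy, which the numerical integral reproduces.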

2. multiple variables

Now let’s consider the case of a pair of random variables X = (Y, Z). Let’s assume both Y and Z are defined on (Ω, ℱ, P). We can define a joint density in the manner discussed above by

$$P(Y \in A, Z \in B) = \int_{A \times B} p(y, z)\,d(y, z).$$

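From this definition, Fubini’s theorem, and the definition of a single-variable density,

$$
\begin{aligned}
P(Y \in A) = P(X \in A \times \mathbb{R}) &= \int_{A \times \mathbb{R}} p(y, z)\,d(y, z) \\
&= \int_A \int_{-\infty}^{\infty} p(y, z)\,dz\,dy = \int_A p(y)\,dy.
\end{aligned}
$$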

This holds for all A, and it follows that a.s.

$$p(y) = \int_{-\infty}^{\infty} p(y, z)\,dz, \qquad p(z) = \int_{-\infty}^{\infty} p(y, z)\,dy.$$

These are called the marginals of Y and Z. Note that we have slightly abused notation: p(y) and p(z) are distinct functions, distinguished lexicographically by the name of their input variable. By our definitions of densities and conditional probabilities in section 1,

$$
\begin{aligned}
P(Y \in A, Z \in B) &= \int_B P(Y \in A \mid Z)\,d(Z_* P) \\
&= \int_B \int_A p(y \mid z)\,dy\,d(Z_* P) = \int_B \int_A p(y \mid z)\,p(z)\,dy\,dz.
\end{aligned}
$$

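And therefore, comparing with the definition of the joint density, we a.s. have

$$p(y, z) = p(y \mid z)\,p(z),$$

or equivalently p(y|z) = p(y, z)/p(z) wherever p(z) > 0.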
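The marginal and conditional relations of this section can be checked numerically on a simple example. The sketch below assumes the joint density p(y, z) = y + z on the unit square (zero elsewhere), for which the marginals are y + 1/2 and z + 1/2; the density and the helper names are hypothetical choices, not taken from the text.

```python
def integrate(f, a, b, n=400):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

def joint(y, z):
    """Assumed joint density p(y, z) = y + z on the unit square, 0 elsewhere."""
    return y + z if 0.0 <= y <= 1.0 and 0.0 <= z <= 1.0 else 0.0

def marginal_y(y):
    """p(y): integrate the joint density over z."""
    return integrate(lambda z: joint(y, z), 0.0, 1.0)

def marginal_z(z):
    """p(z): integrate the joint density over y."""
    return integrate(lambda y: joint(y, z), 0.0, 1.0)

def conditional(y, z):
    """p(y|z) = p(y, z) / p(z), defined where p(z) > 0."""
    return joint(y, z) / marginal_z(z)

z0 = 0.25  # an arbitrary point at which to condition

# A conditional density integrates to one in y.
total = integrate(lambda y: conditional(y, z0), 0.0, 1.0)

# E{Y | Z = z0} as the integral of y p(y|z0).
cond_mean = integrate(lambda y: y * conditional(y, z0), 0.0, 1.0)

print(marginal_y(0.3), marginal_z(z0), total, cond_mean)
```

For this density the conditional mean has the closed form (1/3 + z/2)/(z + 1/2), which gives a reference value to compare against the numerical result.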