* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Presentation by Daniel Glasner

Survey

Document related concepts

Transcript

Semi-Numerical String Matching

Semi-numerical String Matching

All the methods we’ve seen so far have been based

on comparisons.

We propose alternative methods of computation such

as:

Arithmetic.

Bit – operations.

The fast Fourier transform.

Semi-numerical String Matching

We will survey three examples of such methods:

The Random Fingerprint method due to Karp and

Rabin.

Shift–And method due to Baeza-Yates and Gonnet,

and its extension to agrep due to Wu and Manber.

A solution to the match count problem using the fast

Fourier transform due to Fischer and Paterson and

an improvement due to Abrahamson.

Karp-Rabin fingerprint - exact match

Exact match problem: we want to find all the

occurrences of the pattern P in the text T.

The pattern P is of length n.

The text T is of length m.

Karp-Rabin fingerprint - exact match

Arithmetic replaces comparisons.

An efficient randomized algorithm that makes an

error with small probability.

A randomized algorithm that never errors whose

expected running time is efficient.

We will consider a binary alphabet: {0,1}.

Arithmetic replaces comparisons.

Strings are also numbers, H: strings → numbers.

Let s be a string of length n,

H ( s) i 1 2

n

ni

s (i )

Definition:

let Tr denote the n length substring of T starting at

position r.

Arithmetic replaces comparisons.

Strings are also numbers, H: strings → numbers.

T=10110101

P=0101

T=10110101

P=

0101

H(T5) = 5 =

H(P) = 5

T=10110101

P= 0101

H(T2) = 6 ≠

H(P) = 5

Arithmetic replaces comparisons.

Theorem:

There is an occurrence of P starting at position r of

T if and only if H(P) = H(Tr)

Proof:

Follows immediately from the unique

representation of a number in base 2.

Arithmetic replaces comparisons.

We can compute H(Tr) from H(Tr-1)

H (Tr ) 2 H (Tr 1 ) 2n T (r 1) T (r n 1)

T=10110101

T1 = 1 0 1 1

T2 = 0 1 1 0

H (T1 ) H (1011) 11

H (T2 ) 2 11 24 1 0 22 16 6 H (0110)

Arithmetic replaces comparisons.

A simple efficient algorithm:

Compute H(T1).

Run over T

Compute H(Tr) from H(Tr-1) in constant time,

and make the comparisons.

Total running time O(m)?

Karp-Rabin

Let’s use modular arithmetic, this will help us keep

the numbers small.

For some integer p The fingerprint of P is defined

by

Hp(P) = H(P) (mod p)

Karp-Rabin

Lemma:

H p ( P ) {[...({[ P (1) 2(mod p) P (2)] 2(mod p)

P (3)} 2(mod p) P (4))...](mod p) P (n)}(mod p)

H ( P )(mod p)

And during this computation no number ever

exceeds 2p.

An example

P=101111

p=7

H(P) = 47

Hp(P) = 47 (mod 7) = 5

1 2(mod 7) 0 2

2 2(mod 7) 1 5

5 2(mod 7) 1 4

4 2(mod 7) 1 2

2 2(mod 7) 1 5

5(mod 7) 5 H p ( P )

Karp-Rabin

Intermediate numbers are also kept small.

We can still compute H(Tr) from H(Tr-1).

Arithmetic:

H (Tr ) 2 H (Tr 1 ) 2n T (r 1) T (r n 1)

Modular arithmetic:

H p (Tr ) [( 2 H (Tr 1 )(mod p))

(2n (mod p))T (r 1) T (r n 1)](mod p)

Karp-Rabin

Intermediate numbers are also kept small.

We can still compute H(Tr) from H(Tr-1).

Arithmetic:

2n 2(2n1 )

Modular arithmetic:

2n (mod p) 2(2n1 (mod p))(mod p)

Karp-Rabin

How about the comparisons?

Arithmetic:

There is an occurrence of P starting at position r of

T if and only if H(P) = H(Tr)

Modular arithmetic:

If there is an occurrence of P starting at position r

of T

then Hp(P) = Hp(Tr)

There are values of p for which the converse is not

true!

Karp-Rabin

Definition:

If Hp(P) = Hp(Tr) but P doesn’t occur in T starting at

position r, we say there is a false match between P

and T at position r.

If there is some position r such that there is a false

match between P and T at position r, we say there

is a false match between P and T.

Karp-Rabin

Our goal will be to choose a modulus p such that

p is small enough to keep computations efficient.

p is large enough so that the probability of a false

match is kept small.

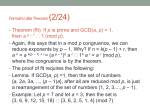

Prime moduli limit false matches

Definition:

For a positive integer u, п(u) is the number of

primes that are less than or equal to u.

Prime number theorem (without proof):

u

u

(u) 1.26

ln( u)

ln( u)

Prime moduli limit false matches

Lemma (without proof):

if u ≥ 29, then the product of all the primes that are

less than or equal to u is greater than 2u.

Example: u = 29, the prime numbers less than or

equal to 29 are: 2,3,5,7,11,13,17,19,23,29, their

product is

6,469,693,230 ≥ 536,870,912 = 229

Prime moduli limit false matches

Corollary:

If u ≥ 29 and x is any number less than or equal to

2u, then x has fewer than п(u) distinct prime

divisors.

Proof:

Assume x has k ≥ п(u) distinct prime divisors

q1 , …, qk then

2u ≥ x ≥ q1* …* qk but

q1* …* qk is at least as large as the product of the

first п(u) prime numbers.

Prime moduli limit false matches

Theorem:

Let I be a positive integer, and p a randomly

chosen prime less than or equal to I.

If nm ≥ 29 then

The probability of a false match between P and T is

less than or equal to п(nm) / п(I) .

Prime moduli limit false matches

Proof:

Let R be the set of positions in T where P doesn’t

begin.

nm

|

H

(

P

)

H

(

T

)

|

2

s

We have

sR

By the corollary the product has at most п(nm)

distinct prime divisors.

If there is a false match at position r then p divides

| H ( P ) H (Tr ) | thus also divides sR | H ( P ) H (Ts ) |

p must be in a set of size п(nm) but p was chosen

randomly out of a set of size п(I).

Random fingerprint algorithm

Choose a positive integer I.

Pick a random prime p less than or equal to I, and

compute P’s fingerprint – Hp(P).

For each position r in T, comput Hp(Tr) and test to

see if it equals Hp(P). If the numbers are equal

either declare a probable match or check and

declare a definite match.

Running time: excluding verification O(m).

How to choose I

The smaller I is, computations are more efficient

The larger I is, the probability of a false match

decresses.

Proposition:

When I = nm2

1. The largest number used in the algorithm

requires at most 4(log(n)+log(m)) bits.

2. The probability of a false match is at most

2.53/m.

How to choose I

Proof:

(nm )

nm ln( nm 2 )

1.26

2

2

(nm )

nm ln( nm )

1 ln( n) 2 ln( m ) 2.53

1.26

m ln( n) ln( m )

m

Extensions

An idea: why not choose k primes?

Proposition:

when k primes are chosen randomly and

independently between 1 and I, the probability of a

false match is at most (nm ) k

(I )

Proof:

We saw that if p allows and error it is in a set of at

most п(nm) integers. A false match can occur only if

each of the independently chosen k primes is in a

set of size of at most п(nm) integers.

An illustaration

k = 4,

n = 250, m = 4000

I = 250*40002 < 232

k

(nm ) 2.53

12

10

( I ) 4000

k

Even lower limits on the error

When k primes are used, the probability of a false

match is at most (n) k

(I )

Proof: Suppose a false match occurs at position r.

That means that each of the primes must divide

|H(P)-H(Tr) | ≤ 2n. There are at most п(n) primes

that divide it.

Each prime is chosen from a set of size п(I) and by

chance is a part of a set of size п(n).

Checking for error in linear time

Consider the list L of locations in T where the KarpRabin algorithm declares P to be found.

A run is a maximal interval of starting locations

l1, l2, …, lr in L such that every two numbers differ

by at most n/2.

Let’s verify a run.

Checking for error in linear time

Check the first two declared occurrences explicitly.

P = abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

P=

abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

If there is a false match stop.

Otherwise P is semi periodic with period

d = l1 – l2.

Checking for error in linear time

d is the minimal period.

P = abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

P=

abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

Checking for error in linear time

P = abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

For each i check that li+1 – li = d.

Check the last d characters of li for each i.

Checking for error in linear time

P = abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

Check l1

Checking for error in linear time

P=

abbabbabbabbab

T = abbabbabbabbabbabbabbabbabbax…

Check l2

P is semi periodic with period 3.

Checking for error in linear time

T = abbabbabbabbabbabbabbabbabbax…

Check li+1 – li = 3

Checking for error in linear time

P=

bab

T = abbabbabbabbabbabbabbabbabbax…

For each i check the last 3 characters of li.

Checking for error in linear time

P=

bab

T = abbabbabbabbabbabbabbabbabbax…

For each i check the last 3 characters of li.

Checking for error in linear time

P=

bab

T = abbabbabbabbabbabbabbabbabbax…

For each i check the last 3 characters of li.

Report a false match or approve the run.

Time analysis

No character of T is examined more than twice

during a single run.

Two runs are separated by at least n/2 positions

and each run is at least n positions long. Thus no

character of T is examined in more than two

consecutive runs.

Total verification time O(m).

Time analysis

When we have a false match we start again with a

different prime.

The expected probability of a false match is O(1/m).

We have converted the algorithm to one that never

mistakes with expected linear running time.

Why use Karp-Rabin?

It is efficient and simple.

It is space efficient.

It can be generalized to solve harder problems

such as 2-dimensional string matching.

It’s performance is backed up by a concrete

theoretical analysis.

The Shift-And

Method

The Shift-And Method

We start with the exact match problem.

Define M to be a binary n by m matrix such that:

M(i,j) = 1 iff the first i characters of P exactly match

the i characters of T ending at character j.

M(i,j) = 1 iff P[1 .. i] ≡ T[j-i+1 .. j]

The Shift-And Method

Let T = california

Let P = for

M=

1

2

3

4

5

6

7

8

9

m = 10

1

0

0

0

0

1

0

0

0

0

0

2

0

0

0

0

0

1

0

0

0

0

n=3

0

0

0

0

0

0

1

0

0

0

M(i,j) = 1 iff the first i characters of P exactly match

the i characters of T ending at character j.

How does M solve the exact match problem?

How to construct M

We will construct M column by column.

Two definitions are in order:

Bit-Shift(j-1) is the vector derived by shifting the

vector for column j-1 down by one and setting the first

bit to 1.

Example:

0 1

1 0

BitShift(1 ) 1

0 1

1 0

How to construct M

We define the n-length binary vector U(x) for each

character x in the alphabet. U(x) is set to 1 for the

positions in P where character x appears.

Example:

1

0

1

U

(a

)

P = abaac

1

0

0

1

U (b) 0

0

0

0

0

U (c ) 0

0

1

How to construct M

Initialize column 0 of M to all zeros

For j > 1 column j is obtained by

M ( j ) BitShift( j 1) U (T ( j ))

An example j = 1

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

12345

P=abaac

0

0

U ( x) 0

0

0

1

1

0

2

0

3

0

4

0

5

0

2

3

4

5

6

7

8

9

1 0 0

0 0 0

BitShift(0) & U (T (1)) 0 & 0 0

0 0 0

0 0 0

1

0

An example j = 2

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

12345

P=abaac

1

0

U (a ) 1

1

0

1

2

1

0

1

2

0

0

3

0

0

4

0

0

5

0

0

3

4

5

6

7

8

9

1 1 1

0 0 0

BitShift(1) & U (T (2)) 0 & 1 0

0 1 0

0 0 0

1

0

An example j = 3

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

12345

P=abaac

0

1

U (b) 0

0

0

1

2

3

1

0

1

0

2

0

0

1

3

0

0

0

4

0

0

0

5

0

0

0

4

5

6

7

8

9

1 0 0

1 1 1

BitShift(2) & U (T (3)) 0 & 0 0

0 0 0

0 0 0

1

0

An example j = 8

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

12345

P=abaac

1

0

U (a ) 1

1

0

1

2

3

4

5

6

7

8

1

0

1

0

0

1

0

1

1

2

0

0

1

0

0

1

0

0

3

0

0

0

0

0

0

1

0

4

0

0

0

0

0

0

0

1

5

0

0

0

0

0

0

0

0

9

1 1 1

1 0 0

BitShift(7) & U (T (8)) 0 & 1 0

1 1 1

0 0 0

1

0

Correctness

1)

2)

For i > 1, Entry M(i,j) = 1 iff

The first i-1 characters of P match the i-1characters

of T ending at character j-1.

Character P(i) ≡ T(j).

1) is true when M(i-1,j-1) = 1.

2) is true when the i’th bit of U(T(j)) = 1.

The algorithm computes the and of these two bits.

Correctness

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

abaac

1

2

3

4

5

6

7

8

9

1

0

1

0

1

0

0

1

0

1

1

0

1

2

0

0

1

0

0

1

0

0

0

0

3

0

0

0

0

0

0

1

0

0

0

4

0

0

0

0

0

0

0

1

0

0

5

0

0

0

0

0

0

0

0

0

0

M(4,8) = 1, this is because a b a a is a prefix of P of

length 4 that ends at position 8 in T.

Condition 1) – We had a b a as a prefix of length 3

that ended at position 7 in T ↔ M(3,7) = 1.

Condition 2) – The fourth bit of P is the eighth bit of

T ↔ The fourth bit of U(T(8)) = 1.

How much did we pay?

Formally the running time is Θ(mn).

However, the method is very efficient if n is the size

of a single or a few computer words.

Furthermore only two columns of M are needed at

any given time. Hence, the space used by the

algorithm is O(n).

agrep: The Shift-And Method with

errors

We extend the shift-and method for finding inexact

occurrences of a pattern in a text.

Reminder example:

T = aatatccacaa

P = atcgaa

P appears in T with 2 mismatches starting at

position 4,

it also occurs with 4 mismatches starting at position

2.

aatatccacaa

aatatccacaa

atcgaa

atcgaa

agrep

Our current goal given k find all the occurrences of

P in T with up to k mismatches.

We define the matrix Mk to be an n by m binary

matrix, such that:

Mk (i,j) = 1 iff

At least i-k of the first i characters of P match the i

characters up through character j of T.

What is M0?

How does Mk solve the k-mismatch problem?

Computing Mk

We compute Ml for all l=0, … , k.

For each j compute M(j), M1(j), … , Mk(j)

For all l initialize Ml(0) to the zero vector.

The j’th column of Ml is given by:

Computing Mk

The first i-1 characters of P match a substring of T

ending at j-1, with at most l mismatches, and the

next pair of characters in P and T are equal.

j-1

*

*

*

*

*

*

*

*

*

*

i-1

BitShift( M ( j 1)) U (T ( j ))

l

Computing Mk

The first i-1 characters of P match a substring of T

ending at j-1, with at most l -1 mismatches.

j-1

*

*

*

*

*

*

*

*

*

*

i-1

BitShift( M

l 1

( j 1))

Computing Mk

We compute Ml for all l=1, … , k.

For each j compute M(j), M1(j), … , Mk(j)

For all l initialize Ml(0) to the zero vector.

The j’th column of Ml is given by:

M l ( j)

[ BitShift( M l ( j 1)) U (T ( j ))]

BitShift( M l 1 ( j 1))

Example: M1

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

P=

abaac

1

2

3

4

5

6

7

8

9

1

0

1

1

1

1

1

1

1

1

1

1

1

2

0

0

1

0

0

1

0

1

1

0

3

0

0

0

1

0

0

1

0

0

1

4

0

0

0

0

1

0

0

1

0

0

5

0

0

0

0

0

0

0

0

1

0

1

2

3

4

5

6

7

8

9

1

0

1

0

1

0

0

1

0

1

1

0

1

2

0

0

1

0

0

1

0

0

0

0

3

0

0

0

0

0

0

1

0

0

0

4

0

0

0

0

0

0

0

1

0

0

5

0

0

0

0

0

0

0

0

0

0

M 0=

Example: M1

1 2 3 4 5 6 7 8 9 10

T=xabxabaaxa

P= abaa

1

2

3

4

5

6

7

8

9

1

0

1

1

1

1

1

1

1

1

1

1

1

2

0

0

1

0

0

1

0

1

1

0

3

0

0

0

1

0

0

1

0

0

1

4

0

0

0

0

1

0

0

1

0

0

5

0

0

0

0

0

0

0

0

1

0

1

0

U (a ) 1

1

0

How much did we pay?

Formally the running time is Θ(kmn).

Again, the method is practically efficient for small n.

Still only a constant number of columns of M are

needed at any given time. Hence, the space used

by the algorithm is O(n).

The match count problem

The match-count problem

We want to count the exact number of characters

that match each of the different alignments of P

with T.

aatatccacaa

atcgaa

4

aatatccacaa

atcgaa

2

The match-count problem

We will first look at a simple algorithm which

extends the techniques we’ve seen so far.

Next, we introduce a more efficient algorithm that

exploits existing efficient methods to calculate the

Fourier transform.

We conclude with a variation that gives good

performance for unbounded alphabets.

Match-count Algorithm 1

We define the matrix MC to be an n by m integer

valued matrix, such that:

MC(i,j) =

The number of characters of P[1..i] that match T[jI+1,..,j]

How does MC solve the match-count problem?

Computing MC

Initialize column 0 of MC to all zeros

For j ≥ 1 column j is obtained by

MC (i 1, j 1)

MC (i , j )

MC (i 1, j 1) 1

P (i ) T ( j )

Otherwise

Total of Θ(nm) comparisons and (simple) additions.

Match-count algorithm 2

Define a vector W that counts the matching

symbols, it’s indices are the possible alignments.

T=ababcaaa

P=abca

W(1) = 2

ababcaaa

abca

W(3) = 4

ababcaaa

abca

W(5) = 1

ababcaaa

abca

W(2) = 0

ababcaaa

abca

W(4) = 1

Match-count algorithm 2

Let’s handle one symbol at a time:

T=ababcaaa

P=abca

Ta = 1 0 1 0 0 1 1 1

Pa = 1 0 0 1

Wa(1) = 1

10100111

1001

Wa(3) = 2

Match-count algorithm 2

We have

W = Wa + Wb + Wc.

Or in the general case

W W

Match-count algorithm 2

We can calculate Wα using a convolution.

Let’s rephrase the problem.

X = Tα padded with n zeros on the right.

Y = Pα padded with m zeros on the right.

We have two vectors X,Y of length m+n.

Match-count algorithm 2

Ta = 1 0 1 0 0 1 1 1

Pa = 1 0 0 1

X=101001110000

Y=100100000000

Match-count algorithm 2

In our modified representation:

W (i )

n m 1

X ( j ) Y (i j )

j 0

Where the indices are taken modulo n+m.

W(1) =

W(2) =

< 1 0 1 0 0 1 1 1 0 0 0 0,

100100000000 >

<010100111000,

010010000000 >

Match-count algorithm 2

In our modified representation:

W (i )

n m 1

X ( j ) Y (i j )

j 0

Where the indices are taken modulo n+m.

This is the convolution of X and the reverse of Y.

Using FFT calculating convolution takes time

O(m log(m)).

Match-count algorithm 2

The total running time is O(|∑| m log(m))

What happens if |∑| is large?

For example when |∑| =n, we get O(n m log(m))

which is actually worse than the naïve algorithm.

Match-count algorithm 3

An idea: some symbols might appear more often

than others.

Use convolutions for the frequent symbols.

Use a more simple counting method for the rest.

Rare symbols

Say α appears less than c times in P.

Record the locations of α in P

l1,…,lr r ≤c.

Go over the text, when we see α at location j we

increment W(j-l1+1) , … , W(j-lr+1+1).

Rare symbols

T = a b a b c a a a…

P=abcac

l1 = 3, l2 = 5

j = 5 → W(5-3+1)++

W(3)++

a b a b c a a a…

abcac

W(5-5+1)++

W(1)++

T = a b a b c a a a...

abcac

How much did we pay?

We can do this for all the rare symbols in one

sweep of T, for each position in T we make up to c

updates in W.

Thus handling the rare symbols will cost us O(cm).

For the frequent symbols we pay one convolution

per symbol so we pay at most

O(n/c m log(m)).

Determining c

We choose the c that gives us the best balance

n

cm m log( m )

c

c 2 n log( m )

c n log( m )

The total running time is O(m n log( m) )

References

Dan Gusfield,

Algorithms on Strings, Trees and Graphs.

Cambridge Univ. Press, Cambridge,1997.

The end